The Power of Data Ingestion with Amazon Kinesis

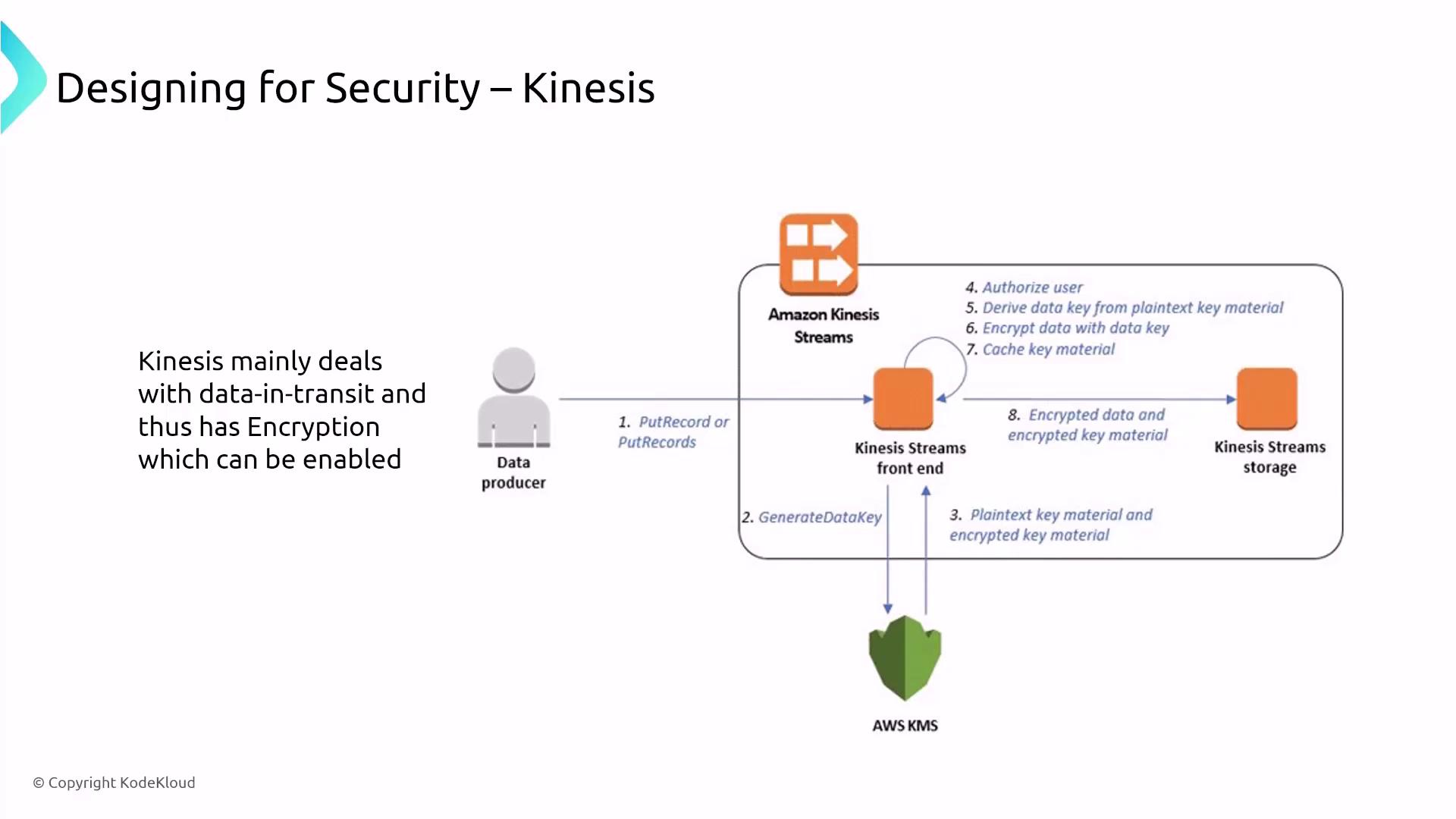

Amazon Kinesis is a managed streaming service similar to Apache Kafka. It acts as a secure streaming bus: ingested data is held temporarily in the stream's storage (24 hours by default, extendable up to 365 days) until consumers read it and move it to a longer-term store such as S3. Kinesis supports server-side encryption with AWS KMS keys to protect data at rest and SSL/TLS to secure data in transit.
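The following is a minimal sketch of turning on encryption at rest. It checks a stream with the boto3 Kinesis client's `describe_stream_summary` and, if the stream is not yet encrypted, calls `start_stream_encryption` with a KMS key. It assumes boto3 is installed and configured with credentials. `alias/aws/kinesis` is the AWS-managed key; you would substitute a customer managed key ARN if your policy requires one. The decision logic lives in a pure function so it can be checked without AWS access.

```python
# Minimal sketch: enable SSE-KMS on a Kinesis data stream if it is not already on.
# Assumption: `kinesis` is created elsewhere with boto3.client("kinesis").

AWS_MANAGED_KINESIS_KEY = "alias/aws/kinesis"  # AWS-managed KMS key for Kinesis


def encryption_request(stream_summary, key_id=AWS_MANAGED_KINESIS_KEY):
    """Build start_stream_encryption kwargs, or return None if already encrypted.

    `stream_summary` is the "StreamDescriptionSummary" dict returned by
    describe_stream_summary().
    """
    if stream_summary.get("EncryptionType", "NONE") == "KMS":
        return None  # data at rest is already encrypted with a KMS key
    return {
        "StreamName": stream_summary["StreamName"],
        "EncryptionType": "KMS",
        "KeyId": key_id,
    }


def ensure_stream_encrypted(kinesis, stream_name, key_id=AWS_MANAGED_KINESIS_KEY):
    """Request SSE-KMS for `stream_name` if needed; return True if a change was made."""
    summary = kinesis.describe_stream_summary(StreamName=stream_name)
    request = encryption_request(summary["StreamDescriptionSummary"], key_id)
    if request is None:
        return False
    kinesis.start_stream_encryption(**request)
    return True
```

For example, `ensure_stream_encrypted(boto3.client("kinesis"), "clickstream")`, where `clickstream` is a hypothetical stream name.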

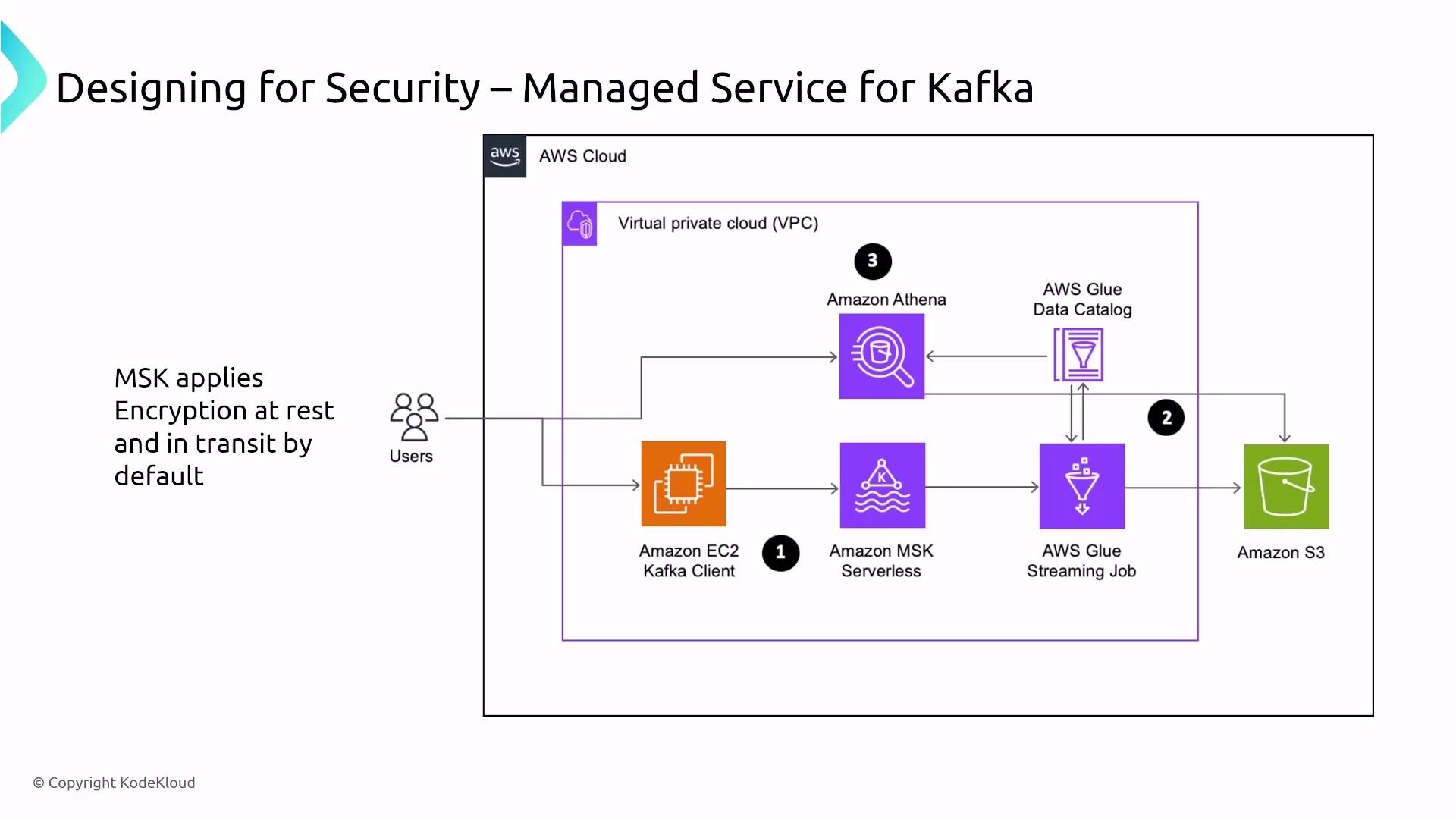

Amazon Managed Streaming for Apache Kafka (MSK)

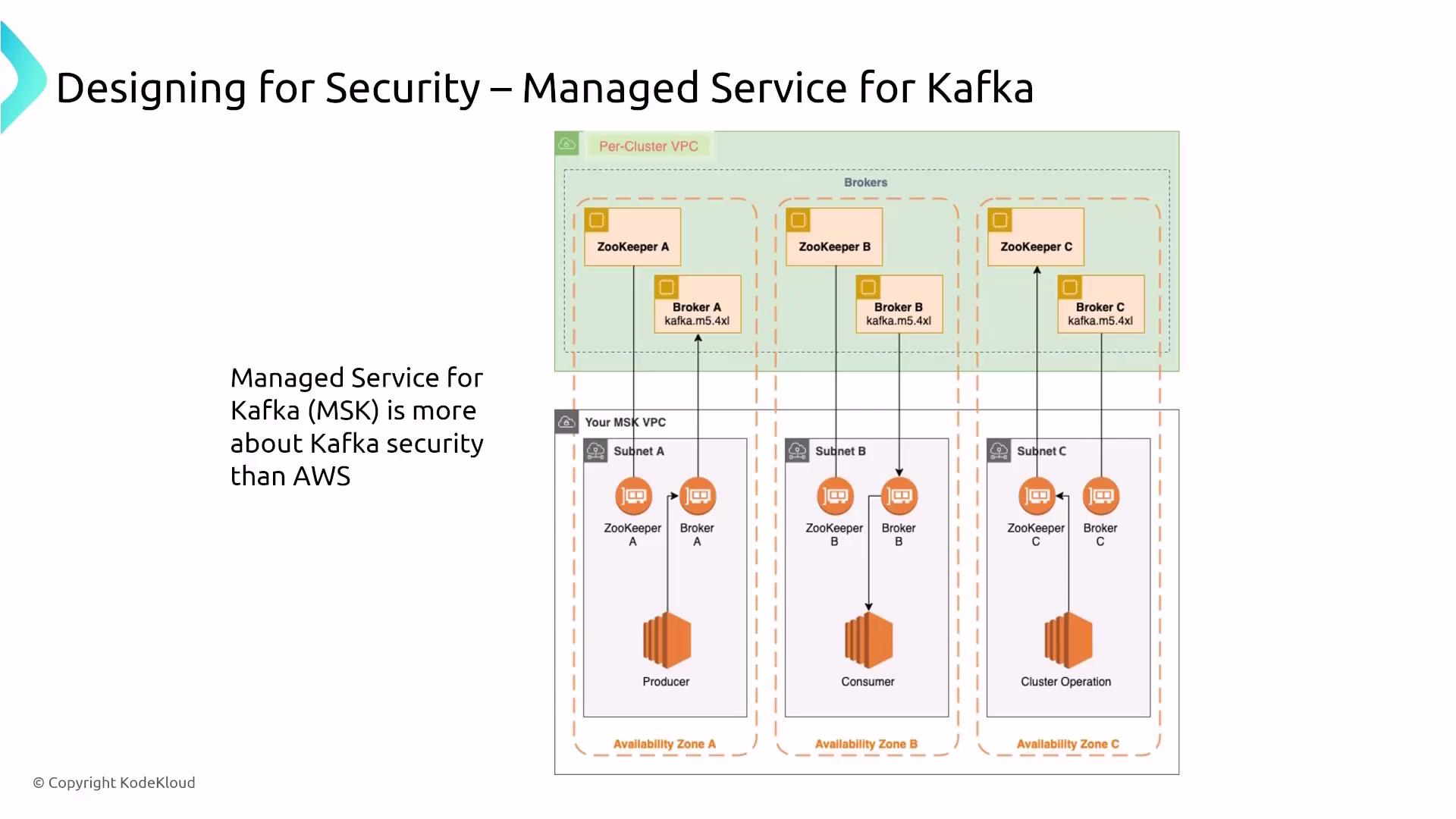

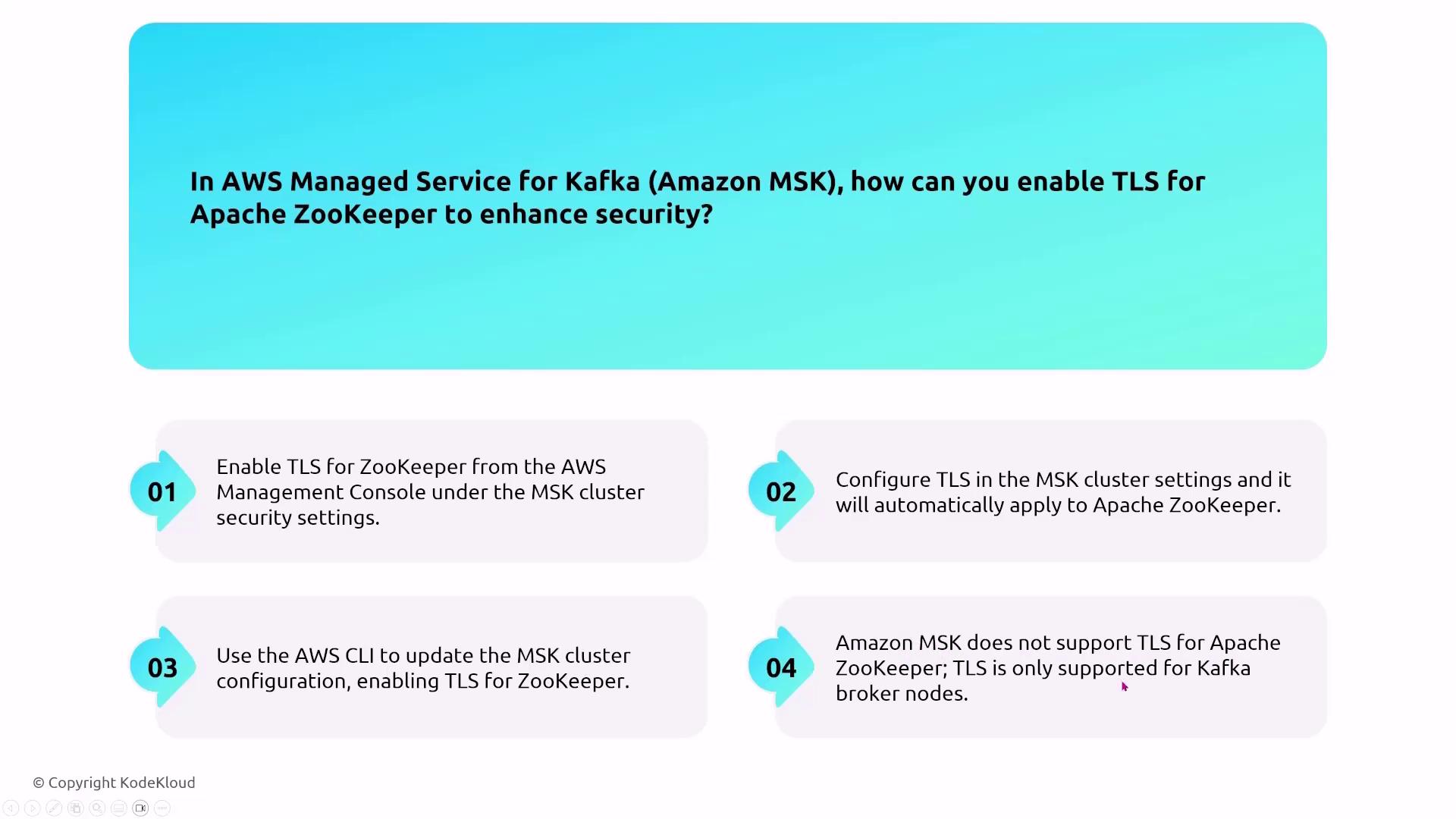

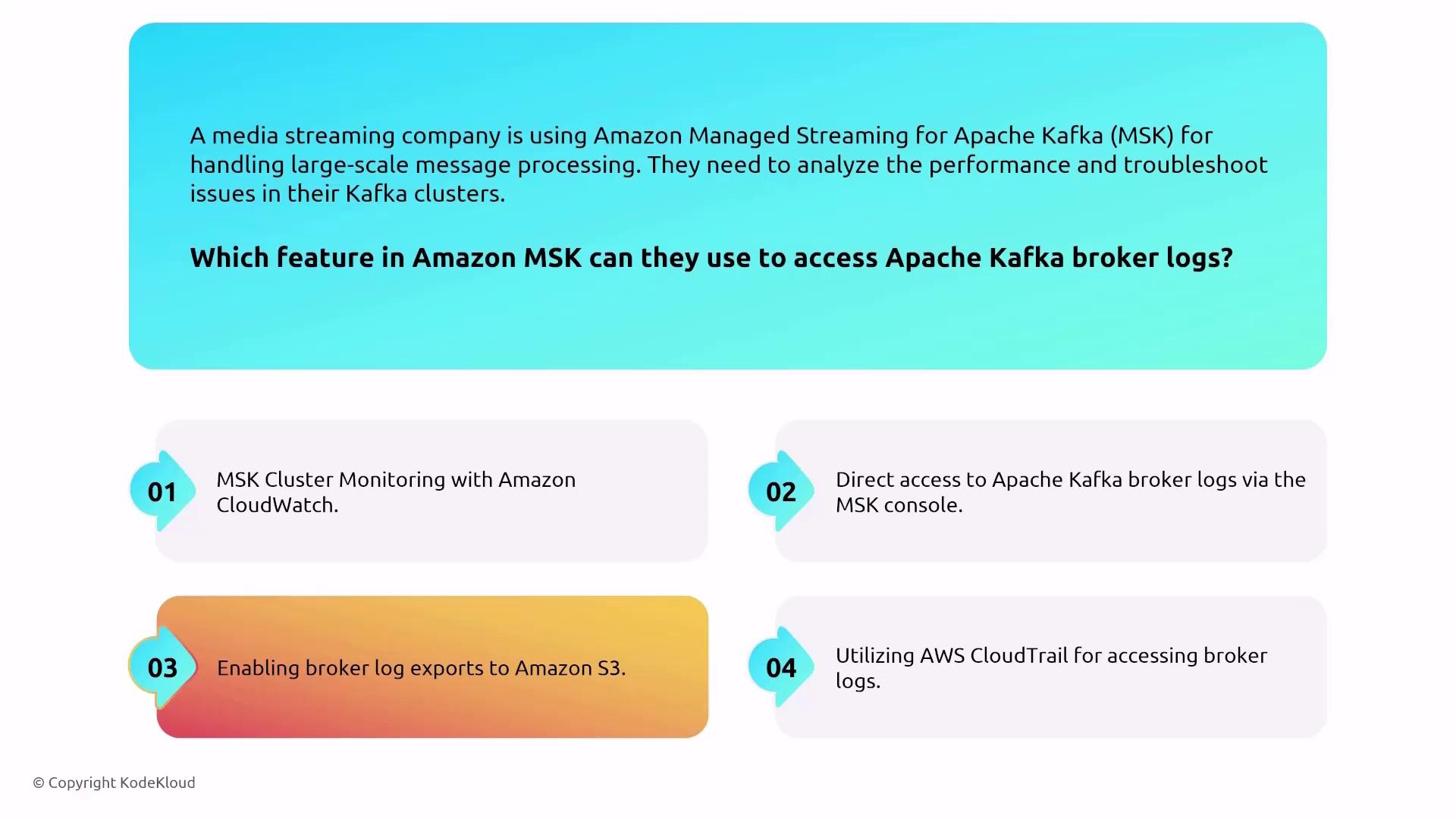

Amazon Managed Streaming for Apache Kafka (MSK) delivers a similar streaming capability built on open-source Apache Kafka. Unlike Kinesis, which scales through shards, MSK exposes Kafka's own architecture: a cluster of Kafka brokers plus ZooKeeper nodes that manage cluster metadata. AWS handles much of the heavy lifting (provisioning, configuration, and scaling), so your main task is choosing an appropriate cluster size.
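The sizing decision can be sketched as the argument dictionary for the boto3 `kafka` client's `create_cluster` call. This is an illustration under assumptions: the cluster name, subnet IDs, instance type (`kafka.m5.large`), and Kafka version (`3.5.1`) are placeholders, and each subnet is assumed to sit in a different Availability Zone. The helper derives the broker count from brokers per zone, because MSK expects the number of broker nodes to be a multiple of the number of client subnets. It also requests TLS for both client-to-broker and in-cluster traffic.

```python
# Minimal sketch: arguments for boto3.client("kafka").create_cluster(**request).
# Assumptions: placeholder instance type and Kafka version; one subnet per AZ.

def build_msk_cluster_request(name, subnet_ids, brokers_per_az=1,
                              instance_type="kafka.m5.large",
                              kafka_version="3.5.1"):
    """Return create_cluster kwargs with TLS encryption in transit."""
    if len(subnet_ids) not in (2, 3):
        # MSK generally expects two or three client subnets, one per AZ.
        raise ValueError("provide two or three client subnets")
    if brokers_per_az < 1:
        raise ValueError("brokers_per_az must be at least 1")
    return {
        "ClusterName": name,
        "KafkaVersion": kafka_version,
        # The broker count must be a multiple of the number of AZs (subnets).
        "NumberOfBrokerNodes": len(subnet_ids) * brokers_per_az,
        "BrokerNodeGroupInfo": {
            "InstanceType": instance_type,
            "ClientSubnets": list(subnet_ids),
        },
        "EncryptionInfo": {
            # Encrypt client-to-broker traffic and broker-to-broker traffic.
            "EncryptionInTransit": {"ClientBroker": "TLS", "InCluster": True},
        },
    }
```

For example, `boto3.client("kafka").create_cluster(**build_msk_cluster_request("analytics-msk", ["subnet-aaa", "subnet-bbb", "subnet-ccc"], brokers_per_az=2))` would request six brokers. The name and subnet IDs are hypothetical.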

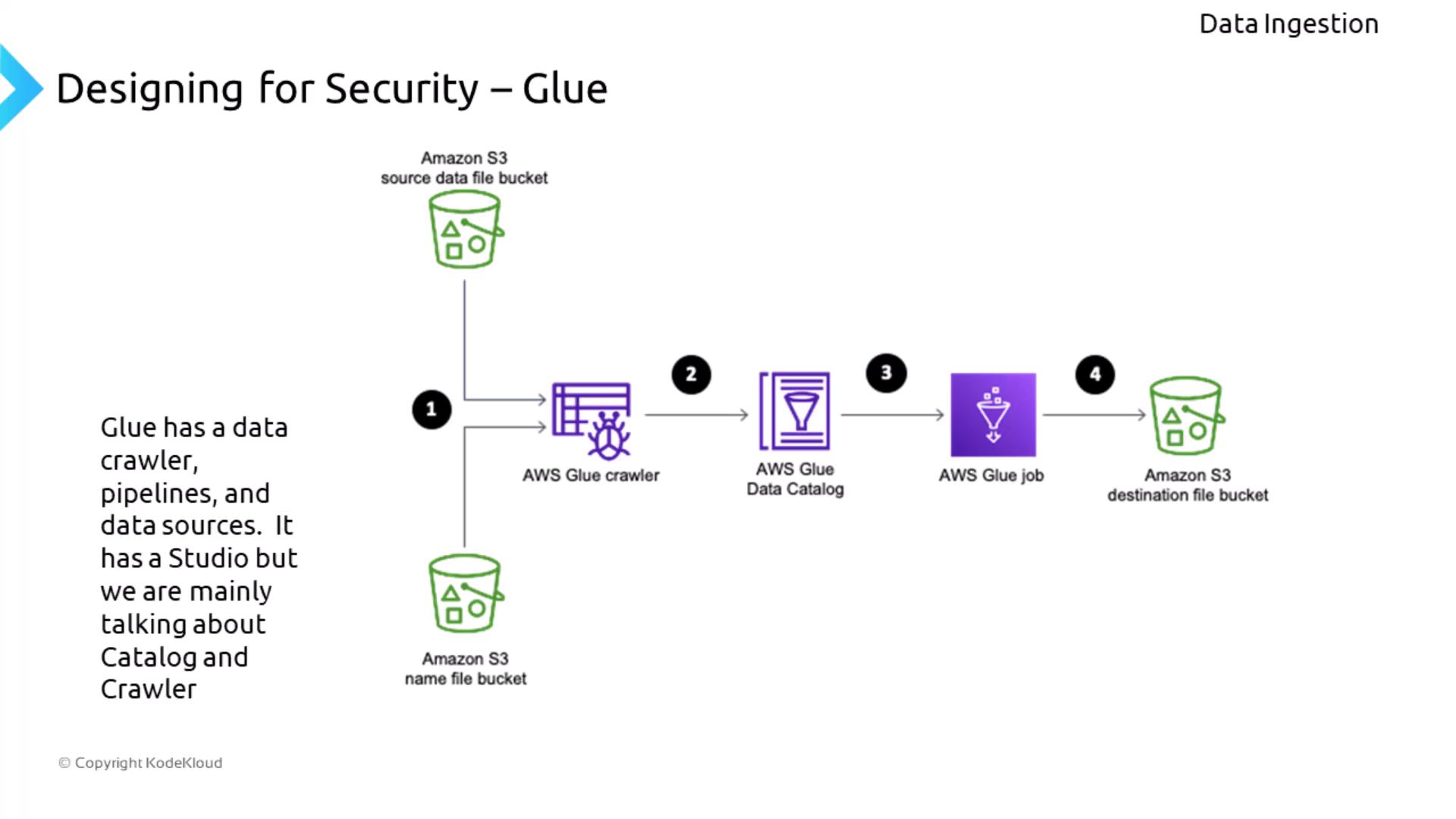

Extract, Transform, and Load with AWS Glue

AWS Glue is a serverless service for ETL (extract, transform, load) workloads. Its jobs run on Apache Spark and are most often written in PySpark. Glue comprises several components:

- A crawler that scans data sources (such as S3) and populates the Glue Data Catalog.

- An ETL job engine that executes data transformation tasks.

- Glue Studio, a graphical interface for creating, running, and monitoring ETL jobs (the underlying infrastructure is managed and secured by AWS).
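To make the crawler-to-catalog flow concrete, here is a minimal sketch using the boto3 Glue client's `create_crawler` and `start_crawler` calls. The crawler name, IAM role ARN, database, and S3 path are hypothetical. The optional `CrawlerSecurityConfiguration` assumes you have already created a Glue security configuration.

```python
# Minimal sketch: register an S3 crawler that populates the Glue Data Catalog.
# Assumption: `glue` is a boto3 Glue client (boto3.client("glue")).

def build_crawler_request(name, role_arn, database, s3_paths,
                          security_configuration=None):
    """Return kwargs for glue.create_crawler(**kwargs)."""
    request = {
        "Name": name,
        "Role": role_arn,            # IAM role the crawler assumes to read the data
        "DatabaseName": database,    # Data Catalog database that receives the tables
        "Targets": {"S3Targets": [{"Path": path} for path in s3_paths]},
    }
    if security_configuration:
        # Name of an existing Glue security configuration (encryption settings).
        request["CrawlerSecurityConfiguration"] = security_configuration
    return request


def create_and_start_crawler(glue, request):
    """Create the crawler, then trigger its first run."""
    glue.create_crawler(**request)
    glue.start_crawler(Name=request["Name"])
```

After the crawler finishes, the discovered tables appear in the named Data Catalog database, where ETL jobs and query engines can reference them.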

When securing AWS Glue, use VPC endpoints to keep connectivity private, grant access through narrowly scoped IAM roles and policies, and avoid storing credentials or other sensitive data in plaintext within job scripts.
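One way to keep credentials out of job scripts is to store them in AWS Secrets Manager and read them at run time. The sketch below assumes a JSON secret whose name (for example, the hypothetical `prod/warehouse/db`) is passed to the job as a parameter, and a boto3 Secrets Manager client. It uses the `get_secret_value` call and only handles string secrets.

```python
# Minimal sketch: read database credentials from Secrets Manager instead of
# hardcoding them in a Glue script.
# Assumption: `secrets` is boto3.client("secretsmanager") and the secret value
# is a JSON document such as {"username": ..., "password": ...}.
import json


def parse_secret(response):
    """Decode the JSON SecretString from a get_secret_value response."""
    secret_string = response.get("SecretString")
    if secret_string is None:
        # Binary secrets (SecretBinary) are out of scope for this sketch.
        raise ValueError("expected a string secret")
    return json.loads(secret_string)


def fetch_credentials(secrets, secret_id):
    """Fetch and decode a secret; nothing sensitive is stored in the script."""
    return parse_secret(secrets.get_secret_value(SecretId=secret_id))
```

With this approach, the job's IAM role needs `secretsmanager:GetSecretValue` on that one secret only, which fits the least-privilege advice above.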

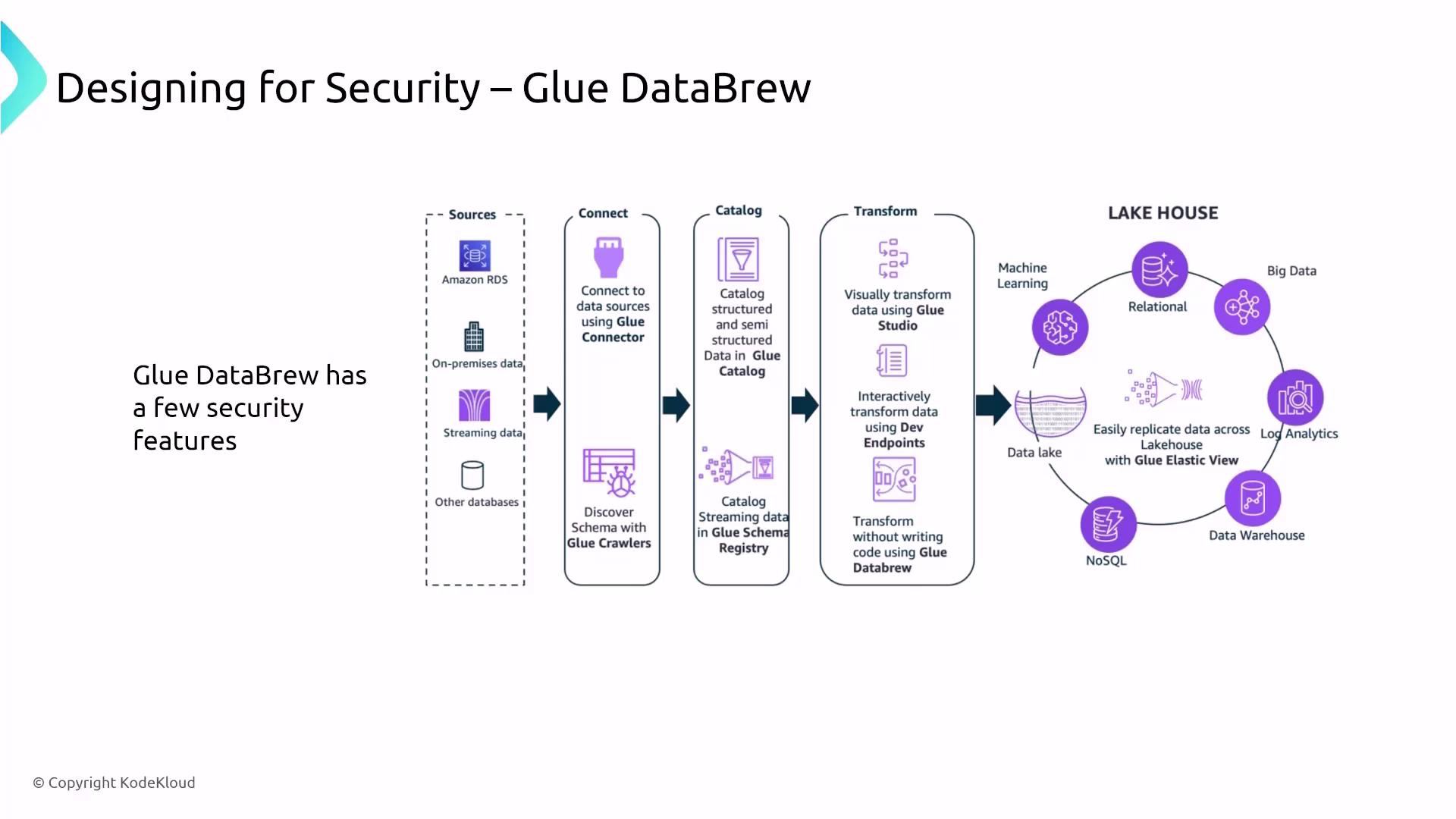

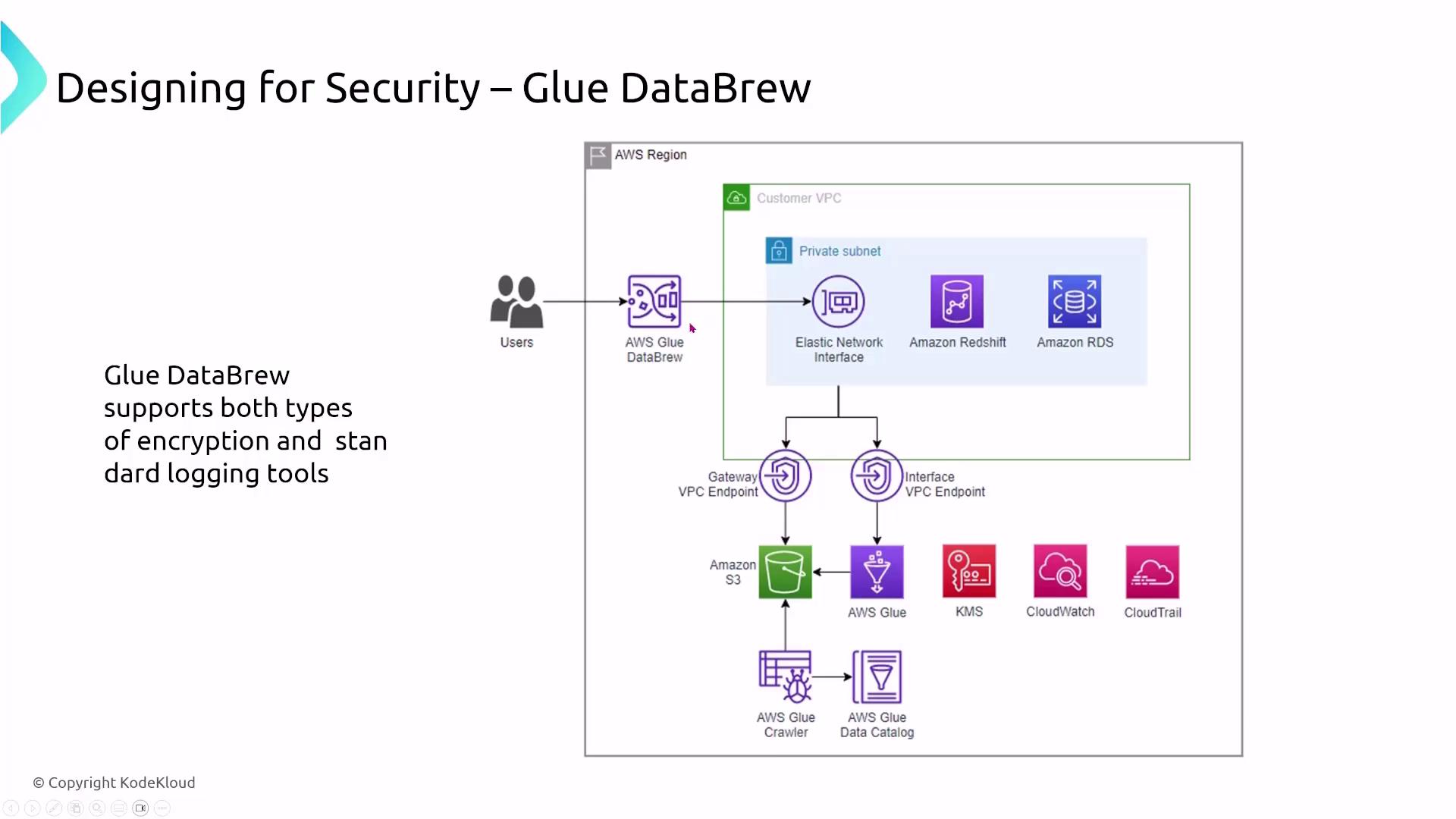

Data Transformation with Glue DataBrew

Glue DataBrew lets users visually transform and prepare data without writing code. Its intuitive visual interface simplifies data cleaning, normalization, and preparation for analysis. DataBrew is fully managed, so its security largely depends on the surrounding AWS services. Encryption at rest follows the S3 and KMS settings of the buckets it reads from and writes to, VPC endpoints keep traffic private, and logging is handled through CloudWatch and CloudTrail.
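Because DataBrew reads from and writes to S3, one practical lever is the output bucket's default encryption. S3 has applied SSE-S3 to new objects by default since January 2023. The hedged sketch below builds the `ServerSideEncryptionConfiguration` argument for boto3's `put_bucket_encryption` so that you can opt into a KMS key instead.

```python
# Minimal sketch: default encryption settings for a bucket that receives
# DataBrew job output.
# Assumption: the result is passed to boto3.client("s3").put_bucket_encryption.

def default_encryption_config(kms_key_arn=None):
    """Return a ServerSideEncryptionConfiguration dict (SSE-KMS if a key is given)."""
    if kms_key_arn:
        rule = {
            "ApplyServerSideEncryptionByDefault": {
                "SSEAlgorithm": "aws:kms",
                "KMSMasterKeyID": kms_key_arn,
            },
            # S3 Bucket Keys cut the number of KMS requests for SSE-KMS objects.
            "BucketKeyEnabled": True,
        }
    else:
        # SSE-S3: encryption keys managed entirely by S3.
        rule = {"ApplyServerSideEncryptionByDefault": {"SSEAlgorithm": "AES256"}}
    return {"Rules": [rule]}
```

For example, `boto3.client("s3").put_bucket_encryption(Bucket="databrew-output-example", ServerSideEncryptionConfiguration=default_encryption_config(key_arn))`. Here the bucket name is hypothetical, and `key_arn` holds your KMS key ARN.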

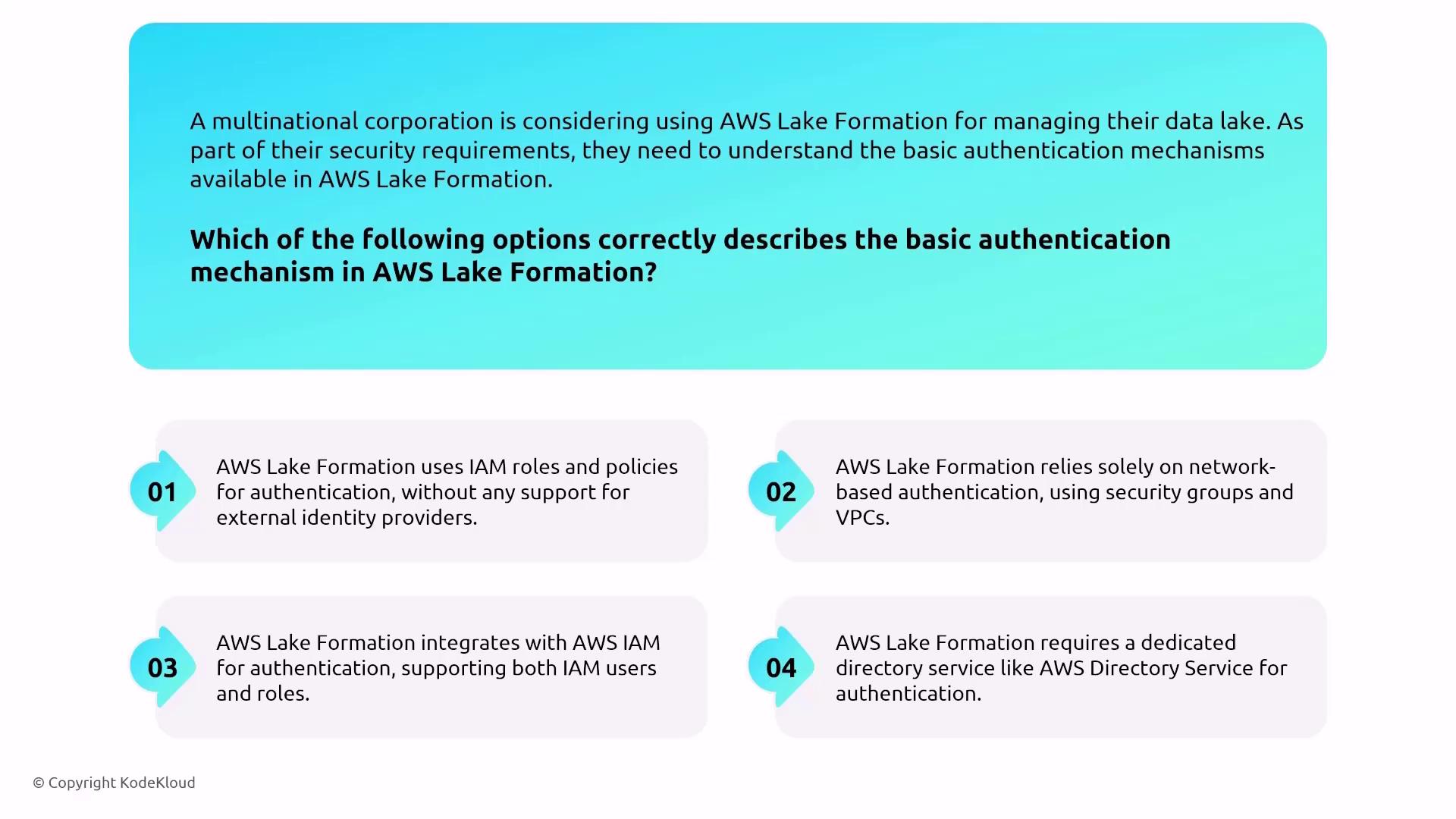

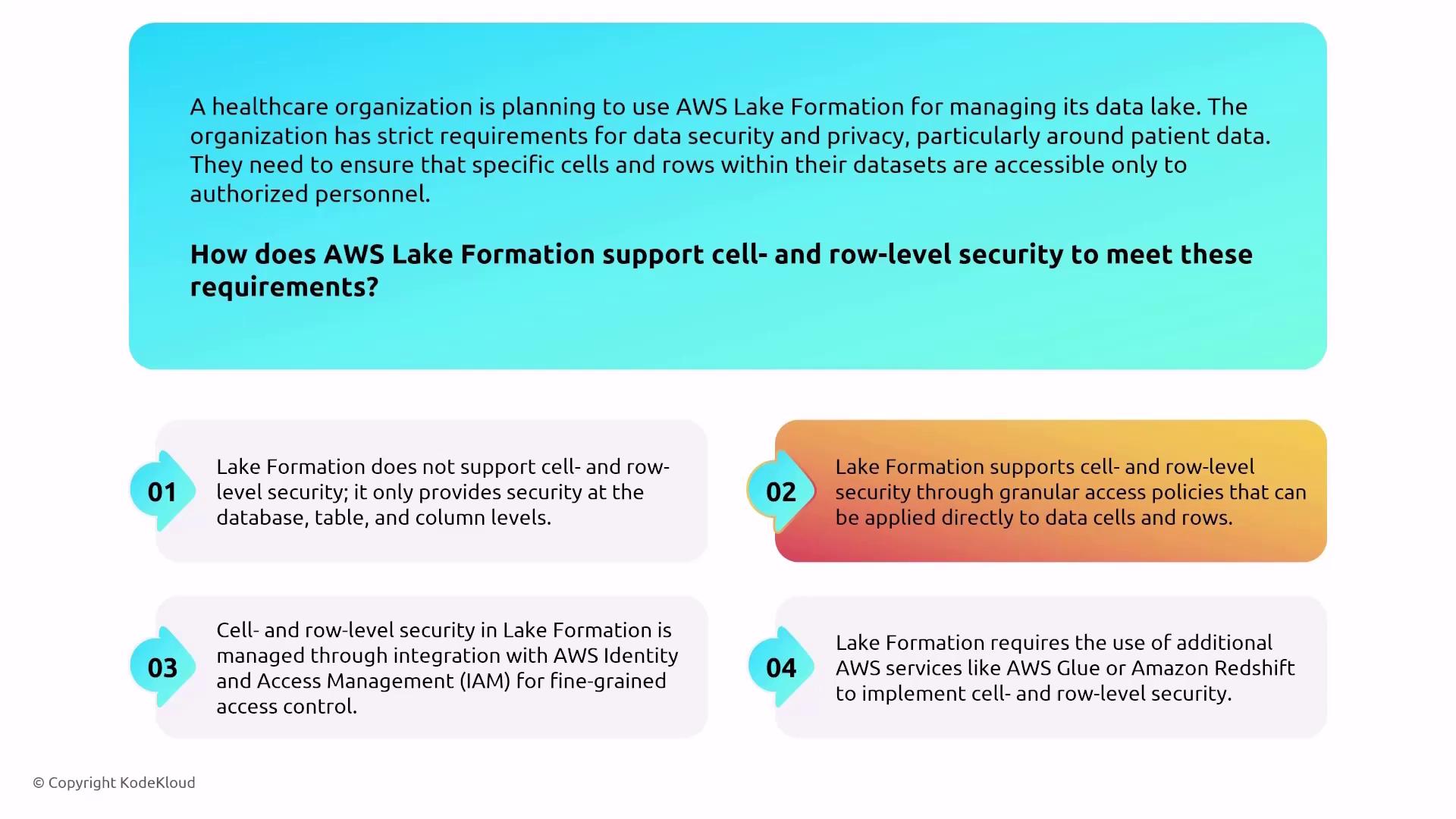

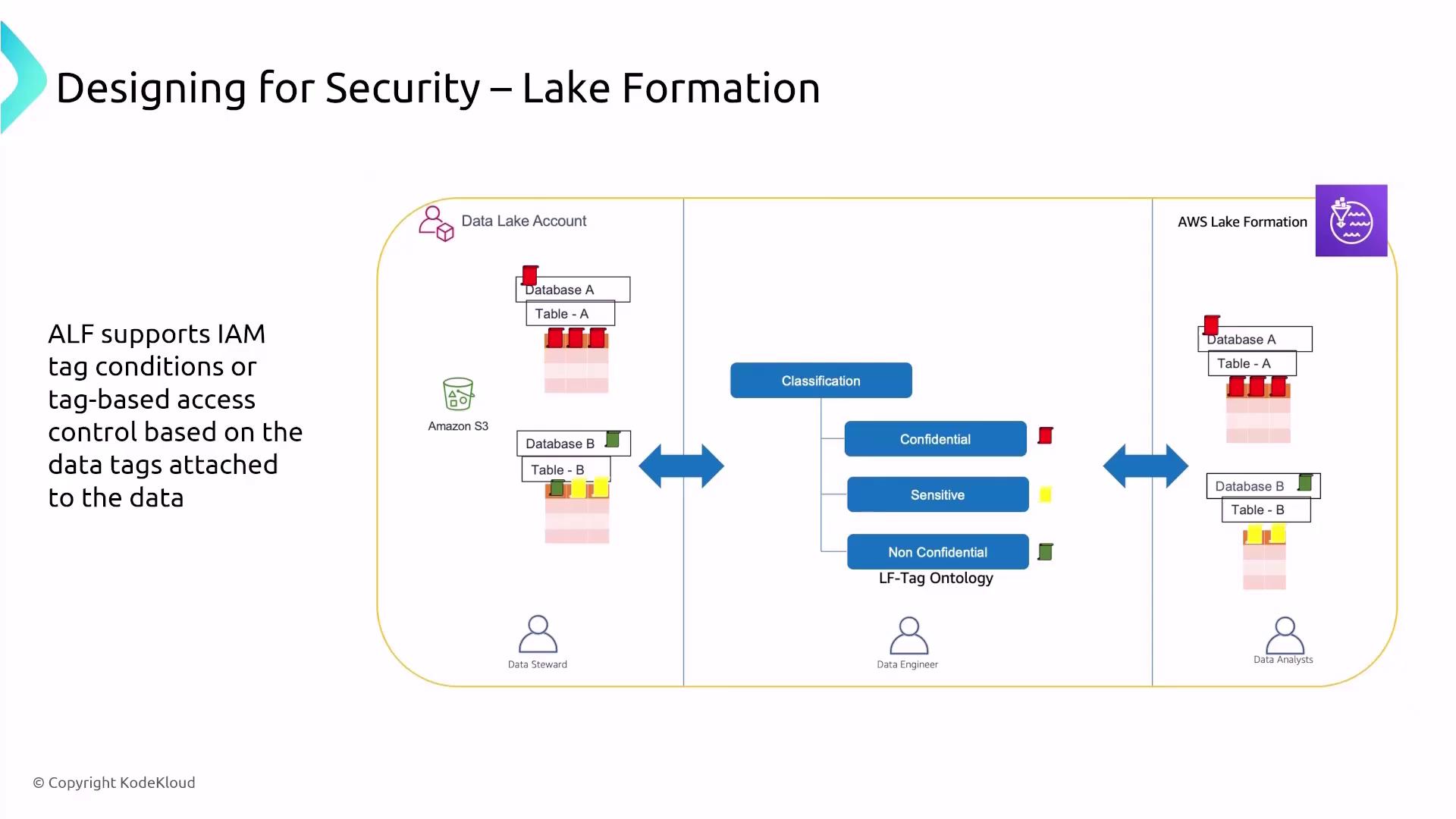

Designing for Security in Data Storage with Lake Formation

Lake Formation provides a security and governance layer over AWS data lakes. It integrates closely with IAM and AWS IAM Identity Center (formerly AWS SSO) to manage access control and permissions. Lake Formation supports fine-grained column-, row-, and cell-level security. Data access can be tracked for auditing and compliance with CloudWatch, CloudTrail, and AWS Config.
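In Lake Formation, row- and cell-level security is configured through data cells filters. The sketch below builds the `TableData` argument for the boto3 Lake Formation client's `create_data_cells_filter` call. The row filter expression limits which rows are visible, and the column list limits which columns are visible, so together they restrict access to individual cells. The account ID, database, table, filter name, and expression are hypothetical.

```python
# Minimal sketch: describe a Lake Formation data cells filter.
# Assumption: the dict is passed as
# boto3.client("lakeformation").create_data_cells_filter(TableData=...).

def build_data_cells_filter(catalog_id, database, table, filter_name,
                            row_filter_expression, column_names):
    """Return TableData for a filter that limits both rows and columns."""
    if not column_names:
        raise ValueError("list at least one visible column")
    return {
        "TableCatalogId": catalog_id,   # account ID that owns the Data Catalog
        "DatabaseName": database,
        "TableName": table,
        "Name": filter_name,
        # Row-level security: only rows matching this SQL-like predicate.
        "RowFilter": {"FilterExpression": row_filter_expression},
        # Column-level security: only these columns are returned.
        "ColumnNames": list(column_names),
    }
```

After the filter is created, a principal can be granted `SELECT` on it (for example, through `grant_permissions` with a `DataCellsFilter` resource). Queries from integrated engines such as Athena then return only the permitted cells.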

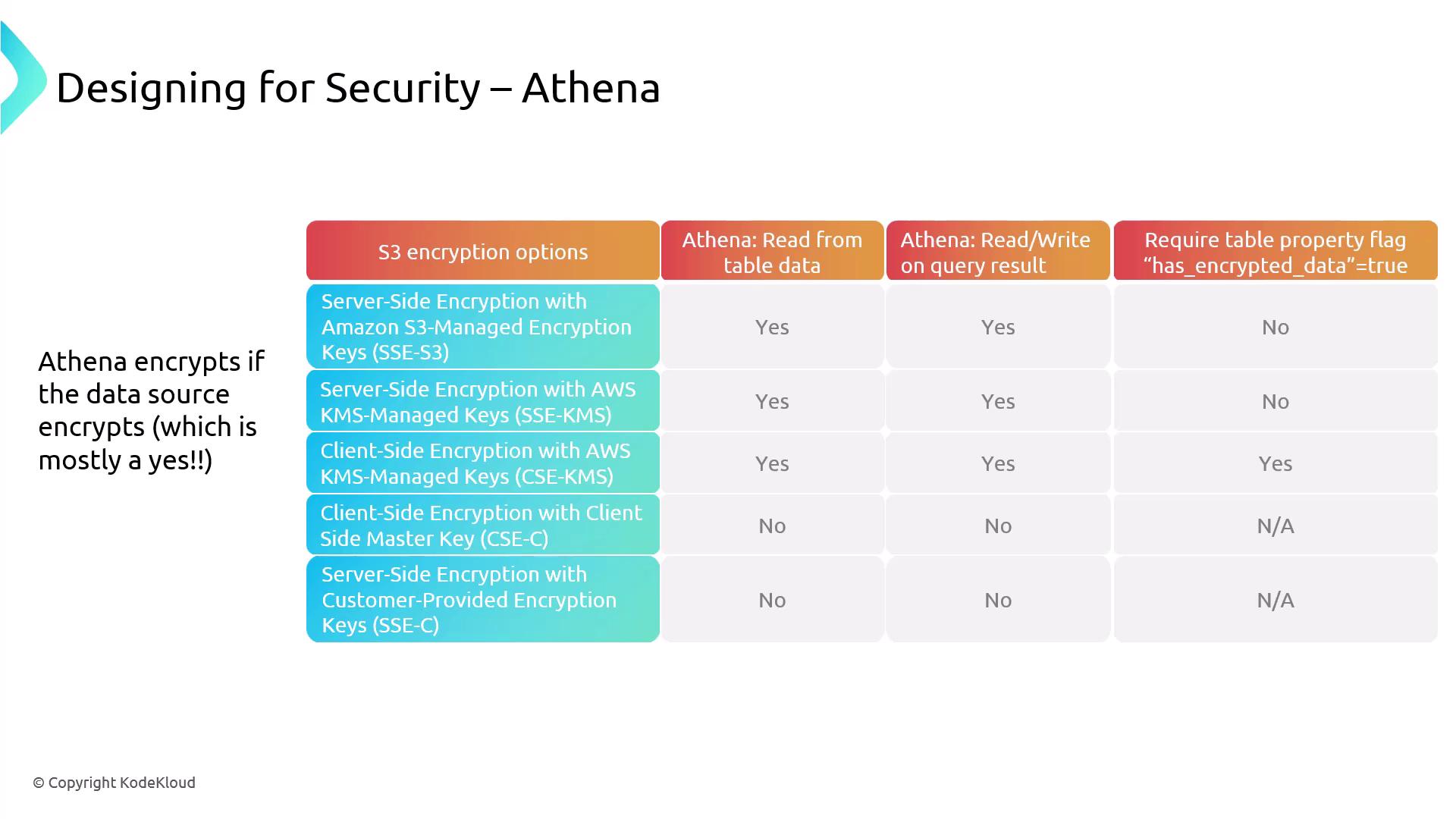

Data Presentation and Query with Amazon Athena

Amazon Athena is a serverless SQL query service well suited to ad hoc analysis. It queries data in S3, typically cataloged by Glue and governed by Lake Formation, and can reach other sources such as Redshift through federated queries. Athena does not store the data itself. It reads the data in place and relies on the data's existing encryption settings (for example, server-side encryption with KMS keys or S3-managed keys). Athena uses IAM for access control and integrates with CloudWatch and CloudTrail for monitoring and logging.
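Athena writes query results to an S3 location, and those results can be encrypted as well. As a hedged sketch, the helper below builds arguments for the boto3 Athena client's `start_query_execution`. It chooses SSE-KMS when a key ARN is supplied and SSE-S3 otherwise. The query, database, and output location are hypothetical; `primary` is Athena's default workgroup.

```python
# Minimal sketch: run an ad hoc Athena query with encrypted results.
# Assumption: `athena` is boto3.client("athena").

def build_query_request(sql, database, output_location, workgroup="primary",
                        kms_key_arn=None):
    """Return kwargs for athena.start_query_execution(**kwargs)."""
    if kms_key_arn:
        encryption = {"EncryptionOption": "SSE_KMS", "KmsKey": kms_key_arn}
    else:
        encryption = {"EncryptionOption": "SSE_S3"}
    return {
        "QueryString": sql,
        "QueryExecutionContext": {"Database": database},
        "WorkGroup": workgroup,
        "ResultConfiguration": {
            "OutputLocation": output_location,      # S3 prefix for result files
            "EncryptionConfiguration": encryption,  # encrypts the result files
        },
    }


def start_query(athena, request):
    """Submit the query and return its execution ID for later polling."""
    return athena.start_query_execution(**request)["QueryExecutionId"]
```

If the workgroup is configured to override client-side settings, the workgroup's own result location and encryption settings take precedence over these values.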

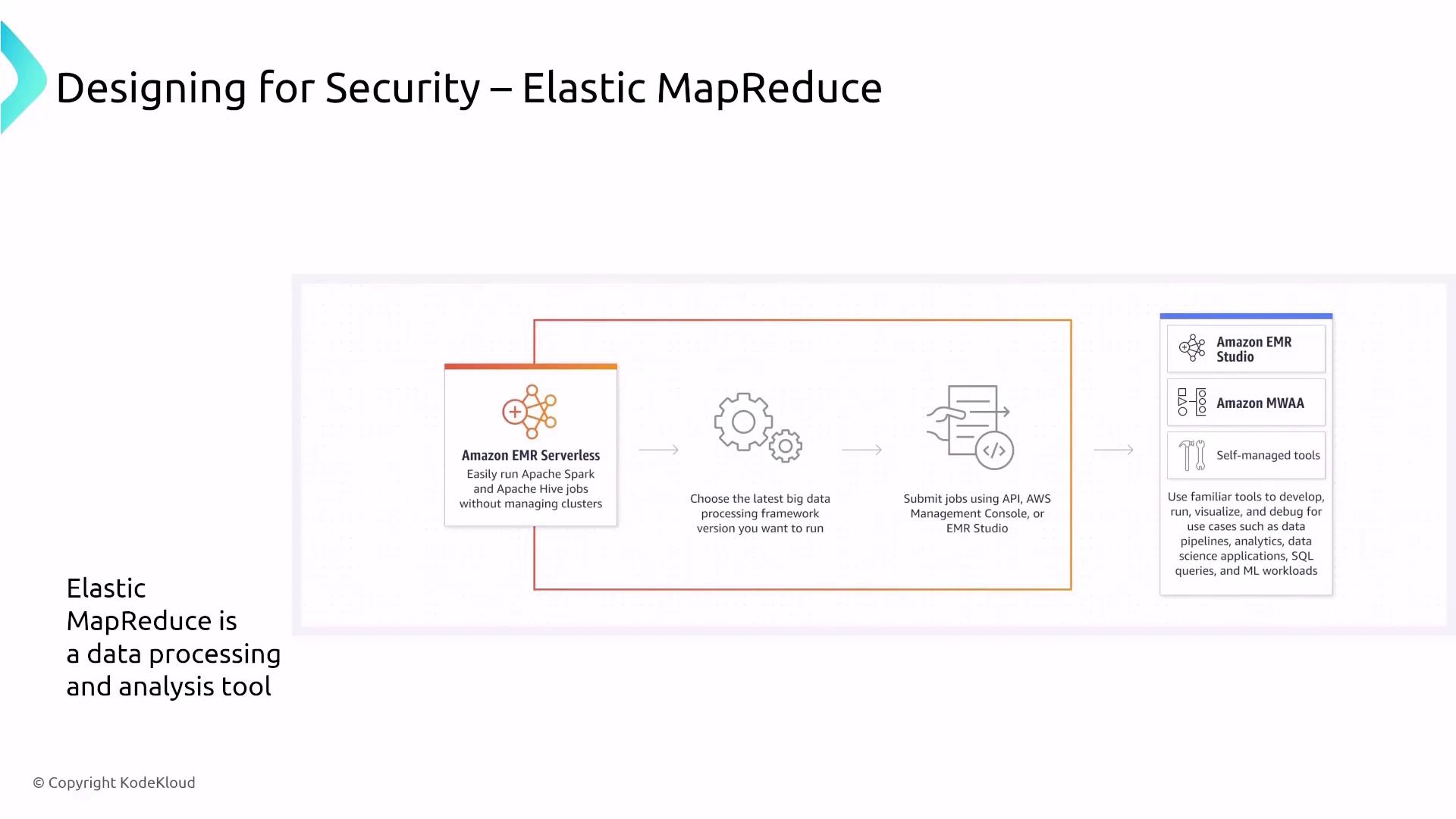

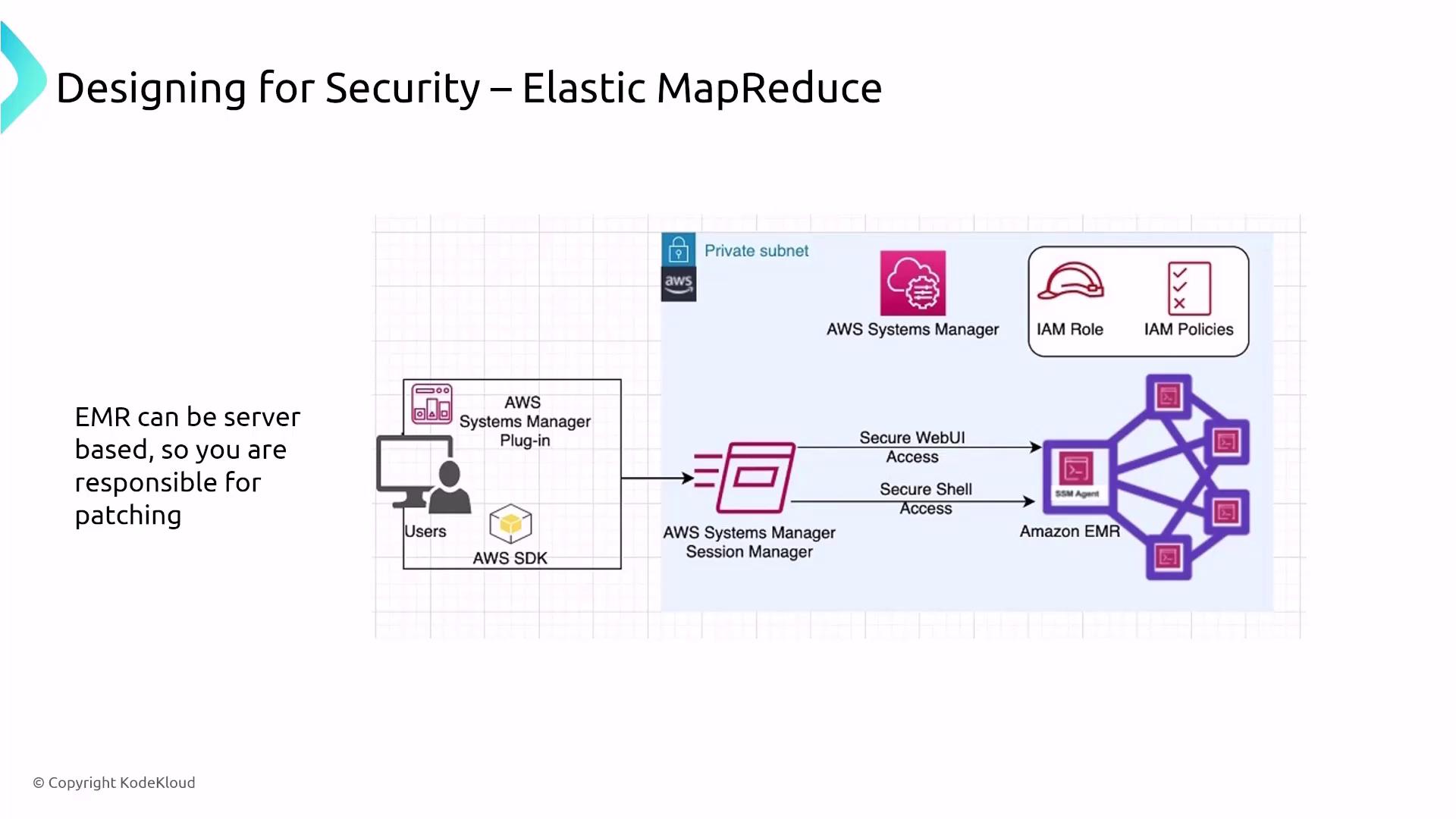

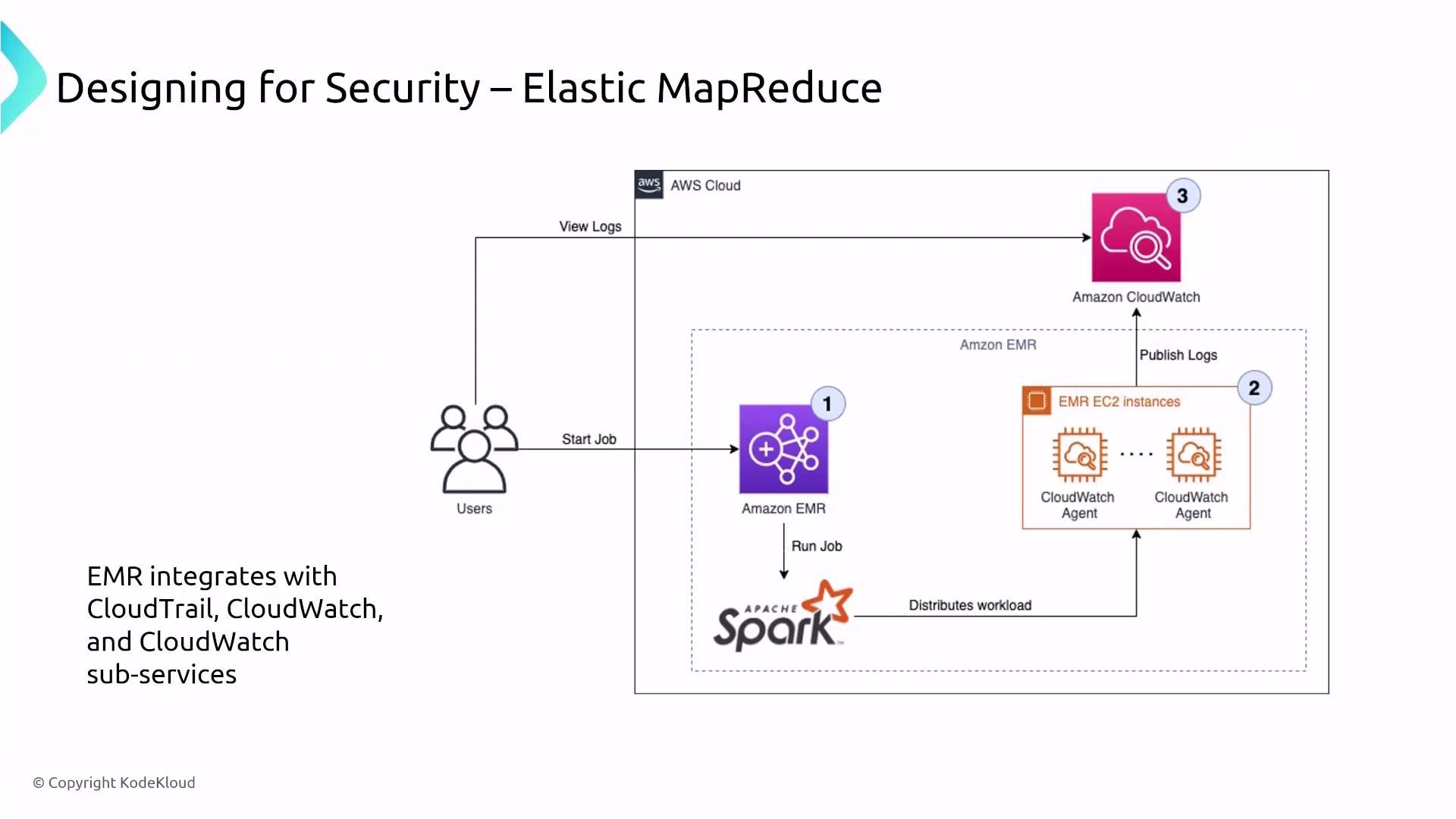

Elastic MapReduce (EMR) and Its Security Considerations

Amazon EMR is a managed platform for large-scale data processing and batch analysis that runs frameworks such as Apache Spark and Hadoop on clusters of EC2 instances. An EMR cluster consists of a primary node (historically called the master node) that coordinates the cluster, core nodes that store data in HDFS and run tasks, and optional task nodes that add compute capacity without storing data. Although EMR Serverless is now available, most exam-relevant deployments still involve traditional cluster-based EMR.
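The node roles map directly onto the instance groups passed to the boto3 EMR client's `run_job_flow`. The sketch below is illustrative only. The instance type, counts, cluster name, and release label are placeholders. Running task nodes on Spot is a common but optional choice. The `SecurityConfiguration` name assumes you have already created an EMR security configuration with your encryption and authentication settings. The API still uses `MASTER` for the primary node's role.

```python
# Minimal sketch: instance groups and run_job_flow kwargs for an EMR cluster.
# Assumption: the request is passed to boto3.client("emr").run_job_flow(**request).

def build_instance_groups(instance_type="m5.xlarge", core_count=2, task_count=0):
    """Primary (MASTER), core, and optional task groups."""
    groups = [
        {"Name": "Primary", "InstanceRole": "MASTER", "Market": "ON_DEMAND",
         "InstanceType": instance_type, "InstanceCount": 1},
        # Core nodes hold HDFS data, so they stay On-Demand here.
        {"Name": "Core", "InstanceRole": "CORE", "Market": "ON_DEMAND",
         "InstanceType": instance_type, "InstanceCount": core_count},
    ]
    if task_count > 0:
        # Task nodes store no HDFS data, which makes Spot capacity a common fit.
        groups.append({"Name": "Task", "InstanceRole": "TASK", "Market": "SPOT",
                       "InstanceType": instance_type, "InstanceCount": task_count})
    return groups


def build_cluster_request(name, release_label, security_configuration,
                          core_count=2, task_count=0, instance_type="m5.xlarge"):
    """Return run_job_flow kwargs that attach an existing security configuration."""
    return {
        "Name": name,
        "ReleaseLabel": release_label,
        "Instances": {
            "InstanceGroups": build_instance_groups(instance_type, core_count, task_count),
            "KeepJobFlowAliveWhenNoSteps": True,
        },
        "SecurityConfiguration": security_configuration,  # created beforehand
        "JobFlowRole": "EMR_EC2_DefaultRole",  # instance profile for cluster nodes
        "ServiceRole": "EMR_DefaultRole",      # role EMR assumes to manage the cluster
    }
```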

This overview has covered the security features built into several AWS data services. By understanding encryption, access controls, and monitoring mechanisms across services from Kinesis to EMR, you are better equipped to architect secure data pipelines and processing frameworks on AWS. For best practices and service-specific guidance, explore the official documentation and related resources. Happy architecting!