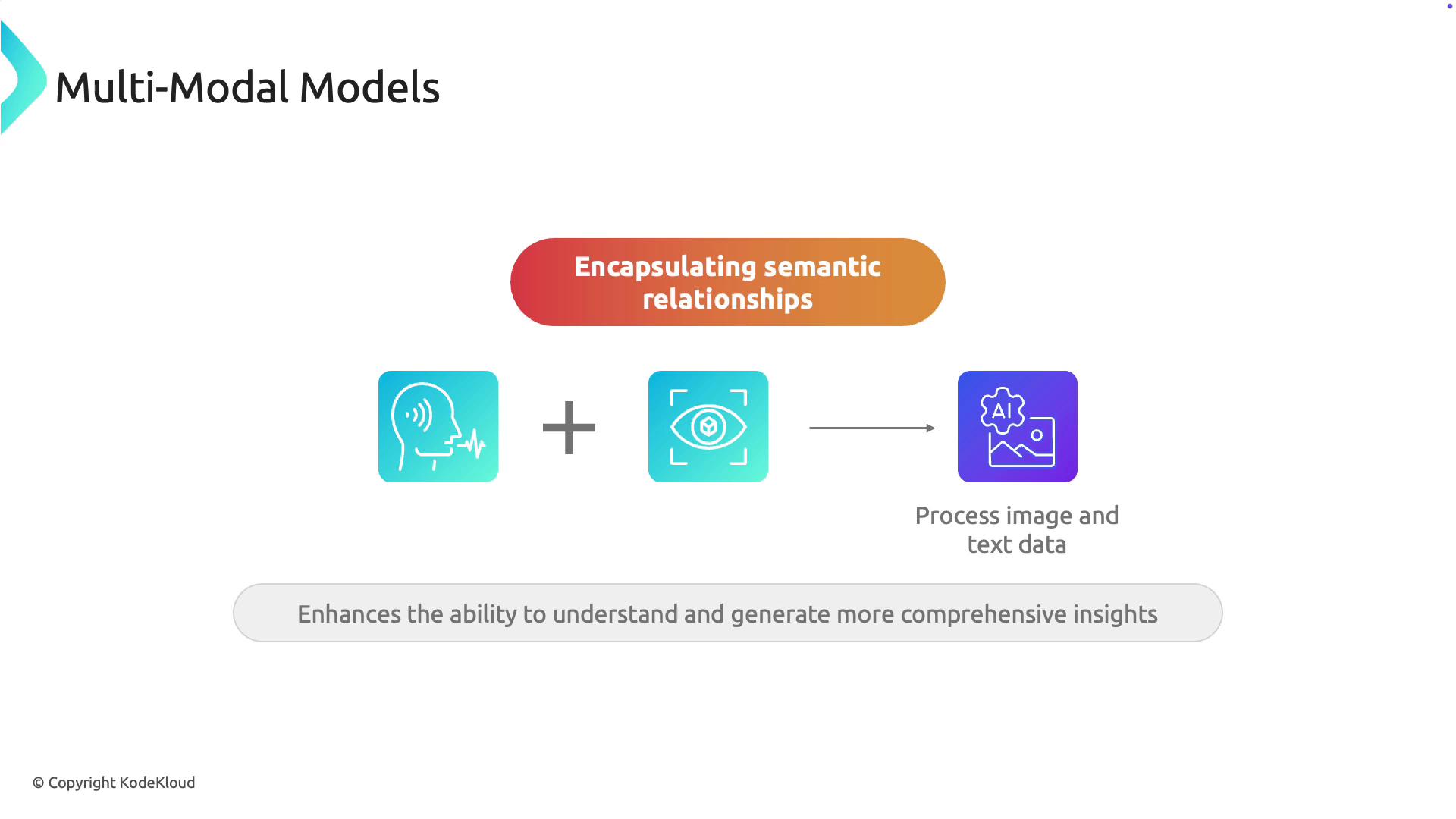

Multimodal models process several data types at once, such as images and text. Combining language and vision capabilities makes them versatile across a range of computer vision tasks. When a multimodal model processes content, such as a picture of a fruit accompanied by a label reading “apple”, it draws on both the visual and the textual context. Using both sources together tends to produce more informed and accurate interpretations than either input alone.

Core Capabilities

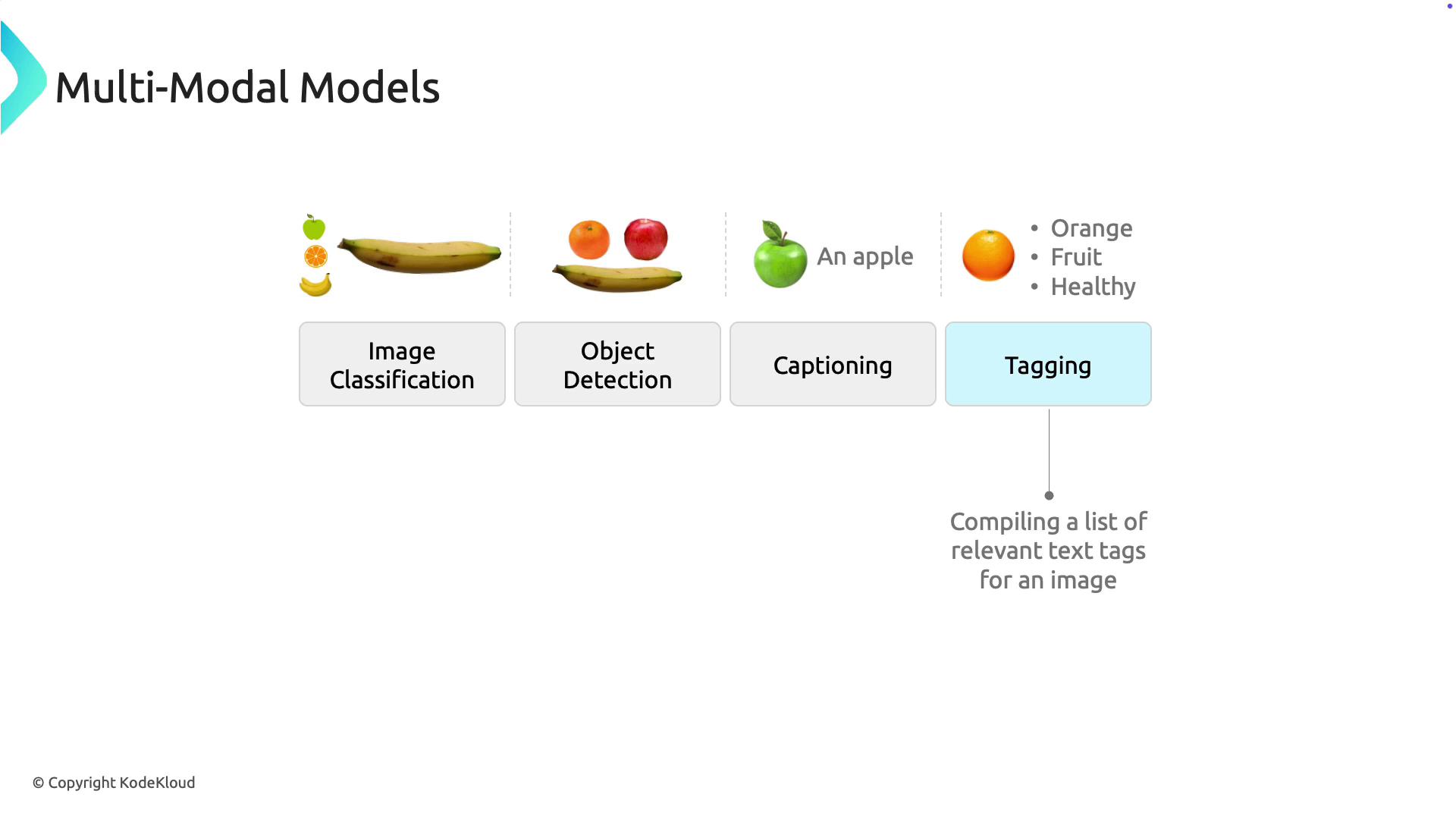

Multimodal models can execute several tasks concurrently:

- Image Classification: Automatically categorizes images into predefined classes.

- Object Detection: Identifies and locates objects within an image.

- Image Captioning: Generates descriptive captions that reflect the content of an image.

- Tagging: Associates relevant keywords with images to improve searchability and further training (e.g., tagging an image of an orange with “orange, fruit, healthy, citrus”).
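To make the four tasks concrete, the sketch below models the kind of result each one might return for a single image. The class names (`Detection`, `ImageAnalysis`), the `has_tag` helper, and every value except the tag list are hypothetical and chosen only for illustration; they do not correspond to any particular service's response format.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Detection:
    """One object found by object detection (hypothetical shape)."""
    label: str
    box: Tuple[int, int, int, int]  # (x, y, width, height) in pixels
    confidence: float


@dataclass
class ImageAnalysis:
    """Combined result of the four tasks for one image (hypothetical shape)."""
    category: str                # image classification: one predefined class
    detections: List[Detection]  # object detection: what is where
    caption: str                 # image captioning: a descriptive sentence
    tags: List[str]              # tagging: keywords that aid search


def has_tag(analysis: ImageAnalysis, keyword: str) -> bool:
    """Case-insensitive tag lookup, the basis of keyword search."""
    return keyword.lower() in (tag.lower() for tag in analysis.tags)


# Illustrative values only; the tags mirror the orange example above.
example = ImageAnalysis(
    category="fruit",
    detections=[Detection(label="orange", box=(40, 32, 180, 176), confidence=0.97)],
    caption="An orange on a wooden table",
    tags=["orange", "fruit", "healthy", "citrus"],
)

print(has_tag(example, "Citrus"))
print(has_tag(example, "apple"))
```

Classification yields a single class for the whole image, detection adds locations, captioning produces free text, and tagging produces keywords that a search index can match against.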

Model Architecture

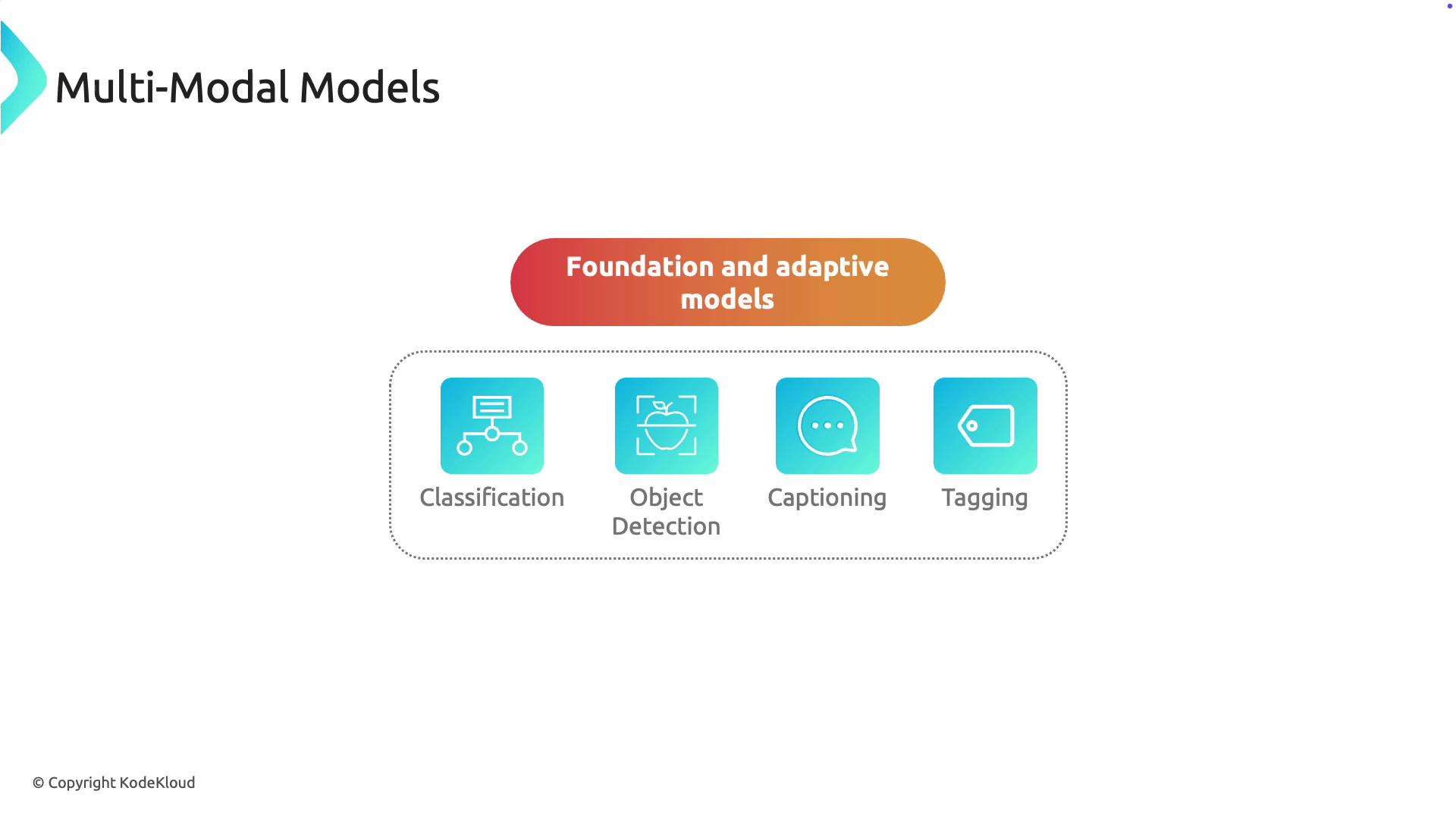

Multimodal models typically consist of two main components:

- Foundation Model: A model pre-trained on extensive datasets, providing general knowledge of image and text representations.

- Adaptive Model: A fine-tuned version of the foundation model, optimized for specific tasks such as image classification, object detection, captioning, or tagging.
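The split between a general foundation model and a task-specific adaptive model can be sketched with a toy example. This is a deliberate simplification under stated assumptions: `foundation_features` is a hypothetical stand-in for a large pre-trained encoder, and the "adaptation" step here fits a small nearest-centroid head on top of frozen features, which is only one of several ways a foundation model might be adapted to a task.

```python
from typing import Dict, List, Tuple


def foundation_features(pixels: List[float]) -> List[float]:
    """Hypothetical stand-in for a frozen, pre-trained encoder.

    A real foundation model is a large neural network; here the
    "general-purpose" features are just mean brightness and value spread.
    """
    mean = sum(pixels) / len(pixels)
    spread = max(pixels) - min(pixels)
    return [mean, spread]


def fit_head(examples: List[Tuple[List[float], str]]) -> Dict[str, List[float]]:
    """Adapt to one task: compute a feature centroid per class label."""
    sums: Dict[str, List[float]] = {}
    counts: Dict[str, int] = {}
    for pixels, label in examples:
        feats = foundation_features(pixels)
        acc = sums.setdefault(label, [0.0] * len(feats))
        for i, value in enumerate(feats):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def classify(head: Dict[str, List[float]], pixels: List[float]) -> str:
    """Predict the label whose centroid is closest to the image's features."""
    feats = foundation_features(pixels)

    def squared_distance(centroid: List[float]) -> float:
        return sum((a - b) ** 2 for a, b in zip(feats, centroid))

    return min(head, key=lambda label: squared_distance(head[label]))


# Tiny made-up "images" (four pixel intensities each) for a bright/dark task.
training = [
    ([0.9, 0.8, 0.95, 0.85], "bright"),
    ([0.7, 0.9, 0.8, 0.8], "bright"),
    ([0.1, 0.2, 0.15, 0.05], "dark"),
    ([0.3, 0.1, 0.2, 0.2], "dark"),
]
head = fit_head(training)
print(classify(head, [0.9, 0.9, 0.8, 1.0]))
print(classify(head, [0.0, 0.1, 0.1, 0.2]))
```

The point of the design is reuse: the feature extractor stays the same across tasks, and only the small task-specific part is fitted on labeled examples.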

Microsoft’s Florence model serves as a prominent example of a foundation model. Trained on millions of images coupled with text captions from the internet, Florence comprises two main parts:

- Language Encoder

- Image Encoder
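The text above names the two encoders but does not describe how their outputs are combined. A common pattern in image-text models, assumed here purely for illustration, is for both encoders to map their input into a shared vector space where matching images and captions end up close together. In the sketch below, `encode_image` and `encode_text` are hypothetical lookups over hand-written vectors, not real encoders and not Florence's API.

```python
import math
from typing import Dict, List

# Hand-written toy embeddings standing in for real encoder outputs.
TEXT_EMBEDDINGS: Dict[str, List[float]] = {
    "apple": [0.9, 0.1, 0.2],
    "orange": [0.2, 0.9, 0.1],
    "car": [0.1, 0.1, 0.9],
}
IMAGE_EMBEDDINGS: Dict[str, List[float]] = {
    "apple_photo": [0.8, 0.2, 0.1],
}


def encode_text(text: str) -> List[float]:
    """Hypothetical language encoder: text -> vector."""
    return TEXT_EMBEDDINGS[text]


def encode_image(image_name: str) -> List[float]:
    """Hypothetical image encoder: image -> vector in the same space."""
    return IMAGE_EMBEDDINGS[image_name]


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_label(image_name: str, labels: List[str]) -> str:
    """Pick the label whose text embedding is most similar to the image's."""
    image_vec = encode_image(image_name)
    return max(labels, key=lambda label: cosine_similarity(image_vec, encode_text(label)))


print(best_label("apple_photo", ["apple", "orange", "car"]))
```

Because labels are compared as text embeddings rather than fixed class indices, the same pair of encoders can in principle score an image against any set of candidate labels or captions.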