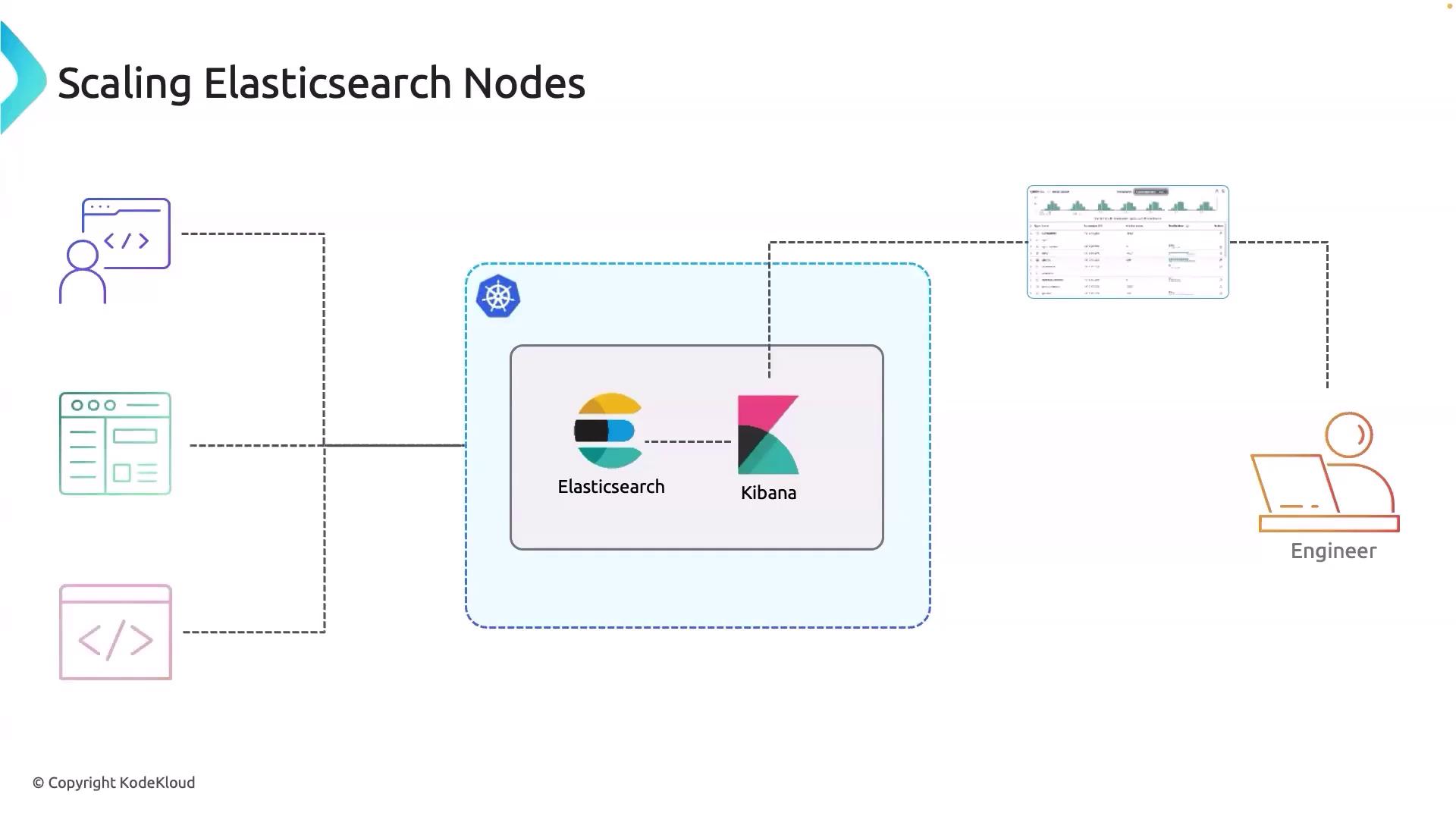

Hello and welcome back! In this article, we discuss key challenges and strategies for scaling Elasticsearch nodes within your Kubernetes cluster. In our current setup, both Elasticsearch and Kibana are deployed on Kubernetes. As an SRE or DevOps engineer, you rely on Kibana to monitor the health of your Kubernetes cluster and applications, while external developers use Elasticsearch and Kibana to build dashboards and execute CLI commands.Documentation Index

Fetch the complete documentation index at: https://notes.kodekloud.com/llms.txt

Use this file to discover all available pages before exploring further.

Scaling Elasticsearch nodes is essential when:

- The data volume overwhelms current nodes, resulting in performance degradation.

- High query loads force nodes to work inefficiently.

- Performance bottlenecks indicate nodes are nearing their operational limits.

- Ingestion rates exceed the capacity of existing nodes.

- Redundancy and high availability are needed to prevent data loss and downtime.

- Geographical distribution demands optimized performance across regions.

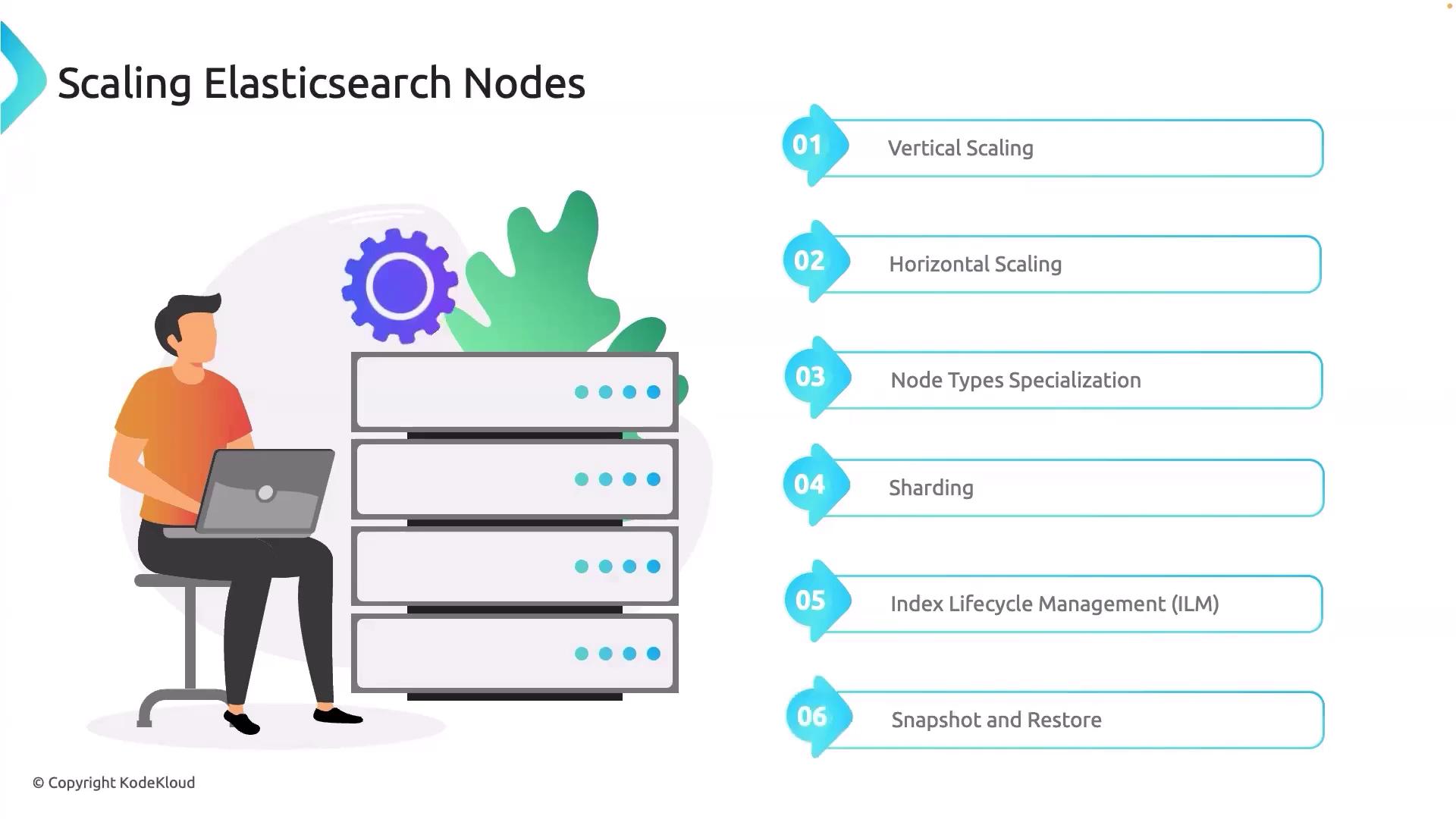

Scaling Strategies

There are two principal approaches when it comes to scaling Elasticsearch nodes:- Vertical Scaling: Increase the resources (CPUs, memory) of existing nodes.

- Horizontal Scaling: Add more nodes to distribute the load more evenly across the cluster.

- Sharding: Distributes data across multiple nodes to enhance search and indexing performance.

- Index Lifecycle Management (ILM): Efficiently manages the lifecycle of your data indices.

- Snapshot and Restore: Provides reliable backup and recovery solutions to maintain data integrity during scaling operations.

Start by experimenting with node type specialization. If further improvements are required, proceed with sharding and lifecycle management techniques, followed by the implementation of snapshot and restore processes. Managed services like Elastic Cloud often primarily utilize vertical and horizontal scaling to expand clusters. More detailed guidance on Elastic Cloud will be covered in future lessons.