Hello and welcome back! In our previous article, we successfully deployed a Fluent Bit application that forwarded logs to Elasticsearch. Now, we will dive into visualizing these logs using Kibana. This article is organized into three parts. In Part 1, we will explore Kibana by creating an index and reviewing the logs. We will evaluate whether the current log structure is sufficient or if it requires improvements. Let’s get started by accessing Kibana in our Kubernetes cluster.

Accessing Kibana in Your Kubernetes Cluster

First, switch to the appropriate namespace in your cluster.

Navigating Kibana and Creating an Index

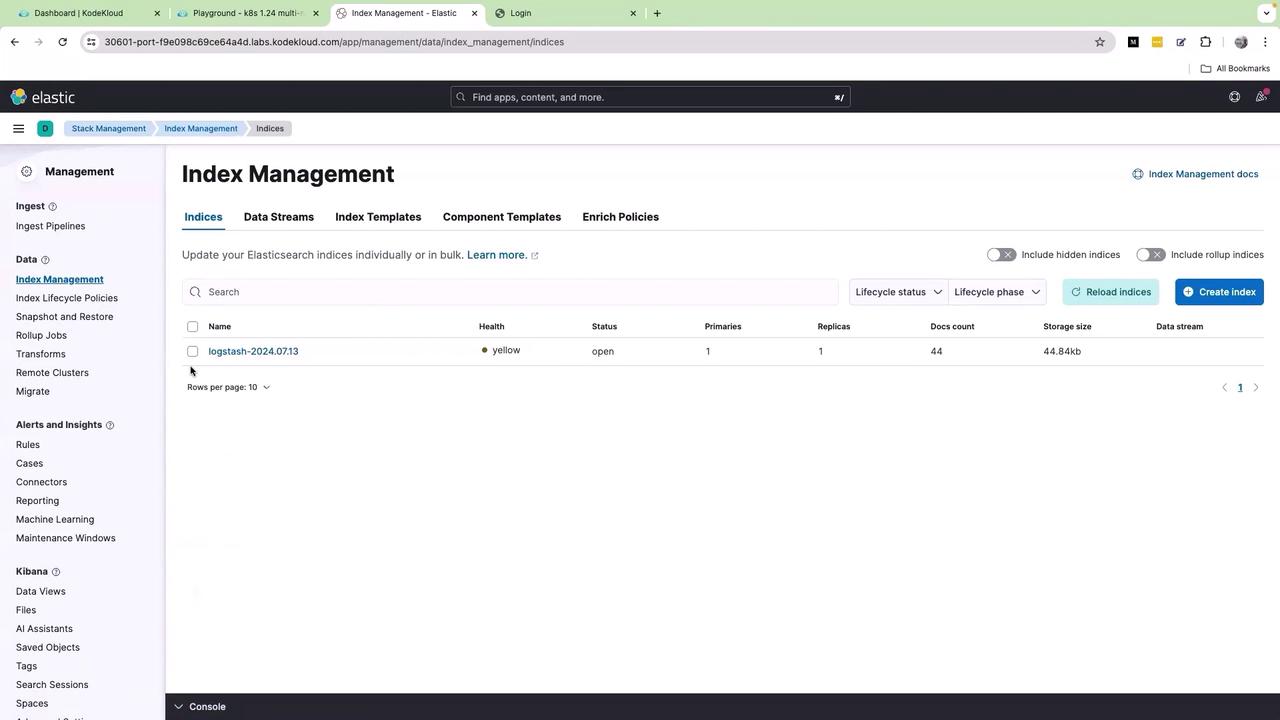

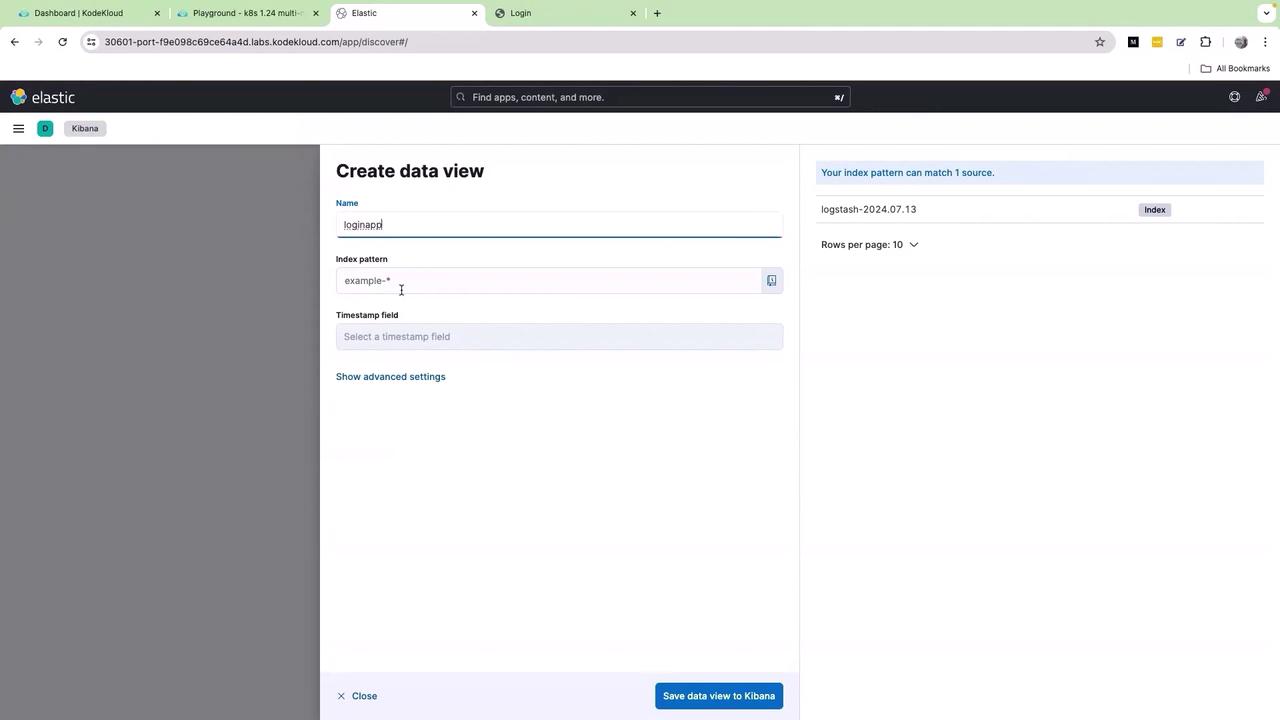

Once the Kibana UI loads, click the menu icon (the three bars) and navigate to Stack Management → Index Management. Here, you will see an index populated by Fluent Bit.
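The same check can be made outside the UI. As a minimal sketch, assuming Elasticsearch is reachable over plain HTTP at a URL you supply and that the Fluent Bit index name starts with a known prefix (the prefix `fluent-bit` and the sample rows below are illustrative assumptions, not values taken from this setup), the following Python queries the `_cat/indices` API with `format=json` and filters the result:

```python
import json
import urllib.request


def fetch_indices(base_url):
    """Return the parsed JSON list from Elasticsearch's _cat/indices API."""
    # format=json makes the _cat API return a list of objects
    # instead of a plain-text table.
    with urllib.request.urlopen(f"{base_url}/_cat/indices?format=json") as resp:
        return json.load(resp)


def indices_with_prefix(indices, prefix):
    """Keep only rows whose index name starts with the given prefix."""
    return [
        {"index": row["index"], "docs": int(row["docs.count"])}
        for row in indices
        if row["index"].startswith(prefix)
    ]


# Hypothetical rows, shaped like _cat/indices?format=json output.
sample = [
    {"index": "fluent-bit", "health": "yellow", "docs.count": "1520"},
    {"index": ".kibana_1", "health": "green", "docs.count": "12"},
]
print(indices_with_prefix(sample, "fluent-bit"))
```

Against a live cluster you would pass the result of `fetch_indices(...)` instead of `sample`; a document count that keeps growing between calls suggests Fluent Bit is still shipping logs.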

Real-Time Log Visualization

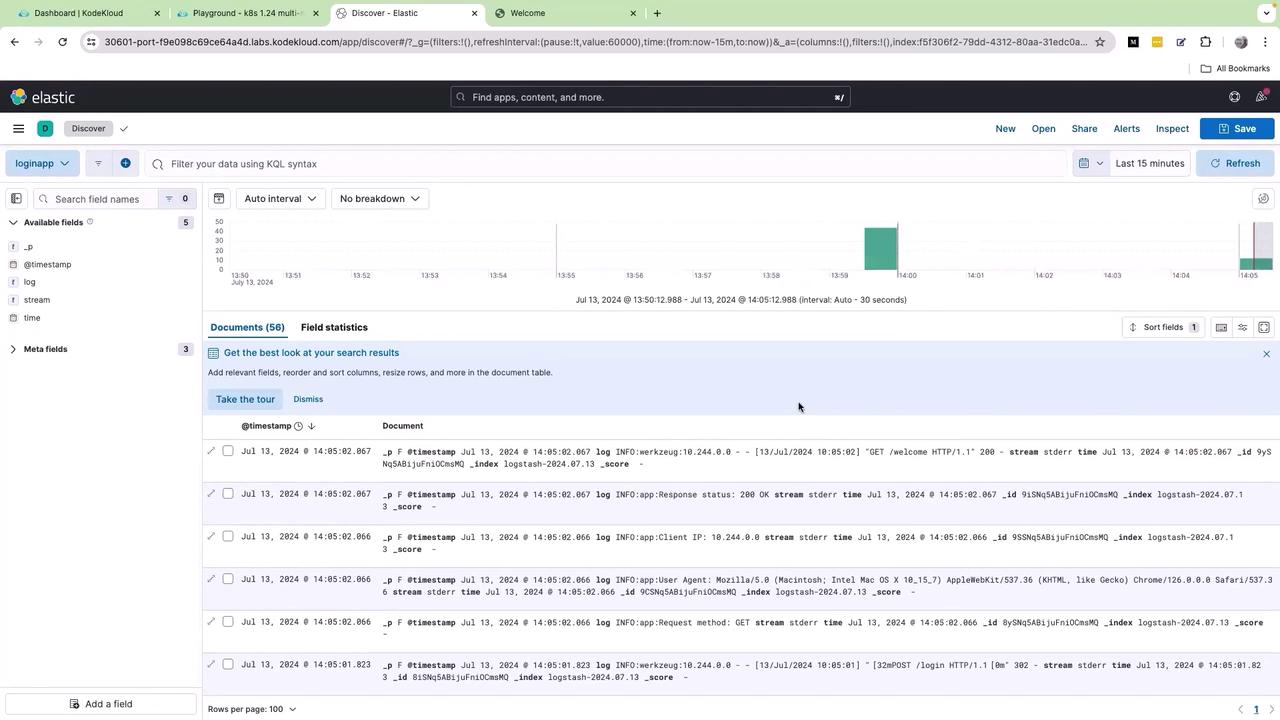

Once you save your changes, Kibana redirects you to the view where incoming logs are displayed in real time.

Analyzing Log Structure in Kibana

Review the available log fields such as timestamp, log, stream, and time. Click on the log field to view its values, which include various log levels such as info and warnings. By selecting the lens icon, you can create a graph based on the log.keyword field.

However, the resulting graph tends to be cluttered, and changing the graph type (such as switching to lines or vertical bars) does not resolve this. The logs remain consolidated in a single field because of the way the application sends them, so every distinct message shows up as its own value. Without additional parsing, building a clear and useful dashboard is difficult: you would have to rely on extensive KQL queries or regular expressions to tell the log details apart.
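To see why grouping on the whole log string is unhelpful, here is a small Python sketch. The sample messages are hypothetical and the `Counter` only imitates what a per-value breakdown of log.keyword does; it is not how Kibana computes the graph internally.

```python
from collections import Counter

# Hypothetical raw values of the log field; real messages will differ.
raw_logs = [
    "2024-05-01 10:00:01 INFO user 17 logged in",
    "2024-05-01 10:00:02 INFO user 42 logged in",
    "2024-05-01 10:00:03 WARNING disk usage at 91%",
    "2024-05-01 10:00:04 INFO user 17 logged out",
]

# Grouping on the full string: every distinct message is its own bucket,
# so the number of buckets grows with the number of documents.
buckets = Counter(raw_logs)
print(len(buckets), "buckets for", len(raw_logs), "documents")
```

Because timestamps and identifiers are embedded in each message, almost no two values are equal, which is what makes the chart unreadable regardless of its type.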

Improving the readability of log data may require advanced log parsing techniques to segregate log details into meaningful fields. This will simplify querying and dashboard creation in Kibana.
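As a rough illustration of what such parsing achieves, the sketch below splits a raw line into separate fields with a regular expression. The line layout (`<date> <time> <LEVEL> <message>`) and the field names are assumptions made for this example; the actual application format may differ, and in practice the splitting would more likely happen in the log pipeline before indexing rather than in Python.

```python
import re
from collections import Counter

# Assumed layout: "<date> <time> <LEVEL> <message>". Adjust to the real format.
LINE_RE = re.compile(
    r"^(?P<timestamp>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}) "
    r"(?P<level>[A-Z]+) "
    r"(?P<message>.*)$"
)


def parse_log(line):
    """Split one raw log line into named fields; return None if it does not match."""
    match = LINE_RE.match(line)
    return match.groupdict() if match else None


# Hypothetical sample lines, including one that does not fit the layout.
lines = [
    "2024-05-01 10:00:01 INFO user 17 logged in",
    "2024-05-01 10:00:03 WARNING disk usage at 91%",
    "2024-05-01 10:00:04 INFO user 17 logged out",
    "unstructured output without a level",
]

parsed = [fields for fields in map(parse_log, lines) if fields is not None]

# With a dedicated level field, grouping yields a handful of meaningful buckets.
level_counts = Counter(fields["level"] for fields in parsed)
print(level_counts)
```

Once the level lives in its own field, a chart grouped by that field has one bucket per level instead of one per message, and filters become simple field comparisons.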