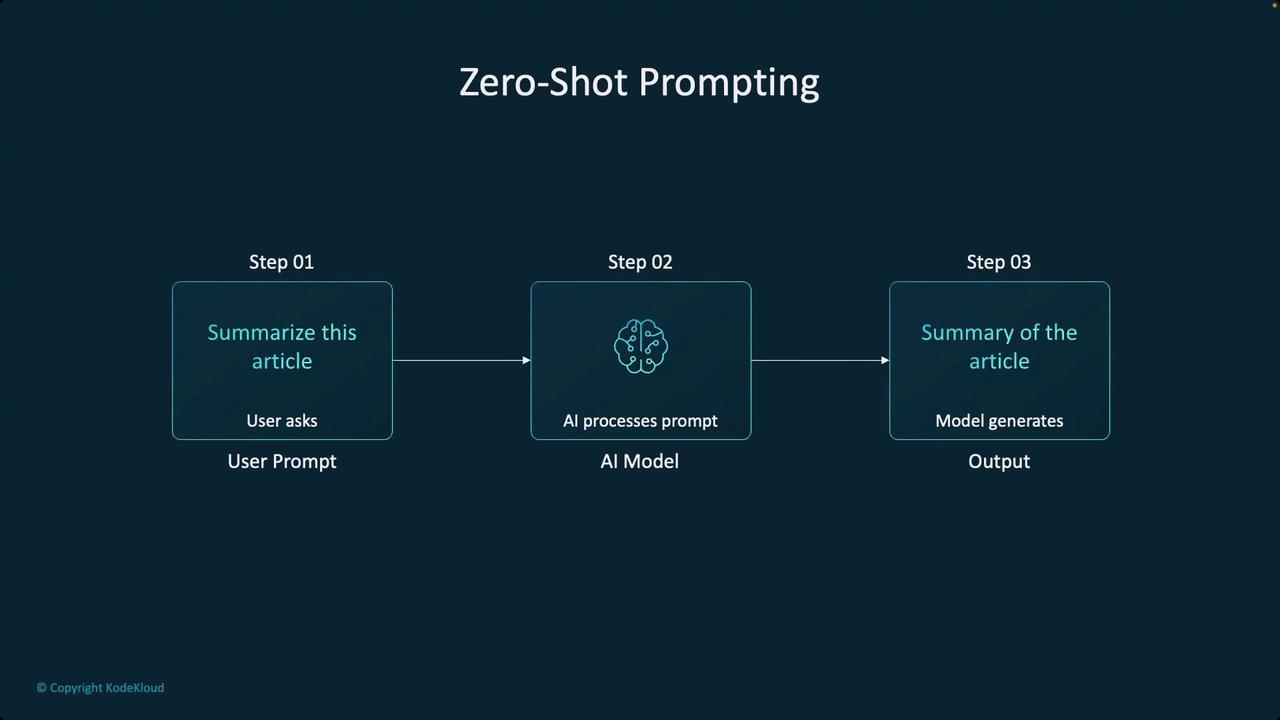

In this article, we explore zero-shot prompting, a technique for interacting with large language models (LLMs) and foundation models without providing prior examples. As transformers and other foundation models continue to reshape artificial intelligence, it becomes crucial to ensure these models understand our specific use cases and datasets. Generally, there are two primary approaches to tailoring these models:

- Fine-Tuning

  Fine-tuning involves adjusting the neural network’s weights to better capture the intricacies of our data. While this method can be highly effective, it is often expensive and less flexible, and it may not give the best results in every scenario.

- In-Context Learning

  This approach supplies the necessary context directly within the prompt during the interaction. Through careful prompt engineering, we can significantly enhance the model’s output. Although this method has tremendous potential, its power is sometimes underestimated.
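To make the distinction concrete, here is a minimal sketch of how a zero-shot prompt differs from an example-based one. It assumes a hypothetical sentiment-classification task, and the helper names `build_zero_shot_prompt` and `build_few_shot_prompt` are illustrative only, not part of any library. No model is called; the code only assembles the prompt strings you would send to one.

```python
from typing import List, Tuple


def build_zero_shot_prompt(task: str, text: str) -> str:
    """Build a prompt with an instruction and the input, but no worked examples."""
    return f"{task}\n\nText: {text}\nAnswer:"


def build_few_shot_prompt(task: str, examples: List[Tuple[str, str]], text: str) -> str:
    """Build a prompt that places labeled examples before the real input."""
    demos = "\n\n".join(f"Text: {x}\nAnswer: {y}" for x, y in examples)
    return f"{task}\n\n{demos}\n\nText: {text}\nAnswer:"


if __name__ == "__main__":
    # Hypothetical task and inputs, chosen only for illustration.
    task = "Classify the sentiment of the text as positive or negative."
    review = "The course was clear and well paced."

    # Zero-shot: the model sees only the instruction and the input.
    print(build_zero_shot_prompt(task, review))
    print("-----")

    # Example-based: the same instruction, preceded by two labeled examples.
    examples = [
        ("I loved every minute of it.", "positive"),
        ("The labs kept crashing.", "negative"),
    ]
    print(build_few_shot_prompt(task, examples, review))
```

In both cases the model's weights are untouched; the only thing that changes is the text placed in the prompt, which is what separates in-context learning from fine-tuning.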

For further insights into improving AI-driven outputs, consider exploring advanced prompt engineering techniques and comparing the benefits of zero-shot prompting versus example-based prompts.