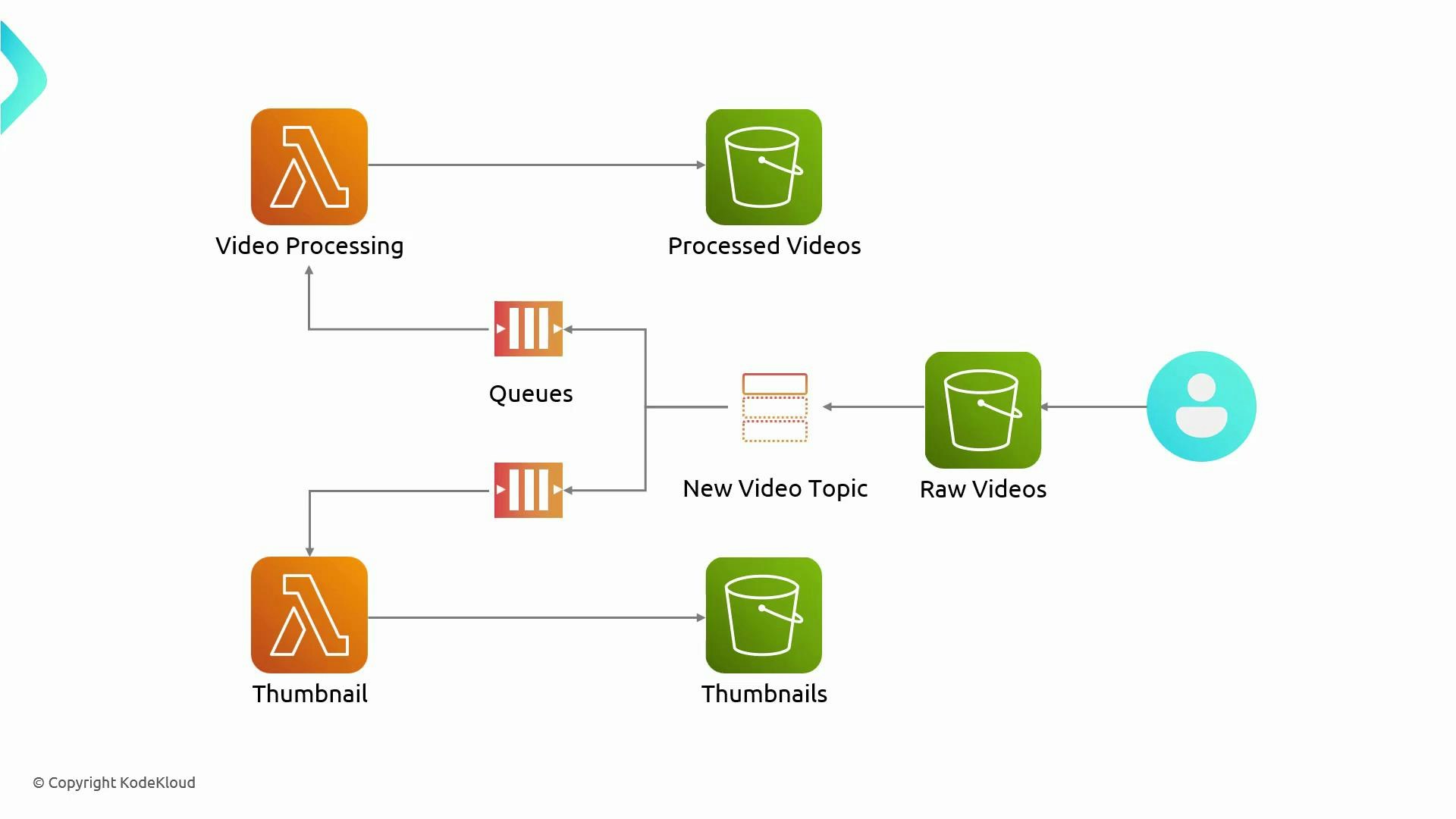

In this guide you’ll learn how to build a simple, serverless video-processing pipeline on AWS using S3 event notifications, Amazon SNS for fan-out, Amazon SQS for durable buffering, and Lambda for processing (transcoding and thumbnail generation). The architecture is scalable, cost-effective, and works well for workloads that need to fan out a single upload to multiple consumers.

High-level flow:

- A user uploads a raw video to an S3 bucket (MP4, MOV, etc.).

- S3 publishes an ObjectCreated event to an SNS topic (video-uploaded).

- SNS fans out the notification to two SQS queues:

  - video-processing: consumed by a Lambda that transcodes the video (e.g., to HLS) and writes outputs to the processed-videos-kodekloud bucket.

  - thumbnail-processing: consumed by a Lambda that generates thumbnails and writes outputs to the thumbnails-kodekloud bucket.

| Resource type | Purpose | Example name(s) |
|---|---|---|
| S3 bucket | Store raw uploads | raw-videos-kodekloud |
| SNS topic | Fan-out notification | video-uploaded |
| SQS queue | Durable queue for each consumer | video-processing, thumbnail-processing |
| Lambda function | Process messages from SQS | video-processing, thumbnail-processing |

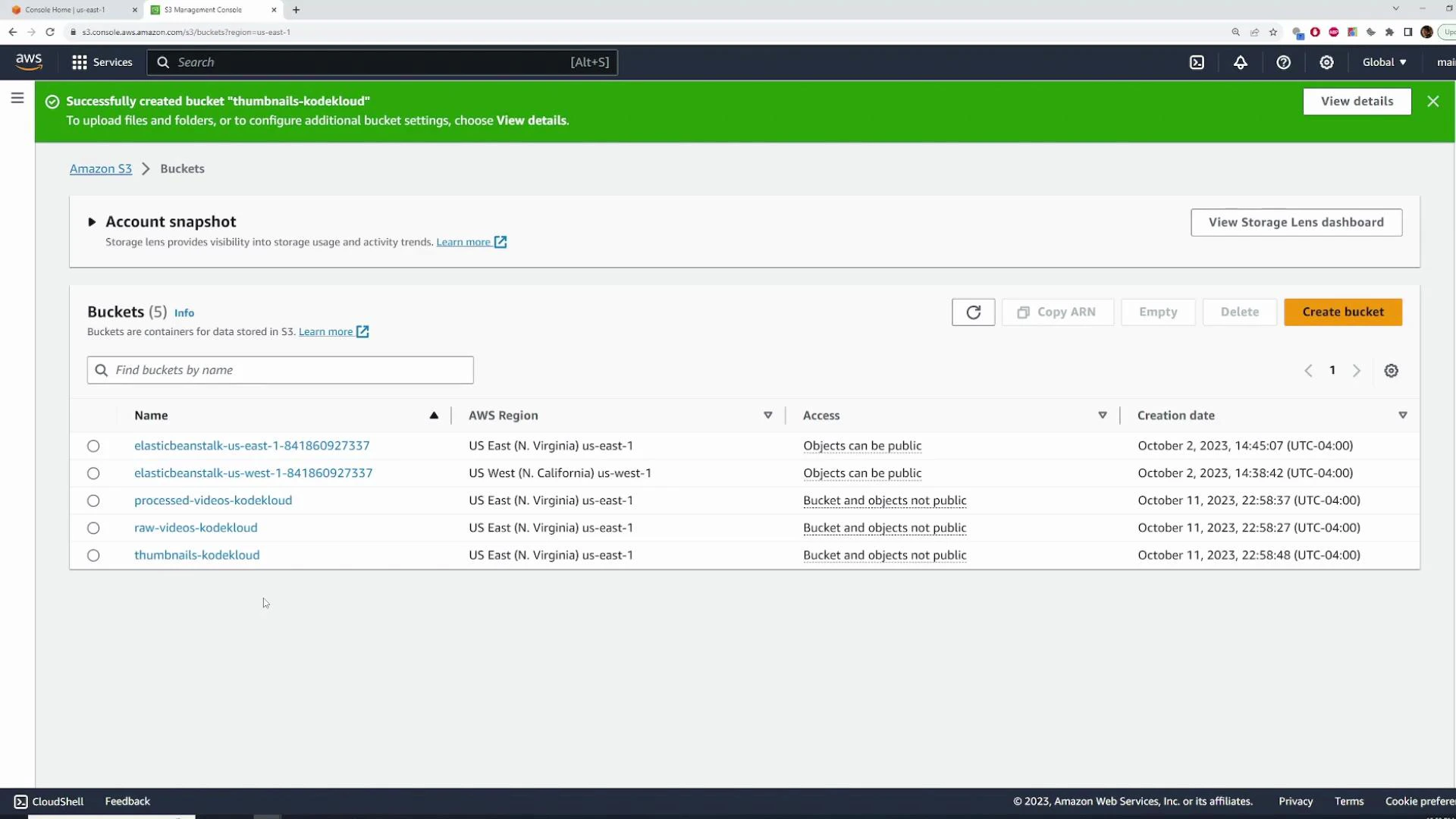

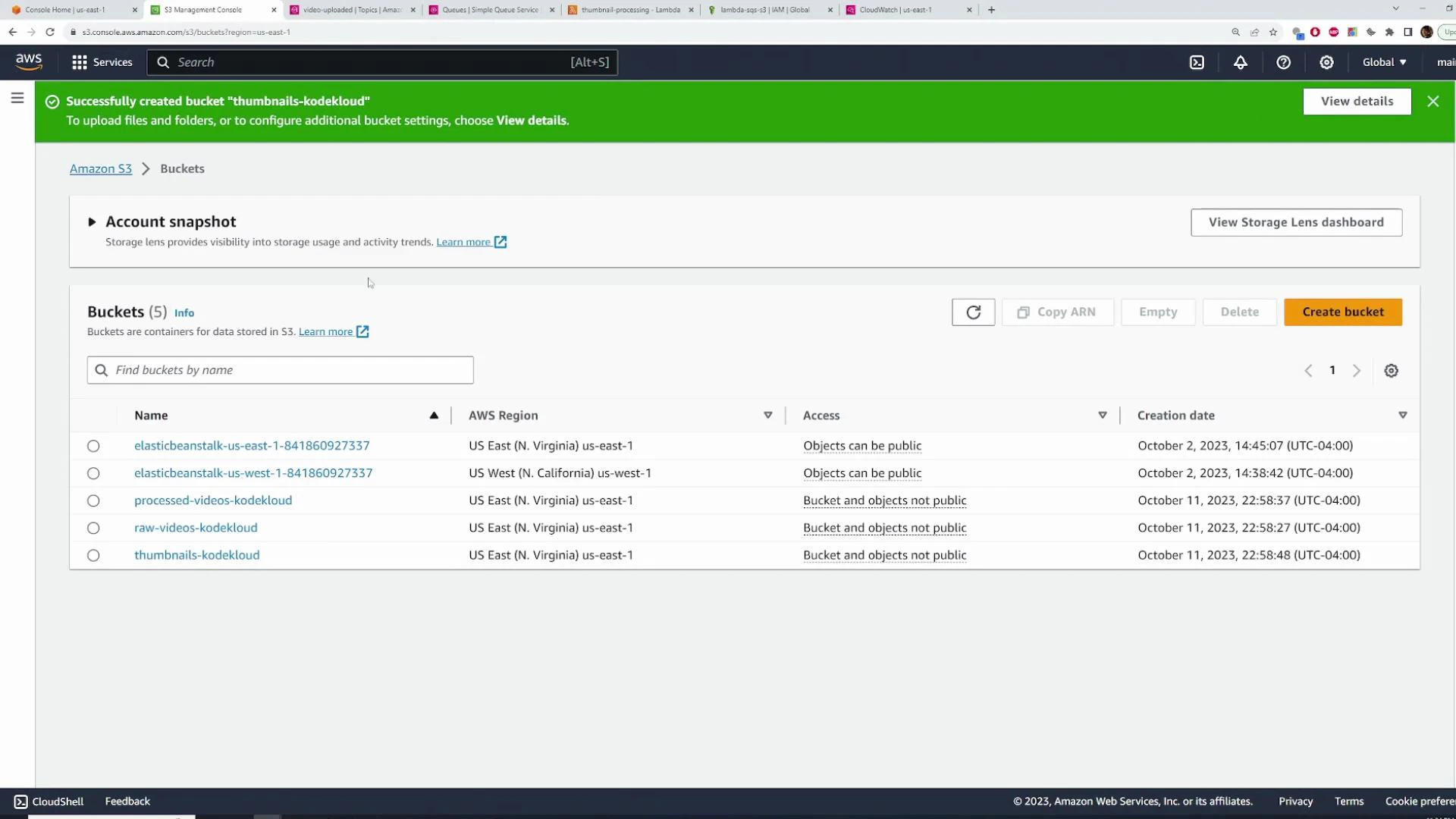

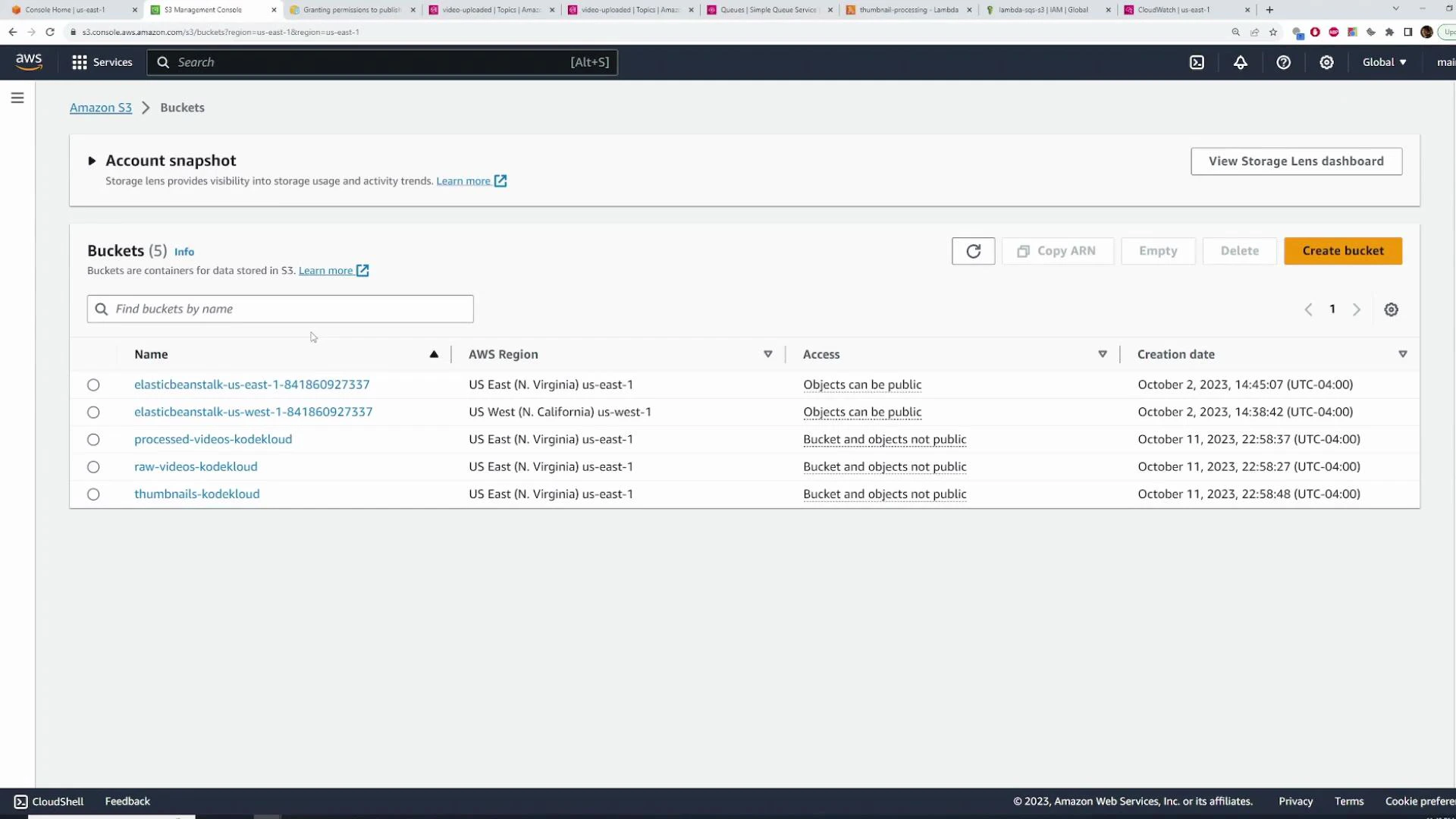

- Create three S3 buckets (you can use default settings for this demo):

  - raw-videos-kodekloud (incoming uploads)

  - processed-videos-kodekloud (transcoded outputs, e.g., HLS files)

  - thumbnails-kodekloud (generated thumbnails)

- In production, consider encryption, lifecycle rules, and access policies.

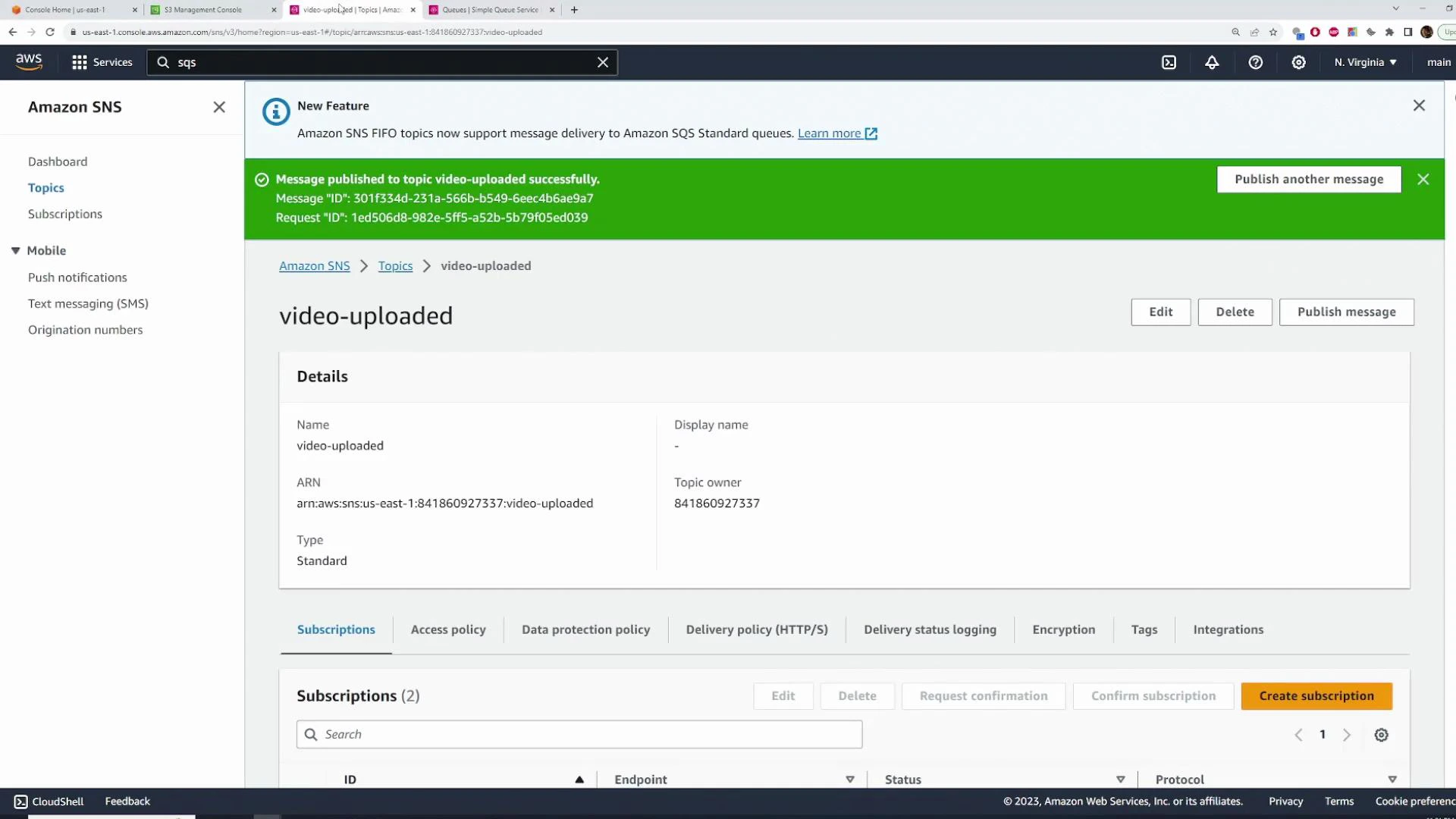

- In the SNS console, create a topic named video-uploaded.

- Choose Standard (FIFO ordering is not required here).

- Keep encryption and access policy defaults for now. You will later add an explicit statement allowing S3 to publish to the topic when you configure S3 event notifications.

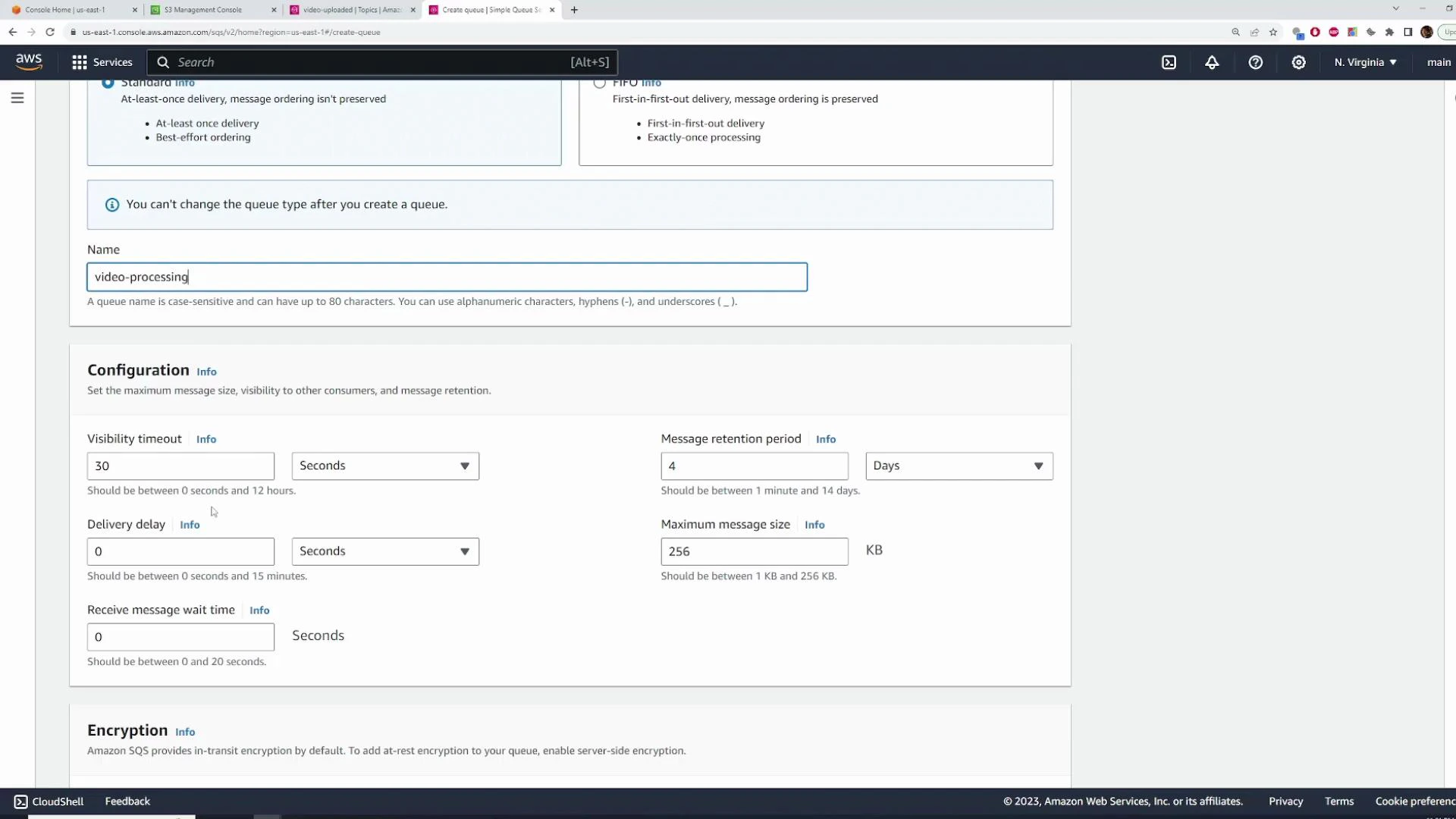

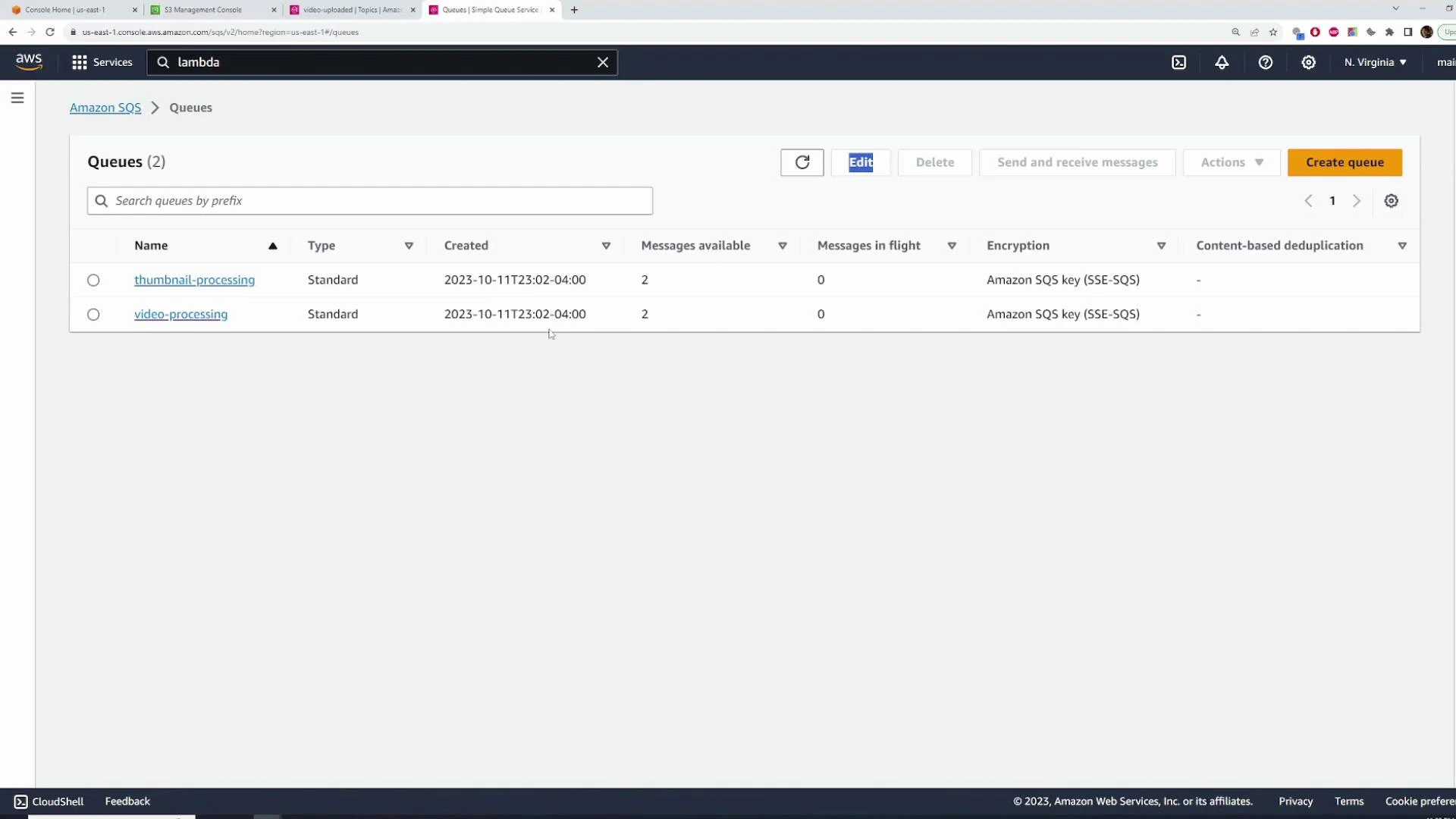

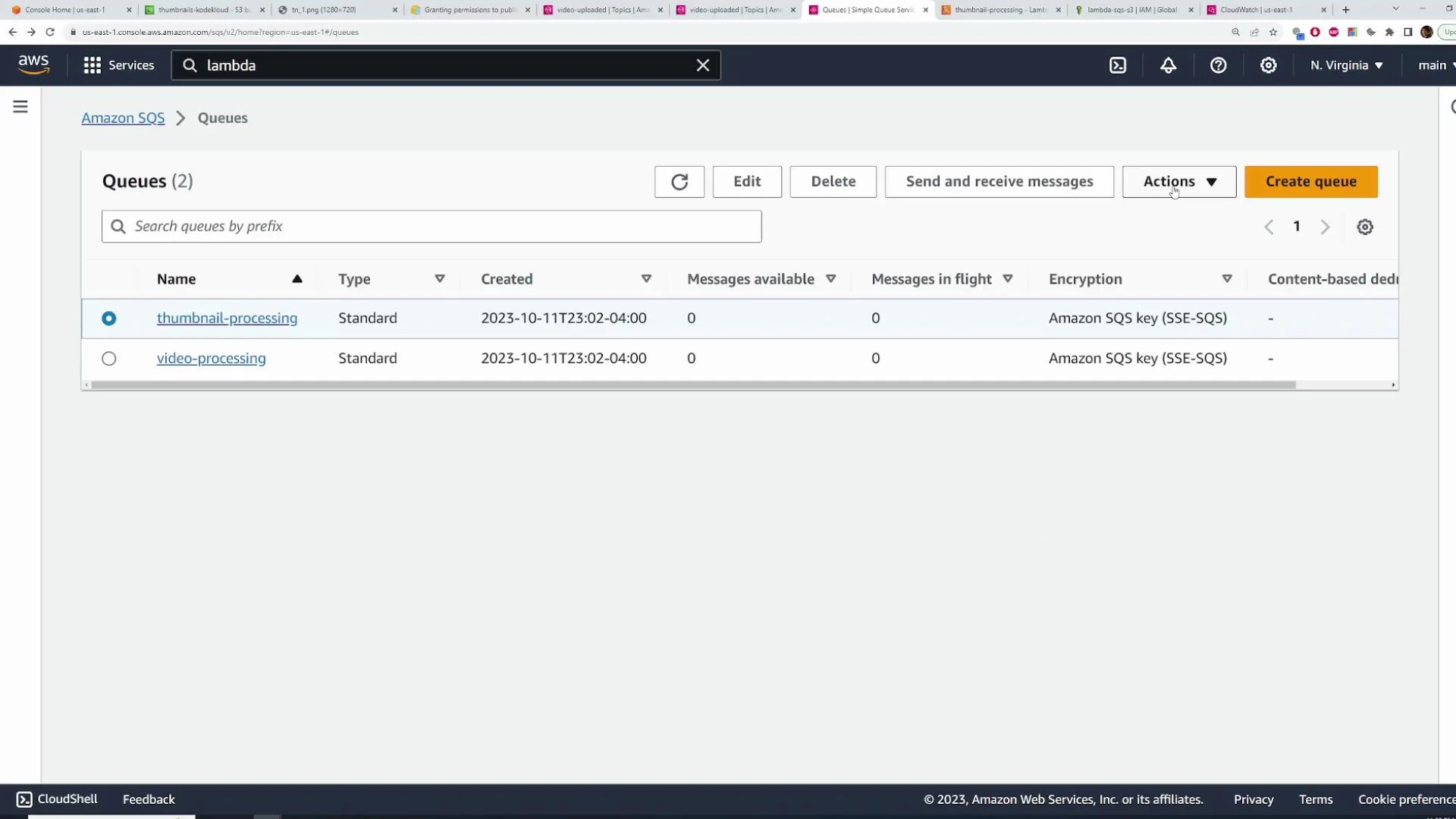

- Create two standard SQS queues:

  - video-processing

  - thumbnail-processing

- Standard queues provide at-least-once delivery and are usually sufficient for this pipeline.

- Use default visibility timeout, message retention, and delivery delay unless your application requires adjustments.

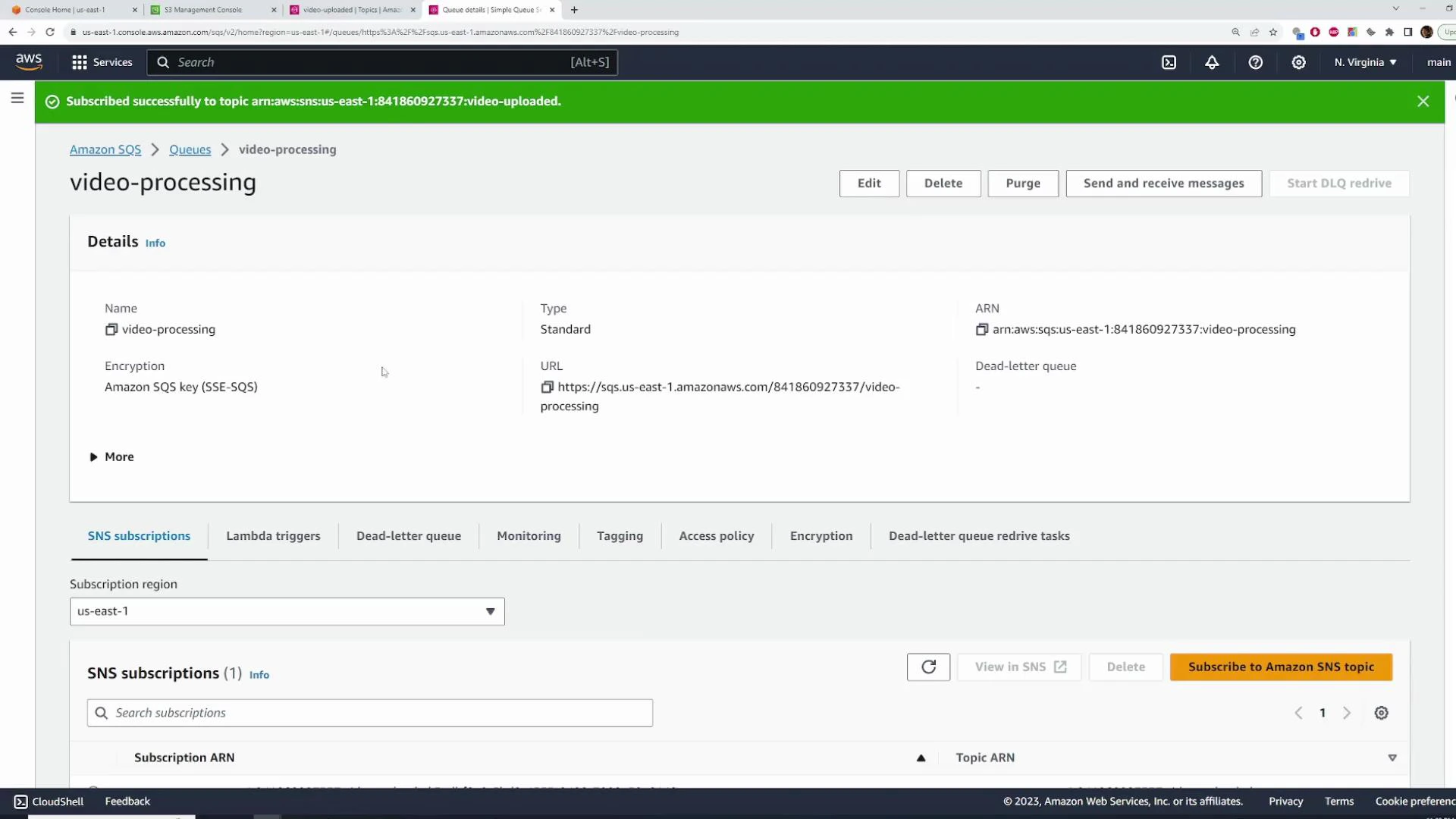

- In the SNS topic, create subscriptions of type Amazon SQS for:

  - arn:aws:sqs:REGION:ACCOUNT_ID:video-processing

  - arn:aws:sqs:REGION:ACCOUNT_ID:thumbnail-processing

- After subscribing, every publish to video-uploaded will be delivered to both queues.

- Use the SNS console’s Publish message feature to verify subscriptions.

- Publish a message body containing the S3 bucket name and object key (JSON is recommended).

- After publishing, both SQS queues should show the message(s) available for consumers.

- Refresh the SQS console — you should see multiple messages in both queues.

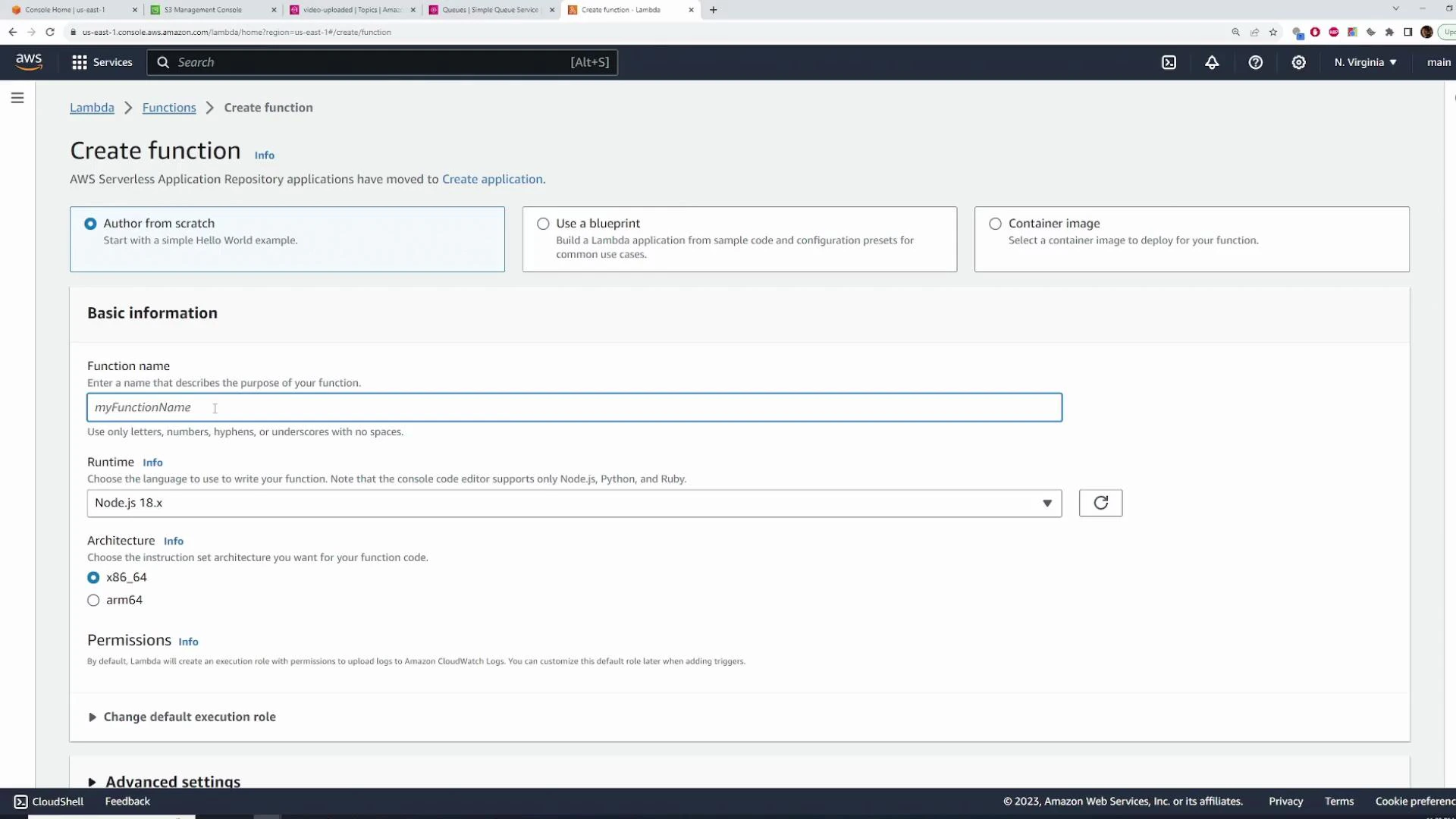

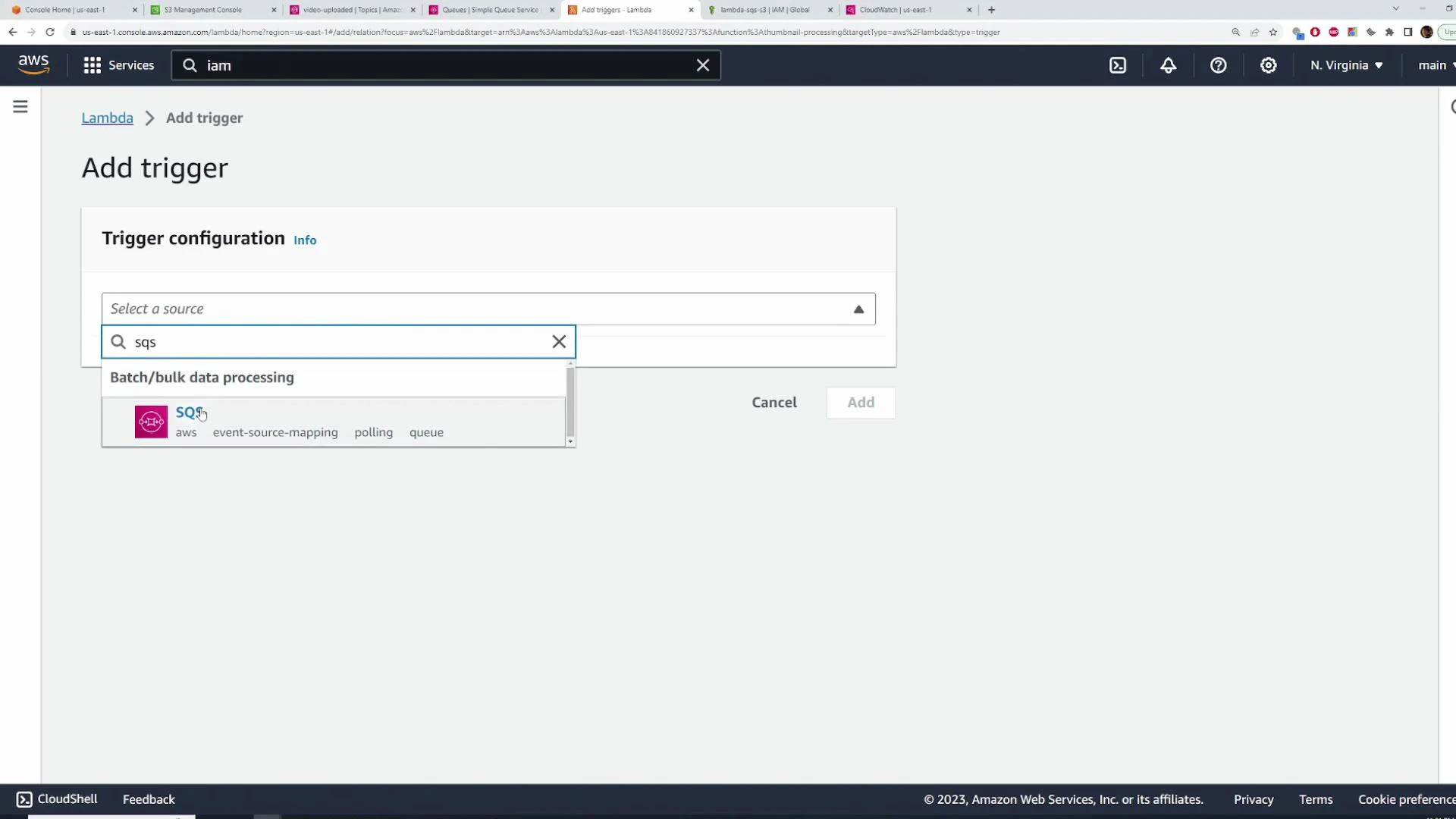

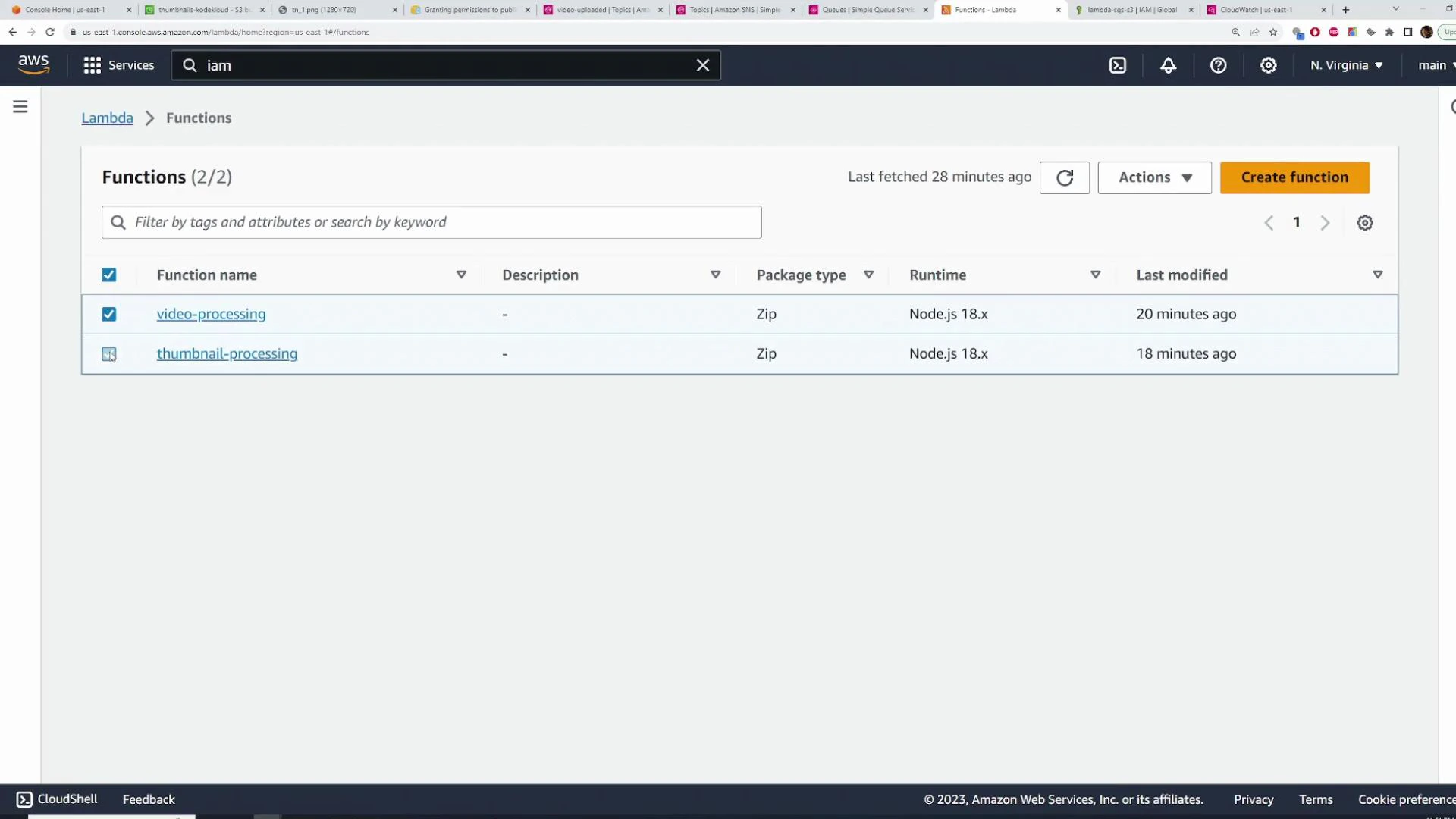

- Create two Node.js 18.x Lambda functions:

  - video-processing

  - thumbnail-processing

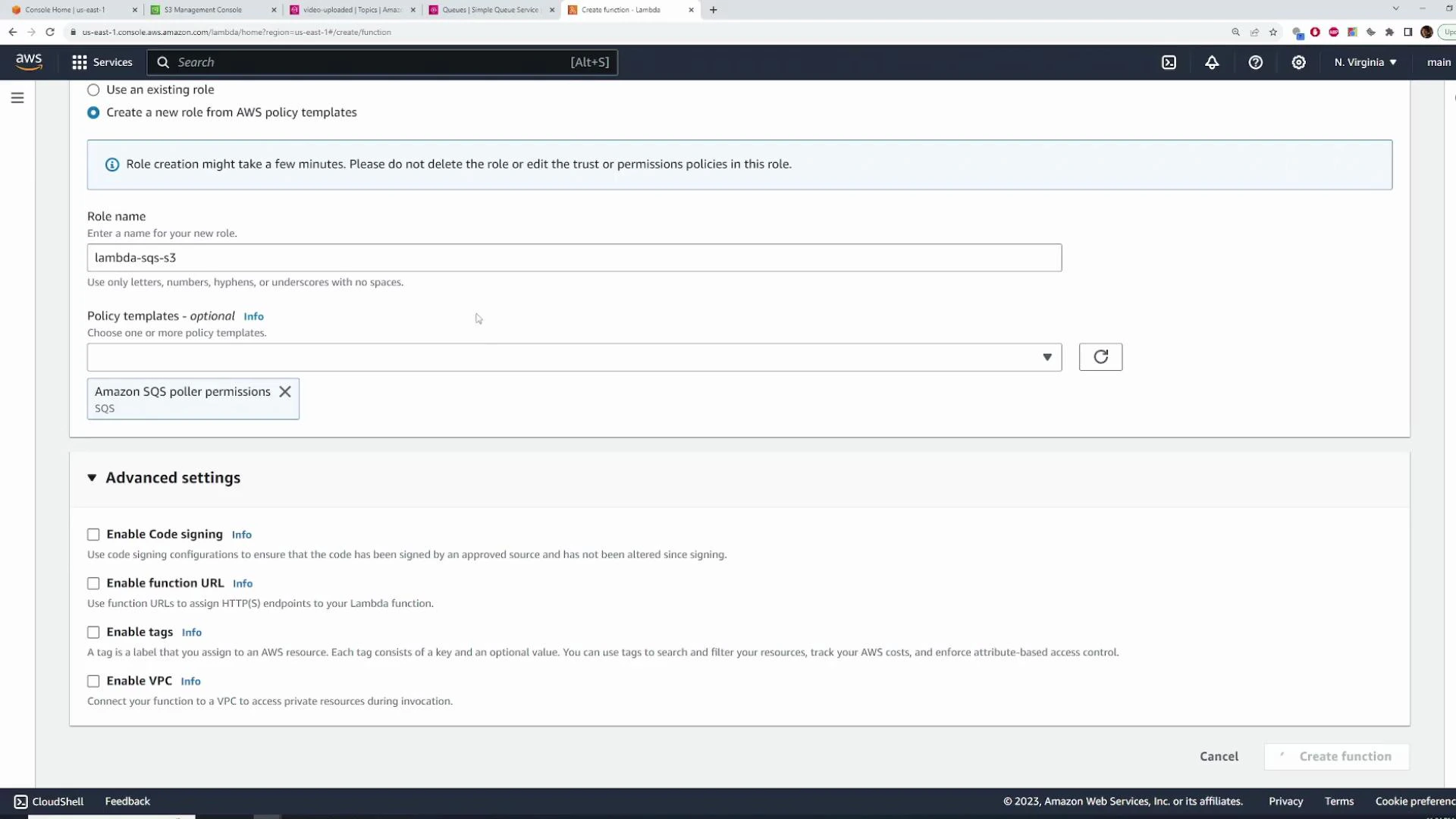

- Create an IAM role (example: lambda-sqs-s3) and attach:

  - The AWS managed policy AWSLambdaSQSQueueExecutionRole (or an equivalent policy) so that Lambda can poll SQS and write logs.

  - S3 permissions (least privilege: GetObject on the raw bucket and PutObject on the output buckets). For demos you might use AmazonS3FullAccess, but restrict it in production.
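A least-privilege S3 policy for this role could look like the sketch below. It is written as a JavaScript object so it can be serialized with `JSON.stringify` and pasted into the IAM console. The function name `buildS3AccessPolicy` and the `Sid` values are illustrative assumptions; the bucket names are the ones used in this guide.

```javascript
// Sketch of an inline IAM policy: read from the raw bucket, write to the output buckets.
// buildS3AccessPolicy and the Sid values are hypothetical names used for illustration.
function buildS3AccessPolicy(rawBucket, outputBuckets) {
  return {
    Version: "2012-10-17",
    Statement: [
      {
        Sid: "ReadRawUploads",
        Effect: "Allow",
        Action: "s3:GetObject",
        // Object-level actions apply to keys, hence the trailing /*.
        Resource: `arn:aws:s3:::${rawBucket}/*`,
      },
      {
        Sid: "WriteProcessedOutputs",
        Effect: "Allow",
        Action: "s3:PutObject",
        Resource: outputBuckets.map((b) => `arn:aws:s3:::${b}/*`),
      },
    ],
  };
}

const policy = buildS3AccessPolicy("raw-videos-kodekloud", [
  "processed-videos-kodekloud",
  "thumbnails-kodekloud",
]);
console.log(JSON.stringify(policy, null, 2));
```

If each Lambda gets its own role, the same helper can be called with a single output bucket so that neither function can write to the other's bucket.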

S3 must be allowed to publish to your SNS topic when you configure S3 event notifications. Add an SNS topic policy statement that allows the s3.amazonaws.com principal to Publish from your bucket’s ARN (we provide an example policy later). Without this, S3 event notifications to SNS will fail.

- When Lambda polls SQS, the invoked event contains event.Records — an array of SQS records.

- Because SNS delivers to SQS, each SQS record’s body is a stringified SNS notification. To get your original payload you typically parse twice:

  - JSON.parse(event.Records[i].body) → SNS notification object

  - JSON.parse(parsed.Message) → your original message object
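The double parse can be sketched with a simulated record. This assumes raw message delivery is left disabled on the SNS subscription (the default), so the SQS body is the SNS JSON envelope; the helper name `unwrapSnsFromSqs` and the `bucket`/`key` payload fields are illustrative assumptions.

```javascript
// Unwrap one SQS record that carries an SNS notification.
// Assumes raw message delivery is disabled on the subscription (the default),
// so the SQS body is the SNS JSON envelope rather than the bare message.
function unwrapSnsFromSqs(sqsRecord) {
  const notification = JSON.parse(sqsRecord.body); // SNS notification object
  return JSON.parse(notification.Message);         // original message object
}

// Simulated SQS record, shaped like an entry of event.Records.
const sampleRecord = {
  messageId: "example-1",
  body: JSON.stringify({
    Type: "Notification",
    TopicArn: "arn:aws:sns:REGION:ACCOUNT_ID:video-uploaded",
    Message: JSON.stringify({ bucket: "raw-videos-kodekloud", key: "sample.mp4" }),
  }),
};

const payload = unwrapSnsFromSqs(sampleRecord);
console.log(payload.bucket, payload.key); // prints "raw-videos-kodekloud sample.mp4"
```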

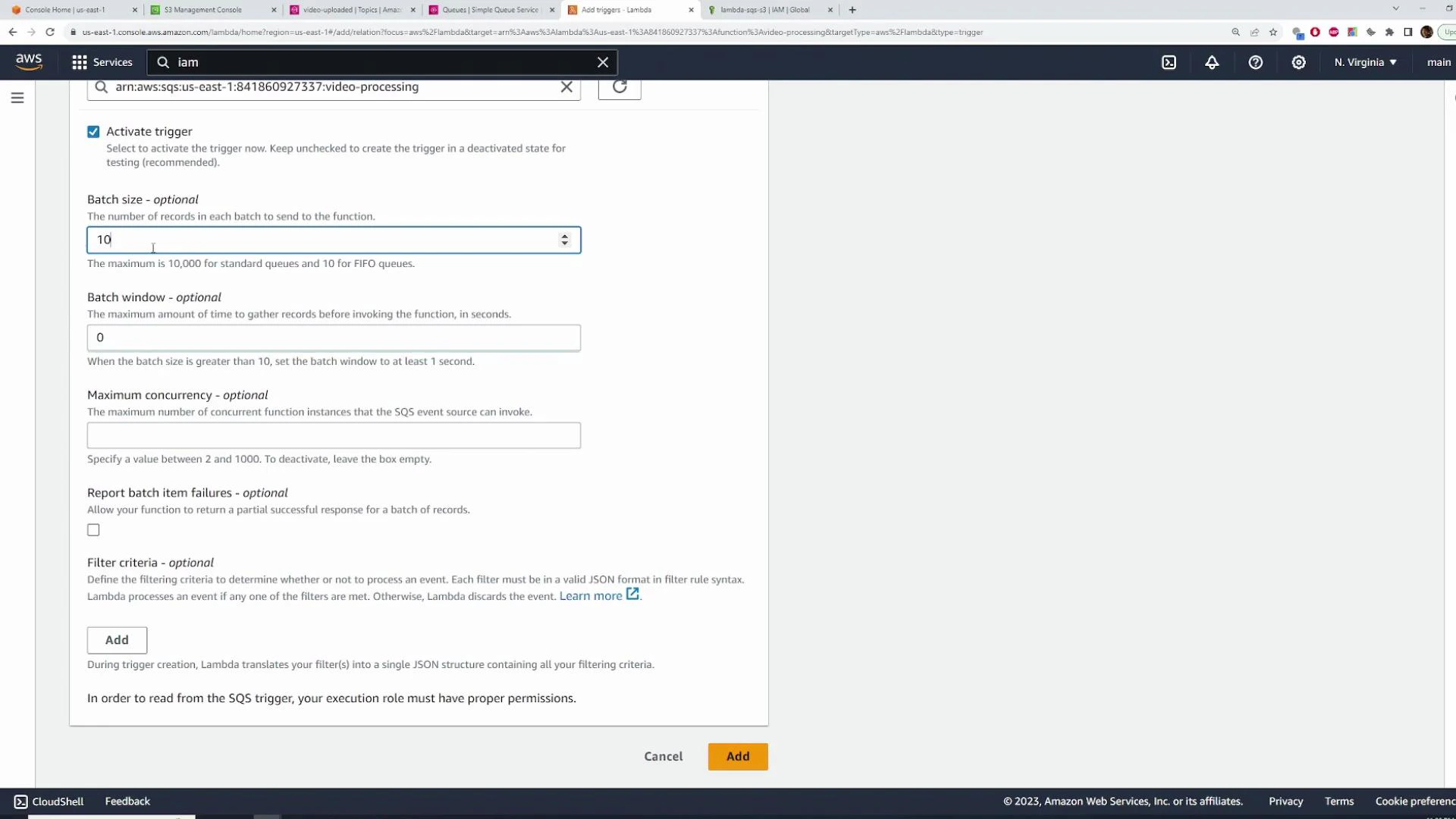

- Configure the SQS trigger on your Lambda with:

  - Batch size: the maximum number of messages delivered per invocation (tune for cost/throughput).

  - Maximum batching window: how long Lambda waits to fill a batch before invoking.

- Larger batch sizes improve cost efficiency but require your handler to iterate records and handle partial failures correctly.
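One way to handle partial failures is to return the IDs of the failed messages so that only those are retried. The sketch below assumes "Report batch item failures" (`ReportBatchItemFailures`) is enabled on the SQS trigger; `handleBatch` and the `processRecord` callback are hypothetical names, and the callback stands in for real processing.

```javascript
// Process each SQS record independently and report only the failed ones.
// Assumes ReportBatchItemFailures is enabled on the trigger. Without it, Lambda
// treats a normal return as success for the whole batch and deletes every message.
async function handleBatch(event, processRecord) {
  const batchItemFailures = [];
  for (const record of event.Records) {
    try {
      await processRecord(record);
    } catch (err) {
      console.error(`Record ${record.messageId} failed:`, err.message);
      // Only this message becomes visible on the queue again.
      batchItemFailures.push({ itemIdentifier: record.messageId });
    }
  }
  return { batchItemFailures };
}

// The callback below is a hypothetical stand-in for real transcoding work.
const handler = (event) =>
  handleBatch(event, async (record) => {
    const notification = JSON.parse(record.body);
    console.log("Processing", JSON.parse(notification.Message));
  });
```

With a batch size of 1 this bookkeeping is unnecessary, since throwing from the handler retries just that one message.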

- After Lambda runs, view CloudWatch Logs (Monitor → View logs). The logged event shows event.Records[0].body as a stringified SNS notification with a Message property that contains your original JSON string.

- The heavy lifting (ffmpeg, HLS packaging) is out of scope for this article. At minimum, the handler skeleton needs to parse the message correctly and fetch the object with GetObjectCommand from AWS SDK v3.
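A minimal sketch of such a handler skeleton follows. It assumes the message inside the SNS notification is the S3 event notification itself (with `Records[].s3.bucket.name` and `Records[].s3.object.key`) and that `@aws-sdk/client-s3` is available, as it is in the Node.js 18.x runtime. The helper name `s3ObjectsFromRecord` is illustrative. Messages without `Records`, such as the `s3:TestEvent` S3 sends when a notification is first configured or a hand-published test payload with a different shape, yield no objects.

```javascript
// Sketch only: assumes the SQS body wraps an SNS notification whose Message
// is an S3 event notification (Records[].s3.bucket.name / s3.object.key).

// Pull { bucket, key } pairs out of one SQS record.
function s3ObjectsFromRecord(sqsRecord) {
  const notification = JSON.parse(sqsRecord.body);  // SNS envelope
  const s3Event = JSON.parse(notification.Message); // original S3 event
  return (s3Event.Records || []).map((r) => ({
    bucket: r.s3.bucket.name,
    // S3 URL-encodes keys in event notifications and uses "+" for spaces.
    key: decodeURIComponent(r.s3.object.key.replace(/\+/g, " ")),
  }));
}

let s3Client; // created once and reused across warm invocations

const handler = async (event) => {
  // Required lazily so the parsing helper can be exercised without the SDK installed.
  const { S3Client, GetObjectCommand } = require("@aws-sdk/client-s3");
  s3Client = s3Client || new S3Client({});

  for (const record of event.Records) {
    for (const { bucket, key } of s3ObjectsFromRecord(record)) {
      const res = await s3Client.send(
        new GetObjectCommand({ Bucket: bucket, Key: key })
      );
      // res.Body is a readable stream in Node.js; transcoding would start here.
      console.log(`Fetched s3://${bucket}/${key} (${res.ContentLength} bytes)`);
    }
  }
};

exports.handler = handler;
```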

- Typical production steps in the video Lambda:

  - Stream the object to /tmp or buffer it.

  - Run ffmpeg to transcode to HLS (.m3u8 + .ts segments).

  - Upload outputs to processed-videos-kodekloud with PutObjectCommand.

  - Remove temporary files to free /tmp.
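The transcode, upload, and cleanup steps could be sketched as follows. This is not the article's implementation: it assumes an ffmpeg binary at `/opt/bin/ffmpeg` (where a Lambda Layer shipping `bin/ffmpeg` would typically be extracted) built with libx264 and AAC support. The helper names, the 6-second segment length, and the `uploadFile` callback (which would wrap PutObjectCommand) are illustrative choices.

```javascript
const { spawn } = require("node:child_process");
const fs = require("node:fs");
const path = require("node:path");

// Assumed binary location: Lambda extracts layers under /opt.
const FFMPEG_PATH = process.env.FFMPEG_PATH || "/opt/bin/ffmpeg";

// Build ffmpeg arguments for a VOD HLS rendition (.m3u8 playlist + .ts segments).
function hlsArgs(inputPath, outDir) {
  return [
    "-i", inputPath,
    "-c:v", "libx264",
    "-c:a", "aac",
    "-hls_time", "6", // target segment length in seconds
    "-hls_playlist_type", "vod",
    "-hls_segment_filename", path.join(outDir, "segment_%03d.ts"),
    "-f", "hls",
    path.join(outDir, "index.m3u8"),
  ];
}

// Run ffmpeg and resolve when it exits with code 0.
function runFfmpeg(args) {
  return new Promise((resolve, reject) => {
    const proc = spawn(FFMPEG_PATH, args, { stdio: "inherit" });
    proc.on("error", reject);
    proc.on("close", (code) =>
      code === 0 ? resolve() : reject(new Error(`ffmpeg exited with code ${code}`))
    );
  });
}

// Transcode into workDir, hand each output file to uploadFile, then always
// remove workDir so /tmp is freed even when a step fails.
async function transcodeAndUpload(inputPath, workDir, uploadFile) {
  fs.mkdirSync(workDir, { recursive: true });
  try {
    await runFfmpeg(hlsArgs(inputPath, workDir));
    for (const name of fs.readdirSync(workDir)) {
      await uploadFile(path.join(workDir, name), name);
    }
  } finally {
    fs.rmSync(workDir, { recursive: true, force: true });
  }
}
```

Keeping the argument builder separate from the process runner makes the ffmpeg settings easy to unit-test without the binary present.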

ffmpeg is not included in Lambda by default. To run ffmpeg you can either use a Lambda Layer containing a static ffmpeg binary or deploy a container-based Lambda with ffmpeg baked into the image. Choose the approach that best fits your build and deployment workflows.

- Create the thumbnail-processing Lambda and reuse the same IAM role (SQS poller + S3 permissions).

- Use batch size = 1 for simpler thumbnail extraction (one message per invocation is easier to manage).
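For the thumbnail side, a sketch of the ffmpeg arguments and output naming might look like this. The helper names, the 1-second offset, the 320-pixel width, and the `.jpg` naming scheme are assumptions for illustration, not settings taken from the article.

```javascript
const path = require("node:path");

// ffmpeg arguments that grab a single frame as a JPEG.
// The 1-second offset and 320px width are arbitrary illustrative defaults.
function thumbnailArgs(inputPath, outputPath, atSeconds = 1, width = 320) {
  return [
    "-ss", String(atSeconds),   // seek on the input before decoding
    "-i", inputPath,
    "-frames:v", "1",           // emit exactly one video frame
    "-vf", `scale=${width}:-1`, // resize, keeping the aspect ratio
    "-y",                       // overwrite an existing output file
    outputPath,
  ];
}

// Derive the thumbnail object key from the video key, e.g. clips/a.mp4 -> clips/a.jpg.
function thumbnailKey(videoKey) {
  const { dir, name } = path.posix.parse(videoKey);
  return path.posix.join(dir, `${name}.jpg`);
}

console.log(thumbnailKey("clips/sample.mp4")); // prints "clips/sample.jpg"
```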

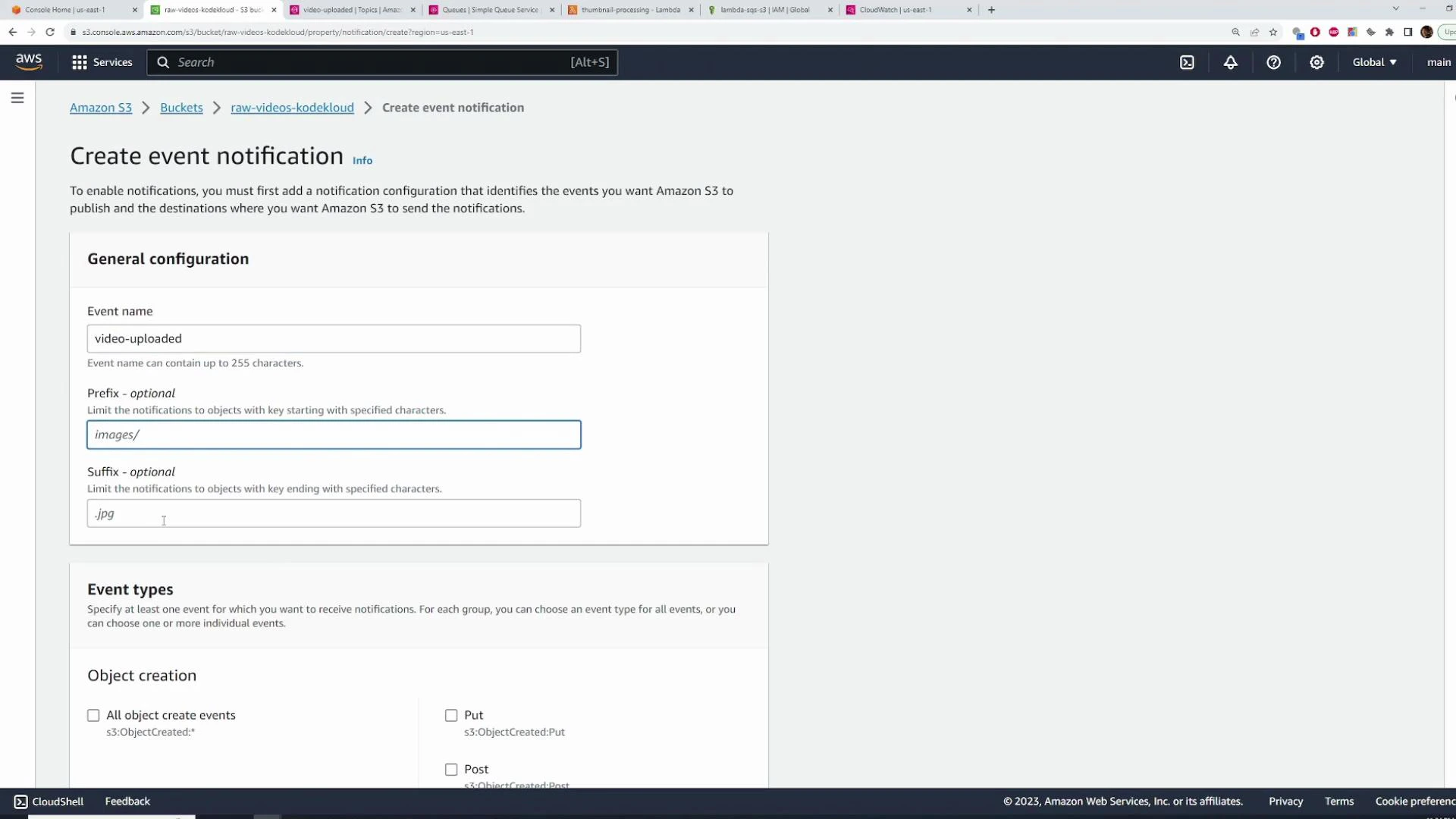

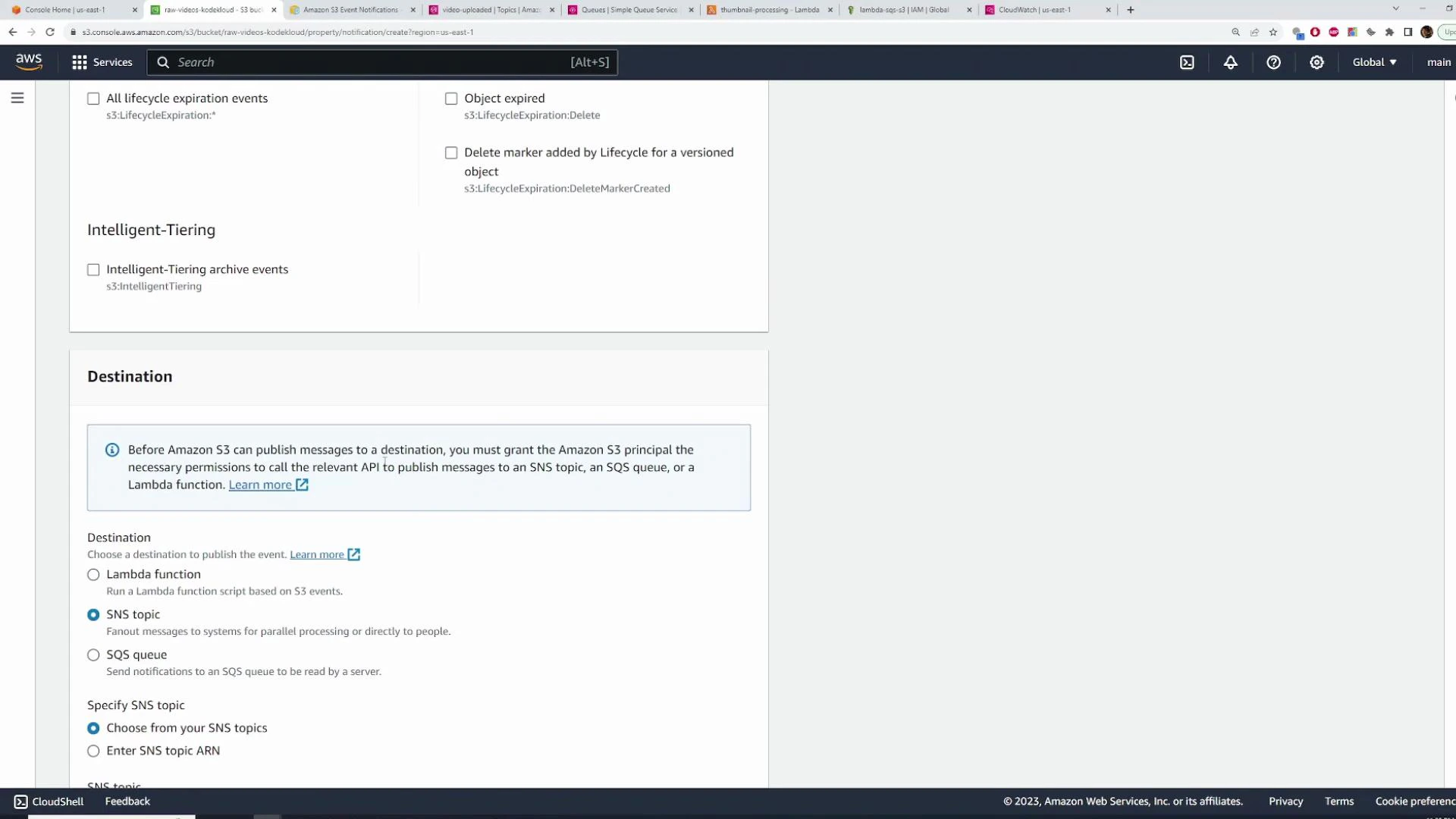

- You can configure S3 to send ObjectCreated:* events to the SNS topic so that uploads automatically trigger the pipeline.

- In the raw-videos-kodekloud bucket:

  - Properties → Event notifications → Create event notification

  - Event types: ObjectCreated (All object create events)

  - Destination: SNS topic → video-uploaded

- Add a statement to the SNS topic’s access policy (Topics → select topic → Edit → Access policy) that allows the s3.amazonaws.com service principal to call SNS:Publish on the topic, scoped to your bucket’s ARN and your account ID.

- Merge the statement into the existing policy’s Statement array and save.

- Then configure the S3 event notification to use the topic.
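The topic policy statement described above can be sketched as a JavaScript object, together with a helper that merges it into an existing policy without dropping what is already there. The function names and the `Sid` are hypothetical; `REGION` and `ACCOUNT_ID` are placeholders to replace with your own values.

```javascript
// Build the SNS access-policy statement that lets one S3 bucket publish to a topic.
// The function name and Sid are hypothetical; substitute your own ARNs and account ID.
function buildS3PublishStatement(topicArn, bucketName, accountId) {
  return {
    Sid: "AllowS3ToPublish",
    Effect: "Allow",
    Principal: { Service: "s3.amazonaws.com" },
    Action: "SNS:Publish",
    Resource: topicArn,
    Condition: {
      // Restrict publishing to this bucket in this account.
      ArnLike: { "aws:SourceArn": `arn:aws:s3:::${bucketName}` },
      StringEquals: { "aws:SourceAccount": accountId },
    },
  };
}

// Merge into an existing policy, replacing any earlier statement with the same Sid.
function mergeStatement(policy, statement) {
  const others = (policy.Statement || []).filter((s) => s.Sid !== statement.Sid);
  return { ...policy, Statement: [...others, statement] };
}

// Placeholder policy standing in for whatever the topic currently has.
const currentPolicy = { Version: "2012-10-17", Statement: [] };
const updatedPolicy = mergeStatement(
  currentPolicy,
  buildS3PublishStatement(
    "arn:aws:sns:REGION:ACCOUNT_ID:video-uploaded",
    "raw-videos-kodekloud",
    "ACCOUNT_ID"
  )
);
console.log(JSON.stringify(updatedPolicy, null, 2));
```

The printed JSON can be pasted into the topic's access policy editor once the placeholders are filled in.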

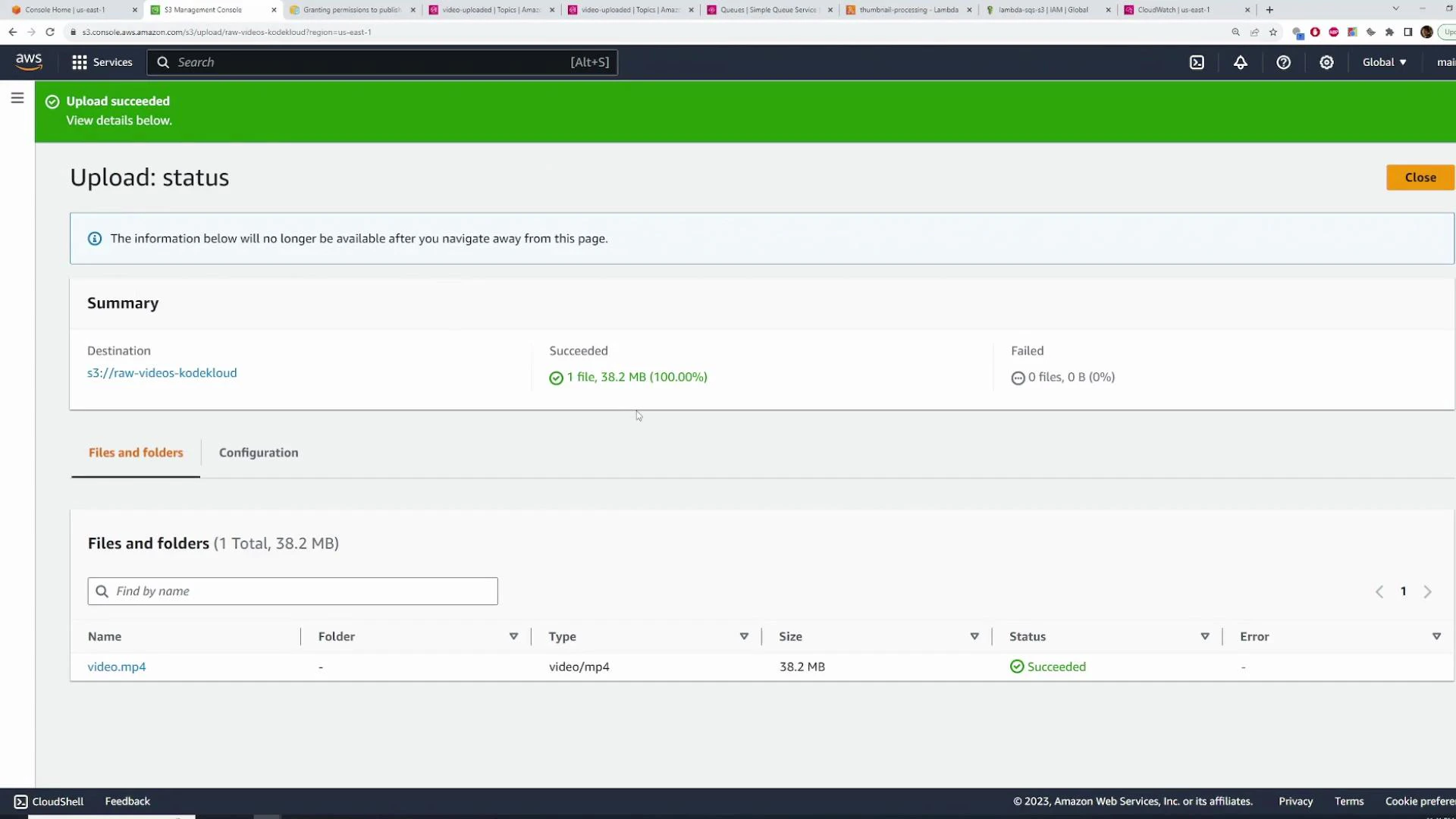

- Upload a sample video to raw-videos-kodekloud (console, SDK, or CLI).

- The expected sequence:

  - S3 emits ObjectCreated → SNS topic.

  - SNS fans out to both SQS queues.

  - Lambda functions (configured with SQS triggers) are invoked to process the file.

  - Processed outputs are written to processed-videos-kodekloud and thumbnails-kodekloud.

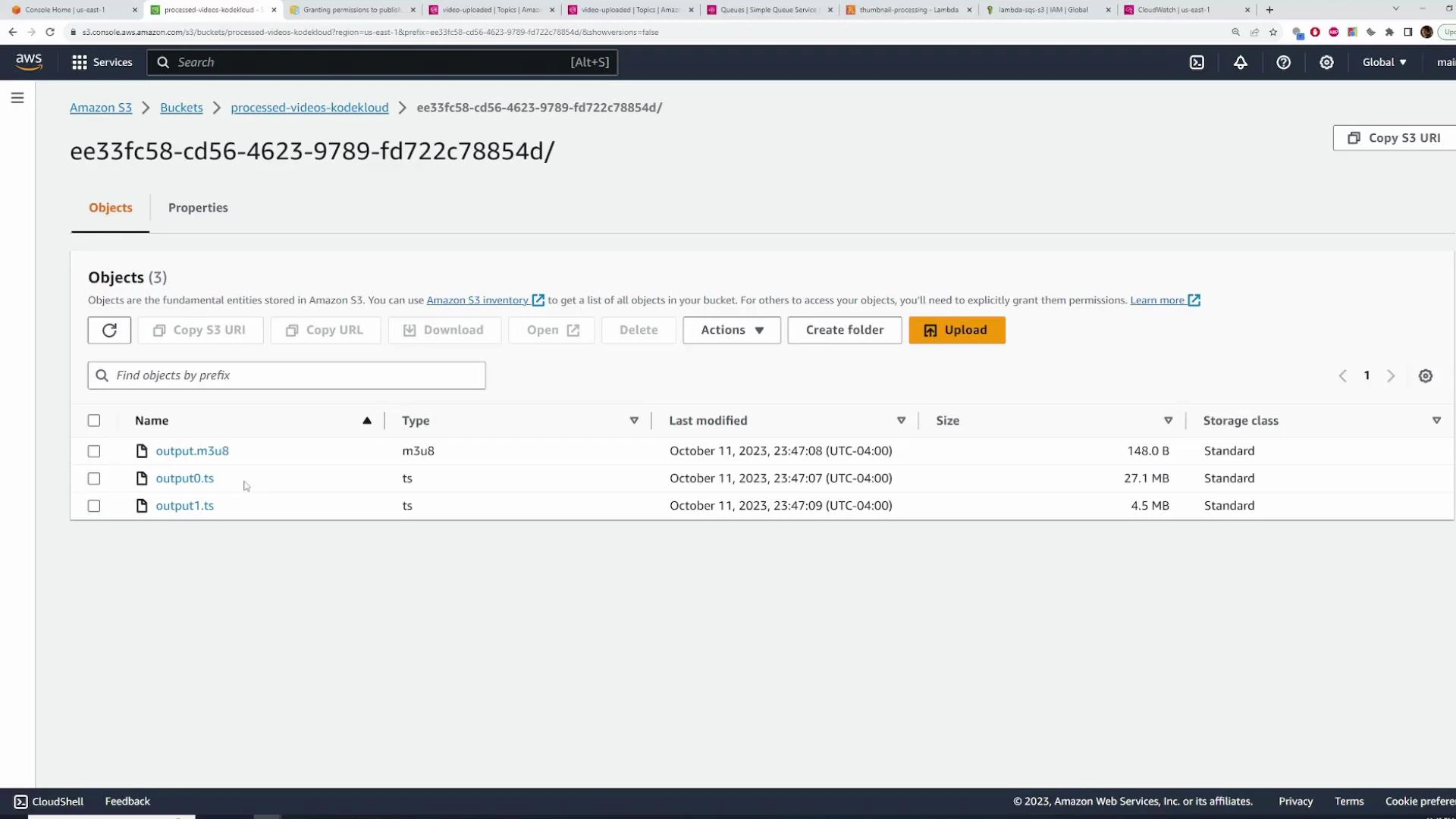

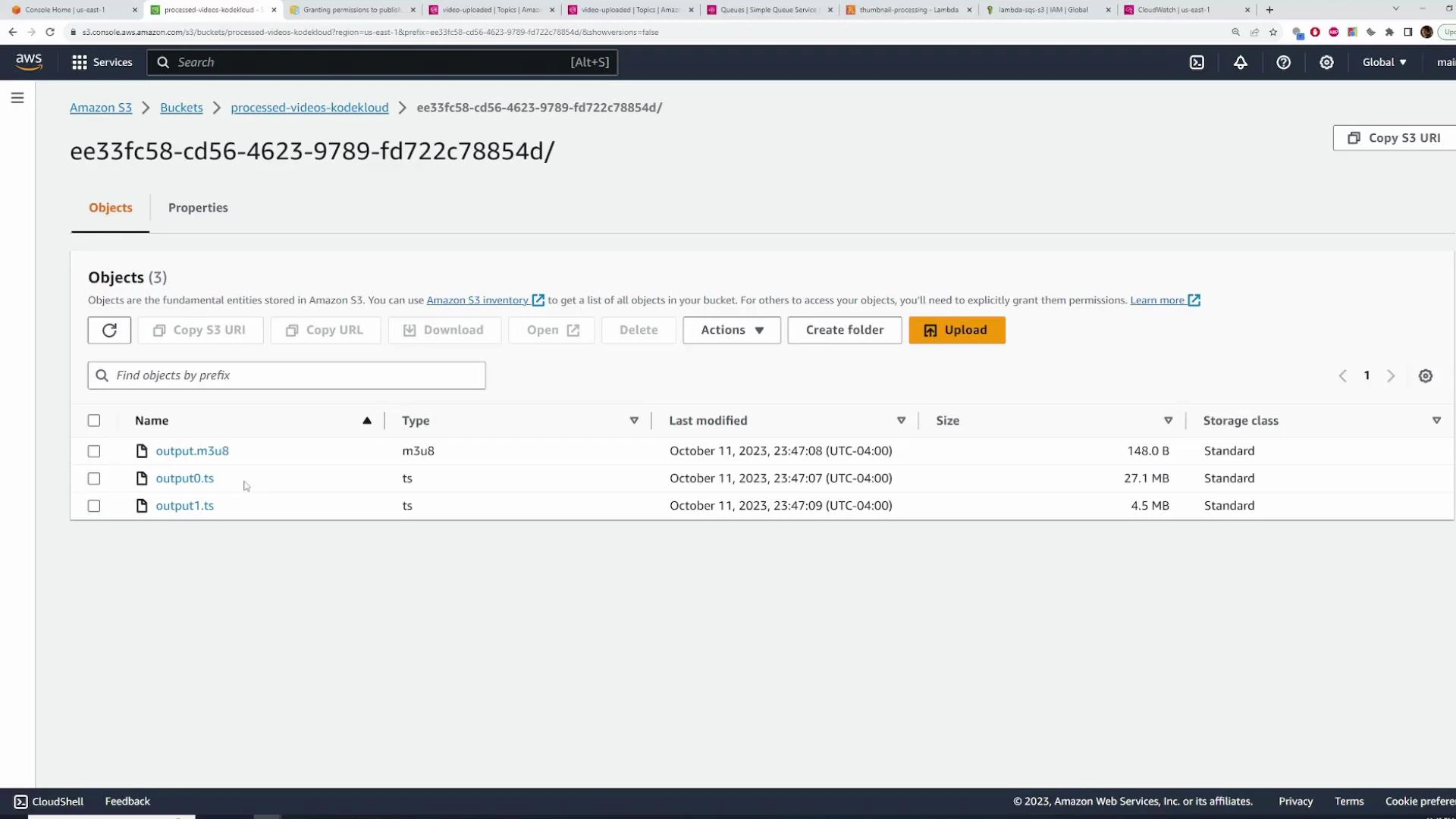

- Check the processed-videos-kodekloud bucket for HLS outputs (.m3u8 playlist and .ts segments).

- Check thumbnails-kodekloud for generated thumbnails.

- The screenshots above illustrate expected outputs after successful Lambda execution.

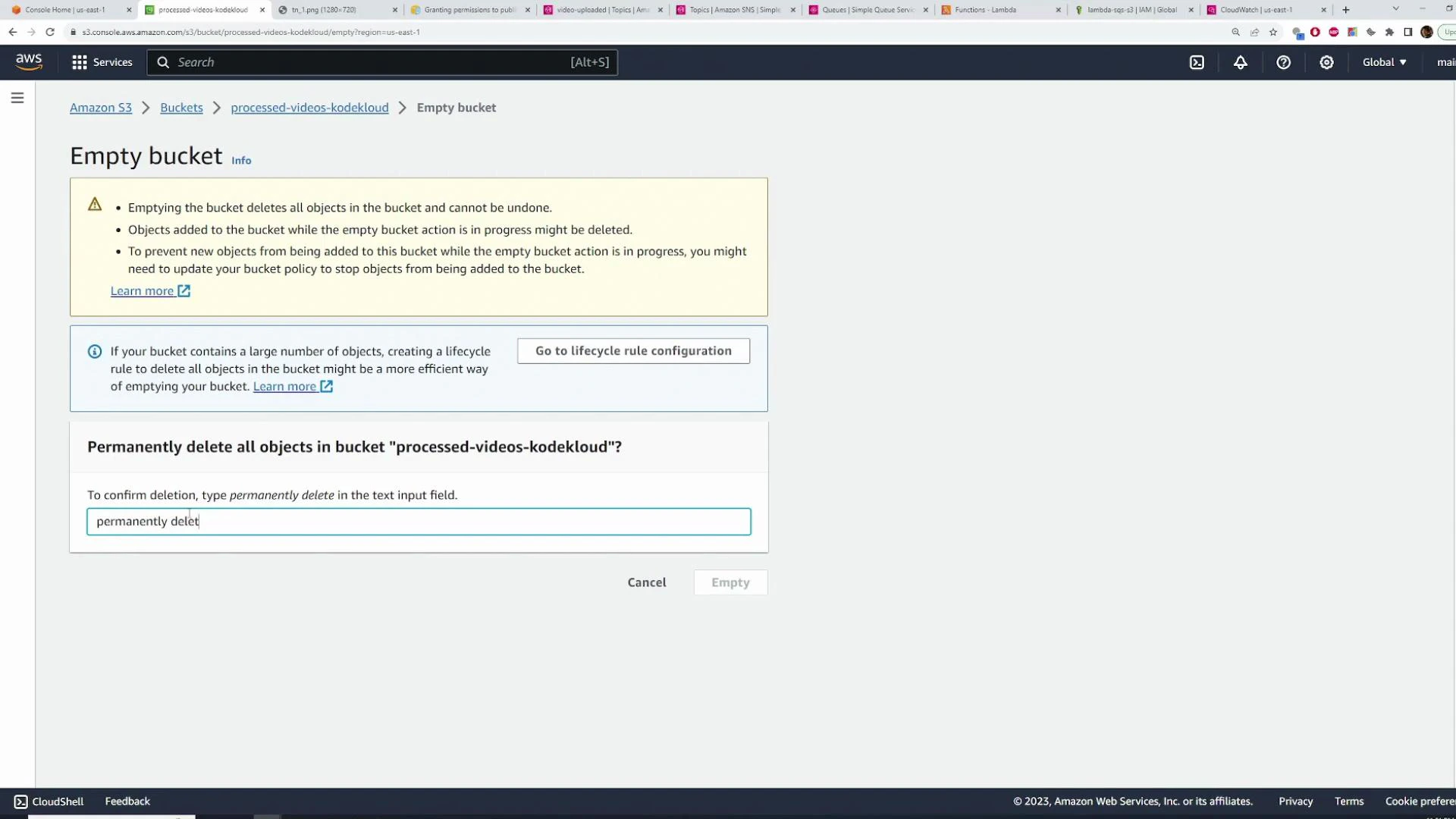

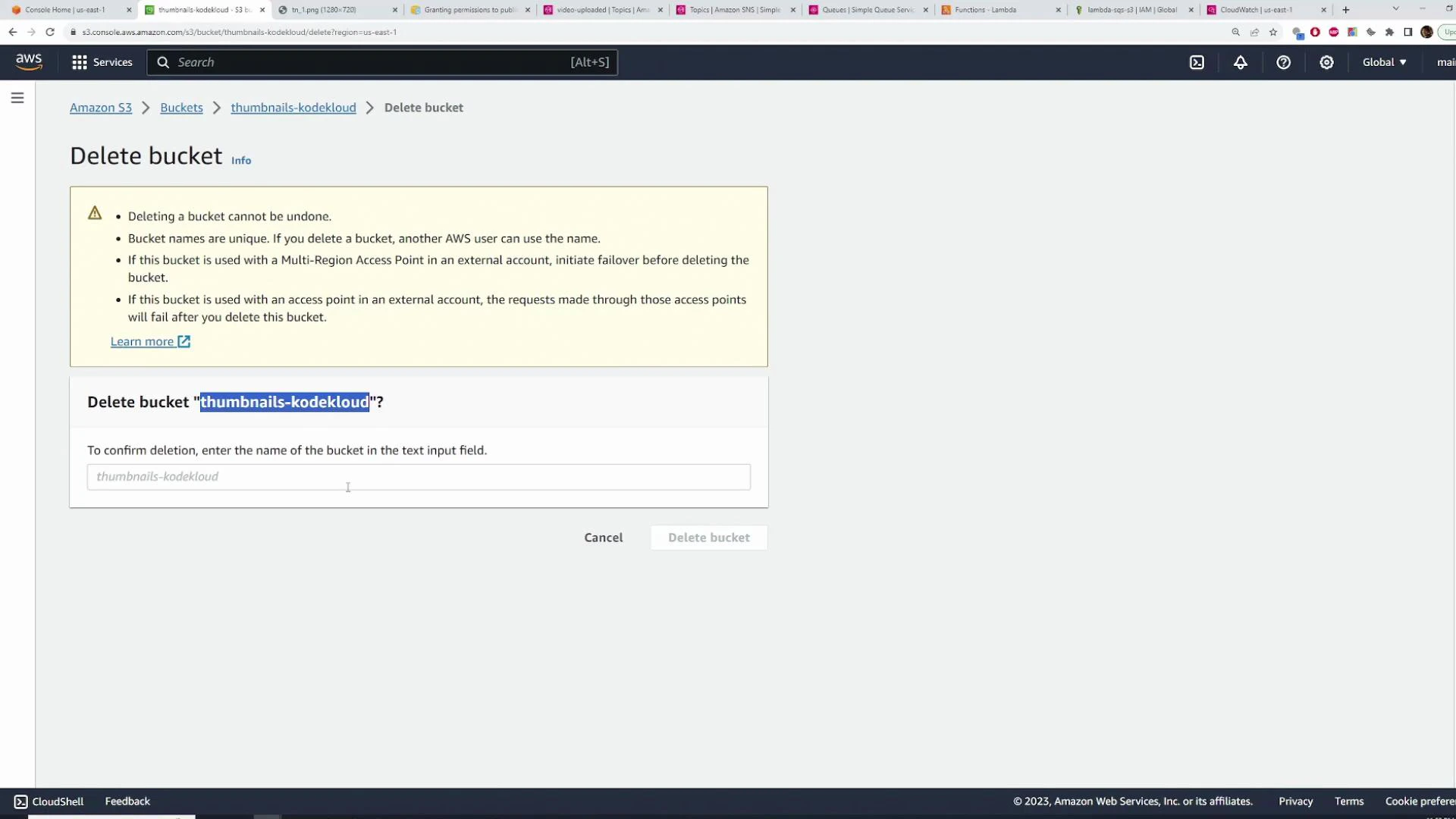

- To avoid charges after testing, delete the resources you created:

  - Delete SQS queues.

  - Delete SNS topic.

  - Delete Lambda functions.

  - Empty and delete S3 buckets (a bucket must be empty before it can be deleted).

This guide demonstrated how to wire S3 → SNS → SQS → Lambda to implement a fan-out processing pipeline for uploaded videos. Key takeaways:

- Use SNS to fan out a single S3 event to multiple consumers via SQS.

- S3 event notifications can publish directly to SNS; ensure the SNS topic policy allows S3 to Publish.

- When SNS publishes to SQS, Lambda receives SQS records whose body contains an SNS notification string; call JSON.parse twice to retrieve the original payload.

- Tune SQS batch size and batching window on Lambda triggers for cost and throughput trade-offs.

- For ffmpeg in Lambda, use a Layer or container-based Lambda.


When SNS publishes to SQS, the Lambda handler sees an SQS record whose body contains an SNS notification as a string. You must JSON.parse the SQS record body to get the SNS notification, then JSON.parse the notification.Message to get your original payload.