Welcome back. In the previous section you rolled out laptops across your company. Now your R&D team is building an internal chatbot (think ChatGPT trained on company data). Training that model is painfully slow on CPUs alone: it’s like trying to read a library one page at a time. To accelerate training we need to run thousands of calculations in parallel, which is exactly what GPUs are designed to do. In this lesson you’ll learn:

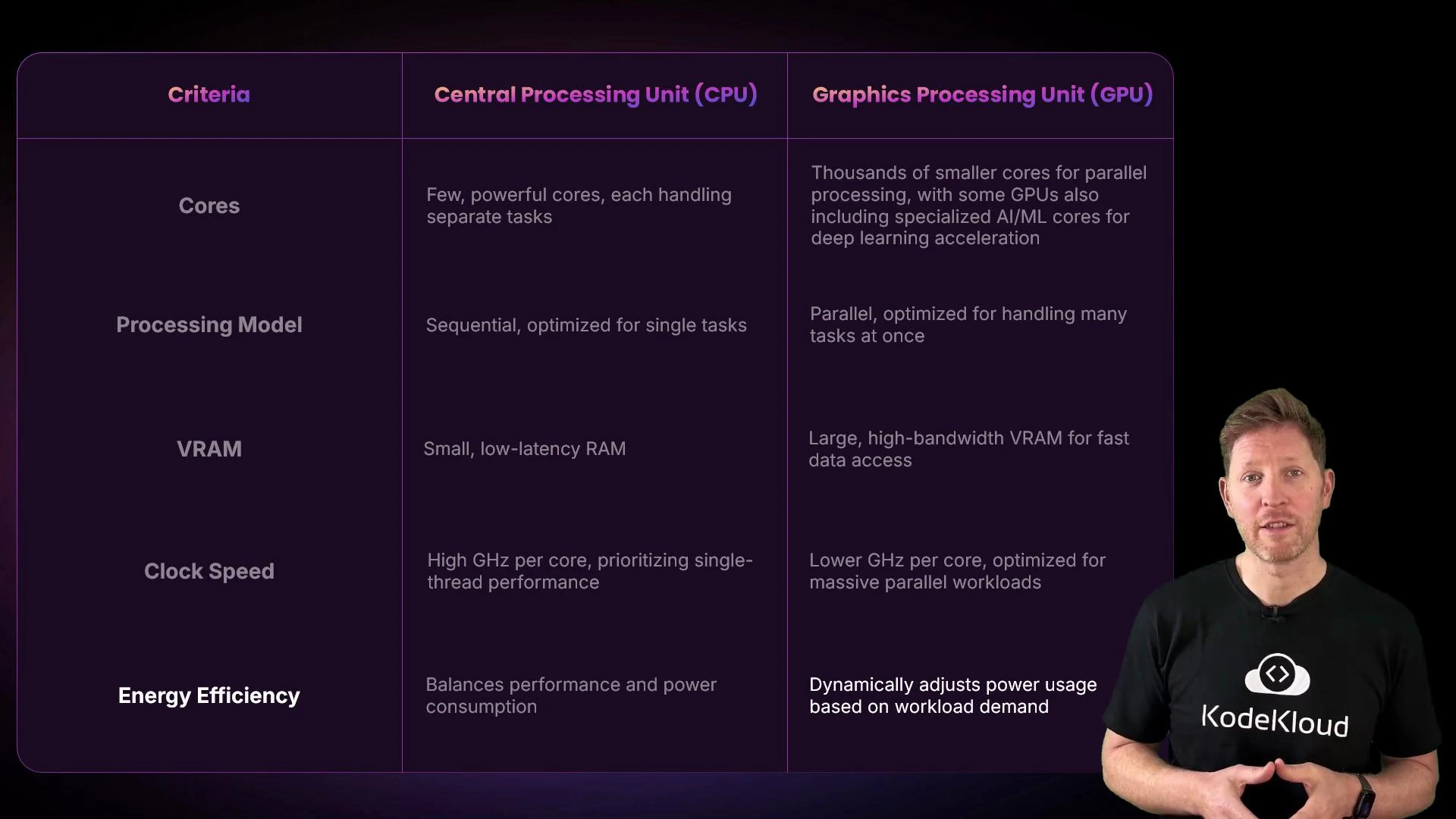

- What architectural differences make GPUs better for certain workloads than CPUs.

- How GPU performance depends on core structure, clock speed, and memory.

- Which GPU features matter for AI training and inference.

- CPU: A few powerful cores optimized for complex, branching, single-threaded or moderately parallel tasks.

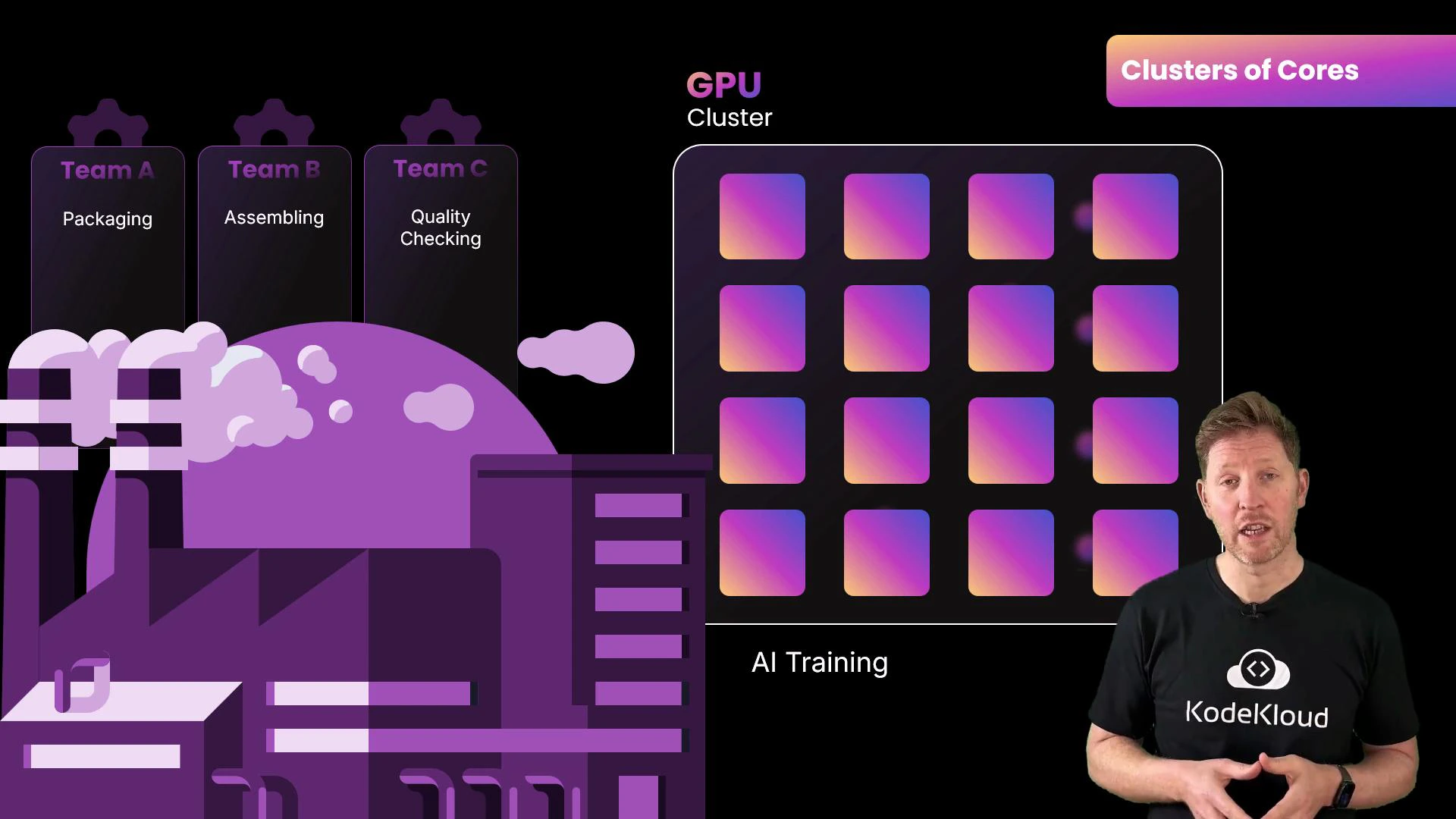

- GPU: Thousands of simpler cores optimized for performing the same operation over large data sets in parallel.

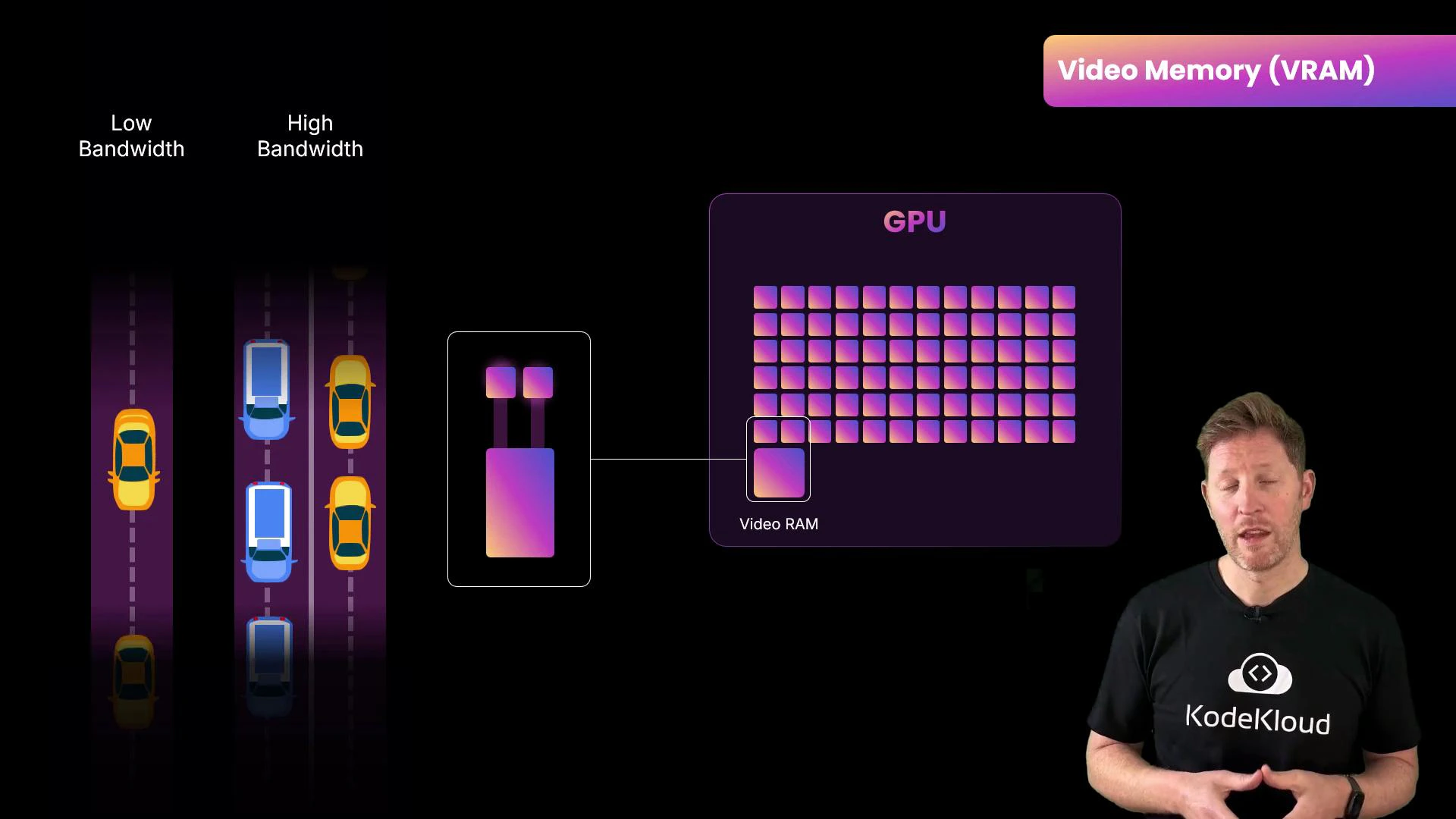
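To make "the same operation over large data sets in parallel" concrete, here is a minimal sketch in plain Python. It only models the programming pattern and does not run on a GPU. The names `scale_and_shift_kernel` and `launch` are hypothetical, chosen for illustration: each call to the kernel handles one element and never depends on any other element, which is the property that lets a GPU spread the work across thousands of cores.

```python
# Toy model of the data-parallel pattern GPUs are built for.
# Pure Python: it illustrates the idea only and does not use a GPU.

def scale_and_shift_kernel(i, xs, out, scale, shift):
    """Work done by one hypothetical 'thread': handle element i only."""
    out[i] = xs[i] * scale + shift


def launch(kernel, n, *args):
    """Stand-in for a GPU launch: invoke the kernel once per index.

    On a real GPU these n invocations would be distributed across many
    cores at the same time; here they simply run one after another.
    """
    for i in range(n):
        kernel(i, *args)


xs = [1.0, 2.0, 3.0, 4.0]
out = [0.0] * len(xs)
launch(scale_and_shift_kernel, len(xs), xs, out, 2.0, 1.0)
print(out)  # [3.0, 5.0, 7.0, 9.0]
```

Because no invocation reads another invocation's result, the order of execution does not matter. Workloads with heavy branching or long dependency chains lack this property and tend to suit CPUs better.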

- Capacity: how large a model or batch you can store on-device.

- Bandwidth: how fast data moves between VRAM and compute cores.

VRAM capacity and bandwidth are crucial for AI workloads: capacity limits the size of the models and batches you can process, while higher bandwidth reduces stalls by keeping the compute units fed. Running out of VRAM forces you to shuttle data to and from system memory or to shrink the batch size, and both slow training.
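A rough back-of-the-envelope sketch can show how capacity and bandwidth constrain a workload. Everything numeric below is an assumption for illustration: a hypothetical 7-billion-parameter model, a hypothetical 24 GiB card with 1,000 GB/s of memory bandwidth, 2 bytes per parameter for fp16 inference, and a commonly quoted rule of thumb of about 16 bytes per parameter for mixed-precision training with Adam (fp16 weights and gradients, plus fp32 master weights and two fp32 optimizer moments). Activations and framework overhead are ignored, so real usage would be higher.

```python
GIB = 2**30  # bytes in one gibibyte


def footprint_gib(num_params, bytes_per_param):
    """Approximate memory needed for the given per-parameter cost, in GiB."""
    return num_params * bytes_per_param / GIB


def fits_in_vram(num_params, bytes_per_param, vram_gib):
    """True if the estimated footprint fits on a card with vram_gib of VRAM."""
    return footprint_gib(num_params, bytes_per_param) <= vram_gib


def min_stream_time_s(num_bytes, bandwidth_gb_per_s):
    """Lower bound on the time to read num_bytes from VRAM once."""
    return num_bytes / (bandwidth_gb_per_s * 1e9)


params = 7_000_000_000        # hypothetical model size
vram_gib = 24                 # hypothetical card capacity
bandwidth_gb_per_s = 1000     # hypothetical memory bandwidth

inference_gib = footprint_gib(params, 2)    # fp16 weights only
training_gib = footprint_gib(params, 16)    # rule-of-thumb training cost

print(f"inference: {inference_gib:.2f} GiB, fits: {fits_in_vram(params, 2, vram_gib)}")
print(f"training:  {training_gib:.2f} GiB, fits: {fits_in_vram(params, 16, vram_gib)}")
print(f"one pass over fp16 weights: at least {min_stream_time_s(params * 2, bandwidth_gb_per_s) * 1000:.1f} ms")
```

Under these assumptions the fp16 weights fit on the card for inference, while the training footprint is several times larger than the available VRAM. The last line shows why bandwidth matters: no matter how fast the cores are, each full read of the weights takes at least that long.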

GPU cores typically run at lower clock speeds than CPU cores (for example, 2.5 GHz vs 5 GHz), but they compensate by having orders of magnitude more cores. Think of a race car (high single-thread speed) versus a freight train (high total throughput). GPU designs prioritize total operations per second over single-thread latency.
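The trade-off between clock speed and core count can be sketched with simple arithmetic. The core counts below (16 CPU cores, 10,000 GPU cores) are hypothetical round numbers, and the model assumes one operation per core per cycle, which real hardware does not follow exactly. It is meant only to show the shape of the comparison.

```python
def peak_ops_per_second(cores, clock_hz, ops_per_cycle=1):
    """Idealized peak throughput: every core busy on every cycle."""
    return cores * clock_hz * ops_per_cycle


def serial_chain_time_s(num_ops, clock_hz):
    """Time for one core to run num_ops steps that each depend on the last."""
    return num_ops / clock_hz


# Hypothetical parts: a 16-core 5 GHz CPU and a 10,000-core 2.5 GHz GPU.
cpu_peak = peak_ops_per_second(cores=16, clock_hz=5.0e9)
gpu_peak = peak_ops_per_second(cores=10_000, clock_hz=2.5e9)
print(gpu_peak / cpu_peak)  # 312.5

# A chain of one billion dependent steps cannot be split across cores.
cpu_chain = serial_chain_time_s(1e9, 5.0e9)   # about 0.2 s
gpu_chain = serial_chain_time_s(1e9, 2.5e9)   # about 0.4 s
print(cpu_chain < gpu_chain)  # True
```

In this idealized model the GPU delivers hundreds of times more total throughput, yet a single dependent chain of work finishes sooner on the faster CPU core. That is the race car versus freight train distinction in numbers.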

High-performance GPUs require adequate cooling and power. When planning for continuous AI training, include power consumption and data-center cooling in hardware selection and total cost calculations.
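As a sketch of how power and cooling might enter a total cost calculation, the function below estimates a monthly electricity bill for a training cluster. All inputs are hypothetical: eight GPUs at 700 W each, cooling modeled as a flat 40% overhead on top of GPU power, and a price of 0.12 per kWh. Real facilities would also account for CPUs, networking, and varying utilization.

```python
def monthly_energy_cost(gpu_watts, num_gpus, cooling_overhead,
                        price_per_kwh, hours=24 * 30):
    """Estimate energy cost for GPUs running continuously.

    cooling_overhead is a fraction of GPU power added for cooling
    (0.4 means 40% extra). This is a simplifying assumption.
    """
    gpu_kw = gpu_watts * num_gpus / 1000
    total_kw = gpu_kw * (1 + cooling_overhead)
    return total_kw * hours * price_per_kwh


cost = monthly_energy_cost(
    gpu_watts=700,          # hypothetical per-GPU draw
    num_gpus=8,             # hypothetical cluster size
    cooling_overhead=0.4,   # hypothetical cooling factor
    price_per_kwh=0.12,     # hypothetical electricity price
)
print(f"{cost:.2f}")  # 677.38
```

Running the same function with different GPU models or cooling assumptions gives a quick way to compare recurring costs alongside purchase price.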

| Feature | CPU | GPU |
|---|---|---|
| Cores | Few, powerful cores optimized for complex control flow | Thousands of simpler cores optimized for parallel workloads |
| Processing model | Serial / branching tasks, low latency | SIMD / SIMT parallelism, high throughput |
| Specialized units | Vector units, general-purpose cores | Tensor/matrix cores for AI acceleration |
| Memory | System RAM (lower bandwidth) | High-bandwidth VRAM (higher throughput) |
| Clock speed | Higher per-core clocks (~3–5 GHz) | Lower per-core clocks (~1–3 GHz) but many cores |
| Energy | Optimized for response latency and single-thread performance | Performance-per-watt focus; can draw high total power |

- VRAM capacity: large models and big batch sizes require more memory.

- Memory bandwidth: higher bandwidth keeps the compute cores fed and reduces stalls.

- Specialized cores: tensor/matrix cores accelerate common NN ops.

- Power and cooling: ensure infrastructure can support continuous loads.

- Price vs performance: the most expensive GPU isn’t always the best fit—consider workload characteristics (training, inference, or graphics) and cost efficiency.
