In this lesson we cover backend strategies in CDKTF: why Terraform state matters, how to use a remote backend on AWS, and a practical workflow to avoid synth/deploy circular dependencies when using CDK for Terraform.

Recap: Terraform state is a JSON file that records the resources Terraform manages, their current attributes, and their relationships. Terraform (and CDKTF) uses state to plan changes accurately by comparing the real infrastructure with the desired configuration in code.

When working locally, a normal deploy flow is to run cdktf deploy from the project root.


With the default local backend, state is written to a file in your project (terraform.tfstate). When you run cdktf deploy, CDKTF/Terraform compares the synthesized configuration to that stored state to determine what to create, change, or destroy.

Local state is fine for single-developer experiments, but it presents problems for collaboration: team members don’t share a single source of truth and concurrent changes can cause conflicts and drift.

Why use a remote backend?

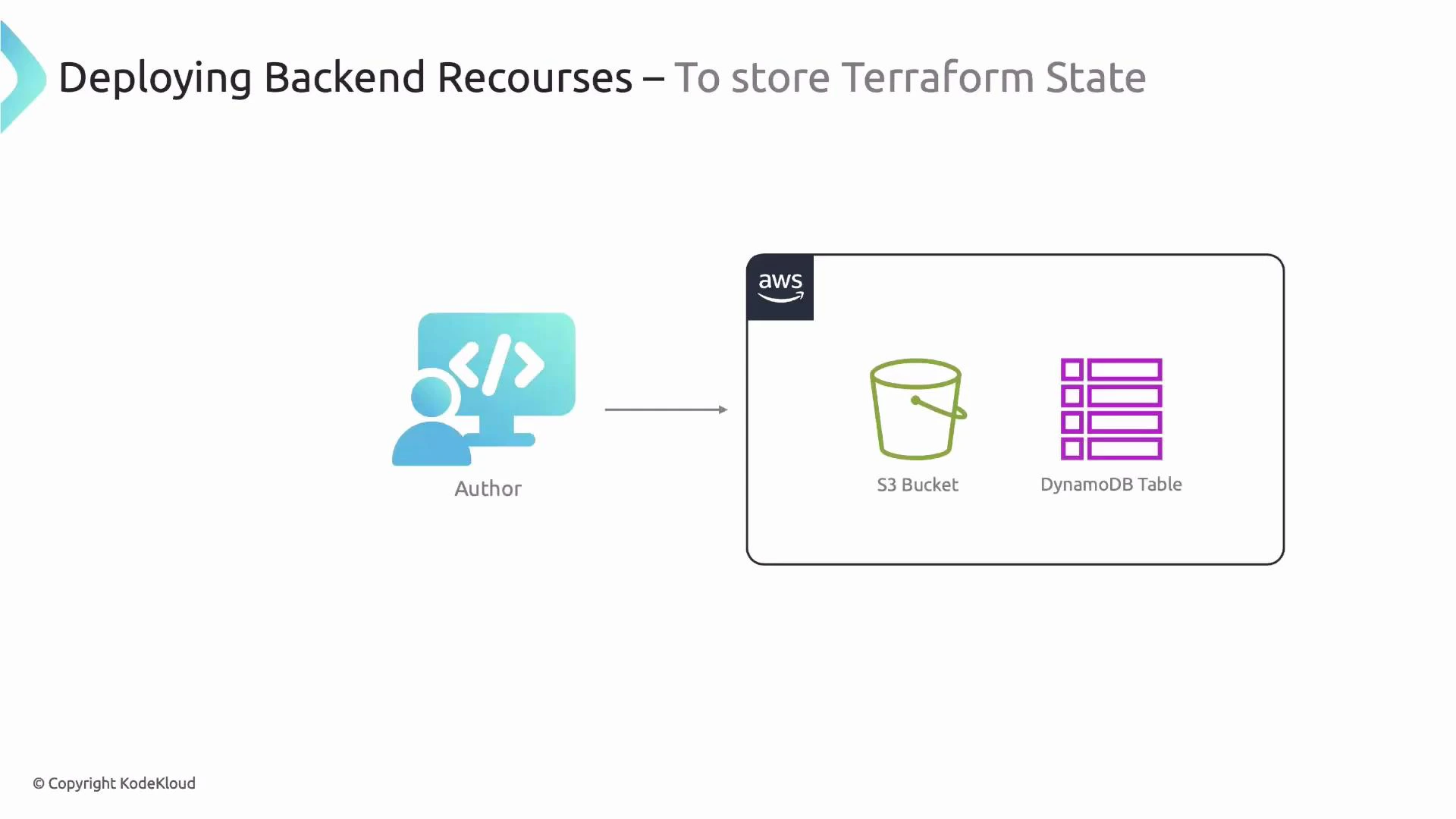

A remote backend provides a shared state file and avoids inconsistent local copies. On AWS a common production-ready choice is:

- S3 to store the Terraform state file (shared storage)

- DynamoDB to provide a locking mechanism to prevent concurrent apply/plan operations

With this setup, Terraform stores state at s3://cdktf-name-picker-backend/state-file and uses the DynamoDB table for locking.
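As a rough illustration, an S3 backend with DynamoDB locking can be declared inside a stack with CDKTF's S3Backend class. This is a sketch, not the lesson's original code: the bucket and key come from the example path above, while the region, lock table name, stack class, and stack ID are assumptions.

```typescript
import { Construct } from "constructs";
import { App, S3Backend, TerraformStack } from "cdktf";

// Hypothetical stack that stores its state remotely.
class NamePickerStack extends TerraformStack {
  constructor(scope: Construct, id: string) {
    super(scope, id);

    new S3Backend(this, {
      bucket: "cdktf-name-picker-backend", // bucket from the example path
      key: "state-file", // object key of the state file inside the bucket
      region: "us-east-1", // assumption: use your bucket's region
      dynamodbTable: "cdktf-name-picker-backend-lock", // assumption: your lock table
      encrypt: true, // request server-side encryption for the state object
    });
  }
}

const app = new App();
new NamePickerStack(app, "name-picker");
app.synth();
```

Both the bucket and the table must already exist when Terraform initializes this backend, which is what the rest of the lesson works through.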

Creating the backend resources (S3 + DynamoDB)

You must create the S3 bucket and DynamoDB table before the backend can be used. Common approaches:| Option | When to use | Pros / Cons |

|---|---|---|

| Manual (AWS Console) | Quick one-off setup | Quick, but not reproducible or auditable |

| CDKTF (your project) | Keep everything in code | Reproducible, but can create a circular dependency if not bootstrapped first |

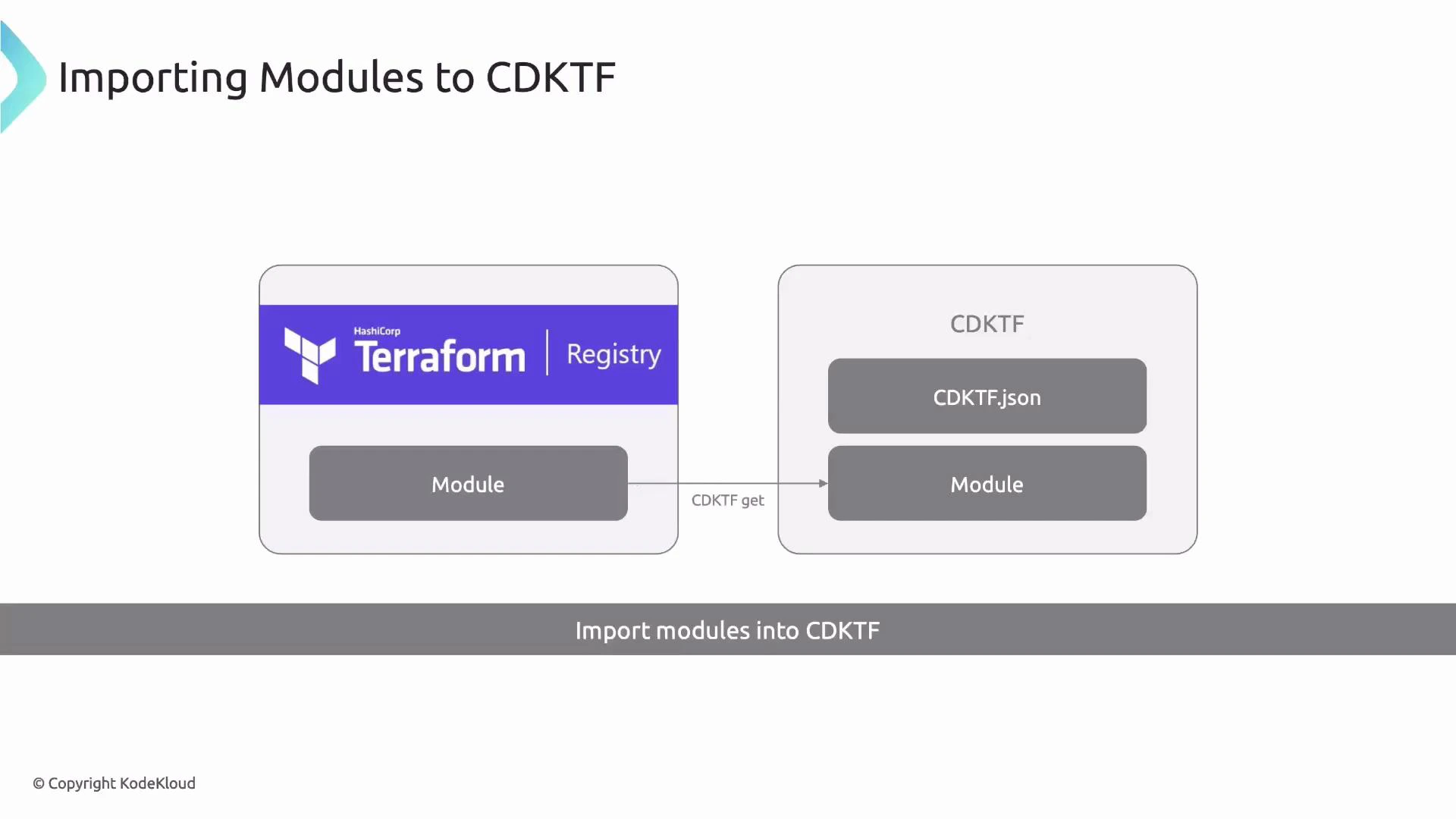

| Import a Terraform Registry module | Reuse community modules | Fast and consistent; integrates with CDKTF via cdktf get |

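Terraform Registry modules are brought into a CDKTF project with cdktf get. List the modules you want in cdktf.json (under terraformModules), then run yarn cdktf get or cdktf get to fetch modules and generate .gen wrappers. After that, import them like any other CDKTF construct.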

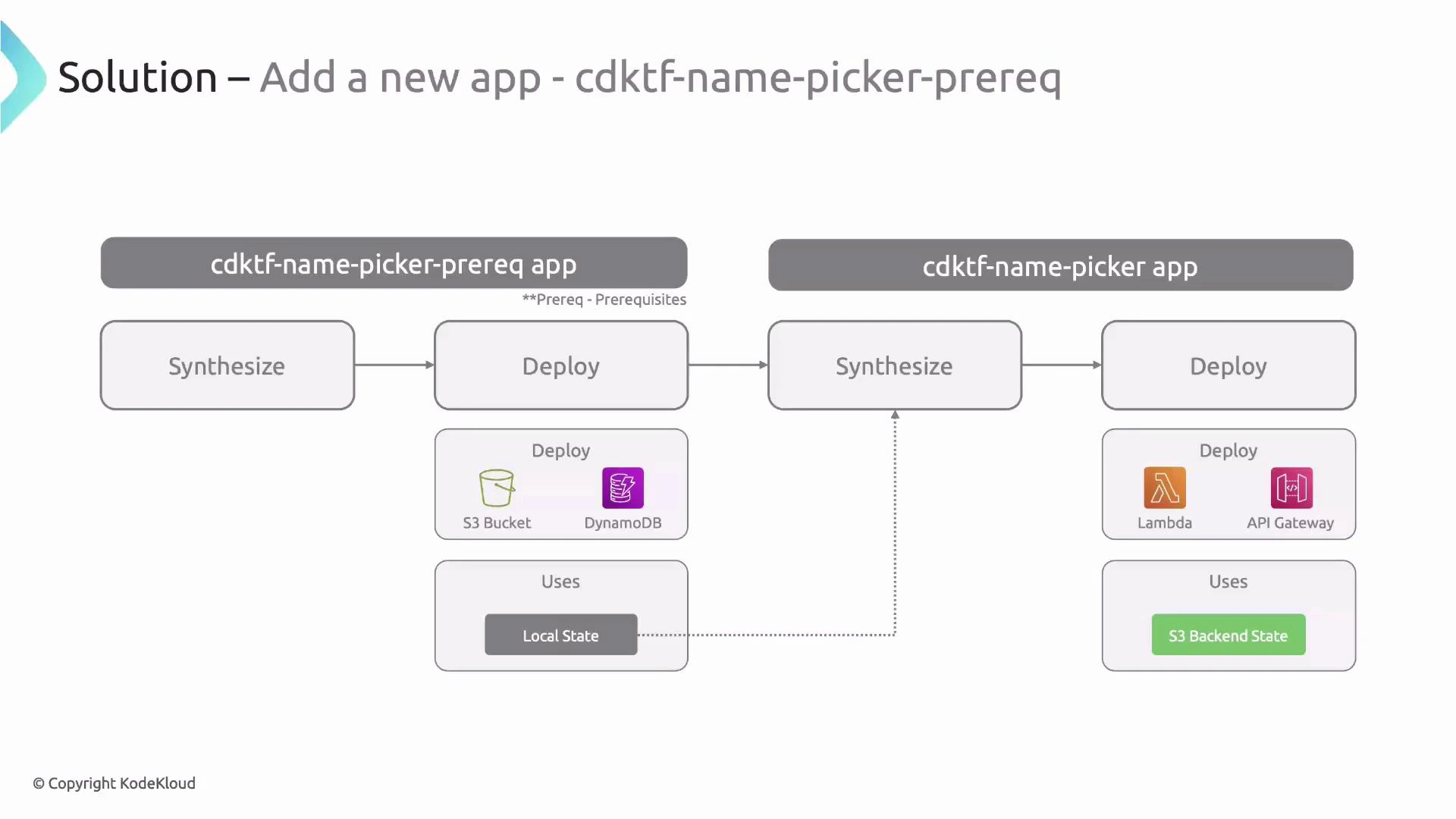

Circular dependency problem and the recommended solution

Problem scenario:

- CDKTF synthesizes your main app into Terraform. CDKTF needs a backend configuration to be resolvable at synth-time.

- That backend (S3 + DynamoDB) may be created by Terraform itself as part of the same project.

Recommended solution: split the project into two apps:

- Prereq app (bootstraps the backend): uses local state and creates S3 + DynamoDB.

- Main app: uses the S3 backend created by the prereq app.

Implementation overview (step-by-step)

- Organize code: move the existing NamePicker stack to stacks/NamePickerStack.ts. Remove any S3Backend declarations from it; the backend will be injected later by a base stack.

- Create a Prereq stack that provisions the S3 bucket and DynamoDB table and emits outputs.

Use the AWS account ID or another unique suffix for S3 bucket names to avoid global collisions — S3 bucket names must be globally unique.
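One way to apply this naming advice is a small helper that appends the account ID to a project prefix and checks the result against the basic S3 naming rules. This is only a sketch: the helper name is hypothetical, and it assumes the account ID is available as a plain string at synth time (for example from configuration) rather than as an unresolved Terraform token.

```typescript
// Hypothetical helper: builds a bucket name from a prefix and a 12-digit AWS account ID.
function backendBucketName(prefix: string, accountId: string): string {
  if (!/^\d{12}$/.test(accountId)) {
    throw new Error(`expected a 12-digit AWS account ID, got "${accountId}"`);
  }
  const name = `${prefix}-${accountId}`.toLowerCase();
  // Basic S3 rules: 3-63 characters, lowercase letters, digits and hyphens
  // (dots are also legal but avoided here), starting and ending with a letter or digit.
  if (!/^[a-z0-9][a-z0-9-]{1,61}[a-z0-9]$/.test(name)) {
    throw new Error(`"${name}" is not a valid S3 bucket name`);
  }
  return name;
}

// Example with a placeholder account ID.
const bucketName = backendBucketName("cdktf-name-picker-backend", "123456789012");
```

Failing fast at synth time is a design choice here: an invalid name would otherwise only surface when AWS rejects the bucket during deploy.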

- Add a small config module for project-level constants:
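A config module for this step might look like the sketch below. The constant names and the project name are assumptions chosen to match the bucket name and the terraform.<BACKEND_NAME>.tfstate file used elsewhere in this lesson; in a real module these constants would be exported.

```typescript
// Project-level constants (illustrative values; adjust to your project).
const PROJECT_NAME = "cdktf-name-picker";

// Used as the prereq stack ID and as the base name for backend resources.
const BACKEND_NAME = `${PROJECT_NAME}-backend`;

// Local state file written for a stack whose ID is BACKEND_NAME.
const PREREQ_STATE_FILE = `terraform.${BACKEND_NAME}.tfstate`;
```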

- Add package.json scripts to manage prereq lifecycle separately:

- Deploy the prereq app to create the backend resources:

- Create a reusable base stack that sets up the S3 backend for downstream stacks by reading outputs from the prereq state file:

The base stack reads terraform.<BACKEND_NAME>.tfstate (the prereq’s local state file, created when you applied the prereq app) and configures an S3 backend for any stack that extends it. The key uses the stack ID so each stack gets its own state key in the bucket.
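The lookup of prereq outputs can be sketched as a small function over the raw JSON of a state file, which keeps its outputs under a top-level outputs object. The helper name, the output names (bucket, dynamodbTable), the table name, and the stack ID are assumptions for illustration; the lesson's actual output names may differ. In a real project the JSON text would be read from the prereq state file on disk.

```typescript
// The only part of the state file this sketch reads.
interface TerraformStateOutputs {
  outputs?: Record<string, { value: unknown }>;
}

// Hypothetical helper: returns a string output from the JSON text of a state file.
function readStateOutput(stateJson: string, name: string): string {
  const state = JSON.parse(stateJson) as TerraformStateOutputs;
  const output = state.outputs?.[name];
  if (output === undefined || typeof output.value !== "string") {
    throw new Error(`output "${name}" not found or not a string`);
  }
  return output.value;
}

// Stand-in for the prereq state file contents (assumed output names and values).
const exampleState = JSON.stringify({
  version: 4,
  outputs: {
    bucket: { value: "cdktf-name-picker-backend", type: "string" },
    dynamodbTable: { value: "cdktf-name-picker-backend-lock", type: "string" },
  },
});

// Values a base stack could pass on to its S3 backend configuration.
const stackId = "name-picker"; // assumption: the ID of the stack extending the base stack
const backendConfig = {
  bucket: readStateOutput(exampleState, "bucket"),
  dynamodbTable: readStateOutput(exampleState, "dynamodbTable"),
  key: stackId, // one state key per stack
};
```

Throwing when an output is missing makes a forgotten prereq deploy show up as a clear synth error instead of a backend with an empty bucket name.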

- Update NamePicker stack to extend the base stack:

- Synthesize the main app:

Synthesis generates Terraform files under cdktf.out/stacks/<stack-name> that now reference the S3 backend configuration.

- Migrate the existing local state into the S3 backend. In the generated stack folder run:

When Terraform asks whether to copy the existing state to the new backend, answer yes to migrate state.

Example (condensed) interaction:

When migrating state, run terraform init -migrate-state inside the generated stack directory (e.g., cdktf.out/stacks/<stack-name>). Always back up local state files before migrating.

- Deploy the main app from the project root as usual:

After this deploy, the stack's state lives in S3 (s3://<prereq-bucket>/<stack-key>) and you can remove the local terraform.tfstate for that stack if you no longer need it locally. Keep the prereq state file if you want to use it for subsequent bootstrapping or redeploys.

Best practices and notes

- For new projects, prefer creating the remote backend first (prereq app) to avoid manual migration.

- Consider separating prereq and main app into different packages or workspaces inside a monorepo to avoid CDKTF overwriting cdktf.out during synths.

- Use unique names for globally scoped resources (like S3 buckets): incorporate account ID, region, or a generated UUID.

- For larger teams and pipelines, consider automating the prereq bootstrap in CI/CD so the backend is reproducibly created before running downstream synths.

- CDKTF’s generated .gen constructs let you reuse community Terraform modules from the Registry: run cdktf get to fetch and generate TypeScript wrappers.

Summary

- Terraform/CDKTF state is required to track resource metadata and plan accurate changes.

- Local state is acceptable for single-developer use but not for team collaboration.

- Use an S3 backend with DynamoDB locking for shared state on AWS.

- Avoid synth/deploy circular dependencies by splitting into a prereq app (bootstrap backend) and a main app (uses backend).

- Importing registry modules into CDKTF is straightforward with cdktf get.

- To move local state into S3, run terraform init -migrate-state in the generated stack directory.