This guide shows how to connect Cline to local large language models (LLMs) so you can run models on your own machine instead of relying on hosted services. Running LLMs locally is useful for experimenting with custom models from sources like Hugging Face, reducing API costs, and keeping data on-premises for privacy or compliance. We cover two common local runtimes: LM Studio and Ollama. Each section shows the configuration steps in Cline, example requests, and troubleshooting tips.

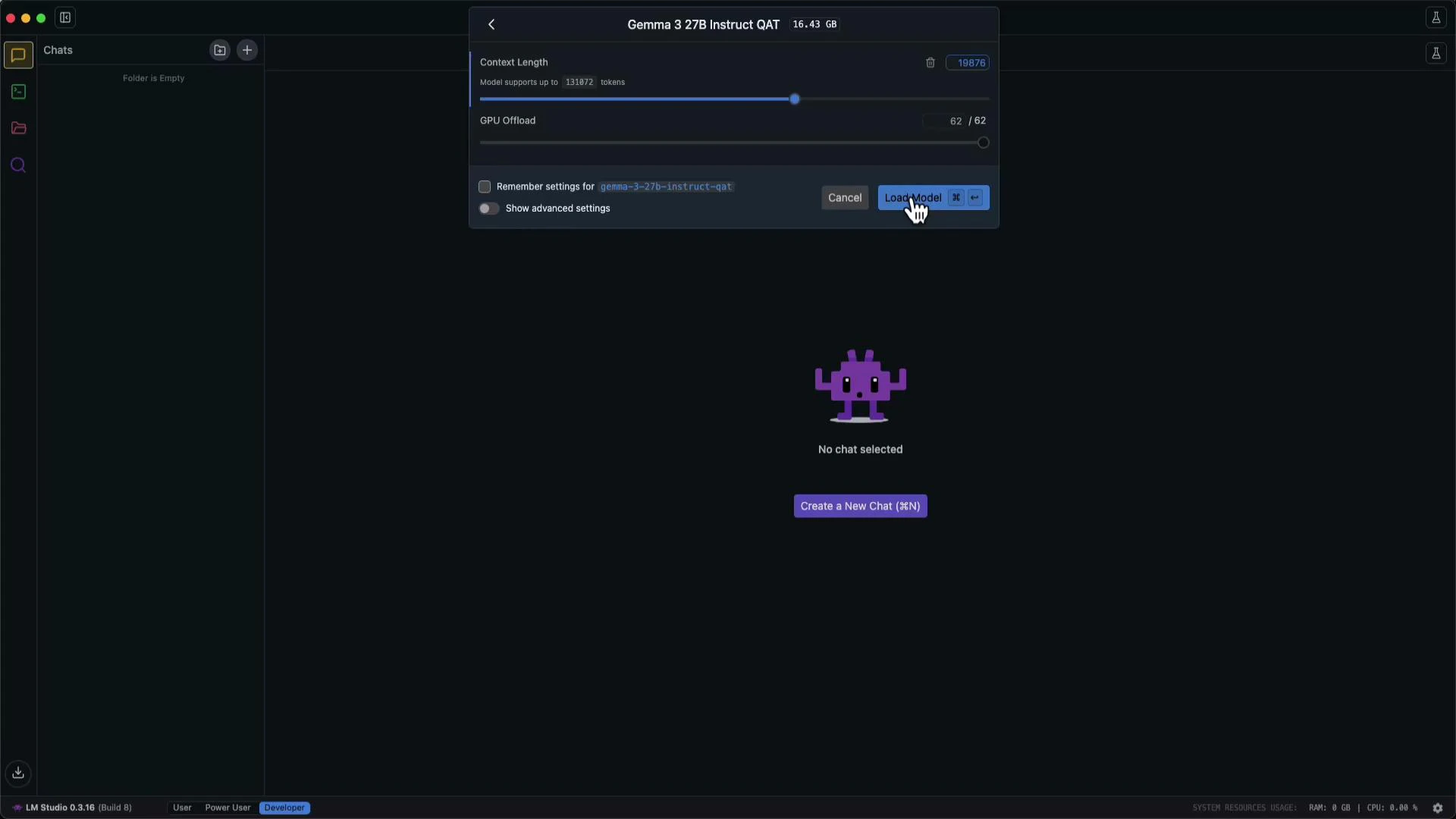

LM Studio — load and call a local model

LM Studio provides a GUI for running LLMs locally and exposes a simple HTTP API. In Cline, you select Local LLMs as the provider and point the app at LM Studio’s base URL. Steps to use LM Studio with Cline:

- Install and open LM Studio on your machine.

- Load the model you want to run (for example, Gemma 3 27B — the UI shows context length, GPU settings, and a Load Model button).

- Start LM Studio’s local server and copy its base URL (by default http://localhost:1234) into Cline’s Local LLMs settings.

- Start Cline’s developer server (recommended) so you can view logs while testing requests.

- Enter a prompt in Cline’s chat panel describing the file you want.

- Cline sends the request to the LM Studio API and returns a proposed file, which it then saves to your workspace.
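To confirm that LM Studio’s server responds before (or while) wiring it into Cline, you can call it directly. The following is a minimal sketch using only the Python standard library. It assumes LM Studio’s OpenAI-compatible endpoints (`/v1/models` and `/v1/chat/completions`) at the default base URL `http://localhost:1234/v1`; the helper names are hypothetical, and it is not how Cline itself builds its requests.

```python
import json
import urllib.request

# Assumption: LM Studio's server on its default port, serving its OpenAI-compatible API.
BASE_URL = "http://localhost:1234/v1"


def build_models_request(base_url: str = BASE_URL) -> urllib.request.Request:
    """GET <base>/models: lists the model IDs the server can answer with."""
    return urllib.request.Request(f"{base_url.rstrip('/')}/models", method="GET")


def build_chat_request(prompt: str, model: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """POST <base>/chat/completions with one user message and streaming disabled."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 120.0) -> dict:
    """Send the request and decode the JSON reply; raises on HTTP or network errors."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Usage: `send(build_models_request())` returns the model list with IDs under `data`; pass one of those IDs (a placeholder such as `gemma-3-27b` will not necessarily match your install) to `build_chat_request`, and the reply text of `send(...)` is at `["choices"][0]["message"]["content"]`. If these calls fail, Cline will not be able to reach the server either.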

Running models locally also lets you:

- Experiment with custom checkpoints from Hugging Face or other model repos.

- Reduce development costs by avoiding paid hosted APIs while prototyping.

- Keep source code and prompts on-premises for privacy and compliance.

Running LLMs locally is a good option when organizational policies restrict sending code or data to hosted providers (like OpenAI or Anthropic). It also gives you full control over model versions and runtime settings.

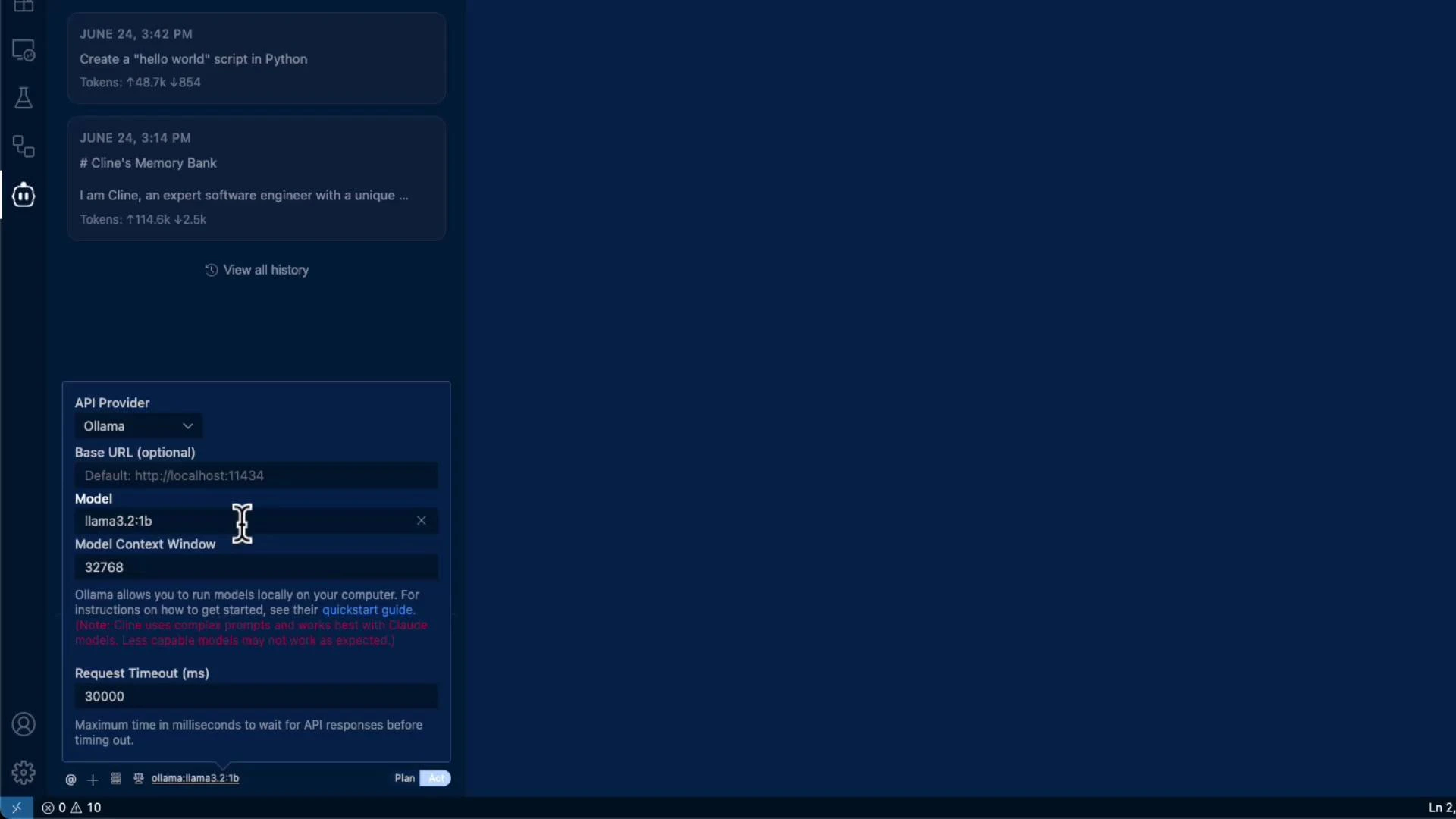

Ollama — another local runtime option

Ollama is a popular local LLM runtime that exposes a server you can point Cline to. When you choose Ollama as the provider in Cline’s API settings, the UI lists the models available in your local Ollama installation. Model behavior varies, so try a few models to find the best fit for Cline’s edit-and-suggest workflows. The steps below select a model in Cline (for example, llama3.2:1b) and use it to apply an edit to an existing file.

- Switch Cline’s API provider to Ollama and choose a model (for example, llama3.2:1b).

- Open hello.py in the editor or add it to the model context.

- Issue an edit request describing the change you want to make to hello.py.
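The same kind of check works against Ollama’s native API, which by default listens on `http://localhost:11434`: `/api/tags` lists the installed models (the ones Cline should offer) and `/api/chat` runs a chat turn. The sketch below assumes those defaults. `build_edit_request` and its prompt wording are hypothetical and far simpler than what Cline actually sends; they only illustrate passing a file such as `hello.py` to the model as context.

```python
import json
import urllib.request

# Assumption: Ollama's server at its default address.
OLLAMA_URL = "http://localhost:11434"


def model_names(tags_response: dict) -> list[str]:
    """Pull model names (e.g. "llama3.2:1b") out of an /api/tags response body."""
    return [m["name"] for m in tags_response.get("models", [])]


def build_edit_request(model: str, file_name: str, source: str, instruction: str,
                       base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Hypothetical helper: POST /api/chat asking the model to edit one file.

    The file's contents are included in the prompt so the model has them as context.
    """
    prompt = (
        f"{instruction}\n\n"
        f"Current contents of {file_name}:\n{source}\n\n"
        "Reply with the complete updated file only."
    )
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # one JSON object instead of a stream of chunks
    }
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def fetch_json(url_or_request, timeout: float = 120.0) -> dict:
    """GET a URL (str) or send a prepared Request, returning the decoded JSON body."""
    with urllib.request.urlopen(url_or_request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Usage: `model_names(fetch_json(OLLAMA_URL + "/api/tags"))` returns the installed model names, and with streaming disabled the reply text of `fetch_json(build_edit_request(...))` is under `["message"]["content"]`. Small models such as llama3.2:1b may not follow the "complete file only" instruction reliably, which is one reason to try several models.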

LM Studio and Ollama at a glance:

| Feature | LM Studio | Ollama |
|---|---|---|
| Access method | GUI with built-in HTTP API | CLI/runtime with HTTP API |
| Best for | Visual model management, GPU settings | Lightweight local serving, model management via CLI |
| Pros | Easy model loading in the UI, model details visible | Quick to deploy, integrates with local tooling |
| Cons | GUI overhead, may require more resources | Model compatibility varies, fewer GUI controls |

Tips for working with local models:

- Model output format: Some local models return slightly different response formats; if Cline’s change suggestions look incorrect, try another model or refine the prompt.

- Resource limits: Larger models require adequate GPU/CPU and memory. Monitor LM Studio/Ollama logs and system resources.

- Logs: Run Cline in developer mode to inspect request and response logs for debugging.

- Model compatibility: If edits are inconsistent, test different models (or smaller/larger variants) to find one that produces coherent edit suggestions.

Common problems and fixes:

| Problem | Likely cause | Fix |
|---|---|---|
| Cline shows no models | Incorrect base URL or service not running | Verify the LM Studio/Ollama server is running and use the correct base URL |
| Timeouts or slow responses | Model too large for the available hardware | Increase the timeout in Cline settings, use a smaller model, or upgrade hardware |
| Incorrect suggestion format | Model response doesn’t match the expected schema | Try a different model or add clearer instructions to the prompt |
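For the "Cline shows no models" and timeout cases, probing each runtime’s model-list endpoint helps tell apart a server that is down, a wrong base URL path, and a model that is merely slow. This is a minimal sketch that assumes the default local addresses for LM Studio (`http://localhost:1234`) and Ollama (`http://localhost:11434`); edit the hypothetical `ENDPOINTS` mapping if you changed ports. It deliberately bypasses any HTTP proxy configured in the environment, since both servers run on the local machine.

```python
import json
import socket
import urllib.error
import urllib.request

# Assumed default model-list endpoints for stock local installs.
ENDPOINTS = {
    "LM Studio": "http://localhost:1234/v1/models",  # OpenAI-compatible list
    "Ollama": "http://localhost:11434/api/tags",  # Ollama's native list
}

# Local servers should not be reached through a proxy taken from the environment.
_NO_PROXY_OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def probe(url: str, timeout: float = 5.0) -> tuple[bool, str]:
    """Return (ok, detail) for a model-list URL; network errors are reported, not raised."""
    try:
        with _NO_PROXY_OPENER.open(url, timeout=timeout) as resp:
            body = json.loads(resp.read().decode("utf-8"))
    except urllib.error.HTTPError as err:  # must precede URLError, its parent class
        return False, f"HTTP {err.code}: server is up, but check the base URL path"
    except (urllib.error.URLError, socket.timeout, ConnectionError) as err:
        return False, f"unreachable ({err}); is the server running on this port?"
    except ValueError:  # covers JSONDecodeError and UnicodeDecodeError
        return False, "responded, but not with JSON; check the base URL"
    if not isinstance(body, dict):
        return False, "unexpected response shape"
    # LM Studio lists models under "data"; Ollama lists them under "models".
    models = body.get("data", body.get("models", []))
    return True, f"OK, {len(models)} model(s) listed"


def probe_all(endpoints: dict[str, str] = ENDPOINTS,
              timeout: float = 5.0) -> dict[str, tuple[bool, str]]:
    """Probe every endpoint and return {runtime name: (ok, detail)}."""
    return {name: probe(url, timeout) for name, url in endpoints.items()}
```

Calling `probe_all()` and printing each entry shows at a glance which runtime is reachable. A server that lists models quickly but still times out in Cline points at model size or hardware rather than the base URL.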

Running large models locally can consume significant GPU/CPU and memory. Ensure your machine meets the recommended requirements for the model you plan to run, and monitor logs and system resources to avoid crashes.
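To judge whether a model fits, a common back-of-the-envelope estimate (a rule of thumb, not a figure published by LM Studio or Ollama) is that the weights alone need roughly parameters × bits per weight ÷ 8 bytes. The KV cache, which grows with context length, and runtime overhead come on top, so treat the result as a lower bound. The function name below is illustrative.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Rough memory for model weights only, in decimal GB (1 GB = 1e9 bytes).

    Ignores the KV cache, activations, and runtime overhead, so it is a lower bound.
    """
    bytes_needed = num_params * bits_per_weight / 8  # 8 bits per byte
    return bytes_needed / 1e9


for bits in (16, 8, 4):
    print(f"27B parameters at {bits}-bit: ~{weight_memory_gb(27e9, bits):.1f} GB")
```

For a 27B-parameter model such as Gemma 3 27B, this gives about 54 GB at 16-bit, 27 GB at 8-bit, and 13.5 GB at 4-bit precision, before any context or overhead is counted.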

Summary

Connecting Cline to local LLMs (LM Studio, Ollama, etc.) gives you the flexibility to:

- Experiment with custom or community models,

- Reduce or eliminate API costs during development,

- Keep sensitive code and data within your environment.

Resources:

- LM Studio: https://lmstudio.ai/

- Ollama: https://ollama.com/

- Hugging Face model hub: https://huggingface.co/