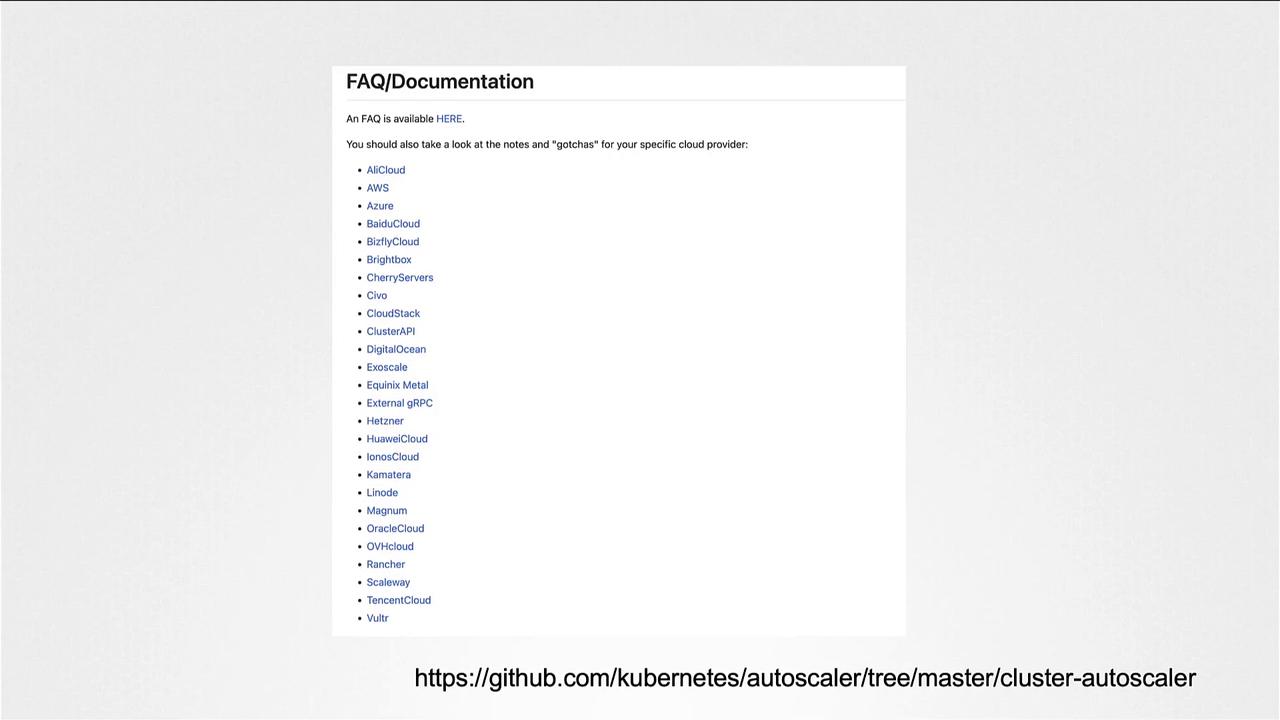

In this article, we explore the Cluster Autoscaler and its role in optimizing resource allocation within a Kubernetes cluster. Previously, we discussed how pods consume resources and how the Kubernetes scheduler assigns these pods to nodes with enough available capacity.

As more pods are deployed, however, available resources become scarce. When no node has enough free capacity for a new pod, that pod remains in the Pending state, and the Cluster Autoscaler steps in to scale the cluster automatically by adding new compute instances.

The Cluster Autoscaler integrates with the underlying infrastructure of your cloud provider. Each supported provider has its own specific requirements and capabilities. For an up-to-date list of compatible cloud providers, refer to the Kubernetes Cluster Autoscaler documentation; the accompanying diagram shows the supported cloud providers.
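To make the scale-up trigger described above concrete, here is a minimal Python sketch. It is a toy model, not the Cluster Autoscaler's actual algorithm: it folds scheduling and scaling into one hypothetical `schedule` function, tracks CPU requests only, and assumes every new node has the same size. The names `Node`, `schedule`, `node_size`, and `max_nodes` are illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    cpu_millicores: int                        # allocatable CPU on this node
    pods: list = field(default_factory=list)   # CPU requests of pods placed here

    def free(self) -> int:
        return self.cpu_millicores - sum(self.pods)


def schedule(pod_requests, nodes, node_size, max_nodes):
    """Place each pod on the first node with room; add a node when none fits.

    Returns the requests that stay pending because the node limit was reached
    (or because the pod is larger than a whole node).
    """
    pending = []
    for request in pod_requests:
        target = next((n for n in nodes if n.free() >= request), None)
        if target is None and len(nodes) < max_nodes and request <= node_size:
            # No existing node fits: this is the point where an autoscaler
            # would provision a new compute instance.
            target = Node(node_size)
            nodes.append(target)
        if target is None:
            pending.append(request)
        else:
            target.pods.append(request)
    return pending


nodes = [Node(2000)]
pending = schedule([1500, 1000, 1000, 1500], nodes, node_size=2000, max_nodes=2)
print(len(nodes), pending)  # 2 [1500]
```

With a limit of two nodes, the second pod triggers one scale-up, and the last pod stays pending because neither node has 1500 millicores free and no further node may be added.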


On Google Cloud, you can easily configure the Cluster Autoscaler by setting autoscaling options during the creation of your Kubernetes cluster.

Configuration settings can vary significantly between cloud providers. Always consult your specific cloud provider’s documentation for precise instructions.
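As an illustration of setting autoscaling options at cluster creation on Google Cloud, the sketch below assembles (but does not run) a `gcloud container clusters create` command using the `--enable-autoscaling`, `--min-nodes`, and `--max-nodes` flags. The helper name `autoscaling_create_command`, the cluster name, the zone, and the node counts are placeholder assumptions; check the current gcloud reference before relying on any of them.

```python
import shlex


def autoscaling_create_command(cluster, zone, min_nodes, max_nodes):
    """Build (not execute) a gcloud command that creates a GKE cluster
    with node autoscaling enabled between min_nodes and max_nodes."""
    if min_nodes > max_nodes:
        # Sanity check for this sketch only.
        raise ValueError("min_nodes must not exceed max_nodes")
    return [
        "gcloud", "container", "clusters", "create", cluster,
        f"--zone={zone}",
        "--enable-autoscaling",
        f"--min-nodes={min_nodes}",
        f"--max-nodes={max_nodes}",
    ]


# Placeholder values for illustration only.
cmd = autoscaling_create_command("demo-cluster", "us-central1-a", 1, 5)
print(shlex.join(cmd))
```

Building the argument list separately makes it easy to review the exact command before handing it to a shell or to `subprocess.run`.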

| Aspect | Description | Example Command/Reference |
|---|---|---|
| Resource Scaling | Automatically adds nodes when pods are pending due to resource shortages | `gcloud container clusters create CLUSTER_NAME --enable-autoscaling --min-nodes=MIN --max-nodes=MAX` |
| Provider-Specific Setup | Varies by cloud provider; refer to the provider's documentation | Google Cloud Autoscaling |