This guide explains how to configure a Prometheus server to scrape metrics from one or more nodes. After installing Prometheus and setting up Node Exporter on each node to expose metrics, you must explicitly configure Prometheus to discover and scrape these targets, because Prometheus uses a pull-based model. The configuration is maintained in the prometheus.yaml file, typically found in the /etc/prometheus directory. A basic configuration has two top-level sections:

- The global section defines default parameters, which can be inherited or overridden by other sections.

- The scrape_configs section specifies the target endpoints for scraping metrics.
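
The two sections described above might look like the following minimal sketch. The interval, timeout, job name, and the localhost:9090 target are illustrative assumptions, not values taken from a specific installation.

```yaml
# Minimal sketch of a Prometheus configuration file (values are assumptions).
global:
  scrape_interval: 1m    # default interval, inherited by every job
  scrape_timeout: 10s    # default timeout, inherited by every job

scrape_configs:
  # A single job in which Prometheus scrapes its own metrics endpoint.
  - job_name: prometheus
    static_configs:
      - targets: ["localhost:9090"]   # assumed default listen address
```

Any job that does not set its own interval or timeout inherits the values from the global section.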

Detailed Scrape Configuration

Prometheus uses the scrape_configs block to identify targets for metric collection. Additional per-job parameters, such as scrape_interval, scrape_timeout, and sample_limit, let you fine-tune the scraper's behavior. Consider an enhanced configuration in which:

- Global defaults specify a 1-minute scrape interval and a 10-second timeout.

- The node job overrides these defaults, setting a 15-second interval and a 5-second timeout.

- The static_configs block lists the IP address and port of each target to scrape.
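
The settings listed above can be sketched as follows. The target address is borrowed from the Node Exporter example later in this guide, and the sample_limit value is a hypothetical number chosen only for illustration.

```yaml
# Sketch of global defaults with a per-job override (some values are assumptions).
global:
  scrape_interval: 1m    # global default: scrape every minute
  scrape_timeout: 10s    # global default: give up after 10 seconds

scrape_configs:
  - job_name: node
    scrape_interval: 15s   # overrides the 1m global default for this job
    scrape_timeout: 5s     # overrides the 10s global default for this job
    sample_limit: 1000     # hypothetical cap on samples accepted per scrape
    static_configs:
      - targets: ["192.168.1.168:9100"]   # target IP and port
```

Note that a job's scrape_timeout cannot exceed its scrape_interval, so the two values should be adjusted together.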

Customizing Job Configurations

When adding a new job under scrape_configs, you must give it a job name. You can also set the scrape interval, timeout, scheme (HTTP or HTTPS), and metrics path; any setting you omit falls back to the global value or the built-in default. By default, Prometheus scrapes metrics over HTTP from the /metrics endpoint, but you can change this if your target exposes metrics at a different endpoint.

For instance, a configuration that scrapes two targets over HTTPS using a custom metrics path might consist of:

- A job called nodes that scrapes every 30 seconds.

- A 3-second scrape timeout.

- HTTPS as the scheme, to secure the connection.

- A metrics path changed from the default /metrics to /stats/metrics.

- Two target nodes, each specified by its IP address and port.
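
The job described by the list above can be sketched like this. The source does not give the two target addresses, so the ones below are placeholders from a documentation-only IP range and should be replaced with real hosts.

```yaml
# Sketch of a job that scrapes two targets over HTTPS with a custom path.
scrape_configs:
  - job_name: nodes
    scrape_interval: 30s            # scrape every 30 seconds
    scrape_timeout: 3s              # abandon a scrape after 3 seconds
    scheme: https                   # use HTTPS instead of the default HTTP
    metrics_path: /stats/metrics    # replaces the default /metrics
    static_configs:
      - targets:
          - "192.0.2.10:9100"   # placeholder address (assumption)
          - "192.0.2.11:9100"   # placeholder address (assumption)
```

Both targets share every setting of the job; targets that need different settings belong in separate jobs.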

Updating the Prometheus Configuration

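After modifying the prometheus.yaml file, note that Prometheus does not pick up the changes automatically. You must restart the Prometheus process or signal it to reload its configuration. If you are running Prometheus manually (e.g., using ./prometheus), you can press Ctrl+C and start the process again. For Prometheus running under systemd, restart the service, for example with sudo systemctl restart prometheus (assuming the unit is named prometheus). Alternatively, sending the SIGHUP signal to the Prometheus process makes it reload the configuration file without a full restart.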

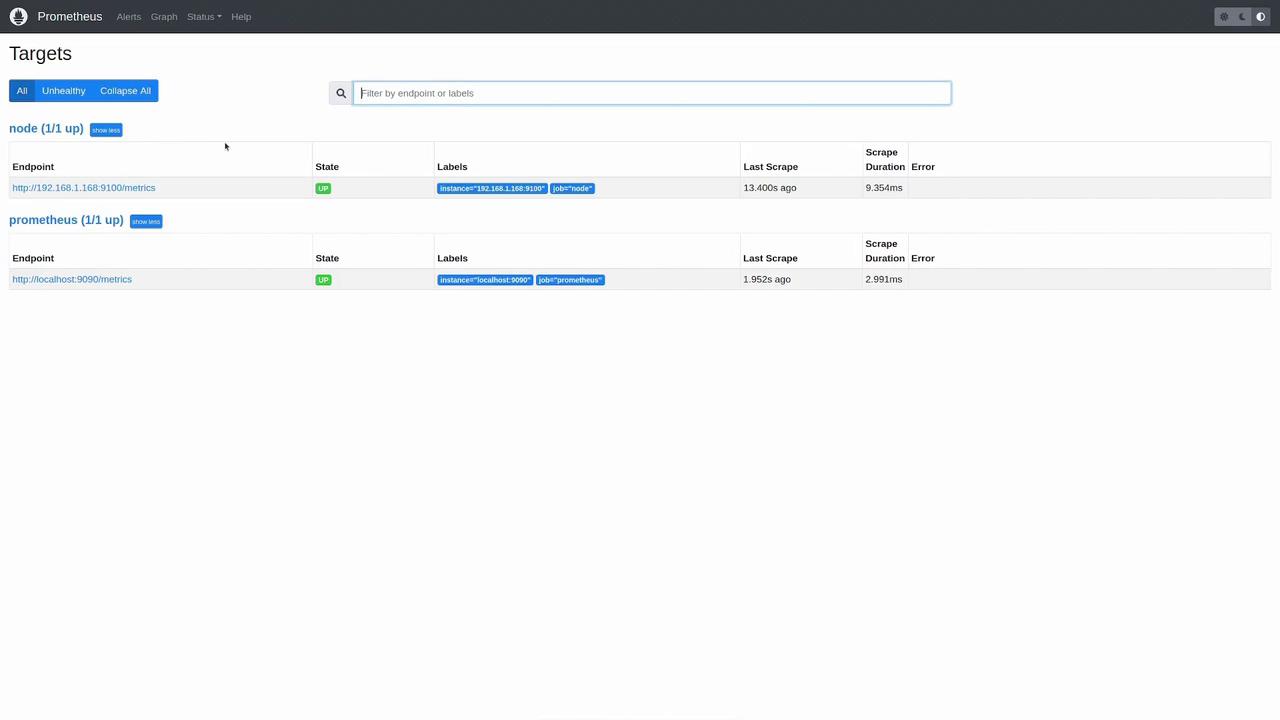

As a complete example, consider an updated configuration in which:

- The prometheus job continues to scrape Prometheus itself.

- A new node job is added to scrape a Linux machine running Node Exporter on IP 192.168.1.168 at port 9100.
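
The two jobs described above can be sketched as follows. The Node Exporter address comes from the text; the localhost:9090 address for Prometheus itself is an assumption based on its default port.

```yaml
# Sketch of a configuration with a self-scrape job and a Node Exporter job.
scrape_configs:
  # Existing job: Prometheus scraping its own metrics.
  - job_name: prometheus
    static_configs:
      - targets: ["localhost:9090"]        # assumed default address

  # New job: a Linux machine running Node Exporter.
  - job_name: node
    static_configs:
      - targets: ["192.168.1.168:9100"]    # IP and port from the example
```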

Remember to save your changes to prometheus.yaml and restart Prometheus to apply the new configuration.

Verifying the Configuration

After restarting Prometheus, open the Prometheus web UI and navigate to the “Status” -> “Targets” page. Here you can inspect all configured targets and their scrape status. Both the Prometheus target and the new node target should show the state “UP”, which indicates that scrapes are succeeding.

This article demonstrated how to modify your Prometheus configuration file to add new scrape targets and adjust parameters such as scrape interval, timeout, and metrics path. With these steps in place, Prometheus can collect metrics both from itself and from external nodes running Node Exporter.