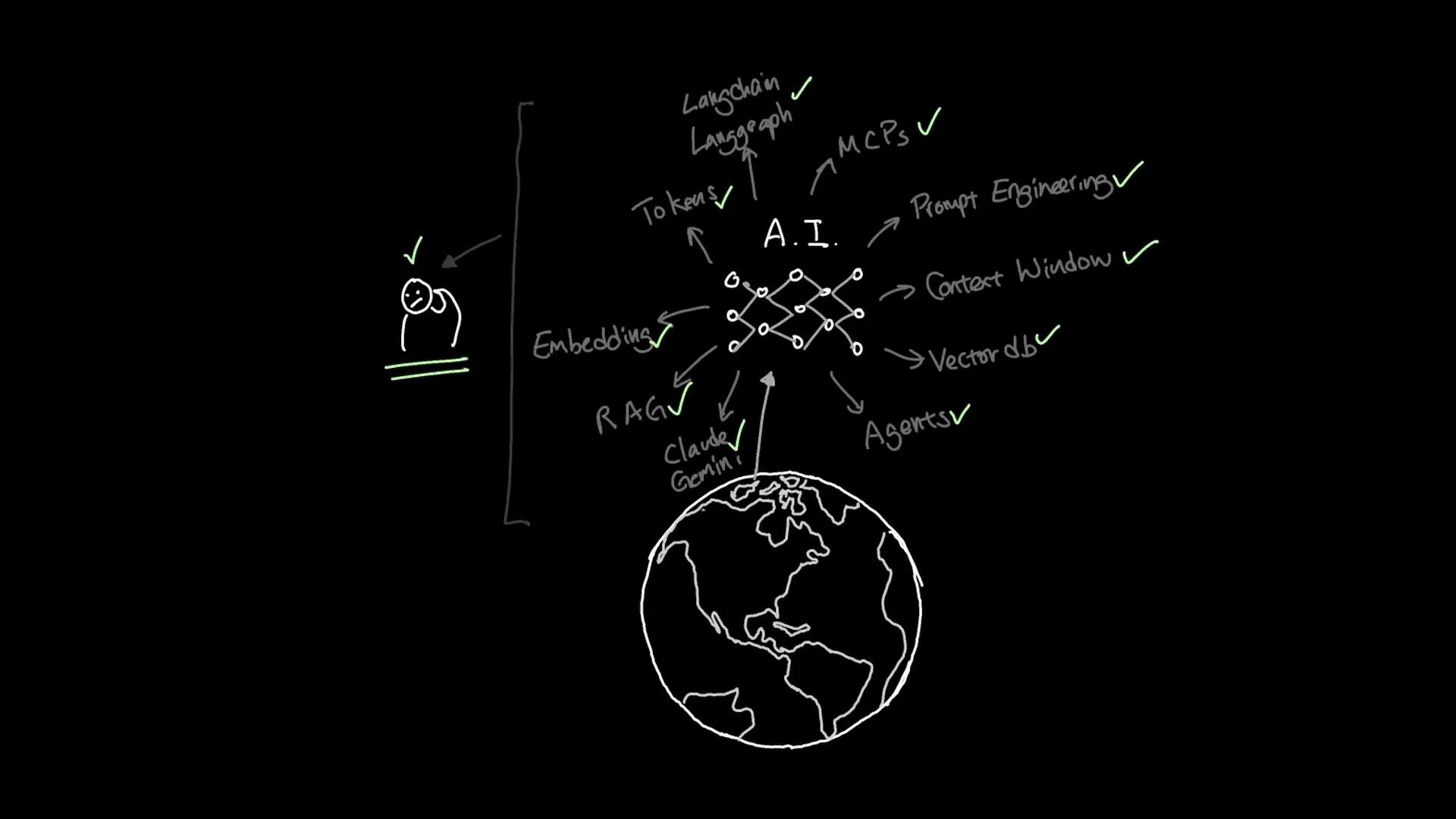

AI has advanced rapidly over the past few years. Today’s practical toolkit for building intelligent applications includes concepts and technologies such as prompt engineering, context windows, tokens, embeddings, Retrieval-Augmented Generation (RAG), vector databases, the Model Context Protocol (MCP), orchestration libraries like LangChain and LangGraph, and AI agents. This lesson gives a concise, hands-on overview so you can understand how these pieces fit together and start building right away.

This lesson assumes no prior knowledge. It’s structured around a single, practical project that integrates fundamental AI concepts (tokens, embeddings, context windows, prompt design) with retrieval and orchestration (RAG, vector databases, LangChain/LangGraph, MCP, and agents).

| Topic | What it is | Why it matters |
|---|---|---|
| Tokens, context windows, prompt design | The basic units and limits of language model input, and strategies for guiding model behavior | Affects cost, capability, and response quality |
| Embeddings | Numerical vectors that represent the meaning of text | Enables semantic search and similarity-based retrieval |
| Retrieval-Augmented Generation (RAG) | Retrieving relevant content from a knowledge store and passing it to a model for generation | Improves the factual accuracy and relevance of LLM outputs |
| Vector databases | Storage and indexing systems for embeddings | Provides fast, scalable similarity search for RAG pipelines |
| LangChain / LangGraph | Orchestration libraries for composing models, prompts, and tools | Simplifies building complex, multi-step AI workflows (agents) |
| Model Context Protocol (MCP) | An open protocol for how applications expose context and tools to models | Helps agents coordinate model calls and external tools |
| AI agents | Systems that use models and tools to perform tasks autonomously | Enables multi-step, tool-enabled workflows such as data lookups, API calls, and reasoning |
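To make the embeddings, vector search, and RAG rows more concrete, here is a minimal sketch of the retrieval step in Python. It is an illustration under loud assumptions, not the lesson's project code: the `embed` function is a hypothetical bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and all function names and sample documents are invented for this example.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts (assumption: stands in for a real embedding model)."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by similarity to the query (the job a vector database does at scale)."""
    query_vec = embed(query)
    ranked = sorted(
        documents,
        key=lambda doc: cosine_similarity(query_vec, embed(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query: str, context: list[str]) -> str:
    """Assemble a RAG-style prompt: retrieved context first, then the question."""
    context_block = "\n".join(f"- {passage}" for passage in context)
    return f"Answer using only this context:\n{context_block}\n\nQuestion: {query}"


# Hypothetical sample knowledge store.
documents = [
    "tokens are the units a language model reads",
    "a vector database stores embeddings for similarity search",
    "agents use models and tools to complete tasks",
]

query = "where are embeddings stored for search"
top_passages = retrieve(query, documents, top_k=1)
prompt = build_prompt(query, top_passages)
print(prompt)
```

In a real pipeline, `embed` would call an embedding model, the ranking would be delegated to a vector database, and the assembled prompt would be sent to a language model. The overall shape (embed, retrieve, build the prompt) stays the same.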

- Core AI fundamentals (tokens, embeddings, context windows, prompt design)

- Retrieval-Augmented Generation and vector databases — how embeddings are stored and searched

- Orchestration with LangChain and LangGraph, and how they help build agents

- MCP and agent coordination across models and tools

- A single end-to-end project that ties these components together