In this walkthrough, we’ll verify how Amazon EKS and Kubernetes self-heal after a Pod deletion, and confirm there’s no impact on end-users by monitoring the application UI, Real User Monitoring (RUM), and control plane metrics in CloudWatch.

1. Verify Pod Auto-Replacement
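The replacement can also be confirmed from a script by comparing the set of Pod names before and after the deletion. The following is a minimal sketch, not the command used in this demo: it assumes `kubectl` is on the PATH and configured for the cluster, and the namespace and label selector arguments are placeholders you would adapt.

```python
import json
import subprocess


def pod_names(namespace="default", selector=None):
    """Return the set of Pod names reported by `kubectl get pods -o json`.

    The namespace default and the optional label selector are assumptions;
    adjust them to match where the workload actually runs.
    """
    cmd = ["kubectl", "get", "pods", "-n", namespace, "-o", "json"]
    if selector:
        cmd += ["-l", selector]
    out = subprocess.run(cmd, check=True, capture_output=True, text=True).stdout
    return {item["metadata"]["name"] for item in json.loads(out)["items"]}


def replaced_pods(before, after):
    """Compare two snapshots of Pod names.

    Returns (gone, new): names seen only before, and names seen only after.
    """
    before, after = set(before), set(after)
    return sorted(before - after), sorted(after - before)
```

Take one snapshot with `pod_names()` before deleting the Pod and another afterwards. If `replaced_pods(before, after)` returns one name in each list, the Deployment has swapped the deleted Pod for a new one.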

After deleting a Pod, Kubernetes should automatically spin up a new one. Run:Kubernetes replaces the deleted

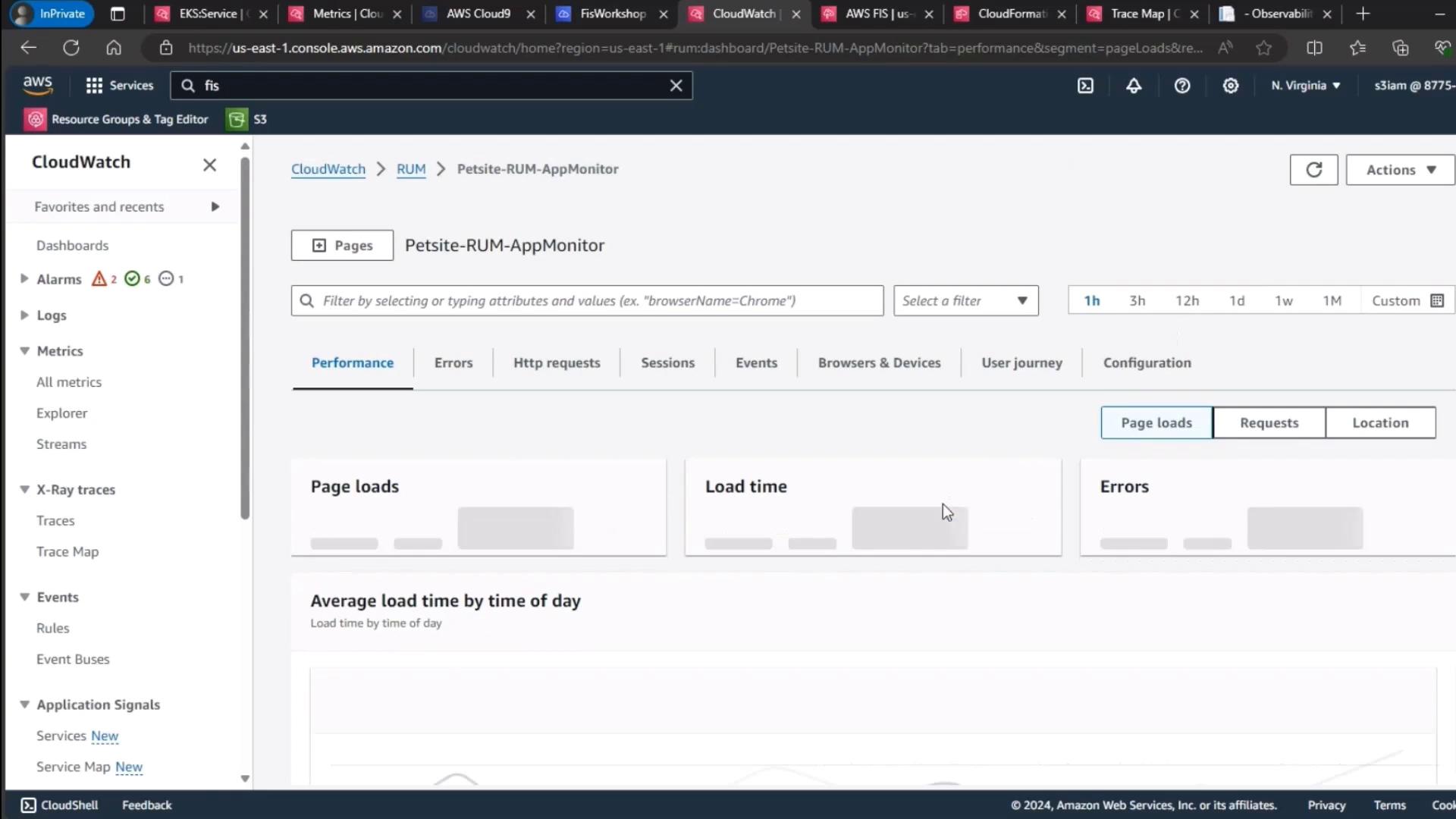

petsite-deployment Pod within seconds, demonstrating built-in self-healing.2. Validate Application Availability & RUM Metrics

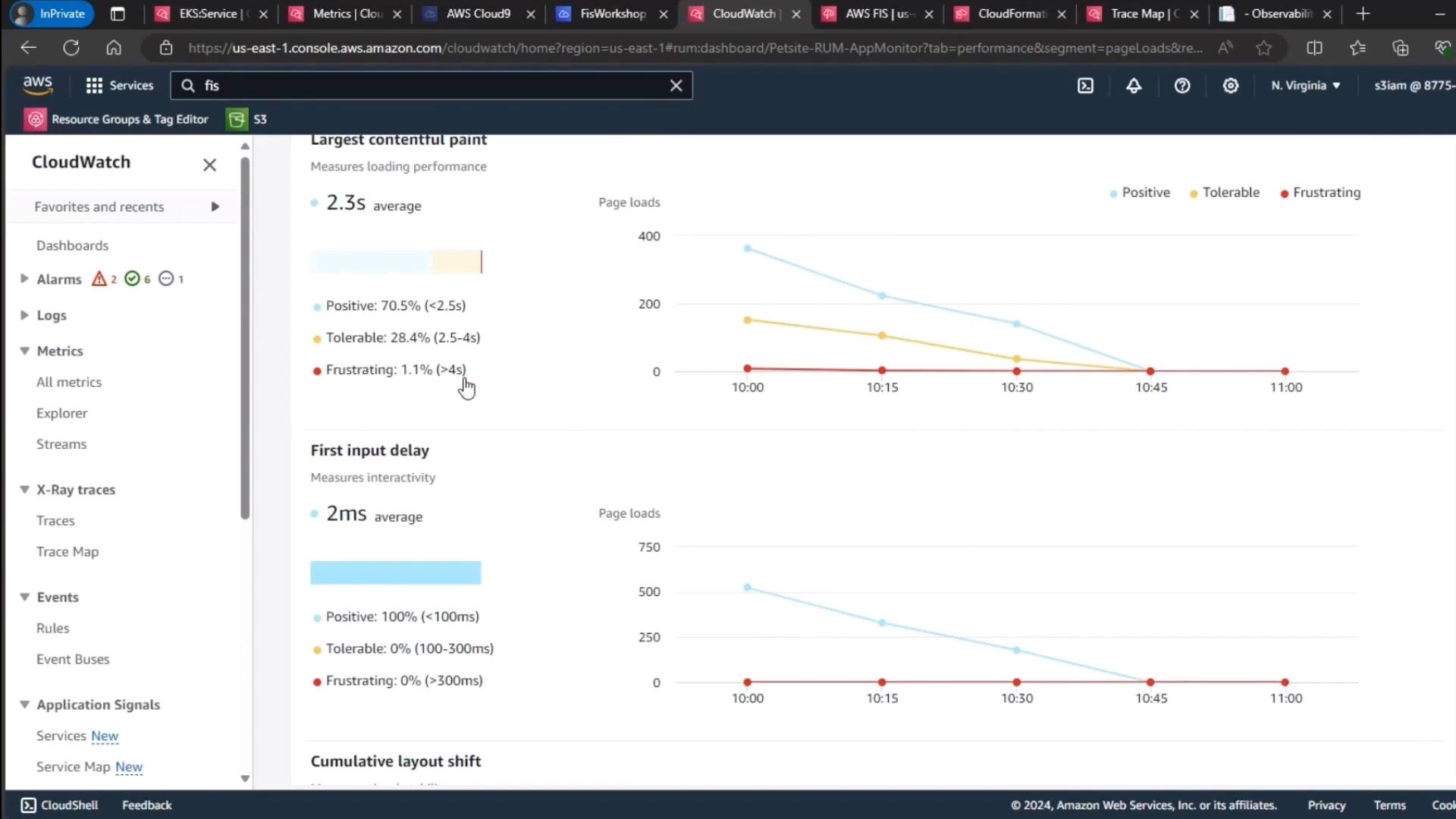

Next, confirm the application UI loads without errors and that RUM data in CloudWatch shows no spike in errors or user frustration signals.

| Metric | Use Case | Observation |
|---|---|---|
| Page Loads | Track user visits | Stable |
| Load Time | Measure responsiveness | Within SLA |
| Errors | Detect failures | No spikes detected |

| Performance Metric | Threshold (ms) | Level |
|---|---|---|
| Largest Contentful Paint | < 2500 | Positive |
| First Input Delay | < 100 | Tolerable |

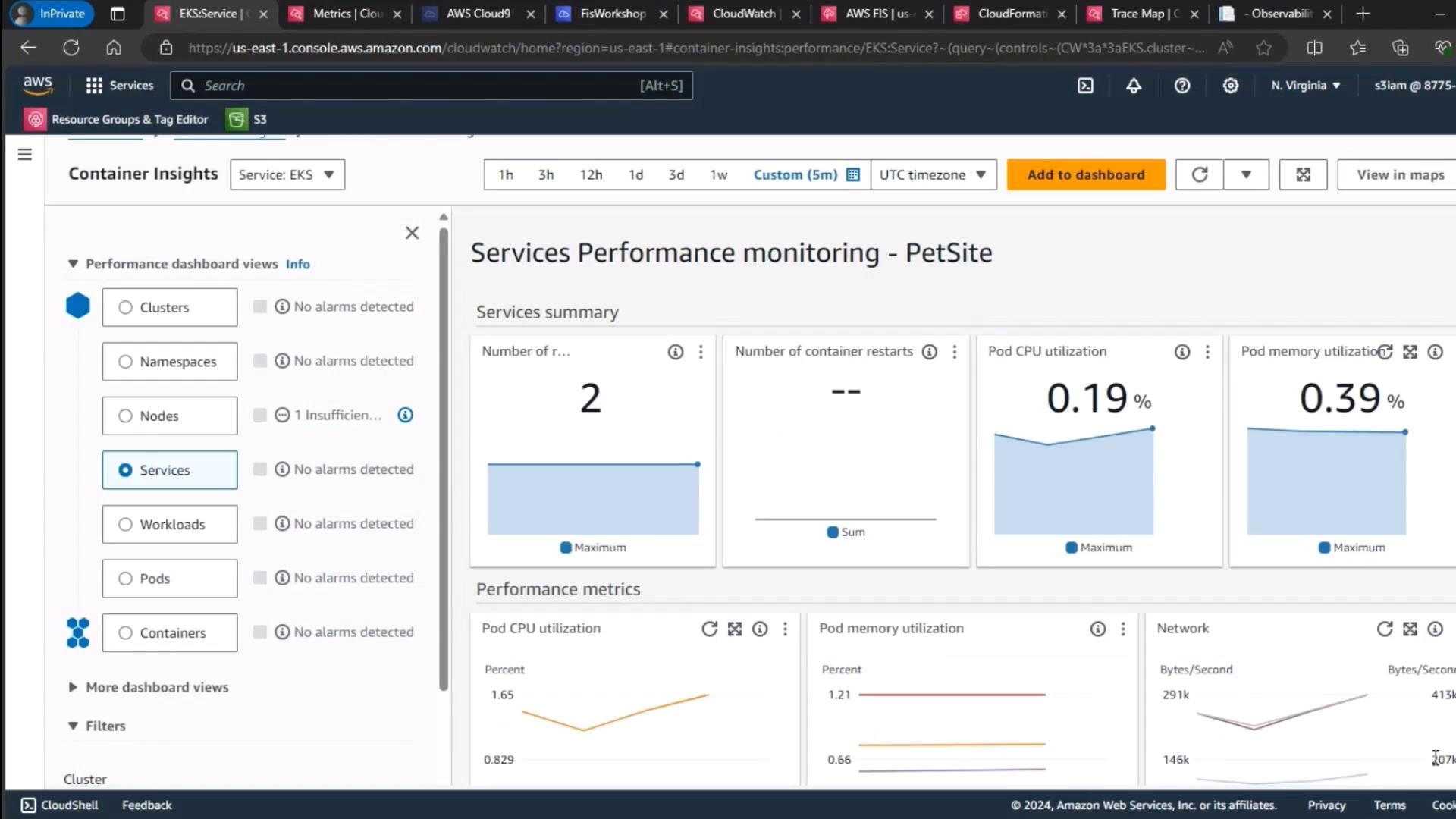
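The threshold check in the performance table can be expressed as a small helper. This is an illustrative sketch only: the thresholds are copied from the table, while the metric key names and the sample values in the usage note are hypothetical and do not come from CloudWatch RUM itself.

```python
# Thresholds in milliseconds, taken from the performance table above.
# The dictionary keys are illustrative names, not CloudWatch RUM identifiers.
THRESHOLDS_MS = {
    "largest_contentful_paint": 2500,
    "first_input_delay": 100,
}


def vitals_over_threshold(samples_ms):
    """Return the metrics whose measured value is not below its threshold.

    Metrics without a known threshold are ignored.
    """
    return {
        name: value
        for name, value in samples_ms.items()
        if name in THRESHOLDS_MS and value >= THRESHOLDS_MS[name]
    }
```

For example, `vitals_over_threshold({"largest_contentful_paint": 1800, "first_input_delay": 40})` returns an empty dictionary, which is the result you would expect if the Pod deletion had no effect on users.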

3. Monitor EKS Control Plane Metrics

Finally, review your EKS cluster’s control plane metrics to ensure resource utilization stayed consistent throughout the experiment.

| Metric | Description | Observation |
|---|---|---|
| CPU Utilization | Aggregate Pod CPU usage | Stable (~30%) |
| Memory Utilization | Aggregate Pod memory usage | Stable (~40%) |
| Running Pods | Total active Pods in default namespace | Consistent |

Stable control plane metrics confirm that the Pod deletion did not adversely affect cluster health.
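"Stable" can be made concrete by checking that every utilization sample stays close to the average over the experiment window. The sketch below assumes the samples have already been exported from CloudWatch as a list of percentages. The 5-point tolerance is an arbitrary assumption, not a value from this demo.

```python
from statistics import mean


def is_stable(samples, tolerance_pct=5.0):
    """Return True if every sample lies within tolerance_pct percentage
    points of the mean of all samples.

    tolerance_pct is an assumed default; pick a value that suits your workload.
    """
    if not samples:
        raise ValueError("no samples to evaluate")
    avg = mean(samples)
    return all(abs(s - avg) <= tolerance_pct for s in samples)
```

With hypothetical CPU samples such as `[29.5, 30.2, 31.0, 30.4]`, the function returns `True`. A series containing a jump, such as `[30, 31, 55, 30]`, returns `False` and would warrant a closer look at the time of the deletion.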

Conclusion

This demo highlights Kubernetes’ resilience on Amazon EKS:

- Self-healing: Deleted Pods are recreated almost instantly.
- Zero user impact: No UI errors or RUM spikes.
- Stable cluster health: Control plane metrics remain steady.