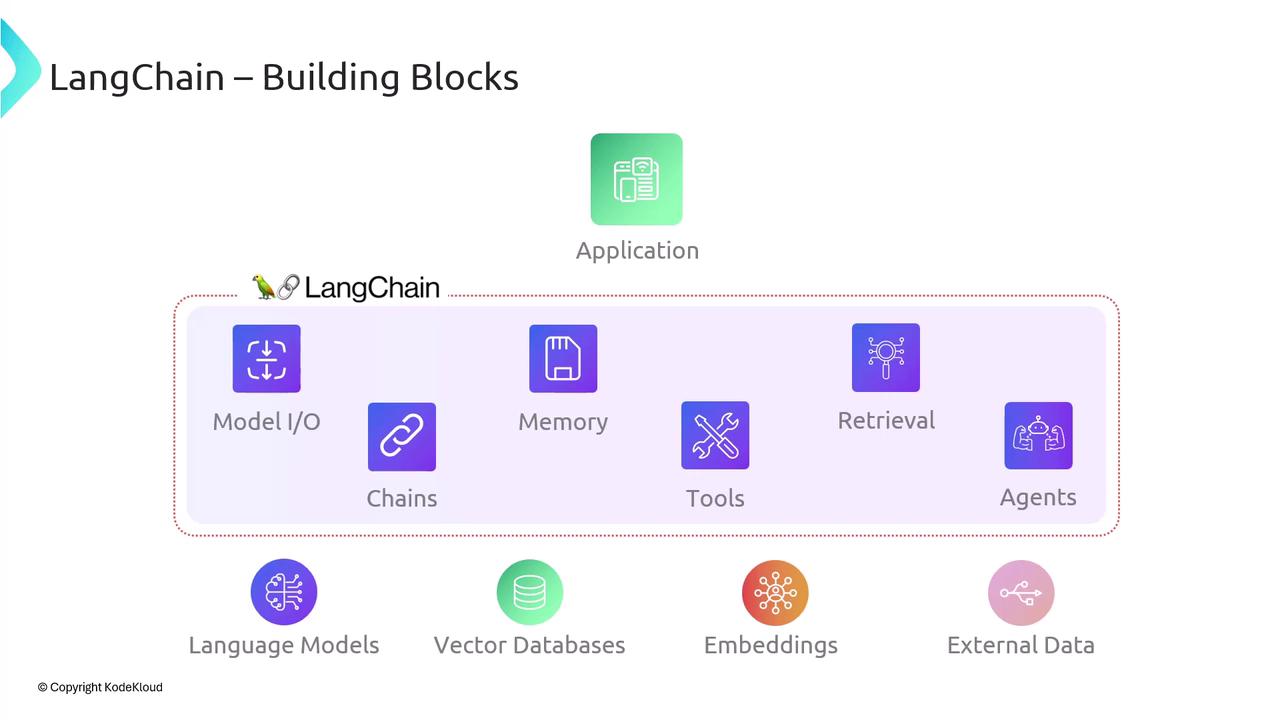

Previously, we provided an overview of LangChain. In this article, we’ll dive into LangChain’s architecture and explore its six core building blocks. Acting as middleware, LangChain connects your application to Large Language Models (LLMs), vector stores, embedding models, and other data sources—providing abstractions that simplify integration and accelerate development. Below is a high-level diagram illustrating these components:Documentation Index


| Component | Purpose | Examples |
|---|---|---|
| Model I/O | Manages prompt formatting, response parsing, and streaming | OpenAI, Anthropic, Hugging Face |
| Memory | Persists conversational context or state | Redis, in-memory cache |
| Retrieval | Retrieves relevant documents or embeddings | Pinecone, FAISS, Weaviate |
| Agents | Orchestrates decision-making across tools and APIs | Custom toolkits, action chains |
| Embeddings | Converts text into vectors for similarity search | OpenAI Embeddings, Cohere |
| External Data | Integrates external knowledge sources (databases, APIs) | SQL/NoSQL, RESTful APIs |
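To make the "middleware" idea concrete, here is a minimal, dependency-free sketch of how four of these components (Embeddings, Retrieval, Memory, and Model I/O) might be wired together. It does **not** use LangChain's actual classes. Every name below (`embed`, `InMemoryStore`, `ConversationMemory`, `format_prompt`, `fake_llm`, `parse_output`, `answer`) is hypothetical and chosen for illustration. It assumes a toy bag-of-words "embedding" in place of a real embedding model and a `fake_llm` function in place of a real model call. Agents and External Data are omitted to keep it short.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field


# --- Embeddings: toy bag-of-words vectors (hypothetical stand-in for a real embedding model) ---
def embed(text: str) -> Counter:
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# --- Retrieval: an in-memory "vector store" ranked by cosine similarity ---
@dataclass
class InMemoryStore:
    docs: list = field(default_factory=list)  # (text, vector) pairs

    def add(self, text: str) -> None:
        self.docs.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> list:
        q = embed(query)
        ranked = sorted(self.docs, key=lambda doc: cosine(q, doc[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


# --- Memory: a simple conversation buffer ---
@dataclass
class ConversationMemory:
    turns: list = field(default_factory=list)  # (role, text) pairs

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))

    def render(self) -> str:
        return "\n".join(f"{role}: {text}" for role, text in self.turns)


# --- Model I/O: prompt formatting and output parsing ---
TEMPLATE = "History:\n{history}\nContext:\n{context}\nQuestion: {question}\nAnswer:"


def format_prompt(history: str, context: str, question: str) -> str:
    return TEMPLATE.format(history=history, context=context, question=question)


def fake_llm(prompt: str) -> str:
    # Hypothetical stand-in for a model call: it simply echoes the retrieved context.
    context = prompt.rpartition("Context:\n")[2].partition("\nQuestion:")[0]
    return f"  ANSWER: {context}  "


def parse_output(raw: str) -> str:
    # Strip surrounding whitespace and the "ANSWER:" prefix the fake model adds.
    return raw.strip().removeprefix("ANSWER:").strip()


# --- Orchestration: the "middleware" that wires the components together ---
def answer(question: str, store: InMemoryStore, memory: ConversationMemory, llm=fake_llm) -> str:
    context = "\n".join(store.search(question, k=1))
    prompt = format_prompt(memory.render(), context, question)
    reply = parse_output(llm(prompt))
    memory.add("user", question)
    memory.add("assistant", reply)
    return reply


if __name__ == "__main__":
    store = InMemoryStore()
    store.add("LangChain connects applications to large language models")
    store.add("Redis can persist conversational state")
    memory = ConversationMemory()
    print(answer("What does LangChain connect to?", store, memory))
    # prints "LangChain connects applications to large language models"
```

Because `answer` only depends on the `llm` callable and the store/memory interfaces, any piece can be swapped (for example, a real model client in place of `fake_llm`, or Redis-backed history in place of `ConversationMemory`) without touching the others. That separation is the kind of decoupling the abstractions in the table are meant to provide.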