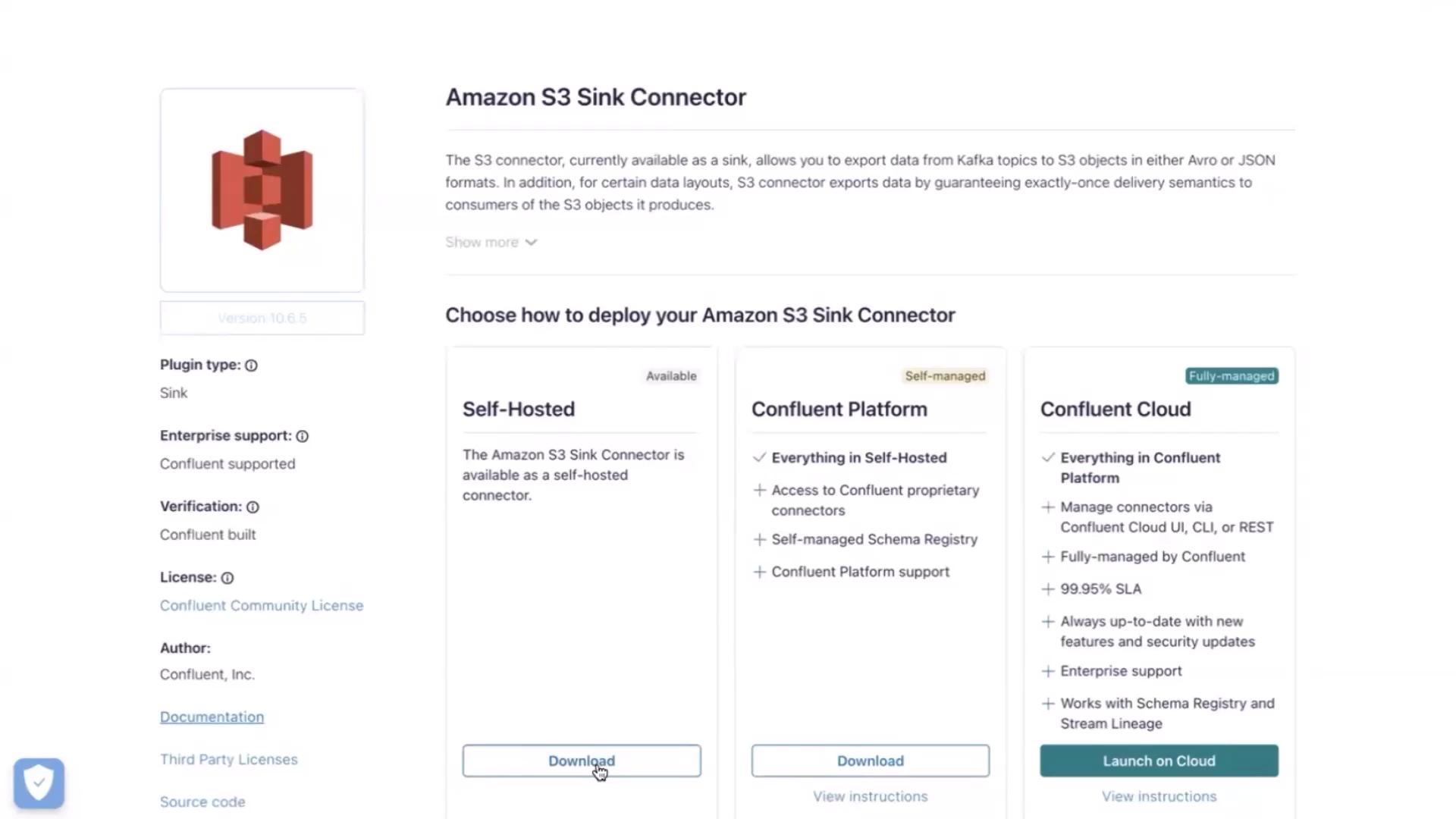

In this tutorial, we’ll configure Kafka Connect to stream events from a Kafka topic into Amazon S3 using Confluent’s S3 Sink Connector. By the end, you’ll have a working pipeline that writes data from Kafka into an S3 bucket in JSON format.

Prerequisites

- A running Kafka broker on your EC2 instance.

- AWS CLI installed and configured on the same instance.

- An IAM role attached to your EC2 instance with S3 access.

Keep your Kafka broker terminal open throughout this demo. Closing it will disconnect you from the cluster.

1. Open a new terminal on the EC2 instance

Switch to root (for example, with `sudo su`) and navigate to your home directory with `cd ~`.

2. Download the S3 Sink Connector plugin

The Amazon S3 sink connector is distributed as a ZIP on Confluent Hub. In this lab, a copy of that ZIP is hosted in an S3 bucket, so we download it from there with the AWS CLI.
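The download step might look like the sketch below. The bucket name, object key, and plugin directory are hypothetical placeholders (the lab's actual values are not shown here), and the function name `fetch_s3_sink_plugin` is only illustrative.

```shell
# Hypothetical placeholders: substitute the bucket, key, and directory
# that your lab actually uses before running anything.
PLUGIN_BUCKET="example-lab-bucket"
PLUGIN_ZIP="confluentinc-kafka-connect-s3.zip"
PLUGIN_DIR="$HOME/plugins"

fetch_s3_sink_plugin() {
  # Create the directory that will hold the unpacked connector.
  mkdir -p "$PLUGIN_DIR" || return 1
  # Copy the ZIP from S3; this relies on the IAM role attached to the instance.
  aws s3 cp "s3://${PLUGIN_BUCKET}/${PLUGIN_ZIP}" "${PLUGIN_DIR}/${PLUGIN_ZIP}" || return 1
  # Unpack next to the ZIP, overwriting files from any earlier attempt.
  unzip -o "${PLUGIN_DIR}/${PLUGIN_ZIP}" -d "$PLUGIN_DIR"
}
```

Calling `fetch_s3_sink_plugin` after setting the three variables performs the copy and the unpack. The directory in `PLUGIN_DIR` is the one the worker's plugin path would later need to include.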

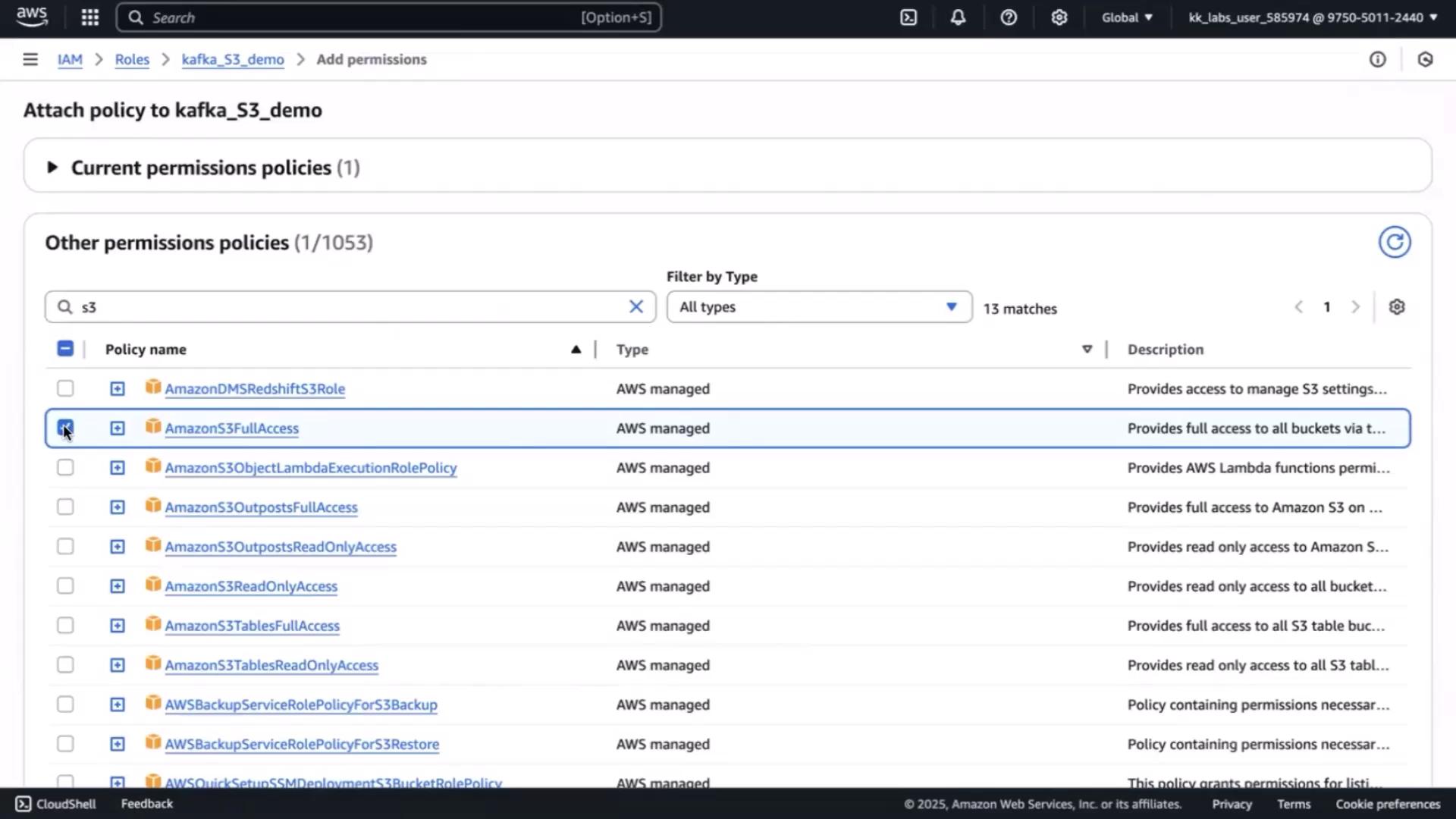

If you see a 403 Forbidden error, you need to attach S3 permissions to your EC2 IAM role.

- Open the IAM console, select the role attached to your EC2 instance (e.g., kafka_S3_demo), then click Add permissions.

- Attach AmazonS3FullAccess (or a least-privilege policy you define).
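If you prefer a least-privilege policy over AmazonS3FullAccess, the following sketch writes one to a local JSON file. The bucket name and file name are hypothetical, and the action list is an assumption about what a sink that only lists the bucket and writes objects would need, so check it against the connector's documentation before relying on it.

```shell
# Hypothetical names: replace with your own sink bucket and file name.
BUCKET_NAME="example-kafka-sink-bucket"
POLICY_FILE="s3-sink-policy.json"

# Write a policy scoped to one bucket. The action list is an assumed minimum.
cat > "$POLICY_FILE" <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": ["s3:ListBucket", "s3:GetBucketLocation"],
      "Resource": "arn:aws:s3:::${BUCKET_NAME}"
    },
    {
      "Effect": "Allow",
      "Action": ["s3:PutObject", "s3:GetObject", "s3:AbortMultipartUpload"],
      "Resource": "arn:aws:s3:::${BUCKET_NAME}/*"
    }
  ]
}
EOF
```

The contents of the generated file can be pasted into the JSON editor when creating a policy in the IAM console. To clear a 403 from the download step, the bucket that hosts the connector ZIP would need a similar `s3:GetObject` statement.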

3. Configure Kafka Connect

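Edit the standalone worker configuration (typically `config/connect-standalone.properties`) so that `bootstrap.servers` points at your broker and `plugin.path` includes the directory containing the connector plugin.

4. Create the S3 Sink connector properties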

Define the connector configuration in `config/s3-sink-connector.properties`:
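A minimal sketch of such a file, written with a shell heredoc, is shown below. The property keys are standard settings of the Confluent S3 sink connector, but every value other than the `cartevent` topic and the JSON format is an assumption for illustration: the connector name, bucket name, region, flush size, and converter choices should be adapted to your lab.

```shell
# Hypothetical bucket name: must match the bucket you create for this demo.
BUCKET_NAME="example-kafka-sink-bucket"

# In a Kafka installation the config directory already exists; -p makes this harmless.
mkdir -p config

cat > config/s3-sink-connector.properties <<EOF
# Connector identity and class
name=s3-sink-connector
connector.class=io.confluent.connect.s3.S3SinkConnector
tasks.max=1

# Source topic (from this tutorial) and assumed destination
topics=cartevent
s3.bucket.name=${BUCKET_NAME}
s3.region=us-east-1

# Write JSON objects to S3
storage.class=io.confluent.connect.s3.storage.S3Storage
format.class=io.confluent.connect.s3.format.json.JsonFormat

# Assumed: small flush size so objects appear quickly in a demo
flush.size=3

# Assumed: schemaless JSON values with string keys
key.converter=org.apache.kafka.connect.storage.StringConverter
value.converter=org.apache.kafka.connect.json.JsonConverter
value.converter.schemas.enable=false
EOF
```

With `flush.size=3`, the connector commits an object to S3 after every three records per topic partition, which makes results visible quickly in a demo. Larger values produce fewer, bigger objects.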

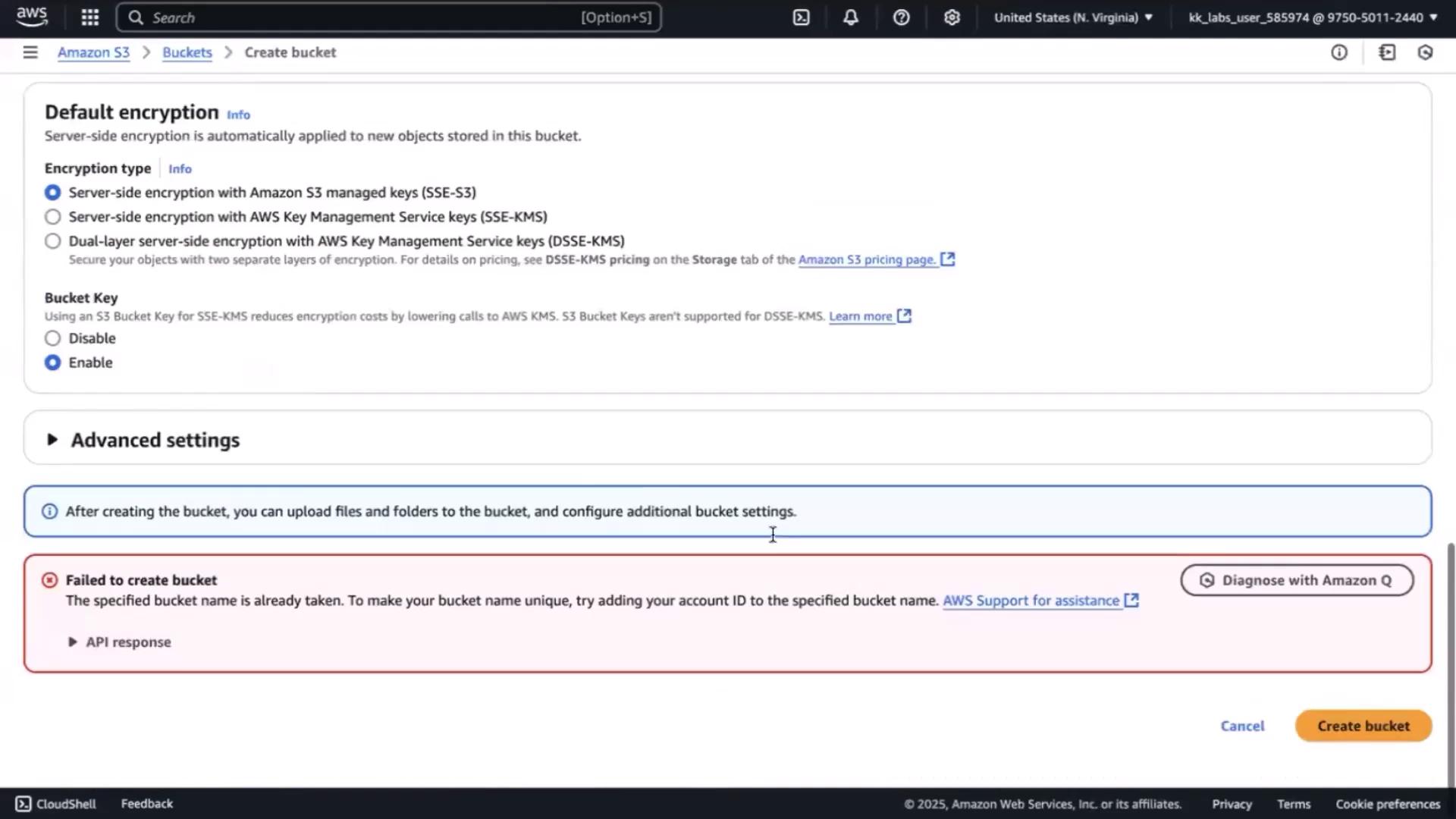

Create the S3 bucket

In the S3 console, click Create bucket and specify the same name you set in the connector properties. If it’s taken, append a suffix (e.g., -01), retry, and update the connector properties to match.
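The same bucket can also be created from the terminal with the AWS CLI. The sketch below tries the base name and then suffixed variants. The bucket name, region, and the function name `create_sink_bucket` are hypothetical, and the loop treats any failure as "name unavailable", which is a simplification (a permissions error would also trigger a retry).

```shell
# Hypothetical values: use the name from your connector properties.
BUCKET_NAME="example-kafka-sink-bucket"
AWS_REGION="us-east-1"

create_sink_bucket() {
  # Try the base name first, then the suffixed variants.
  for suffix in "" "-01" "-02"; do
    candidate="${BUCKET_NAME}${suffix}"
    if aws s3 mb "s3://${candidate}" --region "$AWS_REGION" >/dev/null; then
      # Print the name that was actually created.
      echo "$candidate"
      return 0
    fi
  done
  return 1
}
```

If the function prints a suffixed name, the bucket name in the connector properties has to be changed to that value before starting the worker.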

5. Start Kafka Connect

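Launch the standalone Connect worker, passing it the worker configuration and the connector properties file. Once it starts, the connector should read from the cartevent topic and begin streaming data into S3.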
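The launch and a quick check of the results can be sketched as follows. The worker file name is the one shipped with Apache Kafka, the bucket name is hypothetical, and the two function names are illustrative. The object prefix assumes the connector's default `topics.dir` value of `topics`.

```shell
# Assumed values: adjust to match your installation and connector properties.
WORKER_CONFIG="config/connect-standalone.properties"
CONNECTOR_CONFIG="config/s3-sink-connector.properties"
BUCKET_NAME="example-kafka-sink-bucket"
TOPIC="cartevent"

# Start the standalone worker in the foreground (run from the Kafka directory).
start_connect_worker() {
  bin/connect-standalone.sh "$WORKER_CONFIG" "$CONNECTOR_CONFIG"
}

# From a second terminal: list the objects the connector has written so far.
list_sink_objects() {
  aws s3 ls "s3://${BUCKET_NAME}/topics/${TOPIC}/" --recursive
}
```

`start_connect_worker` blocks while the worker runs, so `list_sink_objects` is meant for a separate terminal. Objects only appear after enough records have arrived on the topic to trigger a flush.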

Links and References

- Confluent Hub: S3 Sink Connector

- Kafka Connect Documentation

- AWS IAM User Guide

- AWS S3 Developer Guide