This lesson explains how to structure a Site Reliability Engineering (SRE) function, the trade-offs for common team models, role definitions, sizing guidelines, and the skills that make SREs effective. Use this as a practical guide when deciding how to staff reliability work and evolve SRE practices as your organization grows.Documentation Index

Fetch the complete documentation index at: https://notes.kodekloud.com/llms.txt

Use this file to discover all available pages before exploring further.

Quick overview

- What to choose: the right SRE model depends on organization size, product complexity, culture, and growth plans.

- Practical trade-offs: embedded SREs give product context; centralized teams deliver consistency; consulting scales knowledge; hybrid blends the benefits.

- People and skills: SRE teams combine technical depth (programming, systems, observability) with strong communication and incident leadership.

Common SRE team models (with trade-offs)

| Model | How it works | Key benefits | Main trade-offs |

|---|---|---|---|

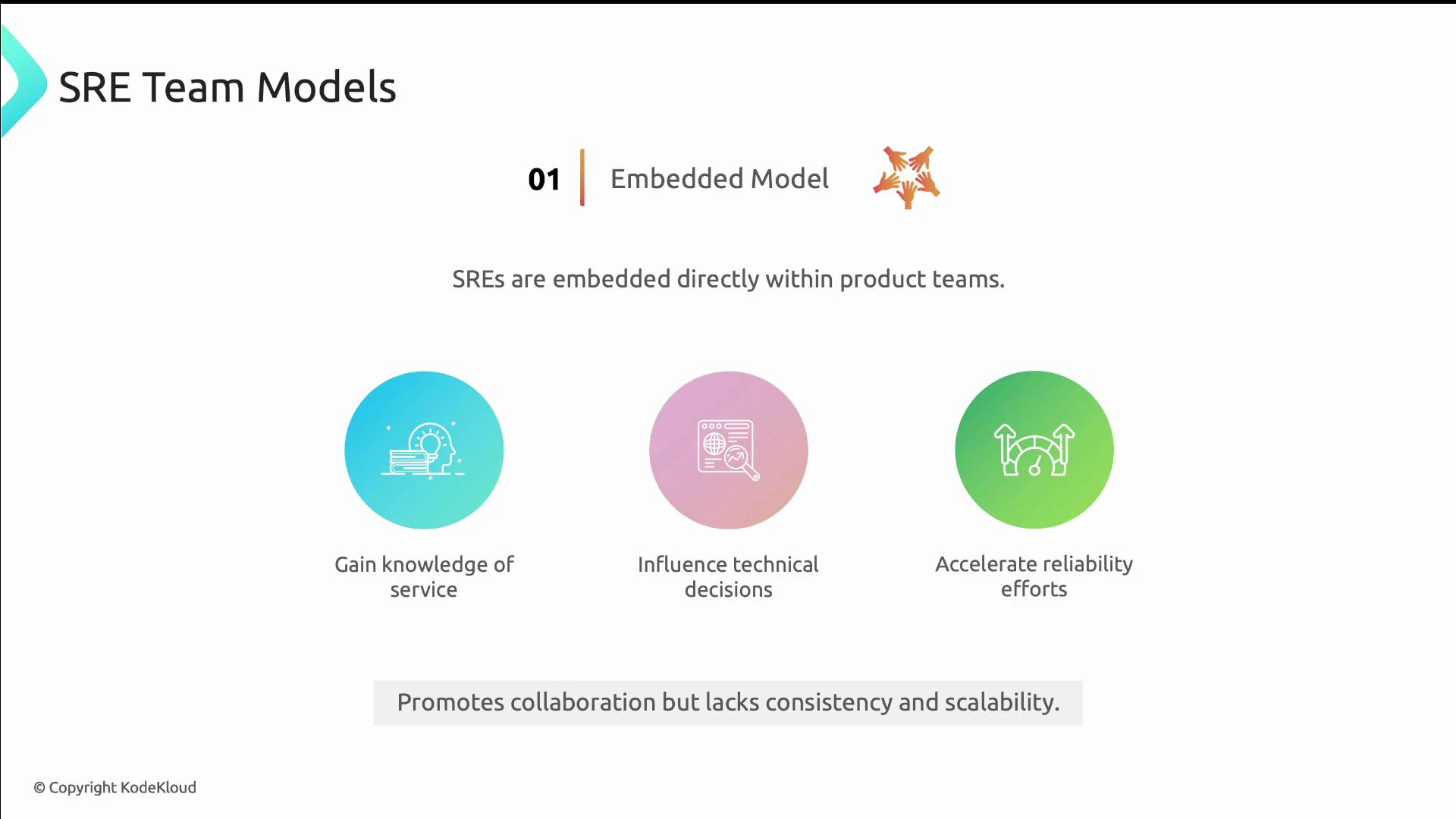

| Embedded | SREs sit inside product teams | Deep service knowledge, faster reliability improvements, strong ownership | Inconsistent practices across teams, harder to scale tooling & standards |

| Centralized | One SRE team supports multiple products | Consistent tooling, shared standards, efficient resource use | May lack product context, can become a throughput bottleneck |

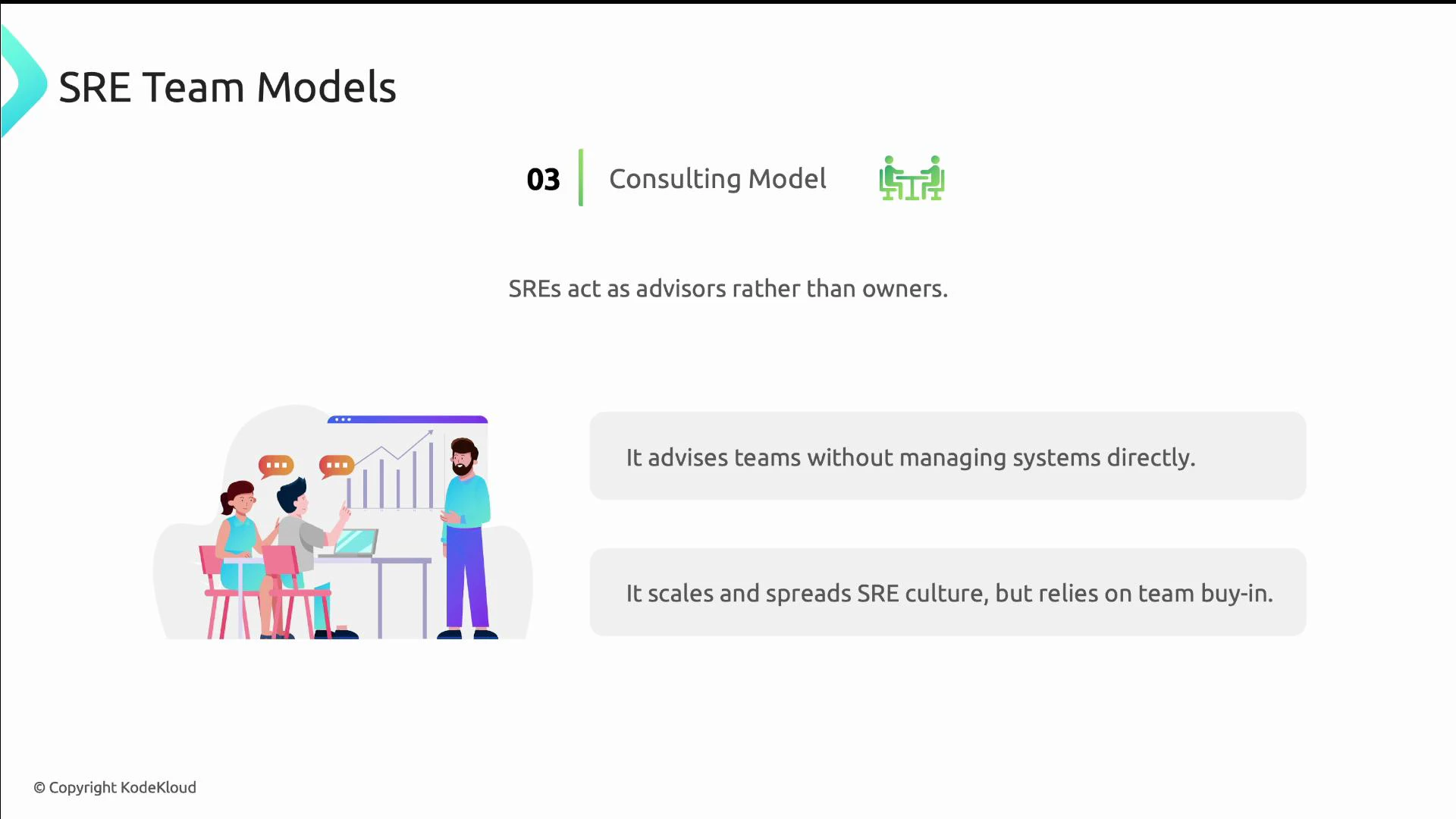

| Consulting | SREs act as advisors/coaches | Scales knowledge, lightweight ownership, accelerates capability adoption | Effectiveness depends on product teams adopting guidance |

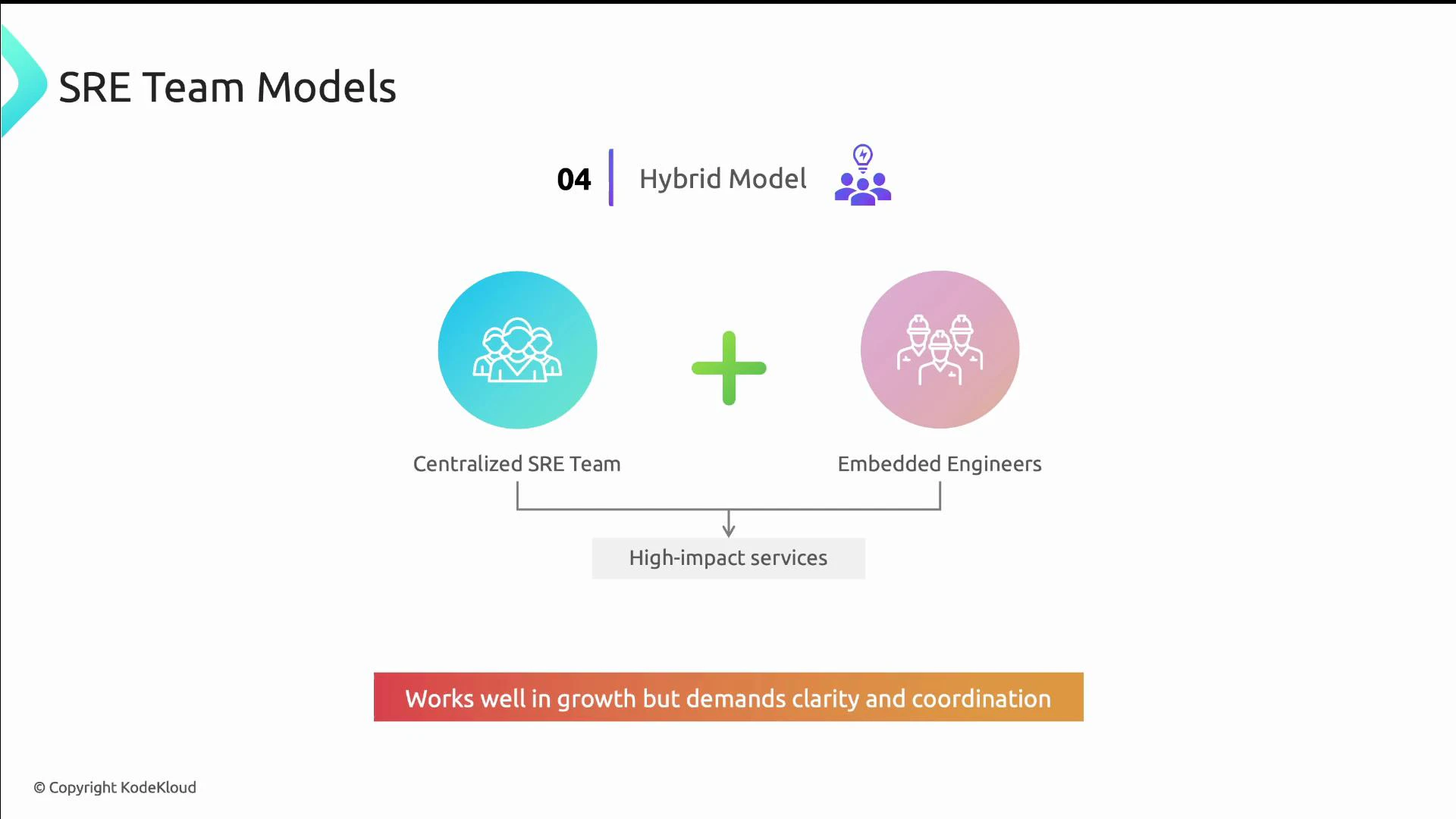

| Hybrid | Mix of central tooling + embedded SREs | Flexible; balances consistency and context | Requires clear role boundaries and strong coordination |

- SREs live in product teams, influence design choices, and deliver rapid reliability improvements.

- Best when product teams must own both feature and reliability work; promotes collaboration and faster feedback loops.

- A single SRE organization maintains cross-product platforms, shared monitoring, and common practices.

- Works well for enforcing standards and building platform-level automation.

- SREs operate as coaches or consultants, advising product teams, building blueprints, and delivering training.

- Scales SRE ideas without owning each service directly; requires adoption by product teams to succeed.

- Blends embedded SREs for high-impact services with a centralized team that builds shared tooling, libraries, and standards.

- Allows organizations to combine scale and context while evolving engagement models as teams mature.

There is no single “best” model. Choose based on your organization’s size, maturity, product complexity, and culture — and be prepared to evolve the model as you scale.

Real-world examples and how organizations apply the models

- Embedded: Netflix and Amazon follow “you-run-it” philosophies where product teams own reliability. Spotify also embeds reliability into product squads.

- Centralized: Google historically used dedicated SRE teams; LinkedIn and Microsoft have centralized functions to enforce consistency.

- Consulting: Dropbox uses SREs mainly as coaches; Google also places SREs in advisory roles where appropriate.

- Hybrid: Meta, Google (in many domains), IBM, and Uber combine central tooling with embedded engineers aligned to product needs.

Core SRE roles (typical team composition)

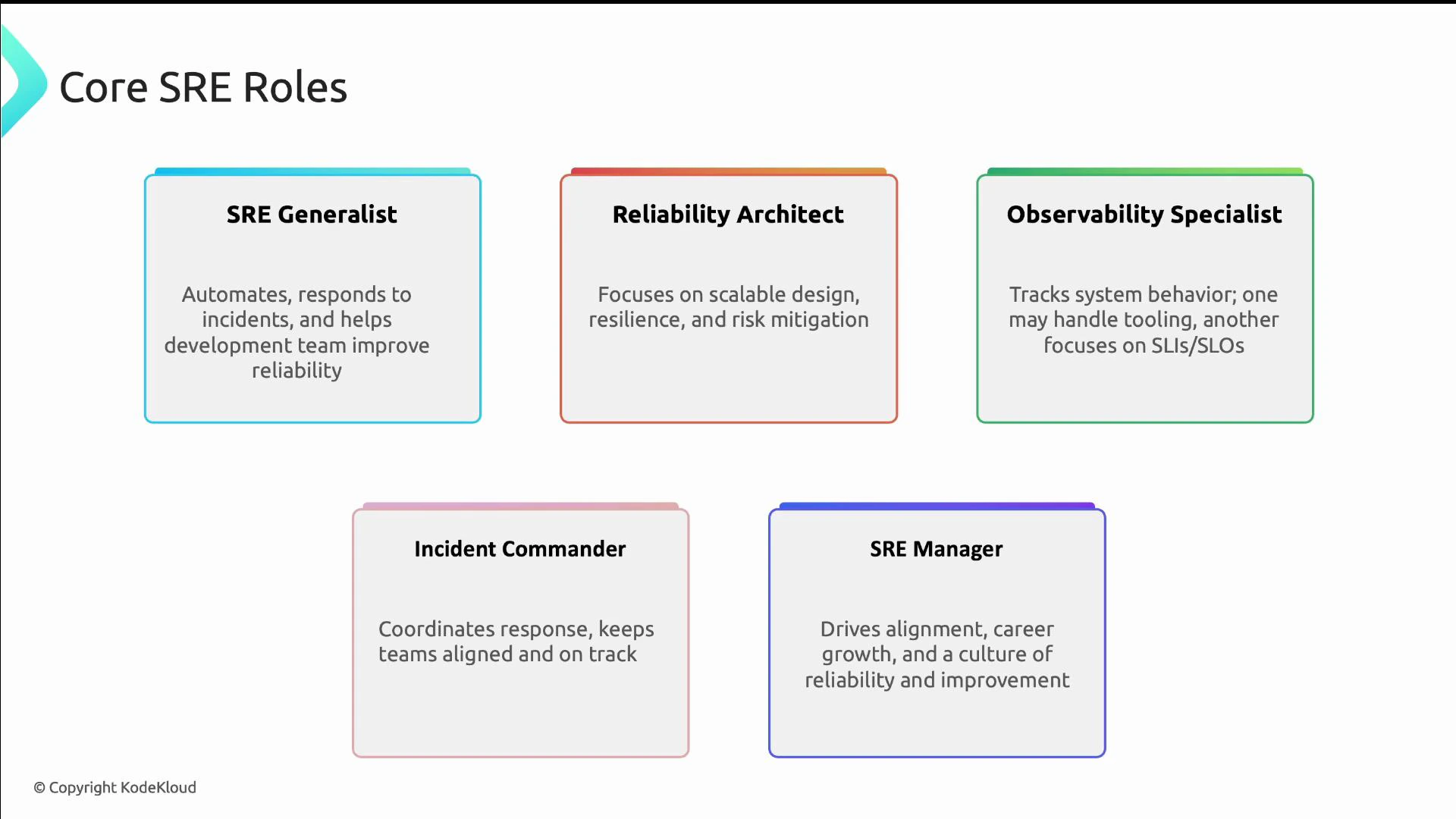

| Role | Primary focus | When to introduce |

|---|---|---|

| SRE Generalist | Toil reduction, automation, on-call, partnering with developers | From day one for small teams |

| Reliability Architect | System design, capacity planning, fault domains | Mid-stage onward for complex systems |

| Observability Specialist | Metrics, logging, tracing, SLIs/SLOs | When you need consistent instrumentation and platform observability |

| Incident Commander | Incident coordination, communications, postmortems | As on-call rotations scale beyond a few people |

| SRE Manager | Strategy, team development, engagement models | When multiple SREs or subteams need alignment |

SRE at every stage of growth

SRE practices are adaptable — the principles remain the same while the approach changes with scale.- Small / early-stage startups

- Team size: 0–5 SREs or developer-led reliability.

- Focus: generalists who automate, monitor, and own on-call duties. Keep tooling pragmatic.

- Mid-size organizations

- Team size: ~5–15 SREs; specializations appear (observability, incident response, platform).

- Focus: formalize SLOs, incident playbooks, error budgets, and internal standards.

- Large enterprises

- Team size: 15+ SREs organized into domain-aligned subteams (e.g., storage, networking, data pipelines).

- Focus: invest in platform services, training programs, and defined engagement/onboarding processes for product teams.

Team-sizing and engagement guidance

- Early-stage: hire generalists who can iterate quickly and establish basic on-call, monitoring, and automation.

- Mid-stage: hire role owners for observability and incident response; codify SLOs and error budget policies.

- Large scale: create clear service-level engagement models so product teams know how to request SRE help and what to expect.

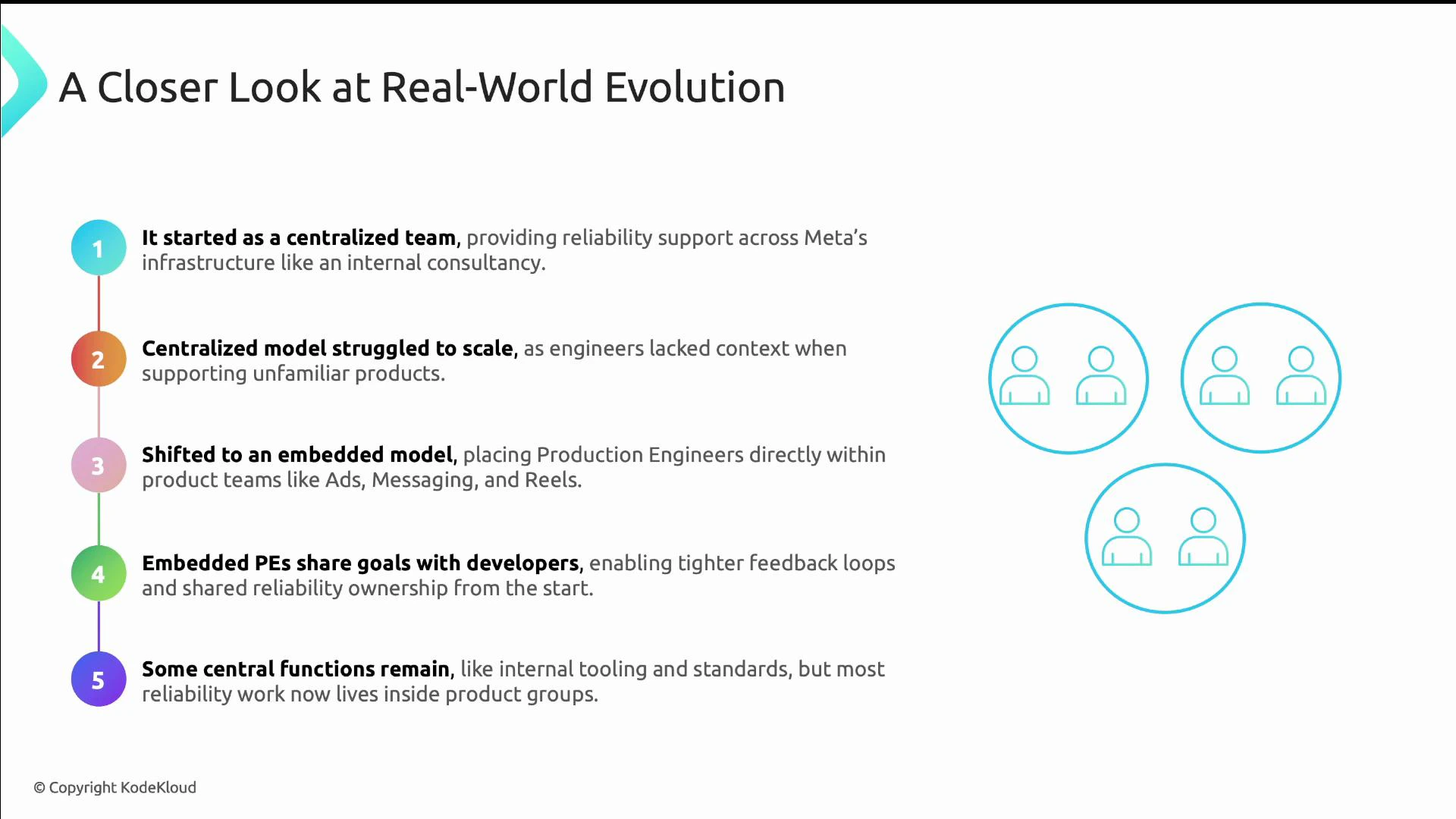

Case study: Meta’s Production Engineering evolution

Meta’s Production Engineering (PE) demonstrates an SRE evolution:- Centralized beginnings — PE provided incident response and scaling help when product teams were small.

- Shift to embedded — as Meta grew, PE embedded engineers in product teams for design-time reliability and better feedback loops.

- Hybrid outcome — central PE functions (tooling and standards) remain while most reliability work is handled within product teams.

Skills and hiring signals

Technical skills- Proficiency in at least one primary language (Go, Python, Java).

- Systems expertise: Linux internals, networking, containers.

- CI/CD and infrastructure-as-code experience (Terraform, GitOps).

- Observability tooling and instrumentation (metrics, tracing, logging).

- Troubleshooting distributed systems at scale.

- Calm and clear communication during incidents.

- Curiosity and continuous learning.

- Empathy for users and product teams.

- Root-cause thinking and a focus on long-term fixes.

- Cloud-native observability (OpenTelemetry, Prometheus, Grafana).

- Kubernetes troubleshooting and automation.

- SLO design and error budget management.

- Incident leadership and cross-team communication.

- Collaboration for shared ownership and platform engagement.

Pro tip

- Go deep before you go broad: build deep expertise in one area (observability, automation, or incident response) first. Depth builds intuition and technical muscle you can apply across the SRE discipline.

Useful links and references

- Site Reliability Engineering: How Google Runs Production Systems (Book)

- Kubernetes documentation — Concepts

- OpenTelemetry

- Prometheus and Grafana

- Google SRE practices and SLO guidance

- LinkedIn Workforce Insights — industry skills signals