In this lesson we examine the Cilium architecture end-to-end: the per-node agent, the in-kernel datapath powered by eBPF, cluster-wide controller responsibilities, L7 proxying with Envoy, observability with Hubble, kube-proxy integration/replacement, and service-mesh deployment models (sidecar vs sidecarless). Each section explains the component’s role, how it integrates with the rest of the system, and the traffic flow implications.

Key components covered:

- Cilium agent (per-node daemon)

- eBPF programs (in-kernel datapath)

- Cilium operator (cluster-wide controller)

- Envoy proxy (L7 proxy)

- Hubble (observability)

- kube-proxy (and Cilium’s kube-proxy replacement mode)

- Service mesh models: sidecar vs sidecarless

| Component | Primary role | When it matters |
|---|---|---|
| Cilium agent | Per-node orchestration, loads eBPF, enforces policies | Always on each node |
| eBPF datapath | High-performance L3/L4 networking, in-kernel LB/policy | East‑west traffic, services |
| Cilium operator | Cluster-wide state (IPs, services, identities, CRDs) | Multi-node coordination, IPAM |
| Envoy | L7 termination, routing, mTLS, deep observability | HTTP/gRPC, advanced L7 policies |
| Hubble | Flow capture, metrics, CLI/UI integration | Troubleshooting, monitoring |
| kube-proxy | Service programming (iptables/ipvs) | Only if Cilium runs alongside kube-proxy |

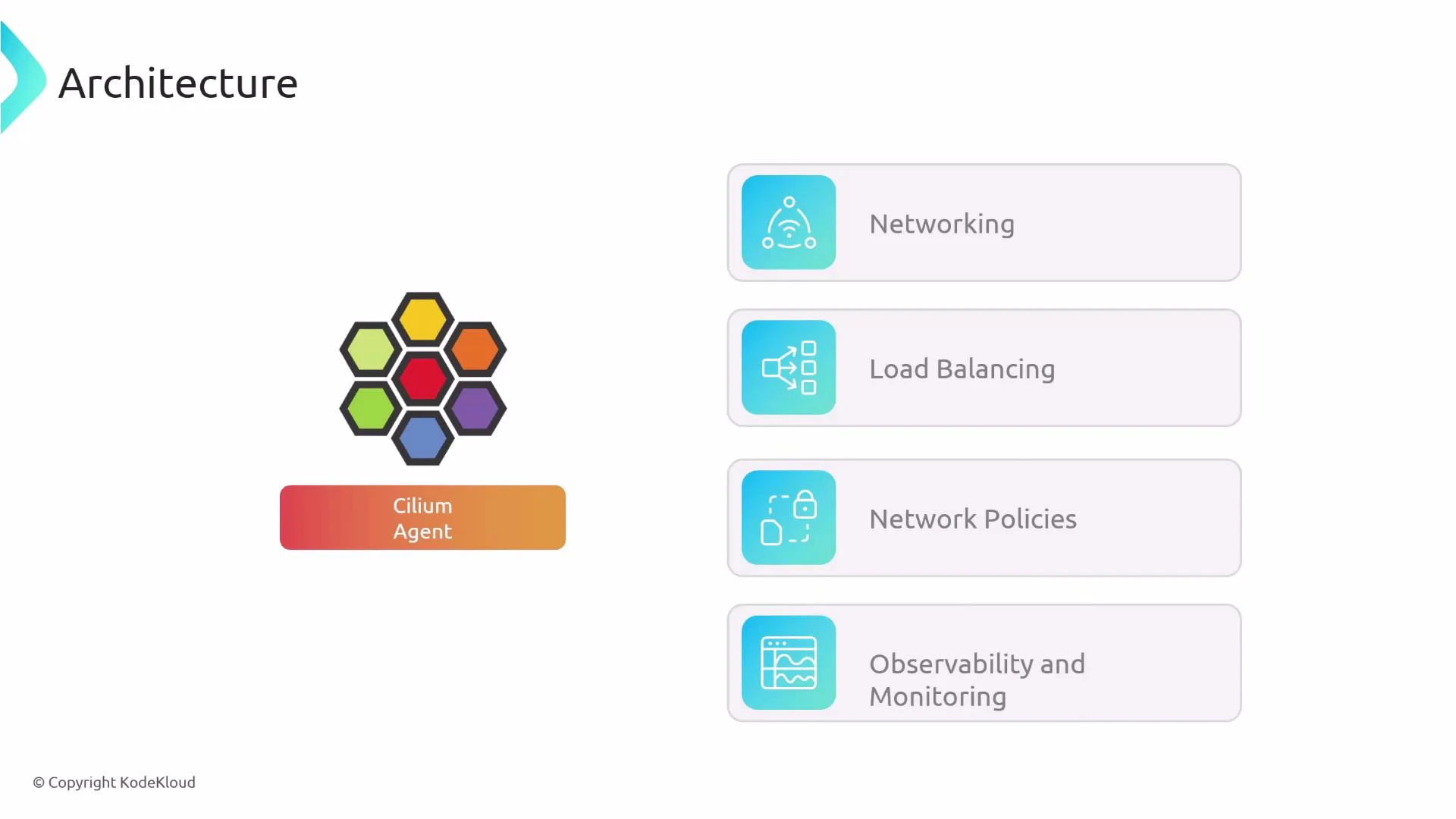

Cilium agent

The Cilium agent runs on every node (typically as a DaemonSet). It is responsible for translating Kubernetes cluster state into datapath configuration and ensuring the kernel runs the correct programs.

Primary responsibilities:

- Watch Kubernetes API events (Pods, Services, Endpoints, CRDs) and translate cluster state into datapath configuration.

- Compile, load, and attach eBPF programs to the appropriate kernel hooks and network interfaces.

- Enforce network policies and implement service/load-balancing rules using eBPF.

- Route traffic to Envoy when L7 processing is required.

- Host a local Hubble server for per-node flow capture and metrics.
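
The watch-and-translate loop described above can be sketched as a toy model. This is not Cilium's code: `ToyAgent`, `handle_event`, and the event shape are hypothetical names chosen for illustration, and a plain dictionary stands in for the state that a real agent would push into kernel eBPF maps.

```python
from dataclasses import dataclass, field


@dataclass
class ToyAgent:
    """Toy stand-in for a per-node agent (hypothetical, not Cilium's API)."""

    # Desired datapath state: service name -> sorted list of backend IPs.
    service_map: dict = field(default_factory=dict)

    def handle_event(self, kind: str, service: str, backend_ip: str) -> None:
        """Apply one watch event ("ADDED" or "DELETED") to the local state."""
        backends = set(self.service_map.get(service, []))
        if kind == "ADDED":
            backends.add(backend_ip)
        elif kind == "DELETED":
            backends.discard(backend_ip)
        else:
            raise ValueError(f"unknown event kind: {kind}")
        if backends:
            self.service_map[service] = sorted(backends)
        else:
            # No backends left, so the service entry is removed entirely.
            self.service_map.pop(service, None)


agent = ToyAgent()
agent.handle_event("ADDED", "web", "10.0.1.5")
agent.handle_event("ADDED", "web", "10.0.2.7")
agent.handle_event("DELETED", "web", "10.0.1.5")
print(agent.service_map)  # {'web': ['10.0.2.7']}
```

The point of the sketch is the direction of data flow: API events come in, and a node-local table that the datapath consults is kept in sync.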

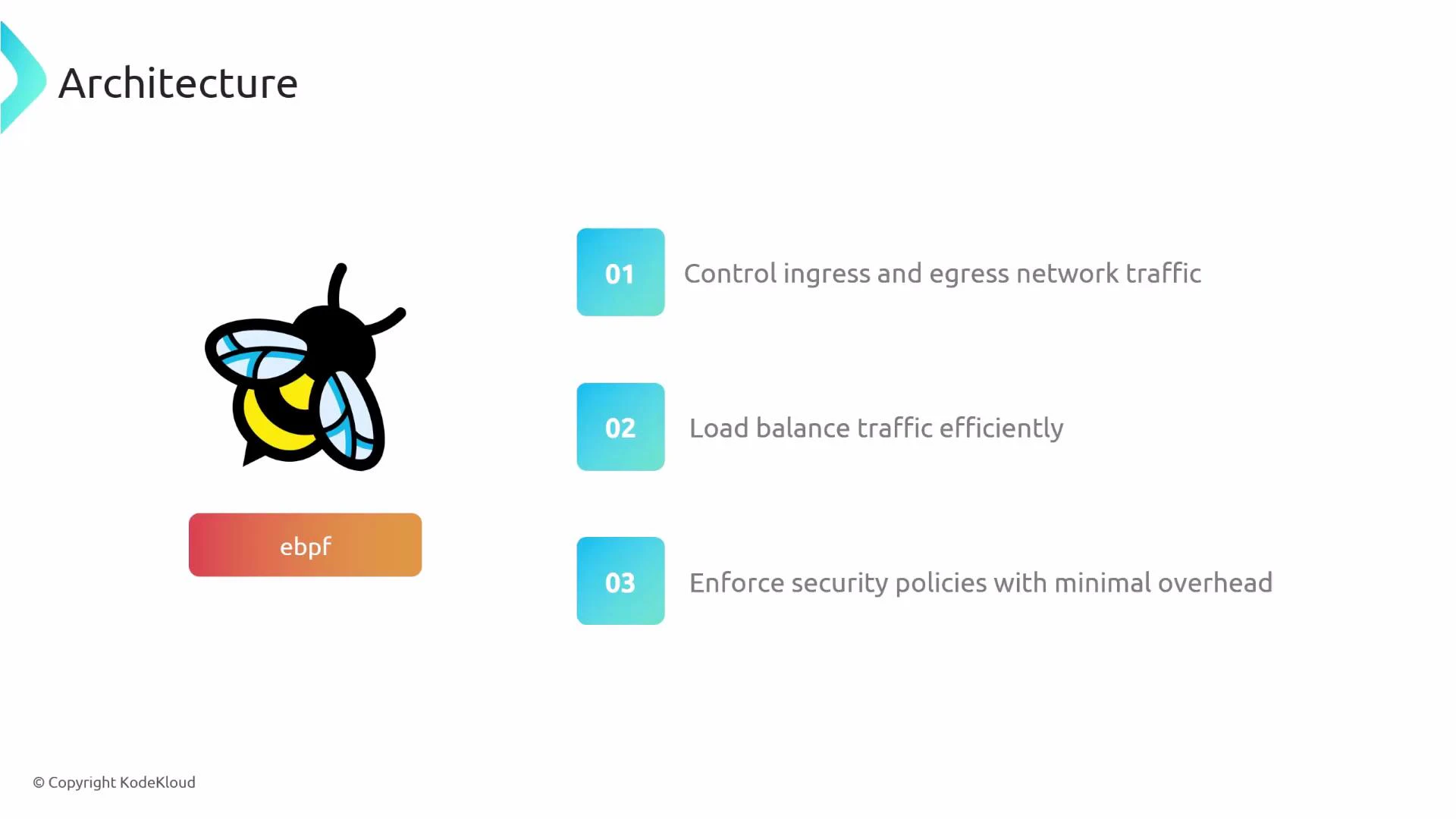

eBPF datapath

Cilium leverages eBPF to implement the datapath inside the Linux kernel. eBPF programs provide a fast, flexible way to control networking without costly user/kernel context switches.

What eBPF provides for Cilium:

- L3/L4 ingress and egress packet processing (IP/TCP/UDP).

- In-kernel, efficient load balancing for Services.

- Low-overhead enforcement of network and security policies.
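
To make the policy-enforcement idea concrete, here is a minimal sketch of an allow-list lookup with a default-deny fallback. It assumes a simplified key of (source identity, destination port, protocol); the identity numbers and the `verdict` helper are hypothetical, and the real in-kernel maps are more involved than a Python set.

```python
ALLOW, DROP = "ALLOW", "DROP"

# Hypothetical allow-list for one endpoint:
# (source security identity, destination port, protocol).
policy_map = {
    (1001, 80, "TCP"),
    (1002, 53, "UDP"),
}


def verdict(src_identity: int, dst_port: int, proto: str) -> str:
    """Return ALLOW only if an exact entry exists; otherwise DROP."""
    return ALLOW if (src_identity, dst_port, proto) in policy_map else DROP


print(verdict(1001, 80, "TCP"))   # ALLOW
print(verdict(1001, 443, "TCP"))  # DROP
```

A constant-time lookup per packet is what keeps this style of enforcement cheap compared with walking a long rule chain.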

Cilium operator

The Cilium operator handles cluster-scoped duties that don’t belong to any single node agent. It keeps cluster-wide state synchronized and performs tasks that require global knowledge.

Operator responsibilities:

- Synchronize node IPs and lifecycle between Kubernetes and Cilium.

- Coordinate cluster-wide service information so nodes program consistent service maps.

- Manage IP allocation for services when using Cilium’s eBPF-based load balancer.

- Allocate and synchronize security identities (used by network policies).

- Manage CIDRs, CRDs, and IPAM tasks for cluster-scoped resources.

- Assist Cluster Mesh / multi-cluster configuration and CRD lifecycle.

- Aggregate or coordinate Hubble relays and observability across the cluster.
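
The notion of a security identity can be illustrated with a toy allocator: workloads with the same set of labels share one numeric identity, so policy can be written against identities rather than individual pod IPs. The class name, the starting ID, and the label values below are assumptions made for illustration and do not reflect Cilium's actual allocation scheme.

```python
class IdentityAllocator:
    """Toy allocator: one numeric identity per distinct label set."""

    def __init__(self, first_id: int = 1000):
        self._next_id = first_id
        self._by_labels = {}

    def allocate(self, labels: dict) -> int:
        # frozenset makes the label set hashable and order-independent.
        key = frozenset(labels.items())
        if key not in self._by_labels:
            self._by_labels[key] = self._next_id
            self._next_id += 1
        return self._by_labels[key]


alloc = IdentityAllocator()
web_a = alloc.allocate({"app": "web", "env": "prod"})
web_b = alloc.allocate({"env": "prod", "app": "web"})  # same labels, other order
db = alloc.allocate({"app": "db", "env": "prod"})
print(web_a, web_b, db)  # 1000 1000 1001
```

Because the identity depends only on labels, scaling a workload from 2 to 200 pods adds no new identities in this model.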

Envoy proxy (L7)

Envoy is used by Cilium to provide Layer‑7 features that cannot be implemented in-kernel. Typical L7 responsibilities include HTTP/gRPC routing, rate limiting, TLS/mTLS termination, and deep request inspection for observability and policy.

Deployment options:

- Embedded in the Cilium agent (in‑agent Envoy).

- Deployed as a single Envoy pod per node (node-level proxy).

Kubernetes CRDs

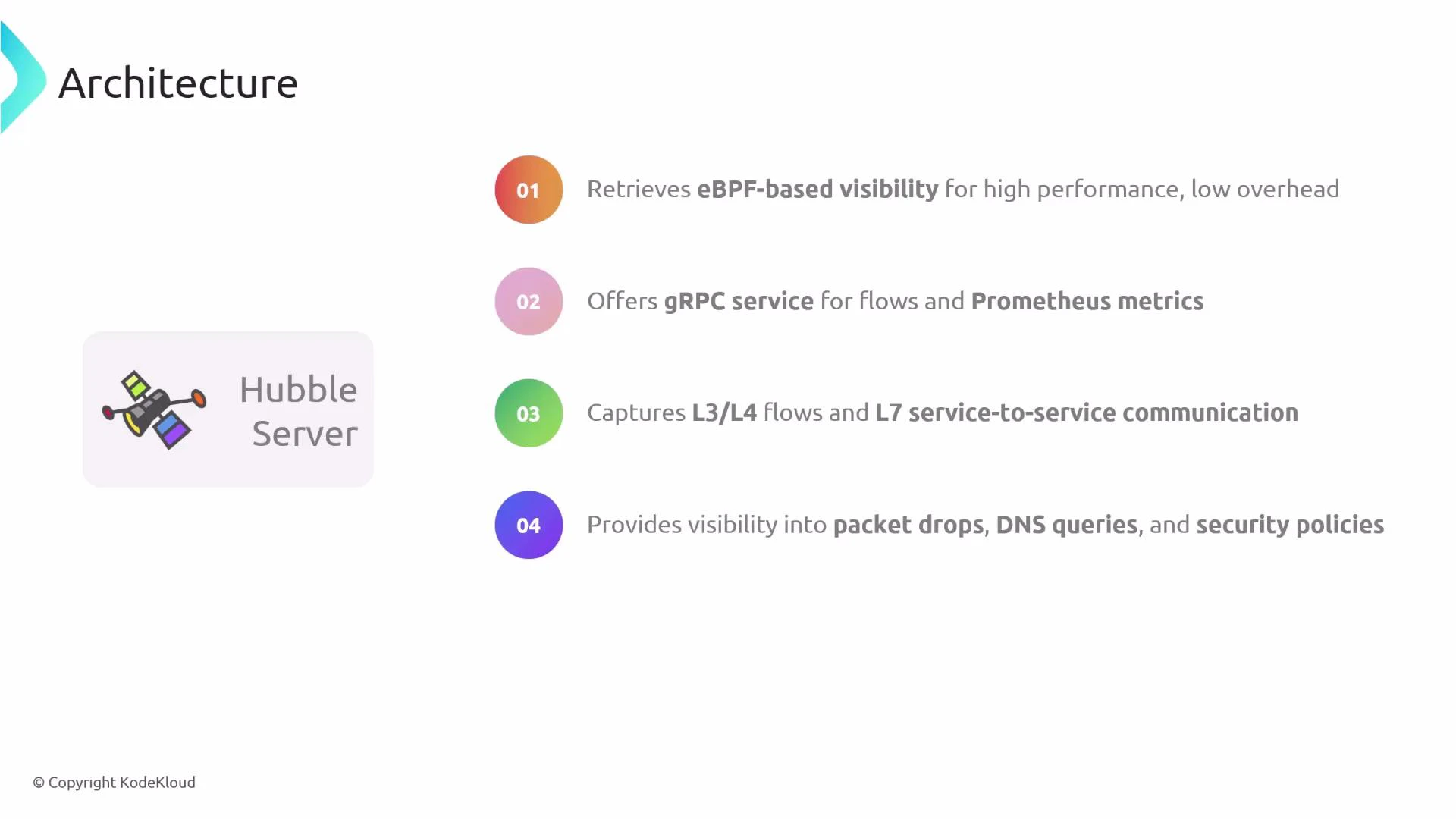

Cilium installs several Kubernetes CRDs to represent policies, observability settings, and other Cilium-specific configuration. These CRDs provide a Kubernetes-native interface to express Cilium network policies, service mesh configuration, and observability controls across the cluster.

Hubble: per-node server, relay, CLI, UI

Hubble provides high-performance observability built on eBPF flow capture. Each Cilium agent includes a Hubble server for local flow telemetry.

Hubble components and capabilities:

- Per-node Hubble server that streams eBPF-based flow data with low overhead.

- gRPC API and Prometheus metrics endpoints for integration and monitoring.

- Capture of L3/L4 flows and optional L7 telemetry (when Envoy provides L7 context).

- Visibility into packet drops, DNS queries, policy decisions, and more for debugging.
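
As a rough illustration of how flow records support debugging, the sketch below counts dropped flows per destination. The record fields (`source`, `destination`, `port`, `verdict`) and the sample data are invented for this example; they are a simplification and not the actual Hubble flow schema.

```python
from collections import Counter

# Hypothetical flow records, loosely modeled on what a flow log might contain.
flows = [
    {"source": "frontend", "destination": "backend", "port": 8080, "verdict": "FORWARDED"},
    {"source": "frontend", "destination": "db", "port": 5432, "verdict": "DROPPED"},
    {"source": "batch", "destination": "db", "port": 5432, "verdict": "DROPPED"},
    {"source": "backend", "destination": "db", "port": 5432, "verdict": "FORWARDED"},
]


def dropped_by_destination(records):
    """Count dropped flows per destination workload."""
    return Counter(r["destination"] for r in records if r["verdict"] == "DROPPED")


print(dropped_by_destination(flows))  # Counter({'db': 2})
```

Grouping drops by destination like this is often a quick way to spot a policy that is stricter than intended.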

kube-proxy and Cilium’s kube-proxy replacement

Kubernetes uses kube-proxy on each node to program iptables/ipvs rules for Services. Cilium can either run alongside kube-proxy or replace it entirely by implementing service handling in eBPF.

Benefits of kube-proxy replacement mode:

- Service load-balancing and routing are programmed directly in the kernel via eBPF.

- Improved performance and scalability compared to iptables/ipvs.

- Reduced complexity by removing the need for kube-proxy in the datapath.

Cilium can run in kube-proxy replacement mode: instead of running kube-proxy, Cilium programs the service datapath using eBPF for more efficient Service traffic handling.
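
Conceptually, service handling comes down to a lookup from a Service address to a backend. The sketch below models that with a dictionary and a hash of the flow key. The addresses are made up, and hashing with CRC32 is an assumption chosen to keep the example deterministic; it is not a description of the backend-selection algorithm Cilium uses.

```python
import zlib

# Hypothetical service table: (virtual IP, port) -> backend addresses.
services = {
    ("10.96.0.10", 80): ["10.0.1.5:8080", "10.0.2.7:8080", "10.0.3.9:8080"],
}


def pick_backend(vip: str, port: int, client_ip: str, client_port: int):
    """Pick a backend for a flow; the same flow always maps to the same backend."""
    backends = services.get((vip, port))
    if not backends:
        return None  # Not a known Service address.
    flow_key = f"{client_ip}:{client_port}->{vip}:{port}".encode()
    return backends[zlib.crc32(flow_key) % len(backends)]


choice = pick_backend("10.96.0.10", 80, "10.0.4.2", 41000)
print(choice in services[("10.96.0.10", 80)])  # True
```

A single keyed lookup replaces the sequential rule evaluation that iptables-based service handling relies on, which is where the scalability benefit comes from.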

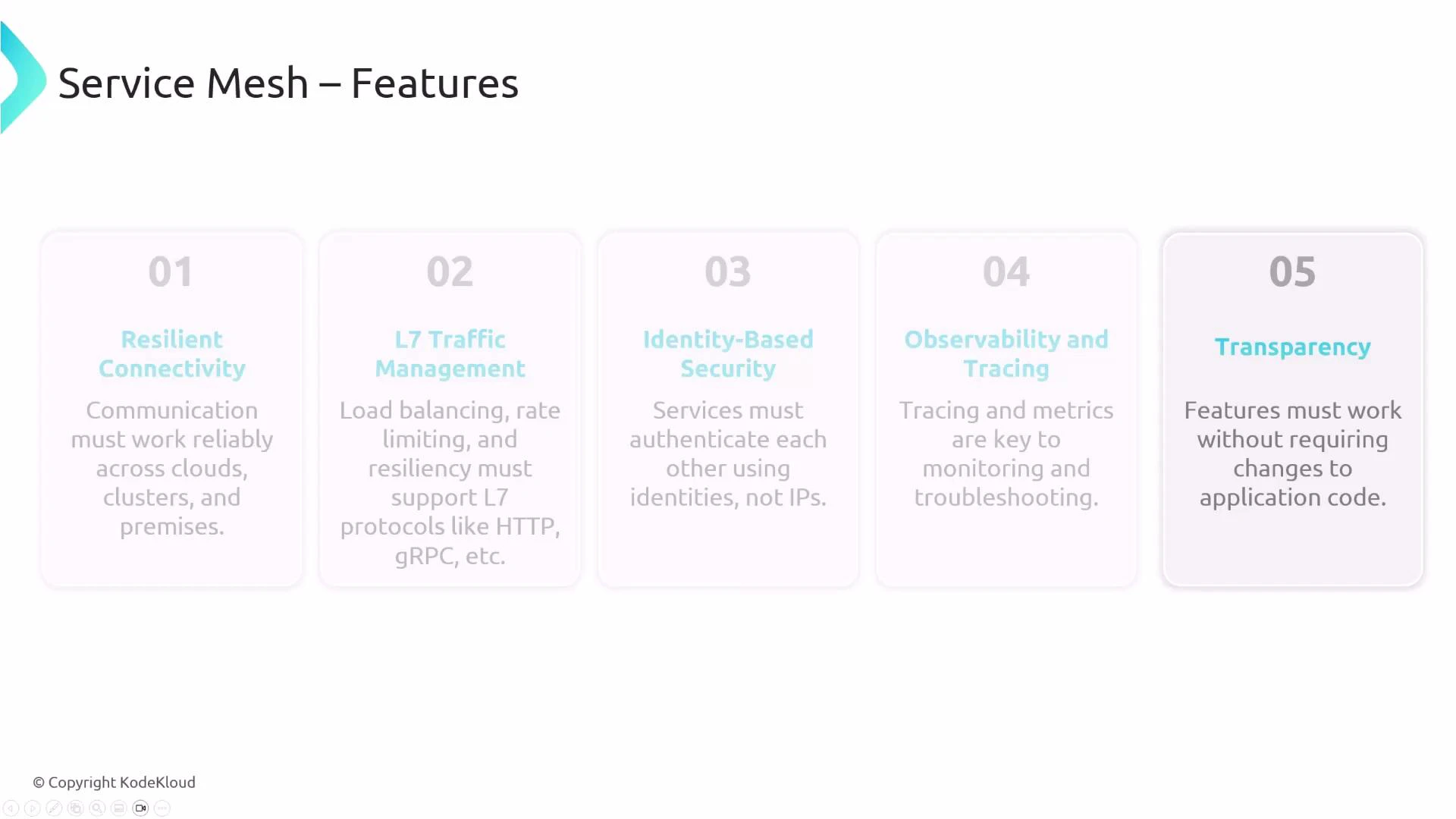

Service mesh: sidecar vs sidecarless

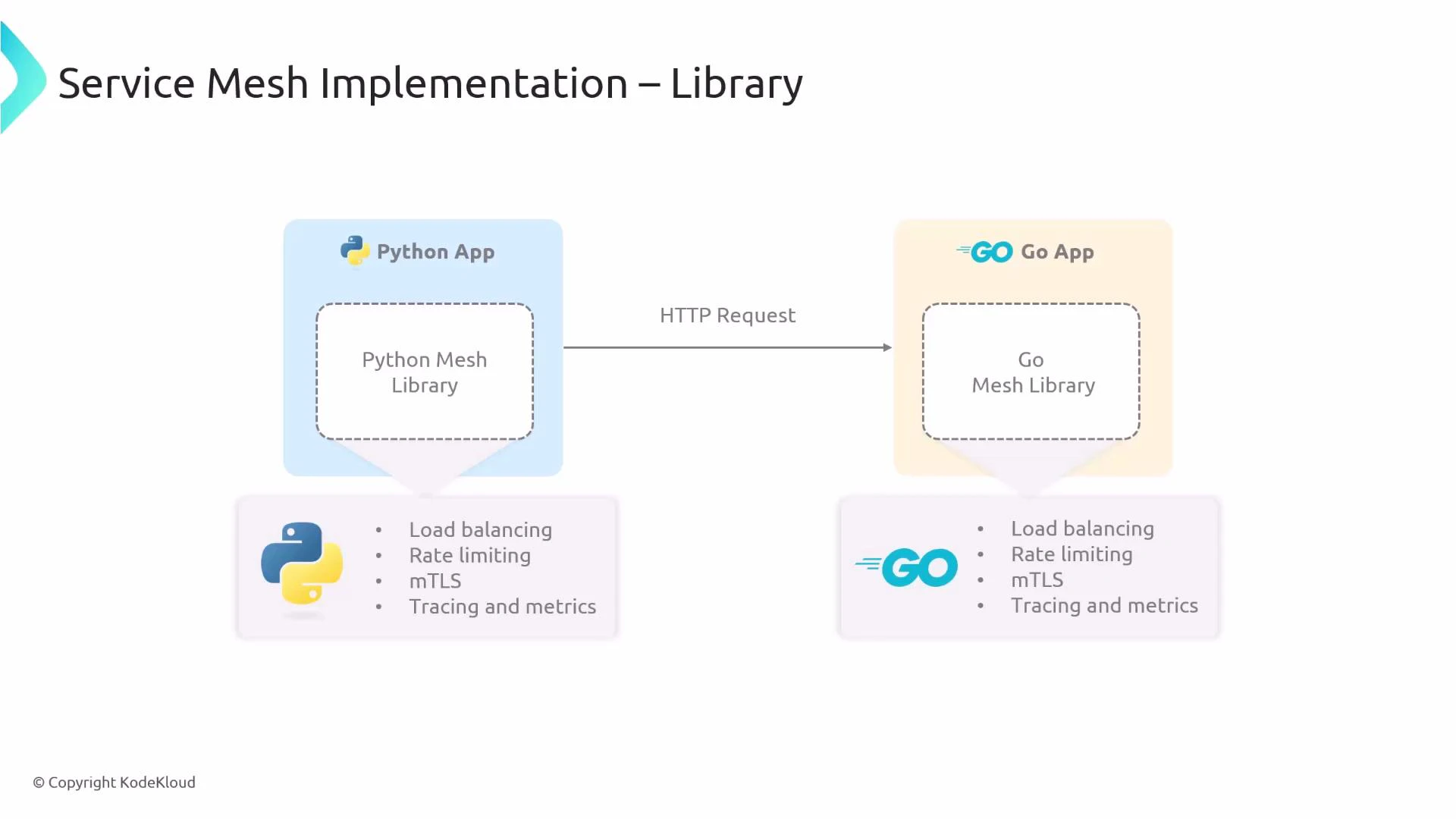

Service meshes historically required either application libraries or a sidecar proxy per pod to implement features such as load balancing, mTLS, rate limiting, tracing, and observability.

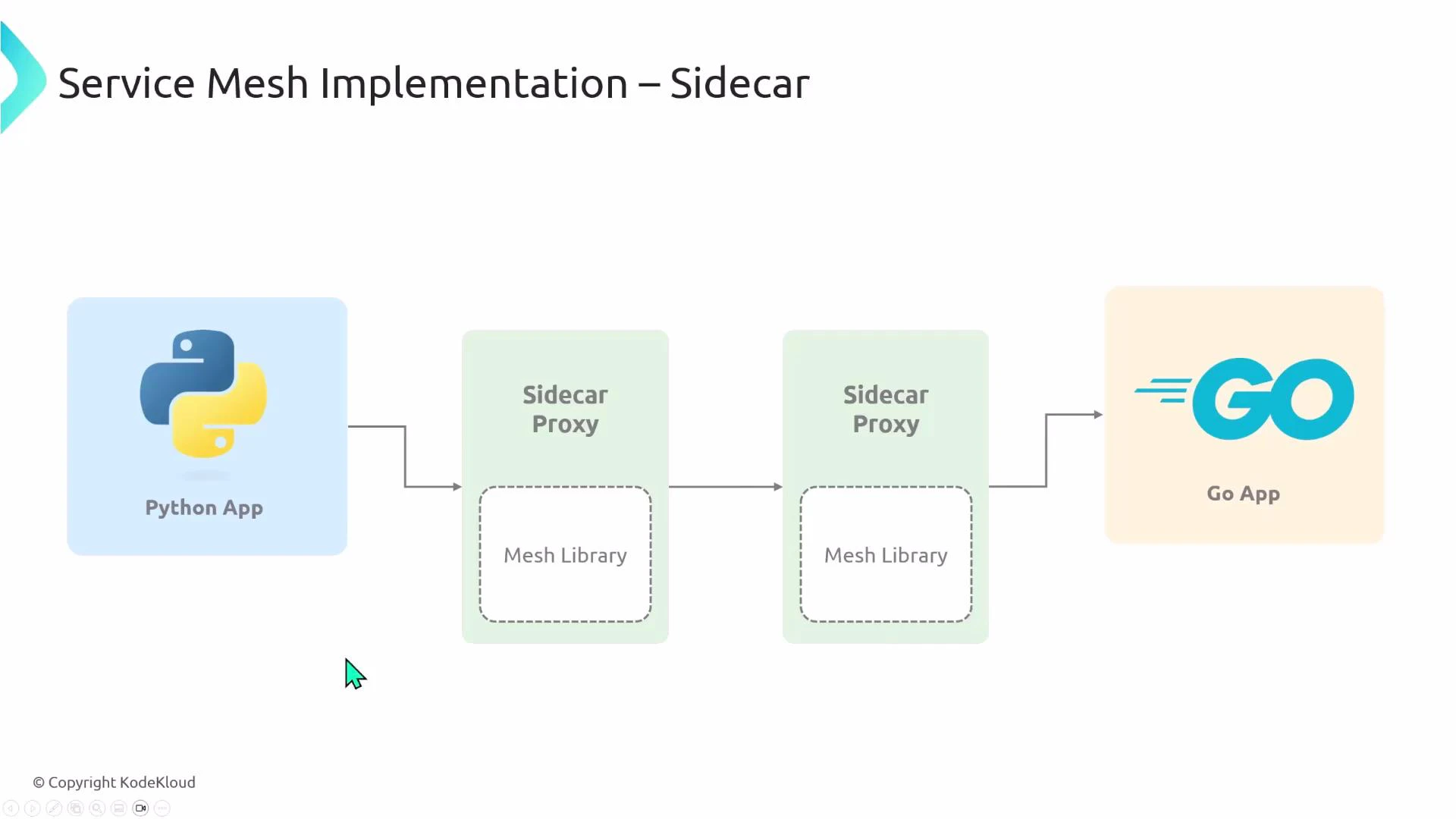

Sidecar model

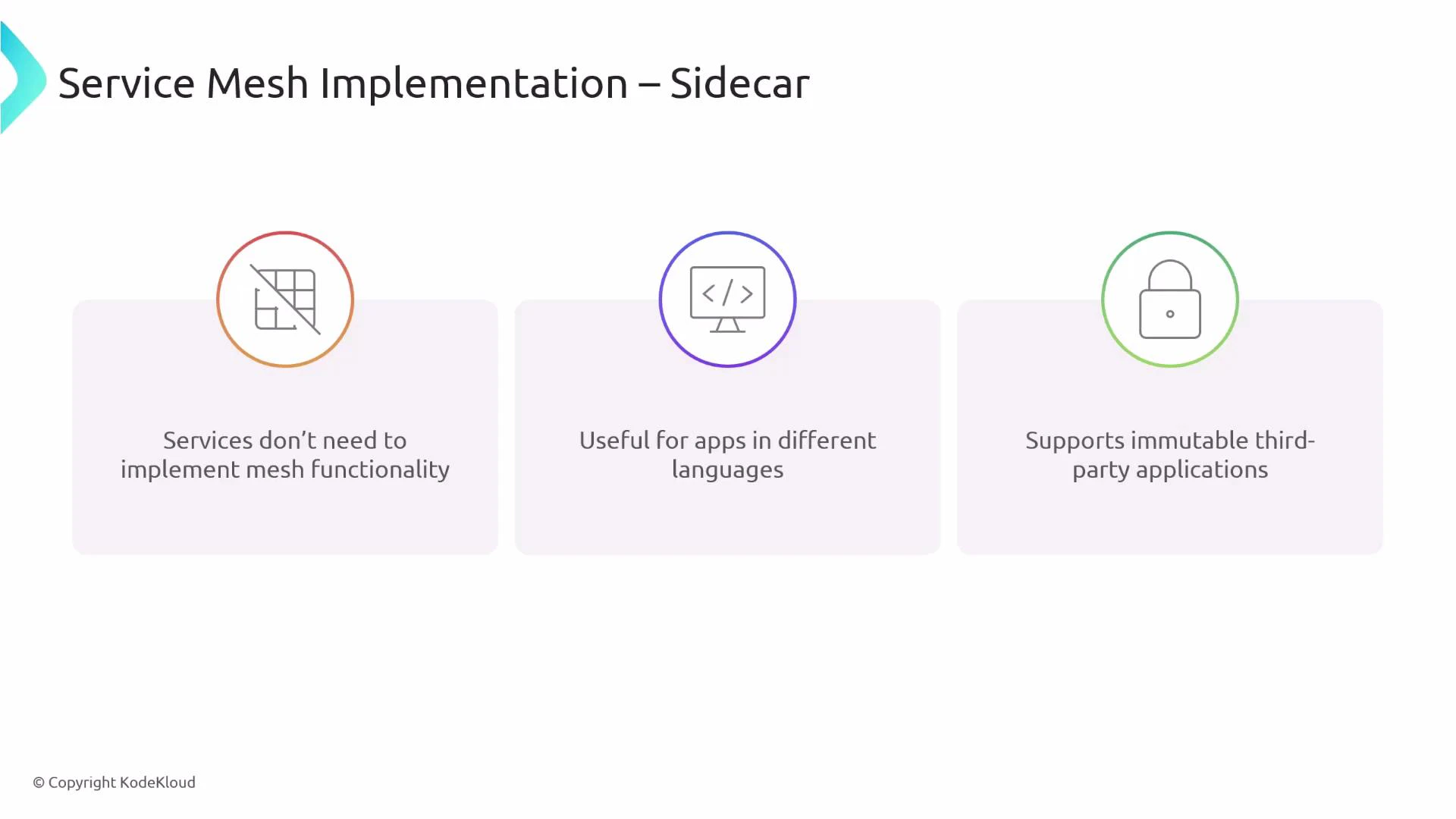

The sidecar pattern deploys a proxy (commonly Envoy) next to each application container in the pod. The proxy implements mesh features so application code remains unchanged.

Benefits:

- No application changes required; language-agnostic.

- Works for immutable third‑party apps.

- Mesh responsibilities are offloaded to the proxy.

Drawbacks:

- One proxy per pod increases resource usage (CPU/memory).

- Operational complexity for proxy configuration and lifecycle.

- Potential startup and readiness race conditions because proxies must initialize.

- Extra network hop per request increases latency.

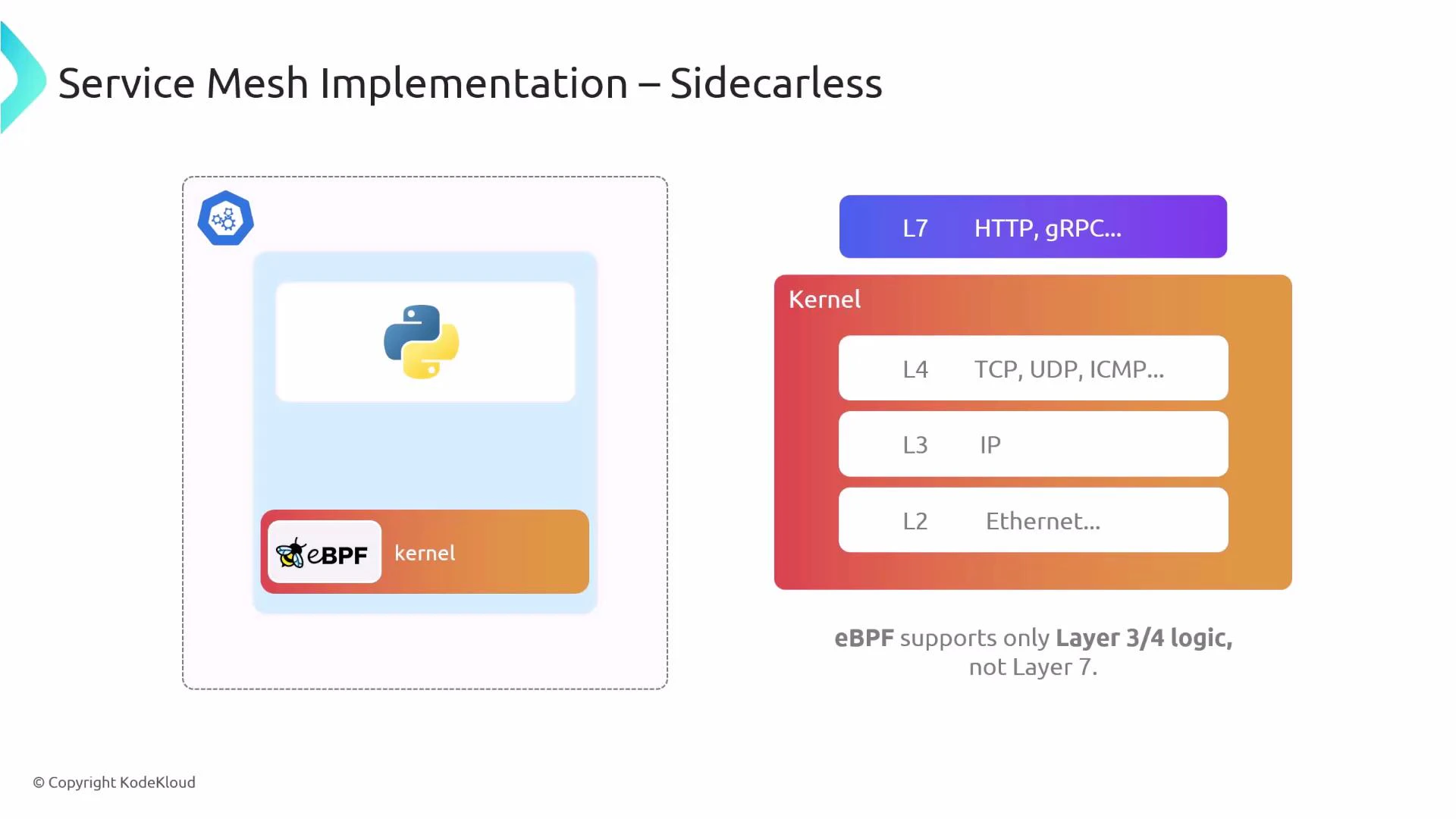

Sidecarless model (Cilium)

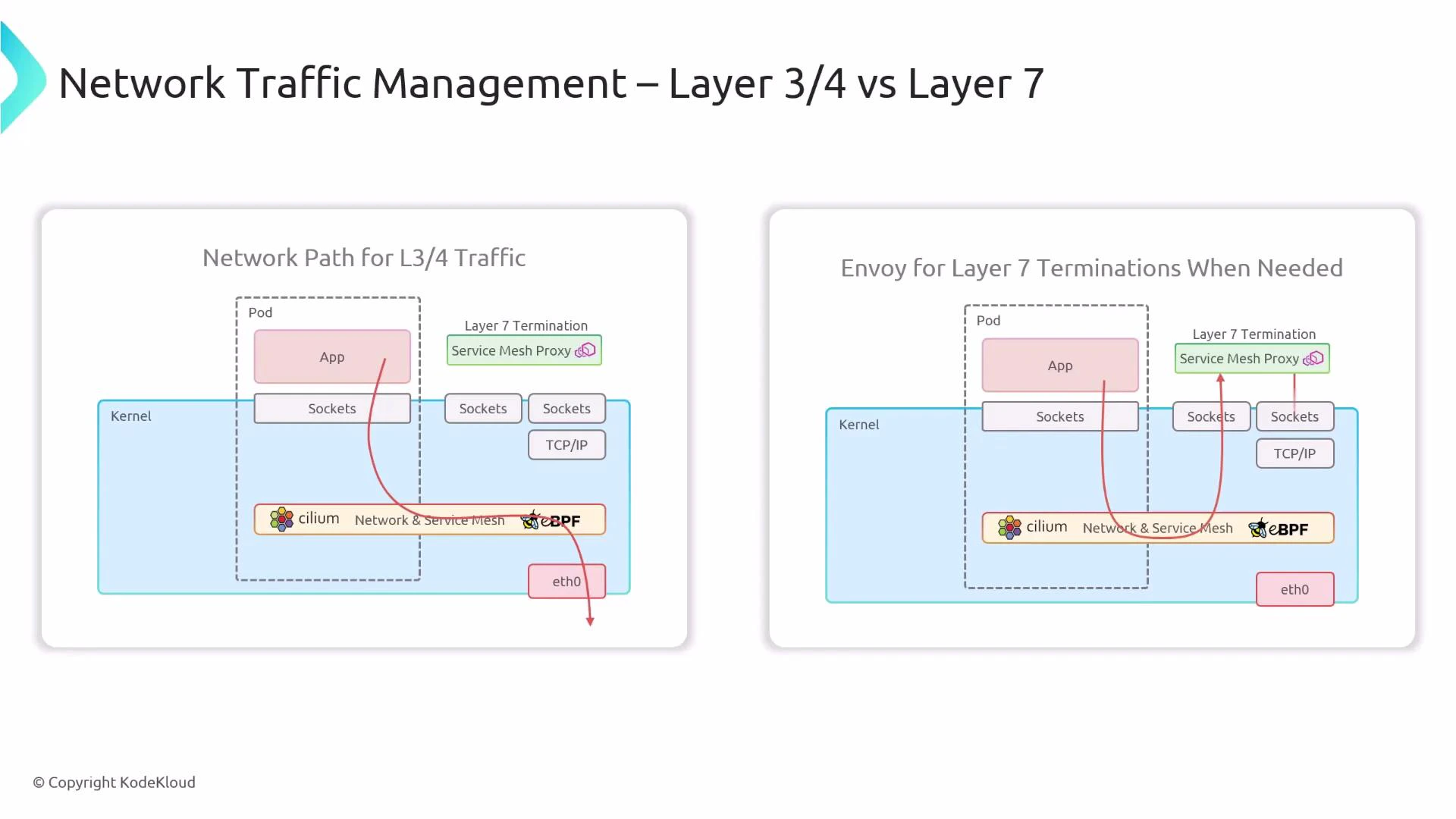

Cilium’s sidecarless approach moves most mesh capabilities into the kernel using eBPF, and uses a smaller number of Envoy proxies for L7 features.

Key characteristics:

- L3/L4 logic (routing, policy enforcement, service load balancing) is handled in-kernel by eBPF.

- L7 features (HTTP/gRPC routing, rate limiting, deep inspection, application-protocol policies) are handled by Envoy.

- Instead of one Envoy per pod, Cilium commonly runs a single Envoy per node (or an Envoy embedded in the agent), drastically reducing per-pod resource overhead.

Sidecarless reduces per-pod resource usage and latency for L3/L4 flows, but advanced L7 features still depend on user‑space proxies (e.g., Envoy). Plan proxy placement and capacity accordingly.
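
A quick back-of-the-envelope calculation shows why proxy placement matters for capacity planning. The cluster size below (50 nodes, 30 pods per node) is a hypothetical figure chosen only to illustrate the arithmetic.

```python
def proxy_count(nodes: int, pods_per_node: int, model: str) -> int:
    """Number of proxy instances under each deployment model."""
    if model == "sidecar":
        return nodes * pods_per_node  # one proxy per pod
    if model == "per-node":
        return nodes  # one shared proxy per node
    raise ValueError(f"unknown model: {model}")


nodes, pods_per_node = 50, 30  # hypothetical cluster
print(proxy_count(nodes, pods_per_node, "sidecar"))   # 1500
print(proxy_count(nodes, pods_per_node, "per-node"))  # 50
```

Fewer proxies means less aggregate overhead, but each shared proxy carries the L7 traffic of every pod on its node, so it needs to be sized for that load.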

Traffic flow comparison

- L3/L4-only flow: the application socket sends packets into the kernel where eBPF implements routing, policy, and forwarding (no sidecar hop).

- L7 flow: eBPF detects the need for L7 processing and redirects traffic to the Service Mesh proxy (Envoy) for termination and deep inspection; Envoy then forwards or proxies the request to the destination.

Benefits of the sidecarless model:

- Fewer containers per pod and lower aggregate CPU/memory usage.

- Better performance for L3/L4 workloads because eBPF runs in-kernel.

- Reduced complexity from fewer per-pod proxies to manage.

- Compatible with other mesh solutions when needed, enabling hybrid deployments.

Limitations:

- L7 features still require an Envoy proxy component; Cilium reduces the cost by running fewer proxies (typically one per node).

- Some advanced L7 capabilities remain necessarily in user-space proxies.
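
The two traffic flows compared in this section can be summarized in a small sketch that returns the hops a connection takes. The function name, the hop labels, and the idea of keying the decision on a set of ports with L7 policy are simplifying assumptions for illustration.

```python
def datapath_path(dst_port: int, l7_policy_ports: set) -> list:
    """Return the hops for a flow; only L7-policy ports detour through Envoy."""
    path = ["app socket", "eBPF (policy + load balancing)"]
    if dst_port in l7_policy_ports:
        path.append("Envoy (L7 processing)")
    path.append("destination")
    return path


l7_ports = {80, 8080}  # hypothetical ports covered by an L7 policy
print(datapath_path(5432, l7_ports))
# ['app socket', 'eBPF (policy + load balancing)', 'destination']
print(datapath_path(80, l7_ports))
# ['app socket', 'eBPF (policy + load balancing)', 'Envoy (L7 processing)', 'destination']
```

In this model, only flows that need L7 handling pay for the extra user-space hop; everything else stays in the kernel.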

Summary

- The Cilium agent runs on each node and watches the Kubernetes API to convert cluster state into kernel programs and policies.

- eBPF programs implement the high-performance datapath for L3/L4 networking, load balancing, and policy enforcement.

- The Cilium operator handles cluster-wide responsibilities (node, service, identity, IPAM, multi-cluster).

- Envoy provides L7 functionality; Cilium routes L7 flows to Envoy and can embed Envoy in the agent or run a node-level proxy.

- Hubble provides per-node flow capture; Hubble relay aggregates flows cluster-wide; the Hubble CLI and UI expose observability.

- Cilium can operate alongside kube-proxy or replace it by implementing service handling in eBPF.

- For service mesh functionality, the traditional sidecar model runs a proxy per pod; Cilium’s sidecarless approach uses eBPF for L3/L4 and a node-level Envoy for L7, reducing resource use and operational complexity.

Links and references

- Cilium documentation: https://cilium.io/docs/

- eBPF community: https://ebpf.io/

- Envoy proxy: https://www.envoyproxy.io/

- Hubble concepts: https://cilium.io/docs/concepts/hubble/

- Kubernetes kube-proxy docs: https://kubernetes.io/docs/reference/command-line-tools-reference/kube-proxy/