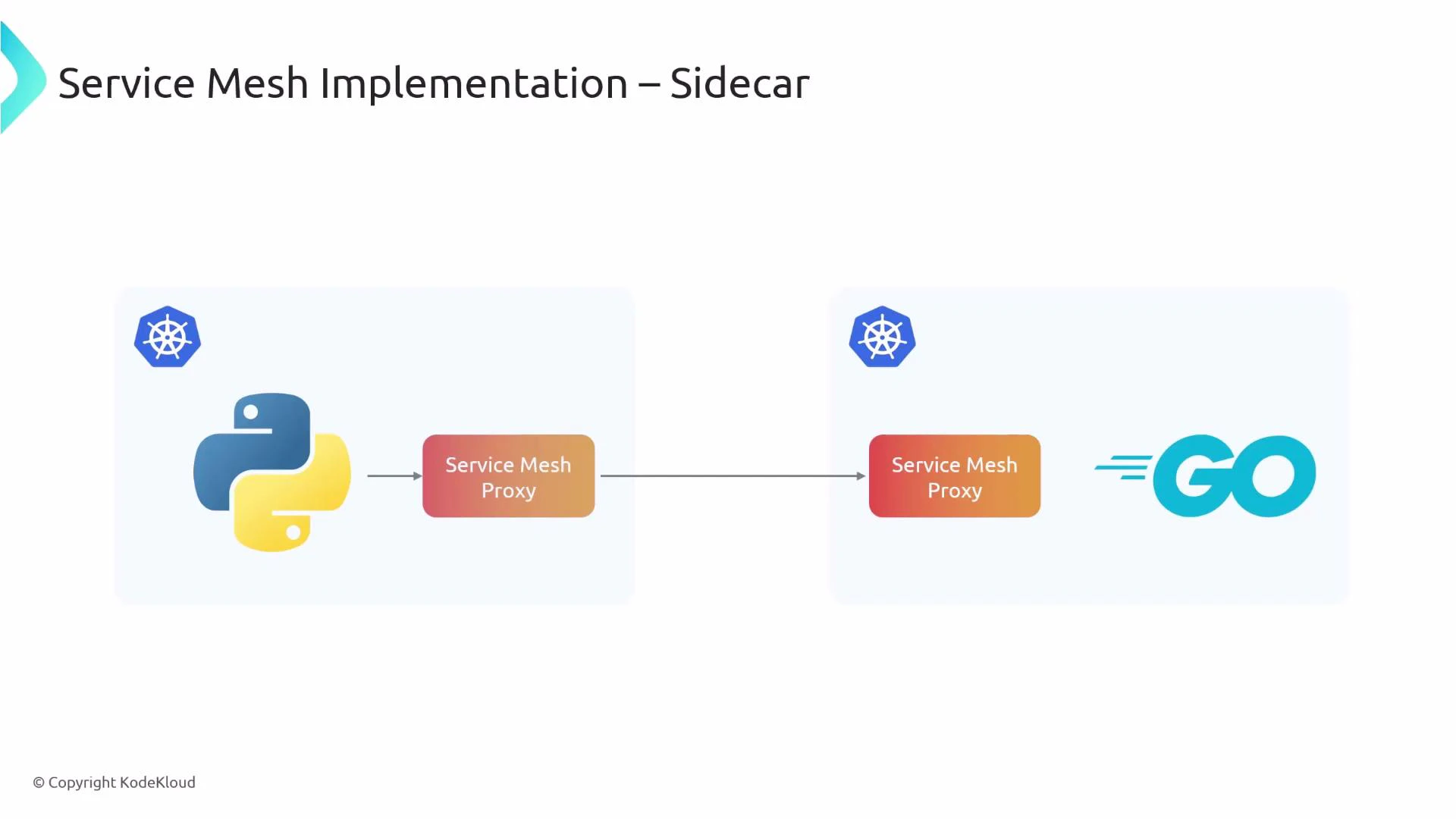

This lesson compares Cilium’s sidecarless service mesh architecture with the sidecar-based model used by many service meshes (for example, Istio). It explains how each model directs traffic, what the operational trade-offs are, and when you might prefer one approach over the other.

Most service meshes implement a sidecar-based model. In Kubernetes, the mesh injects a proxy container (sidecar) into every application pod. Each application pod (for example, a Python or Go service) pairs with a local proxy that intercepts and routes all inbound and outbound application traffic.

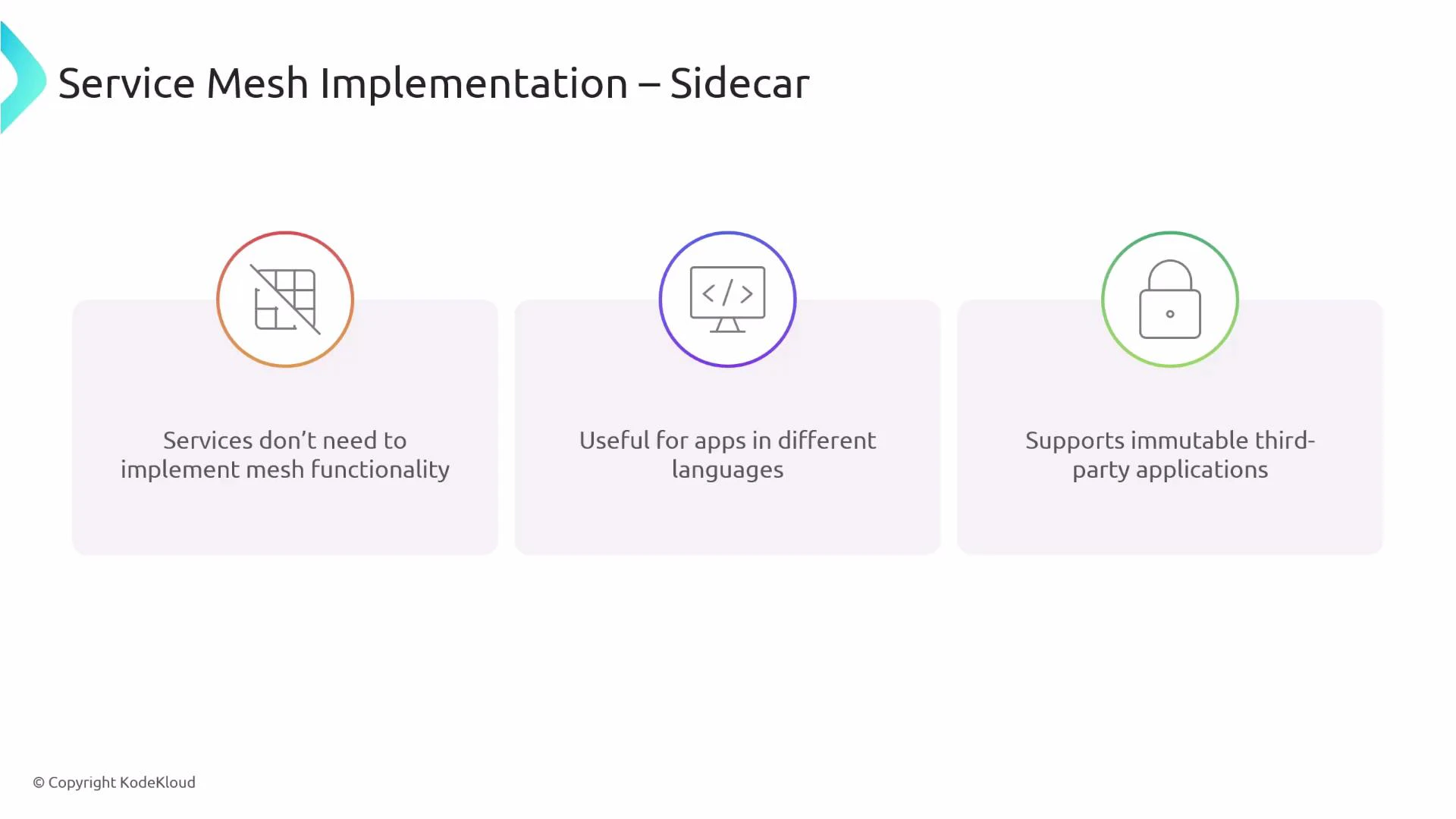

Benefits of the sidecar model:

- Services don’t need to implement mesh features themselves; sidecars provide observability, traffic control, mTLS, retries, circuit-breaking, etc.

- Language-agnostic: applications written in any language benefit from the same network features without changing application code.

- Supports immutable or closed-source applications because features are attached via a proxy, not code changes.

Drawbacks of the sidecar model:

- Higher resource usage: a proxy instance runs per pod, increasing CPU and memory consumption cluster-wide.

- Operational complexity: operators must manage a proxy configuration for every pod.

- Slower startup and lifecycle complexity: pods may have to wait for the sidecar to become ready, and the ordering between the application and sidecar containers can introduce race conditions.

- Extra network hop: each request typically traverses the application → sidecar → network path, adding latency.

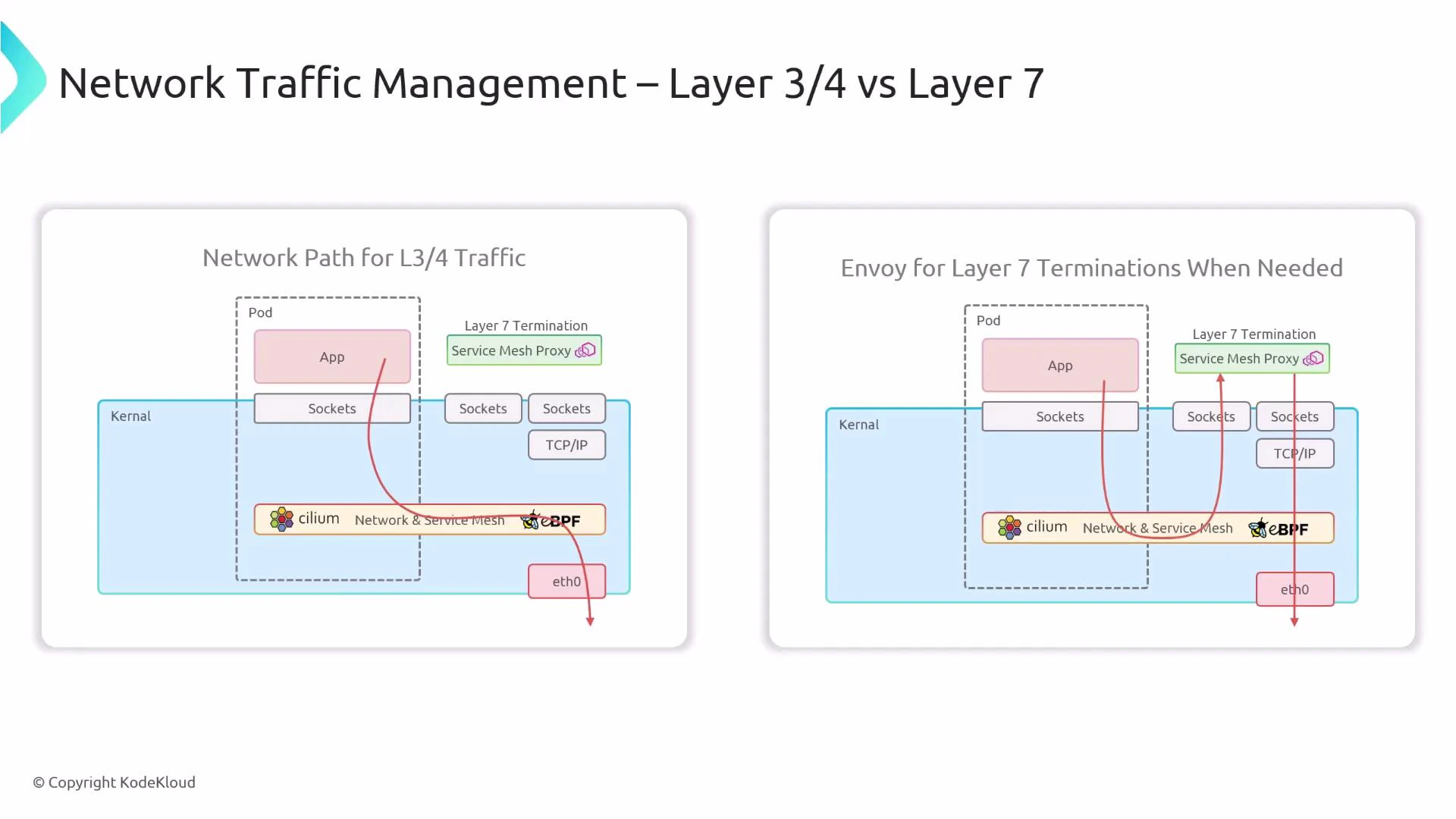

Cilium’s sidecarless model splits the work between the kernel and a shared, node-local proxy:

- eBPF in-kernel processing: Cilium’s eBPF programs operate at L3/L4 (network and transport layers) inside the kernel, so packets are processed efficiently without an additional proxy.

- L7 functionality via Envoy: for application-layer (L7) features—HTTP routing, L7-aware telemetry, or protocol-specific filtering—Cilium forwards traffic to Envoy. The crucial difference is that Envoy is deployed as a node-local instance (one Envoy per node), not one per pod. That typically means far fewer Envoy instances across the cluster (e.g., two nodes → two Envoy instances).

- L3/L4 traffic: handled directly by eBPF inside the kernel — no extra proxy hop.

- L7 traffic: intercepted and forwarded to the node-local Envoy proxy for L7 termination or advanced processing — one proxy hop only for L7 paths.
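
The two traffic paths above can be sketched as a small conceptual model. This is not Cilium code: the `Flow` class, its `needs_l7` flag, and the hop labels are hypothetical names chosen for illustration, and the real datapath has more steps than shown here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    """A simplified connection between two pods (hypothetical model)."""
    src_pod: str
    dst_pod: str
    needs_l7: bool  # assumption: True when an L7 feature or policy applies


def cilium_path(flow: Flow) -> list[str]:
    """Return the conceptual hops a flow takes in the sidecarless model."""
    if flow.needs_l7:
        # L7 traffic is intercepted and handed to the node-local Envoy.
        return [flow.src_pod, "eBPF (kernel)", "node-local Envoy", flow.dst_pod]
    # L3/L4 traffic stays in the kernel: no proxy hop.
    return [flow.src_pod, "eBPF (kernel)", flow.dst_pod]


def proxy_hops(path: list[str]) -> int:
    """Count how many hops in the path are proxy instances."""
    return sum(1 for hop in path if "Envoy" in hop)


if __name__ == "__main__":
    l4_flow = Flow("frontend", "backend", needs_l7=False)
    l7_flow = Flow("frontend", "backend", needs_l7=True)
    print(cilium_path(l4_flow), proxy_hops(cilium_path(l4_flow)))
    print(cilium_path(l7_flow), proxy_hops(cilium_path(l7_flow)))
```

The point of the sketch is that the proxy hop is conditional: only flows that need L7 processing pay for it.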

| Feature | Sidecar model (e.g., Istio) | Cilium (sidecarless) |
|---|---|---|
| Proxy placement | Per-pod sidecar proxies | Node-local Envoy (when L7 needed); eBPF in kernel for L3/L4 |
| L3/L4 handling | Sidecar or kernel depending on implementation | eBPF handles L3/L4 in kernel (no proxy hop) |
| L7 handling | Sidecar handles L7 | Envoy handles L7 (node-local), invoked for L7 only |
| Resource consumption | Higher (proxy per pod) | Lower (fewer Envoy instances, eBPF efficiency) |
| Configuration surface | Per-pod proxy configs to manage | Centralized node-level configs + eBPF policies |
| Startup and lifecycle | Sidecars add startup complexity | Pod startup decoupled from sidecar lifecycle |
| Compatibility with immutable apps | Works well (no code change) | Works well; integrates transparently via kernel hooks |
| Observability & L7 features | Full L7 via sidecar | Full L7 via Envoy; L3/L4 observability via eBPF |
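
To make the proxy placement and resource consumption rows concrete, here is a minimal sketch that counts proxy instances under each model. The function name and the cluster sizes are hypothetical, and it assumes one sidecar per pod and at most one Envoy per node, as described above. It counts instances only; it does not estimate CPU or memory, since a shared node-local proxy and a per-pod sidecar do not necessarily have the same footprint.

```python
def proxy_instances(nodes: int, pods_per_node: int) -> dict[str, int]:
    """Count proxy instances for a cluster under each model.

    Assumptions (illustrative only): every pod gets exactly one sidecar,
    and the sidecarless model runs at most one Envoy per node.
    """
    if nodes < 0 or pods_per_node < 0:
        raise ValueError("nodes and pods_per_node must be non-negative")
    return {
        "sidecar": nodes * pods_per_node,  # one proxy per pod
        "node_local": nodes,               # one proxy per node
    }


if __name__ == "__main__":
    # Hypothetical cluster: 2 nodes with 30 pods each.
    print(proxy_instances(nodes=2, pods_per_node=30))
    # prints {'sidecar': 60, 'node_local': 2}
```

The sidecar count grows with the number of pods, while the node-local count grows only with the number of nodes.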

- Use a sidecar model when you need per-pod L7 controls tightly coupled with the application, or when an existing ecosystem relies on per-pod proxies.

- Choose Cilium (sidecarless) when you want lower per-pod resource overhead, high-performance L3/L4 processing, and a simplified operational model while still supporting L7 functionality through node-local Envoy instances.

- Cilium can integrate with other meshes if you need hybrid setups or incremental migration.

- Cilium — eBPF-based networking and security for cloud-native environments

- eBPF — extended Berkeley Packet Filter: safe kernel-level programs

- Envoy — high-performance edge and service proxy

- Istio — example sidecar-based service mesh

Cilium’s approach reduces per-pod overhead by using eBPF for L3/L4 while still providing L7 capabilities via node-local Envoy instances—combining performance with feature completeness.