In this lesson we examine the limitations of the standard Kubernetes NetworkPolicy API and explain why extended policy engines (for example, Cilium) introduce CRDs to fill those gaps. Understanding these constraints helps when you design secure cluster networking and select a CNI or policy engine that meets your requirements.

What native Kubernetes NetworkPolicy supports

Kubernetes NetworkPolicy works at Layer 3 (IP) and Layer 4 (TCP/UDP/SCTP). It can restrict traffic using pod selectors, namespace selectors, ipBlock CIDR ranges, and ports.

Standard NetworkPolicy is L3/L4-only. It cannot inspect or filter Layer 7 protocol semantics (for example, HTTP method or HTTP path), and it does not support DNS/FQDN-based matching, service-account selectors, or cluster-wide policies out of the box.
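As a reference point, a typical L3/L4 policy might look like the following minimal sketch. The policy name, namespace, labels, and port are illustrative assumptions rather than values from this lesson; the point is that every match criterion is a label, a namespace, a CIDR, or a port.

```yaml
# Minimal sketch of a native L3/L4 NetworkPolicy.
# All names, labels, and the port below are hypothetical.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-frontend-to-backend
  namespace: default
spec:
  # Pods this policy applies to (selected by label only).
  podSelector:
    matchLabels:
      app: backend
  policyTypes:
    - Ingress
  ingress:
    # Allow traffic from pods labeled app=frontend in the same namespace...
    - from:
        - podSelector:
            matchLabels:
              app: frontend
      # ...but only on TCP port 80. Nothing here can inspect HTTP itself.
      ports:
        - protocol: TCP
          port: 80
```

Any HTTP request that reaches port 80 from a frontend pod is allowed, regardless of method or path.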

Key limitations of networking.k8s.io/v1 NetworkPolicy

- No Layer 7 (L7) protocol awareness
  - Native NetworkPolicy cannot express rules such as “allow only HTTP GET to /users.” It can allow TCP port 80, but it cannot validate HTTP methods, paths, headers, or other L7 attributes.

- DNS / FQDN matching limitations
  - NetworkPolicy cannot match on DNS names or FQDNs. To allow egress to an external service that resolves to multiple IPs, you must enumerate all IPs in ipBlock entries and update the policy whenever DNS resolves to new addresses.

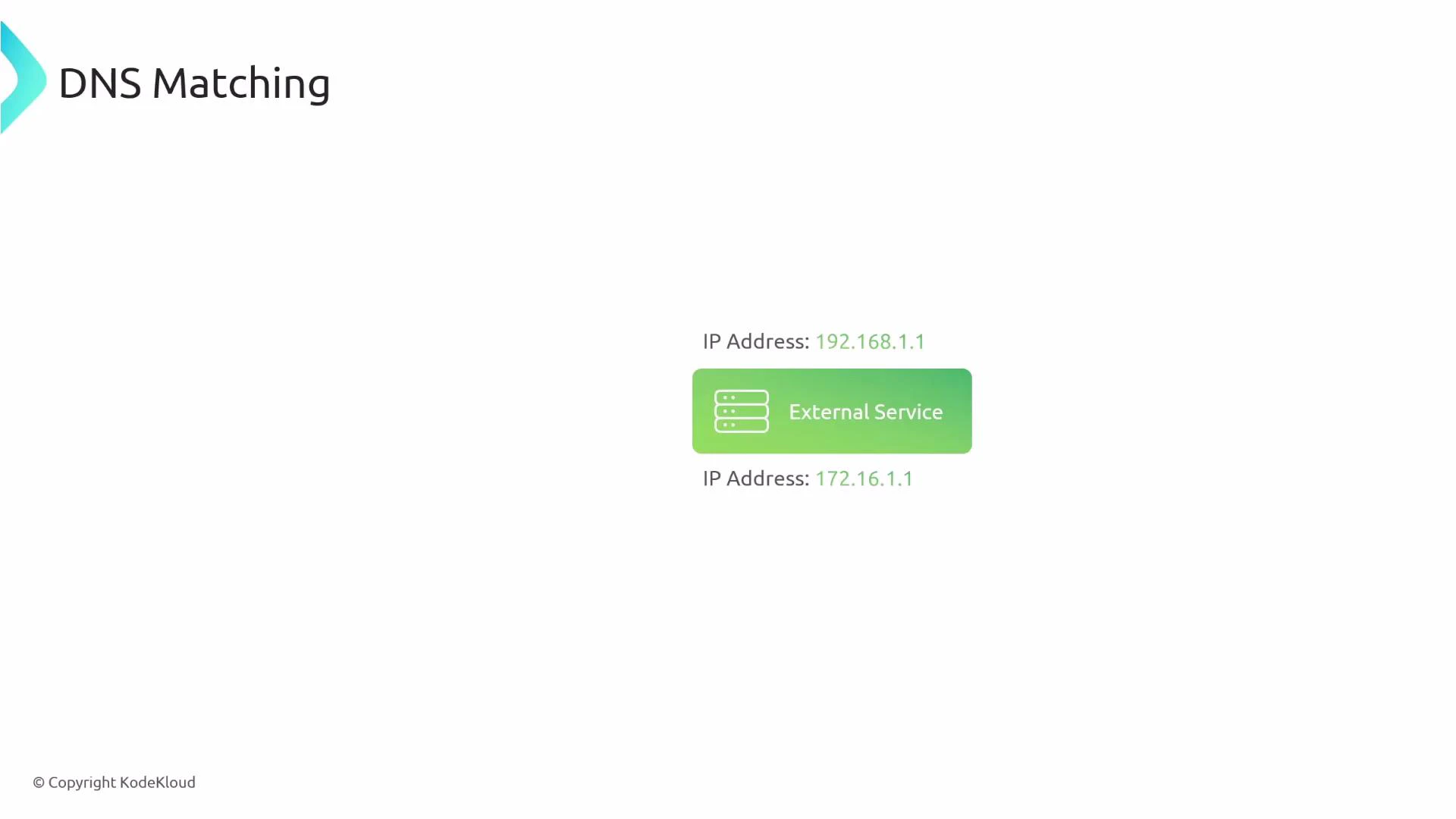

To illustrate the problem: suppose external-service.com resolves to multiple IPs (e.g., 192.168.1.1 and 172.16.1.1). With native policies you must list those IPs explicitly, and update the policy when the service endpoints change.
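The enumeration described above could be sketched as follows, using the two example addresses from the text. The policy name, the client label, and port 443 are assumptions made for illustration.

```yaml
# Sketch: egress to external-service.com expressed as hard-coded IPs.
# Policy name, pod label, and port 443 are hypothetical.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-egress-external-service
  namespace: default
spec:
  podSelector:
    matchLabels:
      app: client
  policyTypes:
    - Egress
  egress:
    - to:
        # One ipBlock per resolved address; /32 matches a single IPv4 host.
        - ipBlock:
            cidr: 192.168.1.1/32
        - ipBlock:
            cidr: 172.16.1.1/32
      ports:
        - protocol: TCP
          port: 443
```

Nothing in this manifest refers to the hostname, so if the service starts resolving to a different address, the policy silently stops matching until someone edits the ipBlock list.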

- Limited pod attribute selectors
  - Native NetworkPolicy can only match pods by labels and namespaces. It cannot select by other pod attributes such as serviceAccountName, or match richer metadata fields without label workarounds.

- Protocol matching constraints
  - The protocol field supports TCP, UDP, and SCTP in the Kubernetes API. ICMP or other protocol-specific matches and application-protocol-specific filtering (for example, DNS query matching) are not standardized in networking.k8s.io/v1. Some CNIs may extend behavior, but it is not portable.

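- No explicit deny rules
  - Native NetworkPolicy is an allow-list model: you define what is allowed, and policies are additive. It does not provide explicit deny rules that take precedence. A rule such as “allow everything from X except pods labeled env=staging” can at best be approximated with a label selector inside a single allow rule (for example, a matchExpressions term with the NotIn operator); it is not a true deny, because any other policy that allows the staging pods still admits them.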

- Namespace scoping (no cluster-scoped NetworkPolicy)
  - NetworkPolicy objects are namespace-scoped. To apply the same policy across multiple namespaces, you must replicate the object in each namespace. There is no native cluster-scoped NetworkPolicy in networking.k8s.io/v1.

- Multi-cluster awareness
  - NetworkPolicy is cluster-local. It has no built-in notion of which cluster traffic originated from; cross-cluster policies require labeling and replication strategies outside the API.
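The allow-list model and the namespace scoping described in the list above can be illustrated with a default-deny ingress baseline. This is a sketch under assumptions: the namespaces team-a and team-b are hypothetical, and the same object has to be repeated once per namespace because no cluster-scoped equivalent exists in networking.k8s.io/v1.

```yaml
# Sketch: the same default-deny ingress policy, duplicated per namespace.
# Namespace names are hypothetical.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: default-deny-ingress
  namespace: team-a
spec:
  # An empty podSelector selects every pod in this namespace.
  podSelector: {}
  # Ingress is listed with no ingress rules, so no inbound traffic is allowed.
  policyTypes:
    - Ingress
---
# Identical policy; only metadata.namespace differs.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: default-deny-ingress
  namespace: team-b
spec:
  podSelector: {}
  policyTypes:
    - Ingress
```

Note that this "deny" is only the absence of an allow rule: any additional NetworkPolicy in the same namespace that allows traffic to a pod widens access again, which is why it cannot act as an overriding deny.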

Summary comparison: native NetworkPolicy vs extended policy engines

| Feature | networking.k8s.io/v1 NetworkPolicy | Cilium (CiliumNetworkPolicy / CiliumClusterwideNetworkPolicy) |
|---|---|---|
| L3/L4 filtering | Yes | Yes |
| L7 filtering (HTTP, gRPC, Kafka) | No | Yes (methods, paths, headers) |
| FQDN / DNS-based rules | No | Yes (toFQDNs) |
| Service account selection | No | Yes |
| Explicit deny semantics | No | Yes (ingressDeny/egressDeny) |
| Cluster-scoped policies | No | Yes (CiliumClusterwideNetworkPolicy) |
| Protocol variety | TCP/UDP/SCTP | Extended support depending on Cilium features |

Why Cilium and other enhanced engines exist

Cilium provides CRDs such as CiliumNetworkPolicy and CiliumClusterwideNetworkPolicy to address the functional gaps in native NetworkPolicy:

- L7-aware rules: match HTTP methods, paths, headers; support for gRPC and Kafka.

- FQDN-based egress: toFQDNs allow DNS-driven policies that follow changing IPs.

- Rich selectors: service accounts, identity-based selectors, and metadata-aware matching.

- Explicit deny semantics and more flexible precedence models.

- Cluster-wide policies for consistent rules across namespaces and clusters.

Cilium policy examples (illustrative of capabilities)

- L7 HTTP allow (Cilium can match method/path):

- FQDN-based egress (toFQDNs):

- Deny and advanced matching:

- Cluster-scoped policy:
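The four capabilities listed above could be sketched as the following manifests, one per item, separated by `---`. These are illustrative sketches rather than the lesson's original examples: every policy name, label, service-account name, and port is an assumption, and the exact behavior depends on your Cilium version and configuration (L7 and DNS rules, for instance, rely on Cilium's proxy being enabled).

```yaml
# 1. L7 HTTP allow: frontend pods may send only GET /users to users-api on port 80.
apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: allow-get-users                # hypothetical name
spec:
  endpointSelector:
    matchLabels:
      app: users-api                   # hypothetical label
  ingress:
    - fromEndpoints:
        - matchLabels:
            app: frontend
      toPorts:
        - ports:
            - port: "80"
              protocol: TCP
          rules:
            http:
              - method: "GET"
                path: "/users"         # treated as a regex matched against the path
---
# 2. FQDN-based egress: allow HTTPS to external-service.com by name.
apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: allow-egress-external-service  # hypothetical name
spec:
  endpointSelector:
    matchLabels:
      app: client
  egress:
    # Allow DNS lookups via kube-dns so Cilium can observe the resolved IPs.
    - toEndpoints:
        - matchLabels:
            "k8s:io.kubernetes.pod.namespace": kube-system
            "k8s:k8s-app": kube-dns
      toPorts:
        - ports:
            - port: "53"
              protocol: ANY
          rules:
            dns:
              - matchPattern: "*"
    - toFQDNs:
        - matchName: "external-service.com"
      toPorts:
        - ports:
            - port: "443"              # assumed port
              protocol: TCP
---
# 3. Explicit deny: allow all endpoints in the namespace except env=staging.
apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: allow-all-except-staging       # hypothetical name
spec:
  endpointSelector:
    matchLabels:
      app: backend
  ingress:
    - fromEndpoints:
        - {}                           # any endpoint in this namespace
  ingressDeny:
    - fromEndpoints:
        - matchLabels:
            env: staging               # deny rules win over allow rules
---
# 4. Cluster-scoped policy that also selects peers by service account.
apiVersion: cilium.io/v2
kind: CiliumClusterwideNetworkPolicy
metadata:
  name: db-from-backend-sa             # hypothetical name; no namespace field
spec:
  endpointSelector:
    matchLabels:
      role: database
  ingress:
    - fromEndpoints:
        - matchLabels:
            io.cilium.k8s.policy.serviceaccount: backend-sa   # hypothetical SA name
```

Compared with the native API, the second manifest replaces a hand-maintained ipBlock list with a hostname, and the third expresses an exception as a real deny instead of relying on the absence of an allow rule.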

Final notes

- Native Kubernetes NetworkPolicy is intentionally simple and broadly supported by many CNIs. That simplicity means it is portable but functionally limited (no L7, no FQDN matching, limited selectors, no explicit deny, no cluster-scoped definitions).

- If your security requirements include L7 filtering, DNS-aware egress, service-account selectors, explicit denies, or cluster-wide rules, evaluate an extended policy engine such as Cilium and its CRDs: CiliumNetworkPolicy and CiliumClusterwideNetworkPolicy.
