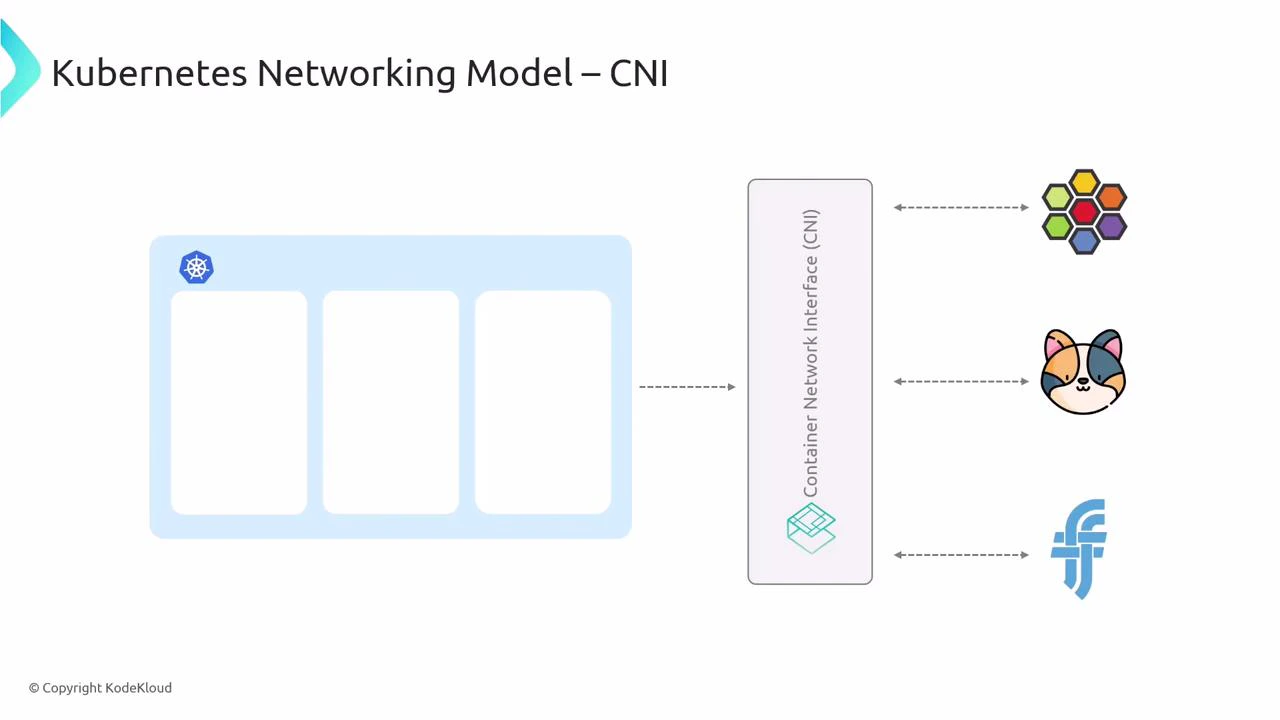

This article explains how Kubernetes networking works and what the platform expects from Container Network Interface (CNI) plugins such as Cilium. Understanding Kubernetes' networking primitives (Pod CIDR, Service CIDR, kube-proxy, and NetworkPolicy) makes it easier to see what a CNI must provide and how eBPF-based CNIs like Cilium can replace or augment kube-proxy.

Pod IP addressing and the Pod CIDR

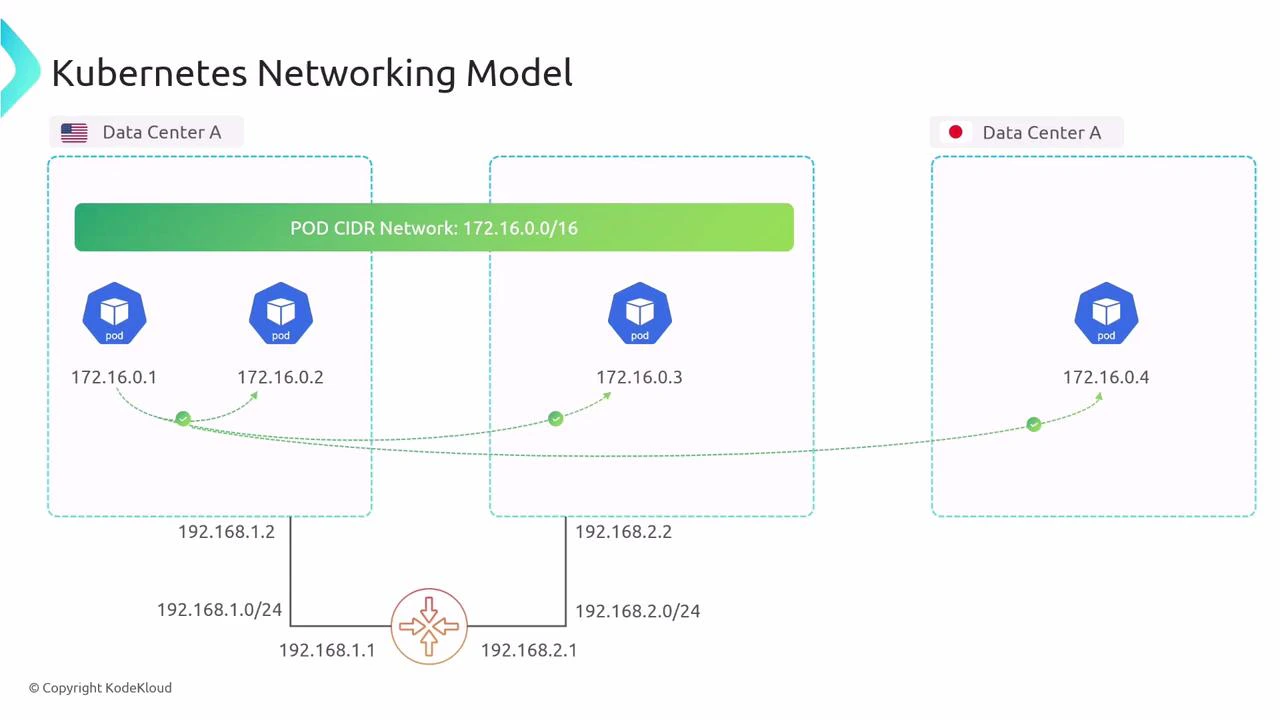

When you create a Kubernetes cluster you typically allocate a Pod CIDR (example: 172.16.0.0/16). Kubernetes assigns pod IP addresses from that CIDR so every pod in the cluster has an IP in the shared address space. Kubernetes assumes a flat pod address space: any pod should be able to reach any other pod using its pod IP, regardless of which node the pods run on or the underlying node network topology. As long as nodes have IP reachability between them, pod-to-pod communication should work without application-level routing changes.

- Pod IPs are ephemeral—pods that restart typically receive new IPs.

- Host processes running directly on a node can also reach pods on that node using pod IPs.
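To make the addressing model concrete, here is a minimal sketch using Python's standard `ipaddress` module. It takes the example Pod CIDR above and, purely for illustration, carves it into one /24 per node. The node names and the per-node /24 split are assumptions; real allocation is handled by your CNI plugin's IPAM and may work differently.

```python
import ipaddress
from itertools import islice

# Example Pod CIDR from the text above.
POD_CIDR = ipaddress.ip_network("172.16.0.0/16")

# Assumption for illustration: each node gets one /24 slice of the Pod CIDR.
# The node names are hypothetical.
NODES = ["node-a", "node-b", "node-c"]


def assign_node_subnets(pod_cidr, nodes, new_prefix=24):
    """Map each node to the next free subnet carved out of the Pod CIDR."""
    subnets = pod_cidr.subnets(new_prefix=new_prefix)
    return {node: next(subnets) for node in nodes}


def first_pod_ips(subnet, count):
    """Return the first `count` usable host addresses in a node's subnet."""
    return list(islice(subnet.hosts(), count))


node_subnets = assign_node_subnets(POD_CIDR, NODES)

for node, subnet in node_subnets.items():
    pods = first_pod_ips(subnet, 2)
    print(node, subnet, [str(ip) for ip in pods])

# Every pod IP, whatever node it lives on, falls inside the one flat Pod CIDR.
all_in_cidr = all(
    ip in POD_CIDR
    for subnet in node_subnets.values()
    for ip in first_pod_ips(subnet, 2)
)
print("all pod IPs inside Pod CIDR:", all_in_cidr)
```

The per-node split only affects where a pod's IP comes from. From the application's point of view there is still a single address space.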

Services and the Service CIDR

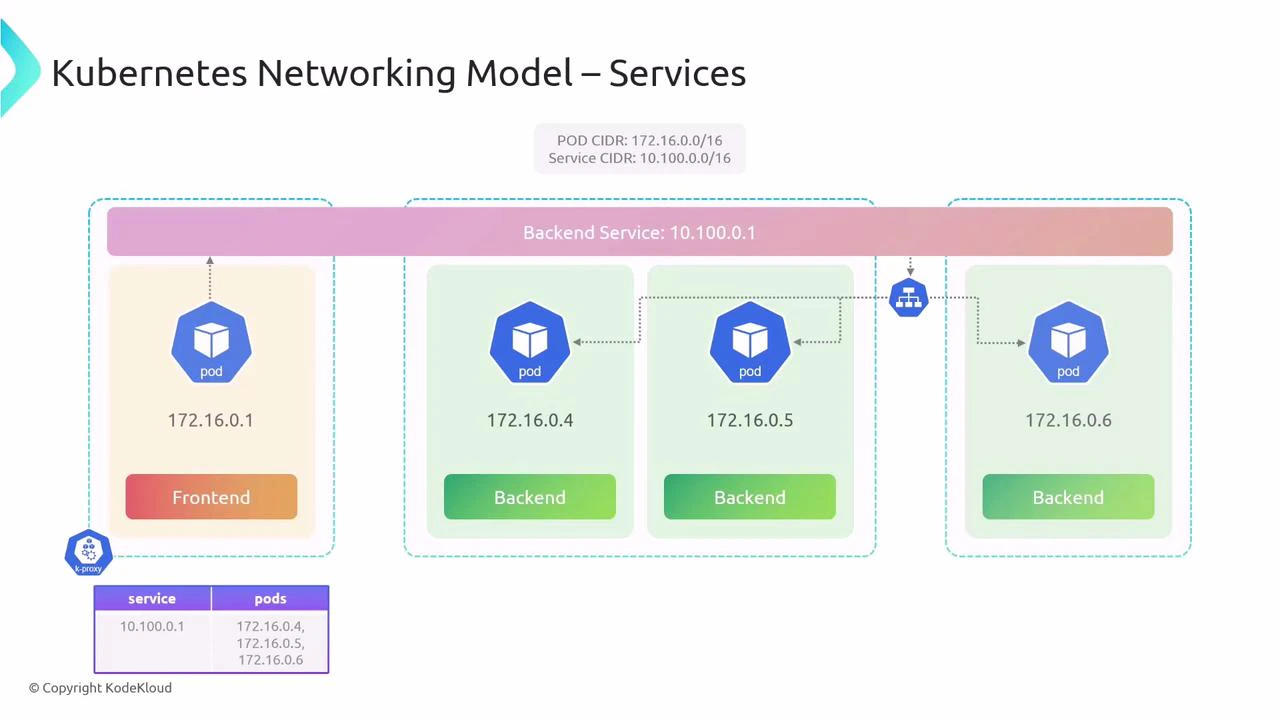

Because pod IPs are ephemeral, Kubernetes provides Services to give stable network identities and load balancing for groups of pods. When you create a cluster you also define a Service CIDR (example: 10.100.0.0/16). Each Service receives a stable ClusterIP from the Service CIDR (for example, 10.100.0.1). Client pods send traffic to that ClusterIP and the Service load-balances traffic to the current backend pod endpoints.

- kube-proxy runs on every node and watches Service and EndpointSlice objects (Endpoints in older releases).

- kube-proxy programs iptables or IPVS rules so that traffic sent to a Service IP is redirected to one of the backend pod IPs.

- Some CNIs (including eBPF-based ones like Cilium) can replace or augment kube-proxy and implement service handling more efficiently.
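The redirection described above can be modelled in a few lines. The following is a toy simulation, not kube-proxy's actual implementation: it keeps a table from a Service's ClusterIP and port to the current backend endpoints and picks one at random per connection, which is roughly the effect of the probabilistic rules that iptables mode installs. The ports and pod IPs are made-up values within the example CIDRs.

```python
import random

# Toy service table: (ClusterIP, port) -> list of (pod IP, port) backends.
# All addresses are illustrative values from the example CIDRs.
service_table = {
    ("10.100.0.1", 80): [("172.16.0.5", 8080), ("172.16.1.7", 8080)],
}


def update_endpoints(cluster_ip, port, backends):
    """Replace a Service's backends, as happens when pods come and go."""
    service_table[(cluster_ip, port)] = list(backends)


def resolve(dst_ip, dst_port, rng=random):
    """Return the address a connection is actually delivered to.

    Traffic to a known ClusterIP is rewritten to one randomly chosen
    backend. Traffic to any other address (for example a pod IP used
    directly) passes through unchanged. A Service with no backends
    yields None, because there is nothing to deliver to.
    """
    backends = service_table.get((dst_ip, dst_port))
    if backends is None:
        return (dst_ip, dst_port)
    if not backends:
        return None
    return rng.choice(backends)


# A client keeps using the stable ClusterIP...
print(resolve("10.100.0.1", 80))

# ...even after a backend pod restarts and comes back with a new IP.
update_endpoints("10.100.0.1", 80, [("172.16.0.5", 8080), ("172.16.2.9", 8080)])
print(resolve("10.100.0.1", 80))
```

The client never needs to learn the new pod IP. Only the table maintained on the node changes, which is also the part an eBPF-based CNI can take over.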

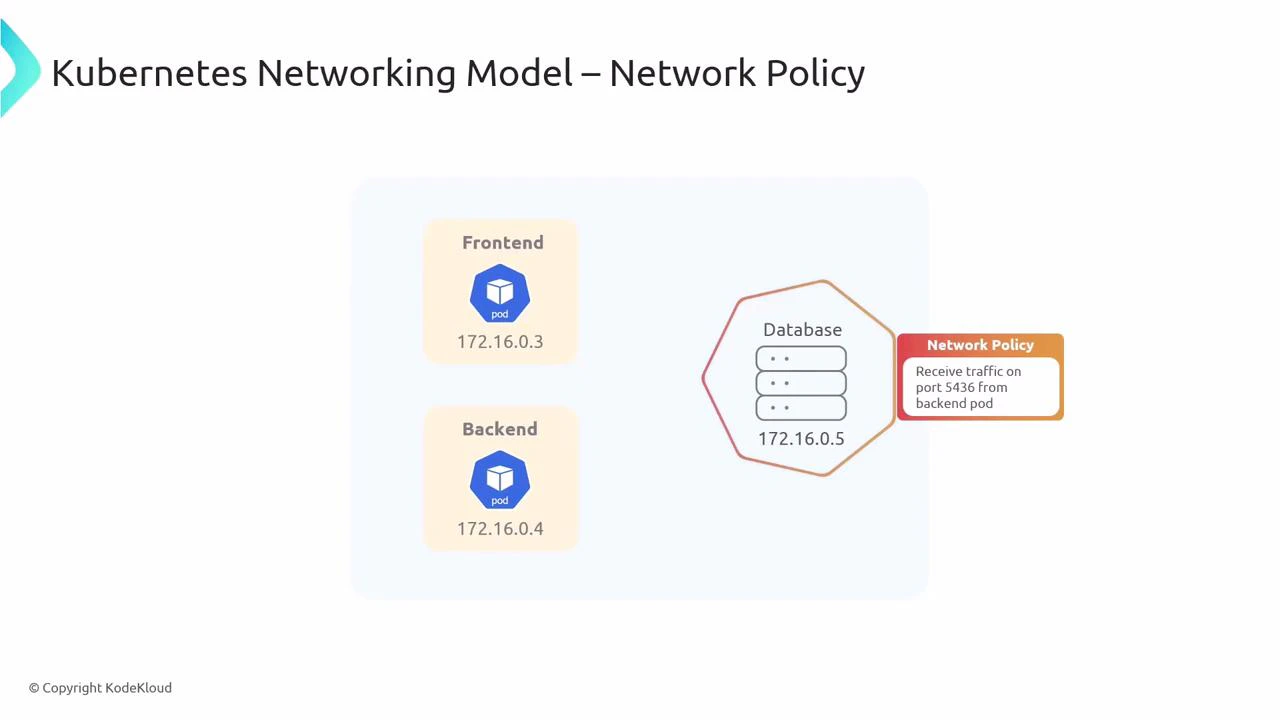

NetworkPolicy: declare who can talk to whom

By default, Kubernetes networking is permissive: any pod can reach any other pod. For production workloads you usually want to restrict traffic between pods and across tiers. Kubernetes NetworkPolicy is the declarative API for expressing these restrictions. Enforcement, however, is performed by the cluster’s CNI implementation.

Kubernetes defines the NetworkPolicy API, but enforcement depends on the cluster’s CNI plugin. If the CNI does not implement NetworkPolicy semantics, the policies will not be enforced.
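As an illustration of what the API expresses, the sketch below builds a NetworkPolicy manifest as a Python dictionary and applies a deliberately simplified version of its ingress semantics: a pod that no policy selects accepts all traffic, while a selected pod accepts only traffic that matches an ingress rule. The policy name, namespace, and labels are hypothetical, and the evaluator ignores ports, namespaces, and IP blocks. Real enforcement happens in the CNI's data plane.

```python
import json

# A NetworkPolicy manifest; the name, namespace, and labels are hypothetical.
policy = {
    "apiVersion": "networking.k8s.io/v1",
    "kind": "NetworkPolicy",
    "metadata": {"name": "db-allow-backend", "namespace": "demo"},
    "spec": {
        "podSelector": {"matchLabels": {"tier": "db"}},
        "policyTypes": ["Ingress"],
        "ingress": [
            {"from": [{"podSelector": {"matchLabels": {"tier": "backend"}}}]}
        ],
    },
}


def matches(selector, labels):
    """True if every matchLabels pair is present in the pod's labels."""
    wanted = selector.get("matchLabels", {})
    return all(labels.get(k) == v for k, v in wanted.items())


def ingress_allowed(policies, src_labels, dst_labels):
    """Simplified ingress check (labels only, single namespace)."""
    selecting = [
        p for p in policies
        if "Ingress" in p["spec"].get("policyTypes", ["Ingress"])
        and matches(p["spec"]["podSelector"], dst_labels)
    ]
    if not selecting:
        return True  # no policy selects the destination pod: default allow
    for p in selecting:
        for rule in p["spec"].get("ingress", []):
            peers = rule.get("from", [])
            if not peers:
                return True  # a rule without "from" allows all sources
            for peer in peers:
                # Only podSelector peers are handled in this sketch.
                if "podSelector" in peer and matches(peer["podSelector"], src_labels):
                    return True
    return False


print(ingress_allowed([policy], {"tier": "backend"}, {"tier": "db"}))
print(ingress_allowed([policy], {"tier": "frontend"}, {"tier": "db"}))
print(ingress_allowed([policy], {"tier": "frontend"}, {"tier": "backend"}))

# The same structure serialises to JSON, which kubectl accepts as a manifest.
manifest_json = json.dumps(policy, indent=2)
```

The third call returns an "allowed" result only because no policy selects the backend pod, which is the permissive default described above.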

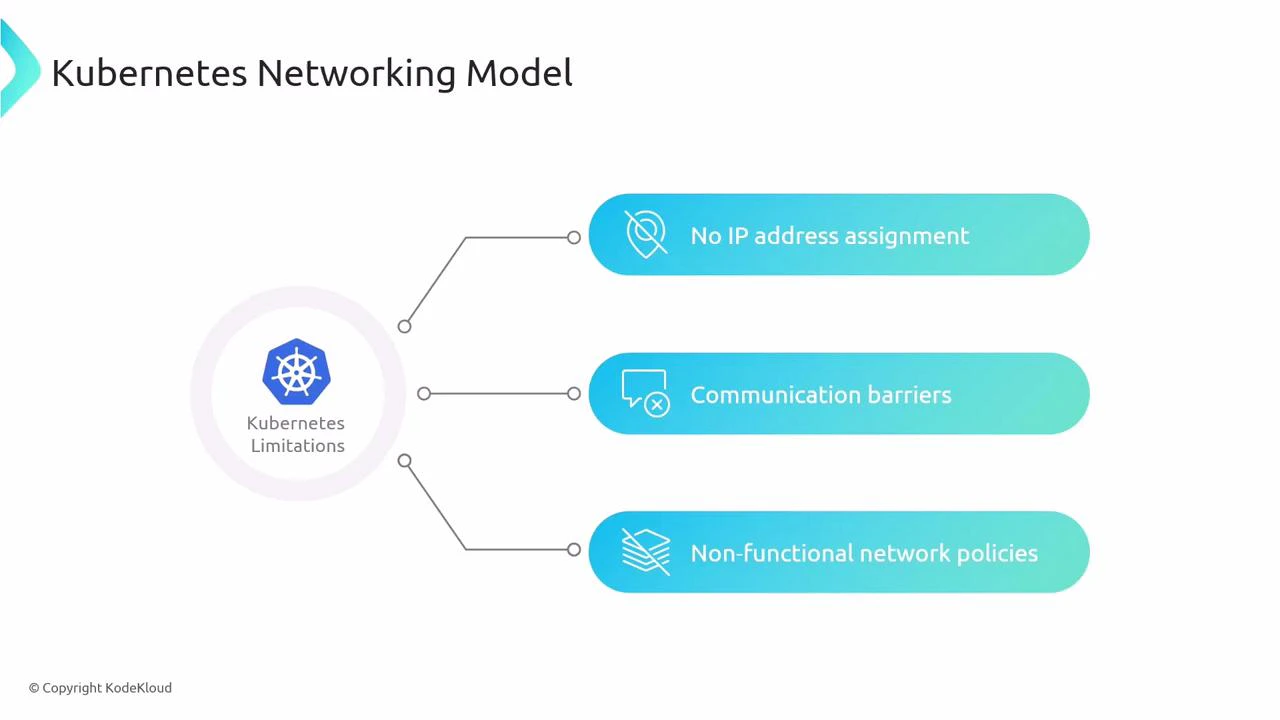

Kubernetes does not ship full data-plane networking

Kubernetes itself does not provide pod IP allocation, overlay routing, or NetworkPolicy enforcement. If you create a cluster without installing a CNI plugin, pods will not receive Pod CIDR IPs and pod-to-pod communication will not function. The only networking component included in upstream Kubernetes is kube-proxy; everything else (IP allocation, routing/overlays, service handling integrations, and policy enforcement) is delivered by a third‑party CNI.

If a cluster is created without a conformant CNI plugin, pods will not get IP addresses from a Pod CIDR and cluster networking (including NetworkPolicy enforcement) will not work.
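One practical way to see whether a node has a CNI plugin installed is to look for a network configuration file. The sketch below assumes the conventional default directory `/etc/cni/net.d` (container runtimes can be configured to use a different path) and the file extensions commonly used for CNI configuration. The function name is hypothetical.

```python
from pathlib import Path

# Conventional default; runtimes may be configured with a different directory.
DEFAULT_CNI_CONF_DIR = "/etc/cni/net.d"

# File extensions treated as CNI network configuration (an assumption here).
CNI_CONF_SUFFIXES = {".conf", ".conflist", ".json"}


def find_cni_configs(conf_dir=DEFAULT_CNI_CONF_DIR):
    """Return CNI config files in `conf_dir`, sorted by file name.

    An empty list suggests no CNI plugin has been installed on this node.
    """
    directory = Path(conf_dir)
    if not directory.is_dir():
        return []
    return sorted(
        p for p in directory.iterdir()
        if p.is_file() and p.suffix in CNI_CONF_SUFFIXES
    )


try:
    configs = find_cni_configs()
except PermissionError:
    configs = None  # the directory exists but this user cannot read it

if configs is None:
    print("Cannot read", DEFAULT_CNI_CONF_DIR, "(try running as root)")
elif configs:
    print("CNI configuration found:", [p.name for p in configs])
else:
    print("No CNI configuration found in", DEFAULT_CNI_CONF_DIR)
```

Run on a node, an empty result is a strong hint that pods scheduled there will not receive IP addresses until a plugin is installed.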

Choose a conformant CNI plugin

You don’t need to build networking yourself. Install a conformant CNI plugin to provide:

- Pod IP allocation and routing (overlay or routing-based).

- Service integration or kube-proxy replacement (iptables/IPVS or CNI-native service handling).

- NetworkPolicy enforcement.

Widely used options include:

- Cilium (eBPF-based, advanced network and security features): https://cilium.io/

- Calico (policy and routing features): https://projectcalico.org/

- Flannel (simple overlay networking; does not enforce NetworkPolicy on its own): https://github.com/flannel-io/flannel

Quick reference

| Resource / Component | Purpose | Example / Notes |
|---|---|---|
| Pod CIDR | IP address pool for pods | 172.16.0.0/16 |
| Service CIDR | IP range for ClusterIP services | 10.100.0.0/16 |
| kube-proxy | Implements Services (iptables/IPVS) on nodes | Can be replaced/augmented by some CNIs |
| NetworkPolicy | Declarative access control for pods | Enforcement depends on CNI |
| CNI plugin | Provides pod IP allocation, routing, and policy enforcement | Cilium, Calico, Flannel (Flannel alone does not enforce NetworkPolicy) |

Summary

- Pod CIDR: where pods receive their IP addresses.

- Service CIDR + kube-proxy (or CNI alternatives): provide stable ClusterIPs and load balancing.

- NetworkPolicy: expresses network access control but must be enforced by the CNI.

- Kubernetes requires a conformant CNI plugin to enable pod networking and policy enforcement—choose a plugin (Cilium, Calico, Flannel, etc.) that meets your networking and security requirements.
