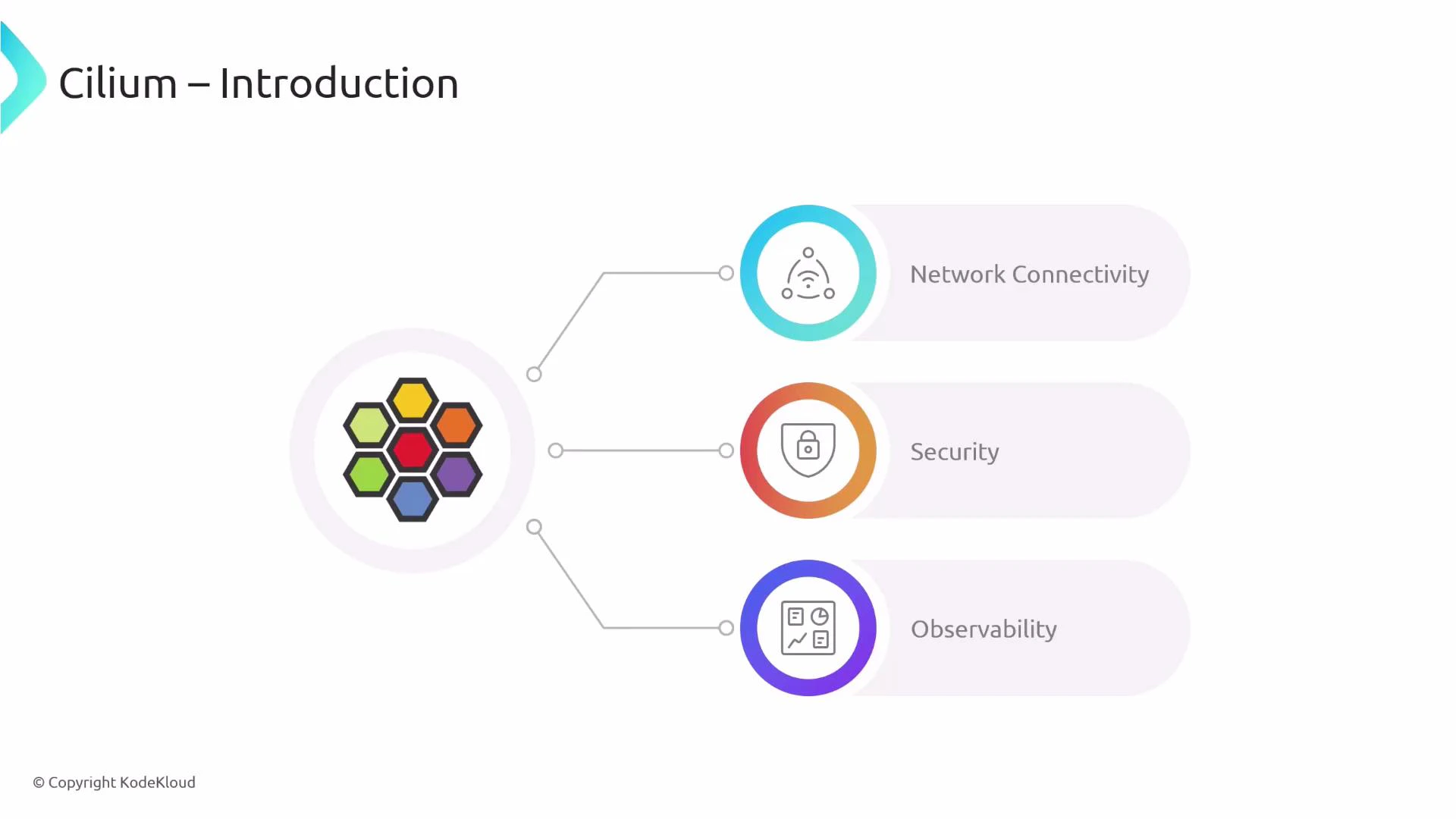

In this lesson we introduce Cilium: what it is, the capabilities it provides, and how it integrates into a Kubernetes environment. Cilium is an open-source, cloud-native networking, security, and observability platform for Kubernetes. Although commonly used as a CNI plugin, Cilium extends far beyond basic pod connectivity, leveraging eBPF to deliver high-performance packet processing, identity-based security, L7-aware policies, and rich traffic visibility.

At-a-glance feature matrix

| Feature category | Primary capabilities | Examples / integrations |
|---|---|---|
| Networking | Pod networking, L4/L7 load balancing, ingress/gateway, egress, multi-cluster | CNI plugin, Envoy for L7, Cluster Mesh, Gateway API |
| Security | L3/L4 NetworkPolicy, L7-aware CiliumNetworkPolicy (CNP), encryption | IPsec/WireGuard, mTLS, protocol-aware L7 rules (HTTP, Kafka, gRPC) |
| Observability | Flow visibility, metrics, tracing, troubleshooting | Hubble, Prometheus metrics, Grafana dashboards |
| Platform & performance | Kernel-accelerated datapath, kube-proxy replacement, QoS | eBPF-based datapath, service handling without kube-proxy |

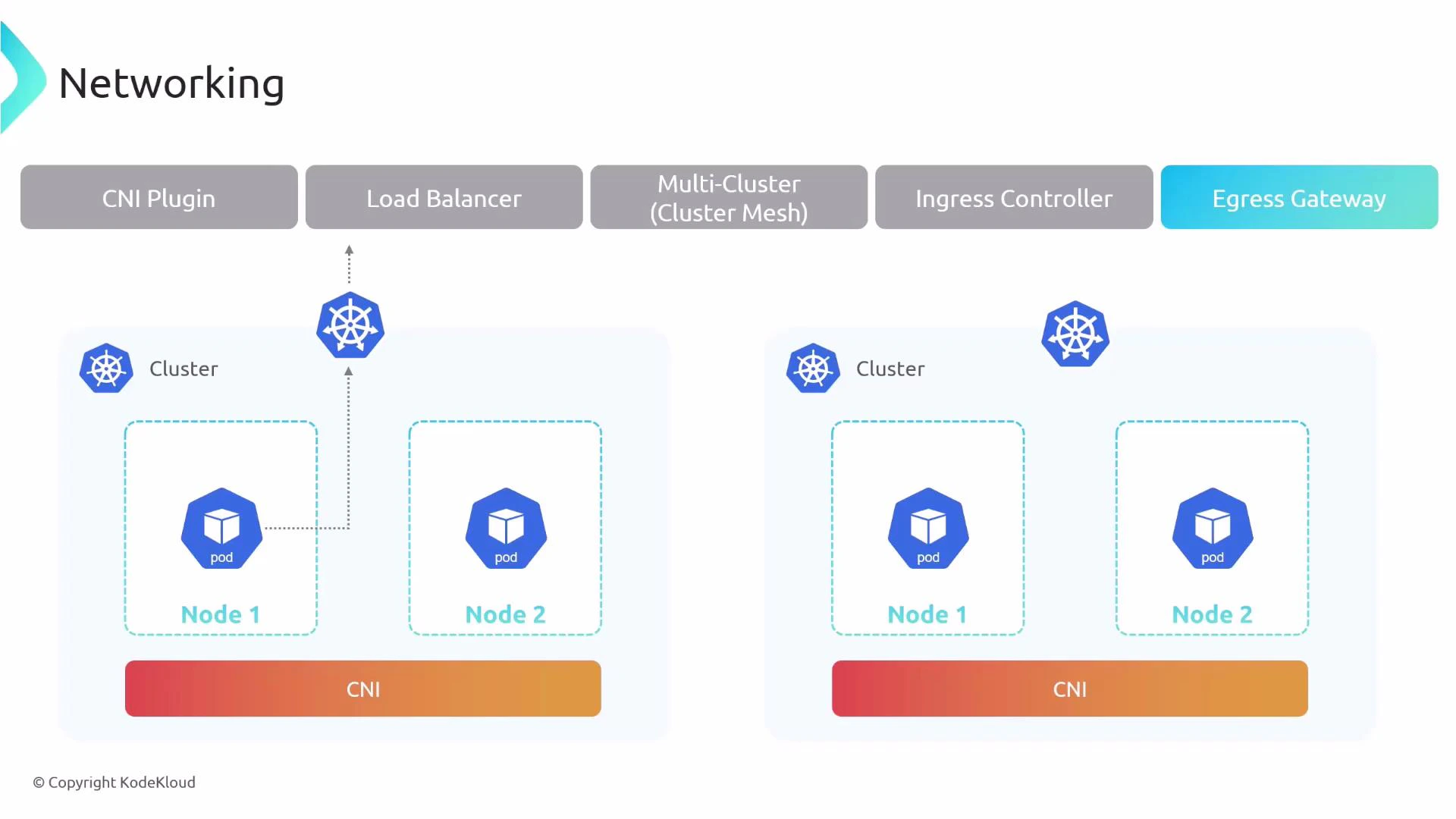

Networking features

Cilium can be installed as the cluster CNI to provide pod-to-pod networking. Beyond basic connectivity, Cilium implements additional networking components:

- CNI plugin: manages pod interfaces, routes, and connectivity.

- Load balancing: L4 load balancing plus L7 routing when integrated with an L7 proxy such as Envoy.

- Cluster Mesh: connect multiple Kubernetes clusters and expose global services with cross-cluster load balancing.

- Ingress Controller & API Gateway: Cilium can act as an ingress/gateway implementation (including Gateway API support and Envoy-based datapath), reducing the need for a separate ingress controller.

- Egress gateway: define and control how traffic leaves the cluster (specific node/IPs, NATing rules, egress policies).
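To make the L4 load-balancing idea concrete, here is a minimal Python sketch that maps a connection's 5-tuple to one backend by hashing it, so every packet of the same connection reaches the same pod. This is a toy model only: Cilium performs backend selection in its eBPF datapath with its own algorithms, and the `Flow` type, the `pick_backend` function, and all addresses below are hypothetical names chosen for illustration.

```python
import hashlib
from typing import NamedTuple


class Flow(NamedTuple):
    """The 5-tuple that identifies a connection at L4."""
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    protocol: str


def pick_backend(flow: Flow, backends: list[str]) -> str:
    """Map a flow to one backend by hashing its 5-tuple (illustrative only)."""
    if not backends:
        raise ValueError("service has no backends")
    key = "|".join(map(str, flow)).encode()
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes of the digest as an integer index into the list.
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]


# Hypothetical service with three backend pods.
backends = ["10.0.1.12:8080", "10.0.2.7:8080", "10.0.3.21:8080"]
flow = Flow("10.0.9.5", 41522, "10.96.0.10", 80, "TCP")

chosen = pick_backend(flow, backends)
print(chosen)
```

Because the choice depends only on the 5-tuple, repeated lookups for the same connection are stable. A plain modulo like this reshuffles many flows when the backend list changes, which is one reason production load balancers use more careful schemes.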

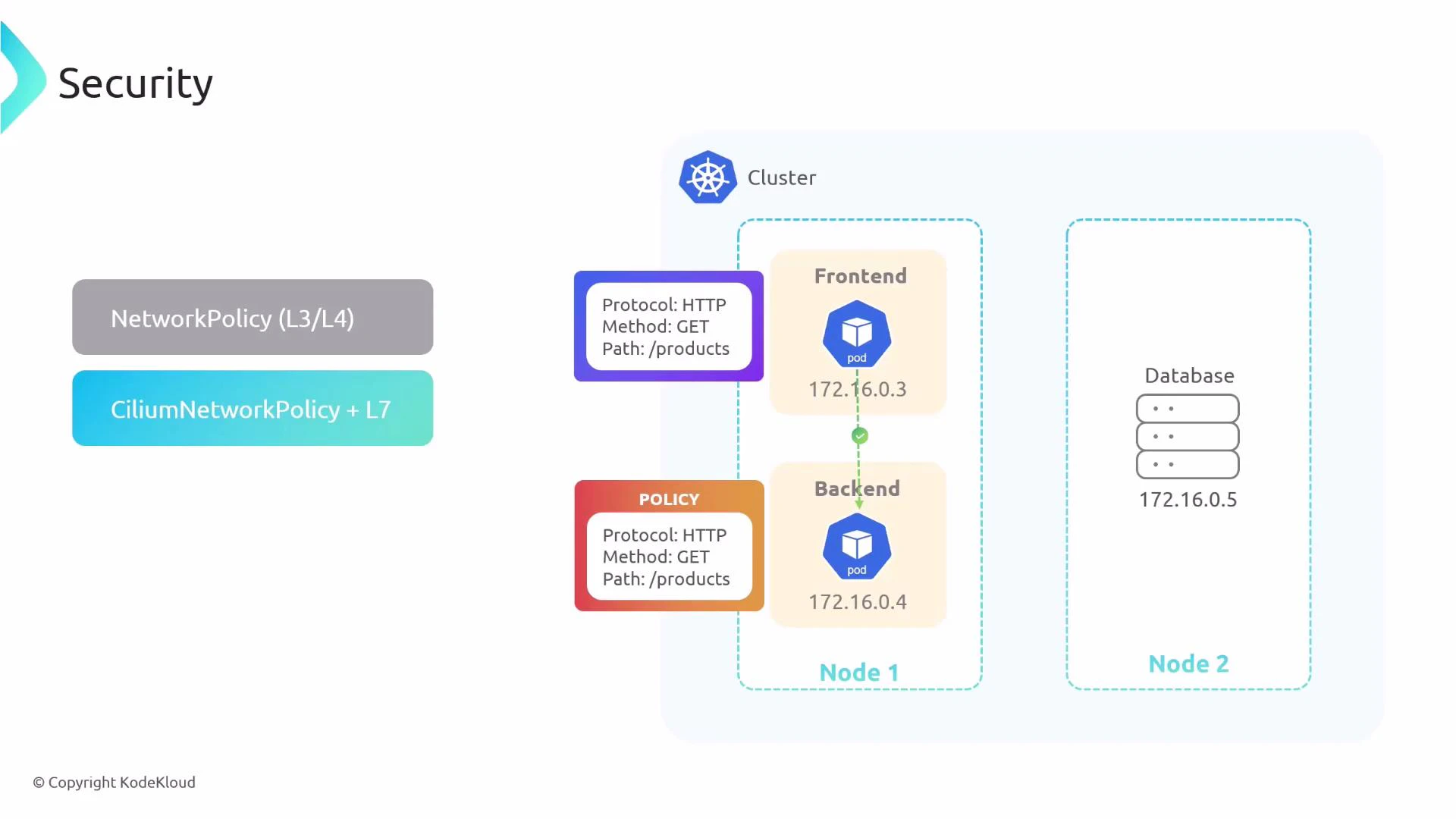

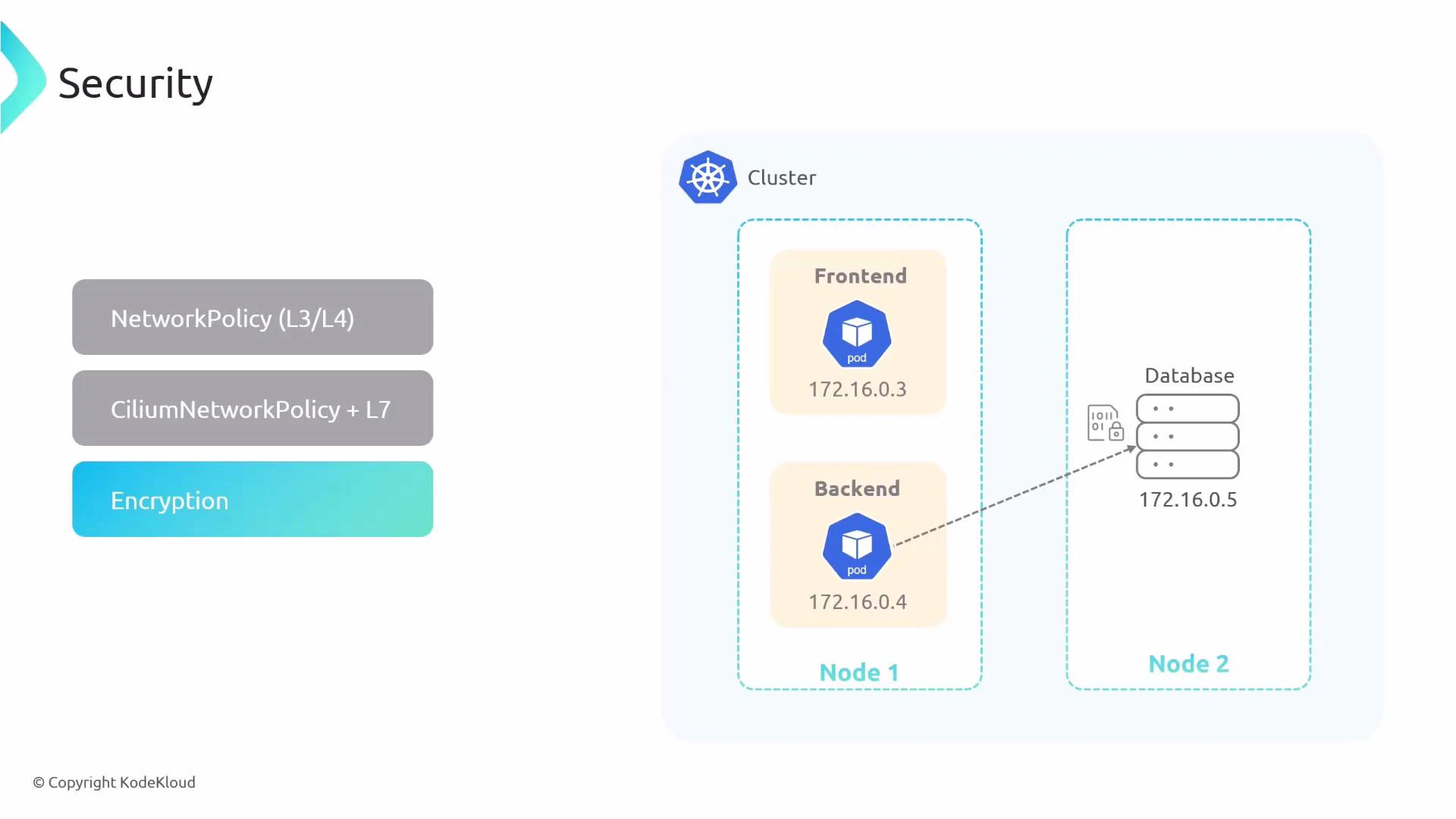

Security features

Cilium provides layered security that ranges from IP/port filtering to protocol-aware, application-layer rules:

- Kubernetes NetworkPolicy: L3/L4 enforcement (IP and port-based rules).

- CiliumNetworkPolicy (CNP): a richer CRD that supports L7 policies (HTTP, Kafka, gRPC, etc.) and identity-based rules (service account / labels).

- Encryption: optional pod-to-pod encryption using IPsec or WireGuard tunnels managed by Cilium.

- Identity-based policies: policies based on workload identity rather than ephemeral IP addresses, improving policy resilience and security posture.
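The difference between identity-based and IP-based rules can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Cilium's implementation: the `IdentityAllocator` class, the starting identity number, and the pod labels and IPs are all hypothetical. It shows only the core idea that a policy keyed on a label-derived identity keeps matching after a pod is rescheduled with a new IP.

```python
from dataclasses import dataclass, field


@dataclass
class IdentityAllocator:
    """Hands out one numeric identity per distinct label set (toy model)."""
    _ids: dict = field(default_factory=dict)
    _next_id: int = 1000  # arbitrary starting value for this sketch

    def identity_for(self, labels: dict) -> int:
        key = frozenset(labels.items())
        if key not in self._ids:
            self._ids[key] = self._next_id
            self._next_id += 1
        return self._ids[key]


alloc = IdentityAllocator()
frontend_id = alloc.identity_for({"app": "frontend"})
backend_id = alloc.identity_for({"app": "backend"})

# The policy is a set of (source identity, destination identity) pairs.
allowed = {(frontend_id, backend_id)}


def is_allowed(src_labels: dict, dst_labels: dict) -> bool:
    pair = (alloc.identity_for(src_labels), alloc.identity_for(dst_labels))
    return pair in allowed


# The same workload before and after a restart: the IP changes, labels do not.
pod_before = {"ip": "10.0.1.15", "labels": {"app": "frontend"}}
pod_after = {"ip": "10.0.4.88", "labels": {"app": "frontend"}}

print(is_allowed(pod_before["labels"], {"app": "backend"}))  # True
print(is_allowed(pod_after["labels"], {"app": "backend"}))   # True
print(is_allowed({"app": "backend"}, {"app": "frontend"}))   # False
```

An IP-based rule would have needed an update when the pod moved from `10.0.1.15` to `10.0.4.88`; the identity-based rule did not.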

CiliumNetworkPolicy enables protocol-aware L7 filtering (HTTP, Kafka, gRPC, etc.), allowing precise enforcement based on application semantics rather than just IPs and ports.
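As an illustration of an L7 rule, the following Python sketch builds a CiliumNetworkPolicy manifest as a plain dictionary and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The helper name `http_ingress_policy`, the policy name, and the `app` labels are hypothetical, and the field layout follows the `cilium.io/v2` schema as commonly documented; check it against the Cilium version you run.

```python
import json


def http_ingress_policy(name, target_labels, client_labels, port, method, path):
    """Build a CNP that allows one HTTP method/path from selected clients."""
    return {
        "apiVersion": "cilium.io/v2",
        "kind": "CiliumNetworkPolicy",
        "metadata": {"name": name},
        "spec": {
            # Pods this policy applies to.
            "endpointSelector": {"matchLabels": target_labels},
            "ingress": [
                {
                    # Identity-based source selection by label.
                    "fromEndpoints": [{"matchLabels": client_labels}],
                    "toPorts": [
                        {
                            "ports": [{"port": str(port), "protocol": "TCP"}],
                            # L7 rule: only this method and path are allowed.
                            "rules": {"http": [{"method": method, "path": path}]},
                        }
                    ],
                }
            ],
        },
    }


policy = http_ingress_policy(
    name="allow-frontend-get-public",
    target_labels={"app": "backend"},
    client_labels={"app": "frontend"},
    port=80,
    method="GET",
    path="/public",
)
print(json.dumps(policy, indent=2))
```

A standard Kubernetes NetworkPolicy could express only the port-80 part of this rule; the HTTP method and path constraints are what the L7-aware CRD adds.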

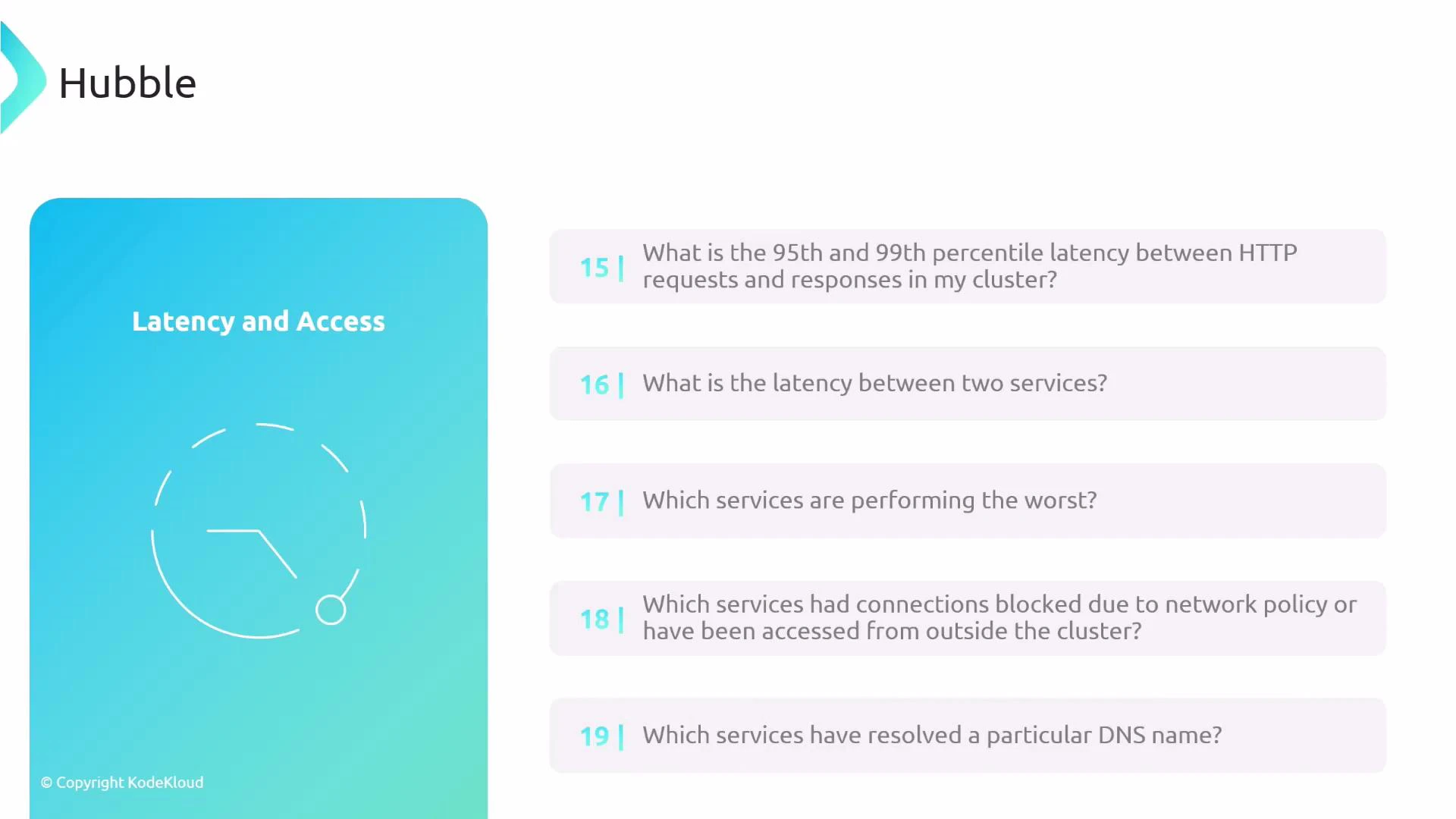

Observability: Hubble, metrics, and troubleshooting

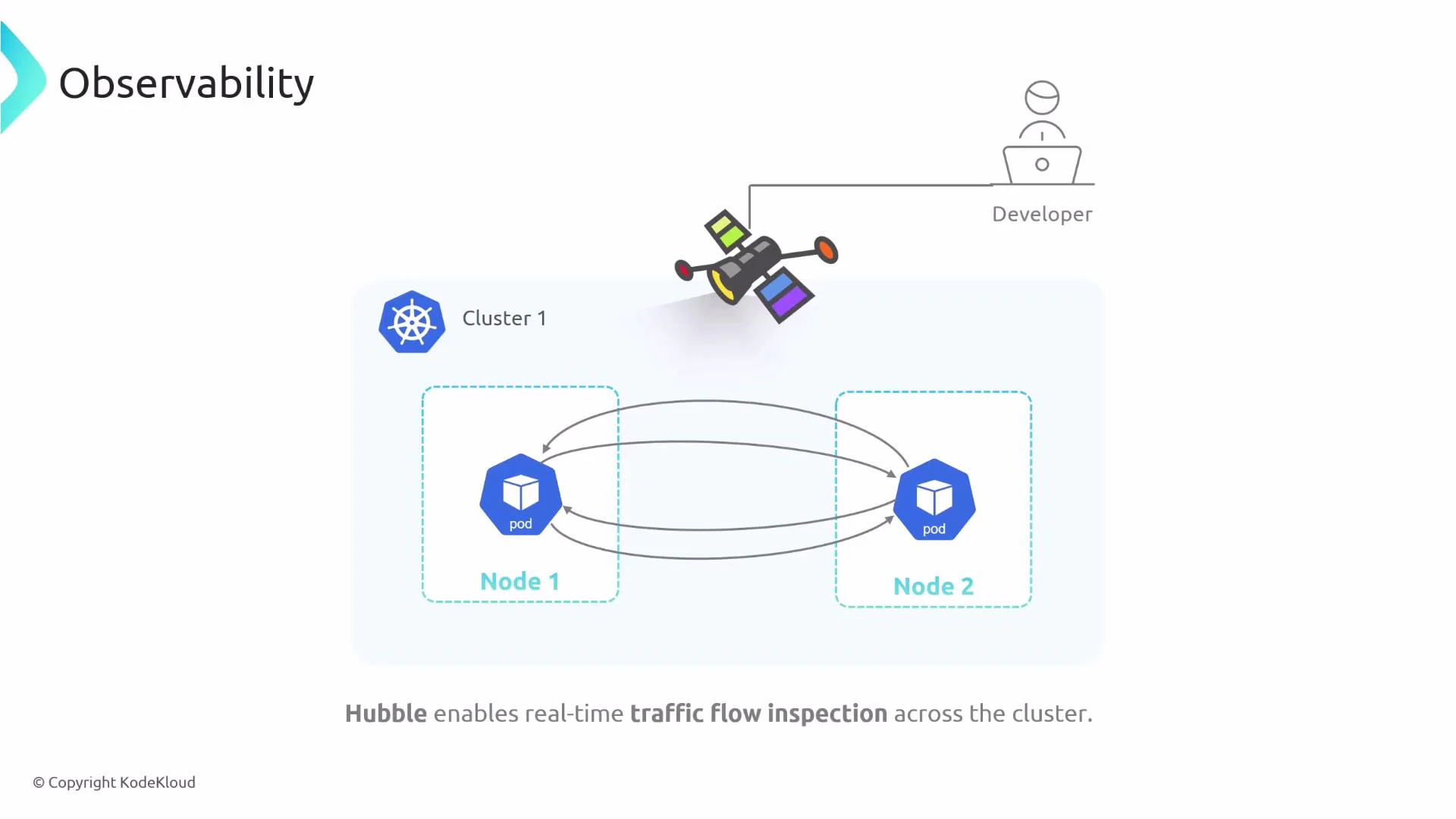

Cilium’s observability is centered on Hubble, which provides real-time flow visibility and troubleshooting telemetry.

- Hubble: flow-level insights, including who communicates with whom, which protocols and endpoints are used, events and errors, and allowed vs dropped flows.

- CLI and GUI: Hubble offers both a CLI and a web UI for interactive exploration and service-dependency graphs.

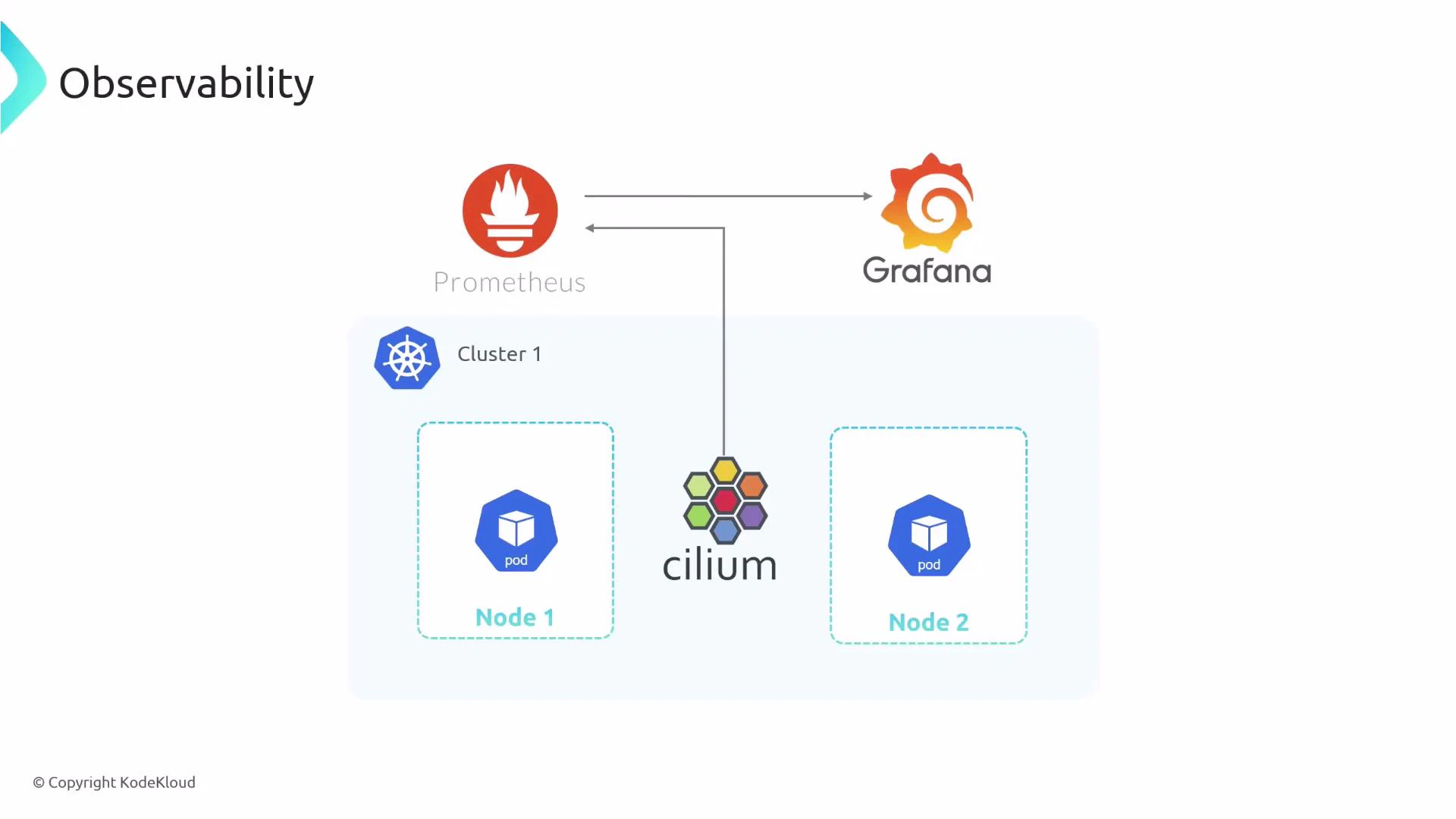

- Metrics: Cilium exports metrics that Prometheus can scrape; Grafana dashboards visualize these metrics for operational insight.

With this data you can answer questions such as:

- Which services are communicating and what HTTP calls are happening?

- Which Kafka topics are used by which services?

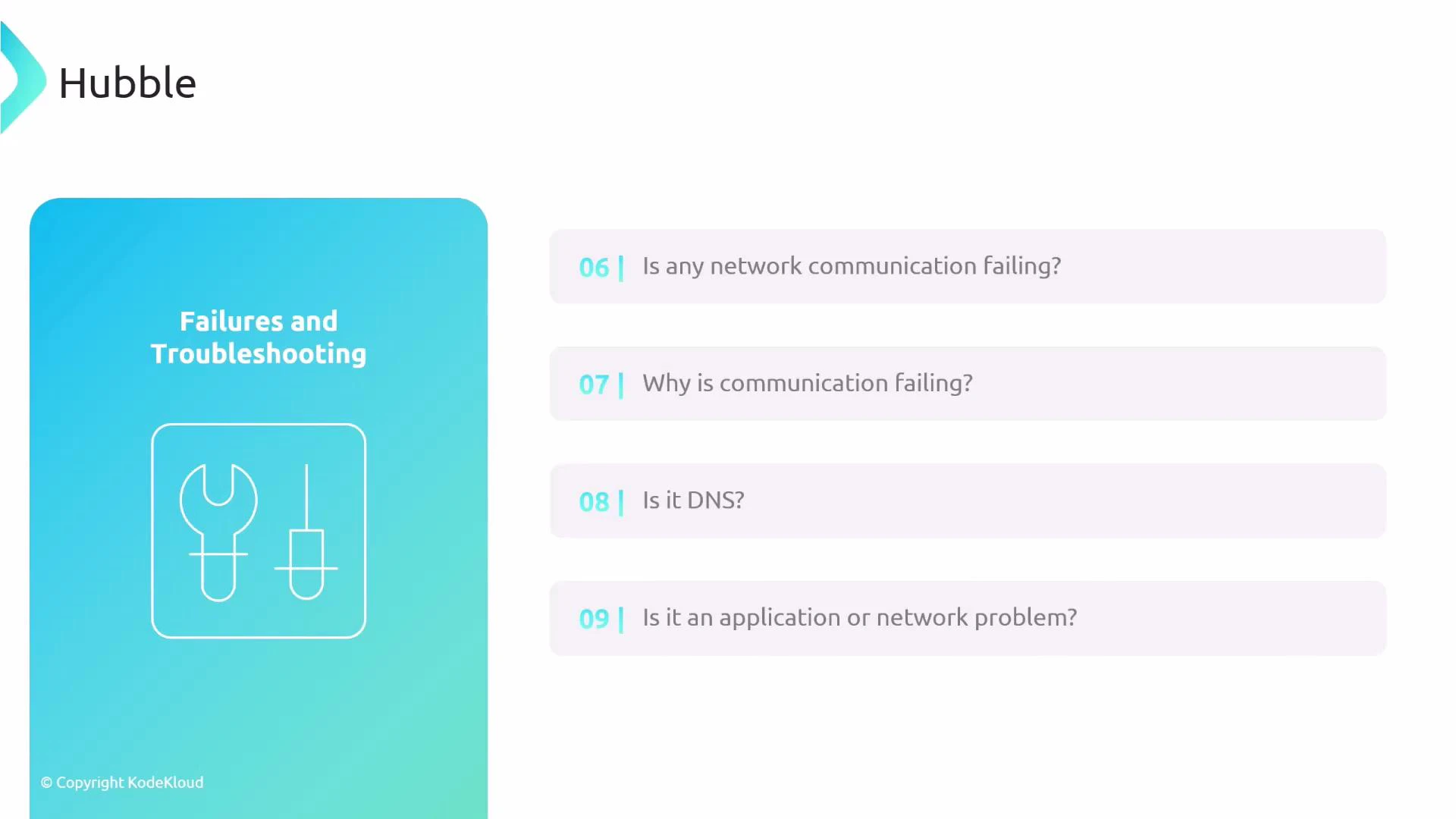

- Where are flows being dropped and why?

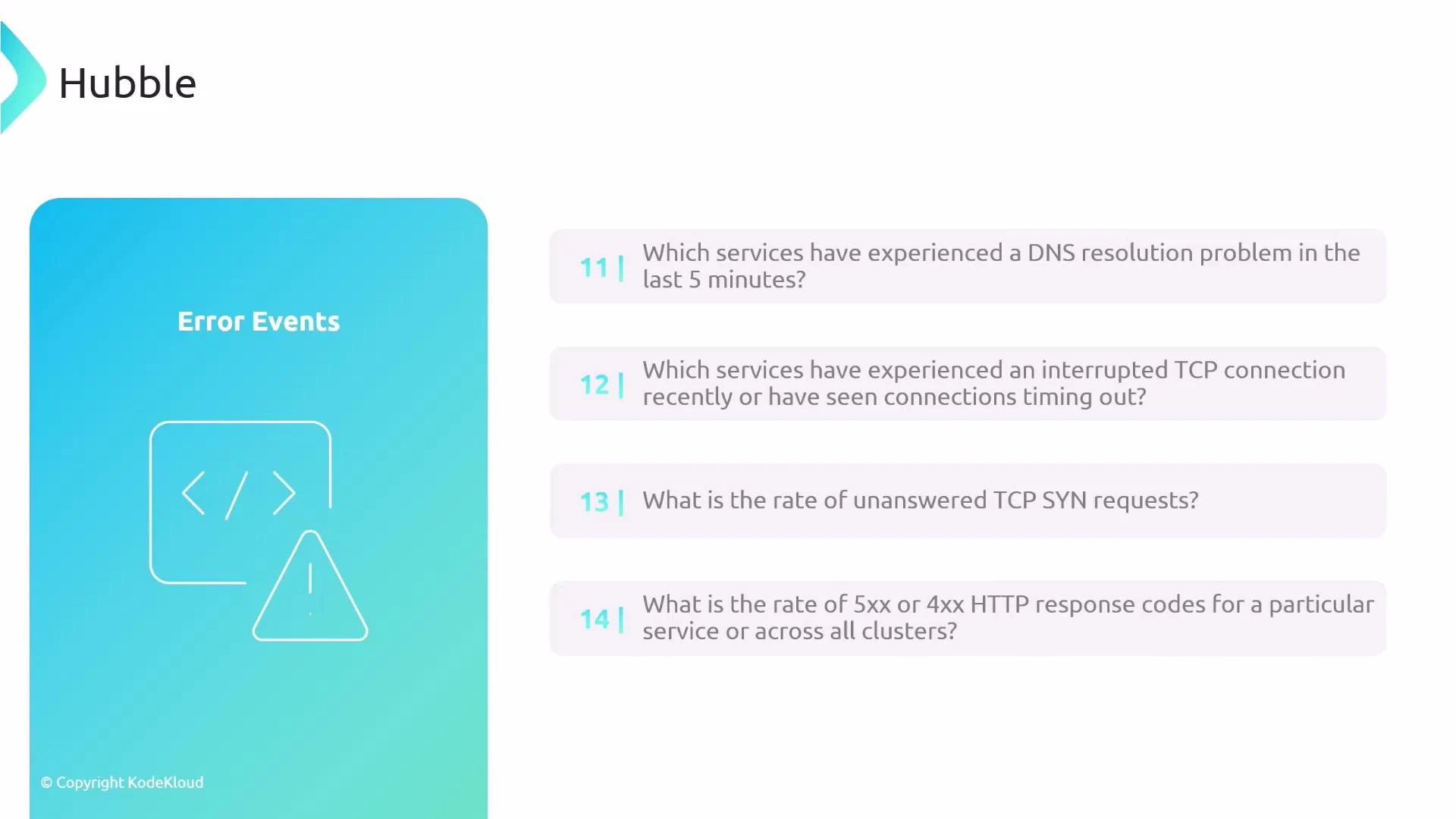

- Failure diagnosis: DNS resolution issues, interrupted TCP connections, unanswered TCP SYNs, HTTP 4xx/5xx spikes, and latency percentiles.
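The kind of question flow data answers can be sketched with a small aggregation. The records below are a simplified, hypothetical shape invented for this example (they are not Hubble's actual flow schema), and the function names are likewise illustrative. The sketch counts dropped flows per source, destination, and reason, and computes the share of HTTP responses that were 5xx.

```python
from collections import Counter

# Hypothetical, simplified flow records for illustration only.
flows = [
    {"source": "frontend", "destination": "backend", "verdict": "FORWARDED", "http_status": 200},
    {"source": "frontend", "destination": "backend", "verdict": "FORWARDED", "http_status": 503},
    {"source": "batch", "destination": "backend", "verdict": "DROPPED", "drop_reason": "policy denied"},
    {"source": "batch", "destination": "backend", "verdict": "DROPPED", "drop_reason": "policy denied"},
    {"source": "frontend", "destination": "kafka", "verdict": "FORWARDED"},
]


def dropped_by_pair(records):
    """Count dropped flows per (source, destination, reason)."""
    return Counter(
        (r["source"], r["destination"], r.get("drop_reason", "unknown"))
        for r in records
        if r["verdict"] == "DROPPED"
    )


def http_5xx_ratio(records):
    """Share of HTTP responses with a 5xx status; 0.0 if there are none."""
    statuses = [r["http_status"] for r in records if "http_status" in r]
    if not statuses:
        return 0.0
    return sum(1 for s in statuses if 500 <= s <= 599) / len(statuses)


drops = dropped_by_pair(flows)
print(drops.most_common())    # [(('batch', 'backend', 'policy denied'), 2)]
print(http_5xx_ratio(flows))  # 0.5
```

In this sample, every drop comes from `batch` talking to `backend` and is attributed to policy, which points at a missing allow rule rather than a network fault.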

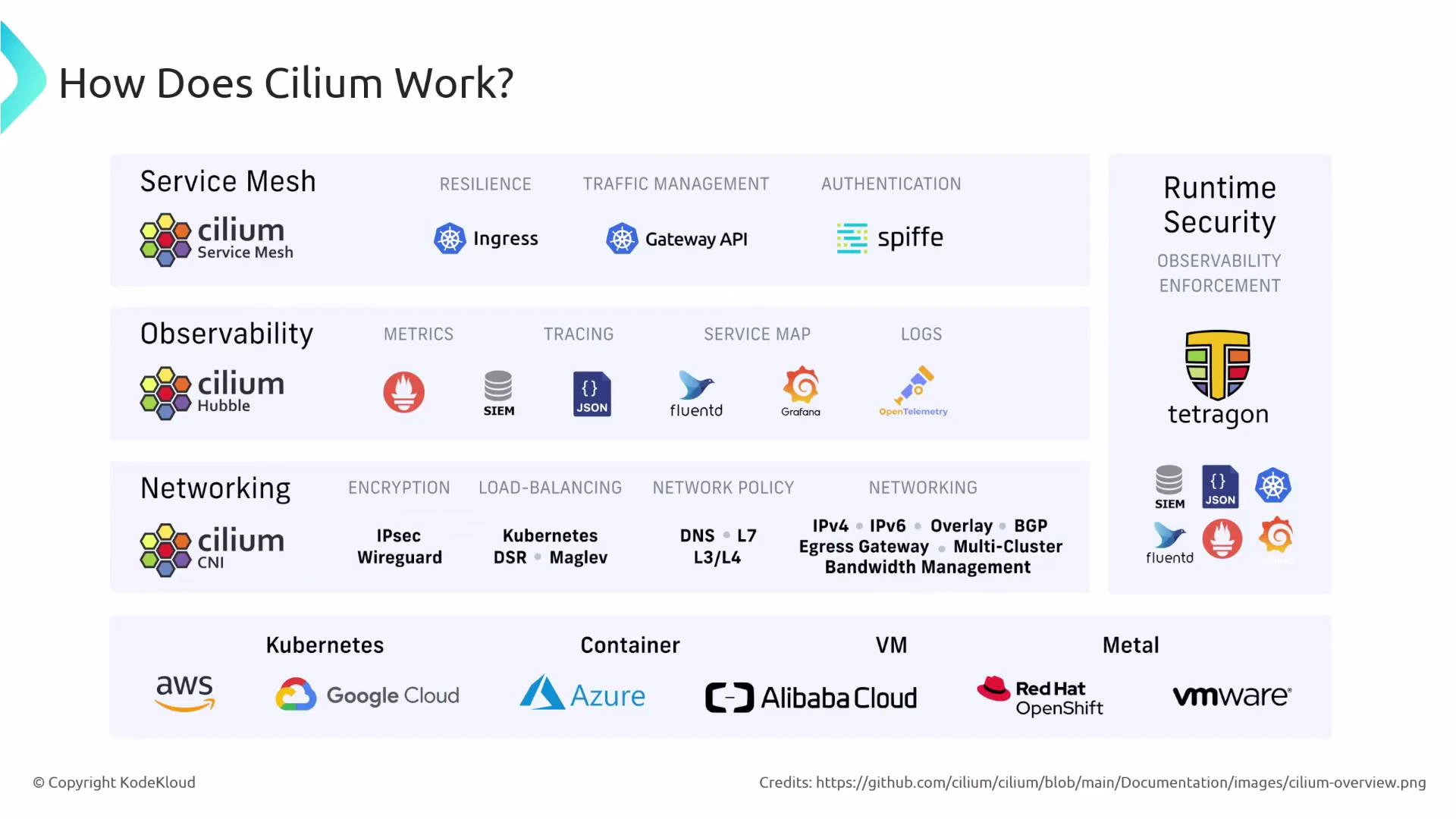

Cilium and service-mesh functionality

Many features provided by service meshes overlap with Cilium capabilities: resilient connectivity, L7 routing, identity-based security, ingress/gateway functionality, and observability/tracing. Cilium can therefore serve as or replace parts of a traditional service mesh by combining an Envoy-based datapath (or other proxies), authentication primitives, and Hubble-based observability.

- Networking: encryption, load balancing, network policy, IPv4/IPv6, overlays/BGP, multi-cluster, egress gateway.

- Observability: metrics, tracing, service maps, logs.

- Service mesh: ingress, Gateway API, authentication, L7 traffic management.

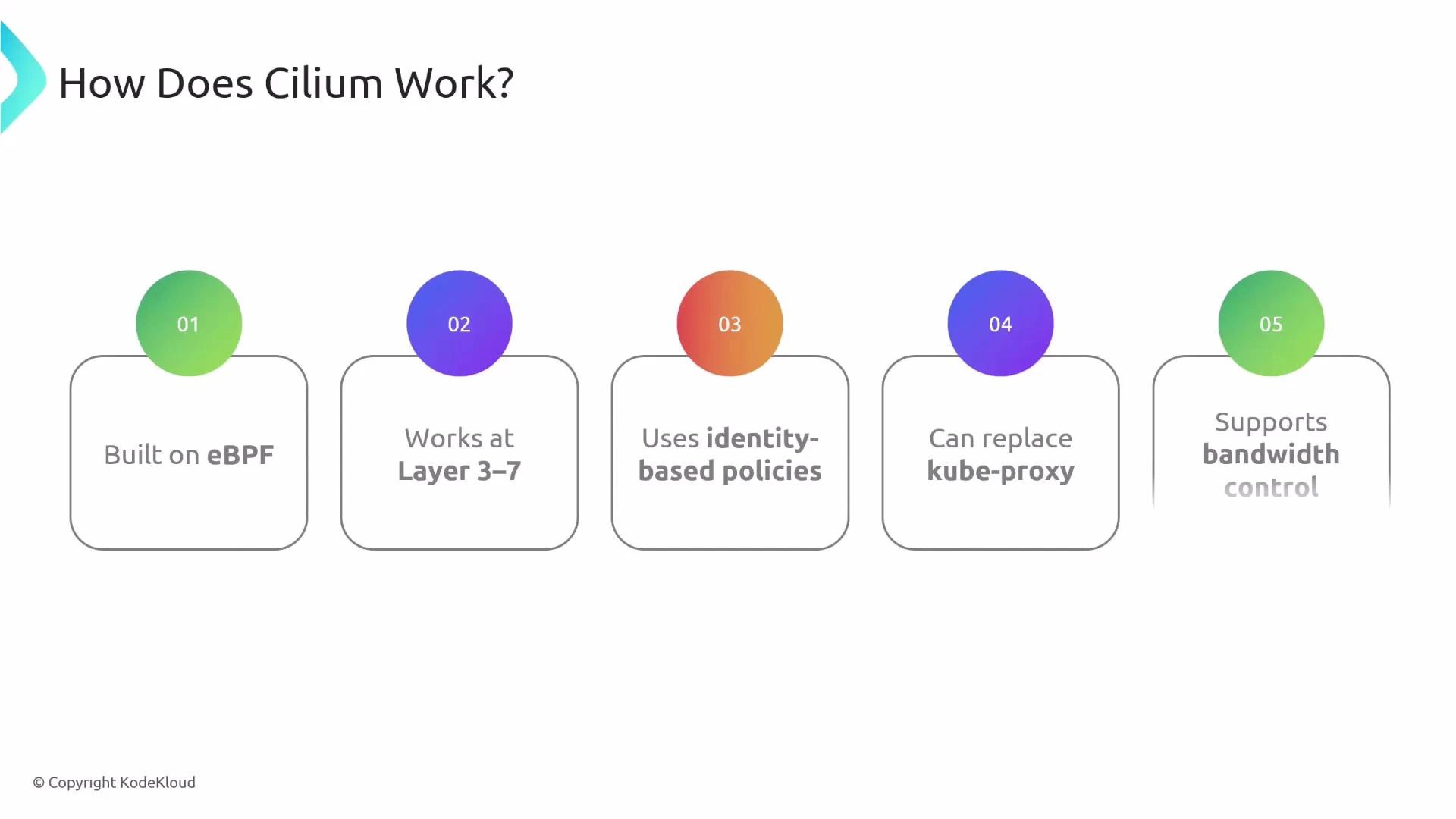

How Cilium works: eBPF and core concepts

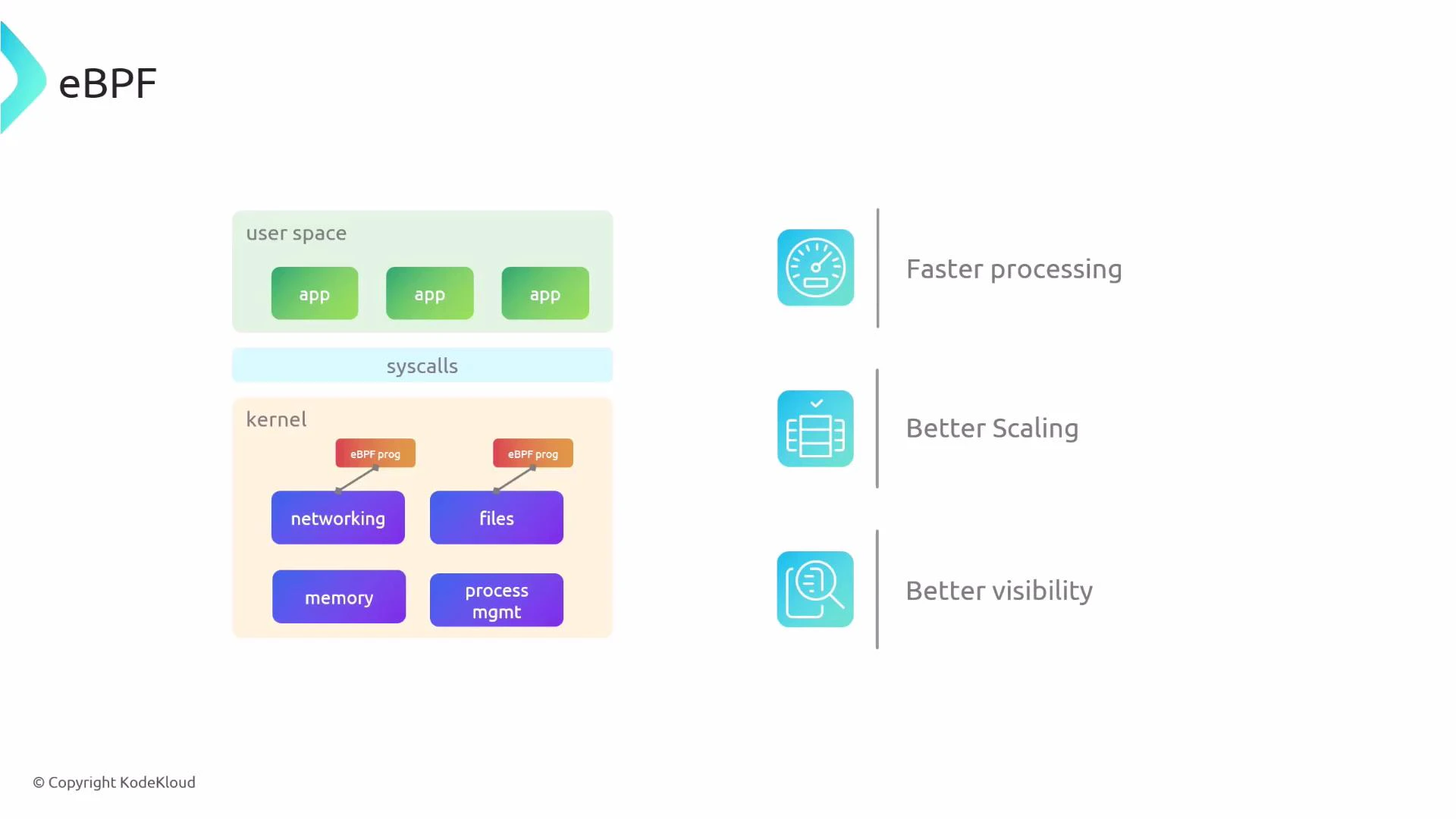

Cilium is built on eBPF (extended Berkeley Packet Filter), which enables in-kernel, sandboxed programs to perform networking, security, and observability tasks with low overhead. Key properties:

- eBPF-powered datapath for high-performance packet processing.

- Layer 3–7 capabilities: from IP routing to L7 protocol inspection.

- Identity-based policy enforcement (workload identity vs IP addresses).

- Can replace kube-proxy for service handling (kube-proxy replacement).

- Supports bandwidth control and Quality-of-Service (QoS) features.

How eBPF makes this possible:

- eBPF lets you run small, verified programs inside the kernel without changing kernel source code or loading kernel modules.

- eBPF programs attach to kernel hooks (networking, tracepoints, syscalls) and can inspect and act on packets, events, and system calls with minimal context switching.

- This in-kernel execution yields lower latency, better throughput, and richer visibility compared to user-space-only solutions.

Capabilities Cilium builds on this datapath:

- Kernel-accelerated packet routing and forwarding for the CNI.

- L4 and L7 load balancing with minimal overhead.

- NAT and encapsulation for overlay/underlay networking.

- Multi-cluster connectivity (Cluster Mesh) and cross-cluster service handling.

- mTLS/encryption and secure tunnels between endpoints.

- Enforcement of NetworkPolicy and CiliumNetworkPolicy with protocol-aware inspection.

- Real-time flow visibility, metrics, tracing, and logging.

Because eBPF runs inside the kernel, Cilium delivers low-latency, scalable packet processing and deep telemetry without requiring user-space proxies for many datapath operations.
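The "programs attached to hooks" model can be pictured with a user-space analogy in Python. This is only an analogy under loud assumptions: real eBPF programs are compiled to bytecode, checked by the kernel verifier, and run inside the kernel, none of which this sketch does. The hook name `ingress`, the `attach` and `run_hook` helpers, and the packet dictionary are all hypothetical.

```python
from collections import defaultdict
from typing import Callable

Packet = dict
Program = Callable[[Packet], str]  # each program returns "PASS" or "DROP"

# Registry of programs per hook point (stands in for kernel hook points).
hooks: dict = defaultdict(list)


def attach(hook: str, program: Program) -> None:
    """Attach a program to a named hook."""
    hooks[hook].append(program)


def run_hook(hook: str, packet: Packet) -> str:
    """Run every attached program in order; the first DROP wins."""
    for program in hooks[hook]:
        if program(packet) == "DROP":
            return "DROP"
    return "PASS"


seen = []


def record(packet: Packet) -> str:
    """Observability: note the destination port, never block."""
    seen.append(packet["dst_port"])
    return "PASS"


def drop_telnet(packet: Packet) -> str:
    """Security: drop traffic to port 23."""
    return "DROP" if packet["dst_port"] == 23 else "PASS"


attach("ingress", record)
attach("ingress", drop_telnet)

web_verdict = run_hook("ingress", {"dst_port": 80})
telnet_verdict = run_hook("ingress", {"dst_port": 23})
print(web_verdict, telnet_verdict, seen)  # PASS DROP [80, 23]
```

The point of the analogy is that visibility and enforcement happen at the same place the packet is processed, which is what lets an in-kernel datapath avoid a detour through a separate user-space process.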

Summary

Cilium is a comprehensive, eBPF-powered platform for Kubernetes networking, security, and observability:

- Acts as a CNI and provides L4/L7 load balancing, ingress/gateway, egress control, and multi-cluster features.

- Enforces Kubernetes NetworkPolicy and provides CiliumNetworkPolicy for protocol-aware L7 controls.

- Supports pod-to-pod encryption via IPsec or WireGuard.

- Hubble delivers flow-level observability and troubleshooting, integrating with Prometheus and Grafana.

- Built on eBPF for performance, scalability, and rich visibility.

- Can replace kube-proxy and provide many service-mesh capabilities, reducing the need for separate service-mesh layers in some deployments.

Links and references

- Cilium official site: https://www.cilium.io

- Hubble: https://www.cilium.io/features/hubble/

- eBPF: https://ebpf.io

- Envoy proxy: https://www.envoyproxy.io

- Prometheus: https://prometheus.io

- Grafana: https://grafana.com

- Kubernetes NetworkPolicy docs: https://kubernetes.io/docs/concepts/services-networking/network-policies/

- WireGuard: https://www.wireguard.com

- IPsec (Wikipedia): https://en.wikipedia.org/wiki/IPsec