In this lesson we cover Cluster Mesh fundamentals: what Cluster Mesh enables, required cluster prerequisites, how to configure and enable it in Cilium, how to connect clusters into a full mesh, and why KVStoreMesh improves Cluster Mesh scalability.

Cluster Mesh lets multiple Kubernetes clusters behave as a single multi-cluster network fabric by providing:

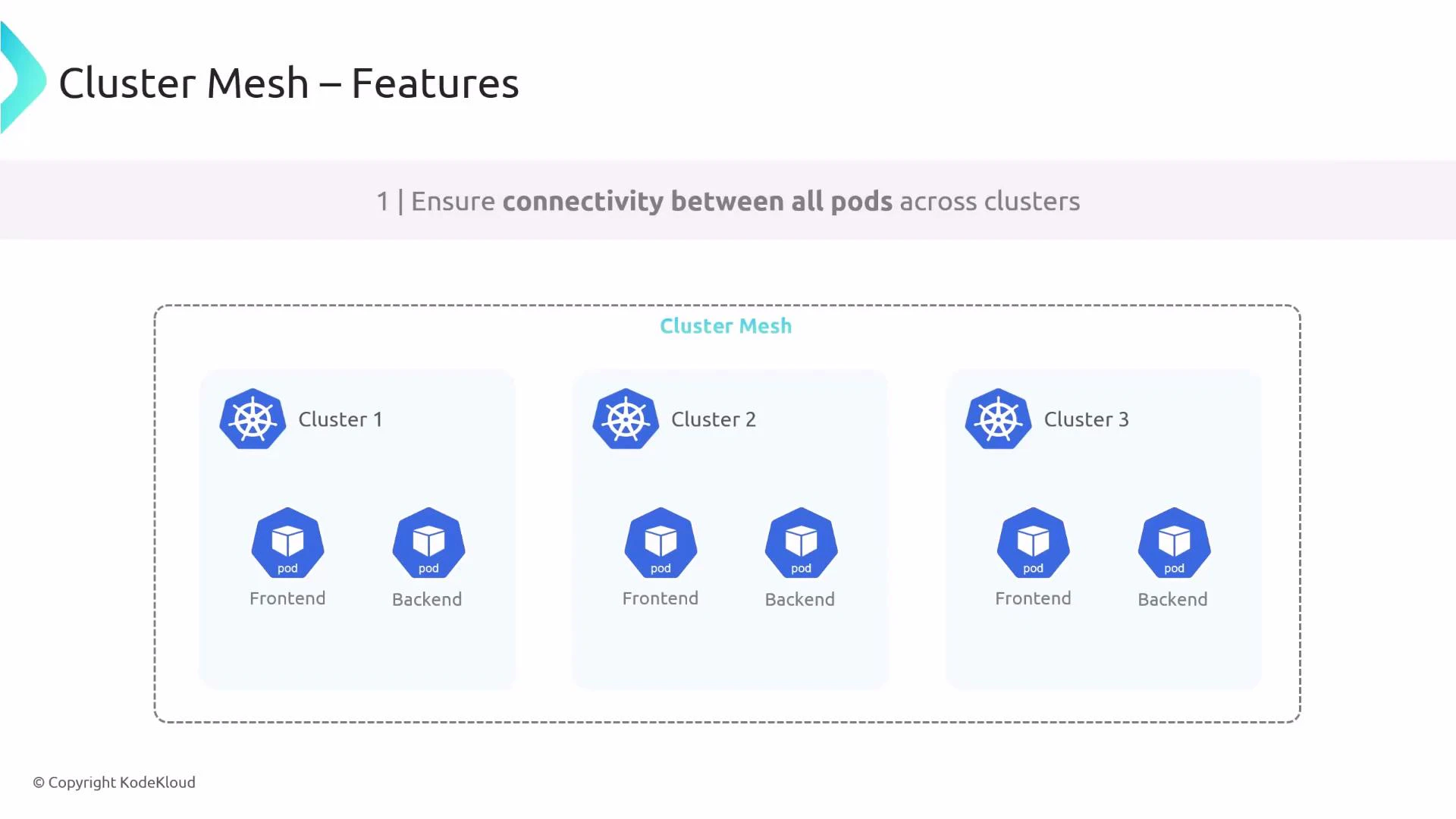

- Cross-cluster network connectivity (pods can talk across clusters).

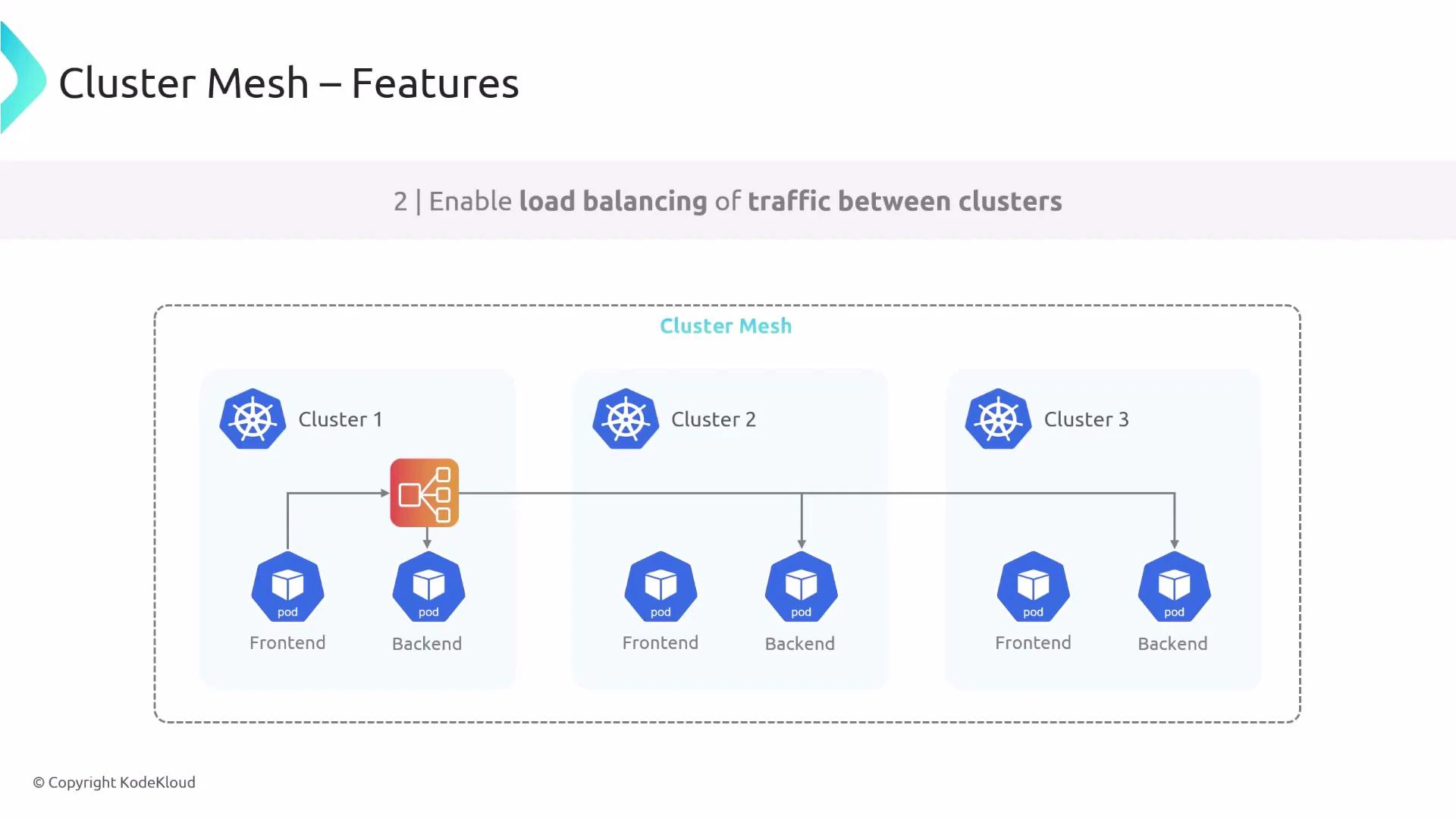

- Cross-cluster load balancing (services can balance across backends in other clusters).

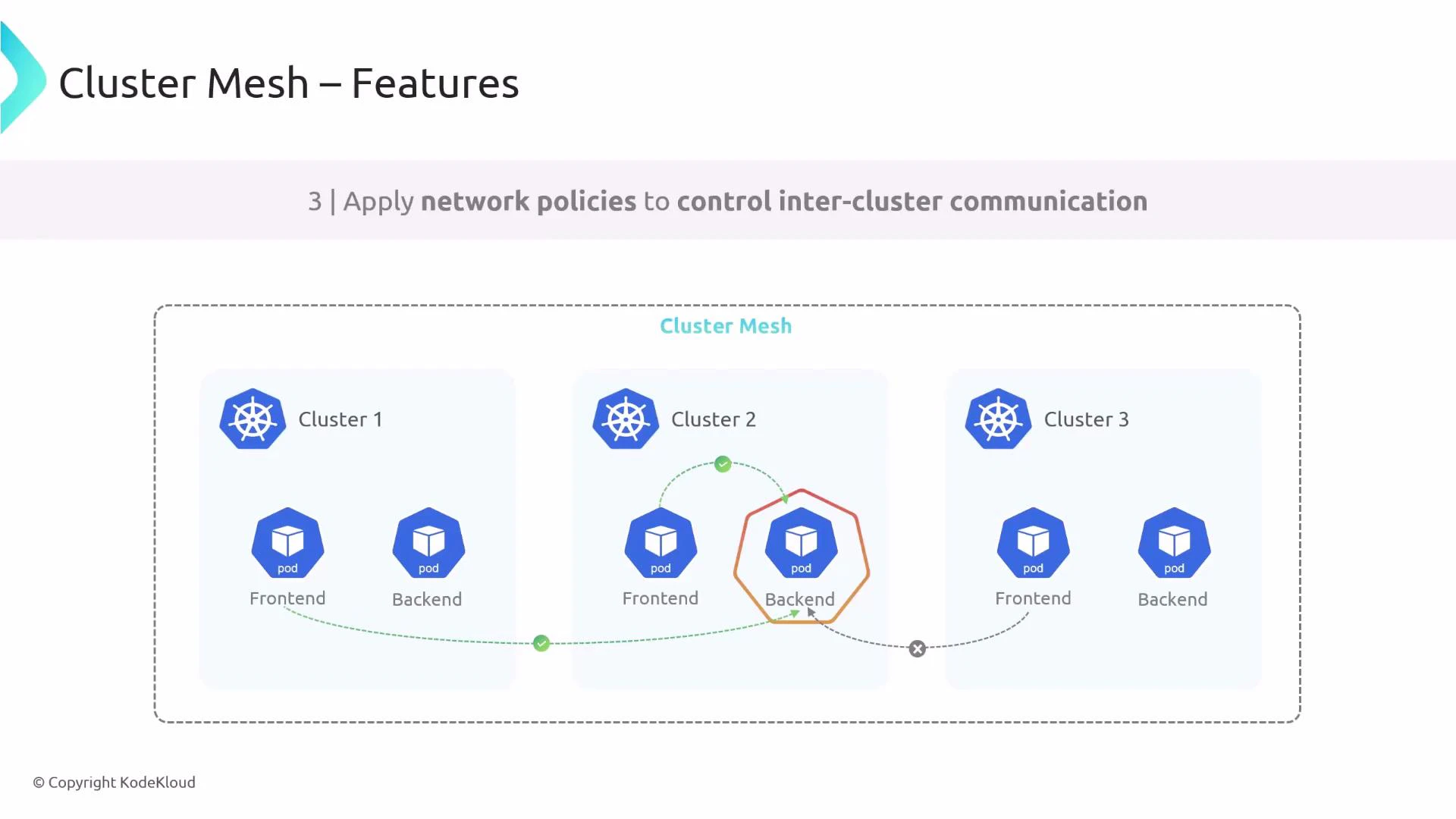

- Shared security controls (apply Kubernetes NetworkPolicies across clusters).

Prerequisites and requirements

Before joining clusters into a Cluster Mesh, verify the following requirements across all clusters:

| Requirement | Why it matters |
|---|---|
| Matching datapath mode | All clusters should use the same datapath (e.g., encapsulation/tunnel or native routing) to avoid connectivity and routing mismatches. |
| Non-overlapping Pod CIDRs | Pods in different clusters must use unique IP ranges to prevent address conflicts. |
| Full node-to-node IP connectivity | Nodes across clusters must be able to reach each other, directly or through a suitable networking fabric, for cross-cluster traffic and service access. |
| Unique cluster identifiers | Each cluster needs a unique cluster name and integer ID in its Cilium configuration to avoid collisions. |
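As a quick pre-flight check for the non-overlapping Pod CIDR requirement, the following minimal sketch compares two IPv4 CIDRs using plain shell arithmetic. The function names (`ip_to_int`, `cidr_range`, `cidrs_overlap`) and the example ranges are illustrative assumptions, not part of Cilium, and IPv6 is not handled.

```shell
# Convert a dotted-quad IPv4 address to an integer.
# Runs in a subshell so the IFS change does not leak to the caller.
ip_to_int() (
  IFS=.
  set -- $1   # split the address on dots into $1..$4
  echo "$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))"
)

# Print the first and last address of a CIDR as two integers.
cidr_range() (
  addr=${1%/*}
  prefix=${1#*/}
  base=$(ip_to_int "$addr")
  mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
  start=$(( base & mask ))
  end=$(( start | (4294967295 - mask) ))
  echo "$start $end"
)

# Succeed (exit 0) if the two CIDRs share at least one address.
cidrs_overlap() {
  set -- $(cidr_range "$1") $(cidr_range "$2")   # start1 end1 start2 end2
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

# Usage (hypothetical ranges):
#   cidrs_overlap 10.1.0.0/16 10.2.0.0/16 && echo "conflict" || echo "ok"
```

Two ranges overlap exactly when each one starts no later than the other ends, which is the comparison `cidrs_overlap` performs.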

When configuring Cilium on each cluster:

- Provide unique IPv4 Pod CIDR ranges per cluster, and unique IPv6 ranges as well if IPv6 is enabled.

- Give each cluster a unique name and integer ID.
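One way to apply these per-cluster settings is at install time. The sketch below wraps `cilium install` with the Helm values `cluster.name` and `cluster.id`. The wrapper name `install_cilium`, the context names, and the CIDRs in the usage comment are hypothetical; the `ipam.operator.clusterPoolIPv4PodCIDRList` value assumes cluster-pool IPAM, and the 1 to 255 ID check assumes Cilium's default ID range, so verify both against your version.

```shell
# Install Cilium into one cluster with a unique mesh identity.
# Arguments: <kube-context> <cluster-name> <cluster-id> <pod-cidr>
install_cilium() {
  ctx=$1
  name=$2
  id=$3
  pod_cidr=$4

  # Assumption: cluster IDs are integers between 1 and 255 by default.
  if [ "$id" -lt 1 ] || [ "$id" -gt 255 ]; then
    echo "cluster id must be in 1..255, got: $id" >&2
    return 1
  fi

  cilium install --context "$ctx" \
    --set cluster.name="$name" \
    --set cluster.id="$id" \
    --set ipam.operator.clusterPoolIPv4PodCIDRList="$pod_cidr"
}

# Usage (hypothetical contexts and ranges):
#   install_cilium cluster1 cluster1 1 10.1.0.0/16
#   install_cilium cluster2 cluster2 2 10.2.0.0/16
```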

Enabling Cluster Mesh (Cilium)

After deploying Cilium on each cluster, enable Cluster Mesh with the `cilium clustermesh enable` command. If an appropriate service type cannot be determined automatically for your environment, supply one explicitly with `--service-type`. For example, to enable Cluster Mesh on two clusters with the LoadBalancer service type, run the command once per cluster context with `--service-type LoadBalancer`.

Connecting clusters into a mesh

After enabling Cluster Mesh on each cluster, establish mesh connections using `cilium clustermesh connect`, providing the source and destination kubeconfig contexts (`--context` and `--destination-context`).
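The enable and connect steps can be sketched together as small shell helpers. The helper names (`enable_mesh`, `connect_full_mesh`, `mesh_status`) and the context names in the usage comment are hypothetical. The sketch assumes a recent `cilium` CLI in which a single `connect` call establishes the connection in both directions, so each pair of clusters is connected only once.

```shell
# Enable Cluster Mesh in one cluster, exposing its API server through the
# given service type (LoadBalancer if none is given).
enable_mesh() {
  cilium clustermesh enable --context "$1" --service-type "${2:-LoadBalancer}"
}

# Connect every pair of the given contexts exactly once (full mesh).
connect_full_mesh() {
  while [ "$#" -gt 1 ]; do
    src=$1
    shift
    for dst in "$@"; do
      cilium clustermesh connect --context "$src" --destination-context "$dst"
    done
  done
}

# Wait until Cluster Mesh reports ready in one cluster.
mesh_status() {
  cilium clustermesh status --context "$1" --wait
}

# Usage (hypothetical contexts):
#   for ctx in cluster1 cluster2 cluster3; do enable_mesh "$ctx"; done
#   connect_full_mesh cluster1 cluster2 cluster3
#   for ctx in cluster1 cluster2 cluster3; do mesh_status "$ctx"; done
```

For N clusters this issues N(N-1)/2 connect calls, for example three calls for three clusters.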

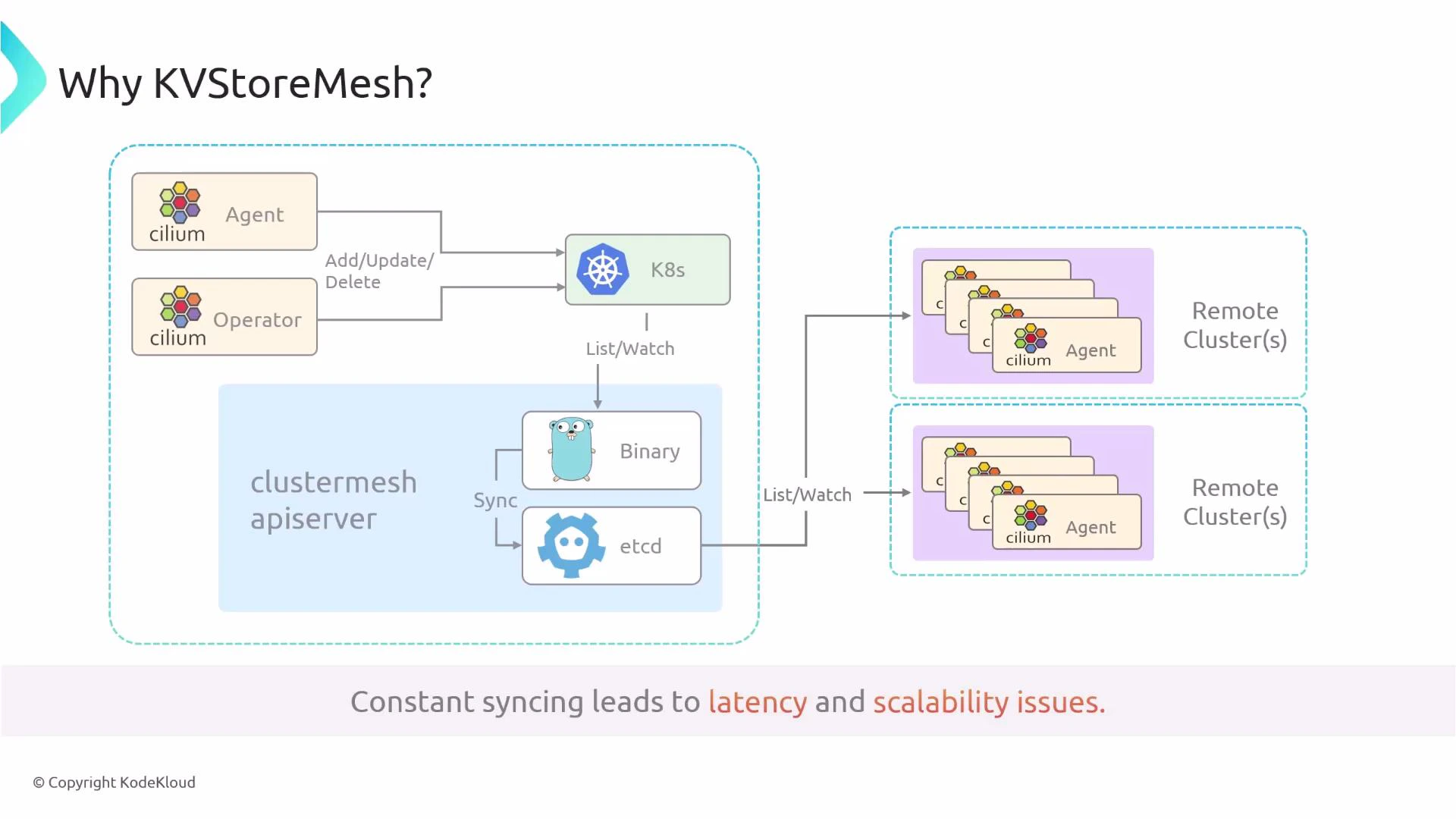

KVStoreMesh — purpose and design

KVStoreMesh is a design improvement introduced to scale Cluster Mesh. Understanding its role helps when planning and troubleshooting multi-cluster environments.

Original design challenges:

- Each Cilium agent/operator wrote resources (Services, CiliumNodes, identities, endpoints) into the cluster Kubernetes API.

- The Cluster Mesh API server would watch the Kubernetes API and sync that data into a central etcd.

- Remote agents had to watch many remote etcd instances; at scale this caused high synchronization load, increased latency, and heavy etcd pressure.

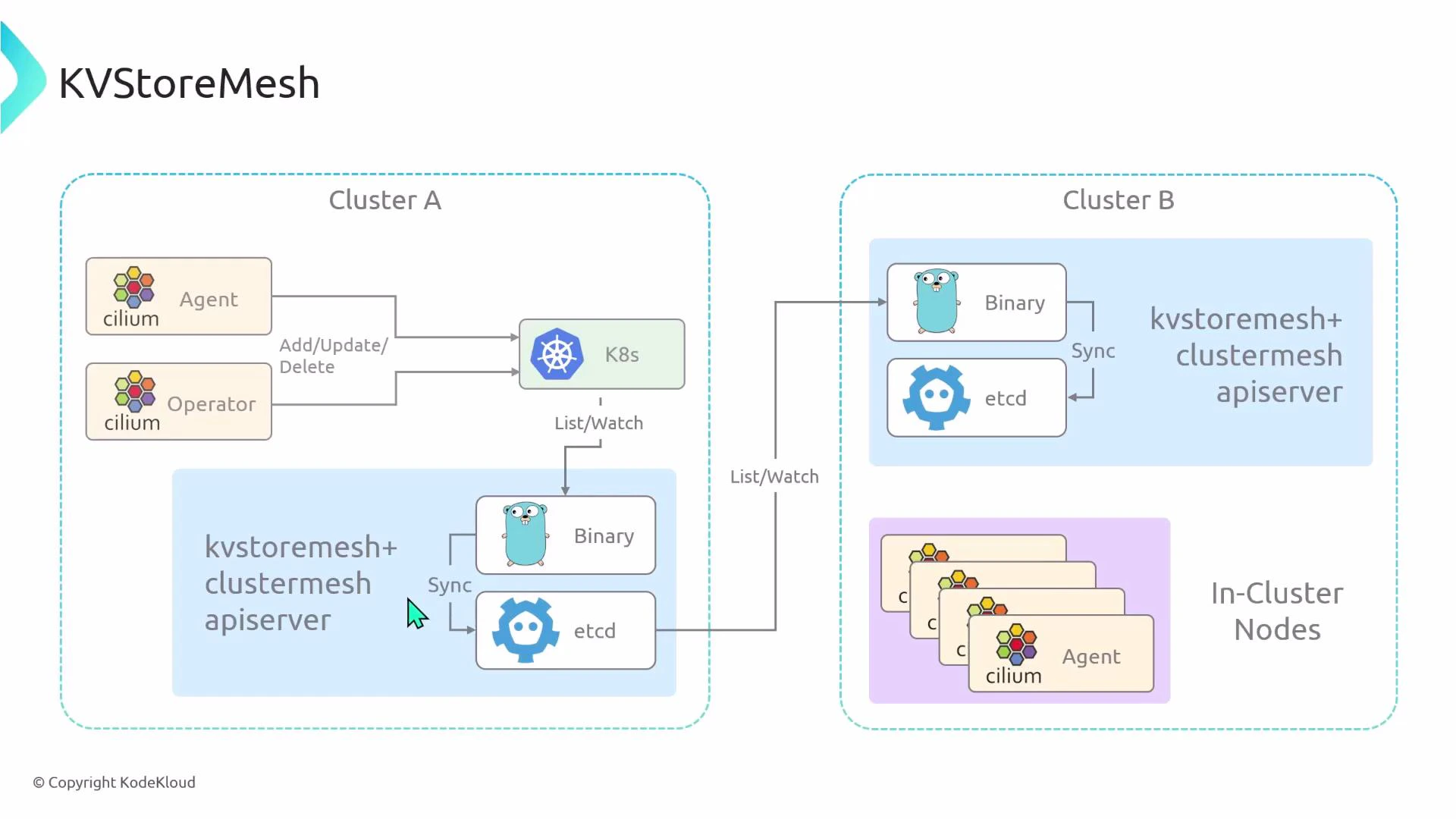

KVStoreMesh design:

- Each cluster runs a local KVStoreMesh binary and a local etcd instance containing mesh-wide state.

- Cilium agents sync only with the local KV store.

- KVStoreMesh instances synchronize state between clusters, reducing the number of remote endpoints that each agent must watch.
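To make the scaling argument concrete, here is a back-of-the-envelope model in shell arithmetic. It is a simplification under stated assumptions: every cluster has the same number of nodes, there is one Cilium agent per node and one KVStoreMesh instance per cluster, and replicas and other etcd clients are ignored. The function names are hypothetical.

```shell
# Watchers on one cluster's etcd in the original design:
# every agent in every other cluster connects to it.
# Arguments: <number-of-clusters> <nodes-per-cluster>
watchers_without_kvstoremesh() {
  clusters=$1
  nodes=$2
  echo "$(( (clusters - 1) * nodes ))"
}

# Watchers on one cluster's etcd with KVStoreMesh:
# one KVStoreMesh instance per remote cluster, plus the local agents.
watchers_with_kvstoremesh() {
  clusters=$1
  nodes=$2
  echo "$(( (clusters - 1) + nodes ))"
}

# Example: 10 clusters of 100 nodes each.
watchers_without_kvstoremesh 10 100   # prints 900
watchers_with_kvstoremesh 10 100      # prints 109
```

In the same model, each agent goes from watching one remote store per other cluster to watching only its local store.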

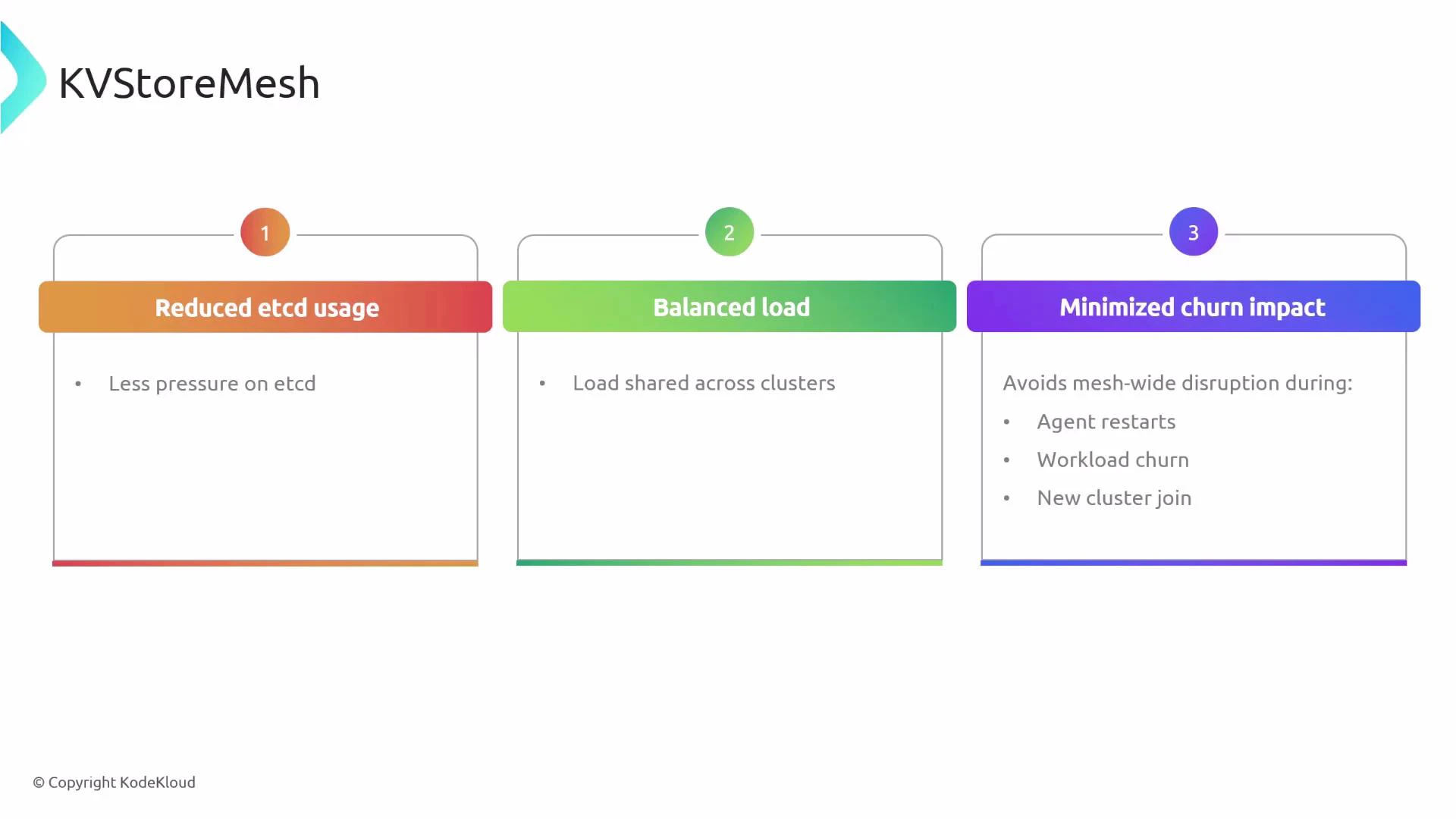

Benefits:

- Reduced overall etcd usage and pressure.

- More balanced load across cluster KV stores.

- Lower impact from agent restarts, workload churn, or new clusters joining the mesh.

KVStoreMesh is enabled by default. If you must disable it for compatibility reasons, pass `--enable-kvstoremesh=false` when enabling Cluster Mesh.

Summary

This article covered:

- What Cluster Mesh provides: cross-cluster connectivity, cross-cluster load balancing, and shared network policies.

- Key prerequisites: matching datapath, unique Pod CIDRs, full node connectivity, and unique cluster IDs.

- Example Cilium per-cluster configuration snippets.

- How to enable Cluster Mesh and verify the Cluster Mesh API service.

- How to connect clusters and validate mesh status.

- Why KVStoreMesh improves scalability and how to enable/disable it.