In this lesson we’ll explain what a service mesh is, why the pattern exists, and the problems it solves in modern microservices architectures.

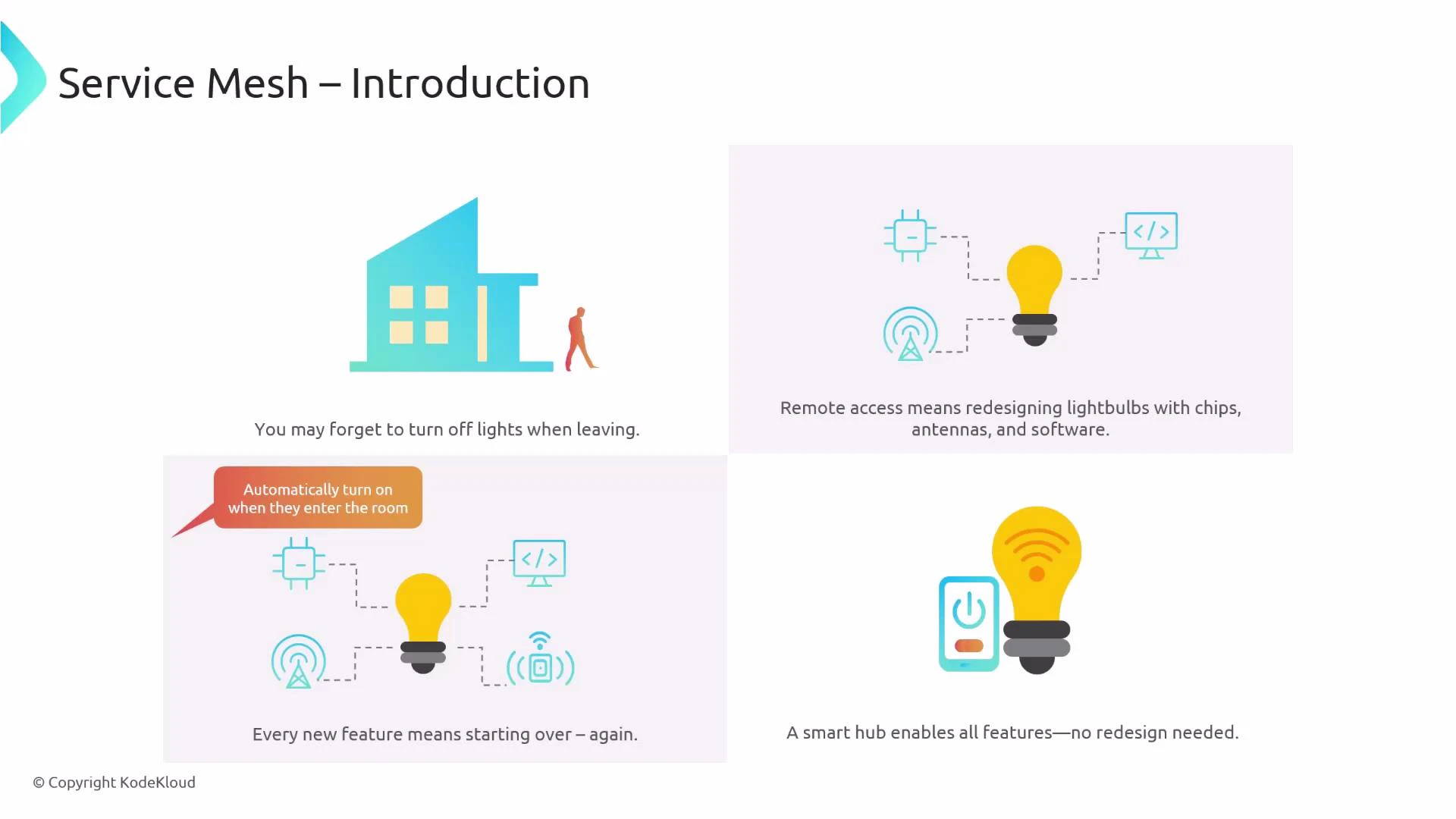

Why a service mesh was created (an analogy)

Imagine you leave home and forget to turn off the lights. One option is to redesign every light bulb to include networking chips, firmware, and antennas so each bulb can be controlled remotely. That works, but it’s costly and impractical to repeat for every new feature. A simpler pattern is a smart socket (a hub) that provides Wi‑Fi, presence detection, and voice integration. Any plain bulb screwed into that socket instantly inherits those capabilities without being redesigned.

- Light bulbs = applications/services.

- Smart socket = service mesh / infrastructure layer that provides common features (connectivity, security, observability).

- Moving features into the socket avoids redesigning each bulb; moving cross‑cutting concerns out of application code avoids changing every service.

The challenge in microservices

Consider two services: a Python service and a Go service. If you need mutual TLS (mTLS) for secure service‑to‑service communication and you implement it in-app, every team must:

- Add language-specific libraries,

- Modify application code,

- Maintain integration over time.
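To make the in-app burden concrete, here is a minimal sketch of what the Python team alone might have to write, using only the standard-library `ssl` module. The function name and the file-path parameters are hypothetical, and the paths are optional only so the sketch runs without certificate files on disk; a real service would always load them. The Go team would need an equivalent written against Go's own TLS library.

```python
import ssl
from typing import Optional


def build_mtls_server_context(
    cert_file: Optional[str] = None,  # hypothetical path to this service's certificate
    key_file: Optional[str] = None,   # hypothetical path to this service's private key
    ca_file: Optional[str] = None,    # hypothetical path to the CA that signs peer certificates
) -> ssl.SSLContext:
    """Build a server-side TLS context that also demands a client certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # CERT_REQUIRED on a server context is what makes the TLS handshake "mutual".
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cert_file and key_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if ca_file:
        ctx.load_verify_locations(cafile=ca_file)
    return ctx
```

Even this small helper leaves certificate issuance, rotation, and distribution unsolved, which is part of the maintenance cost listed above.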

What a service mesh does

A service mesh provides a shared infrastructure layer that handles cross‑cutting concerns like:

- Encryption and authentication (mTLS)

- Observability (metrics, logs, tracing)

- Traffic management (load balancing, retries, timeouts, circuit breaking)

- Policy enforcement and access control

- Deployment routing for canary/blue‑green releases
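As an illustration of the traffic-management logic a mesh takes off the application's hands, the following is a rough sketch of a circuit breaker in plain Python. The class, its parameters (`max_failures`, `reset_after`), and the injectable `clock` are all assumptions made for this example; it is not how any particular mesh implements the feature, only the kind of code each service would otherwise carry.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and the call is rejected without being attempted."""


class CircuitBreaker:
    def __init__(
        self,
        max_failures: int = 3,
        reset_after: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; failing fast")
            # Cool-down elapsed: allow one trial call, and reopen on a single failure.
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = fn()
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()
            raise
        self._failures = 0  # any success closes the circuit fully
        return result
```

With a mesh, the equivalent thresholds are typically declared once as proxy configuration rather than reimplemented per language.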

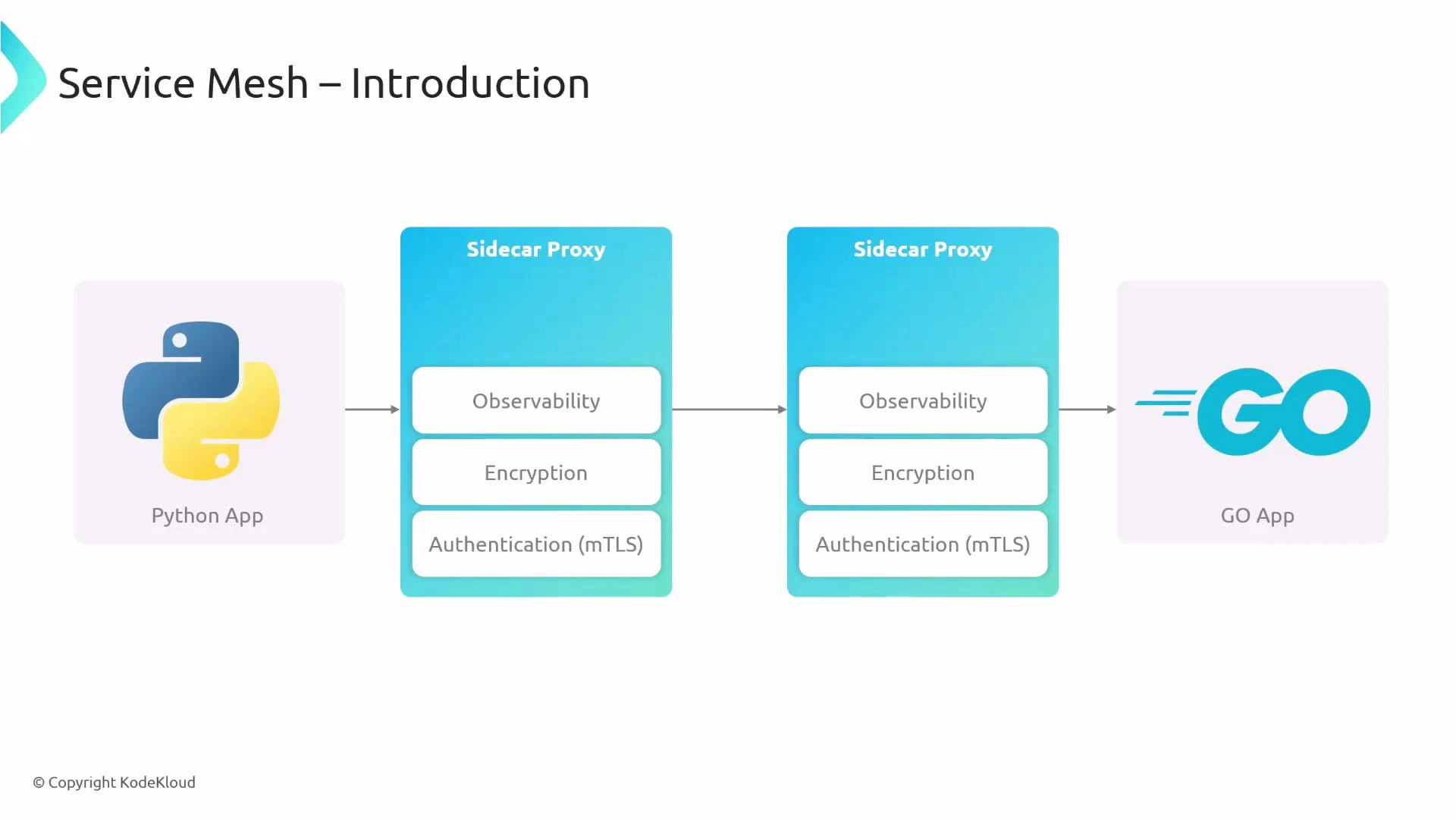

- Each application instance is paired with a local sidecar proxy (process or container).

- The application sends traffic to the local sidecar; the sidecar encrypts and authenticates the connection (mTLS), records telemetry, and applies routing rules.

- The remote sidecar receives traffic, applies policy, decrypts when required, and forwards it to the destination application.
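The request path described above can be modeled with a toy, in-process simulation. Everything here is hypothetical: the `Sidecar` class, the service names, and especially `toy_seal`, which just reverses a string as a stand-in for encryption and offers no security. In a real mesh the caller's identity comes from a verified certificate, not a self-declared field, and the response is protected as well.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set

Handler = Callable[[str], str]


def toy_seal(text: str) -> str:
    """Placeholder for encryption: reverses the string. NOT real cryptography."""
    return text[::-1]


def toy_open(text: str) -> str:
    """Inverse of toy_seal."""
    return text[::-1]


@dataclass
class Sidecar:
    service: str                       # name of the application this proxy fronts
    app: Handler                       # the application's own request handler
    allowed_callers: Set[str] = field(default_factory=set)
    requests_seen: int = 0             # stand-in for telemetry

    def send(self, remote: "Sidecar", payload: str) -> str:
        """Outbound path: local app -> local sidecar -> remote sidecar."""
        self.requests_seen += 1
        envelope = {"caller": self.service, "body": toy_seal(payload)}
        return remote.receive(envelope)

    def receive(self, envelope: Dict[str, str]) -> str:
        """Inbound path: apply policy, unseal, then hand off to the local app."""
        self.requests_seen += 1
        if envelope["caller"] not in self.allowed_callers:
            raise PermissionError(f"{envelope['caller']} may not call {self.service}")
        return self.app(toy_open(envelope["body"]))


# Neither handler knows anything about sealing, policy, or counting.
orders = Sidecar("orders", app=lambda body: f"orders handled: {body}", allowed_callers={"web"})
web = Sidecar("web", app=lambda body: body)
reply = web.send(orders, "create order 42")
```

The point of the sketch is that the two `app` handlers contain only business logic; policy, sealing, and counting all live in the proxy objects around them.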

Key benefits of using a service mesh

- No code changes: Offload network, security, and observability features to the infrastructure layer.

- Cross‑language: Works across services implemented in different languages and frameworks.

- Developer productivity: Developers concentrate on business logic while the platform team manages the mesh.

- Traffic management: Fine‑grained control—load balancing, retries, timeouts, circuit breaking, and routing strategies for safe deployments.

- Observability: Centralized metrics, tracing, and logs to troubleshoot and optimize applications.

- Security: mTLS for encryption and mutual authentication, plus policy‑based access control.

- Consistency and decoupling: Centralized policies reduce misconfiguration and simplify management at scale.

Quick comparison table

| Feature | Problem solved | Example |
|---|---|---|
| mTLS | Secure service-to-service communication | Automatic certificate rotation enforced by mesh |
| Observability | Fragmented telemetry across services | Unified metrics and distributed tracing |
| Traffic management | Hard to implement consistent retries/timeout policies | Centralized retry and circuit-breaker configuration |
| Deployments | Risky rollouts and inconsistent routing | Canary and blue/green traffic splitting managed by mesh |
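The canary traffic splitting in the last row boils down to weighted routing. A minimal sketch, assuming a hypothetical `pick_version` helper and made-up version names and weights, might look like this; an actual mesh expresses the same idea declaratively in its routing configuration rather than in application code.

```python
import random
from typing import Dict, Optional


def pick_version(weights: Dict[str, int], rng: Optional[random.Random] = None) -> str:
    """Choose a backend version with probability proportional to its weight."""
    if not weights or sum(weights.values()) <= 0:
        raise ValueError("weights must contain at least one positive value")
    rng = rng or random.Random()
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]


# Hypothetical rollout: roughly 10% of requests go to the canary.
canary_split = {"v1": 90, "v2": 10}
chosen = pick_version(canary_split)
```

Shifting the rollout forward is then a matter of changing the weights, for example to `{"v1": 50, "v2": 50}`, without redeploying either version.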

A service mesh is the “smart socket” for microservices: attach a sidecar proxy to provide network, security, and observability features uniformly—without changing application code.

Popular service mesh implementations

- Istio Service Mesh — feature‑rich, extensible control plane and telemetry.

- Consul Connect (HashiCorp) — service discovery and mesh features with identity-based security.

- Linkerd — lightweight, performance-oriented, and easy to operate.
