In this lesson we cover practical Cilium performance enhancements you can enable to improve throughput and latency. These knobs are commonly used in production and help explain how Cilium forwards packets at scale. The explanations refer to the accompanying diagrams so you can visualize how traffic flows through the host and pod network stacks.

Architecture reminder:


- Each Kubernetes node has a host network namespace (with the main interface to the outside world).

- Each Pod runs in its own network namespace.

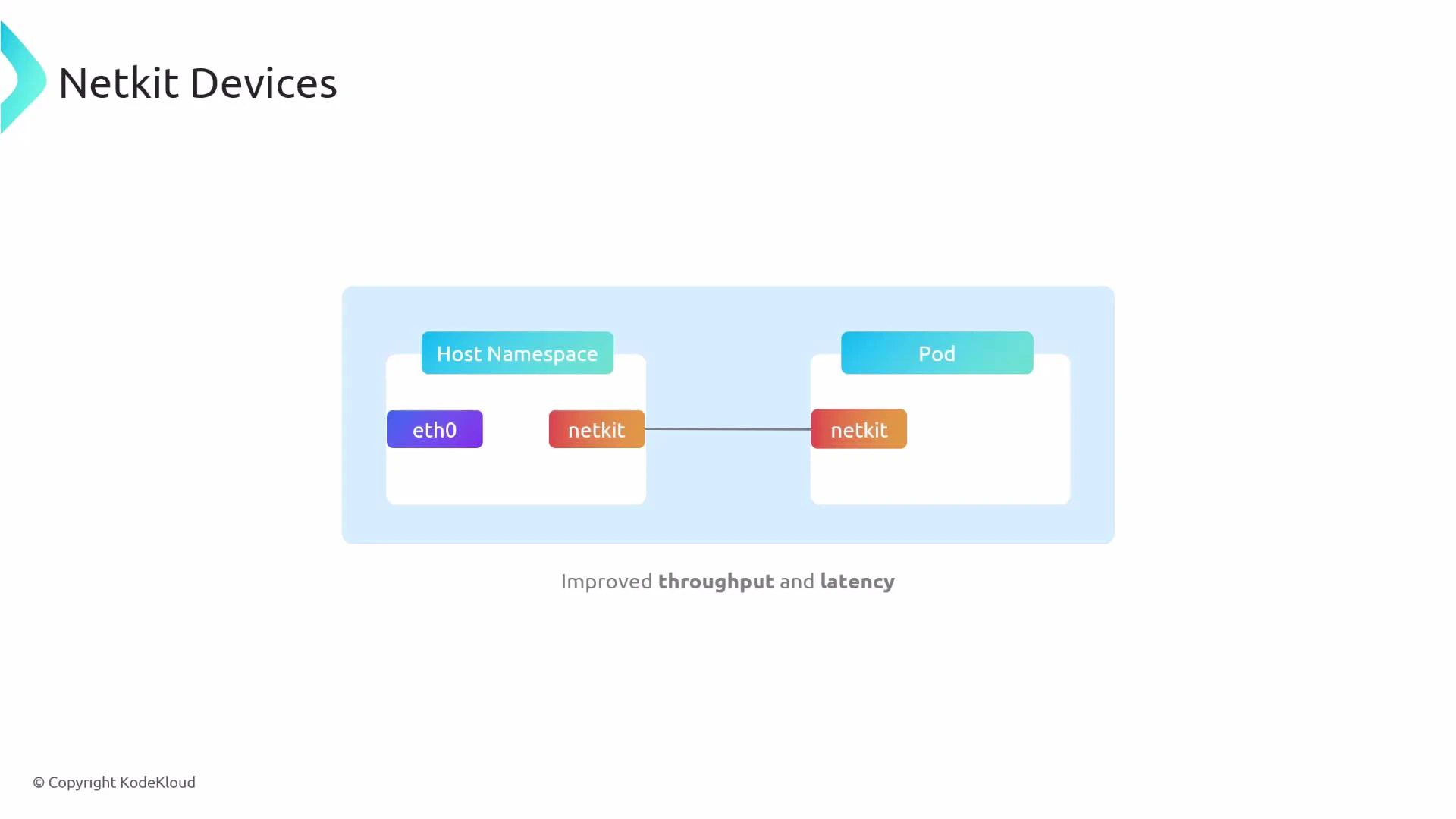

- Pods and the host are typically connected via a veth pair; many of the tunables below replace or bypass parts of that path to reduce host-side processing and latency.

- netkit datapath (replace the veth pair with eBPF-programmable netkit devices)

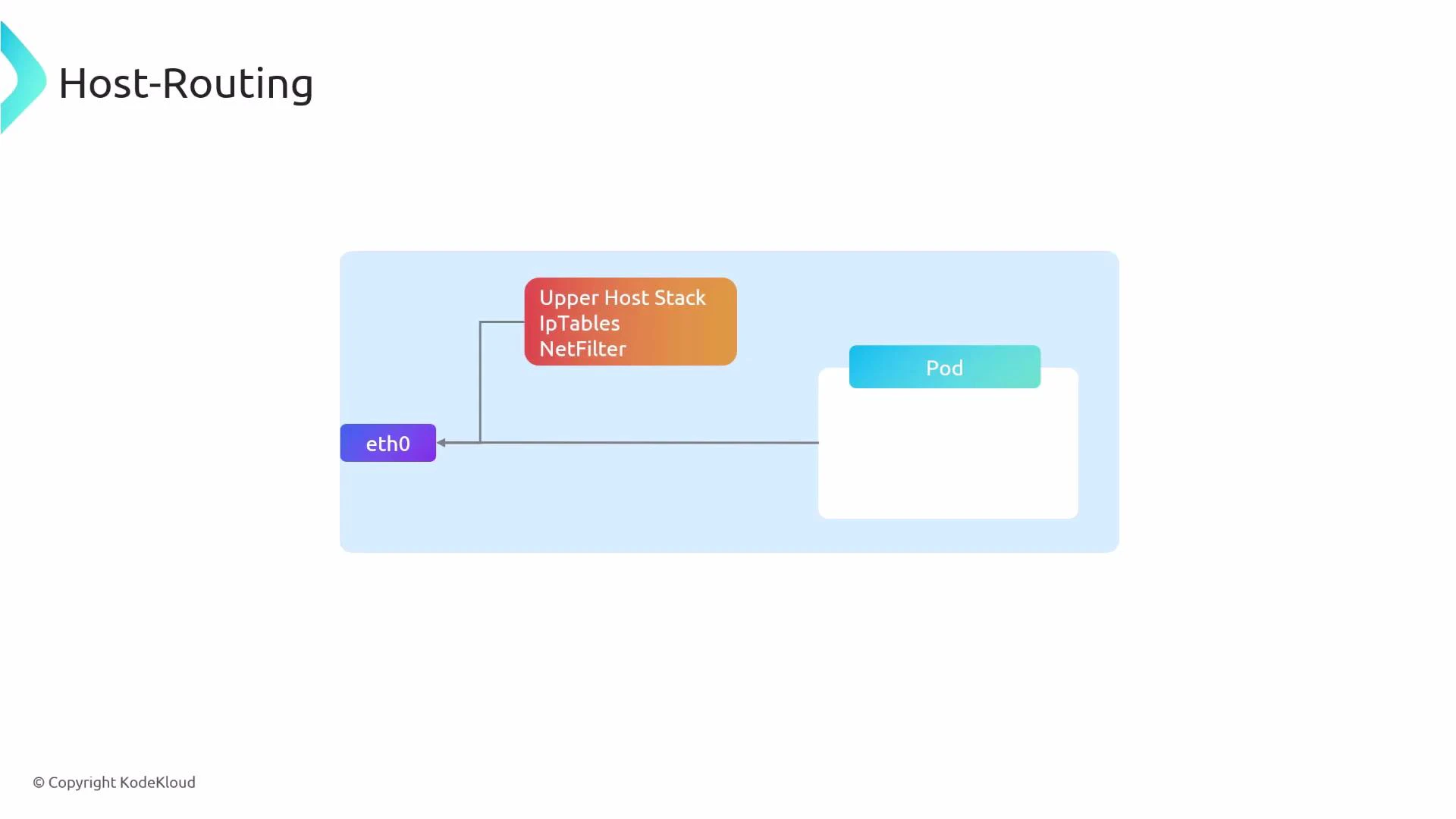

- eBPF host routing (bypass host iptables/netfilter and the upper host stack for pod traffic)

- BIG TCP (allow GSO/GRO packets larger than 64 KiB, so fewer and larger TCP packets traverse the stack)

- routingMode=native: use native routing (pod packets are routed by the host and the underlying network without VXLAN/Geneve encapsulation) instead of tunnel mode.

- bpf.datapathMode=netkit: use netkit devices for pods rather than veth pairs.

- bpf.masquerade=true: enable eBPF-based masquerading (SNAT) instead of iptables-based masquerading.

- kubeProxyReplacement=true: replace kube-proxy with Cilium's eBPF-based service load balancing.
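The four settings above can be assembled into a single `helm upgrade` invocation. The sketch below only builds and prints the command. It assumes the following about how Cilium was installed, so adjust them to your cluster:

- the release name is `cilium`;
- the chart reference is `cilium/cilium`, from a Helm repo added under the name `cilium`;
- the namespace is `kube-system`.

`--reuse-values` keeps any values already set on the release. Cilium's chart names this datapath mode `netkit`.

```python
import shlex

# Helm values for the netkit datapath profile discussed above.
NETKIT_VALUES = {
    "routingMode": "native",
    "bpf.datapathMode": "netkit",
    "bpf.masquerade": "true",
    "kubeProxyReplacement": "true",
}


def to_set_flags(values: dict[str, str]) -> list[str]:
    """Turn a flat {key: value} mapping into Helm --set arguments."""
    flags: list[str] = []
    for key, value in values.items():
        flags += ["--set", f"{key}={value}"]
    return flags


def helm_upgrade_cmd(values: dict[str, str],
                     release: str = "cilium",        # assumed release name
                     chart: str = "cilium/cilium",   # assumed repo/chart reference
                     namespace: str = "kube-system") -> list[str]:
    """Build (but do not run) a helm upgrade command that keeps existing values."""
    return ["helm", "upgrade", release, chart,
            "--namespace", namespace, "--reuse-values",
            *to_set_flags(values)]


if __name__ == "__main__":
    # shlex.join quotes any argument that would need it in a POSIX shell.
    print(shlex.join(helm_upgrade_cmd(NETKIT_VALUES)))
```

Printing the command instead of executing it lets you review the exact overrides before touching a live cluster.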

- eBPF host routing relies on bpf.masquerade=true and kubeProxyReplacement=true; in many deployments it is combined with the netkit datapath.

- Host routing behavior depends on cluster topology and whether nodes are directly routed or behind NAT/load balancers.
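The host-routing prerequisites named in this lesson (`bpf.masquerade=true` and `kubeProxyReplacement=true`) can be checked against a values mapping before you upgrade. This is a hypothetical pre-flight helper, not part of Cilium. It only inspects those two keys. It cannot tell whether a running agent actually fell back to legacy host routing, so confirm that on a node, for example from the agent's `cilium-dbg status` output.

```python
# Settings this lesson lists as prerequisites for eBPF host routing.
HOST_ROUTING_REQUIRED = {
    "bpf.masquerade": "true",
    "kubeProxyReplacement": "true",
}


def missing_host_routing_settings(values: dict[str, str]) -> list[str]:
    """Return required keys that are absent or set to a different value (case-insensitive)."""
    return sorted(
        key for key, wanted in HOST_ROUTING_REQUIRED.items()
        if str(values.get(key, "")).lower() != wanted
    )


if __name__ == "__main__":
    current = {"bpf.masquerade": "false", "kubeProxyReplacement": "true"}  # example input
    missing = missing_host_routing_settings(current)
    if missing:
        print("host routing prerequisites missing:", ", ".join(missing))
    else:
        print("host routing prerequisites present")
```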

BIG TCP requires kernel and NIC driver support. Test carefully on staging before enabling in production. Incorrect assumptions about offloads or driver behavior can cause performance issues or packet corruption.

- enableIPv4BIGTCP=true: enable BIG TCP for IPv4.

- enableIPv6BIGTCP=true: enable BIG TCP for IPv6.

- ipv6.enabled=true: enable IPv6 support in Cilium; required for enableIPv6BIGTCP.
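Simple arithmetic shows why larger aggregates reduce load. At a fixed throughput, the number of packets the stack handles per second is the byte rate divided by the packet size. The sketch compares the traditional 64 KiB GSO/GRO limit with a larger aggregate. The 192 KiB size and the 100 Gbit/s link rate are illustrative assumptions, not values Cilium guarantees.

```python
def packets_per_second(throughput_gbit: float, packet_bytes: int) -> float:
    """Packets/s needed to carry throughput_gbit (Gbit/s) in packet_bytes-sized packets."""
    bytes_per_second = throughput_gbit * 1e9 / 8
    return bytes_per_second / packet_bytes


LINK_GBIT = 100              # assumed link rate for the example
CLASSIC_GSO = 64 * 1024      # 64 KiB: traditional upper bound for GSO/GRO packets
BIG_TCP_GSO = 192 * 1024     # illustrative larger aggregate enabled by BIG TCP

if __name__ == "__main__":
    before = packets_per_second(LINK_GBIT, CLASSIC_GSO)
    after = packets_per_second(LINK_GBIT, BIG_TCP_GSO)
    print(f"64 KiB aggregates:  {before:,.0f} pkt/s")
    print(f"192 KiB aggregates: {after:,.0f} pkt/s ({before / after:.1f}x fewer)")
```

Per-packet costs (stack traversal, eBPF program runs) scale with the packet count, which is why tripling the aggregate size can cut CPU usage at the same throughput.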

| Tuning area | Primary effect | Key Helm settings |
|---|---|---|
| netkit datapath | Replaces veth pairs with netkit devices, reduces host processing, improves throughput & latency | routingMode=native, bpf.datapathMode=netkit, bpf.masquerade=true, kubeProxyReplacement=true |
| eBPF host routing | Bypasses iptables/netfilter and the upper host stack for pod traffic, reduces forwarding overhead | bpf.masquerade=true, kubeProxyReplacement=true (often combined with netkit) |
| BIG TCP | Uses larger GSO/GRO packets to reduce pps and CPU usage | enableIPv4BIGTCP=true, enableIPv6BIGTCP=true, ipv6.enabled=true |
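The table's rows can be treated as value profiles and merged into one set of overrides. In this sketch the profile names are hypothetical. It merges the selected profiles and raises an error if two of them assign different values to the same key, so conflicts surface before the result reaches Helm. `bpf.datapathMode` is set to `netkit`, the value Cilium's chart uses for this mode.

```python
# Hypothetical profile names; keys/values mirror the "Key Helm settings" column.
PROFILES: dict[str, dict[str, str]] = {
    "netkit": {
        "routingMode": "native",
        "bpf.datapathMode": "netkit",
        "bpf.masquerade": "true",
        "kubeProxyReplacement": "true",
    },
    "host-routing": {
        "bpf.masquerade": "true",
        "kubeProxyReplacement": "true",
    },
    "big-tcp": {
        "enableIPv4BIGTCP": "true",
        "enableIPv6BIGTCP": "true",
        "ipv6.enabled": "true",
    },
}


def merge_profiles(names: list[str]) -> dict[str, str]:
    """Merge profiles in order, raising ValueError if two disagree on a key."""
    merged: dict[str, str] = {}
    for name in names:
        for key, value in PROFILES[name].items():
            if key in merged and merged[key] != value:
                raise ValueError(f"{name!r} sets {key}={value}, conflicting with {merged[key]}")
            merged[key] = value
    return merged


if __name__ == "__main__":
    values = merge_profiles(["netkit", "host-routing", "big-tcp"])
    for key, value in sorted(values.items()):
        print(f"--set {key}={value}")
```

Shared keys such as bpf.masquerade appear in several rows. Because they agree, merging is safe, and the conflict check only fires on genuinely incompatible combinations.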

- Validate the kernel version (netkit requires Linux 6.8 or later) and NIC driver support for large GSO/GRO (BIG TCP).

- Test netkit and BIG TCP in a staging environment using realistic traffic patterns.

- Monitor CPU, packet rates (pps), and error counters (e.g., packet drops, checksum errors) after enabling changes.

- Confirm compatibility with your CNI configuration, routing topology, and upstream load balancers.
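For the first checklist item, a small pre-flight script can compare a kernel release string with per-feature minimums. The minimums below are assumptions taken from Cilium's tuning guide at the time of writing:

- eBPF host routing: 5.10
- netkit: 6.8
- BIG TCP for IPv6: 5.19
- BIG TCP for IPv4: 6.3

Confirm them against the documentation for your Cilium version. The script checks only the kernel, not NIC driver support.

```python
import platform
import re

# Assumed minimum kernel (major, minor) per feature; verify against your Cilium docs.
MIN_KERNEL: dict[str, tuple[int, int]] = {
    "ebpf-host-routing": (5, 10),
    "netkit": (6, 8),
    "big-tcp-ipv6": (5, 19),
    "big-tcp-ipv4": (6, 3),
}


def parse_kernel_version(release: str) -> tuple[int, int]:
    """Extract (major, minor) from release strings such as '6.8.0-45-generic'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognised kernel release: {release!r}")
    return int(match.group(1)), int(match.group(2))


def unsupported_features(release: str) -> list[str]:
    """Return features whose assumed minimum kernel is newer than `release`."""
    running = parse_kernel_version(release)
    # Tuple comparison orders versions by major first, then minor.
    return [name for name, minimum in MIN_KERNEL.items() if running < minimum]


if __name__ == "__main__":
    release = platform.release()  # kernel of the machine running this script
    missing = unsupported_features(release)
    print(f"kernel {release}: unsupported -> {', '.join(missing) or 'none'}")
```

`platform.release()` reports only the local kernel, so run the script on each node rather than on the workstation where you run `kubectl`.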

- Cilium official docs: https://docs.cilium.io/

- AF_XDP / XDP overview: https://www.kernel.org/doc/html/latest/networking/index.html#xdp

- Linux network offloads (GSO/GRO/TCP segmentation offload): https://www.kernel.org/doc/Documentation/networking/

Always test these optimizations (netkit datapath, eBPF host routing, and BIG TCP) in a non-production environment first. Hardware and driver incompatibilities or misconfigured offloads can cause degraded performance or packet corruption.