Caching helps you serve repeated requests faster by storing frequently accessed content closer to the client. Think of it like ordering the same coffee and cookie every morning: at first, the barista prepares your order on demand, but over time they have it ready before you even ask. Similarly, Nginx can remember, and quickly deliver, static or dynamic resources without hitting your backend every time.


Core Proxy Cache Configuration

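A minimal nginx.conf caching setup relies on three main directives, proxy_cache_path, proxy_cache_key, and proxy_cache_valid, with proxy_cache_bypass as an optional extra. Each is described below.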
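As a rough sketch of how the main caching directives might sit together in nginx.conf, consider the fragment below. The cache path and zone name are taken from the parameter table in this section. The listen port, the cache lifetimes, and the backend address 127.0.0.1:3000 are assumptions made purely for illustration.

```nginx
# Sketch only: belongs inside the http { } context of nginx.conf.

# Where cached responses live and how much space they may use.
proxy_cache_path /var/lib/nginx/cache levels=1:2 keys_zone=app_cache:8m max_size=50m;

server {
    listen 80;                                   # assumed port

    location / {
        proxy_cache app_cache;                   # use the zone declared above
        proxy_cache_valid 200 10m;               # assumed lifetime for successful responses
        proxy_cache_valid 404 1m;                # assumed lifetime for not-found responses
        proxy_cache_bypass $http_cache_control;  # skip lookup if the client sends Cache-Control
        proxy_pass http://127.0.0.1:3000;        # hypothetical backend address
    }
}
```

Note that proxy_cache_path is only valid at the http level, while proxy_cache, proxy_cache_valid, and proxy_cache_bypass may also appear in server or location blocks. After editing, running nginx -t checks the syntax before a reload.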

proxy_cache_path

Defines where and how cache files are stored:

| Parameter | Description |
|---|---|
| /var/lib/nginx/cache | Filesystem path for cached data (ensure correct permissions). |
| levels=1:2 | Two-level directory structure to avoid too many files per folder. |
| keys_zone=app_cache:8m | Shared memory zone app_cache using 8 MB for cache keys and metadata. |
| max_size=50m | Optional limit; older entries are removed when the cache exceeds this size. |

Verify that the Nginx user has read/write access to your cache directory (

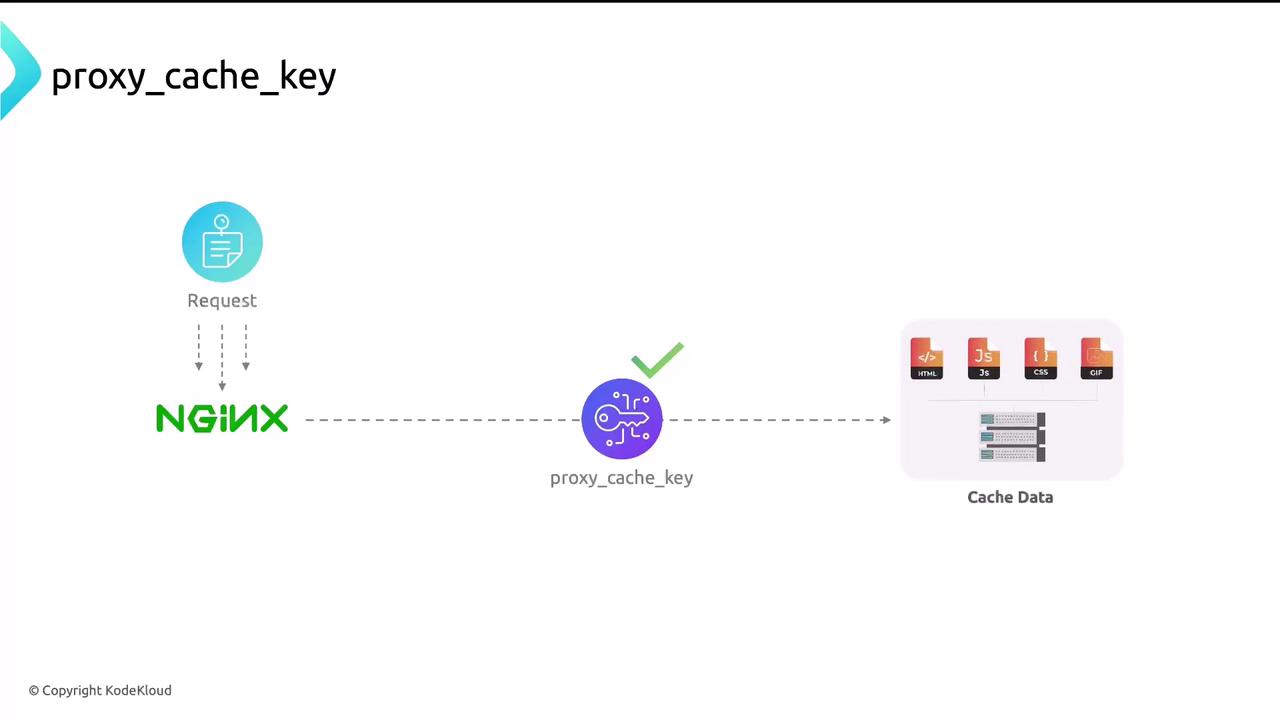

/var/lib/nginx/cache by default).proxy_cache_key

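By default, Nginx builds the cache key from the scheme, the proxied host, and the request URI ($scheme$proxy_host$request_uri). You can customize it to include the protocol, HTTP method, host, and URI, so that requests differing in any of these are cached separately.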
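A custom key along these lines could look like the sketch below. It uses only built-in Nginx variables; the particular combination is one reasonable choice, not necessarily the one used in the original lesson.

```nginx
# Sketch only: valid in the http, server, or location context.
# Key = protocol + HTTP method + Host header + URI including query string.
proxy_cache_key "$scheme$request_method$host$request_uri";
```

Including $request_method keeps a cached GET response from being returned for a HEAD request to the same URL, and $host separates entries for different virtual hosts that share one cache zone.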

proxy_cache_valid

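Control how long responses are kept in the cache, per status code. For example, proxy_cache_valid 200 10m; keeps successful responses for ten minutes, and proxy_cache_valid 404 1m; keeps not-found responses for one minute.

Optional: Bypassing the Cache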

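For certain dynamic routes, or when clients send Cache-Control headers, you may want to skip the cache lookup. For example, proxy_cache_bypass $http_cache_control; skips the lookup whenever the request carries a non-empty Cache-Control header.

Overusing proxy_cache_bypass will reduce cache effectiveness and increase load on your backend. Use it only when necessary.

Verifying Cache Behavior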

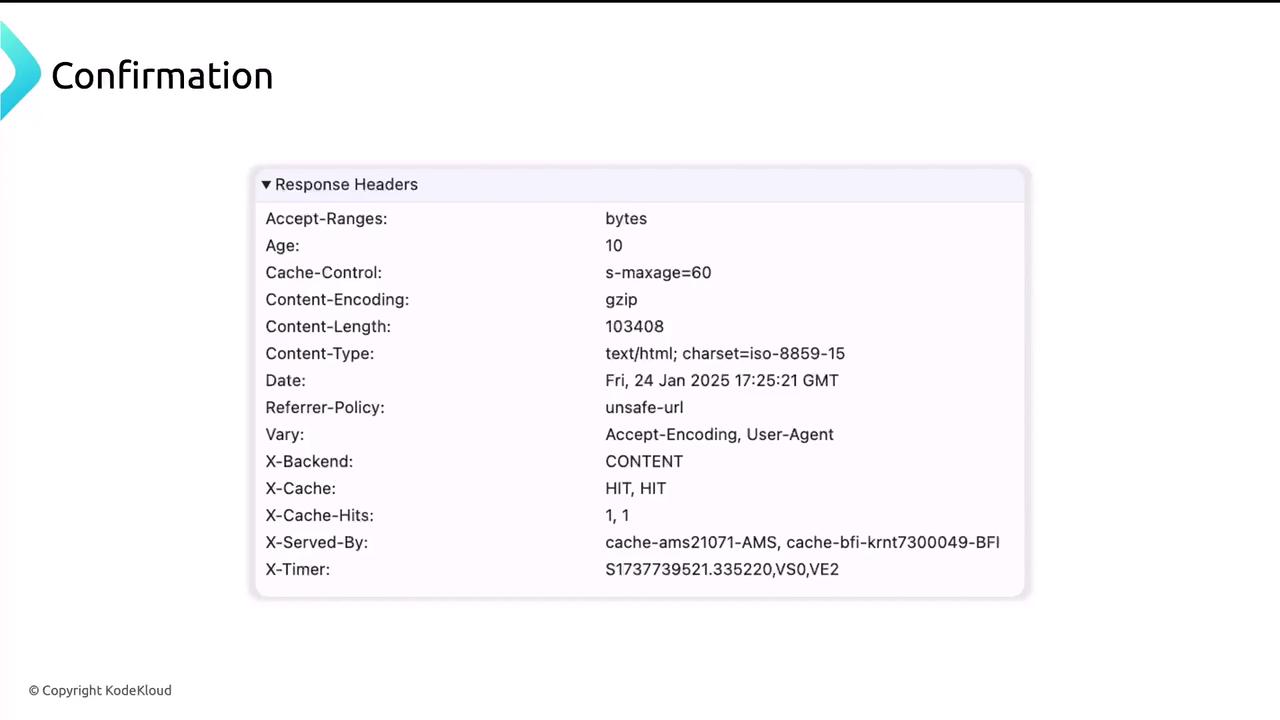

Inspect the X-Proxy-Cache header in your HTTP response to determine whether content was served from cache.

HIT indicates a cached response, while a MISS shows that Nginx forwarded the request upstream.
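Nginx does not emit an X-Proxy-Cache header on its own. It is usually added by exposing the built-in $upstream_cache_status variable with add_header. A minimal sketch, assuming the app_cache zone from earlier and a hypothetical backend on 127.0.0.1:3000:

```nginx
location / {
    proxy_cache app_cache;                            # zone declared via proxy_cache_path
    proxy_pass http://127.0.0.1:3000;                 # hypothetical backend address
    add_header X-Proxy-Cache $upstream_cache_status;  # HIT, MISS, BYPASS, EXPIRED, ...
}
```

Besides HIT and MISS, the variable can report other states such as BYPASS (the lookup was skipped by proxy_cache_bypass) and EXPIRED (the entry was found but its lifetime had passed).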