What can you expect from a targeted feature engineering process? In short: more accurate, more reliable models whose predictions are easier to act on. Thoughtful feature engineering strengthens signal, reduces noise, and helps training discover true, generalizable patterns instead of memorizing idiosyncrasies in the training set. The result is faster convergence, fewer experiments to reach acceptable performance, and improved downstream business utility.

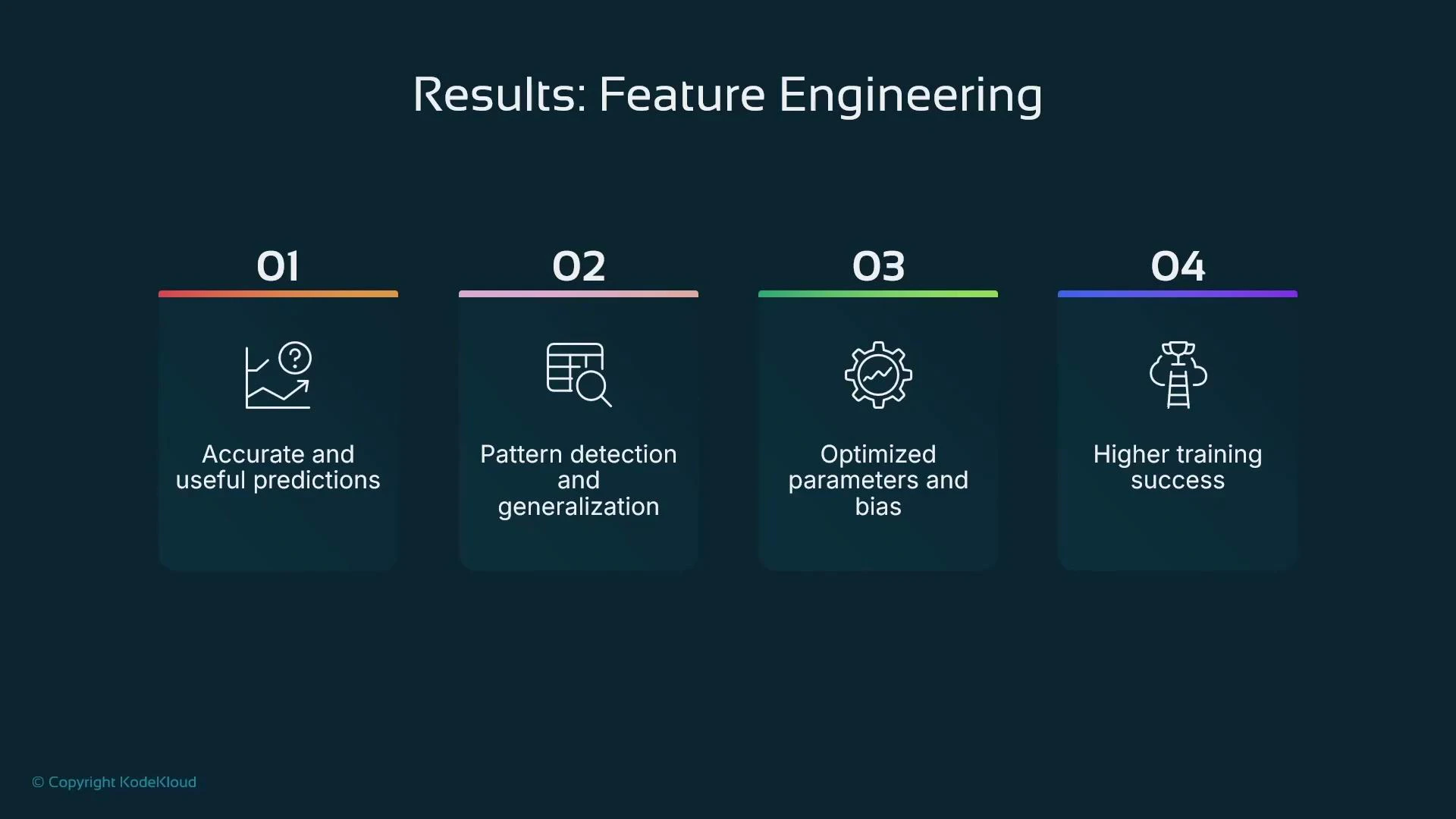

- Stronger features produce clearer correlations between inputs and targets, yielding more accurate predictions and lower error metrics (e.g., reduced mean squared error for regression).

- Feature engineering can reduce bias by exposing relevant signals, which leads to better-optimized model parameters and improved generalization.

- Well-crafted features often allow simpler models to achieve competitive performance and reduce the need for deeper architectures.

- Training often converges faster on well-engineered features, which can mean fewer epochs and less hyperparameter tuning.
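As a rough illustration of the first and third points, the sketch below fits the same plain linear regression twice on synthetic data: once on raw `debt` and `income` columns, and once on a single engineered debt-to-income ratio. The columns, value ranges, and ratio-driven target are assumptions invented for the example; the point is only that a feature matching the true structure lets a simple model reach a much lower held-out MSE.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(42)
n = 2_000

# Synthetic data (illustrative only): the target depends on the ratio debt / income
income = rng.uniform(20_000, 150_000, size=n)
debt = rng.uniform(0, 60_000, size=n)
y = 5.0 * (debt / income) + rng.normal(scale=0.1, size=n)

X_raw = np.column_stack([debt, income])   # the two raw inputs
X_ratio = (debt / income).reshape(-1, 1)  # one engineered feature


def holdout_mse(X, y):
    """Fit a linear regression and return mean squared error on a 25% held-out split."""
    X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)
    model = LinearRegression().fit(X_tr, y_tr)
    return mean_squared_error(y_te, model.predict(X_te))


mse_raw = holdout_mse(X_raw, y)
mse_ratio = holdout_mse(X_ratio, y)
print(f"raw features MSE:  {mse_raw:.3f}")
print(f"ratio feature MSE: {mse_ratio:.3f}")
```

Because both calls use the same `random_state` and row count, the two models are scored on the same held-out rows.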

| Without feature engineering | With feature engineering |

|---|---|

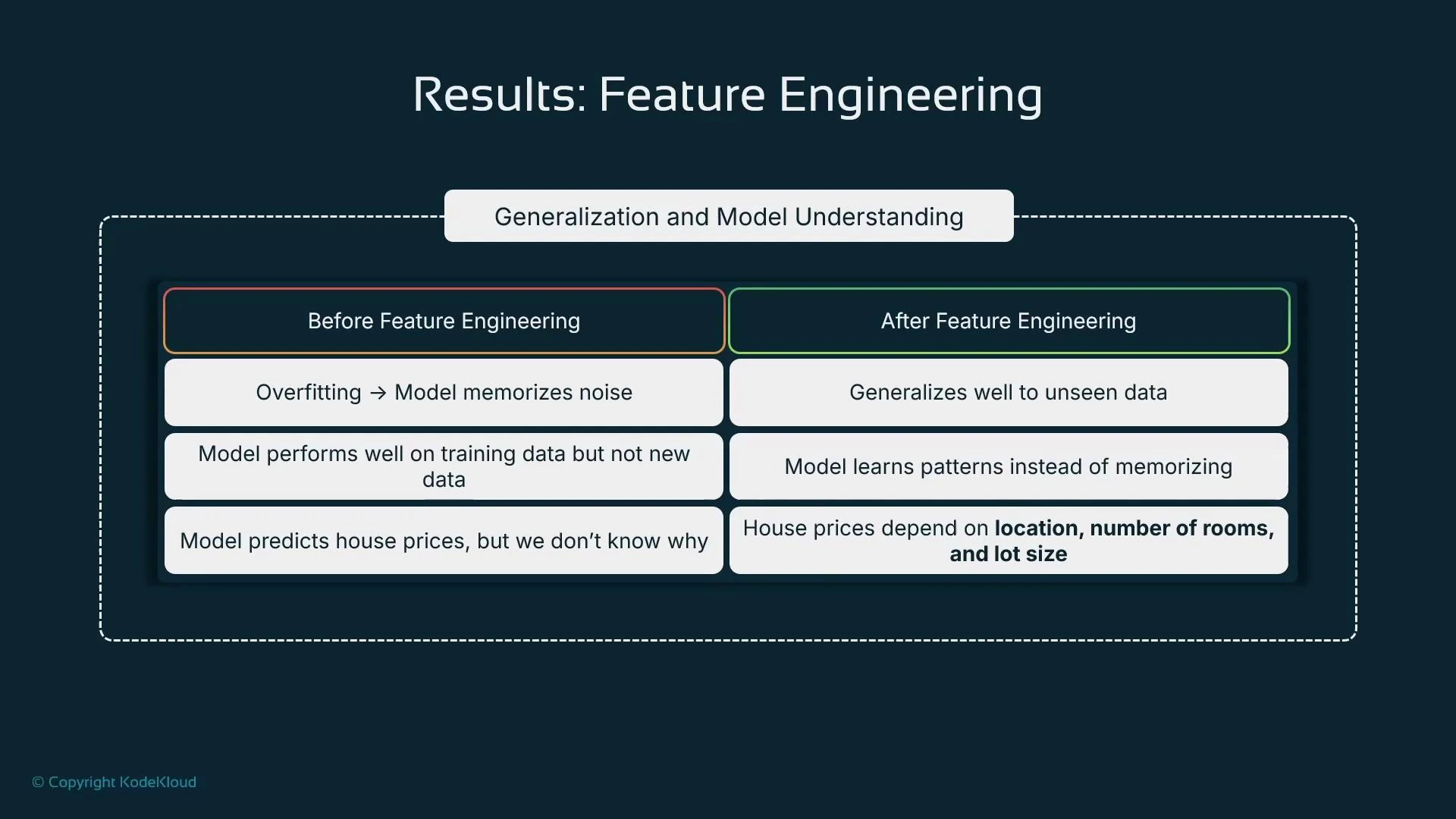

| Weak signals; unclear feature importance | Stronger predictive signals and interpretable importance |

| Higher risk of overfitting or underfitting | Better generalization to new data |

| Slower convergence; may require complex models | Faster convergence; simpler models often suffice |

| Higher evaluation error (e.g., MSE) | Lower evaluation error and better-explained variance |

Overfitting is when a model memorizes training examples and performs poorly on new data. Thoughtful feature engineering mitigates overfitting by exposing true predictive signals and reducing noisy or irrelevant inputs.
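A minimal synthetic sketch of the "irrelevant inputs" part of this: a linear regression given one informative feature plus 35 pure-noise columns (the counts, seed, and data-generating formula are arbitrary assumptions) fits the training set almost perfectly but scores worse on new data than the same model restricted to the informative feature.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)
n_train, n_test, n_noise = 40, 200, 35


def make_data(n):
    """One informative feature, n_noise irrelevant features, and a noisy linear target."""
    signal = rng.normal(size=(n, 1))
    noise_features = rng.normal(size=(n, n_noise))
    y = 3.0 * signal[:, 0] + rng.normal(scale=1.0, size=n)
    return signal, noise_features, y


sig_tr, noise_tr, y_tr = make_data(n_train)
sig_te, noise_te, y_te = make_data(n_test)

X_tr_all = np.hstack([sig_tr, noise_tr])
X_te_all = np.hstack([sig_te, noise_te])

all_features = LinearRegression().fit(X_tr_all, y_tr)
signal_only = LinearRegression().fit(sig_tr, y_tr)

train_r2_all = all_features.score(X_tr_all, y_tr)
test_r2_all = all_features.score(X_te_all, y_te)
test_r2_signal = signal_only.score(sig_te, y_te)

print(f"all features:  train R2 {train_r2_all:.2f}, test R2 {test_r2_all:.2f}")
print(f"signal only:   test R2 {test_r2_signal:.2f}")
```

With 36 features and only 40 training rows, the full model has room to fit noise; dropping the irrelevant columns removes that room.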

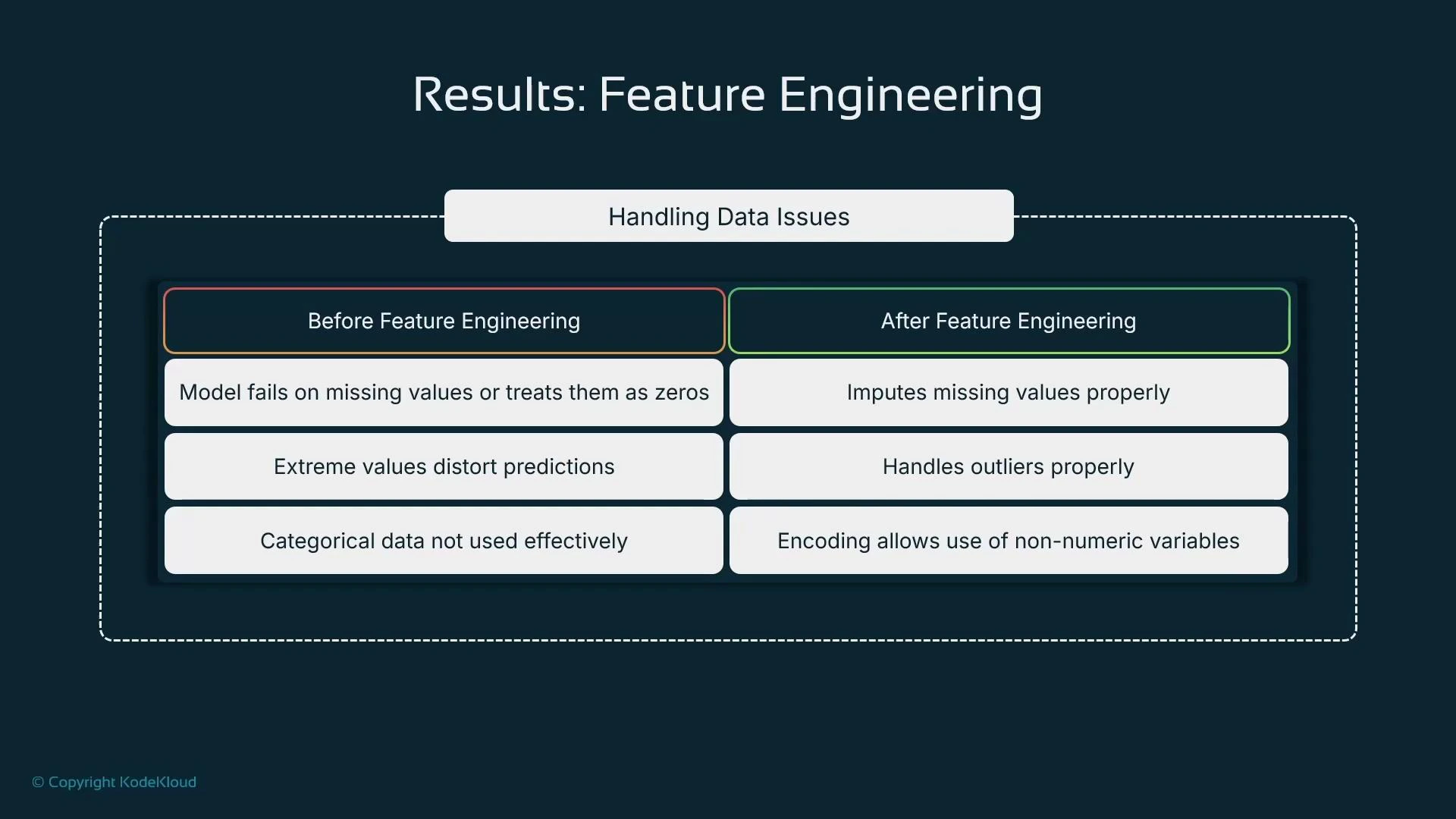

- Missing values: apply per-feature strategies such as mean/median imputation, KNN imputation, or model-based methods; consider adding a missing-value indicator.

- Outliers/extreme values: use clipping, winsorizing, trimming, or model-based handling depending on whether extremes are valid signals.

- Categorical variables: choose one-hot, ordinal, target/mean encoding, or learned embeddings based on cardinality and model type.
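The missing-value and categorical bullets map fairly directly onto scikit-learn transformers. The sketch below uses invented column names and values: median imputation with a missing-value indicator for numeric columns, and most-frequent imputation followed by one-hot encoding for a low-cardinality categorical column. `KNNImputer` could be swapped in for the numeric imputer, and a different encoder chosen for high-cardinality columns.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder

# Toy data with gaps in numeric and categorical columns (values invented)
df = pd.DataFrame({
    "age": [25, np.nan, 47, 33, np.nan],
    "income": [42_000, 58_000, np.nan, 61_000, 39_000],
    "city": ["Austin", "Boston", "Austin", np.nan, "Denver"],
})

# Numeric: median imputation plus a 0/1 "was missing" column per feature that had gaps
numeric = SimpleImputer(strategy="median", add_indicator=True)

# Categorical: fill with the most frequent value, then one-hot encode
categorical = Pipeline([
    ("impute", SimpleImputer(strategy="most_frequent")),
    ("onehot", OneHotEncoder(handle_unknown="ignore")),
])

preprocess = ColumnTransformer([
    ("num", numeric, ["age", "income"]),
    ("cat", categorical, ["city"]),
])

X = preprocess.fit_transform(df)
X = X.toarray() if hasattr(X, "toarray") else X  # output may be sparse depending on density
print(X.shape)
print(X)
```

The output columns are the two imputed numeric features, their two missing indicators, then one column per city.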

| Data issue | Typical fixes |
|---|---|
| Missing values | Mean/median imputation, KNN, model-based imputation, missing indicators |
| Outliers/extremes | Clipping, winsorizing, trimming, or model-aware handling |
| High-cardinality categorical | Target encoding, hashing, or embeddings |
| Skewed numerical distributions | Log transforms, power transforms, or quantile transforms |
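For the outlier and skew rows, a small pandas/NumPy sketch with invented amounts. It clips at Tukey's IQR fences, one common rule of thumb (percentile-based winsorizing is an alternative), and applies `np.log1p`, a log transform that is still defined at zero. Whether to clip at all depends on whether the extreme values are real signal; in fraud detection, for example, they may be exactly what the model needs.

```python
import numpy as np
import pandas as pd

# Invented transaction amounts with one extreme value
amounts = pd.Series([12.0, 15.0, 14.0, 13.0, 16.0, 11.0, 950.0])

# Clipping at Tukey's fences: Q1 - 1.5 * IQR and Q3 + 1.5 * IQR
q1, q3 = amounts.quantile([0.25, 0.75])
iqr = q3 - q1
clipped = amounts.clip(lower=q1 - 1.5 * iqr, upper=q3 + 1.5 * iqr)

# log1p(x) = log(1 + x) compresses the long right tail and handles zeros
log_amounts = np.log1p(amounts)

print(clipped.tolist())  # [12.0, 15.0, 14.0, 13.0, 16.0, 11.0, 20.0]
print(log_amounts.round(2).tolist())
```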

- Retail: Customer spend is often driven by seasonality or promotions rather than static income. Time-based features (month, week-of-year, holiday flags, rolling aggregates) can substantially outperform raw income as predictors.

- Banking and fraud detection: Velocity and patterns (transactions per day, time since last transaction, anomalous sequence patterns) often indicate fraud more reliably than single-transaction amounts. Aggregate and ratio features are especially valuable.
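A minimal pandas sketch of both kinds of features on a toy transaction log. The columns, timestamps, and the `HOLIDAYS` list are hypothetical; a real pipeline would use a proper business calendar and probably time-windowed rolling counts rather than simple per-day counts.

```python
import pandas as pd

# Toy transaction log (invented values)
tx = pd.DataFrame({
    "customer_id": ["a", "a", "a", "b", "b"],
    "timestamp": pd.to_datetime([
        "2024-11-29 09:00", "2024-11-29 09:05", "2024-12-02 14:00",
        "2024-11-28 10:00", "2024-11-30 18:30",
    ]),
    "amount": [20.0, 25.0, 400.0, 60.0, 55.0],
})
tx = tx.sort_values(["customer_id", "timestamp"]).reset_index(drop=True)

# Hypothetical holiday calendar, for illustration only
HOLIDAYS = pd.to_datetime(["2024-11-28", "2024-12-25"])

# Retail-style calendar features
tx["month"] = tx["timestamp"].dt.month
tx["week_of_year"] = tx["timestamp"].dt.isocalendar().week.astype(int)
tx["is_weekend"] = tx["timestamp"].dt.dayofweek >= 5
tx["is_holiday"] = tx["timestamp"].dt.normalize().isin(HOLIDAYS)

# Fraud-style velocity and ratio features, computed per customer
by_customer = tx.groupby("customer_id")
tx["minutes_since_last_tx"] = by_customer["timestamp"].diff().dt.total_seconds() / 60
tx["tx_per_day"] = tx.groupby(["customer_id", tx["timestamp"].dt.date])["amount"].transform("count")
tx["amount_vs_customer_mean"] = tx["amount"] / by_customer["amount"].transform("mean")

print(tx.drop(columns="timestamp"))
```

The 400.0 purchase stands out both in `minutes_since_last_tx` context and in `amount_vs_customer_mean`, which is the kind of relative signal a single raw amount does not carry.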

- Cleaned data is a necessary starting point but not sufficient: feature engineering extracts predictive signals that cleaning alone does not.

- Typical feature engineering steps: drop irrelevant variables, transform skewed features (log, power), synthesize new features (ratios, differences, timestamps to ages), and choose appropriate encodings.
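Several of these steps can be sketched in a few lines of pandas. The account columns and the `as_of` reference date are invented for illustration: an identifier column is dropped, a ratio and a difference are synthesized, and raw timestamps are converted to ages in days.

```python
import pandas as pd

# Invented account data
accounts = pd.DataFrame({
    "account_id": [101, 102, 103],
    "balance": [5_000.0, 1_200.0, 800.0],
    "credit_limit": [10_000.0, 2_000.0, 1_000.0],
    "opened_at": pd.to_datetime(["2015-06-01", "2021-01-15", "2023-09-30"]),
    "last_login": pd.to_datetime(["2024-05-01", "2024-05-20", "2024-04-01"]),
})
as_of = pd.Timestamp("2024-06-01")  # reference date for computing ages

# Drop an identifier that carries no predictive signal
features = accounts.drop(columns=["account_id"])

# Synthesize a ratio and a difference
features["utilization"] = features["balance"] / features["credit_limit"]
features["headroom"] = features["credit_limit"] - features["balance"]

# Convert timestamps to ages in days, then drop the raw timestamps
features["account_age_days"] = (as_of - features["opened_at"]).dt.days
features["days_since_login"] = (as_of - features["last_login"]).dt.days
features = features.drop(columns=["opened_at", "last_login"])

print(features)
```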

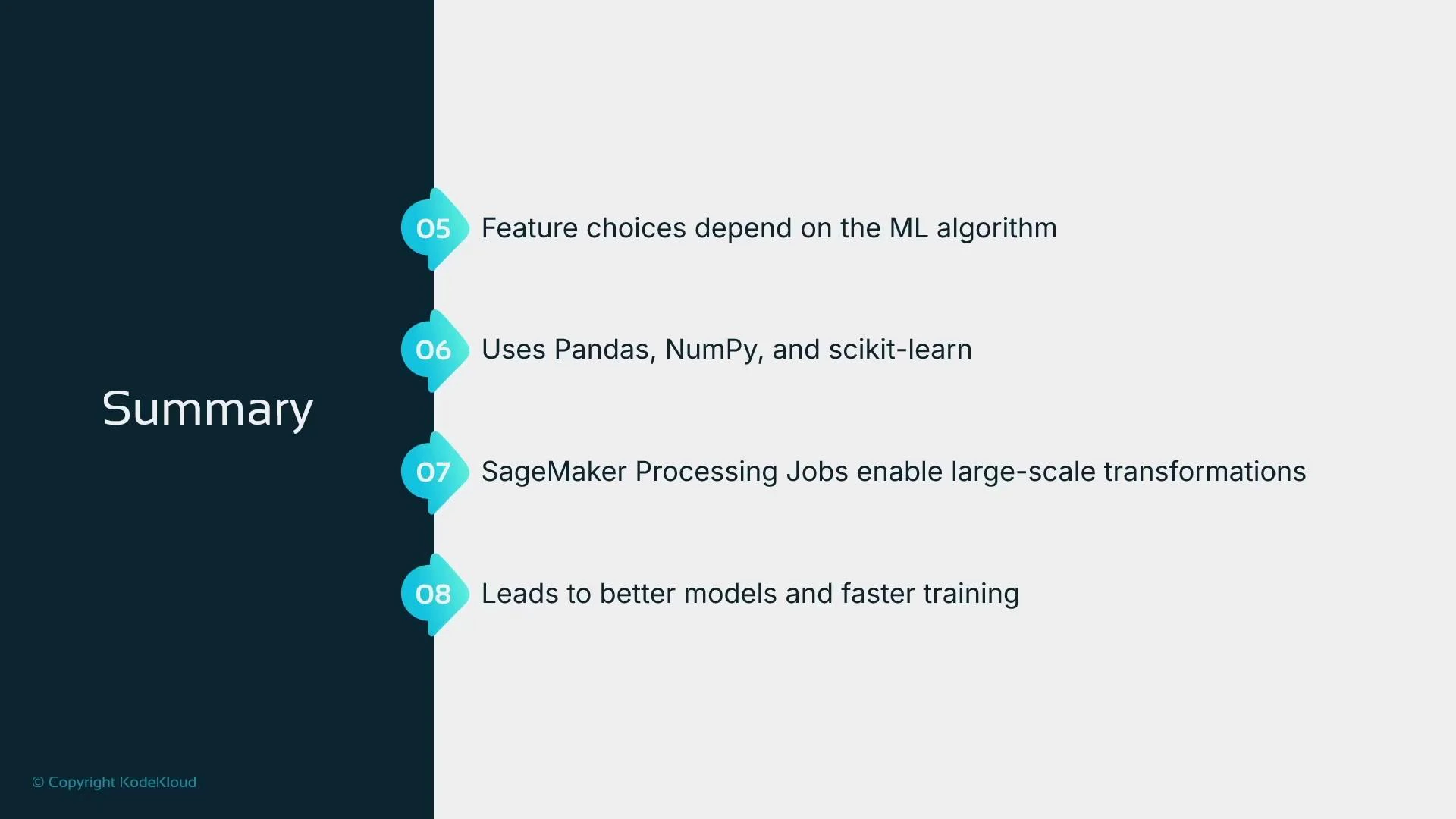

- Consider the model family: tree-based models, linear models, and neural networks expect different feature representations (e.g., scaling matters more for linear and neural models than for many tree models).
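A sketch of the scaling point on synthetic data from `make_classification` (the per-feature scale factors are arbitrary assumptions). For the linear model, `StandardScaler` sits inside a pipeline so scaling statistics are learned from the training data only. For the decision tree, splits depend only on the ordering of values within each feature, so multiplying features by positive constants leaves its predictions unchanged.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(n_samples=300, n_features=4, random_state=0)
# Put the four features on very different scales (illustrative factors)
X_rescaled = X * np.array([1.0, 1_000.0, 10.0, 50.0])

# Linear model: standardize inside the pipeline so scaling is fit on training data only
linear = make_pipeline(StandardScaler(), LogisticRegression())
linear.fit(X_rescaled, y)
scaled = linear.named_steps["standardscaler"].transform(X_rescaled)

# Tree model: a positive per-feature rescaling preserves value ordering,
# so the learned partitions (and predictions) stay the same
tree_raw = DecisionTreeClassifier(max_depth=3, random_state=0).fit(X, y)
tree_rescaled = DecisionTreeClassifier(max_depth=3, random_state=0).fit(X_rescaled, y)
same_predictions = np.array_equal(tree_raw.predict(X), tree_rescaled.predict(X_rescaled))
print("tree predictions unchanged by rescaling:", same_predictions)
```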

| Resource | Use case |
|---|---|
| pandas | Tabular data manipulation and feature construction |
| NumPy | Numerical operations and vectorized transforms |
| scikit-learn | Preprocessing pipelines, encoders, and transformers |
| SageMaker Processing Jobs | Scalable, managed data transforms for large datasets |
