Now that your endpoint shows as InService, the next step is to send a real-time inference request from your Jupyter notebook. This guide shows two common ways to call a SageMaker real-time endpoint:

- Using boto3 with the SageMaker Runtime client (low-level).

- Using the SageMaker Python SDK and the Predictor abstraction (higher-level).

Two boto3 clients are relevant here:

- `boto3.client('sagemaker')`: used to create, configure, and delete SageMaker Models, EndpointConfigurations, and Endpoints.

- `boto3.client('sagemaker-runtime')`: used to invoke real-time endpoints.

When invoking a real-time endpoint from code, always use the SageMaker Runtime client (`'sagemaker-runtime'`). The SageMaker service client (`'sagemaker'`) does not provide `invoke_endpoint`, so it cannot be used for real-time inference requests.

Test the endpoint using boto3 (SageMaker Runtime)

This example shows how to call the endpoint directly using the SageMaker Runtime API. Ensure the variable `endpoint_name` matches the endpoint you created earlier.
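A minimal sketch of such a call follows. The helper name `invoke_csv`, the CSV request format, and the JSON response format are assumptions for illustration; adjust `ContentType` and `Accept` to whatever your model container actually supports.

```python
import json


def invoke_csv(runtime_client, endpoint_name, csv_row):
    """Send one CSV row to a real-time endpoint and parse the JSON reply."""
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",      # assumption: the model accepts CSV input
        Accept="application/json",   # assumption: the model can reply in JSON
        Body=csv_row,
    )
    # response["Body"] is a streaming object; read() returns raw bytes.
    raw_bytes = response["Body"].read()
    return json.loads(raw_bytes.decode("utf-8"))


# In the notebook (needs AWS credentials and an InService endpoint):
#   import boto3
#   runtime = boto3.client("sagemaker-runtime")
#   print(invoke_csv(runtime, endpoint_name, "5.1,3.5,1.4,0.2"))
```

The client is passed in as an argument so the same function works with any configured session or region. The sample row in the usage comment is a placeholder, not data from this guide.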

The decoded response is expected to contain a `predictions` array whose entries have a `score` field.

Use the SageMaker Python SDK (Model.deploy + Predictor)

The SageMaker Python SDK provides a higher-level API to create and deploy models. `Model.deploy(...)` creates the EndpointConfiguration and Endpoint in one call. Behavior varies by the Model class used:

- Framework-specific model classes (e.g., `sagemaker.xgboost.XGBoostModel`, `sagemaker.sklearn.SKLearnModel`, `sagemaker.pytorch.PyTorchModel`, `sagemaker.tensorflow.TensorFlowModel`): `model.deploy(...)` returns a `Predictor` instance.

- The base `sagemaker.model.Model` class: `model.deploy(...)` returns `None`; you must instantiate a `Predictor` and attach it to the created endpoint name.
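The two cases can be handled by one small helper, sketched below. The names `default_predictor` and `deploy_and_get_predictor` are hypothetical, the CSV and JSON serializer choices are assumptions about the model's payload formats, and the imports assume the module layout of SageMaker Python SDK v2.

```python
def default_predictor(endpoint_name):
    """Build a Predictor for an existing endpoint (SageMaker Python SDK v2 layout)."""
    # Imported lazily so the deploy helper below has no hard SDK dependency.
    from sagemaker.deserializers import JSONDeserializer
    from sagemaker.predictor import Predictor
    from sagemaker.serializers import CSVSerializer

    return Predictor(
        endpoint_name=endpoint_name,
        serializer=CSVSerializer(),        # assumption: CSV request payloads
        deserializer=JSONDeserializer(),   # assumption: JSON responses
    )


def deploy_and_get_predictor(model, instance_type, predictor_factory=default_predictor):
    """Deploy `model` and return an object that exposes predict()."""
    predictor = model.deploy(initial_instance_count=1, instance_type=instance_type)
    if predictor is None:
        # Base Model: deploy() returned None, so attach to the endpoint by name.
        predictor = predictor_factory(model.endpoint_name)
    return predictor
```

With a framework-specific model the returned `Predictor` is passed through unchanged; with the base `Model` the helper reads `model.endpoint_name`, which the SDK sets during `deploy()`, and builds a `Predictor` for it.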

| Method | Use case | Returns |
|---|---|---|
| boto3 + sagemaker-runtime | Low-level control / direct invocation | Raw response stream; you decode bytes |
| sagemaker.model.*.Model.deploy() | Quick deployment for framework-specific models | Predictor (if framework-specific) or None (base Model) |
| sagemaker.predictor.Predictor | Explicit client for requests | predict() returns bytes or str (decode if bytes) |
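Because `predict()` may hand back either bytes or a string, a small normalizing step keeps the calling code simple. The sketch below uses a hypothetical helper name, `decode_prediction`, and assumes the endpoint replies with UTF-8 encoded JSON.

```python
import json


def decode_prediction(result):
    """Turn a predict() result (bytes or str) into a Python object."""
    if isinstance(result, (bytes, bytearray)):
        # Raw bytes from the endpoint; assumption: UTF-8 encoded text.
        result = result.decode("utf-8")
    return json.loads(result)
```

If a deserializer such as `JSONDeserializer` is already configured on the `Predictor`, this step is unnecessary because `predict()` returns parsed objects directly.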

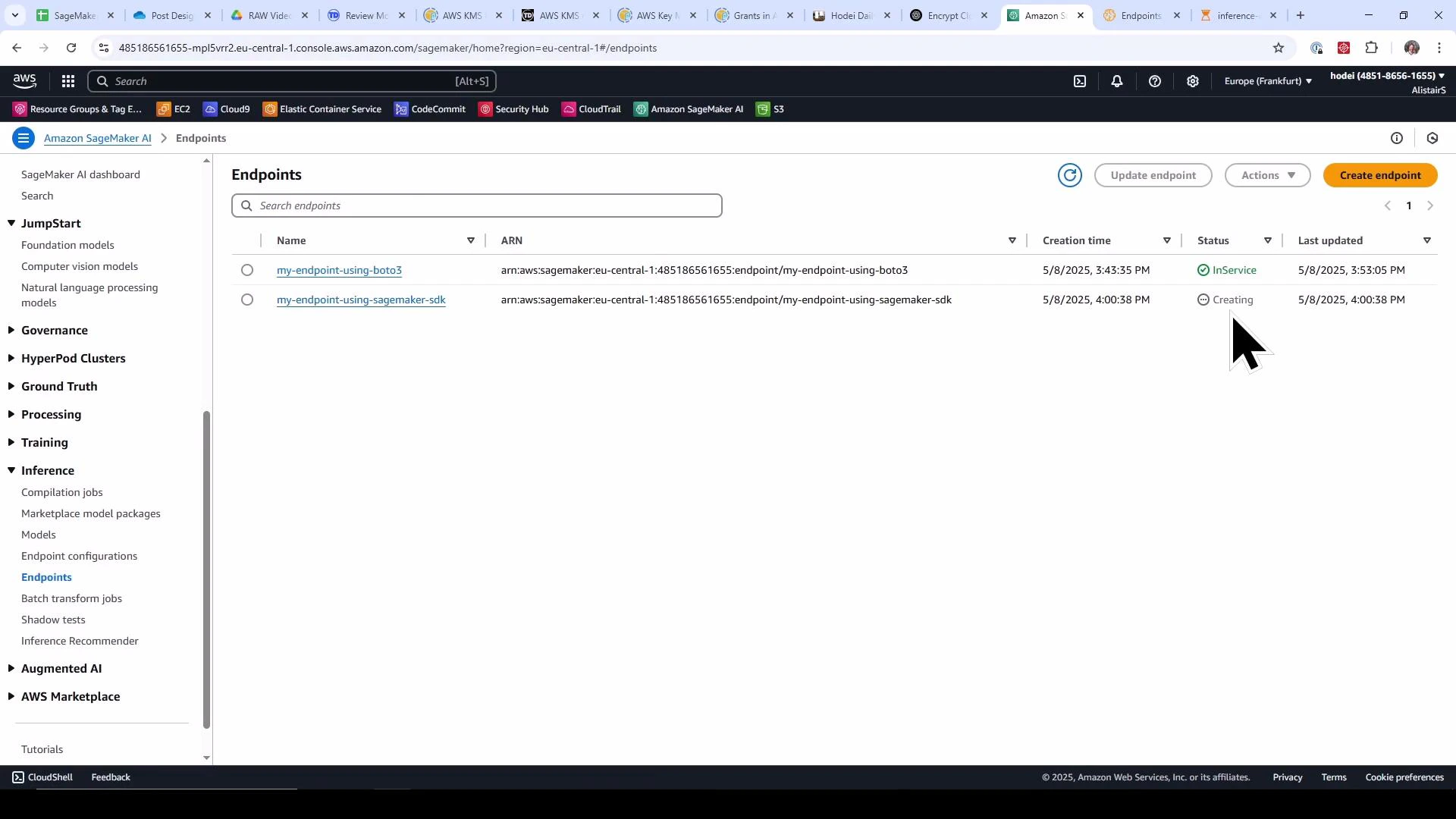

Visual confirmation in the SageMaker console

Once your SDK-created endpoint starts provisioning, check the SageMaker console to confirm status. The screenshot below shows one endpoint InService and another Creating while the SDK-created endpoint is provisioning.

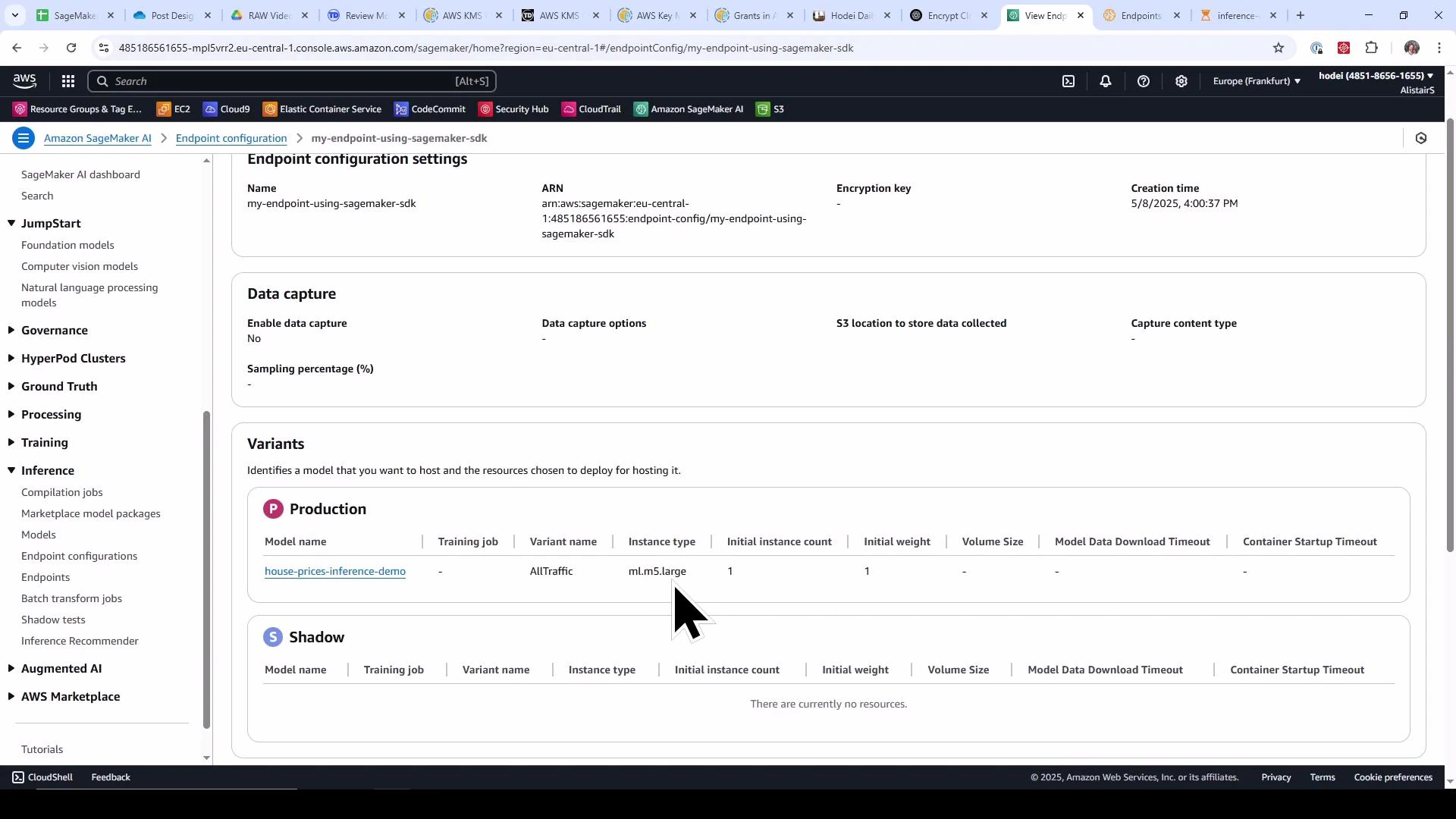

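The same status can be checked from the notebook instead of the console. The following is a sketch only: `wait_until_in_service` is a hypothetical helper, and the polling interval and retry count are arbitrary defaults. It expects a `boto3.client('sagemaker')` client, not the runtime client.

```python
import time


def wait_until_in_service(sm_client, endpoint_name, poll_seconds=30,
                          max_polls=40, sleep=time.sleep):
    """Poll describe_endpoint until the endpoint is InService or has failed."""
    for _ in range(max_polls):
        status = sm_client.describe_endpoint(EndpointName=endpoint_name)["EndpointStatus"]
        if status == "InService":
            return status
        if status == "Failed":
            raise RuntimeError(f"Endpoint {endpoint_name} failed to deploy")
        sleep(poll_seconds)  # still provisioning; check again later
    raise TimeoutError(f"Endpoint {endpoint_name} was not InService after {max_polls} polls")
```

boto3 also ships a built-in waiter for this, `sm_client.get_waiter('endpoint_in_service')`, which may be preferable when custom handling of the `Failed` state is not needed.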

Cleanup — remove endpoints and endpoint configurations
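A minimal sketch of the cleanup for this section is shown here. The function name `delete_all_endpoints` is hypothetical, and the code assumes you really do want to remove every endpoint visible to the client's account and region. It waits for each endpoint deletion to finish before removing the matching endpoint configuration.

```python
def delete_all_endpoints(sm_client):
    """Delete every endpoint in the client's account/region, then its config."""
    # Collect names first so deletions do not interfere with pagination.
    names = []
    for page in sm_client.get_paginator("list_endpoints").paginate():
        names.extend(ep["EndpointName"] for ep in page["Endpoints"])

    deleted = []
    waiter = sm_client.get_waiter("endpoint_deleted")
    for name in names:
        # Look up the config name before the endpoint disappears.
        config_name = sm_client.describe_endpoint(EndpointName=name)["EndpointConfigName"]
        sm_client.delete_endpoint(EndpointName=name)
        waiter.wait(EndpointName=name)  # block until deletion has finished
        sm_client.delete_endpoint_config(EndpointConfigName=config_name)
        deleted.append((name, config_name))
    return deleted


# In the notebook (this removes resources, so review the target account first):
#   import boto3
#   print(delete_all_endpoints(boto3.client("sagemaker")))
```

The function returns the `(endpoint, config)` pairs it removed, which makes it easy to log what was deleted.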

Running endpoints incurs charges. The cleanup code for this step enumerates endpoints and deletes each endpoint along with its EndpointConfiguration. Confirm that you want to remove these resources in the current AWS account and region before running it.

Deleting an endpoint is asynchronous. After calling `delete_endpoint`, the endpoint transitions to `Deleting` and may take some time to disappear. If you attempt to delete the endpoint configuration while the endpoint is still deleting, `delete_endpoint_config` may fail because the configuration is still in use. Poll `describe_endpoint` (or use a waiter) and wait until the endpoint no longer exists before deleting the endpoint configuration.

Summary

- Use the SageMaker Runtime client (`boto3.client('sagemaker-runtime')`) to invoke real-time endpoints with `invoke_endpoint(...)`.

- The SageMaker Python SDK (`Model.deploy(...)`) simplifies endpoint creation by creating both the EndpointConfiguration and Endpoint in one step.

- If you deployed with a framework-specific `Model` class, `deploy()` returns a `Predictor`. If you used the base `Model` class, `deploy()` returns `None` and you must construct a `Predictor` bound to the endpoint name before calling `predict`.

- Always delete endpoints and endpoint configurations after demos or tests to avoid ongoing charges.

Links and references

- Amazon SageMaker documentation

- SageMaker Runtime API — invoke-endpoint

- SageMaker Python SDK — Predictor class