Feature engineering is the process of transforming prepared dataset columns into representations that are more useful for a chosen machine learning algorithm. It sits between dataset preparation (cleaning, imputation) and model training. Good feature engineering can improve predictive performance, reduce training time, and make models more robust.Documentation Index

Fetch the complete documentation index at: https://notes.kodekloud.com/llms.txt

Use this file to discover all available pages before exploring further.

Why feature engineering matters

Even after basic cleaning (e.g., filling missing values), raw data often needs further transformation:- Irrelevant or redundant features slow training and may reduce model quality.

- Noise or outliers can bias learning and harm generalization.

- Categorical features must be encoded in ways that reflect their semantics (ordinal vs nominal). Poor choices can mislead models.

- Strongly skewed numeric inputs can violate algorithmic assumptions and reduce performance.

Common feature-engineering activities

| Activity | Purpose | Typical methods / tools |

|---|---|---|

| Feature selection | Remove irrelevant or redundant inputs to speed training and avoid overfitting | Correlation analysis, feature importance, recursive feature elimination |

| Categorical encoding | Represent categorical data numerically | One-hot, ordinal, target encoding, hashing, embeddings |

| Feature extraction | Create informative features from raw values | Date parts, text lengths, tokenization, embeddings |

| Feature transformation | Reduce skew and stabilize variance | log, sqrt, power transforms, Box-Cox |

| Feature interactions | Capture multiplicative or composite effects | Multiplication, concatenation, polynomial features |

| Aggregation / grouping | Add group-level statistics | groupby / pivot_table (mean, sum, count) |

| Scaling / normalization | Put features on comparable scales | StandardScaler, MinMaxScaler |

When features have very high cardinality (for example, postal codes or item IDs), prefer techniques that limit dimensionality (target encoding, hashing, or learned embeddings) instead of naive one-hot encoding, which explodes feature count.

Hands-on examples (pandas + scikit-learn)

Start by loading a small sample dataset into a pandas DataFrame for local experimentation:Encoding categorical variables

- One-hot encoding expands nominal categories into binary columns (useful for low-cardinality categorical variables).

- Ordinal encoding maps categories to integers when a natural order exists.

- For dates, extract year/month/dayofweek with dt accessor.

- For text, use length, token counts, or embeddings depending on needs.

Be careful with target encoding: if you encode categories using information from the target without proper cross-validation or out-of-fold strategies, you can leak label information and inflate evaluation metrics.

Feature transformations and interactions

- Use log or sqrt transforms to reduce skew and moderate the influence of extreme values.

- Create interaction features to capture multiplicative or combined effects.

Aggregations and grouping

Group-level statistics can be powerful features (e.g., mean price by city). Use groupby or pivot_table and merge results back into the main DataFrame.Derived features, missing-value handling, dropping redundant columns, and scaling

Create derived features such as age, handle missing values with imputation strategies, drop original columns if redundant, compute ratios like price per square foot, and scale numeric columns for algorithms that require normalized inputs.Where to execute feature-engineering code at scale

- Local development: pandas + scikit-learn on a developer laptop is ideal for prototyping and experiments.

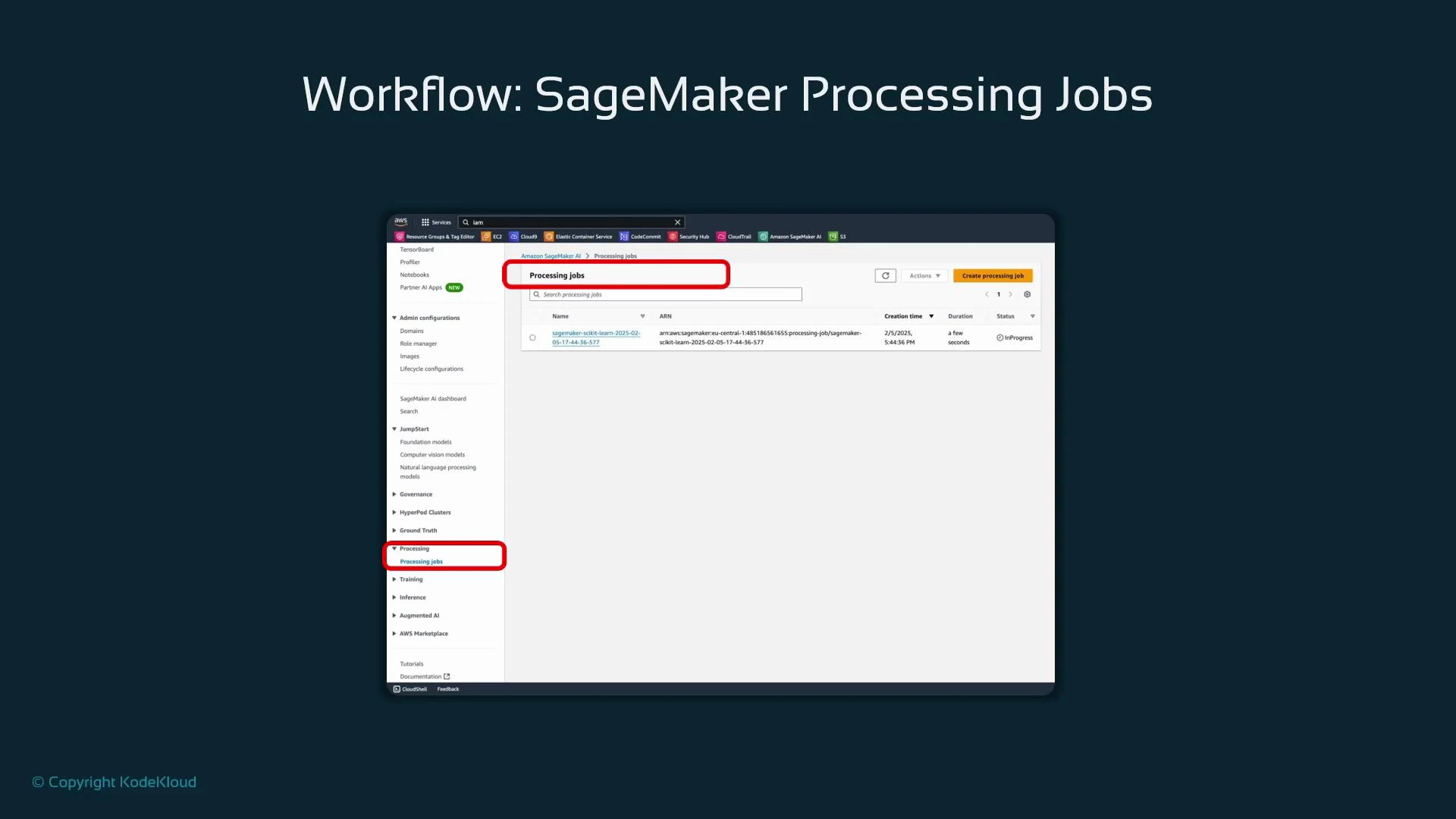

- Production-scale or repeatable pipelines: use managed compute and orchestration. SageMaker Processing is a common choice to run these transformations on managed instances and produce reproducible outputs.

- A container (framework image, e.g., scikit-learn)

- A processing script that reads inputs, transforms data, and writes outputs

- Instance type and count

- IAM role with appropriate permissions

| Parameter | Purpose | Example |

|---|---|---|

| framework_version | Version of the SageMaker scikit-learn container | ”1.2-1” |

| instance_type | Compute instance for processing | ”ml.m5.large” |

| instance_count | Number of instances to run in parallel | 1 or more |

| code / source_dir | Script and code dependencies | preprocessing.py in src/ |

| inputs / outputs | S3 or local paths for input and output artifacts | S3 paths for raw and transformed data |

Summary and best practices

- Feature engineering is essential to present the most informative inputs to a model and usually improves performance and convergence speed.

- Choose encodings and transformations intentionally—consider domain knowledge, algorithm assumptions, and feature cardinality.

- Prototype locally with pandas and scikit-learn; scale production jobs with SageMaker Processing or other managed services.

- Always validate feature changes with proper cross-validation and monitor for data leakage (especially with target-based encodings).

Links and references

- pandas documentation

- scikit-learn documentation

- SageMaker Processing jobs

- StandardScaler (scikit-learn)

- MinMaxScaler (scikit-learn)