In this lesson we’ll train a model in Amazon SageMaker Studio using the SageMaker Python SDK. This guide walks through a compact, runnable notebook flow that demonstrates:Documentation Index

Fetch the complete documentation index at: https://notes.kodekloud.com/llms.txt

Use this file to discover all available pages before exploring further.

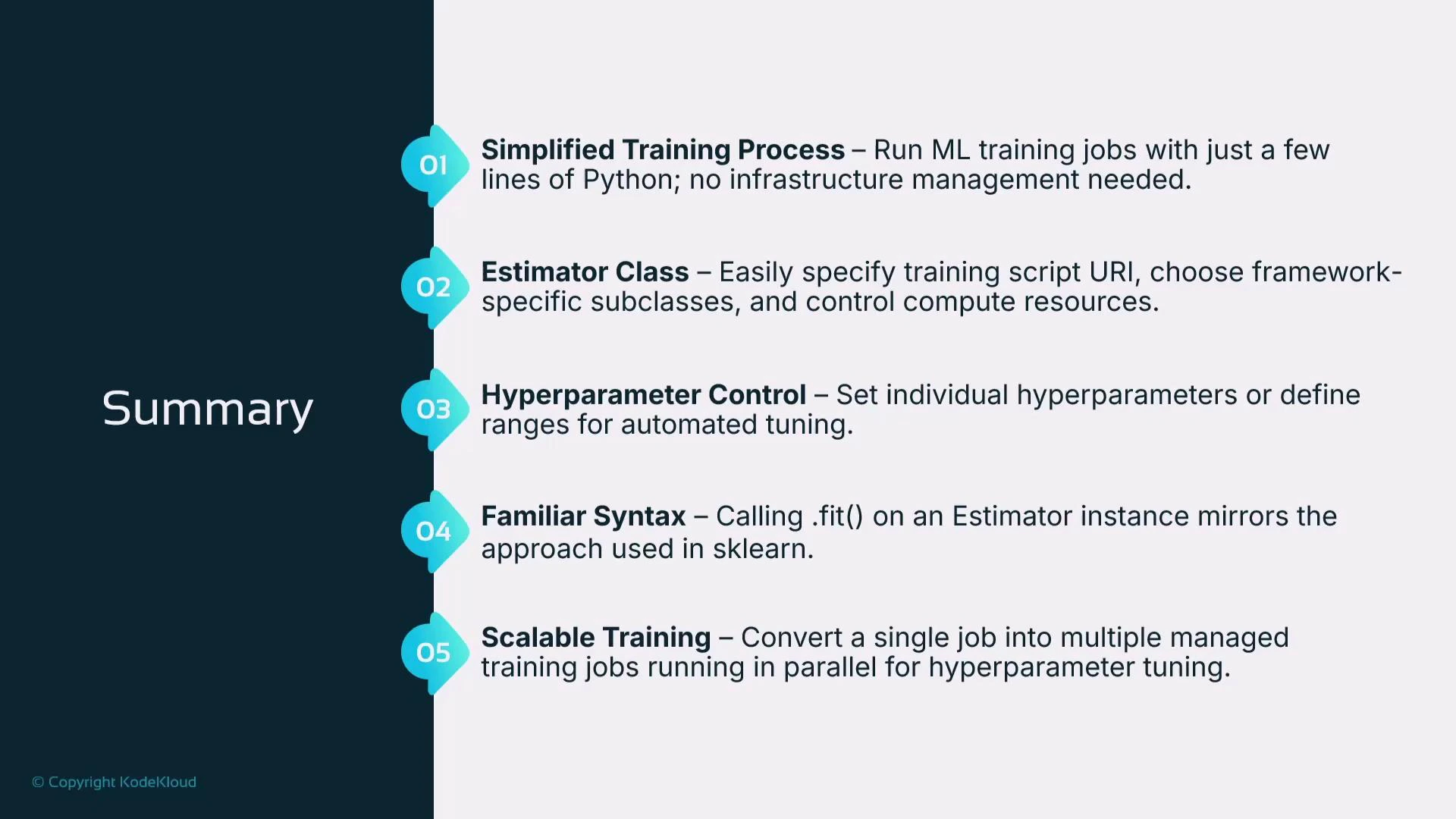

- Defining and running a training job with the SageMaker SDK’s Estimator class.

- Launching a Hyperparameter Tuning job to explore multiple hyperparameter combinations in parallel.

- Inspecting and retrieving model artifacts produced by training.

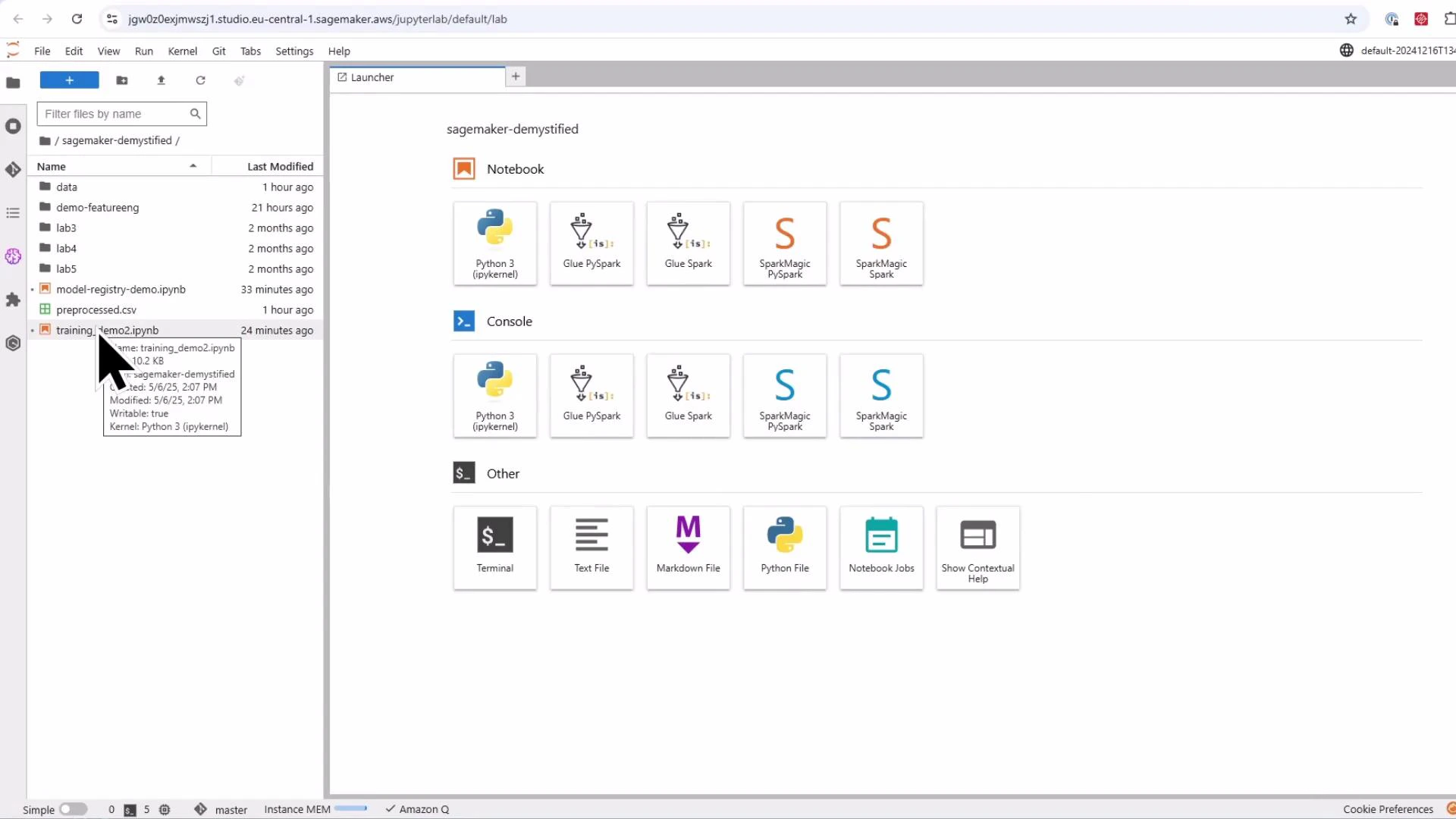

- Open a Jupyter notebook in SageMaker Studio.

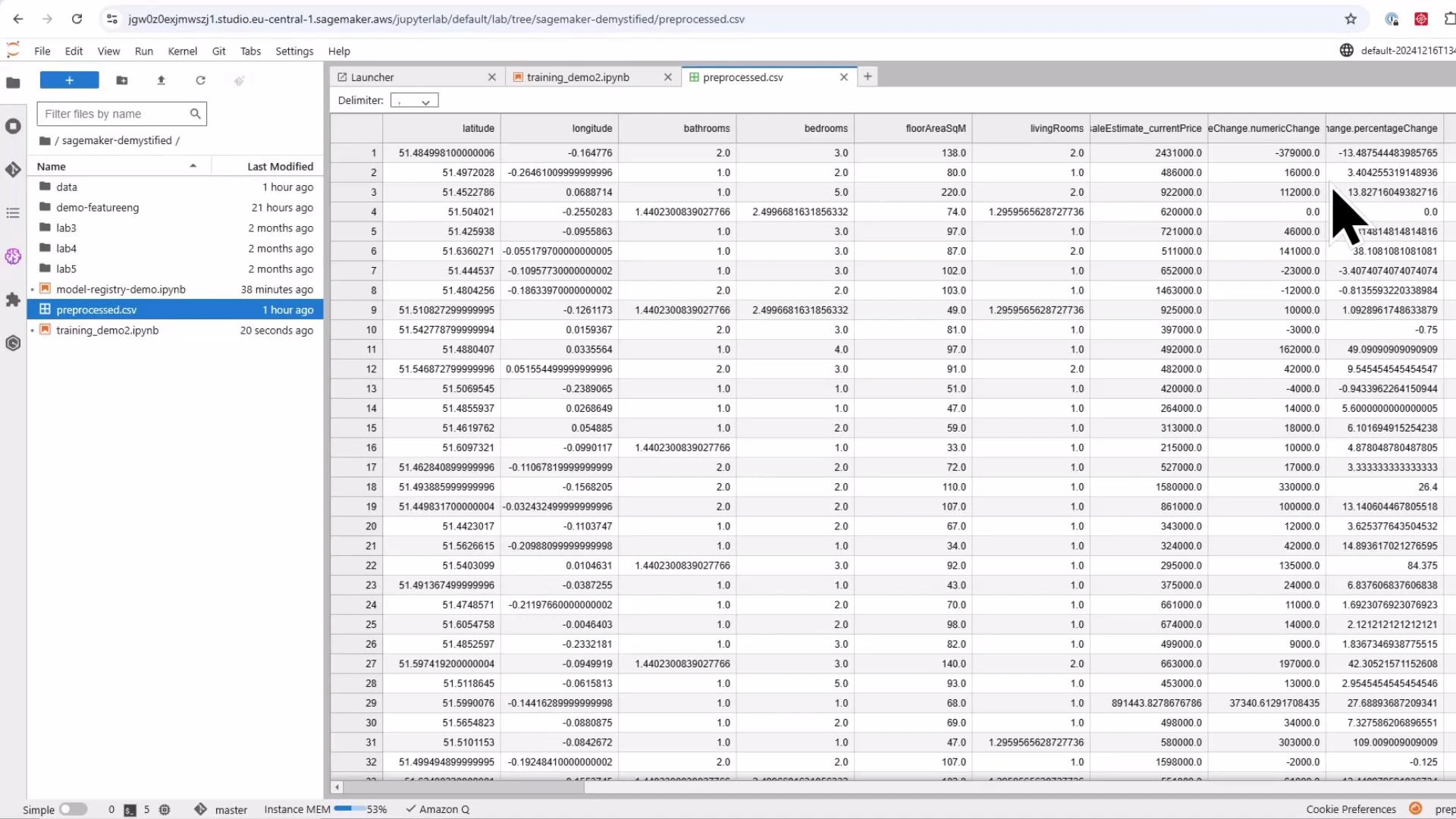

- Prepare data: split into train/validation/test (≈ 70% / 20% / 10%).

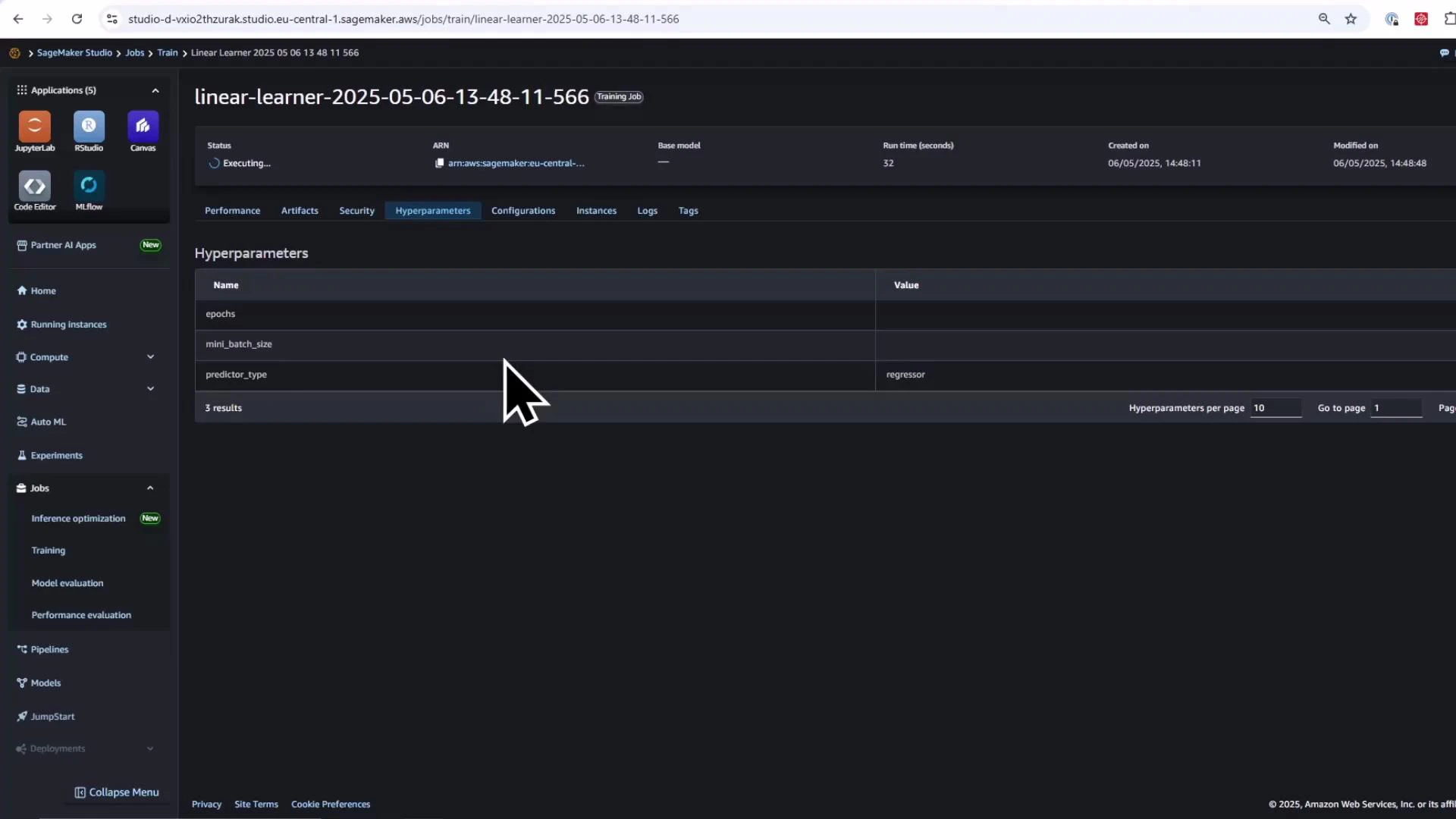

- Create and run a SageMaker training job using Estimator — provide the container image, compute resources, and IAM role.

- We’ll use the generic Estimator from the SageMaker SDK, so we must provide the container image URI for the training algorithm. For this demo we use SageMaker’s built-in Linear Learner (regression).

- Workflow: set hyperparameters (mini-batch size, epochs, etc.), upload CSV data to Amazon S3, call estimator.fit(…), then inspect the model artifact (model.tar.gz) in S3.

- To accelerate experimentation, we create a Hyperparameter Tuning job to run multiple training jobs in parallel and pick the best model by an objective metric (e.g., validation RMSE).

- The sequence below contains the main notebook steps: imports, session/role setup, load & split data, save CSVs, upload to S3, define estimator, and run training.

- This example assumes a preprocessed CSV file (preprocessed.csv) is available in the notebook filesystem.

- Below we configure the Estimator to use a single ml.m5.large instance for demonstration. Adjust instance type and count for larger jobs.

- SageMaker creates a managed training job and provisions the compute instance(s). Monitor progress from SageMaker Studio (Jobs > Training) or the SageMaker Console training jobs page. Logs stream to the notebook cell output and include metrics such as validation RMSE and MSE.

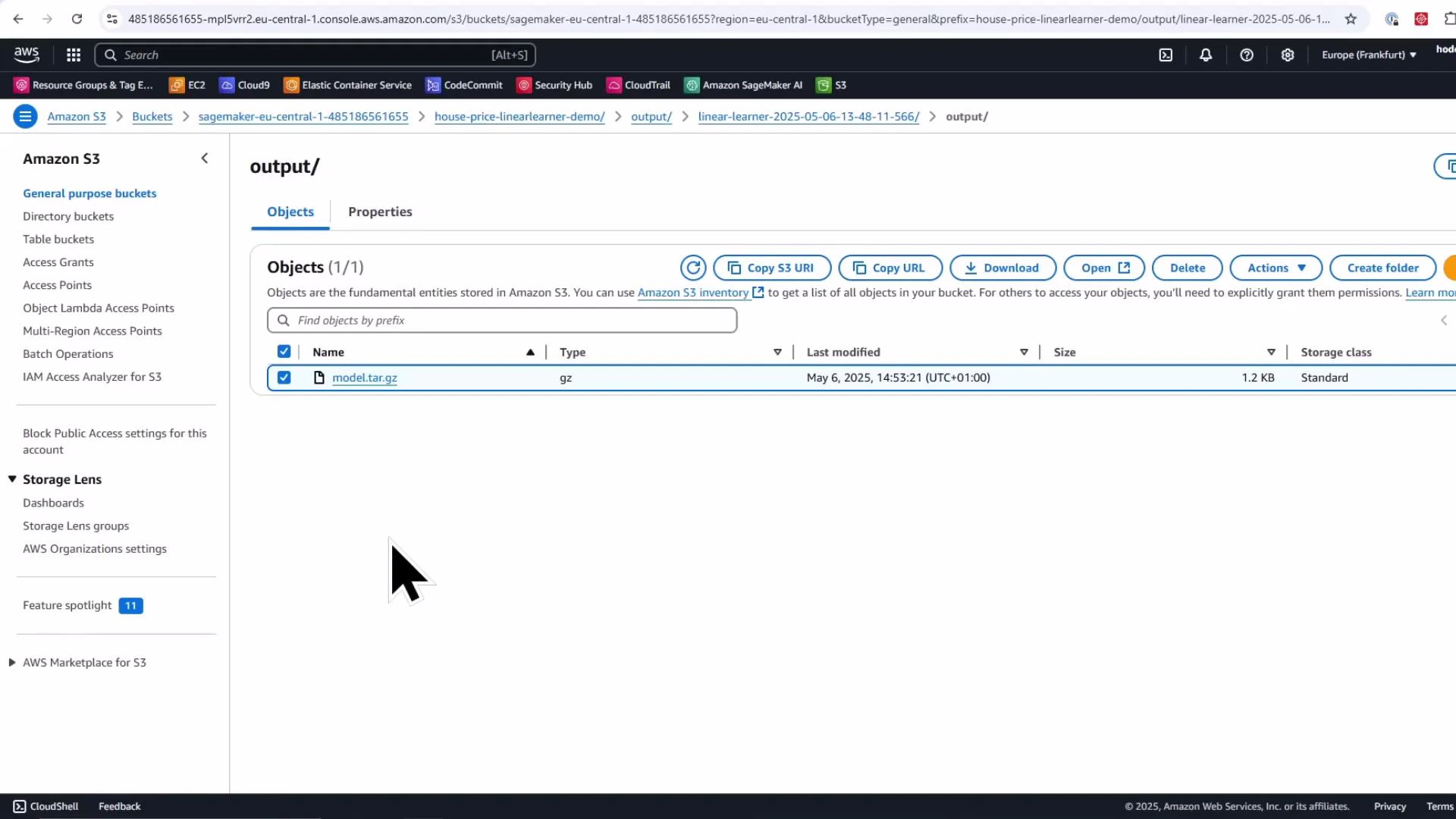

- After training completes, the model artifact (model.tar.gz) is saved to the configured S3 output prefix.

- Define ranges for the hyperparameters you want SageMaker to explore (ContinuousParameter or IntegerParameter).

- Create a HyperparameterTuner and call fit(); the tuner launches multiple training jobs (up to max_parallel_jobs concurrently) and returns the best training job according to the specified objective metric.

By default tuner.fit(…) waits until the tuning job finishes and blocks the notebook cell. To launch asynchronously, call tuner.fit(…, wait=False) so you can continue other work while the tuner runs.

- After tuning completes, use Boto3 or the SageMaker SDK to describe the tuning job and obtain the BestTrainingJob and its objective metric.

- If your model shows large RMSE values, investigate feature scaling, outliers, target distribution, and whether a linear model is appropriate. Hyperparameter tuning speeds up exploration but cannot replace good feature engineering and the right model choice.

- Many built-in algorithms expect the label as the first column and CSV inputs without headers or index — ensure you follow the input formatting expected by the chosen algorithm.

- For faster iteration, use smaller subsets of data or fewer epochs during development, then scale up for final training runs.

- Created a SageMaker training job with the Python SDK Estimator class.

- Retrieved the built-in Linear Learner container image and provided it to a generic Estimator.

- Uploaded train/validation/test CSVs to S3 and started training with estimator.fit(…).

- Launched a HyperparameterTuner to try multiple hyperparameter combinations in parallel and retrieved the best model.

- Verified the model artifact saved to S3 (model.tar.gz).

| Resource | Purpose | Link |

|---|---|---|

| SageMaker Python SDK | API reference for Estimator, inputs, and training workflows | https://sagemaker.readthedocs.io/en/stable/ |

| Estimator class docs | API for creating and running training jobs | https://sagemaker.readthedocs.io/en/stable/api/training/estimators.html#sagemaker.estimator.Estimator |

| SageMaker Hyperparameter Tuning | Tuner and strategies | https://sagemaker.readthedocs.io/en/stable/tuner.html |

| Linear Learner algorithm | Built-in algorithm overview for regression/classification | https://docs.aws.amazon.com/sagemaker/latest/dg/linear-learner.html |

| SageMaker Studio | Studio overview and getting started | https://docs.aws.amazon.com/sagemaker/latest/dg/studio.html |

| Boto3 SageMaker client | Programmatic access to tuning job metadata | https://boto3.amazonaws.com/v1/documentation/api/latest/reference/services/sagemaker.html |

| Amazon S3 Console guide | Managing S3 artifacts and objects | https://docs.aws.amazon.com/AmazonS3/latest/userguide/using-console.html |

- Try different instance types (e.g., ml.m5.2xlarge) and compare training time vs cost.

- Replace Linear Learner with other built-in algorithms or your own training container to evaluate model performance.

- Integrate model evaluation and model deployment (SageMaker endpoints or batch transform) as the next phase after training.