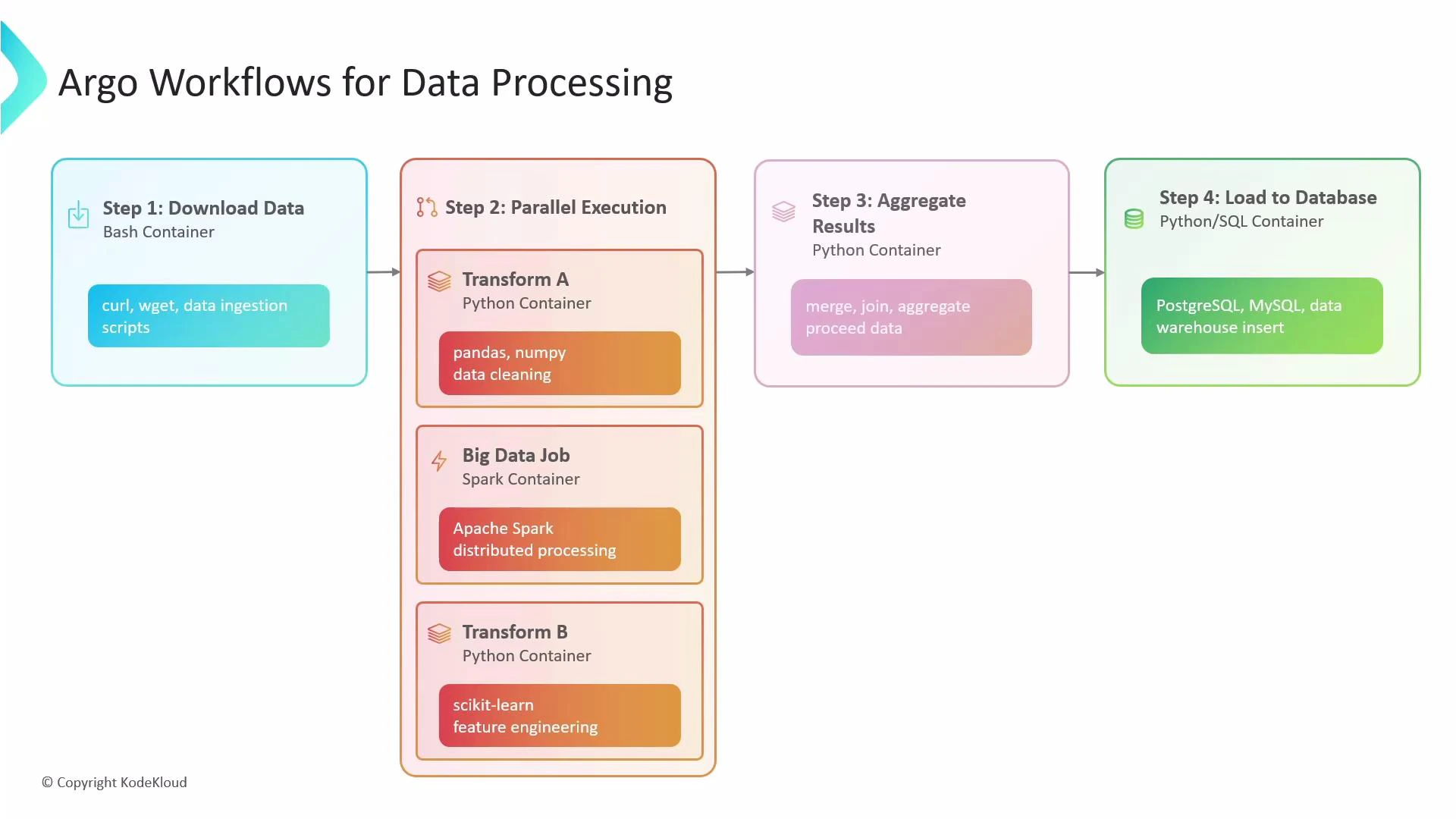

In this lesson, we explore how Argo Workflows enables scalable, container-based data processing pipelines on Kubernetes. Argo Workflows lets you define complex, fault-tolerant pipelines where each step runs as a container. This makes it easy to mix tools and languages (for example, Bash for downloads, Python/pandas for transformations, and Spark for heavy compute) and orchestrate them as a single reproducible workflow.

A typical Argo data pipeline may:

- Ingest data (e.g., curl/wget in a small container).

- Fan out to multiple transformers in parallel (Python for cleaning, Spark for heavy processing, Python for feature engineering).

- Merge parallel outputs into one dataset.

- Aggregate results and load into a SQL database (PostgreSQL/MySQL).
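The four stages above can be sketched as a DAG workflow. This is a minimal illustration, not a production pipeline: the task names, the `alpine:3.19` image, and the `run-step` template are hypothetical placeholders, and each step only echoes a message where a real pipeline would run curl, Python, or Spark.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Workflow
metadata:
  generateName: data-pipeline-
spec:
  entrypoint: pipeline
  templates:
    - name: pipeline
      dag:
        tasks:
          # Stage 1: ingest the raw data.
          - name: ingest
            template: run-step
            arguments:
              parameters:
                - name: message
                  value: "ingest raw data"
          # Stage 2: three transformers that all depend only on ingest,
          # so Argo can run them in parallel.
          - name: clean
            template: run-step
            dependencies: [ingest]
            arguments:
              parameters:
                - name: message
                  value: "clean data"
          - name: heavy-compute
            template: run-step
            dependencies: [ingest]
            arguments:
              parameters:
                - name: message
                  value: "heavy processing"
          - name: features
            template: run-step
            dependencies: [ingest]
            arguments:
              parameters:
                - name: message
                  value: "feature engineering"
          # Stage 3: merge waits for every transformer to finish.
          - name: merge
            template: run-step
            dependencies: [clean, heavy-compute, features]
            arguments:
              parameters:
                - name: message
                  value: "merge outputs"
          # Stage 4: aggregate and load into the database.
          - name: load
            template: run-step
            dependencies: [merge]
            arguments:
              parameters:
                - name: message
                  value: "aggregate and load"
    # Placeholder step; a real pipeline would use one template per tool.
    - name: run-step
      inputs:
        parameters:
          - name: message
      container:
        image: alpine:3.19
        command: [sh, -c]
        args: ["echo {{inputs.parameters.message}}"]
```

In practice each stage would likely get its own template and image, so that the download, cleaning, and Spark steps can be sized and versioned independently.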

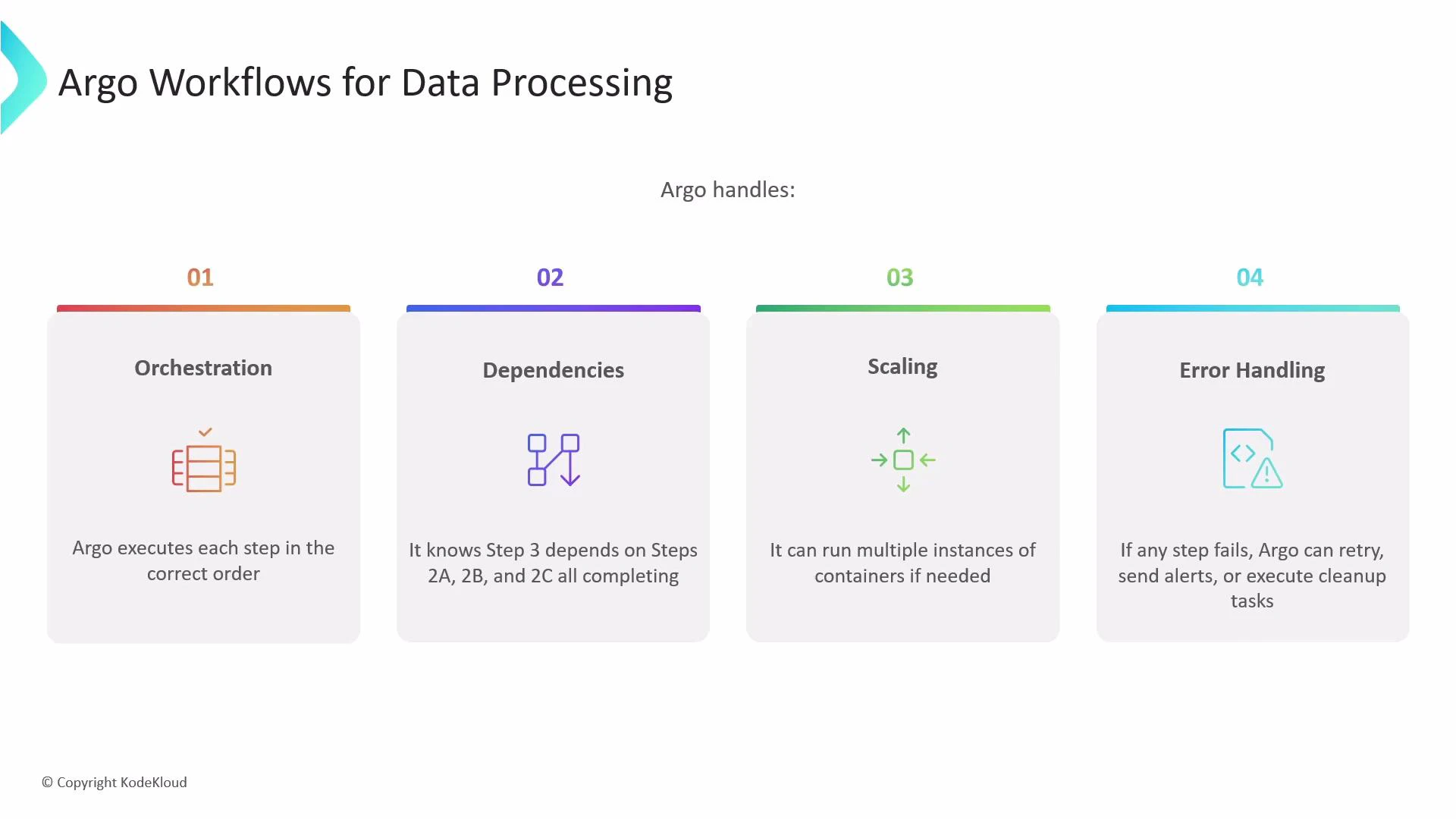

What Argo does behind the scenes

Argo Workflows orchestrates execution of containerized tasks according to your workflow definition. It:

- Executes tasks in the order you define.

- Tracks and enforces dependencies (e.g., a merge step waits for all transformers).

- Scales by running multiple container instances in parallel.

- Handles failures with retries, alerts, and cleanup options.
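As a rough sketch of the failure-handling point, a template can declare a `retryStrategy`, and the workflow can name an `onExit` template that runs when the workflow finishes, whether it succeeded or failed. The template names, image, and URL below are hypothetical; the exit handler only echoes the final status, where a real one might send an alert or delete temporary data.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Workflow
metadata:
  generateName: retry-demo-
spec:
  entrypoint: download
  onExit: cleanup              # exit handler, runs on success and on failure
  templates:
    - name: download
      retryStrategy:
        limit: "3"             # retry a failed step up to 3 times
        retryPolicy: OnFailure
        backoff:
          duration: "10s"      # wait before the first retry
          factor: "2"          # double the wait on each further retry
          maxDuration: "5m"    # stop retrying after this much time in total
      container:
        image: alpine:3.19
        command: [sh, -c]
        # Placeholder URL; wget exits non-zero on failure, which triggers a retry.
        args: ["wget -O /tmp/data.csv https://example.com/data.csv"]
    - name: cleanup
      container:
        image: alpine:3.19
        command: [sh, -c]
        args: ["echo workflow finished with status {{workflow.status}}"]
```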

Note: Argo Workflows is the Argo project focused on running containerized workflows. Argo CD is a separate Argo project that provides GitOps for Kubernetes deployments (see GitOps with ArgoCD). This article focuses on Argo Workflows for pipeline orchestration.

Key features at a glance

| Feature | Use case | Example |
|---|---|---|
| Orchestration | Execute container steps in order | Download → Transform → Aggregate |
| Dependencies | Ensure merge waits for all parallel tasks | Step waits on 2a, 2b, 2c |
| Scaling | Run many container instances concurrently | Fan-out file processing |
| Error handling | Retries, backoff, cleanup hooks | Retry failed step 3 times |

withItems: parallel fan-out for batch processing

withItems lets you fan out over a static list (file names, table names, endpoints) and run multiple instances of a template. It is effectively a for-each loop that executes in parallel by default.

How it works: given items A, B, and C, Argo creates three independent instances of the referenced template (three pods). Each instance receives its item value via the `{{item}}` placeholder.

Example: process multiple files in parallel. A top-level template fans out over S3 file paths and invokes a processing template for each item.

- Pass `{{item}}` as an argument to the referenced template, and read it inside that template through an input parameter (`{{inputs.parameters.<name>}}`).

- Each withItems iteration runs in its own pod; parallelism only limits how many of those pods run at the same time.
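A minimal sketch of such a fan-out follows. The bucket paths, template names, parameter name `file`, and image are hypothetical, and the processing step only echoes the path it receives.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Workflow
metadata:
  generateName: process-files-
spec:
  entrypoint: fan-out
  templates:
    # Top-level template: one step expanded once per list item.
    - name: fan-out
      steps:
        - - name: process-file
            template: process
            arguments:
              parameters:
                - name: file
                  value: "{{item}}"   # replaced with the current list entry
            withItems:
              - "s3://example-bucket/raw/part-a.csv"
              - "s3://example-bucket/raw/part-b.csv"
              - "s3://example-bucket/raw/part-c.csv"
    # Processing template: reads the item through its input parameter.
    - name: process
      inputs:
        parameters:
          - name: file
      container:
        image: alpine:3.19
        command: [sh, -c]
        args: ["echo processing {{inputs.parameters.file}}"]
```

With three items in the list, this expands into three `process` pods that can run concurrently.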

Controlling concurrency with parallelism

Uncontrolled parallelism can overload cluster resources or downstream systems (databases, APIs). Use the workflow-level spec.parallelism field to throttle how many pods run concurrently across the entire workflow. For example, setting spec.parallelism to 2 limits a withItems loop to 2 concurrent pods.

Warning: Setting parallelism too high can exhaust node CPU/memory or overwhelm downstream services. Use resource requests/limits on containers and test with smaller parallelism before scaling up.
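The throttling described above might look like the following sketch. The item list, template names, image, and resource figures are illustrative assumptions; the relevant line is `parallelism: 2` under `spec`.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Workflow
metadata:
  generateName: throttled-fan-out-
spec:
  entrypoint: fan-out
  parallelism: 2               # at most 2 pods of this workflow run at once
  templates:
    - name: fan-out
      steps:
        - - name: process-item
            template: process
            arguments:
              parameters:
                - name: item
                  value: "{{item}}"
            withItems: [a, b, c, d, e, f]
    - name: process
      inputs:
        parameters:
          - name: item
      container:
        image: alpine:3.19
        command: [sh, -c]
        # sleep keeps each pod alive long enough to observe the throttling
        args: ["echo processing {{inputs.parameters.item}}; sleep 5"]
        resources:
          requests:
            cpu: 100m
            memory: 64Mi
          limits:
            cpu: 250m
            memory: 128Mi
```

Six items are still processed in six separate pods, but only two of them are running at any moment; the rest wait until a slot frees up.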

Best practices for data processing pipelines

- Use small, focused containers for each step (single responsibility).

- Define resource requests and limits for heavy workloads (Spark, large Python jobs).

- Use artifact storage (S3/GCS) or persistent volumes to pass large data between steps instead of base64-encoded outputs.

- Use retry/backoff strategies and timeouts for unreliable external systems.

- Monitor and log at each step; consider sidecar log aggregators or central observability.
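Several of these practices can be combined in one sketch: resource requests and limits, a per-template timeout, and passing a file between steps as an artifact. It assumes an artifact repository (for example S3 or GCS) is already configured for the cluster; the template names, paths, image, and resource figures are hypothetical.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Workflow
metadata:
  generateName: artifact-passing-
spec:
  entrypoint: main
  templates:
    - name: main
      steps:
        - - name: transform
            template: transform
        - - name: load
            template: load
            arguments:
              artifacts:
                # Hand the transform output to the load step as a file.
                - name: dataset
                  from: "{{steps.transform.outputs.artifacts.dataset}}"
    - name: transform
      activeDeadlineSeconds: 600   # fail this step if it runs longer than 10 minutes
      container:
        image: alpine:3.19
        command: [sh, -c]
        args: ["echo 'id,value' > /tmp/out.csv"]
        resources:
          requests:
            cpu: 500m
            memory: 512Mi
          limits:
            cpu: "1"
            memory: 1Gi
      outputs:
        artifacts:
          - name: dataset
            path: /tmp/out.csv     # uploaded to the artifact repository
    - name: load
      inputs:
        artifacts:
          - name: dataset
            path: /tmp/in.csv      # downloaded before the container starts
      container:
        image: alpine:3.19
        command: [sh, -c]
        args: ["wc -l /tmp/in.csv"]
```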