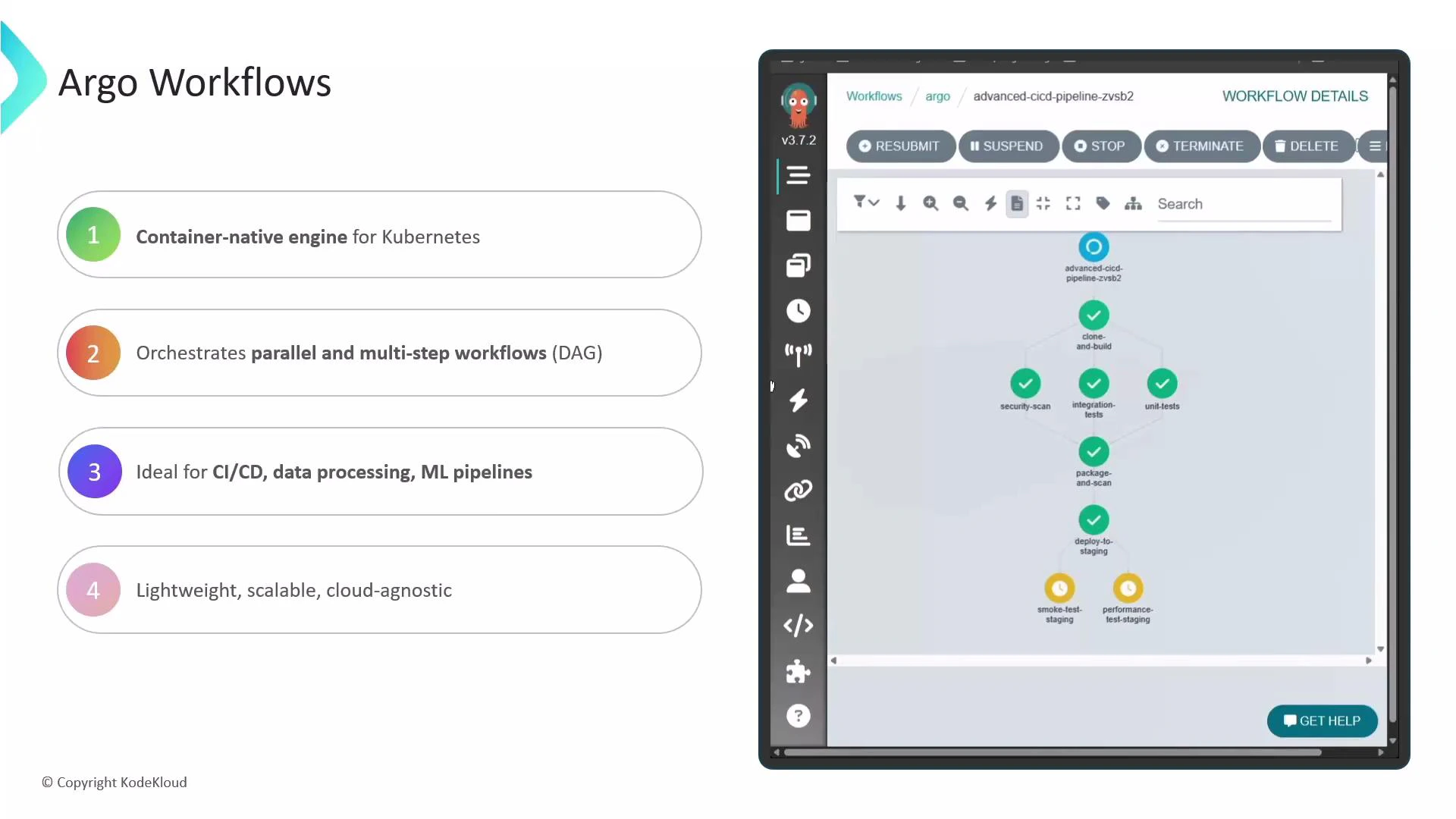

Argo Workflows is a container-native workflow engine for Kubernetes that orchestrates parallel and sequential jobs using containers. It lets you express complex multi-step processes as either a linear sequence of steps or a directed acyclic graph (DAG), where each step executes in its own container. Because Argo runs inside Kubernetes, it is lightweight, scalable, and cloud-agnostic, with no external orchestration dependency. Argo is commonly used for CI/CD pipelines, data processing pipelines, and machine learning workflows that need to run directly on Kubernetes clusters. Its Kubernetes-native design gives you access to Kubernetes primitives (RBAC, namespaces, resource quotas, storage) while providing higher-level workflow constructs.

Key capabilities

Argo Workflows provides a rich set of features for running container-native workloads on Kubernetes:

- Declarative workflow definitions: author workflows as YAML manifests that describe steps, DAGs, templates, and inputs/outputs.

- Control-flow primitives: built-in support for conditional execution, loops, fan-in/fan-out, and parallel steps.

- Reusability & parameterization: define reusable WorkflowTemplate and ClusterWorkflowTemplate objects and pass parameters between templates.

- Visualization & observability: the Argo Server UI visualizes DAGs, provides per-step logs, and shows execution state for debugging.

- Execution control: timeouts, suspend/resume, retries, and backoff policies to control runtime behavior.

- Data passing and artifacts: first-class artifact support (S3, GCS, HTTP, and more) plus parameters and outputs for step-to-step data transfer.

- Concurrency & resource management: configure parallelism at workflow, template, and step levels to guard resource consumption.

- Long-running services: sidecars and daemon steps let you run background services alongside workflow steps.

- SDKs & clients: official Go and Python SDKs (and community SDKs for other languages) enable programmatic workflow control.

- Exit handlers & cleanup: onExit handlers, TTL strategies, and garbage-collection options help automate cleanup and teardown.
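
Several of the capabilities listed above can appear together in a single manifest. The sketch below is illustrative only: the `busybox:1.36` image, the `capabilities-sketch-` name prefix, the `send-report` parameter, and all template names are assumptions chosen for the example. It combines a workflow-level timeout and parallelism limit, a fan-out loop, a conditional step, a retry policy with backoff, an exit handler, and a TTL strategy.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Workflow
metadata:
  generateName: capabilities-sketch-   # hypothetical name prefix
spec:
  entrypoint: main
  onExit: cleanup               # exit handler: runs after the workflow ends, on success or failure
  activeDeadlineSeconds: 600    # workflow-level timeout, in seconds
  parallelism: 2                # at most two pods of this workflow run at the same time
  ttlStrategy:
    secondsAfterCompletion: 3600   # delete the Workflow object one hour after it completes
  arguments:
    parameters:
      - name: send-report       # hypothetical parameter used by the conditional step
        value: "true"
  templates:
    - name: main
      steps:
        # First stage: fan out one step per item in the list.
        - - name: process
            template: process-item
            arguments:
              parameters:
                - name: item
                  value: "{{item}}"
            withItems: ["a", "b", "c"]
        # Second stage: runs only if the workflow parameter evaluates to true.
        - - name: report
            template: process-item
            arguments:
              parameters:
                - name: item
                  value: "summary"
            when: "{{workflow.parameters.send-report}} == true"

    - name: process-item
      inputs:
        parameters:
          - name: item
      retryStrategy:
        limit: "3"              # retry a failed step up to three times
        retryPolicy: OnFailure
        backoff:
          duration: "10s"       # wait before the first retry
          factor: "2"           # multiply the wait by this factor on each retry
          maxDuration: "2m"     # stop retrying after this much total time
      container:
        image: busybox:1.36     # assumed image; any image providing `echo` would do
        command: [echo]
        args: ["processing {{inputs.parameters.item}}"]

    - name: cleanup
      container:
        image: busybox:1.36
        command: [sh, -c]
        args: ["echo workflow finished with status {{workflow.status}}"]
```

The values here (deadlines, retry limits, TTL) are placeholders; appropriate settings depend on the workload and cluster.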

Minimal declarative example

Below is a minimal Argo Workflow YAML that demonstrates the declarative style and a simple sequence of steps. Save it to a file (for example, `minimal-workflow.yaml`) and submit it with `argo submit` once an Argo Workflows controller is running in your cluster.
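
One way such a manifest might look is sketched here. The `busybox:1.36` image, the `minimal-steps-` name prefix, and the template names `main` and `echo` are illustrative assumptions, not part of any required convention.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Workflow
metadata:
  generateName: minimal-steps-   # hypothetical prefix; a random suffix is appended on creation
spec:
  entrypoint: main               # the template that runs first
  templates:
    - name: main
      steps:
        # Each outer list item is a stage; stages run one after another.
        - - name: say-hello
            template: echo
            arguments:
              parameters:
                - name: message
                  value: "hello"
        - - name: say-goodbye
            template: echo
            arguments:
              parameters:
                - name: message
                  value: "goodbye"

    # Reusable container template invoked by both steps above.
    - name: echo
      inputs:
        parameters:
          - name: message
      container:
        image: busybox:1.36      # assumed image; any image providing `echo` would do
        command: [echo]
        args: ["{{inputs.parameters.message}}"]
```

Assuming the Argo CLI is installed, `argo submit minimal-workflow.yaml --watch` should create the workflow and follow its progress. Because the manifest uses `generateName` rather than a fixed `name`, it would need `kubectl create -f` instead of `kubectl apply -f` if submitted with kubectl.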

Feature summary (quick reference)

| Feature area | Use case | Example |
|---|---|---|
| Workflow types | Sequential or DAG orchestration | `spec.templates[].steps` or `spec.templates[].dag` |
| Artifacts & storage | Share files between steps | S3/GCS/HTTP artifact locations |
| Control flow | Conditional logic, loops | `when` clauses, `withItems`, `withParam` |
| Observability | UI and logs | Argo Server web UI + `kubectl logs` |
| Execution control | Timeouts, suspend/resume, retries | `activeDeadlineSeconds`, `suspend`, `retryStrategy` |
| Reusability | Templates and libraries | `WorkflowTemplate`, `ClusterWorkflowTemplate` |
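
To make the DAG entry in the table concrete, here is a hedged sketch of a diamond-shaped pipeline expressed with `spec.templates[].dag`. The task names (`extract`, `transform-a`, `transform-b`, `load`), the `run-stage` template, and the `busybox:1.36` image are all hypothetical.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Workflow
metadata:
  generateName: dag-sketch-      # hypothetical name prefix
spec:
  entrypoint: pipeline
  templates:
    - name: pipeline
      dag:
        tasks:
          # No dependencies: starts immediately.
          - name: extract
            template: run-stage
            arguments:
              parameters: [{name: stage, value: extract}]
          # Both transforms depend only on extract, so they can run in parallel.
          - name: transform-a
            dependencies: [extract]
            template: run-stage
            arguments:
              parameters: [{name: stage, value: transform-a}]
          - name: transform-b
            dependencies: [extract]
            template: run-stage
            arguments:
              parameters: [{name: stage, value: transform-b}]
          # Fan-in: waits for both transforms to finish.
          - name: load
            dependencies: [transform-a, transform-b]
            template: run-stage
            arguments:
              parameters: [{name: stage, value: load}]

    - name: run-stage
      inputs:
        parameters:
          - name: stage
      container:
        image: busybox:1.36      # assumed image; any image providing `echo` would do
        command: [echo]
        args: ["running {{inputs.parameters.stage}}"]
```

In a DAG template the execution order comes from the declared `dependencies` rather than from list position, which is the main difference from the `steps` form.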

When to choose Argo Workflows

- You need Kubernetes-native orchestration with fine-grained control over containers and resources.

- Your workloads require parallelism, complex DAGs, or passing artifacts between steps.

- You want UI-based visualization of pipeline execution and per-step logs.

- You prefer declarative YAML workflow definitions that integrate with GitOps and CI/CD tooling.

Argo runs natively on Kubernetes, so you’ll need a Kubernetes cluster (local or cloud) to run workflows and to use the Argo Server / UI for visualization and control.

Links and references

- Official Argo Workflows docs: https://argoproj.github.io/argo-workflows/

- Kubernetes documentation: https://kubernetes.io/docs/

- Argo GitHub repository: https://github.com/argoproj/argo-workflows

- Argo Python SDK: https://github.com/argoproj-labs/argo-python-sdk