This lesson shows an Argo Workflow that produces a file in one step and consumes it in a later step. It demonstrates creating a file, uploading it as an artifact to an artifact repository (MinIO in this example), and then downloading and reading that artifact in the consumer step.

Overview


- The `generate` step creates `/tmp/hello.txt` and declares it as an output artifact.
- The `consume` step accepts that artifact as an input artifact (passed via `arguments`) and prints its contents.
- The templates run in sequence: `generate` finishes first, then `consume` runs and receives the artifact produced by `generate`.

Use standard temporary paths such as /tmp inside container scripts to avoid confusion. This example uses /tmp consistently for both producer and consumer.

| Component | Purpose | Example / Notes |
|---|---|---|
| `outputs.artifacts` (producer) | Declares artifacts to upload after the step completes | `name: my-generated-artifact`, `path: /tmp/hello.txt` |
| `arguments.artifacts` + `from` (caller) | Passes a previously produced artifact into another template | `from: "{{steps.generate.outputs.artifacts.my-generated-artifact}}"` |
| `inputs.artifacts` (consumer) | Declares the artifact the template expects and the local path where Argo will materialize it | `name: message-from-producer`, `path: /tmp/message.txt` |
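The three pieces in the table can be combined into a single manifest. The following is a minimal sketch, not the lesson's exact workflow: the artifact names, paths, and the `from` expression come from the table above, while the `generateName` prefix, the `main` entrypoint, the `alpine` image, and the text written to the file are placeholders assumed for illustration.

```yaml
# Minimal sketch of producer/consumer artifact passing.
# Assumed for illustration: generateName, entrypoint name, image, file contents.
apiVersion: argoproj.io/v1alpha1
kind: Workflow
metadata:
  generateName: artifact-passing-   # hypothetical name prefix
spec:
  entrypoint: main
  templates:
    - name: main
      steps:
        # Each outer list item is one sequential stage, so generate runs first.
        - - name: generate
            template: generate
        # consume starts only after generate has finished.
        - - name: consume
            template: consume
            arguments:
              artifacts:
                # Caller side: bind the producer's output to the consumer's input.
                - name: message-from-producer
                  from: "{{steps.generate.outputs.artifacts.my-generated-artifact}}"

    - name: generate
      container:
        image: alpine:3.19            # assumed image
        command: [sh, -c]
        # Placeholder contents; the lesson's actual message may differ.
        args: ["echo 'placeholder message' > /tmp/hello.txt"]
      outputs:
        artifacts:
          # Producer side: uploaded to the artifact repository after the step completes.
          - name: my-generated-artifact
            path: /tmp/hello.txt

    - name: consume
      inputs:
        artifacts:
          # Consumer side: Argo materializes the artifact at this path before the container runs.
          - name: message-from-producer
            path: /tmp/message.txt
      container:
        image: alpine:3.19            # assumed image
        command: [sh, -c]
        args: ["cat /tmp/message.txt"]
```

Note that the input artifact name (`message-from-producer`) does not need to match the output artifact name (`my-generated-artifact`); the `from` expression in the caller is what links the two.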

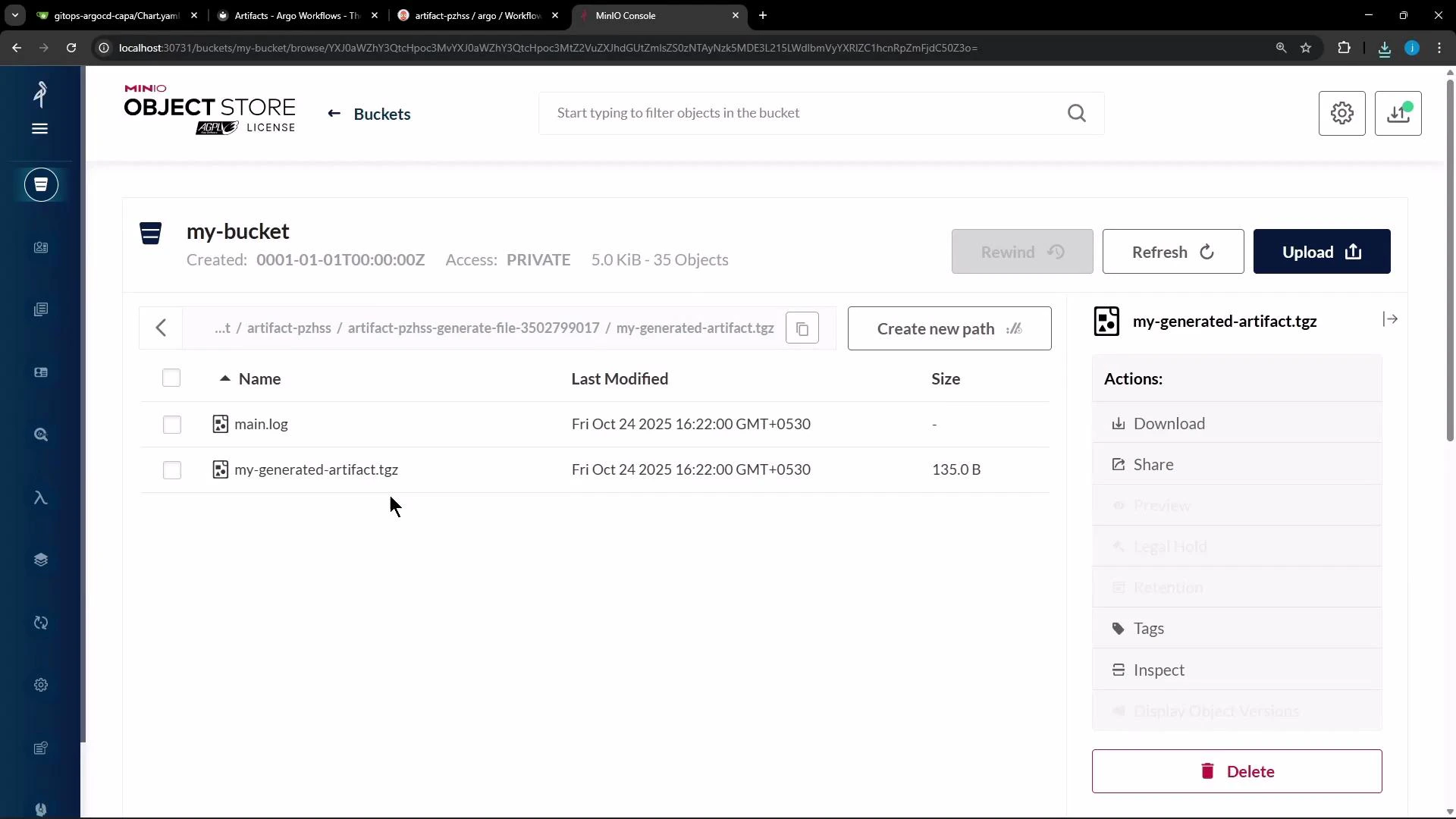

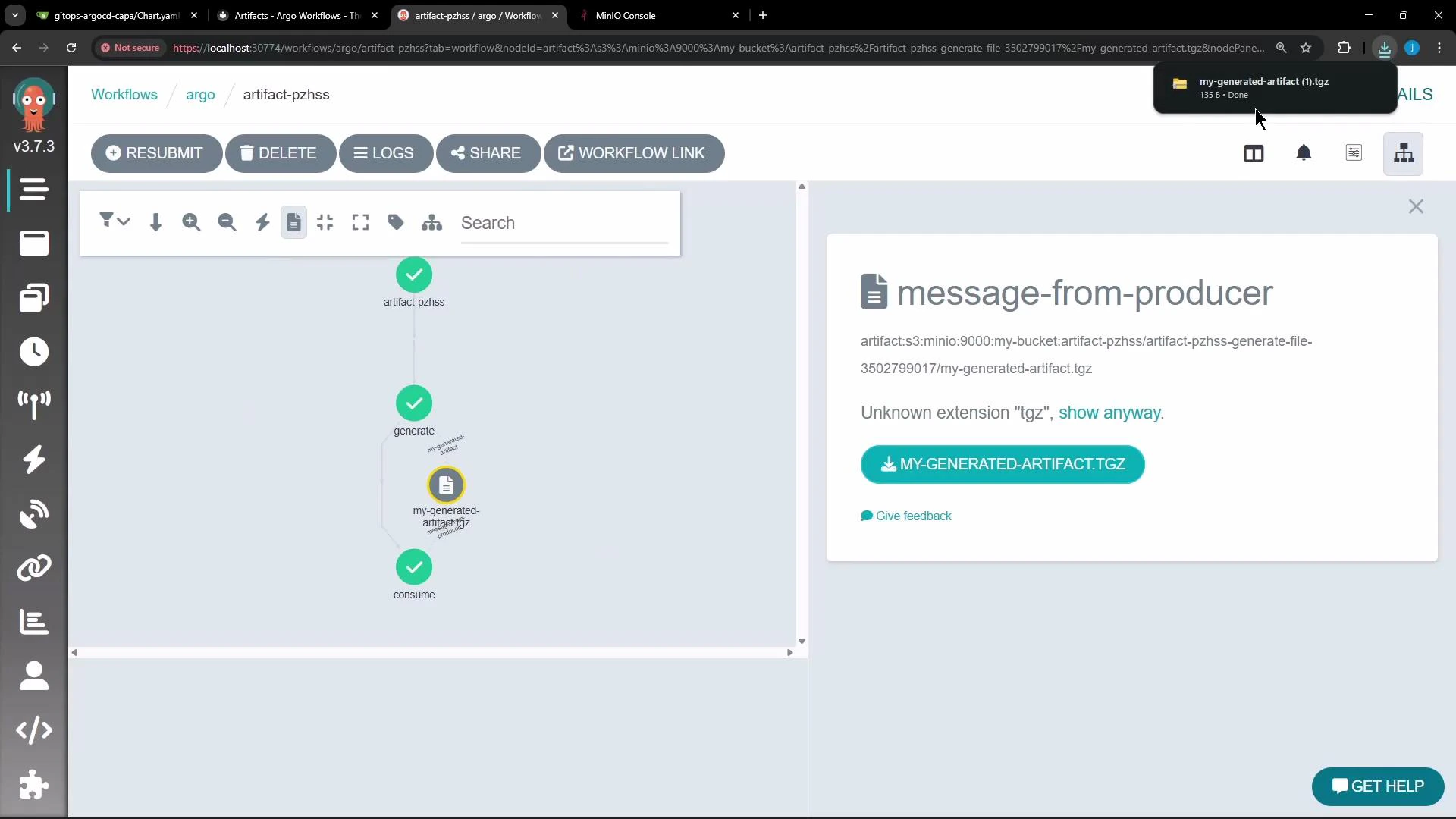

After the `generate` step completes, Argo uploads the artifact to the configured artifact repository (MinIO in this demo). By default Argo archives artifacts as a tar + gzip, so objects stored in MinIO often appear as compressed tarballs (for example, `.tgz` or `.tar.gz`). You can download these archived artifacts from the MinIO console or the Argo Workflows UI.

| Archive mode | Behavior | Use case |
|---|---|---|
| tar + gzip (default) | Archive and gzip the artifact | Default for most small files and directories |
| `none` | Upload the file/directory as-is | When repository layout must match container output exactly (e.g., build caches) |
| `tar` with `compressionLevel` | Control gzip compression level (0–9) | Tune size vs CPU for large textual logs or binary blobs |

- Use `archive: none` when the consumer must see the exact file/directory structure your container produced (for caching or large build outputs).
- Use `tar` with a `compressionLevel` when you need to tune upload size vs CPU cost. For textual logs, higher compression helps; for already-compressed binaries, consider lower compression or disabling it.
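As a rough illustration of the archive modes above, the fragment below shows how each one might be declared under a template's `outputs.artifacts`. Only `my-generated-artifact` and `/tmp/hello.txt` come from this lesson; the `build-cache` and `app-logs` artifacts and their paths are hypothetical, and the chosen compression level is just an example value.

```yaml
# Fragment of a template's outputs section (not a complete Workflow).
outputs:
  artifacts:
    # Default behavior: no archive block, so the artifact is stored as tar + gzip.
    - name: my-generated-artifact
      path: /tmp/hello.txt

    # Upload the directory as-is, without tar or gzip.
    - name: build-cache              # hypothetical artifact
      path: /tmp/cache               # hypothetical path
      archive:
        none: {}

    # Tar with an explicit gzip level (0 disables compression, 9 compresses hardest).
    - name: app-logs                 # hypothetical artifact
      path: /tmp/logs                # hypothetical path
      archive:
        tar:
          compressionLevel: 9        # example value; lower it for already-compressed data
```

In the manifest, `none` is written as an empty mapping (`none: {}`) nested under `archive`, rather than as a plain string value.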

- `generate` completes and Argo uploads `/tmp/hello.txt` to the artifact repository as a tar+gzip by default (e.g., `my-generated-artifact.tgz`).
- The `consume` step is scheduled; Argo downloads the artifact, materializes it at `/tmp/message.txt` inside the consumer container, and the consumer prints: