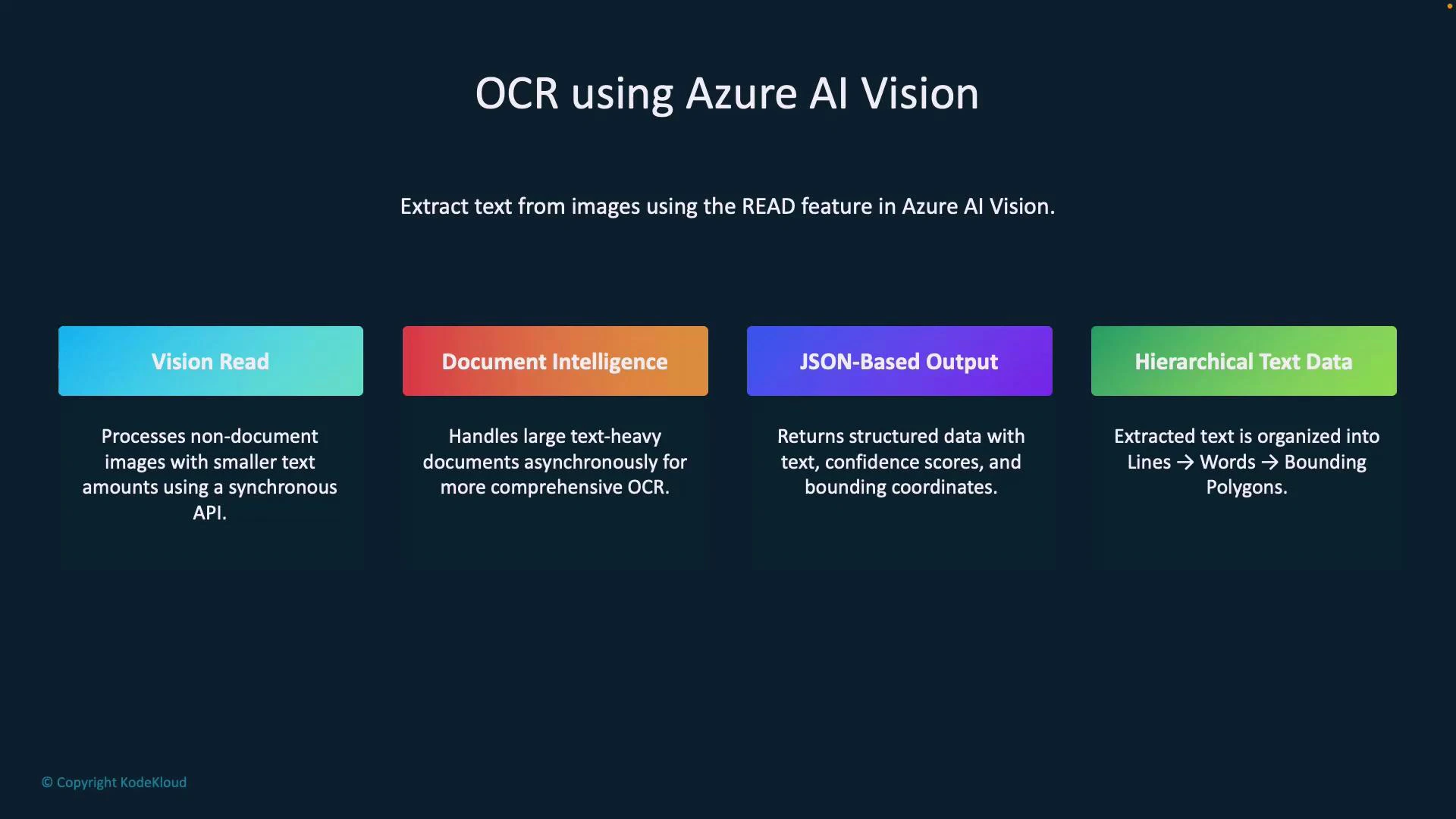

Welcome to this lesson on OCR (Optical Character Recognition) with Azure AI Vision. This guide explains how Azure extracts text from images — from photos of signs and labels to scanned documents and handwritten notes — using the Read capability. You’ll learn when to use the Read API versus Document Intelligence, how the JSON output is structured, and how to call the Read feature with a concise Python example.Documentation Index

Fetch the complete documentation index at: https://notes.kodekloud.com/llms.txt

Use this file to discover all available pages before exploring further.

When to use Read vs Document Intelligence

Choose the right service based on document complexity and scale.| Feature | Best for | Notes |

|---|---|---|

| Vision Read | Printed or handwritten text in images, short notes, signs, labels | Typically synchronous and optimized for smaller images and short text blocks |

| Document Intelligence | Multi-page PDFs, invoices, receipts, structured forms | Richer parsing, field extraction, and often asynchronous for larger workloads |

Document Intelligence is intended for richer document processing (forms, invoices, multi-page PDFs). For short images or quick handwritten notes, the Read feature is usually sufficient and simpler to integrate.

Key outputs from the Read API

- JSON-based output containing recognized text, confidence scores, and positional coordinates.

- Hierarchical structure (blocks → lines → words) with bounding polygons for each text element.

- Useful for highlighting text in UI overlays, computing coordinates, or downstream analytics.

Sample JSON output (excerpt)

The Read API returns structured JSON where each line includes a bounding polygon and words with their own polygons and confidence scores. This abbreviated example demonstrates the typical nesting and coordinates you can expect:Sample Python code to call Read (ImageAnalysisClient)

The following Python snippet demonstrates calling the Read feature using ImageAnalysisClient, converting the SDK result to a dictionary, and extracting text from readResult → blocks → lines. Ensure you setendpoint and key variables and install required Azure SDK packages.

Keep your Azure endpoint and key secure. Do not hard-code secrets in source files; use environment variables or a secrets manager.

Sample console output

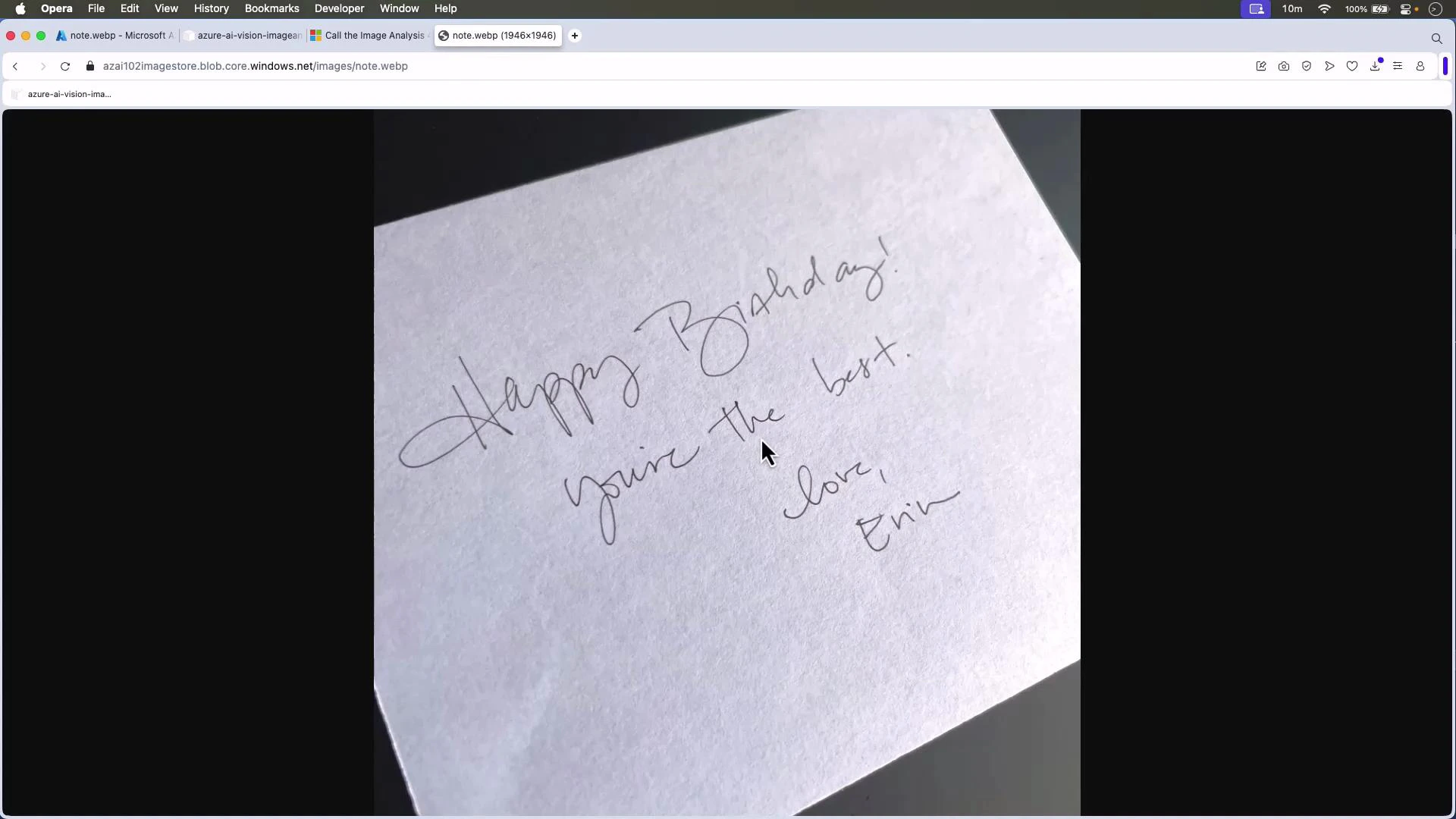

Running the script prints the extracted human-readable text and, optionally, the full JSON structure with model version, metadata, blocks, lines, words, bounding polygons, and confidence scores. Example printed output:Example: Handwritten note

This lesson used a photographed handwritten birthday note. The Read API extracted the content and returned bounding polygons and confidence values for each recognized word, enabling UI overlays or further processing.

Summary

- Use Vision Read for extracting printed or handwritten text from images (short notes, signs, labels).

- Use Document Intelligence for multi-page, structured, or complex document extraction tasks (receipts, invoices, contracts).

- The Read API returns hierarchical JSON (blocks → lines → words) with bounding polygons and confidence scores — ideal for UI overlays and downstream analytics.

- The provided Python example shows a straightforward integration pattern to call the Read API and extract text.