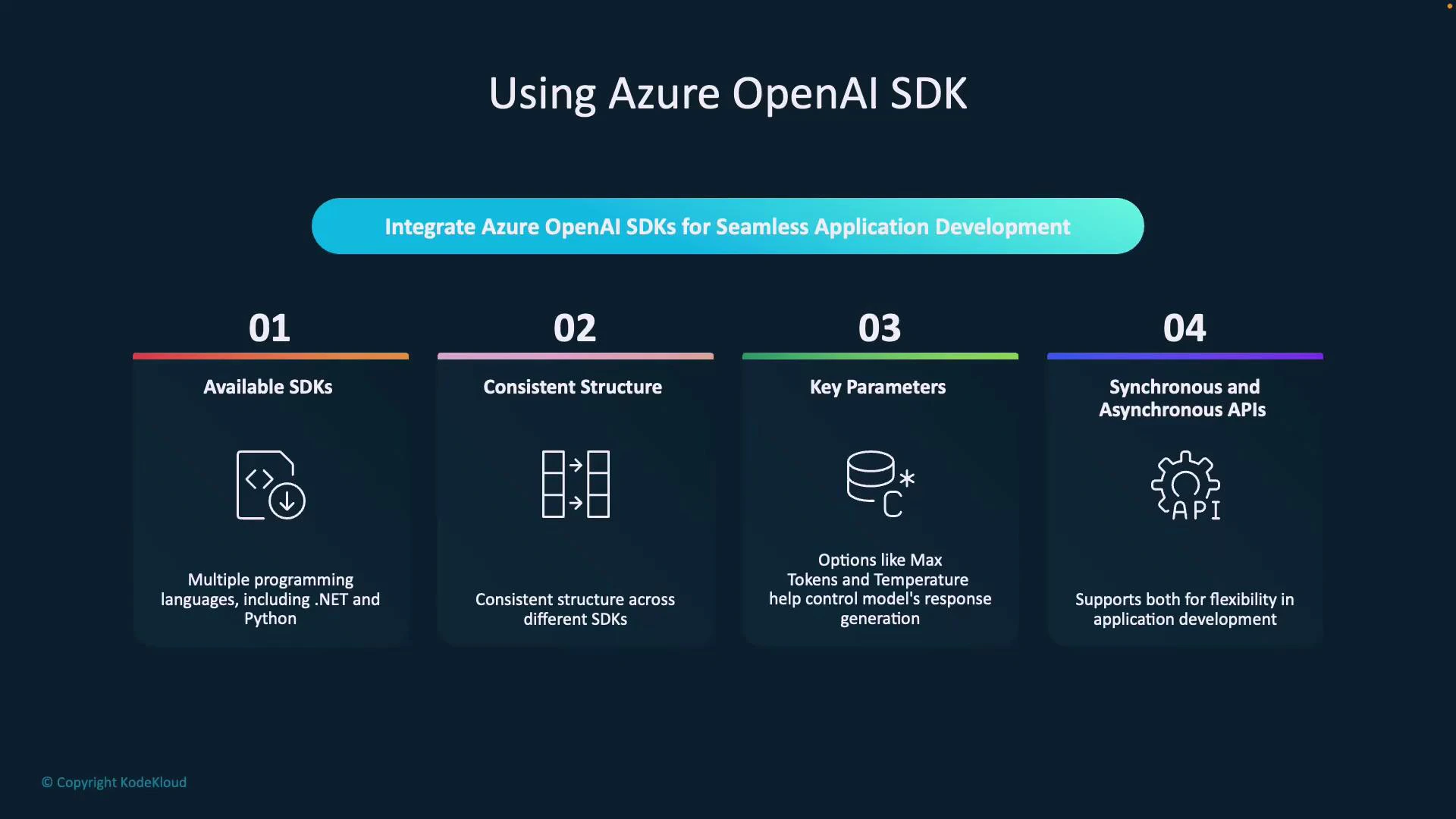

Language-specific SDKs accelerate development by exposing idiomatic APIs and hiding low-level REST details. The Azure OpenAI SDKs follow consistent patterns across languages (for example, .NET and Python), which makes it straightforward to initialize clients, prepare requests, and handle responses while controlling model behavior with parameters such as temperature and max_tokens. This lesson covers what makes SDKs developer-friendly and walks through a concise Python example that integrates Azure OpenAI into a simple Flask chatbot.

Why SDKs help

- Familiar languages: Use the SDK for the language you already know (Python, .NET, etc.).

- Predictable structure: The typical pattern is initialize client → build messages/params → call API → process response.

- Fine-grained control: Tune generation with parameters such as max_tokens, temperature, and top_p.

- Sync and async options: Choose synchronous or asynchronous clients depending on your app architecture.

A typical integration follows these steps:

- Import the SDK package for your language.

- Initialize a client with your endpoint and credentials.

- Build chat messages and set generation parameters (system prompt, user messages, temperature, max_tokens, etc.).

- Send the request (sync or async).

- Process the response and integrate it into your application.
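The "build chat messages and set generation parameters" step can be sketched without any SDK at all, since the request is ultimately a list of role-tagged messages plus a few numeric settings. The helper below is a minimal illustration, not the lesson's own code: the function name `build_chat_request` and its default values are assumptions chosen for this example.

```python
from typing import Any


def build_chat_request(
    system_prompt: str,
    user_message: str,
    *,
    temperature: float = 0.7,  # illustrative default, not a recommendation
    max_tokens: int = 256,     # illustrative default
    top_p: float = 1.0,
) -> dict[str, Any]:
    """Assemble the messages and generation parameters for one chat turn."""
    # Chat completion APIs document temperature as a value from 0 to 2.
    if not 0.0 <= temperature <= 2.0:
        raise ValueError("temperature is expected to be between 0 and 2")
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    return {
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
        "temperature": temperature,
        "max_tokens": max_tokens,
        "top_p": top_p,
    }
```

Keeping this step separate from the network call makes the parameters easy to validate and unit test before any request is sent.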

Never hardcode secrets such as API keys in source code, and never commit them to source control. Store your Azure endpoint and API key in environment variables or a secure secrets manager.

| Variable | Purpose | Example |
|---|---|---|
| AZURE_OPENAI_KEY | Your Azure OpenAI API key | set AZURE_OPENAI_KEY="..." |
| AZURE_OPENAI_ENDPOINT | Your Azure OpenAI resource endpoint | https://my-openai-resource.openai.azure.com/ |
| AZURE_OPENAI_DEPLOYMENT | Deployment name for the model | gpt-4o |
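One way to load the three variables from the table is to read them once at startup and fail fast if any is missing. This is a sketch under assumptions: the `AzureOpenAISettings` class and `load_settings` function are hypothetical names introduced here, and only the variable names come from the table.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class AzureOpenAISettings:
    """Hypothetical container for the values in the table above."""
    api_key: str
    endpoint: str
    deployment: str


def load_settings(env=None) -> AzureOpenAISettings:
    """Read the variables from the environment; raise if any is missing or empty."""
    env = os.environ if env is None else env
    names = ("AZURE_OPENAI_KEY", "AZURE_OPENAI_ENDPOINT", "AZURE_OPENAI_DEPLOYMENT")
    missing = [name for name in names if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return AzureOpenAISettings(
        api_key=env["AZURE_OPENAI_KEY"],
        endpoint=env["AZURE_OPENAI_ENDPOINT"],
        deployment=env["AZURE_OPENAI_DEPLOYMENT"],
    )
```

Accepting an optional mapping instead of always reading `os.environ` keeps the function testable without touching the real environment.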

- Initialization: create an OpenAIClient using your Azure endpoint and AzureKeyCredential.

- Messages: construct a list of chat messages with roles (“system”, “user”, optionally “assistant”).

- Request: call client.get_chat_completions with your deployment_id and generation parameters (temperature, max_tokens).

- Response: extract the assistant text from response.choices[0].message.content (strip whitespace).
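The four steps above can be sketched as follows. Note the substitution: the bullets name OpenAIClient and get_chat_completions, whereas this sketch uses the `AzureOpenAI` client from the `openai` Python package (version 1 or later), so it is not necessarily the client the lesson used. The `api_version` value, the helper names `make_client` and `ask`, and the default parameter values are assumptions for illustration.

```python
def make_client(endpoint: str, api_key: str, api_version: str = "2024-02-01"):
    """Create an Azure OpenAI client. Requires `pip install openai` (v1 or later).

    The api_version default is an assumption; use the version your resource supports.
    """
    from openai import AzureOpenAI  # imported lazily so `ask` can be tested without the package

    return AzureOpenAI(azure_endpoint=endpoint, api_key=api_key, api_version=api_version)


def ask(
    client,
    deployment: str,
    user_text: str,
    *,
    system_prompt: str = "You are a helpful assistant.",
    temperature: float = 0.7,
    max_tokens: int = 256,
) -> str:
    """Send one chat turn and return the assistant's reply as stripped text."""
    response = client.chat.completions.create(
        model=deployment,  # with Azure, `model` carries the deployment name
        messages=[
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_text},
        ],
        temperature=temperature,
        max_tokens=max_tokens,
    )
    content = response.choices[0].message.content
    # content can be None (for example when output is filtered), so guard before stripping.
    return (content or "").strip()
```

Passing the client in as an argument, rather than creating it inside `ask`, lets a Flask route reuse one client across requests and lets tests substitute a stub.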

| Property | Example |
|---|---|
| Request URL | http://127.0.0.1:5000/chat |
| Request Method | POST |
| Status Code | 200 OK |
| Content-Type (response) | application/json |
| Server | Werkzeug/3.1.3 Python/3.9.13 |

Response headers:

| Header | Value |
|---|---|
| Connection | close |
| Content-Length | 171 |
| Content-Type | application/json |
| Server | Werkzeug/3.1.3 Python/3.9.13 |

Request headers:

| Header | Value |
|---|---|
| Accept | `*/*` |
| Content-Type | application/json |
| Host | 127.0.0.1:5000 |
| Origin | http://127.0.0.1:5000 |
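A request matching the tables above can be reproduced from Python with only the standard library. This is a sketch, and one detail is an assumption: the JSON field name `"message"` is hypothetical, since the request body schema is not shown here. Adjust it to whatever the Flask route actually reads.

```python
import json
import urllib.request

CHAT_URL = "http://127.0.0.1:5000/chat"  # request URL from the table above


def build_chat_post(text: str, url: str = CHAT_URL) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for the chat endpoint."""
    # "message" is a hypothetical field name; match it to your route's expected body.
    body = json.dumps({"message": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json", "Accept": "*/*"},
    )


def send_chat(text: str, url: str = CHAT_URL) -> dict:
    """Send the request and decode the JSON response (needs the Flask app running)."""
    with urllib.request.urlopen(build_chat_post(text, url), timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Splitting request construction from sending makes it possible to inspect the method, headers, and body without a running server.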

- Add authentication and authorization for your Flask endpoints to protect access.

- Integrate with internal knowledge sources or a vector database to implement retrieval-augmented generation (RAG) for context-aware answers. See an intro to RAG here: Fundamentals of RAG.

- If your app needs high concurrency, switch to the async client or run the Flask app behind an async-friendly server.

- Consult Azure OpenAI docs for deployment, scaling, and best practices: https://learn.microsoft.com/azure/cognitive-services/openai/
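For the high-concurrency suggestion above, an async client lets several requests run at once while a semaphore caps how many are in flight. The sketch below assumes a client object with an awaitable `chat.completions.create` method, which is the shape of `AsyncAzureOpenAI` in the `openai` package (version 1 or later). The helper names `ask_async` and `ask_many` and the default limit are hypothetical.

```python
import asyncio


async def ask_async(client, deployment: str, user_text: str,
                    *, temperature: float = 0.7, max_tokens: int = 256) -> str:
    """Await one chat completion and return the stripped reply text."""
    response = await client.chat.completions.create(
        model=deployment,  # with Azure, `model` carries the deployment name
        messages=[{"role": "user", "content": user_text}],
        temperature=temperature,
        max_tokens=max_tokens,
    )
    return (response.choices[0].message.content or "").strip()


async def ask_many(client, deployment: str, questions, *, limit: int = 5) -> list:
    """Run several questions concurrently, with at most `limit` requests in flight."""
    semaphore = asyncio.Semaphore(limit)

    async def bounded(question: str) -> str:
        async with semaphore:
            return await ask_async(client, deployment, question)

    # gather returns results in the same order as the input questions.
    return await asyncio.gather(*(bounded(q) for q in questions))
```

Bounding concurrency is a design choice that helps stay within service rate limits; the right limit depends on your deployment's quota.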