Prompt engineering is the practice of designing clear, targeted inputs for generative AI so that models return more accurate, relevant, and usable outputs. It solves a common problem many developers and researchers face: good models can still produce vague or off-topic answers when given weak prompts.

Meet Rhea, an AI researcher who uses language models to help with projects ranging from drafting documentation to answering technical questions. Over time, she noticed that the quality of the AI’s responses often depended less on the model itself and more on how she asked questions. Her mentor, Sam, gave a simple insight: treat the AI like an assistant you instruct, not a search engine. Specificity, structure, context, and fairness in prompts lead to better responses. By improving prompts, Rhea got more useful results without switching models or rewriting application code.

Below are four practical prompt-engineering techniques Rhea adopted, why they work, and concrete examples you can reuse.

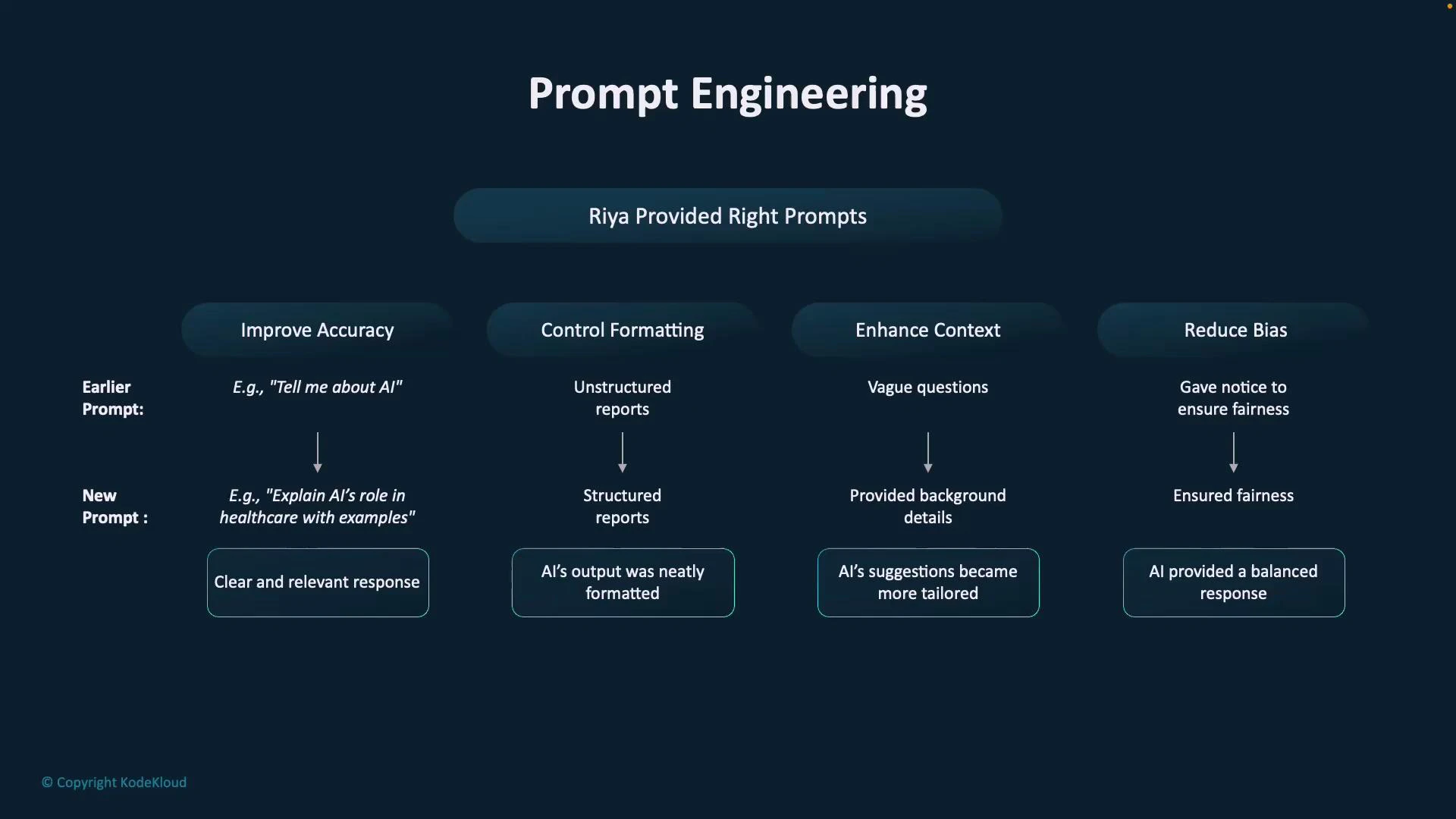

- Be specific: improve accuracy
  - The problem: Generic prompts leave the model too many degrees of freedom and cause vague answers.
  - The solution: Define the task, audience, constraints, and desired depth.

- Structure your output: control format
  - The problem: Free-form paragraphs are harder to scan, parse into UIs, or convert into documentation.
  - The solution: Request an explicit format (bullets, numbered steps, tables, headings).

- Add context: improve relevance
  - The problem: Without background or role information, the model’s tone or depth may not match your needs.
  - The solution: Provide a short role or system message and any necessary background.

Provide a clear role or system message when you want consistent tone, depth, or domain expertise from the model. A one-line role is often enough to make responses noticeably more focused.
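As a minimal sketch of the role-plus-background idea, the Python snippet below assembles a chat-style message list. The `build_messages` helper, the role text, and the background sentence are hypothetical, and the `{"role": ..., "content": ...}` layout is an assumed, commonly used convention rather than a specific vendor's API, so check your provider's documentation for the exact format.

```python
def build_messages(role_line, background, question):
    """Return a chat-style message list: one system message, one user message.

    The {"role": ..., "content": ...} layout is an assumed convention,
    not a specific vendor's API.
    """
    system_text = role_line.strip()
    if background:
        # Background sits in the system message so it frames the whole exchange.
        system_text += "\n\nBackground: " + background.strip()
    return [
        {"role": "system", "content": system_text},
        {"role": "user", "content": question.strip()},
    ]


# Hypothetical role and background, invented for illustration.
messages = build_messages(
    role_line="You are a patient tutor for junior cloud engineers.",
    background="The learner knows basic Linux but is new to access control.",
    question="Explain least privilege and give two practical steps.",
)
for message in messages:
    print(f"[{message['role']}] {message['content']}")
```

Keeping the role and background separate from the question makes it easy to reuse the same system message across many user questions.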

- Address bias: encourage fairness
  - The problem: Loaded or leading language in prompts can produce biased, unbalanced, or harmful outputs.
  - The solution: Inspect and rephrase prompts to be neutral and inclusive. Ask for multiple perspectives when appropriate.
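One lightweight way to support the inspect-and-rephrase step is a keyword check that flags possibly loaded wording before a prompt is sent. The Python sketch below is only an illustration: the `LOADED_TERMS` list, both helper names, and the sample prompts are hypothetical, and a word list cannot replace human review.

```python
import re

# Hypothetical examples of leading or absolute wording; tune for your domain.
LOADED_TERMS = ("obviously", "everyone knows", "always", "never", "clearly")


def flag_loaded_terms(prompt, terms=LOADED_TERMS):
    """Return the terms that appear in the prompt as whole words or phrases."""
    lowered = prompt.lower()
    found = []
    for term in terms:
        if re.search(r"\b" + re.escape(term) + r"\b", lowered):
            found.append(term)
    return found


def request_balance(prompt):
    """Append an explicit request for multiple perspectives."""
    return prompt.rstrip() + " Present multiple perspectives and note where evidence is mixed."


leading = "Why is on-premises hosting obviously worse than cloud?"
neutral = "What are the trade-offs between on-premises hosting and cloud?"

print(flag_loaded_terms(leading))  # ['obviously']
print(flag_loaded_terms(neutral))  # []
print(request_balance(neutral))
```

A flagged term is a cue to reread the prompt, not proof of bias; the rewrite itself still needs a person's judgment.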

| Technique | Goal | Example Prompt / Output |
|---|---|---|
| Be specific | Increase accuracy and relevance | "Explain cloud computing for a non-technical product manager; include cost, scalability, security, and two short examples." |
| Structure outputs | Improve readability and parseability | "Summarize SaaS, PaaS, IaaS in a 3-row table with Definition, Typical Use Case, Key Benefits." |
| Add context | Match tone and expertise level | "You are an AI tutor… Explain least privilege and give two practical steps." |
| Address bias | Produce fair and balanced answers | "What factors influence gender diversity in technology and how can companies improve inclusivity?" |
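The first two techniques can be combined in a small prompt builder. The following Python sketch assumes a hypothetical `build_prompt` helper that spells out task, audience, constraints, and output format as separate labeled lines; the field labels and the word-limit constraint are illustrative choices, not a required syntax.

```python
def build_prompt(task, audience, constraints, output_format):
    """Combine task, audience, constraints, and output format into one prompt."""
    lines = [f"Task: {task}", f"Audience: {audience}"]
    if constraints:
        lines.append("Constraints:")
        lines.extend(f"- {item}" for item in constraints)
    lines.append(f"Output format: {output_format}")
    return "\n".join(lines)


prompt = build_prompt(
    task="Summarize SaaS, PaaS, and IaaS.",
    audience="a non-technical product manager",
    constraints=[
        "Cover cost, scalability, and security",
        "Keep each cell under 20 words",  # illustrative constraint
    ],
    output_format="a 3-row table with columns Definition, Typical Use Case, Key Benefits",
)
print(prompt)
```

Passing each element as its own argument makes a missing audience or format obvious at the call site, which is harder to notice in a free-form string.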

- Be specific: Define the task, audience, constraints, and expected depth. Narrow prompts produce focused answers.

- Structure outputs: Ask for lists, tables, headings, or numbered steps to make responses easier to read and reuse.

- Add context: Use a role or short background to align tone, depth, and domain expertise.

- Address bias: Reword loaded language and request balanced perspectives.
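Requesting a table also makes a response easier to reuse in code. The Python sketch below parses a simple pipe-delimited markdown table into dictionaries; `parse_markdown_table` is a hypothetical helper, and `sample_reply` is a hand-written stand-in, not real model output. Actual replies may need more defensive parsing.

```python
def parse_markdown_table(text):
    """Parse a simple pipe-delimited markdown table into a list of row dicts."""
    rows = []
    for line in text.strip().splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skip any prose around the table
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        if all(set(cell) <= set("-: ") for cell in cells):
            continue  # skip the |---|---| separator row
        rows.append(cells)
    if not rows:
        return []
    header, body = rows[0], rows[1:]
    return [dict(zip(header, row)) for row in body]


# Hand-written stand-in for a model reply that followed the requested format.
sample_reply = """
| Model | Definition | Typical Use Case |
|---|---|---|
| SaaS | Ready-to-use hosted application | Email or CRM |
| PaaS | Managed platform for deploying code | Web app hosting |
| IaaS | Rented virtual machines, storage, and networking | Custom infrastructure |
"""

for row in parse_markdown_table(sample_reply):
    print(row["Model"], "->", row["Typical Use Case"])
```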
