In this lesson, we’ll explain how Question Answering (QnA) systems let AI answer user questions using structured information sources such as knowledge bases. You’ll learn the architecture, the difference between static QnA and dynamic language understanding, and practical steps to create, test, and publish a knowledge base for production use.

Imagine a bank customer asking questions like:

- How do I reset my net banking password?

- What is the interest rate for savings accounts?

- How do I block a lost debit card?

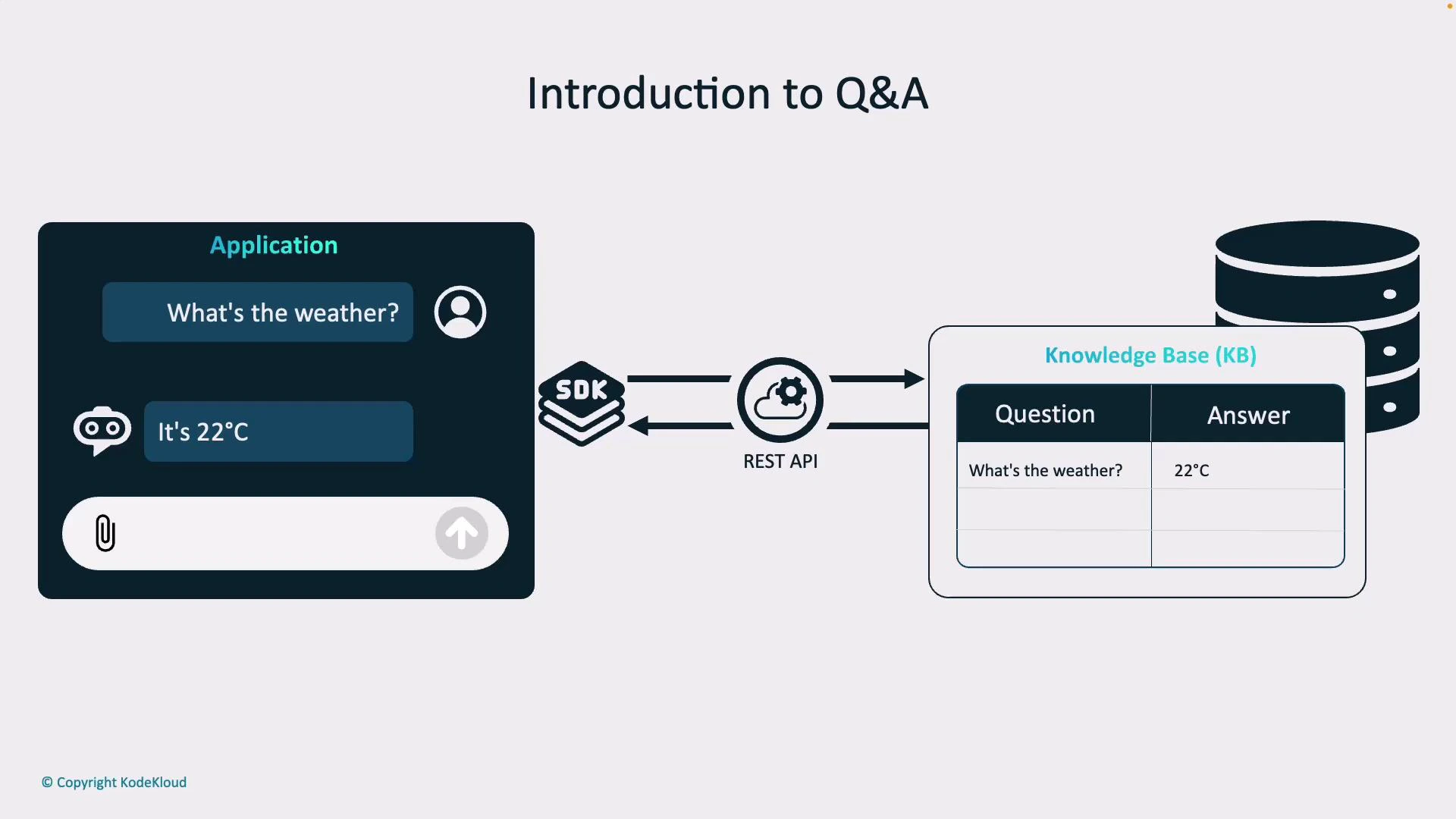

How a QnA flow typically works

- User submits a natural-language question via app, website, or chatbot.

- The client sends the query to a QnA endpoint (REST API or SDK).

- The QnA service searches the knowledge base for matching Q&A entries.

- The service returns the best matched answer, often with a confidence score.

- The client displays the answer; fallback logic handles low-confidence cases.
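The flow above can be sketched end to end in a few lines of Python. This is only an illustration: `query_qna_service` is a hypothetical stand-in for the real QnA endpoint, the word-overlap score is a toy substitute for a real service's ranking, and the sample answers and the `0.5` threshold are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    text: str
    confidence: float  # 0.0 (no match) to 1.0 (exact match)


# Hypothetical knowledge base: stored question -> prewritten answer.
KNOWLEDGE_BASE = {
    "how do i reset my net banking password": "Use the 'Forgot password' link on the login page.",
    "what is the interest rate for savings accounts": "Current rates are listed on the Savings page.",
    "how do i block a lost debit card": "Open Cards > Block card in the app, or call support.",
}

FALLBACK = "Sorry, I am not sure. Let me connect you with an agent."


def _tokens(text: str) -> set:
    """Split into lowercase words with edge punctuation removed."""
    words = (w.strip("?.,!").lower() for w in text.split())
    return {w for w in words if w}


def query_qna_service(question: str) -> Answer:
    """Stand-in for the QnA endpoint: return the best match and its score."""
    asked = _tokens(question)
    best = Answer(text="", confidence=0.0)
    for stored_question, stored_answer in KNOWLEDGE_BASE.items():
        stored = _tokens(stored_question)
        union = asked | stored
        # Jaccard word overlap as a toy confidence score.
        score = len(asked & stored) / len(union) if union else 0.0
        if score > best.confidence:
            best = Answer(stored_answer, score)
    return best


def handle_question(question: str, min_confidence: float = 0.5) -> str:
    """Client side: show the answer, or fall back when confidence is low."""
    answer = query_qna_service(question)
    if answer.confidence >= min_confidence:
        return answer.text
    return FALLBACK
```

Calling `handle_question("How do I block a lost debit card?")` returns the stored card-blocking answer, while an unrelated question such as "What is the weather today?" scores below the threshold and gets the fallback message.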

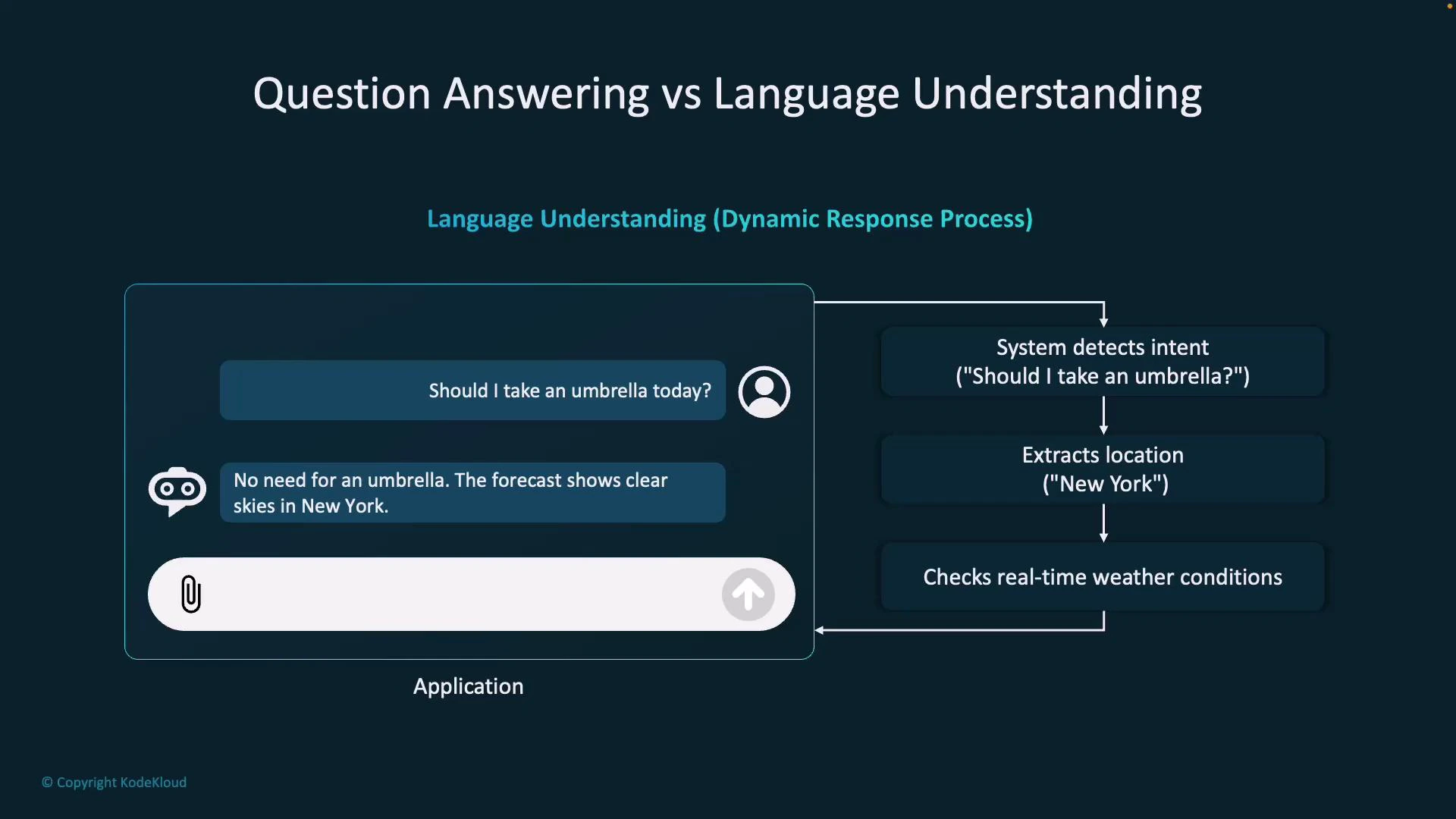

Question Answering vs Language Understanding

It’s important to distinguish a traditional QnA system from more general language-understanding solutions. The primary difference is whether responses are static (prewritten and stored) or dynamically generated based on intent, context, and live data.

| Aspect | Static QnA (Knowledge Base) | Dynamic Language Understanding |
|---|---|---|
| Response type | Prewritten answers stored in KB | Generated responses using models + live data |
| When to use | FAQ, policy, onboarding, repetitive queries | Personalized recommendations, real-time data, complex reasoning |
| Speed & predictability | Fast and predictable | More flexible but may be slower/less predictable |
| Example | “How do I reset my password?” → stored reset instructions + link | “Should I take an umbrella today?” → detect intent, call weather API, reply with forecast |

- Static QnA: User asks “How do I reset my password?” The system matches the question to a stored Q&A pair and returns the prewritten answer with a reset link.

- Dynamic language understanding: User asks “Should I take an umbrella today?” The system detects the intent (“weather forecast”), extracts the location (e.g., “New York”), calls a weather API, and generates a context-aware response.
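A minimal sketch of the two styles side by side may help. Everything here is hypothetical: `fake_weather_api` returns canned data instead of calling a live service, `detect_intent` uses keyword matching where a real system would use a trained model, and the location is passed in directly rather than extracted from the conversation.

```python
from typing import Optional

# Static QnA: a stored question maps directly to a prewritten answer.
STATIC_ANSWERS = {
    "how do i reset my password?": (
        "Go to Settings > Security and choose Reset password: "
        "https://example.com/reset"
    ),
}


def answer_static(question: str) -> Optional[str]:
    """Return the stored answer, or None when no stored pair matches."""
    return STATIC_ANSWERS.get(question.strip().lower())


def fake_weather_api(location: str) -> dict:
    """Placeholder for a live weather API call; returns canned data."""
    forecasts = {"new york": {"condition": "rain", "chance_of_rain": 0.8}}
    return forecasts.get(
        location.lower(), {"condition": "clear", "chance_of_rain": 0.1}
    )


def detect_intent(question: str) -> str:
    """Toy intent detector based on keywords."""
    q = question.lower()
    if "umbrella" in q or "weather" in q:
        return "weather_forecast"
    return "unknown"


def answer_dynamic(question: str, location: str) -> str:
    """Dynamic flow: detect intent, fetch live data, generate a reply."""
    if detect_intent(question) != "weather_forecast":
        return "Sorry, I cannot help with that yet."
    forecast = fake_weather_api(location)
    if forecast["chance_of_rain"] >= 0.5:
        return f"Yes, take an umbrella: {forecast['condition']} is expected in {location}."
    return f"No umbrella needed: {forecast['condition']} skies are expected in {location}."
```

The static path never changes its answer unless someone edits the stored pair, whereas the dynamic path produces a different reply whenever the underlying data changes.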

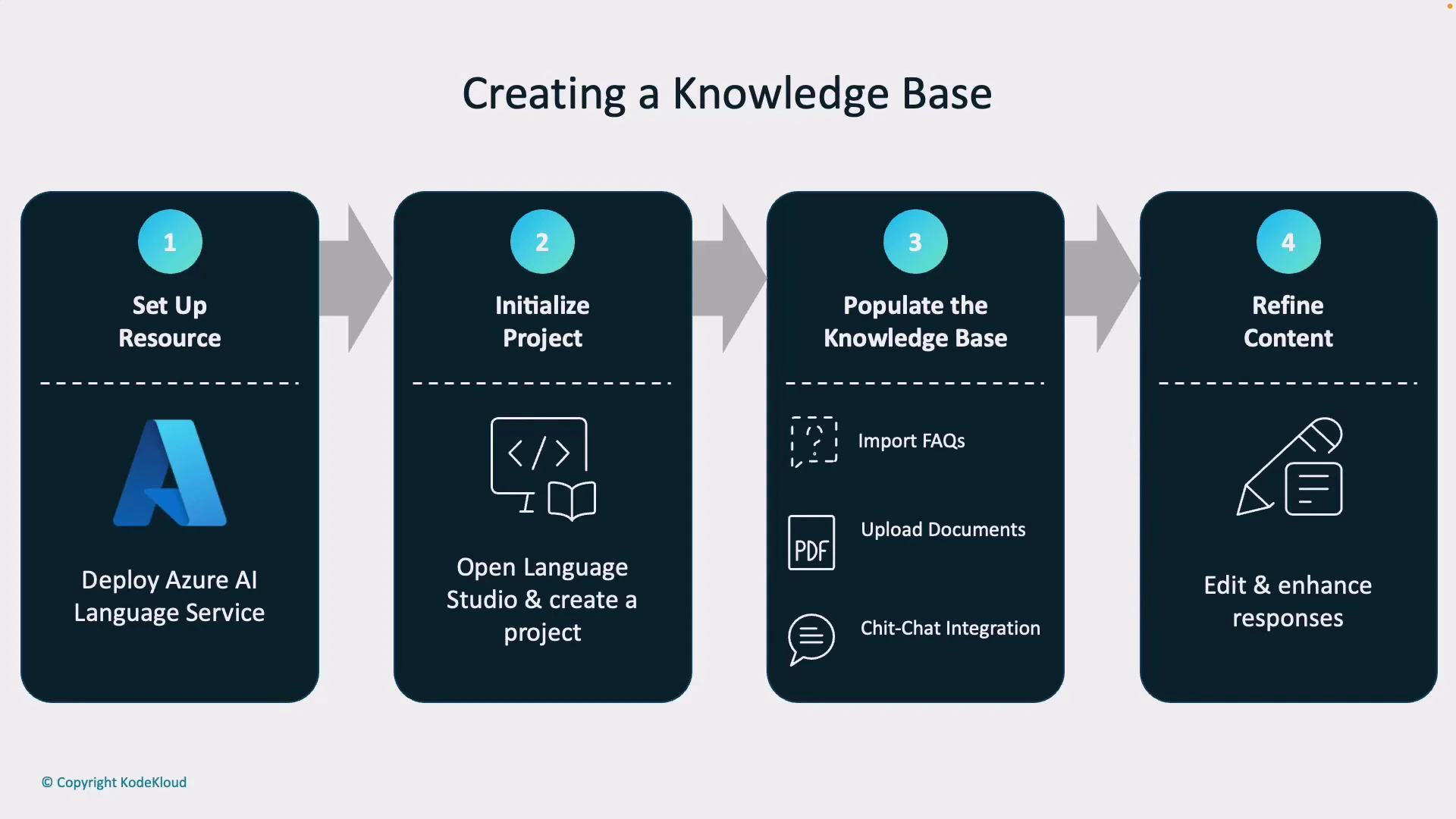

Creating a Knowledge Base

A well-structured knowledge base is the heart of an effective QnA system. Building one typically follows these four steps:

- Set up resources: Deploy a language service (for example, Azure AI Language Services) in your cloud account to provide QnA and language capabilities.

- Initialize a project: Use a management tool like Language Studio to create a new QnA project. Language Studio provides a no-code, web-based UI for managing QnA content.

- Populate the knowledge base: Import FAQs, upload PDFs and text documents, and add chit-chat/fallback phrases for conversational behavior.

- Refine content: Edit responses, add alternative phrasings, and align tone with your brand for clarity and consistency.

| Content Type | Purpose | Example |
|---|---|---|
| FAQ pair | Direct answers to common queries | “How to reset password” → steps + link |
| Document excerpts | Longer policy excerpts or guides | Banking fees PDF sections |
| Chit-chat | Friendly fallbacks for small talk | “Thanks for your help!” → “You’re welcome!” |
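To make the content types concrete, here is one possible in-memory shape for knowledge base entries, where each answer carries a primary question plus alternative phrasings. The `QnAEntry` class, its field names, and the sample entries are illustrative assumptions, not the storage format of any particular service.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class QnAEntry:
    answer: str
    questions: List[str]        # primary question plus alternative phrasings
    content_type: str = "faq"   # "faq", "document", or "chit-chat"


def normalize(text: str) -> str:
    """Lowercase, trim edge punctuation, and collapse whitespace."""
    return " ".join(text.lower().strip(" ?!.").split())


def build_index(entries: List[QnAEntry]) -> Dict[str, QnAEntry]:
    """Map every phrasing of every entry to that entry."""
    index: Dict[str, QnAEntry] = {}
    for entry in entries:
        for question in entry.questions:
            index[normalize(question)] = entry
    return index


ENTRIES = [
    QnAEntry(
        answer="Open Settings > Security, choose Reset password, and follow the link.",
        questions=["How to reset password", "I forgot my password", "Change my login password"],
    ),
    QnAEntry(
        answer="You're welcome!",
        questions=["Thanks for your help!", "Thank you"],
        content_type="chit-chat",
    ),
]

INDEX = build_index(ENTRIES)
```

With this layout, `INDEX[normalize("I forgot my password?")]` resolves to the same entry as the primary question, which is the effect that adding alternative phrasings is meant to achieve.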

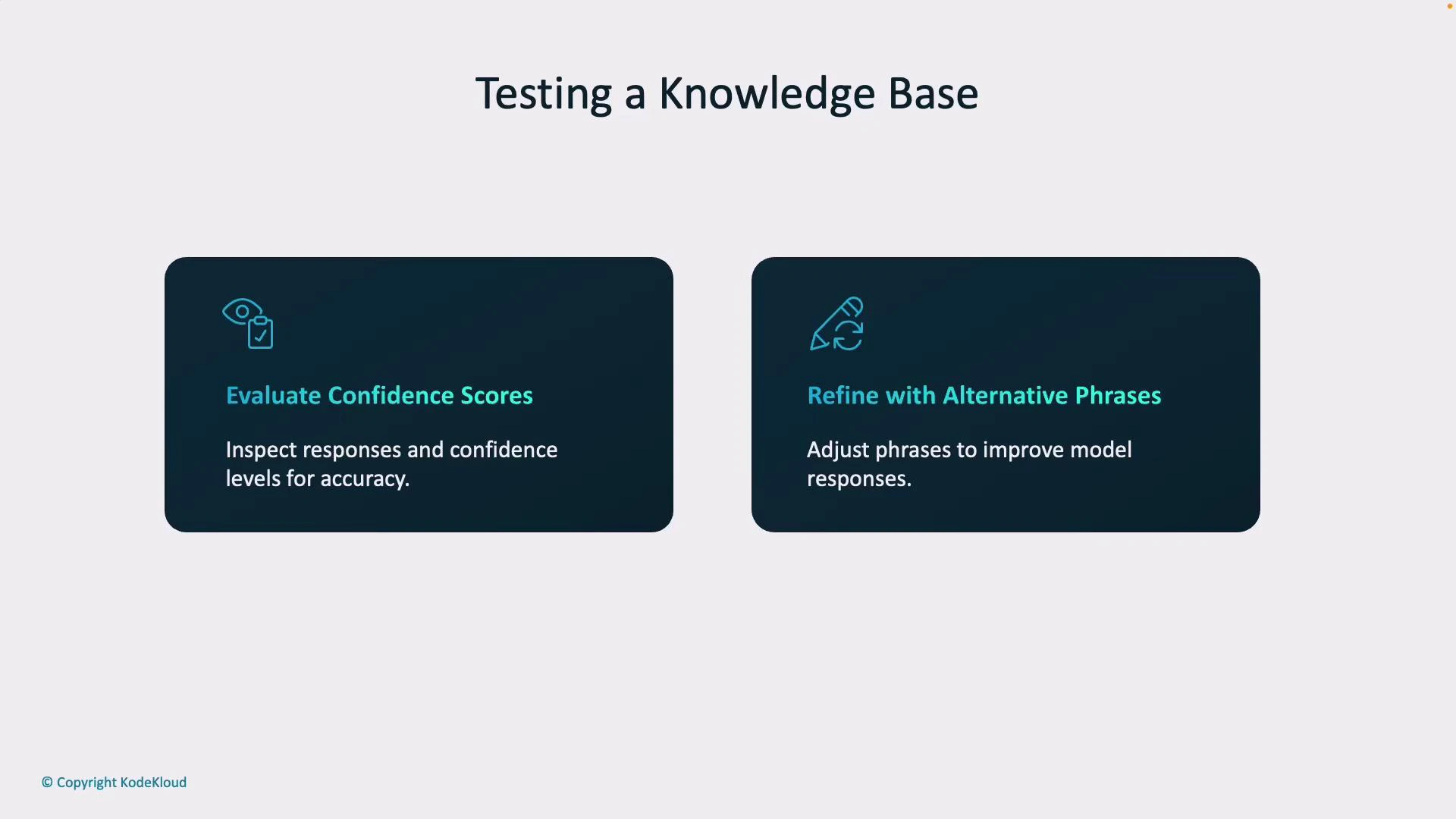

Testing and Publishing Your Knowledge Base

Testing and monitoring ensure your knowledge base behaves correctly in production. Key validation tasks include:

- Evaluate confidence scores: Every returned candidate can include a confidence score. Use these scores to determine when to accept the top answer, show multiple options, ask a clarifying question, or escalate to a human agent.

- Add alternative phrasings: Users ask the same question in many ways. Add synonyms and alternate wordings to improve recall and retrieval accuracy.

Monitor confidence thresholds and design fallback behavior (for example: ask a clarifying question or route to a human agent) when scores are low.
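One way to turn confidence scores into behavior is a small tiered policy. The sketch below is an assumption-laden example: the function name `decide_action` and the threshold values (0.8, 0.5, 0.3) are invented for illustration and would need tuning against real traffic.

```python
from typing import List, Tuple


def decide_action(
    candidates: List[Tuple[str, float]],
    accept_at: float = 0.8,
    suggest_at: float = 0.5,
    clarify_at: float = 0.3,
) -> Tuple[str, List[str]]:
    """Pick a response strategy from (answer, confidence) candidates.

    Returns an (action, answers) pair where action is one of
    "answer", "show_options", "clarify", or "escalate".
    """
    ranked = sorted(candidates, key=lambda c: c[1], reverse=True)
    if not ranked:
        return ("escalate", [])

    top_answer, top_score = ranked[0]
    if top_score >= accept_at:
        # Confident enough to answer directly.
        return ("answer", [top_answer])
    if top_score >= suggest_at:
        # Plausible matches: let the user choose among up to three.
        options = [a for a, score in ranked if score >= suggest_at][:3]
        return ("show_options", options)
    if top_score >= clarify_at:
        # Weak match: ask the user to confirm or rephrase.
        return ("clarify", [top_answer])
    # Nothing usable: hand over to a human agent.
    return ("escalate", [])
```

For example, `decide_action([("A", 0.6), ("B", 0.55), ("C", 0.2)])` yields `("show_options", ["A", "B"])`, because the top score is below the accept threshold but two candidates clear the suggestion threshold.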

Once the knowledge base passes testing, publish it so applications can reach it:

- Generate a REST API endpoint so applications can query the knowledge base over HTTP.

- Enable SDK compatibility: Azure and other providers supply SDKs in multiple languages to speed integration and reduce boilerplate code.
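As a rough sketch of querying a published knowledge base over HTTP, the helper below builds a request with Python's standard library and picks the best answer from a response. The URL path, query parameter names, `Ocp-Apim-Subscription-Key` header, `api-version` value, and response field names reflect our understanding of Azure's question answering REST API and should be checked against the official reference; the endpoint, project name, and key in the usage note are placeholders.

```python
import json
import urllib.parse
import urllib.request
from typing import Optional, Tuple


def build_query_request(
    endpoint: str,
    project: str,
    deployment: str,
    api_key: str,
    question: str,
    top: int = 3,
    api_version: str = "2021-10-01",  # assumed version; verify before use
) -> urllib.request.Request:
    """Build (but do not send) a POST request that asks one question."""
    params = urllib.parse.urlencode({
        "projectName": project,
        "deploymentName": deployment,
        "api-version": api_version,
    })
    # Assumed URL shape for the query operation.
    url = f"{endpoint.rstrip('/')}/language/:query-knowledgebases?{params}"
    body = json.dumps({"question": question, "top": top}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Ocp-Apim-Subscription-Key": api_key,
            "Content-Type": "application/json",
        },
    )


def best_answer(response_json: dict) -> Optional[Tuple[str, float]]:
    """Return (answer, confidence) for the highest-scoring candidate."""
    answers = response_json.get("answers", [])
    if not answers:
        return None
    top = max(answers, key=lambda a: a.get("confidenceScore", 0.0))
    return top.get("answer"), top.get("confidenceScore", 0.0)
```

To send the request, pass the result of `build_query_request(...)` to `urllib.request.urlopen`, decode the body with `json.load`, and hand the parsed dictionary to `best_answer`. An SDK wraps these same steps behind a client object, which is the boilerplate reduction mentioned above.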

Links and References

- Azure AI Language Services

- Language Studio (Azure)

- Designing conversational FAQ systems — best practices