This article explains how an application sends a natural language question to a question-answering service (Azure Language Services’ Custom Question Answering) and receives a structured answer. You’ll see request and response examples, how to create and populate a Custom Question Answering project in Language Studio, and how to call the prediction endpoint programmatically.

How the application asks a question

An application typically sends a JSON payload containing the user’s natural language question and options that control the response ranking, filtering, and the number of answers returned. An example request payload uses these fields:

| Field | Type | Description |
|---|---|---|
| question | string | The user’s natural language query. |
| top | integer | Return the top N most relevant answers (example: 2). |
| scoreThreshold | number | Minimum score required for an answer to be considered (example: 20). |
| strictFilters | array | Metadata-based filters to narrow results (example: category == “api”). |
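
As a minimal sketch, the Python below assembles a request body from the fields in the table above. The `build_query_payload` helper and the question text are hypothetical, and the `name`/`value` shape of each filter entry is an assumption based on the older QnA Maker style; check your API version for the exact schema.

```python
import json


def build_query_payload(question, top=2, score_threshold=20, strict_filters=None):
    """Assemble a request body using the field names from the table.

    strict_filters is a plain dict such as {"category": "api"}; each entry is
    turned into a {"name": ..., "value": ...} object (assumed legacy shape).
    """
    if not question or not question.strip():
        raise ValueError("question must be a non-empty string")

    payload = {
        "question": question.strip(),
        "top": top,
        "scoreThreshold": score_threshold,
    }
    # Only include filters when the caller supplied some.
    if strict_filters:
        payload["strictFilters"] = [
            {"name": name, "value": value}
            for name, value in strict_filters.items()
        ]
    return payload


if __name__ == "__main__":
    example = build_query_payload(
        "How do I call the API?", strict_filters={"category": "api"}
    )
    print(json.dumps(example, indent=2))
```

Keeping the payload construction in one function makes it easier to swap in the newer field names later without touching the calling code.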

Parameter names and request formats have changed over time. Older QnA Maker exports may use fields like scoreThreshold and strictFilters. Newer Custom Question Answering REST APIs may use confidenceScoreThreshold, filters.metadataFilter, and floating-point confidence scores. Always check the API version in the documentation for the exact schema.

Example structured response

The service returns a JSON response containing an array of answers. A representative response for the sample request above contains these fields:

| Field | Type | Description |
|---|---|---|
| answers | array | Array of answer objects returned by the knowledge base. |
| answers[].score | number | Numerical confidence score for the answer (example: ~27.7). |
| answers[].id | integer | Identifier of the QnA pair in the knowledge base. |
| answers[].answer | string | The textual answer returned. |
| answers[].questions | array | Alternate phrasings linked to this answer (useful for matching). |
| answers[].metadata | array | Metadata attached to the QnA pair (categories, tags). |
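
To illustrate how an application might consume such a response, here is a small Python sketch. The sample JSON is invented for illustration (only the field names and the ~27.7 score come from the table above), the metadata entry shape is an assumption, and `best_answer` is a hypothetical helper rather than part of any SDK.

```python
import json

# Hypothetical response body; values are illustrative only.
SAMPLE_RESPONSE = """
{
  "answers": [
    {
      "score": 27.7,
      "id": 20,
      "answer": "Send a POST request to the prediction endpoint.",
      "questions": ["How do I call the API?"],
      "metadata": [{"name": "category", "value": "api"}]
    }
  ]
}
"""


def best_answer(response, min_score=20.0, fallback="No answer found"):
    """Return the highest-scoring answer text, or the fallback if none qualify."""
    answers = response.get("answers", [])
    # Keep only answers that meet the minimum score.
    candidates = [a for a in answers if a.get("score", 0) >= min_score]
    if not candidates:
        return fallback
    return max(candidates, key=lambda a: a["score"])["answer"]


if __name__ == "__main__":
    parsed = json.loads(SAMPLE_RESPONSE)
    print(best_answer(parsed))
```

Applying a threshold on the client as well as in the request is optional; it simply gives the application a single place to decide when to show the fallback text.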

Create, populate, and deploy a Custom Question Answering project

Below are the steps to create and publish a Custom Question Answering project in Azure Language Studio. Follow the sequence and use the portal UI to create resources and configure indexing.-

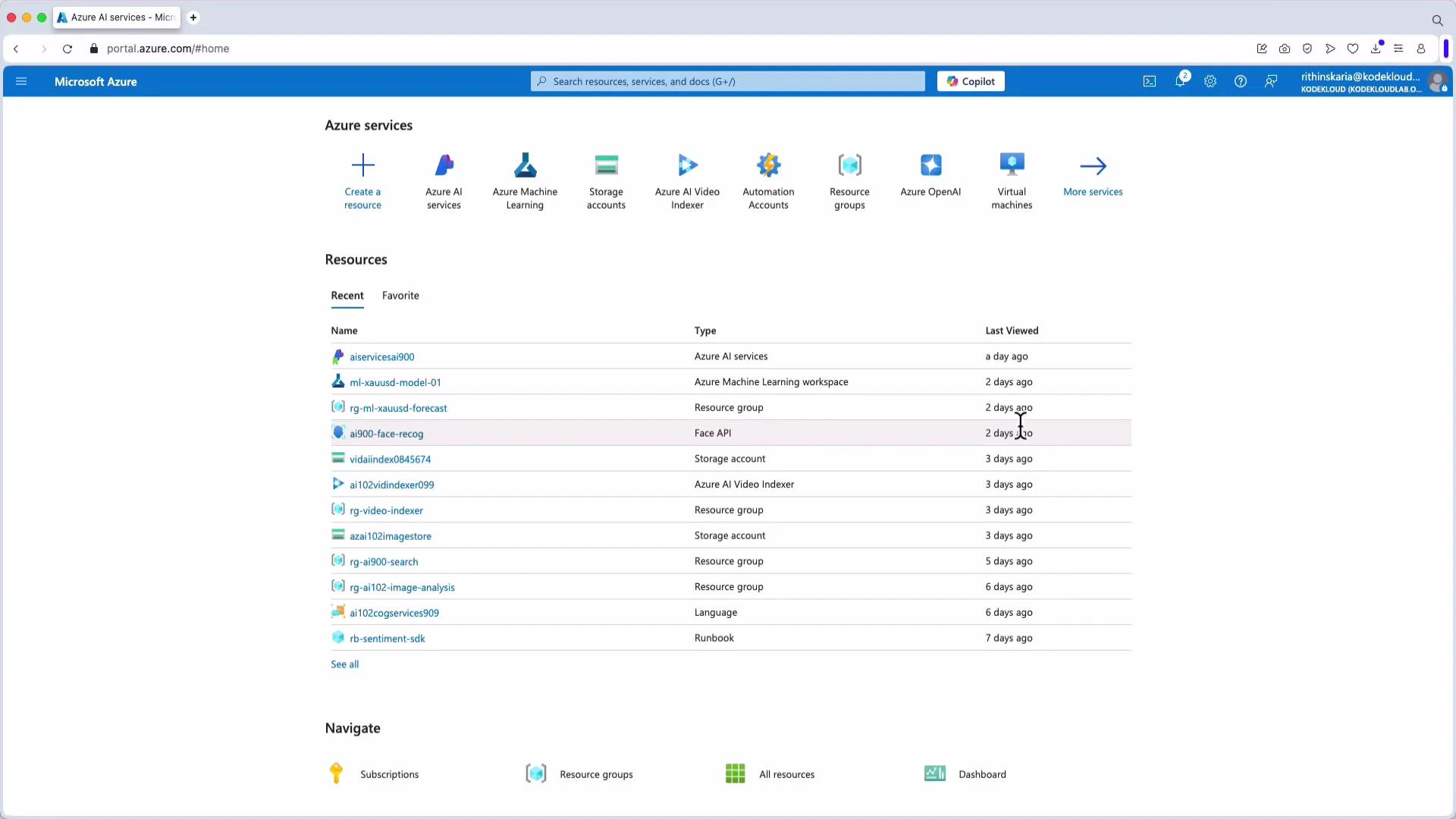

Open the Azure portal and navigate to Language Studio.

-

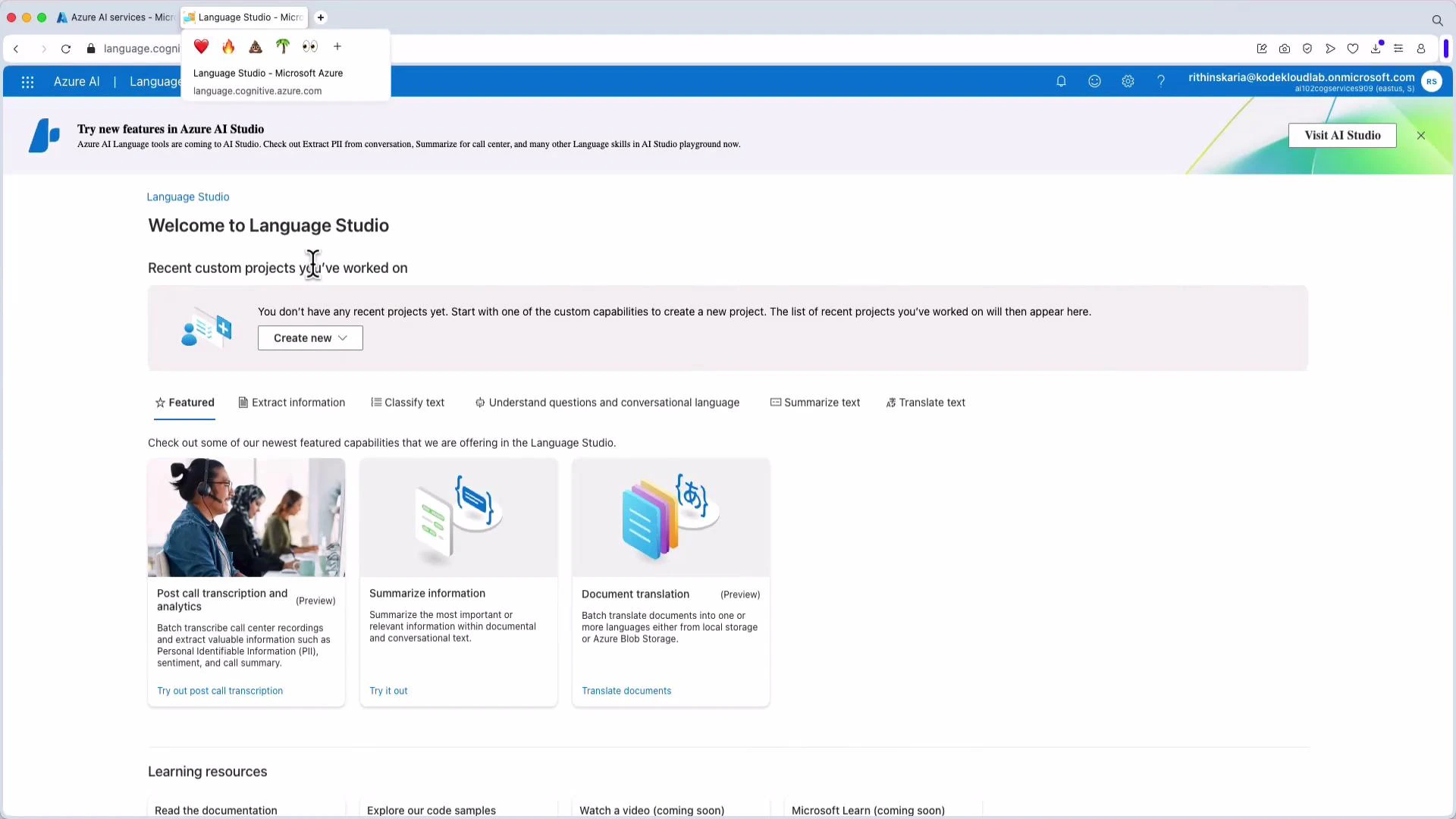

In Language Studio, choose “Custom Question Answering” to create a new project.

-

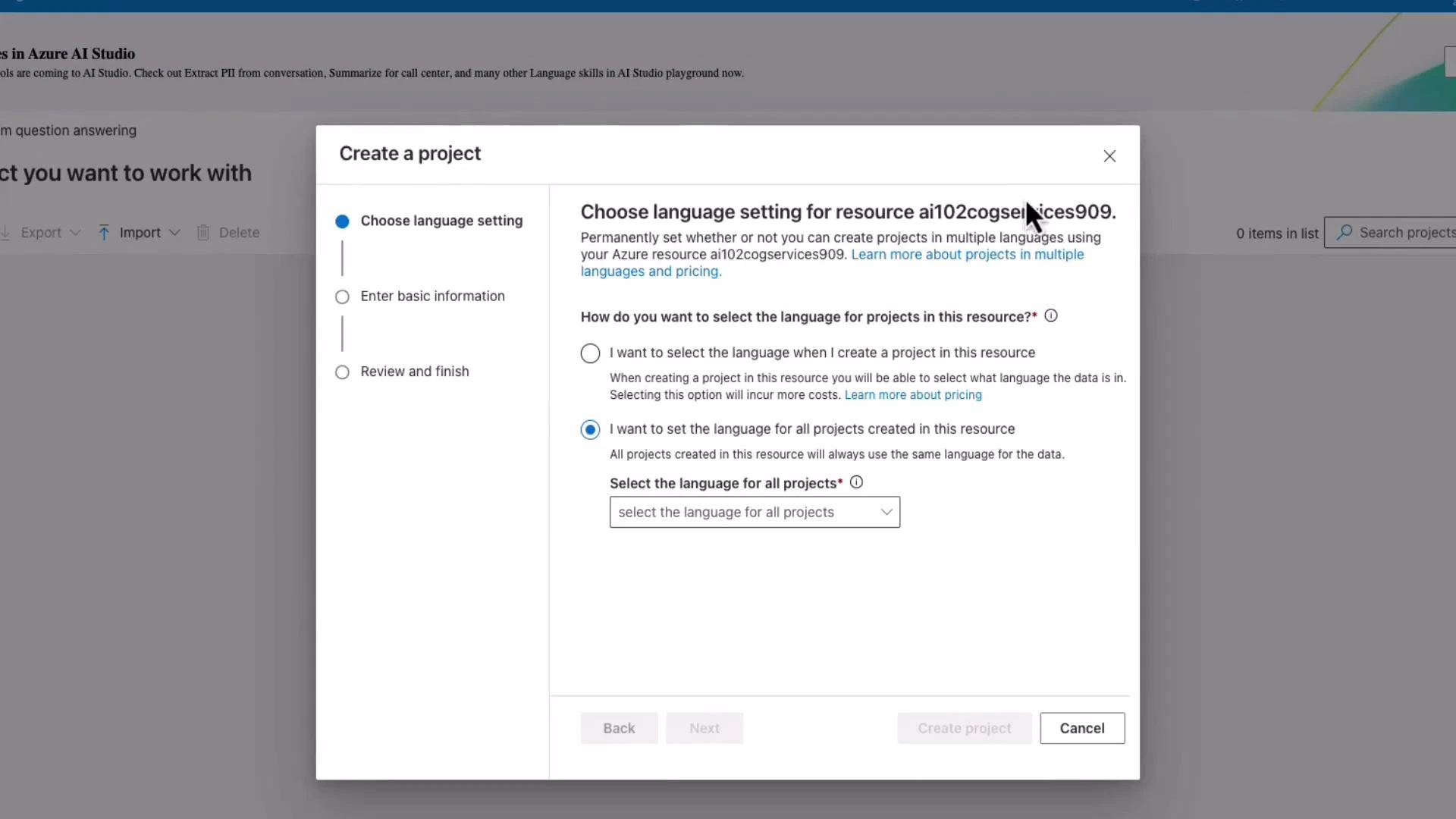

Select the project language and the Azure AI Search (Cognitive Search) resource that will be used for indexing. In some scenarios, an Azure Cognitive Search resource is required to enable AI-powered indexing.

-

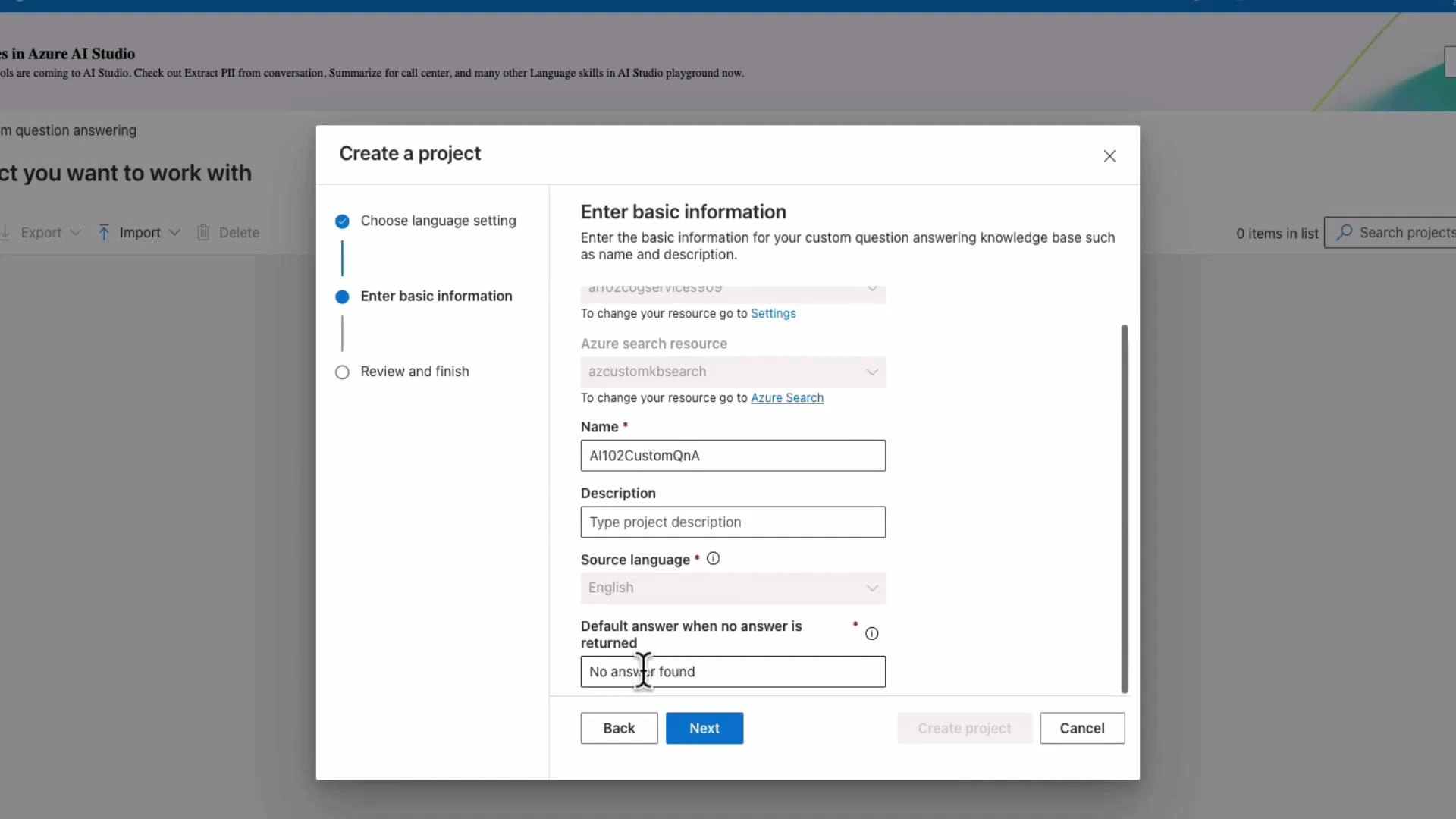

Provide a project name (for example, AI102CustomQnA), set a default fallback answer (for example, “I don’t know” or “No answer found”), and create the project.

-

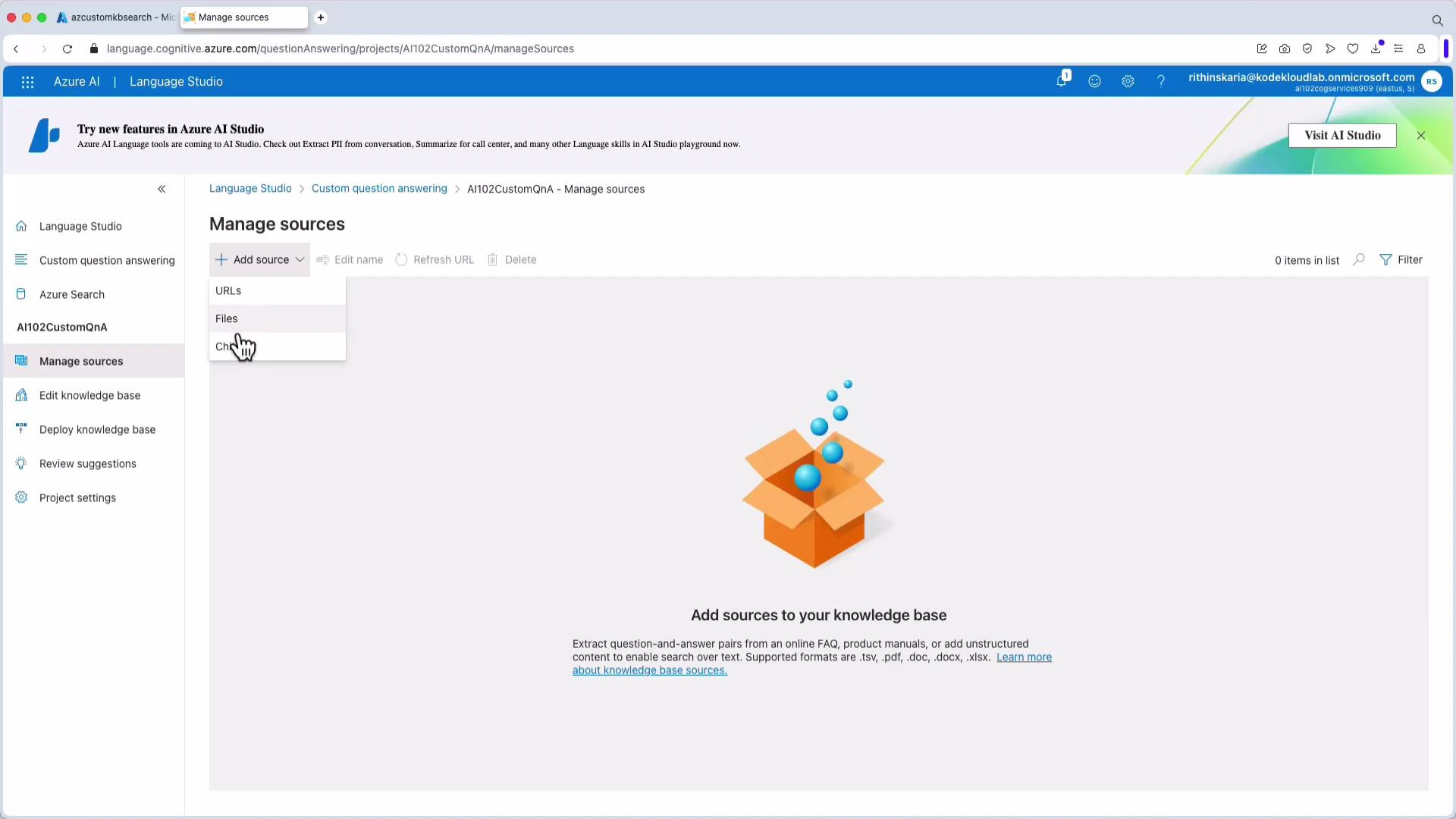

Add data sources to the knowledge base: upload files (TSV/CSV/QnA formats), point to FAQ pages (URLs), or include built-in chit-chat personalities for conversational tone.

-

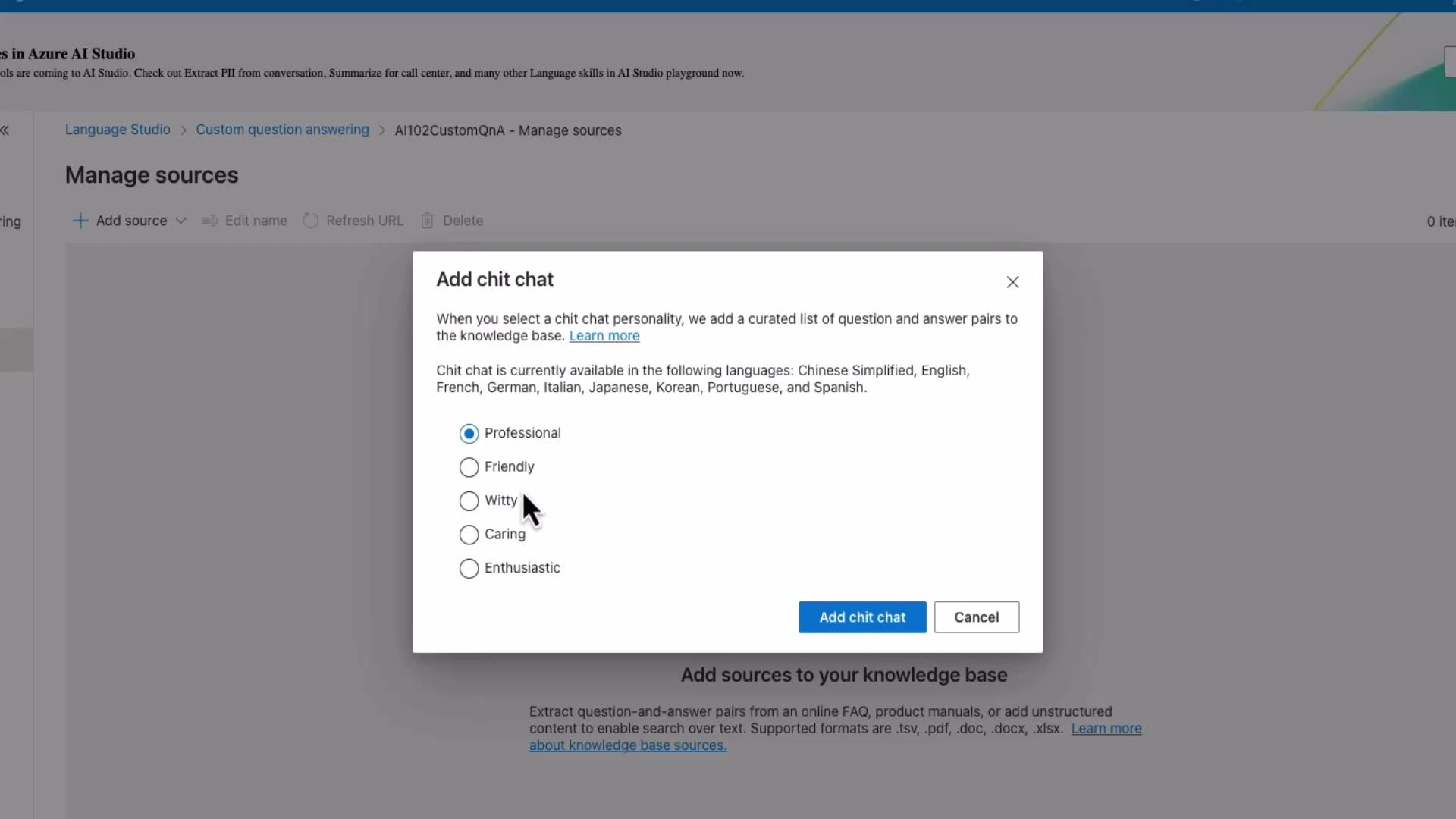

Optionally add a chit-chat personality to control conversational style (professional, friendly, witty, caring, enthusiastic).

-

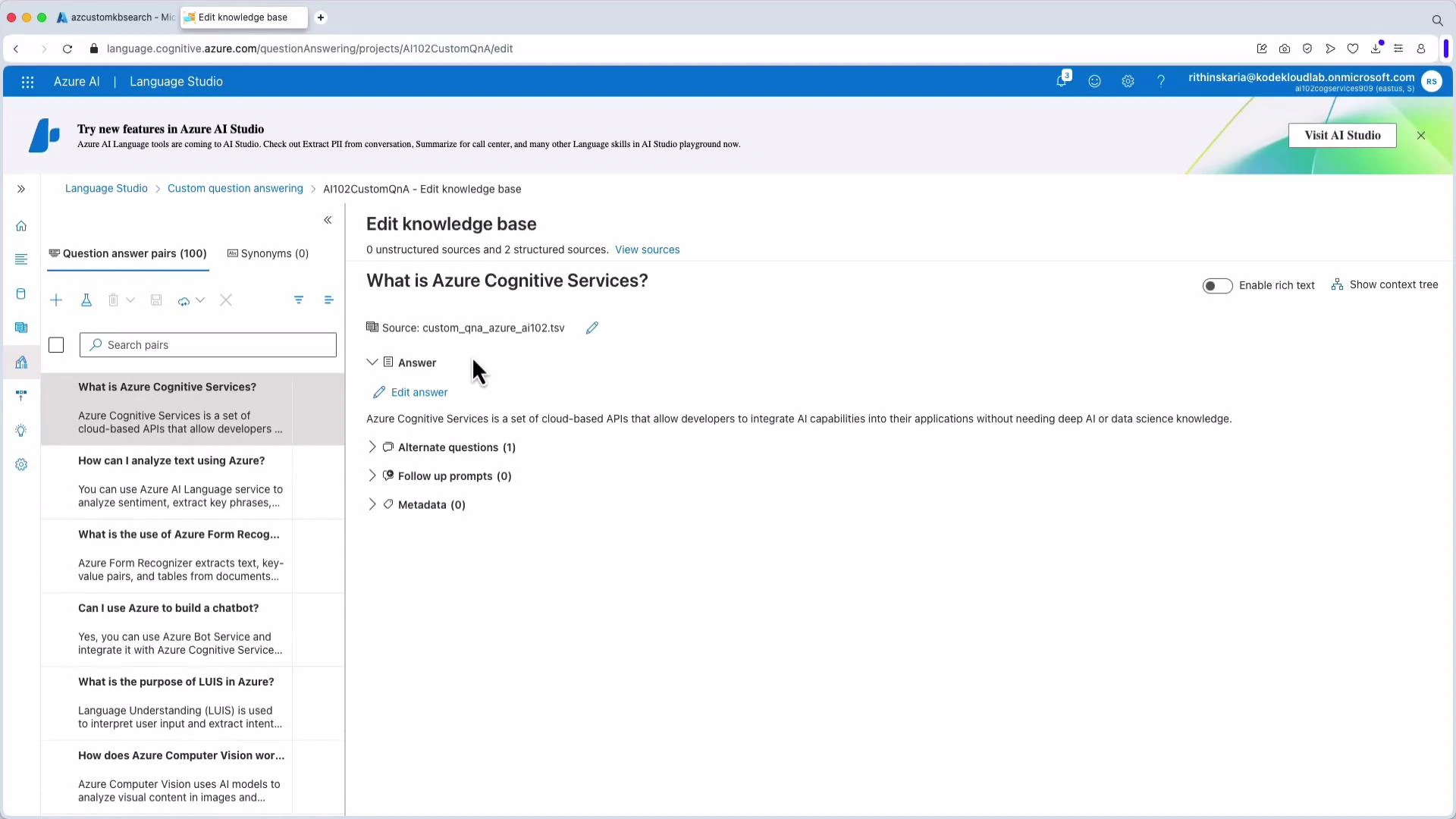

After adding sources (for example, a TSV named “AI 102”), edit QnA pairs and metadata in the knowledge base to fine-tune matches and filtering behavior.

-

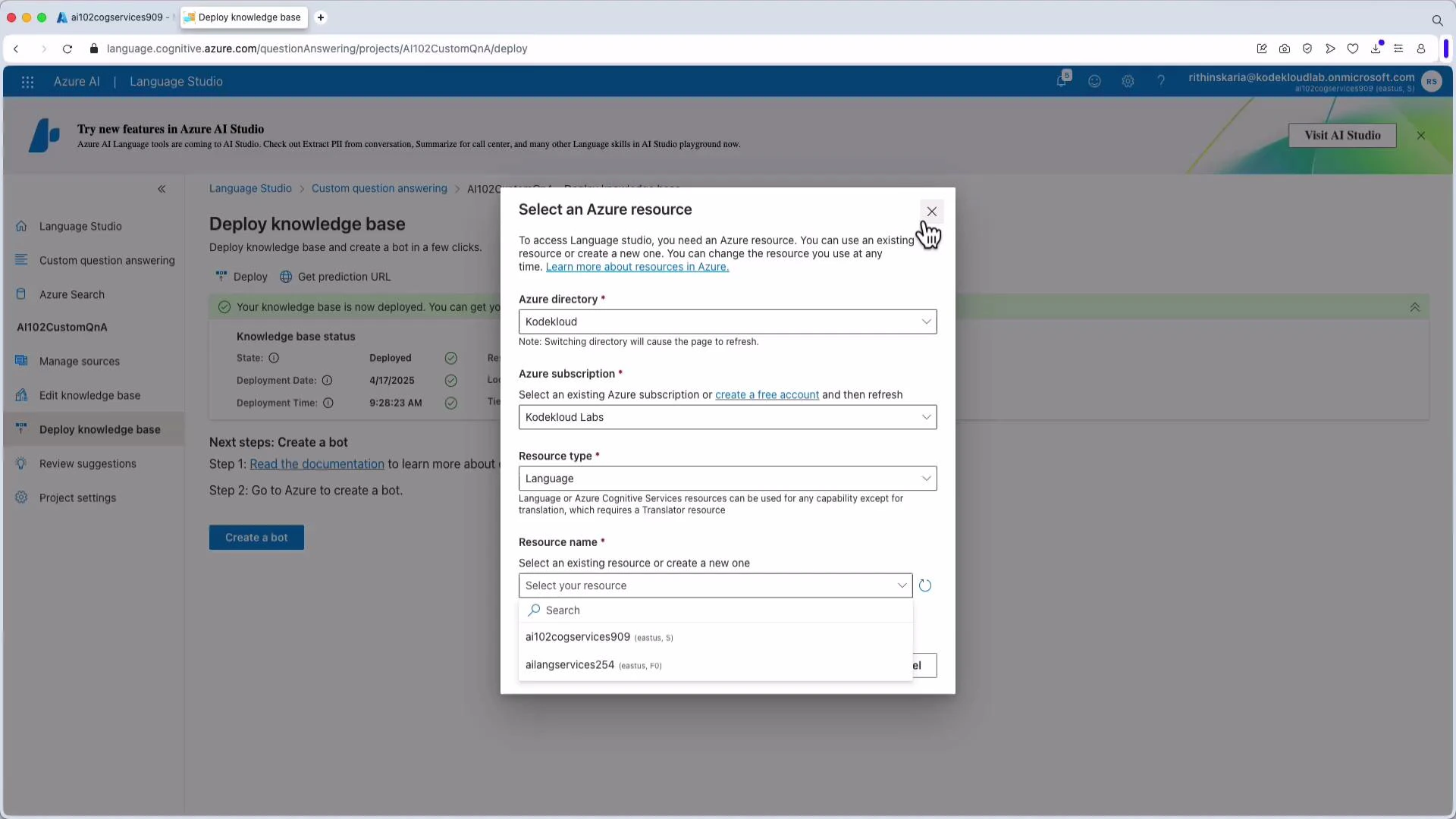

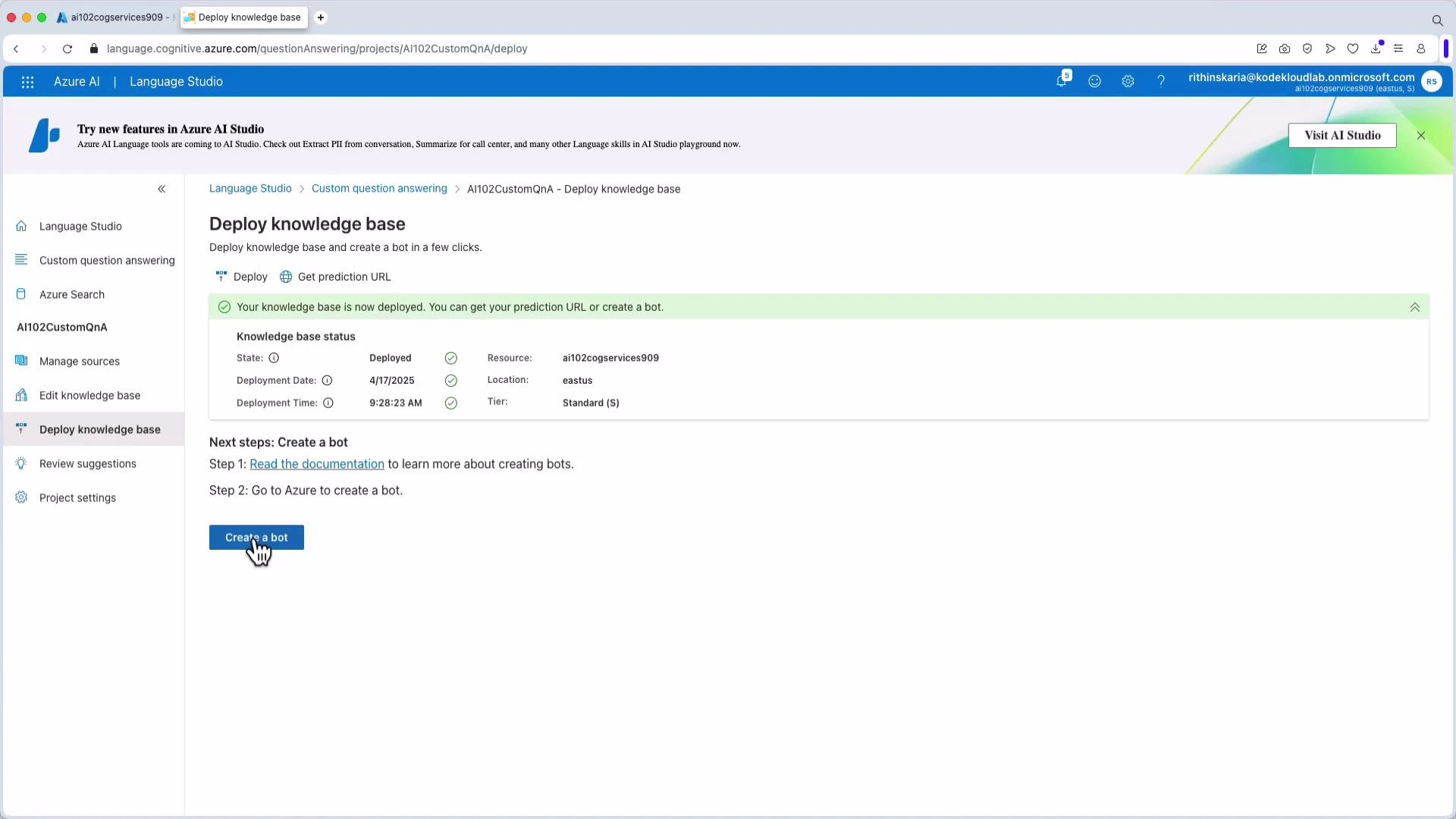

Deploy (publish) the knowledge base. Deployment usually takes a few minutes. After deployment you will receive a prediction endpoint URL and a prediction key (Ocp-Apim-Subscription-Key) to use when querying programmatically.

For production scenarios, consider using Azure Active Directory (Azure AD) authentication instead of subscription keys. Check the API version documentation for supported authentication methods and best practices.

- Optionally create a bot connected to the deployed knowledge base and integrate it with web apps, App Service, virtual machines, or other channels.

Example: Calling the prediction endpoint
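
As a rough sketch of a direct REST call using only the Python standard library, the code below builds a POST request for a deployed project. The URL path, the `api-version` value, and the newer-style `confidenceScoreThreshold` field are assumptions to verify against the documentation for your API version; the helper names and all angle-bracket placeholders are hypothetical.

```python
import json
import urllib.parse
import urllib.request

API_VERSION = "2021-10-01"  # assumption: confirm the version your resource supports


def build_query_request(endpoint, project, deployment, key, question,
                        top=2, confidence_threshold=0.2):
    """Build (but do not send) a POST request to the prediction endpoint."""
    query = urllib.parse.urlencode({
        "projectName": project,
        "api-version": API_VERSION,
        "deploymentName": deployment,
    })
    # Assumed URL layout for the query operation.
    url = f"{endpoint.rstrip('/')}/language/:query-knowledgebases?{query}"
    body = {
        "question": question,
        "top": top,
        "confidenceScoreThreshold": confidence_threshold,
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Ocp-Apim-Subscription-Key": key,  # prediction key from deployment
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(request, timeout=10):
    """Send the request and return the parsed JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    req = build_query_request(
        "https://<your-resource>.cognitiveservices.azure.com",
        "<project-name>", "<deployment-name>", "<prediction-key>",
        "How do I call the API?",
    )
    print(req.full_url)
    # print(ask(req))  # uncomment once real values are filled in
```

Separating request construction from sending makes it possible to inspect the URL, headers, and body before any network call is made.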

Language Studio provides two common examples for a deployed project: a curl POST and a Python snippet that calls the REST endpoint directly (no SDK). In either one, replace the placeholders with values from your portal (endpoint, project and deployment names, and keys).

Summary

- Build a Custom Question Answering project in Language Studio: create a project, add sources (files, URLs, chit-chat), edit QnA pairs and metadata, then deploy.

- Use the prediction URL and key (or Azure AD) to query the knowledge base programmatically.

- Tune responses using top, confidence thresholds, and metadata filters.
