Chain-of-thought (CoT) is a prompt-engineering technique that asks a model to expose its intermediate reasoning steps before presenting a final answer. Asking for this explicit decomposition encourages the model to build a logical progression, which often improves accuracy, transparency, and explainability.

This lesson uses a concise example: ask the model which subject is “easy to understand but hard to master,” and instruct it to break down its thinking before answering. Modern LLMs (for example, ChatGPT-4.5) often include an analysis or reasoning section showing how they arrived at a conclusion when prompted for a chain-of-thought.

Step-by-step CoT workflow:

- Define evaluation criteria.

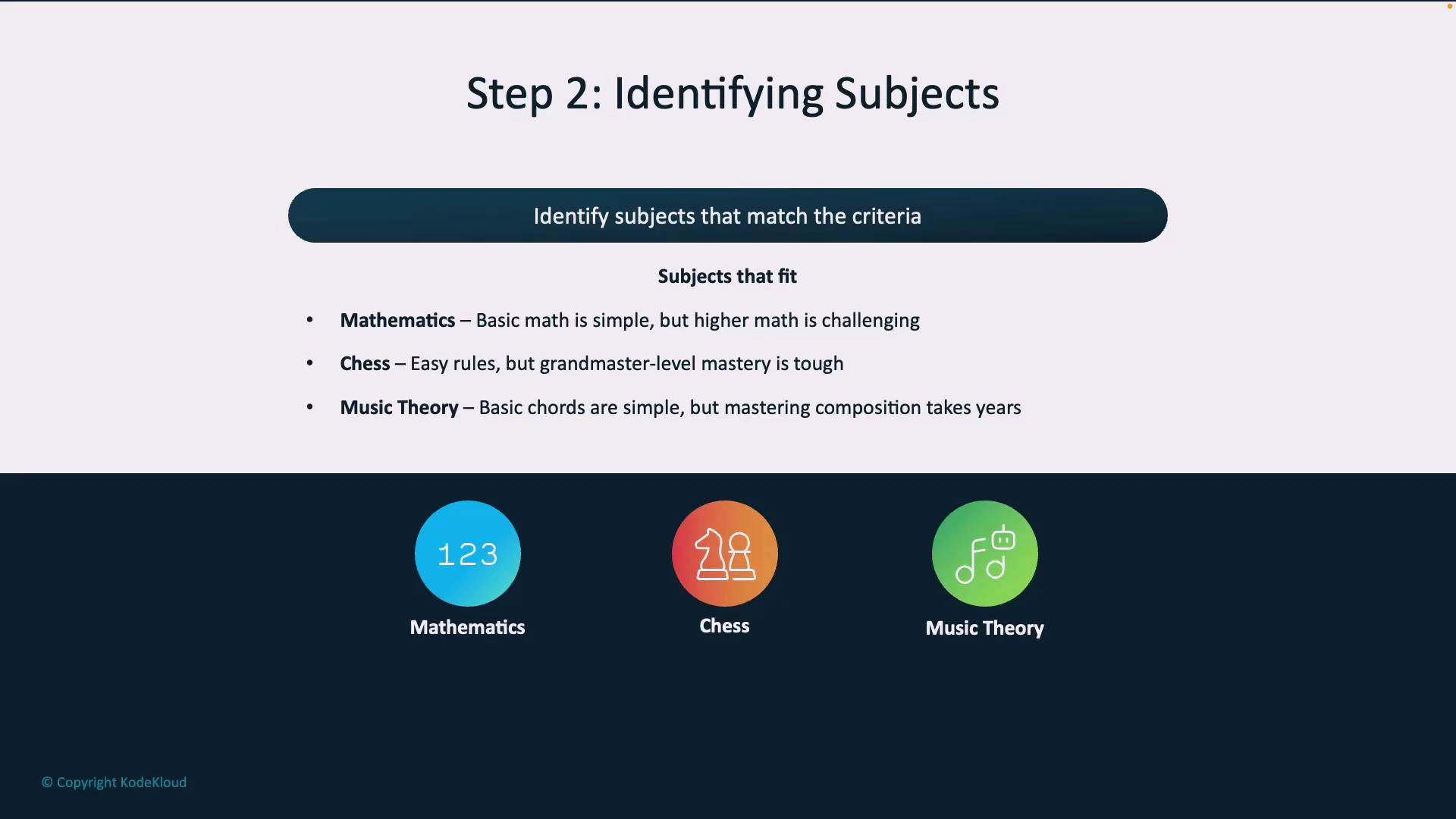

- Identify candidate subjects that match both ends of the spectrum.

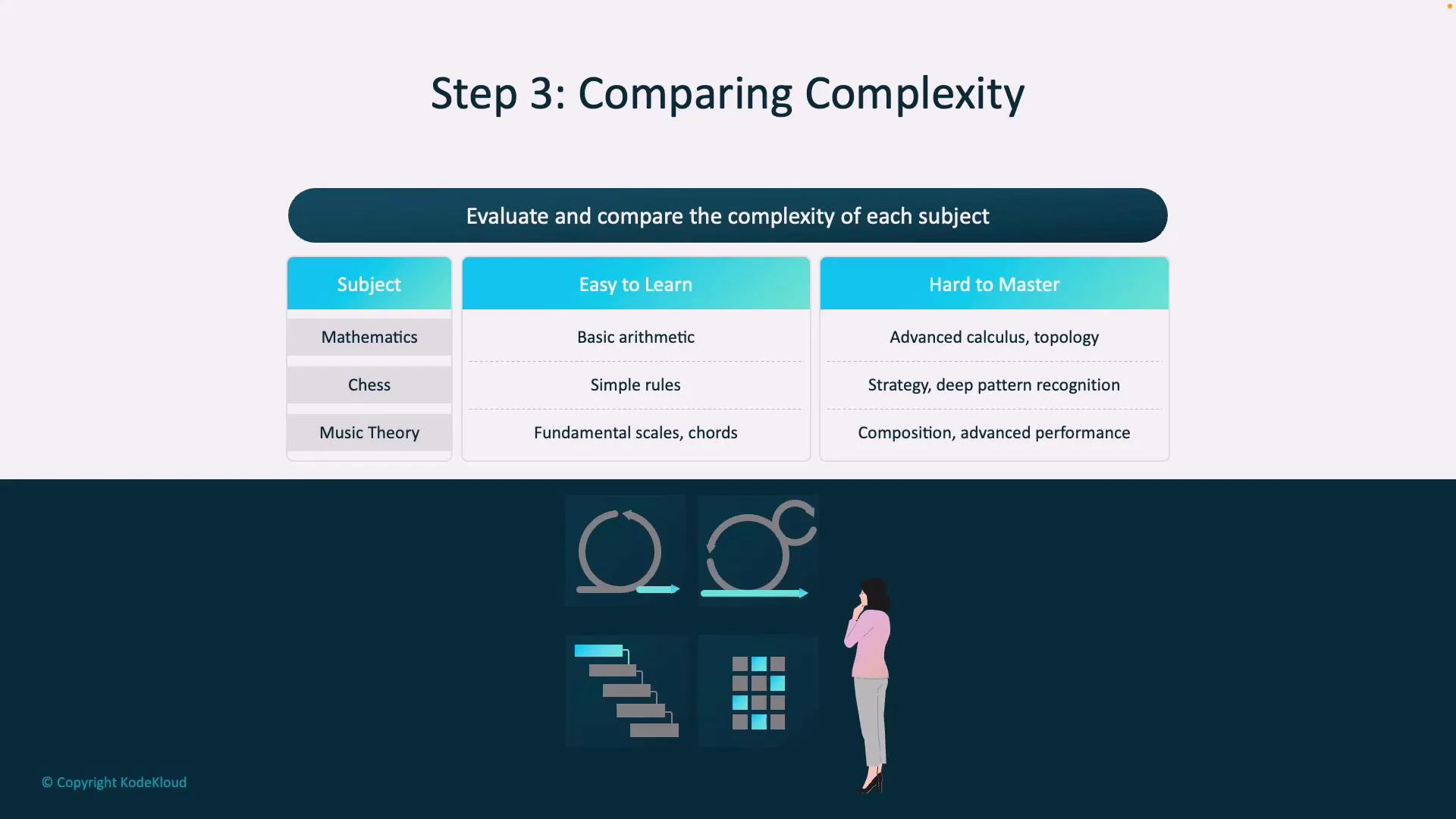

- Compare candidates against the criteria with concrete examples.

- Draw a reasoned conclusion based on the comparison.
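The workflow above can be sketched as a small prompt builder. This is a minimal illustration rather than part of the original lesson: the helper name `build_cot_prompt`, the instruction wording, and the `Final answer:` marker are all assumptions, and the resulting string would be passed to whichever model client you use.

```python
from typing import Sequence

# Hypothetical helper: assembles a chain-of-thought prompt from a question
# and an ordered list of reasoning steps. Names and wording are illustrative.
COT_STEPS = (
    "Define evaluation criteria.",
    "Identify candidate subjects that match both ends of the spectrum.",
    "Compare candidates against the criteria with concrete examples.",
    "Draw a reasoned conclusion based on the comparison.",
)


def build_cot_prompt(question: str, steps: Sequence[str] = COT_STEPS) -> str:
    """Return a prompt that asks for numbered reasoning before the answer."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        f"Question: {question}\n\n"
        "Break down your thinking before answering. Work through these steps:\n"
        f"{numbered}\n\n"
        "Then give your conclusion on a line starting with 'Final answer:'."
    )


prompt = build_cot_prompt("Which subject is easy to understand but hard to master?")
print(prompt)
```

Keeping the steps in a separate sequence makes it easy to swap in a different decomposition for another question without touching the prompt template.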

Example candidates:

- Mathematics: basic arithmetic is straightforward, but higher-level fields involve abstraction and technical depth.

- Chess: rules are simple to learn, while grandmaster-level play requires long-term pattern recognition and strategic nuance.

- Music theory: basic chords and notation are accessible, but professional composition and performance demand deep theoretical and practical skill.

| Subject | Easy to Learn | Hard to Master |
|---|---|---|
| Mathematics | Basic arithmetic, elementary problem solving | Abstract branches (topology, real analysis), research-level problem solving |
| Chess | Piece movements, basic tactics | Deep opening theory, endgame technique, long-term planning |
| Music theory | Major/minor scales, basic chords | Composition, advanced harmony, virtuoso performance |

Benefits of CoT prompting:

- Improves transparency and traceability of model outputs.

- Helps catch flawed reasoning earlier by exposing intermediate steps.

- Useful for tasks that require planning, multi-step calculations, or justification.

Limitations and caveats:

- Long chains can still include incorrect or misleading intermediate steps, so always verify critical conclusions.

- Explicit CoT may increase token usage and response length.

- Some models may decline to show internal chain-of-thought; consider using “explain your reasoning” style prompts that produce structured analyses.
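One way to act on these caveats is to separate the visible reasoning from the conclusion so each step can be reviewed on its own. The sketch below assumes a hypothetical response format (numbered steps followed by a line starting with `Final answer:`). Real model output varies, so treat the parsing rules, the `split_reasoning` name, and the sample text as illustrative only.

```python
import re
from typing import NamedTuple


class ParsedResponse(NamedTuple):
    steps: list[str]   # reasoning steps, in order of appearance
    final_answer: str  # empty string if no marker line was found


def split_reasoning(text: str, marker: str = "Final answer:") -> ParsedResponse:
    """Split a response into numbered steps and the final answer.

    Assumes the (hypothetical) format requested in the prompt: lines such as
    "1. ..." for steps and one line beginning with the marker.
    """
    steps: list[str] = []
    final_answer = ""
    for line in text.splitlines():
        stripped = line.strip()
        if stripped.lower().startswith(marker.lower()):
            final_answer = stripped[len(marker):].strip()
        else:
            match = re.match(r"^\d+[.)]\s+(.*)$", stripped)
            if match:
                steps.append(match.group(1))
    return ParsedResponse(steps, final_answer)


# Hand-written sample text standing in for a model response.
sample = """1. Criteria: low barrier to entry, high skill ceiling.
2. Candidates: mathematics, chess, music theory.
3. Chess rules are simple to learn, but grandmaster-level play is not.
Final answer: Chess"""

parsed = split_reasoning(sample)
for number, step in enumerate(parsed.steps, start=1):
    print(f"Review step {number}: {step}")
print("Conclusion to verify:", parsed.final_answer)
```

Splitting the response this way does not validate the reasoning by itself; it only makes each intermediate step easy to inspect or cross-check before the conclusion is trusted.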

Chain-of-thought prompts often improve reasoning and explainability. However, longer chains can still contain incorrect steps, so verify any critical conclusions and cross-check them with trusted sources.

| Resource | Description |
|---|---|
| Prompt engineering overview | Concepts and best practices for crafting prompts. |
| Chain-of-thought research paper summary | Academic literature describing CoT and its effects on reasoning. |
| OpenAI best practices | Practical guidelines for prompting large language models. |