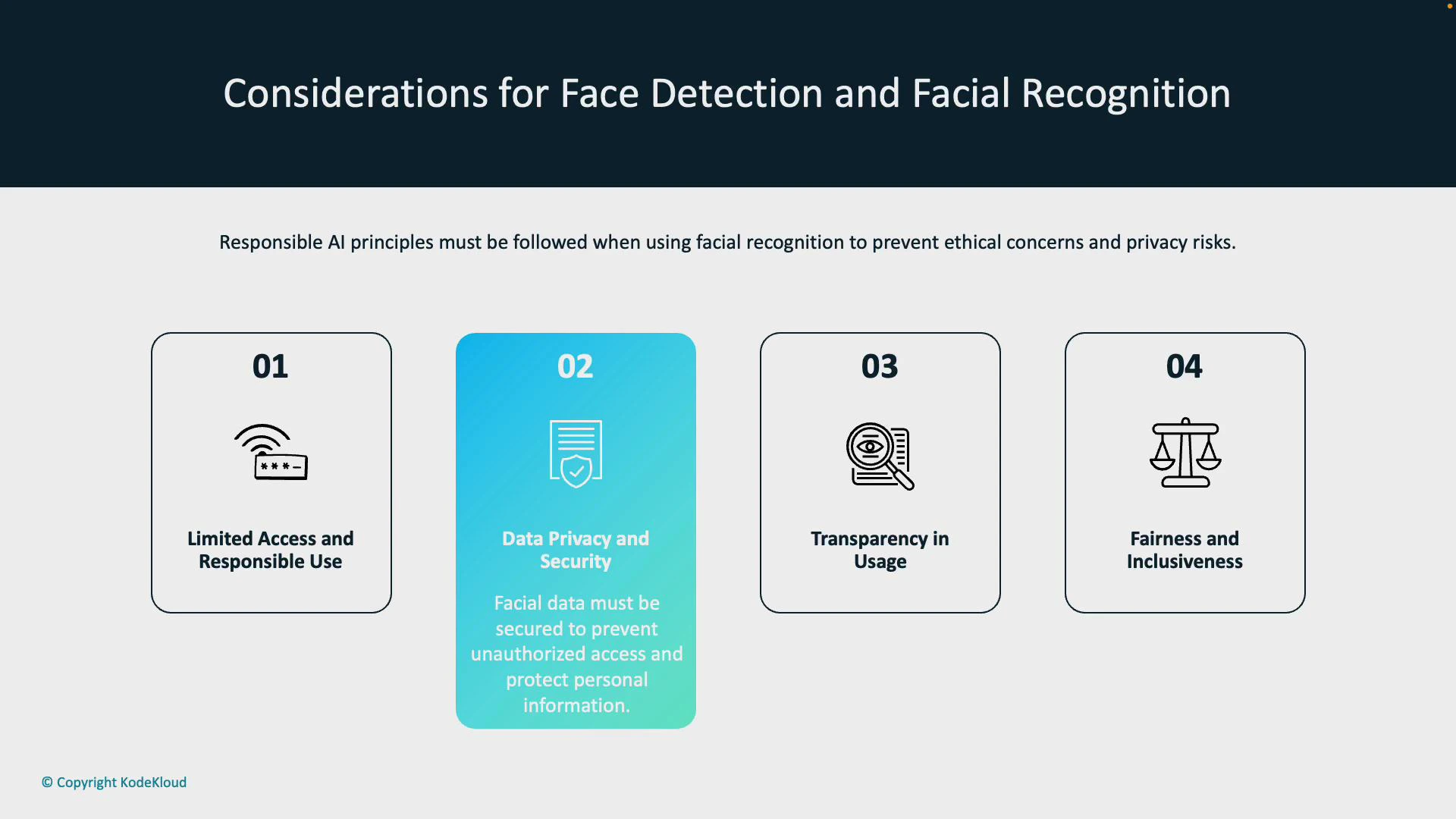

When deploying face detection and face recognition systems, follow responsible AI principles to reduce ethical risk, protect privacy, and comply with legal requirements. The guidance below summarizes the key areas to address and the practical actions that engineering, security, and compliance teams should take.

Core considerations:

- Limited access and responsible use

- Data privacy and security

- Transparency in usage

- Fairness and inclusiveness

1. Limited access and responsible use

Face recognition should not be enabled by default. Restrict activation and management to authorized personnel and justified use cases only. Many cloud providers (for example, Microsoft Azure’s Face service) require documented justification or an approval workflow before enabling certain biometric features. Enforce administrative controls, role-based permissions, and approval gates for sensitive scenarios.

Limit access with role-based permissions, require documented use-case approvals, and maintain logging and audit trails so decisions to enable face recognition are accountable and traceable.
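The controls described above (off by default, role-based approval, documented justification, audit trail) can be sketched in a few lines of Python. This is a minimal illustration, not a provider API: the `FeatureGate` class, the `biometrics_admin` role name, and the log fields are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role name; a real deployment would map this to its own IAM roles.
APPROVER_ROLES = {"biometrics_admin"}


@dataclass
class FeatureGate:
    """Tracks whether face recognition is enabled and records every attempt."""
    enabled: bool = False  # off by default
    audit_log: list = field(default_factory=list)

    def _record(self, actor, action, allowed, justification):
        # Log both approved and denied attempts so decisions stay traceable.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "allowed": allowed,
            "justification": justification,
        })

    def enable(self, actor, role, justification):
        """Enable the feature only for an approver role with a written justification."""
        allowed = role in APPROVER_ROLES and bool(justification.strip())
        self._record(actor, "enable_face_recognition", allowed, justification)
        if allowed:
            self.enabled = True
        return allowed


gate = FeatureGate()
denied = gate.enable("dev-1", "developer", "testing")
approved = gate.enable("admin-1", "biometrics_admin", "Building access pilot, approved use case")
```

Recording denied attempts alongside approved ones is a deliberate choice here: the audit trail should show who tried to enable the feature, not only who succeeded.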

2. Data privacy and security

Facial images and biometric templates are sensitive personal data and must be protected accordingly. Apply a defense-in-depth approach:

- Encrypt data in transit and at rest.

- Enforce strict access controls and separation of duties.

- Minimize data collection: capture only what is necessary for the declared purpose.

- Define and implement retention schedules; securely delete or anonymize data when no longer required.

- Use secure storage, strong key management, and rotate keys as appropriate.

- Maintain audit logging and monitoring of access and processing operations.

- Obtain clear consent or another lawful basis for collection where required by local law.

Be aware of legal and regulatory obligations, such as the GDPR and US state biometric privacy laws (for example, the Illinois Biometric Information Privacy Act). Processing biometric data without a lawful basis or proper consent can lead to significant penalties and reputational harm.
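As one illustration of the retention-schedule item in the list above, the following sketch selects stored records that have outlived their retention period. The 30-day period, the record layout, and the `expired_ids` helper are assumptions made for the example; the actual period should come from your documented policy, and secure deletion itself depends on your storage system.

```python
from datetime import datetime, timedelta, timezone

# Assumed retention period for illustration only.
RETENTION = timedelta(days=30)


def expired_ids(records, now, retention=RETENTION):
    """Return ids of records captured at or before `now - retention`."""
    cutoff = now - retention
    return [r["id"] for r in records if r["captured_at"] <= cutoff]


now = datetime(2024, 6, 30, tzinfo=timezone.utc)
records = [
    {"id": "a", "captured_at": datetime(2024, 5, 1, tzinfo=timezone.utc)},
    {"id": "b", "captured_at": datetime(2024, 6, 20, tzinfo=timezone.utc)},
]
to_delete = expired_ids(records, now)  # candidates for secure deletion or anonymization
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the purge logic easy to test and to audit.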

3. Transparency in usage

Transparency builds trust and reduces user confusion. Communicate clearly about biometric collection and processing:

- Provide visible notices (signage or UI prompts) at the point of collection.

- Document purpose, retention periods, and access rights in your privacy notice.

- Offer opt-out mechanisms where feasible and document alternatives for users who decline.

- Maintain stakeholder documentation explaining system design, intended uses, limits, and potential risks.

4. Fairness and inclusiveness

Design and evaluate systems to avoid unequal performance across demographic groups:

- Use diverse, representative datasets for training and testing.

- Evaluate performance across subgroups (age, gender, skin tone) using metrics like false positive rate, false negative rate, and overall accuracy.

- Apply bias-mitigation techniques in data preparation, model selection, and post-processing.

- Keep human oversight for high-impact decisions and provide channels for appeal or correction.

- Monitor production performance continuously and retrain models when data drift or disparities appear.
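The subgroup evaluation described above can be sketched as follows. The function computes the false positive rate and false negative rate separately for each group from labeled match outcomes. The `subgroup_error_rates` name, the tuple layout, and the toy data are assumptions for illustration; a real evaluation would use a much larger, representative test set.

```python
from collections import defaultdict


def subgroup_error_rates(samples):
    """samples: iterable of (group, truth_is_match, predicted_is_match).

    Returns {group: {"fpr": ..., "fnr": ...}}; a rate is None when it is undefined
    (no negatives or no positives for that group).
    """
    counts = defaultdict(lambda: {"fp": 0, "tn": 0, "fn": 0, "tp": 0})
    for group, truth, pred in samples:
        if truth:
            key = "tp" if pred else "fn"
        else:
            key = "fp" if pred else "tn"
        counts[group][key] += 1

    rates = {}
    for group, c in counts.items():
        negatives = c["fp"] + c["tn"]
        positives = c["fn"] + c["tp"]
        rates[group] = {
            "fpr": c["fp"] / negatives if negatives else None,
            "fnr": c["fn"] / positives if positives else None,
        }
    return rates


# Toy data: two hypothetical groups with four labeled comparisons each.
samples = [
    ("group_a", True, True), ("group_a", True, True),
    ("group_a", False, False), ("group_a", False, False),
    ("group_b", True, False), ("group_b", True, True),
    ("group_b", False, True), ("group_b", False, False),
]
rates = subgroup_error_rates(samples)
```

Comparing the per-group rates side by side, rather than looking only at overall accuracy, is what surfaces the disparities that the monitoring and retraining steps are meant to address.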

Summary

Apply responsible AI principles across the lifecycle of face detection and recognition solutions:

- Control and document access to biometric features.

- Protect facial data through encryption, access control, and data minimization.

- Be transparent with users and provide opt-out or alternatives.

- Evaluate and mitigate bias to ensure fairness and inclusiveness.

Quick reference table

| Consideration | Why it matters | Recommended actions |
|---|---|---|
| Limited access | Prevents unauthorized use and scope creep | Role-based permissions, approval workflows, audit logs |
| Data privacy & security | Facial data is highly sensitive | Encryption, key management, retention policies, logging |
| Transparency | Users need to know how data is used | Notices, privacy statements, opt-outs, documentation |
| Fairness & inclusiveness | Avoids disparate harm to subgroups | Representative datasets, subgroup metrics, bias mitigation |

Links and references

- Azure Face service documentation (Microsoft Learn)

- GDPR overview and guidance

- NIST Face Recognition Vendor Test (FRVT)

- Responsible AI guidance (Microsoft)