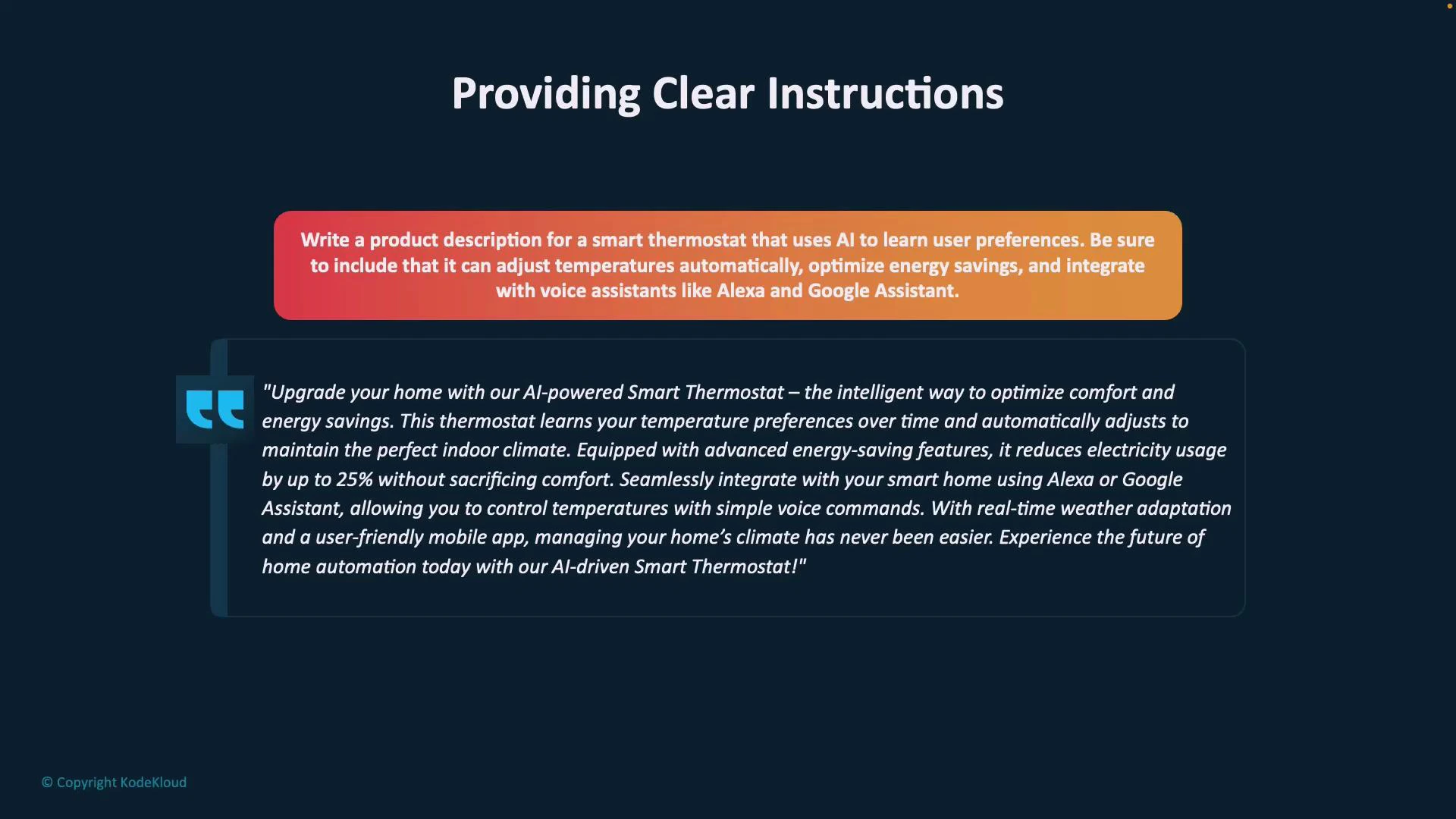

In this lesson we explain how clear, well-structured instructions (prompts) improve the quality of an AI model’s output. Clear prompts reduce ambiguity and help the model produce focused, relevant, and actionable responses.

Why this matters: vague prompts yield generic results. For example, a prompt like “write a product description for a new smart thermostat” gives the model little context. The output may be correct, but it often lacks differentiating features, technical benefits, and a clear audience focus.

To get richer results, provide specifics about capabilities, intended users, tone, and desired format. For example, mentioning AI features, energy-optimization algorithms, and voice-assistant integration will prompt the model to generate a more detailed and compelling product description.

Structure your prompt: primary, supporting, and grounding content

Organizing the prompt into clear sections makes it easier for the model to follow intent and preserve context. Use separators (hyphens, hashes, or labeled headers) to mark sections when your prompt includes multiple documents or long text.

- Primary content: the main input or core idea (e.g., course delivery formats, deadlines, or product specifications). This is the context the model uses to perform the requested task.

- Supporting content: clarifying examples, data, user preferences, or industry context that enrich the primary content.

- Grounding content: constraints or scope-defining elements (timeframe, audience, required length) that keep answers focused and relevant.
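The three content types above can be assembled into one prompt with labeled separators. The following is a minimal sketch, not a prescribed template: the function name `build_prompt`, the `###` header style, and the sample course text are all illustrative assumptions.

```python
def build_prompt(task: str, primary: str, supporting: str = "", grounding: str = "") -> str:
    """Assemble a prompt from labeled sections, skipping any that are empty."""
    sections = [
        ("Primary content", primary),
        ("Supporting content", supporting),
        ("Grounding content", grounding),
    ]
    parts = [task.strip()]
    for label, text in sections:
        if text.strip():
            # A labeled header acts as the separator between sections.
            parts.append(f"### {label}\n{text.strip()}")
    return "\n\n".join(parts)


# Hypothetical sample content, chosen only for illustration.
prompt = build_prompt(
    task="Write a short announcement for the course below.",
    primary="Course: Intro to Kubernetes. Formats: self-paced video, live labs.",
    supporting="Learners have said they prefer hands-on labs over lectures.",
    grounding="Audience: beginners. Length: under 100 words.",
)
print(prompt)
```

Keeping each section under its own label makes it easy to swap the grounding constraints (for example, a different audience or length) without touching the primary content.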

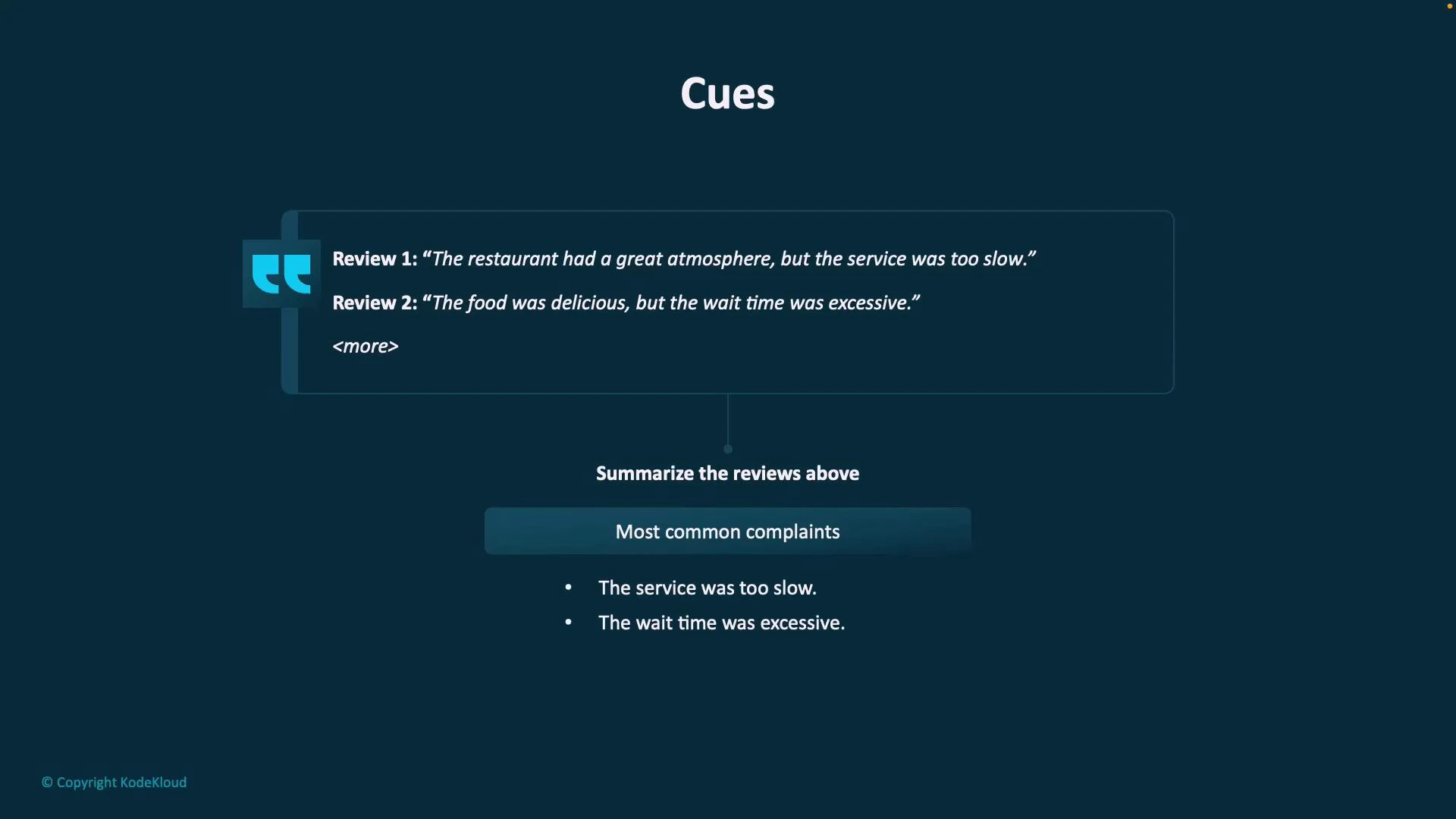

Use cues to control behavior

Cues are short phrases or directives that specify tone, structure, or intent. They tell the model not just what to produce but how to present it. Examples:

- “Summarize the reviews above.”

- “Generate bullet points for a product roadmap.”

- “Explain in simple terms for non-technical stakeholders.”
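As a rough illustration of how a cue attaches to content, the sketch below places the source text first and the cue last, so that a phrase like "the reviews above" has something to point at. The helper `with_cue` and the sample reviews are hypothetical, and the ordering is one reasonable choice rather than a rule.

```python
def with_cue(content: str, cue: str) -> str:
    """Place the source content first, then the cue that says what to do with it."""
    return f"{content.strip()}\n\n{cue.strip()}"


# Hypothetical reviews, made up for this example.
reviews = (
    "Review 1: Setup took five minutes and the app is easy to use.\n"
    "Review 2: The schedule feature kept resetting after updates."
)

cues = [
    "Summarize the reviews above.",
    "Explain in simple terms for non-technical stakeholders.",
]

# The same content paired with different cues yields different prompts.
for cue in cues:
    print(with_cue(reviews, cue))
    print("-" * 40)
```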

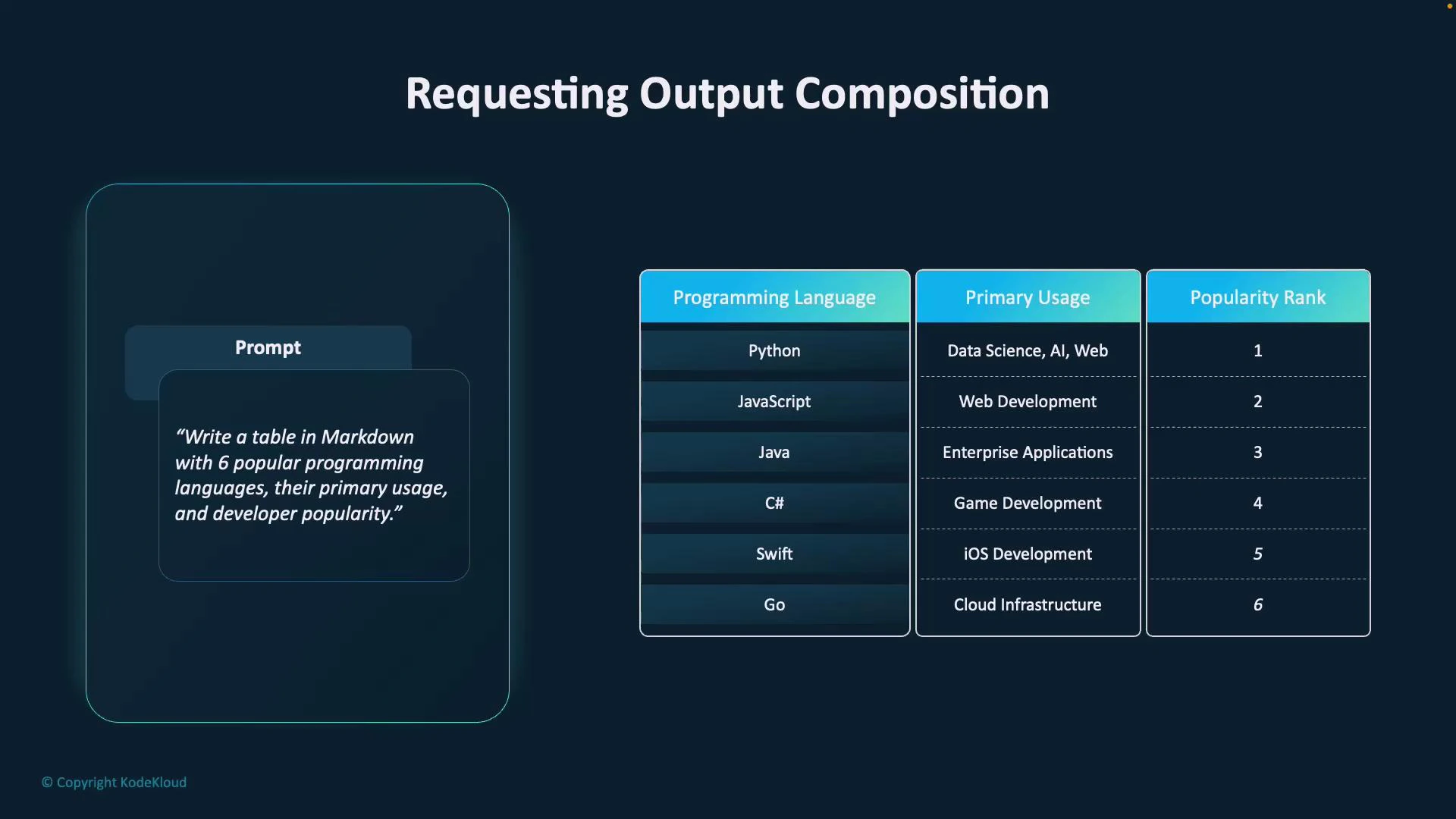

Request structured outputs explicitly

When you need machine-readable or copy/paste-ready results, ask for a specific format (table, CSV, JSON, Markdown). Specify column names or field keys so the model knows exactly what to produce.

| Format | Use case | Example prompt |
|---|---|---|
| Markdown table | Human-readable documentation or README | “Provide a markdown table with columns: Language, Primary usage, Popularity rank.” |
| CSV | Data import into spreadsheets or pipelines | “Output CSV rows with columns: product_id, name, price_usd.” |
| JSON | APIs, automation, or tooling | “Return a JSON array of objects with keys: title, description, tags.” |
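One benefit of naming the exact keys is that the response can be checked in code before it is used. The sketch below validates a reply to the JSON prompt from the table using Python's standard `json` module. The function `parse_items` and the hard-coded `sample_response` are assumptions for illustration; no real model call is made.

```python
import json

# Keys requested in the example prompt above.
REQUIRED_KEYS = {"title", "description", "tags"}


def parse_items(raw: str) -> list[dict]:
    """Parse a JSON array of objects and check each one has the requested keys."""
    data = json.loads(raw)  # raises json.JSONDecodeError on malformed JSON
    if not isinstance(data, list):
        raise ValueError("expected a JSON array")
    for i, item in enumerate(data):
        if not isinstance(item, dict):
            raise ValueError(f"item {i} is not an object")
        missing = REQUIRED_KEYS - set(item)
        if missing:
            raise ValueError(f"item {i} is missing keys: {sorted(missing)}")
    return data


# Hypothetical model response, hard-coded for illustration.
sample_response = (
    '[{"title": "Smart thermostat", '
    '"description": "Learns your schedule.", '
    '"tags": ["home", "energy"]}]'
)
items = parse_items(sample_response)
print(items[0]["title"])
```

If validation fails, a common follow-up is to re-send the prompt with the error message appended, though whether that helps depends on the model and the task.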

Practical tips and final checklist

- Start with a clear primary content block that includes the essential context.

- Add supporting details: examples, metrics, or user personas.

- Add grounding constraints: timeframes, audience, length, or exclusions.

- Add explicit cues: desired style, structure, or output format.

- When needed, request machine-readable output and list the exact columns/keys.
