Reviewing and improving a machine learning model is a recurring, critical phase in any ML workflow. This guide walks through practical steps to evaluate model performance, find gaps, and iterate so the model produces accurate, robust predictions over time.

Training and evaluation overview

After labeling data, train your model (or fine-tune a pretrained backbone) so it can generalize to unseen examples. Once training finishes, evaluate with metrics that match your task and business needs. Key evaluation metrics:

| Metric | Definition | When to use |
|---|---|---|
| Precision | TP / (TP + FP): proportion of predicted positives that are correct | When false positives are costly (e.g., spam detection) |
| Recall | TP / (TP + FN): proportion of actual positives detected | When missing true positives is costly (e.g., medical diagnosis) |
| F1 score | 2 * (Precision * Recall) / (Precision + Recall): harmonic mean of precision and recall | When you need a single balanced metric |
| NER F1 (entity-level) | F1 computed on exact span + label matches | Use for strict named-entity recognition evaluation |
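The formulas in the table can be sketched in a few lines of plain Python. This is a minimal illustration, not a replacement for an evaluation library: the function names and the `(start, end, label)` tuple used to represent an entity are assumptions made for this example.

```python
from typing import Iterable, Tuple

# Assumed representation of an entity: (start offset, end offset, label).
Entity = Tuple[int, int, str]


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Compute precision, recall, and F1 from raw counts.

    Any metric whose denominator is zero is reported as 0.0.
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def entity_level_scores(gold: Iterable[Entity], pred: Iterable[Entity]):
    """Strict entity-level scores: a prediction counts only if span AND label match."""
    gold_set, pred_set = set(gold), set(pred)
    tp = len(gold_set & pred_set)   # exact matches
    fp = len(pred_set - gold_set)   # predicted but not in gold
    fn = len(gold_set - pred_set)   # in gold but missed
    return precision_recall_f1(tp, fp, fn)


# Usage: the second predicted span is off by one character, so it does not count.
gold = [(0, 5, "PER"), (10, 16, "ORG")]
pred = [(0, 5, "PER"), (10, 15, "ORG")]
print(precision_recall_f1(tp=8, fp=2, fn=4))
print(entity_level_scores(gold, pred))
```

Note how the off-by-one span is penalized twice under strict matching: once as a false positive and once as a false negative.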

Suspiciously high or perfect scores often point to one of the following:

- a dataset that is too small or overly simplistic,

- data leakage between training and test sets,

- or an evaluation set that doesn’t reflect real-world variability.

Perfect evaluation scores are typically a red flag. Before trusting such results, verify that there is no data leakage, confirm the evaluation set size and representativeness, and inspect your train/validation/test splits.
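One simple leakage check is to look for test examples that also occur in the training set. The sketch below assumes text data and treats two examples as duplicates when they match after lowercasing and whitespace normalization; the helper names are hypothetical, and real pipelines may need fuzzier matching (near-duplicates, shared document IDs, and so on).

```python
from typing import Iterable, Set


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.lower().split())


def find_leaked_examples(train_texts: Iterable[str], test_texts: Iterable[str]) -> Set[str]:
    """Return the normalized test examples that also appear in the training set."""
    train_seen = {normalize(t) for t in train_texts}
    return {normalize(t) for t in test_texts if normalize(t) in train_seen}


# Usage with made-up examples.
train = ["Refund my order", "Where is my package?"]
test = ["refund  my ORDER", "Cancel subscription"]
leaked = find_leaked_examples(train, test)
print(f"{len(leaked)} of {len(test)} test examples also appear in training data")
```

A non-empty result means the reported scores are at least partly measuring memorization rather than generalization.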

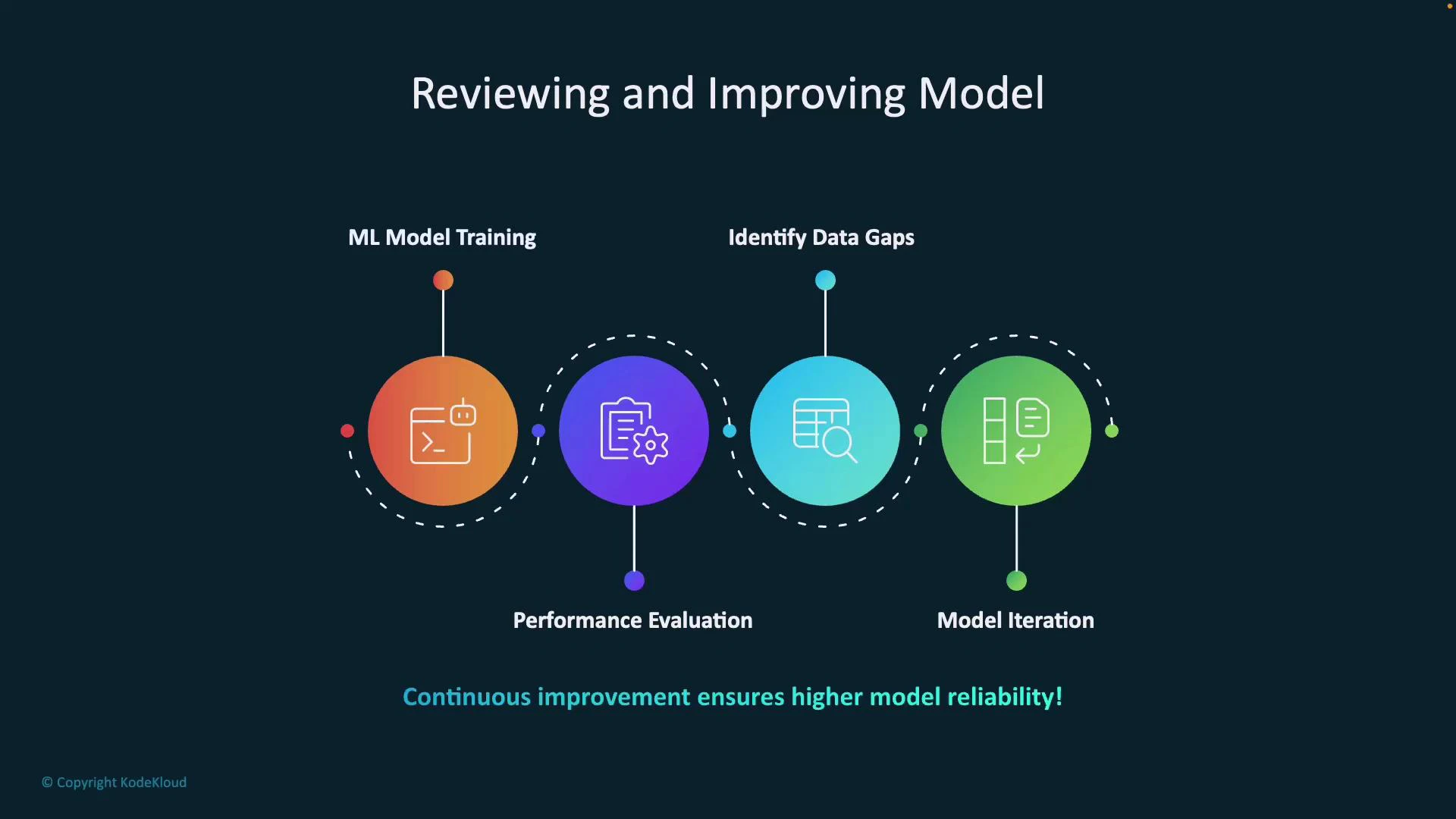

Iterative improvement cycle

Improving a model is an iterative loop. A practical cycle looks like:

- Train or fine-tune the model on labeled data.

- Evaluate using appropriate metrics and robust held-out data (train/validation/test splits or cross-validation).

- Perform error analysis to find systematic failures (missing categories, frequent misclassification, label bias).

- Fix data gaps by:

  - adding labeled examples for underrepresented classes,

  - improving annotation quality and instructions (clear schema, training for annotators),

  - using data augmentation or synthetic examples where appropriate,

  - applying active learning to prioritize labeling informative samples.

- Iterate: retrain with the improved dataset and tune hyperparameters, architectures, or regularization.

- Monitor performance in production and repeat the loop as new data arrives.
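The active-learning step in the cycle above can be illustrated with uncertainty sampling, one common strategy among several. This sketch assumes the model already produced a class-probability vector for each unlabeled example; the function names and the `budget` parameter are illustrative choices, not part of any particular tool.

```python
import math
from typing import Dict, List, Sequence


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(predictions: Dict[str, Sequence[float]], budget: int) -> List[str]:
    """Return the ids of the `budget` examples with the highest predictive entropy."""
    ranked = sorted(predictions, key=lambda ex_id: entropy(predictions[ex_id]), reverse=True)
    return ranked[:budget]


# Usage: hypothetical model outputs for three unlabeled examples.
predictions = {
    "a": [0.98, 0.02],  # confident prediction
    "b": [0.55, 0.45],  # near the decision boundary
    "c": [0.70, 0.30],
}
print(select_for_labeling(predictions, budget=2))  # ['b', 'c']
```

Examples the model is least sure about are sent to annotators first, which tends to make each new label more informative than a randomly chosen one.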

Useful error-analysis techniques:

- Create confusion matrices and per-class precision/recall to surface recurring mistakes.

- Inspect failure cases manually to discover annotation inconsistencies or ambiguous labels.

- Segment errors by features (e.g., text length, language, input source) to reveal hidden biases.
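A minimal sketch of these analyses in plain Python follows. It assumes single-label classification with string labels and one segment value per example; the function names are hypothetical, and libraries such as scikit-learn offer more complete equivalents.

```python
from collections import Counter
from typing import Dict, Hashable, Sequence, Tuple


def confusion_counts(y_true: Sequence[str], y_pred: Sequence[str]) -> Counter:
    """Count (true_label, predicted_label) pairs: a sparse confusion matrix."""
    return Counter(zip(y_true, y_pred))


def per_class_precision_recall(y_true: Sequence[str], y_pred: Sequence[str]) -> Dict[str, Tuple[float, float]]:
    """Return {label: (precision, recall)}; undefined ratios are reported as 0.0."""
    counts = confusion_counts(y_true, y_pred)
    report = {}
    for label in sorted(set(y_true) | set(y_pred)):
        tp = counts[(label, label)]
        fp = sum(n for (t, p), n in counts.items() if p == label and t != label)
        fn = sum(n for (t, p), n in counts.items() if t == label and p != label)
        precision = tp / (tp + fp) if (tp + fp) else 0.0
        recall = tp / (tp + fn) if (tp + fn) else 0.0
        report[label] = (precision, recall)
    return report


def error_rate_by_segment(y_true, y_pred, segments: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Fraction of misclassified examples within each segment (e.g., length bucket)."""
    totals, errors = Counter(), Counter()
    for t, p, s in zip(y_true, y_pred, segments):
        totals[s] += 1
        errors[s] += int(t != p)
    return {s: errors[s] / totals[s] for s in totals}


# Usage with toy labels and a made-up "text length" segment.
y_true = ["cat", "cat", "dog", "dog", "bird"]
y_pred = ["cat", "dog", "dog", "dog", "cat"]
segments = ["short", "long", "short", "long", "long"]
print(confusion_counts(y_true, y_pred).most_common())
print(per_class_precision_recall(y_true, y_pred))
print(error_rate_by_segment(y_true, y_pred, segments))
```

In this toy data every error falls in the "long" segment, which is the kind of pattern that aggregate metrics hide and that should drive targeted data collection.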

Practical actions when the model UI flags “not enough training data”

If your model management UI reports insufficient training data, take these actions:

- Collect additional labeled examples that reflect real-world distributions and edge cases.

- Ensure the evaluation set is held out properly and mirrors production data.

- Improve annotation consistency: clear guidelines, multiple annotators, and adjudication for disagreements.

- Use cross-validation or enlarge holdout sets to stabilize metrics.

- Consider transfer learning or pretrained backbones to reduce labeled-data needs.

- Use ensembles or calibration techniques if score variance suggests instability.

Prioritize collecting diverse, representative examples and targeted error analysis—these activities usually deliver greater improvements than only tuning hyperparameters.
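To show how cross-validation stabilizes metrics on small datasets, here is a sketch of k-fold splitting that reports the mean and spread of a score across folds. The `train_and_score` callback is a placeholder you would supply (train on the given indices, return a validation score); it and the other names are assumptions for illustration.

```python
import random
import statistics
from typing import Callable, List, Tuple


def k_fold_indices(n_samples: int, k: int, seed: int = 0) -> List[Tuple[List[int], List[int]]]:
    """Shuffle indices once and split them into k (train, validation) pairs."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # k roughly equal, disjoint folds
    splits = []
    for i, val_idx in enumerate(folds):
        train_idx = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        splits.append((train_idx, val_idx))
    return splits


def cross_validate(
    n_samples: int,
    k: int,
    train_and_score: Callable[[List[int], List[int]], float],
) -> Tuple[float, float]:
    """Return (mean, sample standard deviation) of the score across k folds."""
    scores = [train_and_score(tr, va) for tr, va in k_fold_indices(n_samples, k)]
    return statistics.mean(scores), statistics.stdev(scores)


# Usage with a dummy scorer; replace it with real training and evaluation.
def dummy_scorer(train_idx: List[int], val_idx: List[int]) -> float:
    return len(train_idx) / (len(train_idx) + len(val_idx))


mean_score, std_score = cross_validate(n_samples=10, k=5, train_and_score=dummy_scorer)
print(f"score = {mean_score:.3f} +/- {std_score:.3f}")
```

A large standard deviation across folds is itself a signal that the dataset is too small or too heterogeneous for any single holdout score to be trusted.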

Monitoring and production

Continuous improvement is the backbone of reliable AI systems. In production, instrument the model to:

- log predictions and key inputs,

- sample and label prediction failures,

- track drift in input distributions and label distributions,

- trigger retraining (or human-in-the-loop review) when performance drops.
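As one possible way to track drift and trigger retraining, the sketch below compares a reference window of labels (or any categorical feature) against a recent window using total variation distance, and combines that with a drop in F1. The thresholds, function names, and choice of distance are illustrative assumptions; tune or replace them for your system.

```python
from collections import Counter
from typing import Dict, Hashable, Sequence


def distribution(values: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Empirical distribution of categorical values."""
    if not values:
        raise ValueError("cannot build a distribution from an empty sequence")
    counts = Counter(values)
    total = sum(counts.values())
    return {key: n / total for key, n in counts.items()}


def total_variation_distance(reference: Sequence[Hashable], current: Sequence[Hashable]) -> float:
    """Half the L1 distance between two empirical distributions (0 = identical, 1 = disjoint)."""
    p, q = distribution(reference), distribution(current)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))


def should_retrain(
    reference_labels: Sequence[Hashable],
    current_labels: Sequence[Hashable],
    baseline_f1: float,
    current_f1: float,
    drift_threshold: float = 0.2,  # assumed value
    max_f1_drop: float = 0.05,     # assumed value
) -> bool:
    """Flag retraining (or human review) on distribution drift or a performance drop."""
    drifted = total_variation_distance(reference_labels, current_labels) > drift_threshold
    degraded = (baseline_f1 - current_f1) > max_f1_drop
    return drifted or degraded


# Usage with made-up label windows and scores.
reference = ["spam", "spam", "ham", "ham"]
recent = ["spam", "spam", "spam", "ham"]
print(total_variation_distance(reference, recent))  # 0.25
print(should_retrain(reference, recent, baseline_f1=0.90, current_f1=0.89))  # True
```

The same distance can be applied to binned input features to monitor input drift alongside label drift.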

References and further reading

- scikit-learn: Precision, recall, F1 — https://scikit-learn.org/stable/modules/model_evaluation.html

- Best practices for annotation and labeling — consider documentation from your annotation provider or tools such as Label Studio

- NER evaluation tools: seqeval or spaCy evaluation utilities