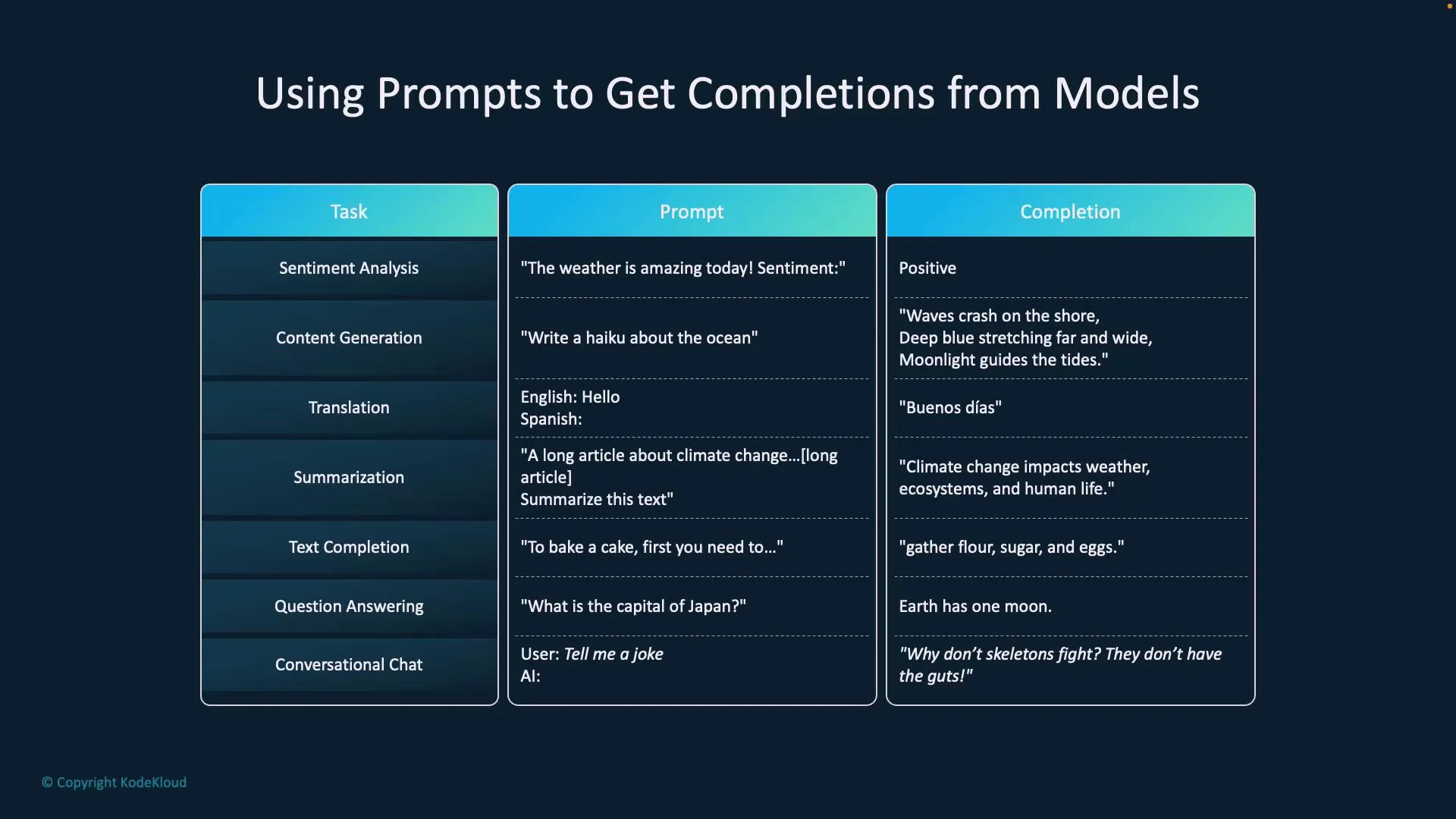

Prompts are how we instruct generative models (like GPT) to produce useful output. The model receives your prompt (the instruction or input) and returns a completion (the generated response). Clear, specific prompts lead to more accurate and relevant completions. Below is a quick reference of common prompt types, example prompts, and the typical completions they produce.

| Prompt type | Example prompt | Typical completion |
|---|---|---|
| Sentiment analysis | “The weather is amazing today. Sentiment?” | “Positive” |
| Generation | “Write a haiku about the ocean.” | A haiku poem about the ocean |
| Translation | “English: Hello. Spanish:” | “Hola” |
| Summarization | “Summarize this article:” | A concise summary of the article |
| Text completion | “To bake a cake, first you need to” | The continuation with steps or instructions |
| Question answering | “What is the capital of Japan?” | “Tokyo” |
| Conversational chat | “Tell me a joke.” | A joke (e.g., “Why don’t skeletons fight? They don’t have the guts.”) |
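
Several rows in the table follow a fixed pattern with a slot for the input text. As a rough sketch, those patterns can be kept as reusable templates. The names `PROMPT_TEMPLATES` and `build_prompt` are hypothetical and used only for illustration.

```python
# Hypothetical templates that mirror three rows of the table above.
PROMPT_TEMPLATES = {
    "sentiment": "{text} Sentiment?",
    "translation": "English: {text} Spanish:",
    "summarization": "Summarize this article: {text}",
}


def build_prompt(prompt_type: str, text: str) -> str:
    """Fill the template for the given prompt type with the input text."""
    template = PROMPT_TEMPLATES[prompt_type]
    return template.format(text=text)


print(build_prompt("sentiment", "The weather is amazing today."))
print(build_prompt("translation", "Hello."))
```

Keeping the pattern separate from the input makes it easier to compare prompt wordings against the same set of inputs.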

Tips for writing effective prompts:

- Be explicit about the role and expected format (e.g., “You are a travel planner. Return a 10-day itinerary as numbered days.”).

- Provide examples or constraints (length, tone, style) to guide the model’s output.

- Use system and context messages to control persistent behavior in chat-style interactions.
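
These tips can be combined in a chat-style message list: a system message carries the role and format, and example exchanges show the model the expected output. The sketch below assumes the common `role`/`content` message shape used by chat completion APIs. The helper name `build_chat_messages` and the classifier wording are hypothetical.

```python
def build_chat_messages(role, output_format, examples, user_prompt):
    """Assemble a chat message list: system message, few-shot examples, then the user prompt."""
    # The system message states the role and the expected output format.
    messages = [{"role": "system", "content": f"{role} {output_format}"}]
    # Each example is an (input, expected output) pair shown as a prior exchange.
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    messages.append({"role": "user", "content": user_prompt})
    return messages


messages = build_chat_messages(
    role="You are a sentiment classifier.",  # hypothetical role wording
    output_format="Reply with one word: Positive, Negative, or Neutral.",
    examples=[("The weather is amazing today. Sentiment?", "Positive")],
    user_prompt="My flight was delayed for six hours. Sentiment?",
)
for message in messages:
    print(message["role"], "->", message["content"])
```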

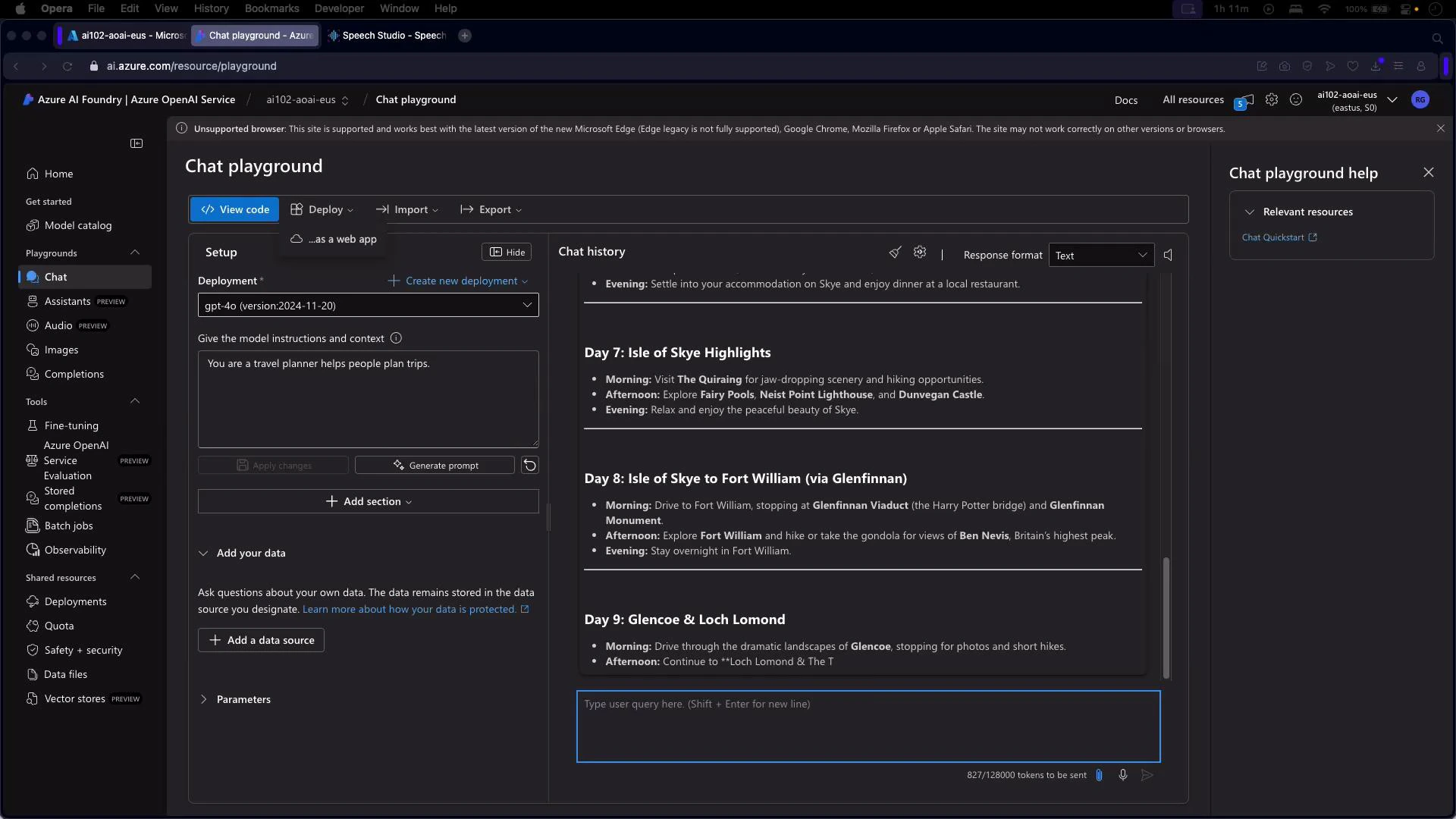

Testing prompts in Azure OpenAI Studio (Chat Playground)

Azure OpenAI Studio provides a Chat Playground inside the Azure portal so you can experiment with prompts and deployed models interactively. The playground is ideal for:

- Iterating on prompt wording and role definitions.

- Verifying behavior of base or fine-tuned deployments.

- Generating sample outputs before integrating into an application.

To experiment in the playground:

- Select your deployment (for example, a GPT-3.5 or GPT-4.5 deployment).

- Enter a system instruction to set the model’s role (e.g., “You are an AI assistant that helps people find information.”).

- Type user prompts in the content box and observe the completion.

- Review chat history to test multi-turn interactions and context retention.

- Try the ready-made sample prompts (travel guides, recipes, code examples) and tweak them to fit your use case.

- Add custom data or test a fine-tuned model to evaluate specialized behavior.

For example, to prototype a travel-planning assistant:

- Set the system instruction: “You are a travel planner that helps people plan trips.”

- Enter the user prompt: “Plan a 10-day trip to Scotland.”

- Inspect the model’s day-by-day itinerary, then refine the system message or user prompt to change style, granularity, or constraints.
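
The same session can be pictured as a growing list of messages. The sketch below assumes the usual chat completion behavior, where earlier turns are sent again with each new request so the model keeps context. The helper `add_turn`, the placeholder reply, and the follow-up wording are hypothetical.

```python
# Initial playground state: the system instruction plus the first user prompt.
history = [
    {"role": "system", "content": "You are a travel planner that helps people plan trips."},
    {"role": "user", "content": "Plan a 10-day trip to Scotland."},
]


def add_turn(history, assistant_reply, follow_up):
    """Return a new history with the model's reply and the next user prompt appended."""
    return history + [
        {"role": "assistant", "content": assistant_reply},
        {"role": "user", "content": follow_up},
    ]


# Placeholder reply; in the playground this would be the model's itinerary.
refined = add_turn(
    history,
    "<day-by-day itinerary returned by the model>",
    "Shorten each day to one sentence and add a rough budget.",
)
print([message["role"] for message in refined])
```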

The playground is a quick way to validate how a fine-tuned model or a deployment responds to your prompts and any additional context before you integrate it into production.

Example: Chat completion with the OpenAI Python client (Azure)

Below is a concise, working Python example that shows how to create a chat completion against an Azure-hosted model. Update the endpoint, key, and deployment name to match your Azure resource configuration.

Keep secrets out of source control. Use environment variables, Azure Key Vault, or managed identities for authentication. Also confirm the AZURE_OPENAI_API_VERSION is supported for your deployment to avoid runtime errors.
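
A minimal sketch of such a call follows. It assumes version 1.0 or later of the `openai` package, which provides the `AzureOpenAI` client, and reads its settings from the environment variables AZURE_OPENAI_API_BASE, AZURE_OPENAI_API_KEY, AZURE_OPENAI_API_VERSION, and DEPLOYMENT_NAME. The parameter values and the helper names `build_messages` and `chat` are illustrative assumptions, not required settings.

```python
import os


def build_messages(user_prompt):
    """Pair a system instruction with the user's prompt."""
    return [
        {"role": "system", "content": "You are an AI assistant that helps people find information."},
        {"role": "user", "content": user_prompt},
    ]


def chat(user_prompt):
    """Send one chat completion request to an Azure OpenAI deployment and return the reply text."""
    # Imported inside the function so this module loads even if openai is not installed.
    from openai import AzureOpenAI

    client = AzureOpenAI(
        azure_endpoint=os.environ["AZURE_OPENAI_API_BASE"],
        api_key=os.environ["AZURE_OPENAI_API_KEY"],
        api_version=os.environ["AZURE_OPENAI_API_VERSION"],
    )
    response = client.chat.completions.create(
        model=os.environ["DEPLOYMENT_NAME"],  # the Azure deployment name, not the base model name
        messages=build_messages(user_prompt),
        max_tokens=800,   # illustrative cap on response length
        temperature=0.7,  # illustrative sampling settings
        top_p=0.95,
    )
    return response.choices[0].message.content
```

With the four environment variables set, `print(chat("What is the capital of Japan?"))` would send the request and print the model's reply.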

- Replace AZURE_OPENAI_API_BASE, AZURE_OPENAI_API_KEY, and DEPLOYMENT_NAME with your actual Azure values.

- If you prefer Microsoft’s Azure SDK client libraries (such as Azure.AI.OpenAI for .NET), see the samples in the Azure docs and authenticate with AzureKeyCredential or a managed identity: https://learn.microsoft.com/azure/cognitive-services/openai/

- Tune parameters such as max_tokens, temperature, and top_p to control response length and creativity.

- Use system messages to set behavior (role, tone, constraints) and include examples or templates for predictable formatting.

- Test prompts in the playground with representative inputs and edge cases before deploying.
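
One way to keep parameter tuning manageable is to name a few presets and validate overrides before sending a request. The sketch below is only an illustration: the preset names and values are hypothetical, and the range checks assume the documented bounds of 0 to 2 for temperature and 0 to 1 for top_p.

```python
# Hypothetical presets; tune the values against your own prompts.
PRESETS = {
    "precise": {"max_tokens": 256, "temperature": 0.2, "top_p": 1.0},
    "creative": {"max_tokens": 800, "temperature": 0.9, "top_p": 0.95},
}


def sampling_params(preset, **overrides):
    """Merge a named preset with overrides and check the values are in range."""
    params = {**PRESETS[preset], **overrides}
    if not 0.0 <= params["temperature"] <= 2.0:
        raise ValueError("temperature must be between 0 and 2")
    if not 0.0 <= params["top_p"] <= 1.0:
        raise ValueError("top_p must be between 0 and 1")
    if params["max_tokens"] < 1:
        raise ValueError("max_tokens must be at least 1")
    return params


print(sampling_params("precise", max_tokens=100))
```

The returned dictionary can be unpacked into a chat completion call as keyword arguments.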

Links and references

- Azure OpenAI Service documentation: https://learn.microsoft.com/azure/cognitive-services/openai/

- OpenAI Python client: https://pypi.org/project/openai/