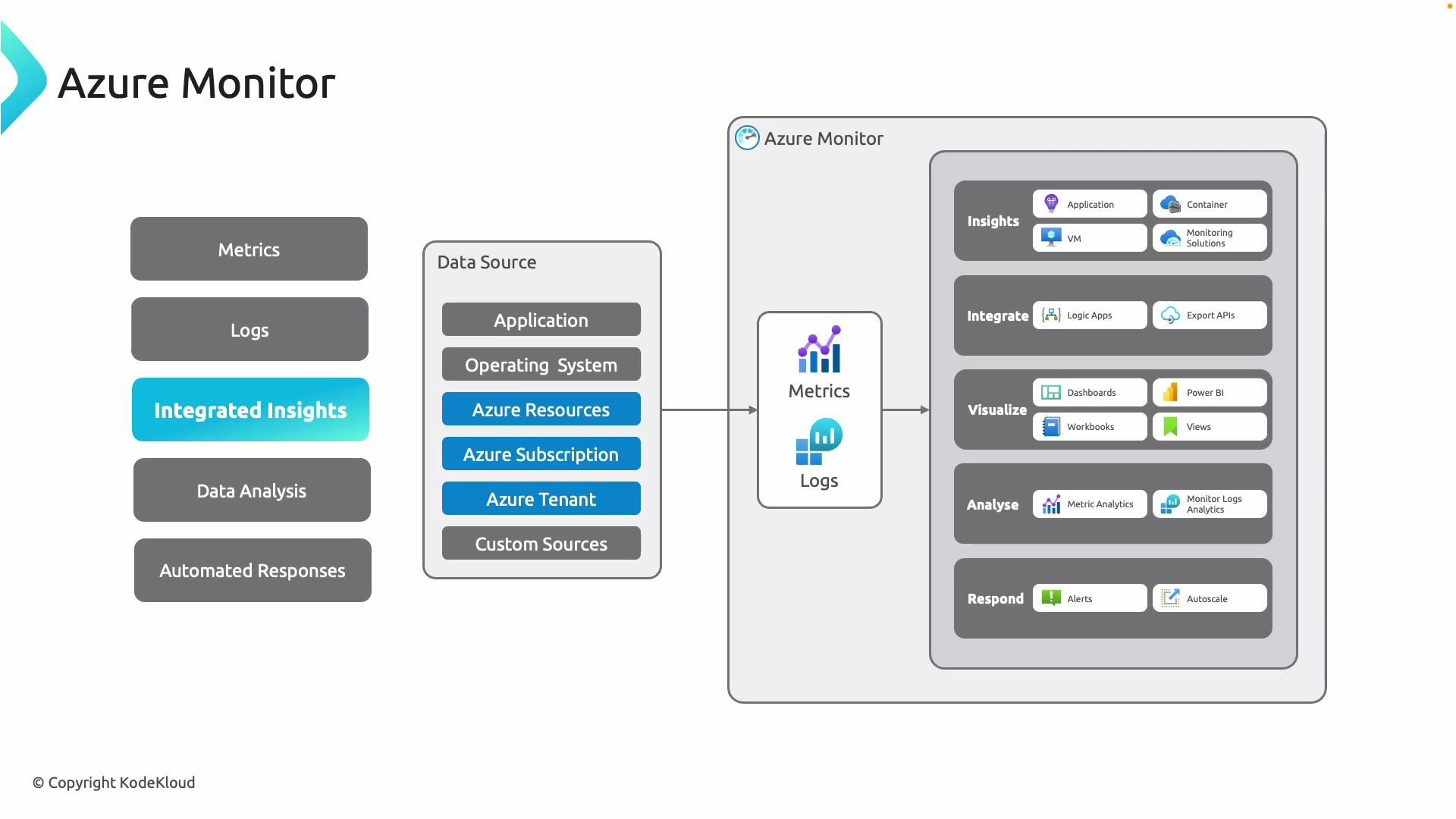

- Metrics: Lightweight numeric time series (CPU, memory, latency), collected at near real-time intervals for short-term health and performance tracking.

- Logs: Rich, detailed event and diagnostic data (traces, exceptions, activity logs). Used for deep troubleshooting, correlation, and forensic analysis.

- Integrated insights: Prebuilt and custom insights for applications, VMs, containers, and network resources that provide a single view of performance, reliability, and security.

| Concept | Use case | Where to view |
|---|---|---|
| Metrics | Real‑time performance, alerts, autoscale | Metrics Explorer, Dashboards |
| Logs | Troubleshooting, long‑term retention, queries | Log Analytics, Workbooks |
| Insights | Preconfigured monitoring for specific services | Azure Monitor insights blades |

Metrics are best for near real-time numeric trends (short-term monitoring). Logs provide deeper diagnostic context and are better for forensic analysis, long-term retention, and troubleshooting.
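To make the split concrete, here is a conceptual sketch of how the two kinds of data differ in shape. The class names, field names, and the `"level"` property are hypothetical and do not reflect Azure's actual schemas. A metric sample is a timestamp plus one number; a log record carries arbitrary properties you can filter on.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class MetricPoint:
    """One sample of a numeric time series: a timestamp and a single value."""
    resource_id: str
    name: str  # for example "Percentage CPU"
    timestamp: datetime
    value: float


@dataclass
class LogRecord:
    """One event: free-form properties carry the diagnostic context (hypothetical shape)."""
    resource_id: str
    category: str  # hypothetical category name
    timestamp: datetime
    properties: dict[str, Any] = field(default_factory=dict)


def mean_value(points: list[MetricPoint]) -> float:
    """Metrics answer 'how much, how often' cheaply: here, the mean of the series."""
    return sum(p.value for p in points) / len(points)


def errors_with_context(records: list[LogRecord]) -> list[LogRecord]:
    """Logs answer 'what happened and why': filter on detailed event properties."""
    return [r for r in records if r.properties.get("level") == "Error"]


if __name__ == "__main__":
    t = datetime(2024, 1, 1, tzinfo=timezone.utc)
    cpu = [MetricPoint("vm-a", "Percentage CPU", t, v) for v in (10.0, 20.0, 30.0)]
    logs = [
        LogRecord("vm-a", "Example", t, {"level": "Error", "message": "disk full"}),
        LogRecord("vm-a", "Example", t, {"level": "Info", "message": "started"}),
    ]
    print(mean_value(cpu))  # 20.0
    print(errors_with_context(logs)[0].properties["message"])  # disk full
```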

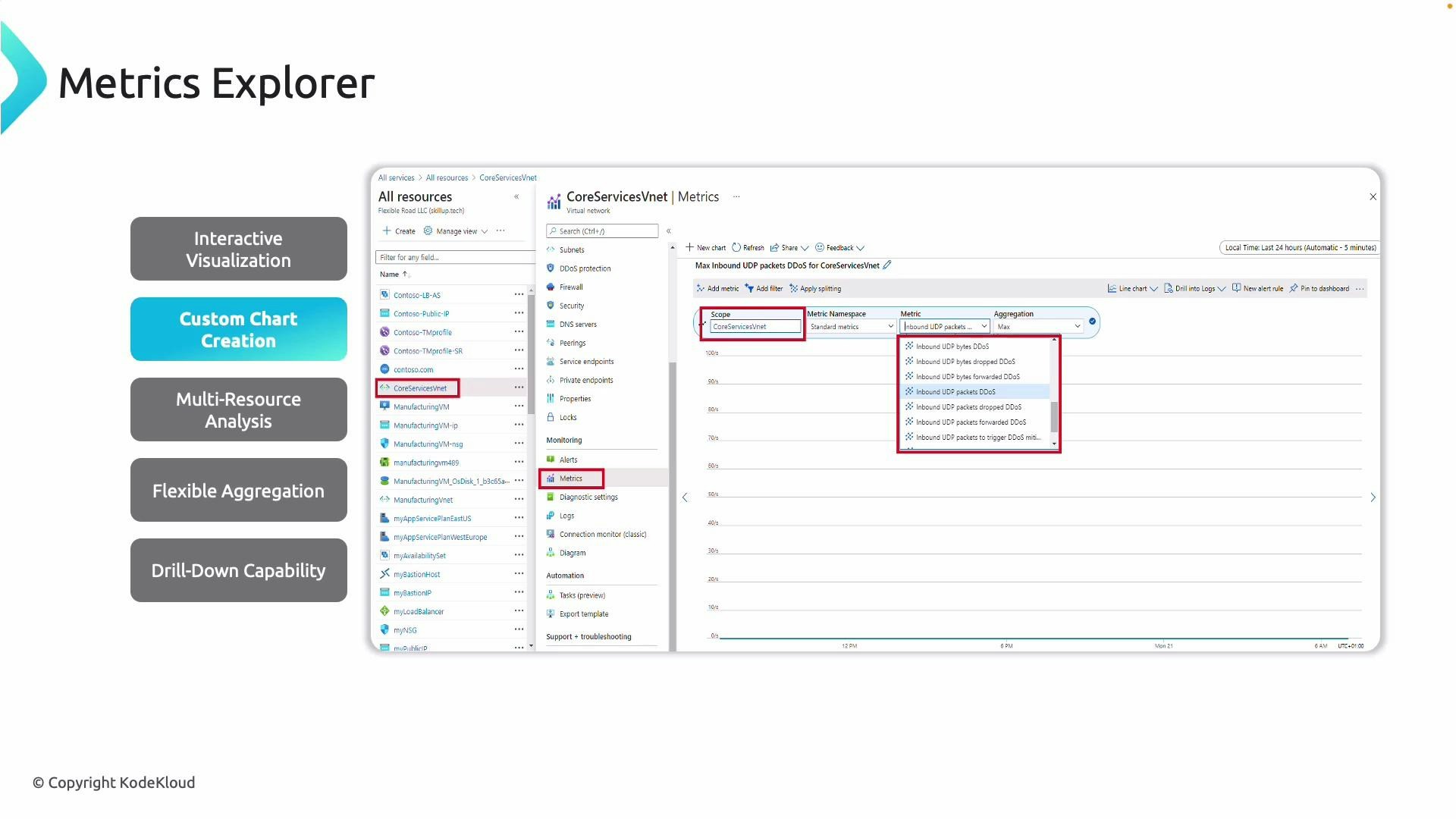

Metrics Explorer

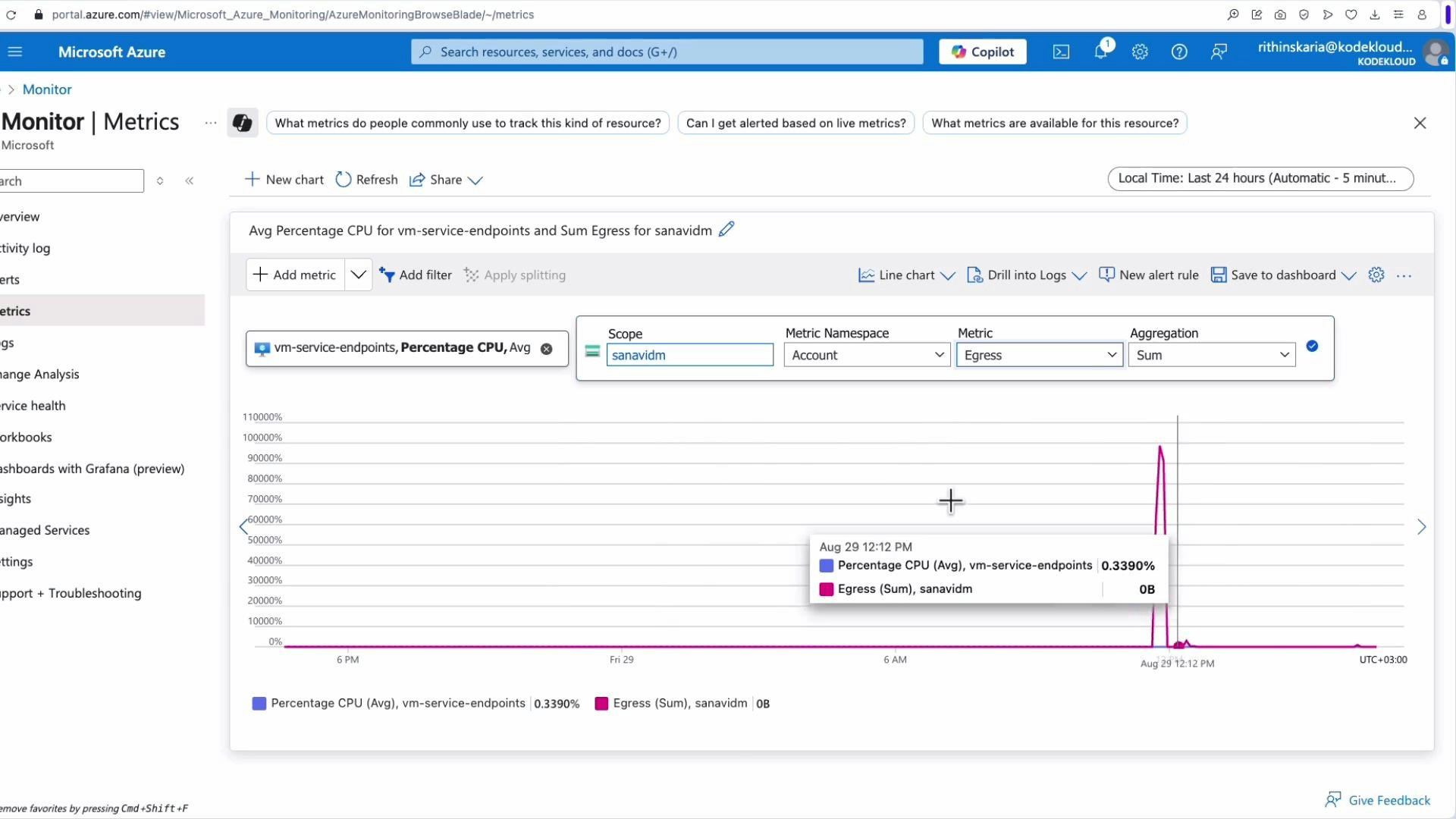

Metrics Explorer provides an interactive UI to graph real‑time and historical metrics. Use it to spot patterns — for example, spikes in network latency or sustained increases in CPU — and build KPIs like inbound dropped packets for a VNet firewall or average transaction latency for a SQL managed instance.

- Multi-resource analysis: Compare metrics across resources to locate hotspots (for example, throughput across VNets).

- Aggregations: Choose sum, average, minimum, maximum, or count to tailor the analysis (for example, average CPU across a scale set versus peak CPU for a single instance).

- Drill-down: Zoom into specific time windows (e.g., a 5‑minute interval during a DDoS event) for granular analysis.

- Charting and sharing: Change chart types, pin charts to dashboards, and export visualizations for operations teams.
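The aggregation, multi-resource, and drill-down ideas above can be illustrated in plain Python over in-memory samples. This is a conceptual sketch only: the `(resource, timestamp, value)` tuples, the function names, and the 5-minute grain are assumptions, and Azure Monitor performs the equivalent aggregation for you before Metrics Explorer draws the chart.

```python
from datetime import datetime, timedelta
from statistics import mean

# Aggregation names mirror the options in Metrics Explorer; the functions are plain Python.
AGGREGATIONS = {"sum": sum, "average": mean, "min": min, "max": max, "count": len}
EPOCH = datetime(1970, 1, 1)


def aggregate(samples, aggregation, grain):
    """Bucket (resource, timestamp, value) samples into fixed time grains per resource."""
    fn = AGGREGATIONS[aggregation]
    buckets = {}
    for resource, ts, value in samples:
        # Align the timestamp to the start of its grain, e.g. 12:07 -> 12:05 for 5 minutes.
        bucket_start = EPOCH + (ts - EPOCH) // grain * grain
        buckets.setdefault((resource, bucket_start), []).append(value)
    return {key: fn(values) for key, values in sorted(buckets.items())}


def drill_down(samples, start, end):
    """Keep samples in [start, end): the equivalent of zooming into a time window."""
    return [s for s in samples if start <= s[1] < end]


def hotspot(samples, aggregation="average"):
    """Compare resources on one aggregated value and return the highest one."""
    per_resource = {}
    for resource, _, value in samples:
        per_resource.setdefault(resource, []).append(value)
    fn = AGGREGATIONS[aggregation]
    return max(per_resource, key=lambda r: fn(per_resource[r]))


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1, 12, 0)
    data = [
        ("vm-a", t0, 10.0),
        ("vm-a", t0 + timedelta(minutes=1), 30.0),
        ("vm-b", t0 + timedelta(minutes=2), 90.0),
    ]
    print(aggregate(data, "average", timedelta(minutes=5)))
    print(hotspot(data))  # vm-b
```

Switching `"average"` to `"max"` in the same call is the difference between a scale-set-wide trend and a single instance's peak.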

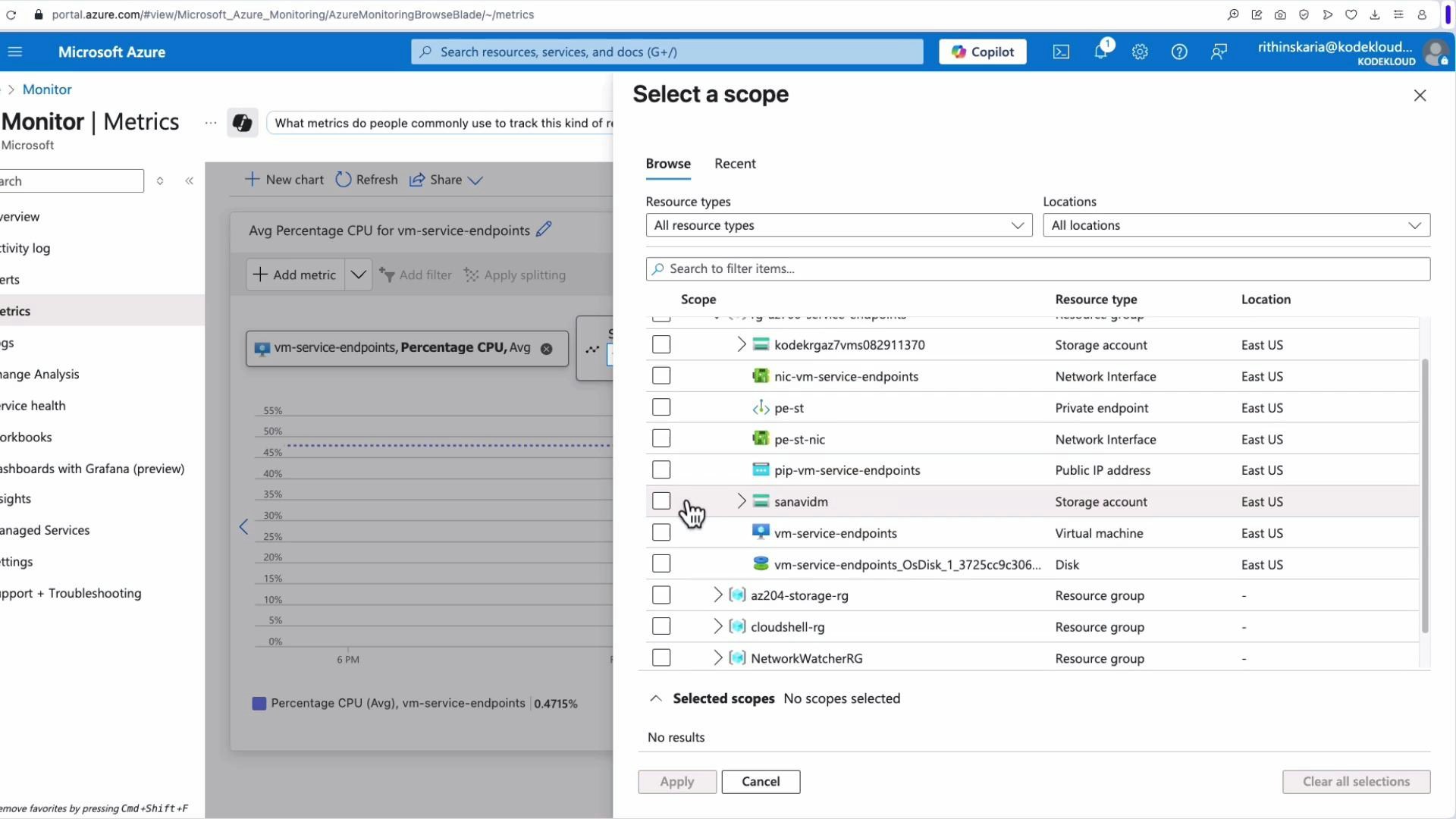

- Sign in to the Azure Portal.

- Go to Monitor > Metrics, or open a resource and select the Metrics blade.

- Select the scope (one or more resources), then choose the metric(s), aggregation, and time range.
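The same selections can also be expressed as query parameters of the Azure Monitor Metrics REST API (`Microsoft.Insights/metrics`). The sketch below is a minimal illustration, assuming a single-resource scope and the `2018-01-01` api-version; the helper name and the resource ID are hypothetical placeholders, and actually sending the request additionally requires a Microsoft Entra ID bearer token, which is omitted here. In Python, the `azure-monitor-query` package's `MetricsQueryClient` wraps the same API if you prefer an SDK.

```python
from urllib.parse import quote, urlencode

ARM_ENDPOINT = "https://management.azure.com"


def metrics_request_url(resource_id, metric_names, aggregations, start, end,
                        interval="PT5M", api_version="2018-01-01"):
    """Build a GET URL for the Azure Monitor Metrics REST API (hypothetical helper).

    scope -> resource_id, metric(s) -> metricnames, aggregation -> aggregation,
    time range -> timespan (ISO 8601 start/end), chart granularity -> interval.
    """
    query = {
        "api-version": api_version,
        "metricnames": ",".join(metric_names),
        "aggregation": ",".join(aggregations),
        "timespan": f"{start}/{end}",
        "interval": interval,
    }
    path = quote(f"{resource_id}/providers/Microsoft.Insights/metrics")
    # Keep ',', '/' and ':' readable; spaces in metric names become %20.
    return f"{ARM_ENDPOINT}{path}?{urlencode(query, safe=',/:', quote_via=quote)}"


if __name__ == "__main__":
    # Placeholder resource ID: substitute your own subscription, resource group, and VM.
    vm = ("/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/example-rg"
          "/providers/Microsoft.Compute/virtualMachines/example-vm")
    print(metrics_request_url(vm, ["Percentage CPU"], ["Average", "Maximum"],
                              "2024-01-01T00:00:00Z", "2024-01-01T01:00:00Z"))
```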

Metrics vs Logs and Diagnostic Settings

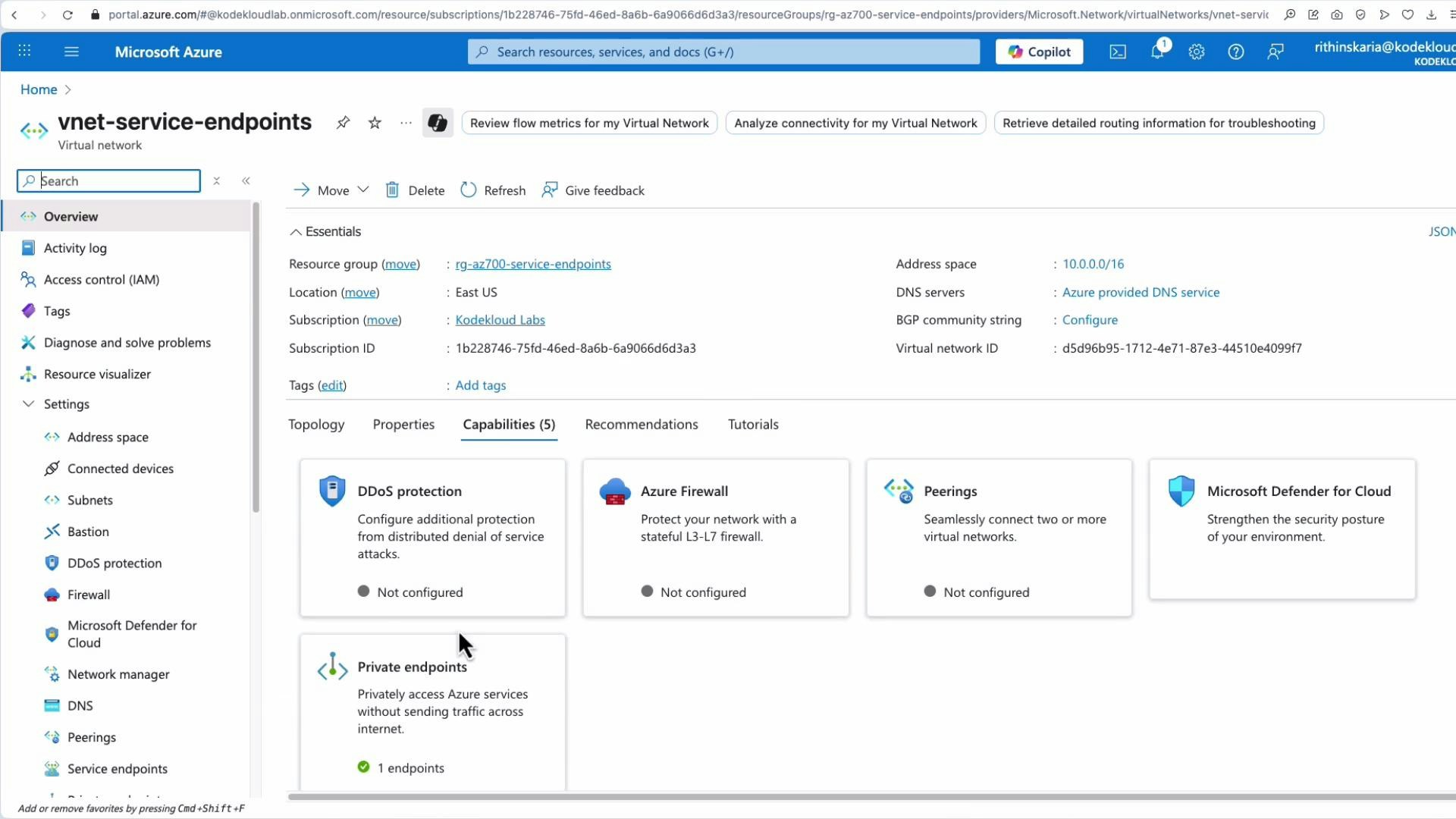

Platform metrics shown in Metrics Explorer are retained for a limited period (typically 93 days by default; check the Azure Monitor documentation for the current retention window). For longer retention or richer diagnostic context, enable diagnostic settings on resources to route telemetry to long‑term stores or streaming endpoints. Common diagnostic destinations:

| Destination | Best for | Notes |
|---|---|---|
| Log Analytics workspace | Querying, correlation, workbooks, advanced analytics | Supports Kusto Query Language (KQL) |
| Storage account | Long‑term archival | Lower cost, limited query capability |
| Event Hub | Streaming to external systems or SIEM | Useful for real‑time forwarding |
| Partner solutions | Third‑party analytics and monitoring | Integrations with SIEM or APM tools |
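The retention trade-off reduces to a simple date check: if the window you want to inspect starts before the platform retention cutoff, only a destination that a diagnostic setting was already feeding can answer it. A sketch, assuming the 93-day default quoted above (verify against current documentation); the function name and return strings are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Assumption: 93 days, the typical default platform-metric retention; confirm in current docs.
PLATFORM_METRICS_RETENTION = timedelta(days=93)


def where_to_look(query_start, now=None, retention=PLATFORM_METRICS_RETENTION):
    """Suggest which store can still answer a query that begins at query_start."""
    if now is None:
        now = datetime.now(timezone.utc)
    if query_start >= now - retention:
        return "Metrics Explorer (platform metrics)"
    # Older platform metrics survive only if a diagnostic setting was already routing them.
    return "diagnostic-settings destination (for example, Log Analytics or storage)"


if __name__ == "__main__":
    now = datetime(2024, 6, 1, tzinfo=timezone.utc)
    for days_back in (30, 120):
        print(days_back, where_to_look(now - timedelta(days=days_back), now))
```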

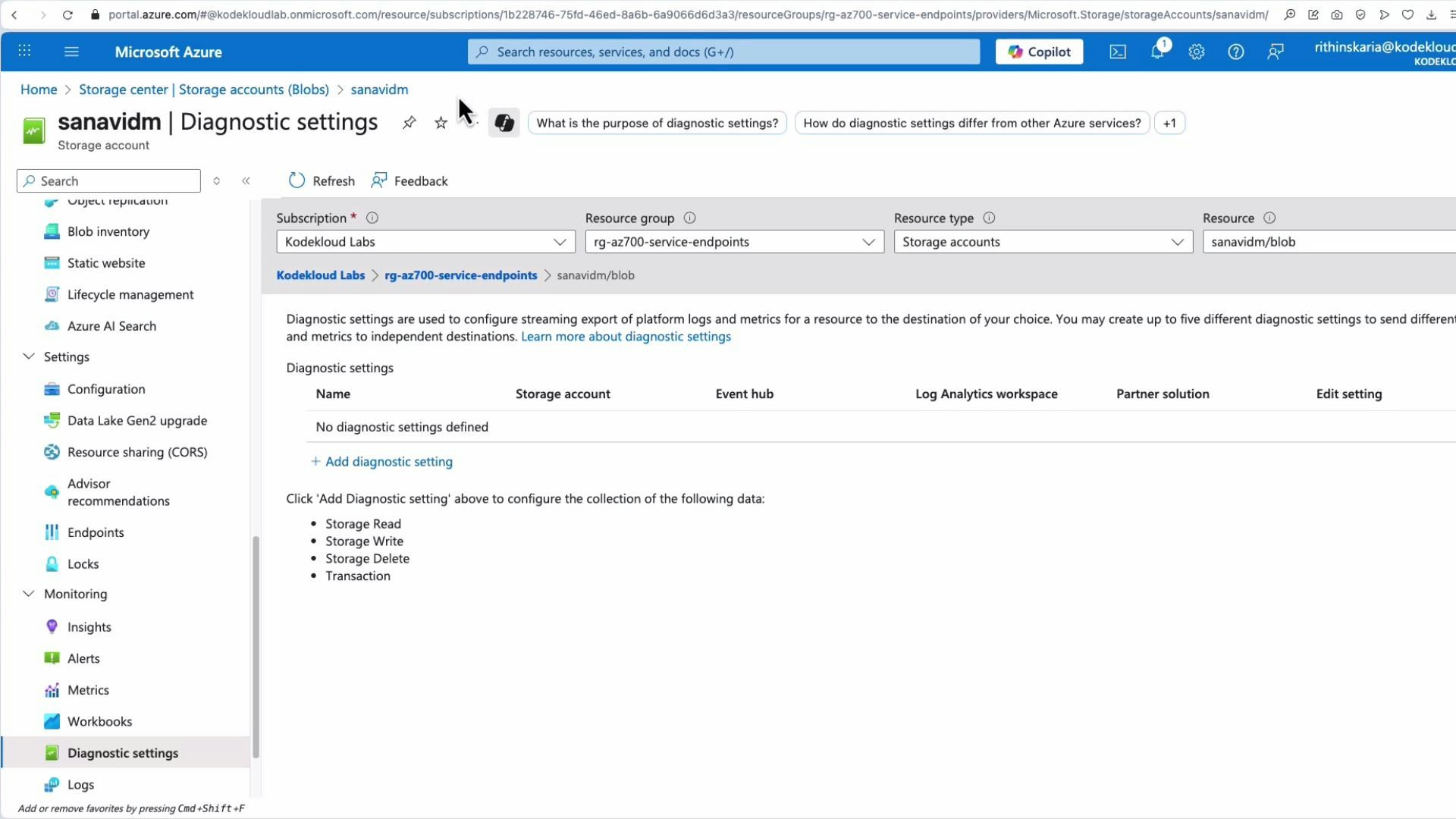

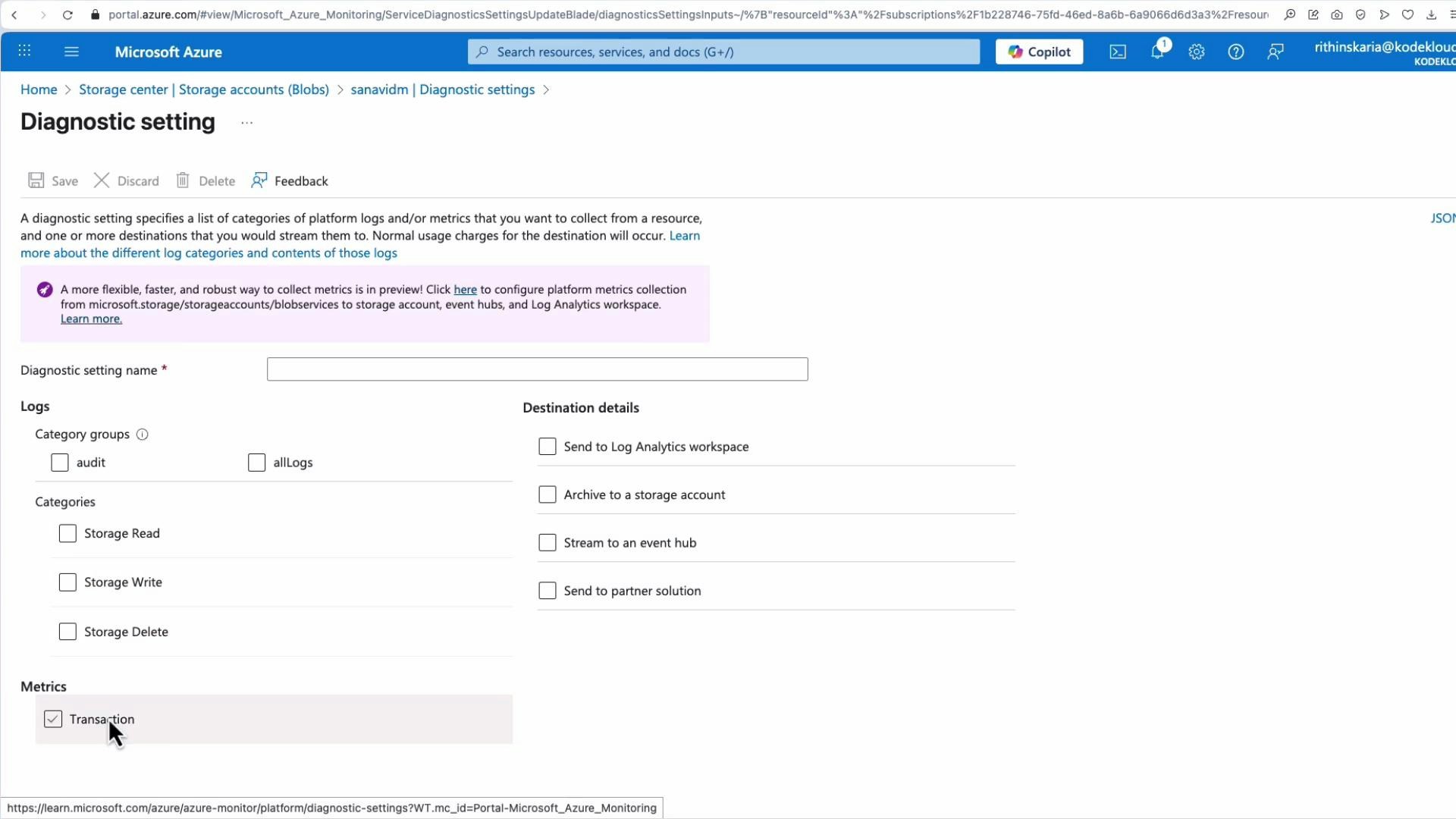

- Open the target resource in the Azure Portal.

- Under Monitoring, select Diagnostic settings.

- Create or edit a diagnostic setting, choose log categories and metrics, and select one or more destinations (Log Analytics, storage, Event Hub, or partner).
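Behind the portal form, a diagnostic setting is a resource whose JSON body lists log categories, metrics, and destinations. The sketch below builds such a body, assuming the property names of the `Microsoft.Insights/diagnosticSettings` ARM resource (`workspaceId`, `storageAccountId`, `eventHubAuthorizationRuleId`, `eventHubName`, `logs`, `metrics`). The helper is hypothetical, partner destinations are left out, and log category names differ per resource type (`"AuditEvent"` is used only as a Key Vault example). The body would be sent with a PUT to `{resource-id}/providers/Microsoft.Insights/diagnosticSettings/{setting-name}`; check the REST reference for the current api-version.

```python
import json


def diagnostic_setting_body(log_categories, workspace_id=None, storage_account_id=None,
                            event_hub_rule_id=None, event_hub_name=None, include_metrics=True):
    """Build a diagnostic-setting JSON body (hypothetical helper).

    Each destination is optional, but at least one must be chosen, mirroring the portal form.
    """
    properties = {"logs": [{"category": c, "enabled": True} for c in log_categories]}
    if include_metrics:
        # "AllMetrics" selects every platform metric the resource emits.
        properties["metrics"] = [{"category": "AllMetrics", "enabled": True}]
    if workspace_id:
        properties["workspaceId"] = workspace_id
    if storage_account_id:
        properties["storageAccountId"] = storage_account_id
    if event_hub_rule_id:
        properties["eventHubAuthorizationRuleId"] = event_hub_rule_id
        if event_hub_name:
            properties["eventHubName"] = event_hub_name
    if not (workspace_id or storage_account_id or event_hub_rule_id):
        raise ValueError("choose at least one destination")
    return {"properties": properties}


if __name__ == "__main__":
    # Placeholder workspace ID and an example Key Vault log category.
    workspace = ("/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/example-rg"
                 "/providers/Microsoft.OperationalInsights/workspaces/example-ws")
    print(json.dumps(diagnostic_setting_body(["AuditEvent"], workspace_id=workspace), indent=2))
```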

Sending diagnostics to Log Analytics or Event Hub can incur ingestion and retention costs. Evaluate retention policies and choose destinations that balance observability needs with budget.
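A first-order way to weigh those costs is ingested volume multiplied by a per-GB price. This is a rough sketch only: the prices are placeholders you would take from the Azure pricing page for your region and tier, the function names are hypothetical, and real billing adds tiers, commitment levels, and retention charges that this ignores.

```python
def monthly_ingestion_cost(gb_per_day, price_per_gb, days=30):
    """Ingested volume for the month multiplied by a per-GB price you supply."""
    return gb_per_day * days * price_per_gb


def compare_destinations(gb_per_day, prices_per_gb, days=30):
    """Rank destinations by estimated monthly cost, cheapest first."""
    costs = {
        name: monthly_ingestion_cost(gb_per_day, price, days)
        for name, price in prices_per_gb.items()
    }
    return sorted(costs.items(), key=lambda item: item[1])


if __name__ == "__main__":
    # Made-up prices for illustration only; substitute real ones for your region and tier.
    print(compare_destinations(2.0, {"log-analytics": 1.0, "storage": 0.25}))
    # [('storage', 15.0), ('log-analytics', 60.0)]
```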